Integrating cross VPC ECS cluster for enhanced security with AWS App Mesh

NOTICE: October 04, 2024 – This post no longer reflects the best guidance for configuring a service mesh with Amazon ECS and its examples no longer work as shown. Please refer to newer content on Amazon ECS Service Connect.

Customers often have applications owned by different teams in different Amazon ECS clusters. Alternatively, they may have many applications running on Amazon EC2 and some on Amazon ECS. The applications may also run in their own VPCs in each cluster. In all these cases, it is harder to get consistent connectivity, observability, and security between services, and securing communication between these services may introduce additional complexity. In this blog, we cover creating a mesh to connect services and securing communication between applications using encryption, in cases such as services deployed across VPCs and across ECS and EC2.

App Mesh is a managed service mesh that can be used with Amazon Elastic Container Service (ECS), Amazon Elastic Kubernetes Service (EKS), Amazon EC2 instances, and self-managed Kubernetes on EC2. App Mesh makes it easy to connect all services into a common mesh and to configure encryption through the mesh. Applications connected to the same mesh emit consistent metrics and logs, which makes it easier for service owners to troubleshoot cross-service issues. App Mesh, when used with AWS Certificate Manager (ACM), handles certificate distribution and trust validation, so all services connected to the mesh get end-to-end TLS without any code changes.

This post will cover how to:

- Connect three applications deployed on different compute services to one mesh and enable them to access each other.

- Services are deployed on ECS with Fargate launch type and EC2 (non containerized)

- Connect these applications running in different VPCs using VPC Peering and integrate them with App Mesh

- Enable end-to-end encryption between the applications using AWS Certificate Manager

Overview of application

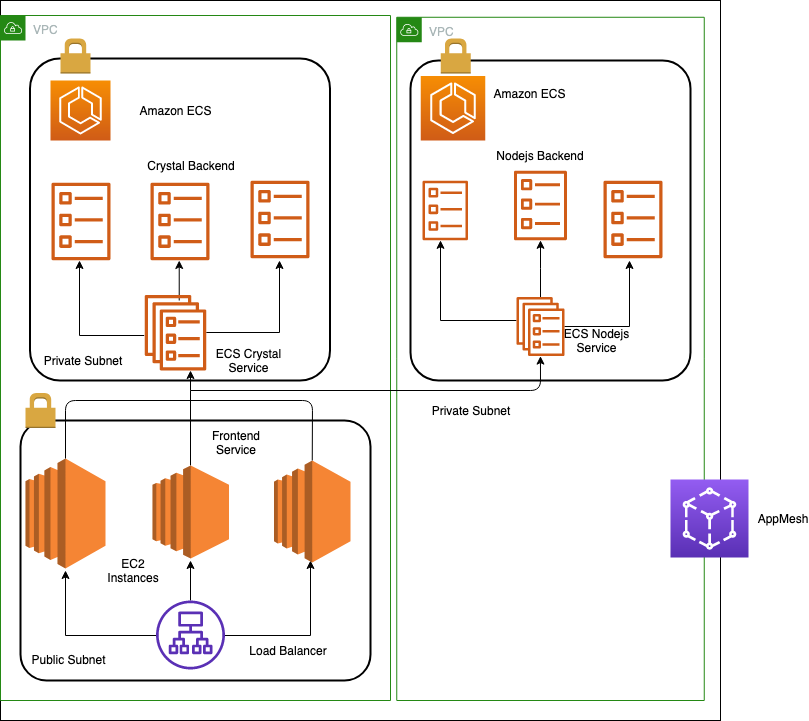

Solution overview

The solution involves an application composed of three components: a front end application hosted on EC2 instances and two backend applications, one in the same VPC as the front end and the other in a second VPC. The backend applications run on separate ECS clusters deployed in separate VPCs. A mesh is created to span all clusters and VPCs. For this demonstration, we create a single mesh because the front end and backend components are part of one application. Connectivity between the VPCs is accomplished by peering the VPCs. A transit gateway can also be used as an alternative to peering.

Below are the components we will be setting up as part of this demonstration:

Application Components:

Compute

The “frontend application” uses three EC2 instances and a load balancer that sends traffic to the instances. This application, written in Ruby, makes calls to the crystal and Node.js backend applications, each of which is an ECS service running as Fargate tasks.

AWS Cloud Map namespace

The front end, crystal backend, and Node.js backend services will have service discovery enabled with Cloud Map, with entries in the appmeshworkshop.hosted.local namespace.
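Once the stacks described later are deployed, the Cloud Map entries could be inspected with a small helper like the one below. This is a sketch, not part of the original walkthrough: the function name list_instance_ips is hypothetical, and the service name passed to it (for example crystal) is an assumption, so check the names the CloudFormation templates actually register.

```shell
# Namespace named in this post.
NAMESPACE="appmeshworkshop.hosted.local"

# list_instance_ips SERVICE
# Print the IPv4 addresses Cloud Map returns for SERVICE in the namespace.
# SERVICE is an assumed name; it must match what the templates register.
list_instance_ips() {
  aws servicediscovery discover-instances \
    --namespace-name "$NAMESPACE" \
    --service-name "$1" \
    --query 'Instances[].Attributes.AWS_INSTANCE_IPV4' \
    --output text
}

# Usage (requires AWS credentials and a deployed stack):
#   list_instance_ips crystal
```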

App Mesh

The front end, crystal backend, and Node.js backend each are represented by a virtual service.

Each virtual service gets the information for the corresponding virtual node.
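To see which virtual node backs a given virtual service after the mesh is deployed, a lookup along these lines may help. It is a minimal sketch: the function name is hypothetical, and the mesh name and virtual service name are placeholders that must be taken from the deployed mesh, since this post does not state them.

```shell
# virtual_service_provider MESH_NAME VIRTUAL_SERVICE_NAME
# Print the name of the virtual node that provides the virtual service.
# Both arguments are placeholders; read the real values from your mesh.
virtual_service_provider() {
  aws appmesh describe-virtual-service \
    --mesh-name "$1" \
    --virtual-service-name "$2" \
    --query 'virtualService.spec.provider.virtualNode.virtualNodeName' \
    --output text
}

# Usage (requires AWS credentials and a deployed mesh):
#   virtual_service_provider <mesh-name> <virtual-service-name>
```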

Infrastructure Components:

ECS clusters

We will spin up two ECS clusters in two VPCs, hosting the two applications called “crystal ECS service” and “Node.js ECS service”.

VPC peering

A VPC peering connection is a networking connection between two VPCs that enables you to route traffic between them using private IPv4 addresses or IPv6 addresses. Instances in either VPC can communicate with each other as if they were within the same network. The VPC hosting the front end and the crystal backend application will be peered with the VPC hosting the Node.js backend application.

Prerequisites

In order to successfully carry out the base deployment:

- Make sure you have a recent AWS CLI installed, that is, version 1.16.268 or above.

- Make sure you have jq installed.

- Have the IAM role AppMesh-Workshop-Admin with the necessary permissions.

You can create the resources from a local workstation with AWS credentials while deploying the CloudFormation template. Alternatively, you can also follow the steps listed here to create a workspace, attach the IAM role and deploy the CloudFormation stack.
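The prerequisites above can be checked before deploying anything. The following is a small sketch of such a preflight check; the function names are hypothetical, and version_ge assumes a `sort` that supports `-V` (as GNU coreutils does).

```shell
# Minimum AWS CLI version named in the prerequisites.
MIN_AWS_CLI_VERSION="1.16.268"

# version_ge A B: succeeds when version A is greater than or equal to B.
# Assumes `sort -V` (version sort) is available.
version_ge() {
  [ "$(printf '%s\n%s\n' "$1" "$2" | sort -V | head -n 1)" = "$2" ]
}

# parse_aws_version: read `aws --version` output on stdin and print "X.Y.Z".
parse_aws_version() {
  sed -n 's|^aws-cli/\([0-9][0-9.]*\).*|\1|p' | head -n 1
}

# check_prereqs: report the first missing or outdated tool, if any.
check_prereqs() {
  command -v jq >/dev/null 2>&1 || { echo "jq is not installed"; return 1; }
  command -v aws >/dev/null 2>&1 || { echo "aws CLI is not installed"; return 1; }
  installed="$(aws --version 2>&1 | parse_aws_version)"
  version_ge "$installed" "$MIN_AWS_CLI_VERSION" ||
    { echo "aws CLI $installed is older than $MIN_AWS_CLI_VERSION"; return 1; }
  echo "prerequisites look fine"
}
```

Running check_prereqs on the workstation or workspace prints a single line describing the first problem it finds, or a confirmation if none is found. It does not check the IAM role.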

Cluster provisioning

Let’s use the below CloudFormation stack to deploy the front end application and crystal backend application.

git clone https://github.com/aws/aws-app-mesh-examples.git

cd aws-app-mesh-examples/blogs/ecs-ec2-crossvpc-with-tls/

Deploying the CloudFormation stack:

export EnvoyImage=<Use the latest image from https://docs.aws.amazon.com/app-mesh/latest/userguide/envoy.html>

aws cloudformation deploy --template-file appmesh-baseline.yml --stack-name appmesh-frontend-crystal --capabilities CAPABILITY_IAM --parameter-overrides EnvoyImage="$EnvoyImage"

The CloudFormation template will launch the following:

- VPC with private and public subnets including routes, NAT gateways, and an internet gateway

- VPC endpoints to privately connect your VPC to AWS services

- An ECS cluster with no EC2 resources because we’re using Fargate

- Task definition file with the backend and envoy container config.

- ECR repositories for your container images

- A launch template and an Auto Scaling Group for your EC2 based services

- One external ALB

Let’s now deploy the second CloudFormation stack, which creates the Node.js ECS cluster, the task definition files, the ECS services, and the Cloud Map namespace.

export EnvoyImage=<Use the latest image from https://docs.aws.amazon.com/app-mesh/latest/userguide/envoy.html>

aws cloudformation deploy --template-file appmesh-nodejs.yml --stack-name appmesh-nodejs --capabilities CAPABILITY_IAM --parameter-overrides EnvoyImage="$EnvoyImage"
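Before moving on, it can be useful to confirm that both stacks finished successfully. The helper below is a sketch with hypothetical function names; it only reads the stack status and treats CREATE_COMPLETE and UPDATE_COMPLETE as ready.

```shell
# stack_status STACK_NAME: print the current CloudFormation stack status.
stack_status() {
  aws cloudformation describe-stacks --stack-name "$1" \
    --query 'Stacks[0].StackStatus' --output text
}

# stack_ready STACK_NAME: succeed only if the stack finished deploying.
stack_ready() {
  case "$(stack_status "$1")" in
    CREATE_COMPLETE|UPDATE_COMPLETE) return 0 ;;
    *) return 1 ;;
  esac
}

# Usage (requires AWS credentials):
#   stack_ready appmesh-frontend-crystal && stack_ready appmesh-nodejs
```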

The CloudFormation stacks have now deployed the necessary application components. Because the backends are in different VPCs, the front end can reach the Node.js backend in the second VPC only if the two VPCs are peered and the necessary routes are in place. The appmesh-nodejs CloudFormation stack created the peering between the VPCs.
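If you want to confirm that the peering connection is active, a check like the following could be used. It is a sketch under assumptions: the function name is hypothetical, and the two VPC IDs are supplied by you (for example from the stack outputs), since this post does not say which VPC is the requester and which is the accepter.

```shell
# peering_is_active VPC_A VPC_B
# Succeed if an active peering connection exists between the two VPCs,
# regardless of which side requested it.
peering_is_active() {
  aws ec2 describe-vpc-peering-connections \
    --filters "Name=status-code,Values=active" \
    --query 'VpcPeeringConnections[].[RequesterVpcInfo.VpcId, AccepterVpcInfo.VpcId]' \
    --output text |
  awk -v a="$1" -v b="$2" '
    ($1 == a && $2 == b) || ($1 == b && $2 == a) { found = 1 }
    END { exit found ? 0 : 1 }'
}

# Usage (requires AWS credentials; the VPC IDs are placeholders):
#   peering_is_active vpc-0aaa vpc-0bbb && echo "peering is active"
```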

To discover the endpoints, we now associate the second VPC with the private hosted zone.

STACK_NAME1=appmesh-frontend-crystal

aws cloudformation describe-stacks --stack-name "$STACK_NAME1" | jq -r '[.Stacks[0].Outputs[] | {key: .OutputKey, value: .OutputValue}] | from_entries' > cfn-crystal.json

STACK_NAME2=appmesh-nodejs

aws cloudformation describe-stacks --stack-name "$STACK_NAME2" | jq -r '[.Stacks[0].Outputs[] | {key: .OutputKey, value: .OutputValue}] | from_entries' > cfn-nodejs.json
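The two commands above flatten each stack's Outputs list into a simple key/value JSON file. When only one value is needed, the AWS CLI's own --query option can fetch it without an intermediate file. The helper below is a sketch; the function name is hypothetical, and the output key you pass must exist in the stack.

```shell
# stack_output STACK_NAME OUTPUT_KEY
# Print the value of a single CloudFormation stack output.
stack_output() {
  aws cloudformation describe-stacks --stack-name "$1" \
    --query "Stacks[0].Outputs[?OutputKey=='$2'].OutputValue | [0]" \
    --output text
}

# Usage (requires AWS credentials):
#   stack_output appmesh-nodejs VpcId
```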

# Associate VPC

zone_id=$(aws route53 list-hosted-zones-by-name | jq -r '.HostedZones[] | select(.Name=="appmeshworkshop.hosted.local.") | .Id' | awk -F/ '{print $3}')

VPC2=$(jq -r '.VpcId' cfn-nodejs.json)

aws route53 associate-vpc-with-hosted-zone --hosted-zone-id "$zone_id" --vpc VPCRegion="$AWS_REGION",VPCId="$VPC2"

To summarize, we have deployed the following components:

- External Application Load Balancer: the ALB is forwarding the HTTP requests it receives to a group of EC2 instances in a target group

- Ruby Frontend: the Ruby application is responsible for assembling the UI. It runs on a group of EC2 instances that are configured in a target group and receive requests from the ALB mentioned above. To assemble the UI, the Ruby app has a dependency on two backend applications (described below)

- Crystal / Node.js Backends: These backend applications run on ECS/Fargate. They listen on port 3000 and provide clients with some internal metadata, such as the IP address and AZ where they are currently running

- There is a client side script running on the web app that reloads the page every few seconds

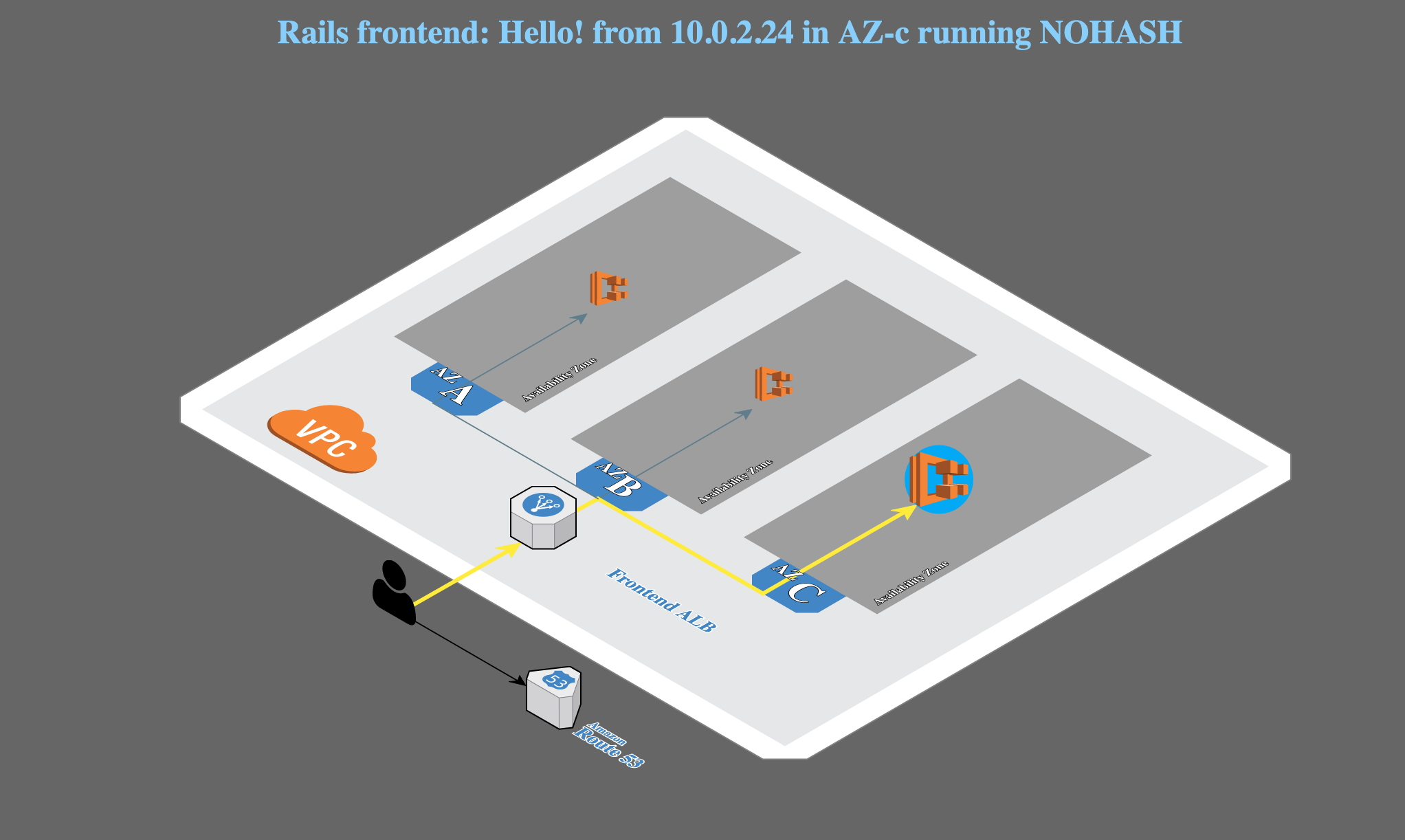

At this point, you can open the external load balancer URL in a browser and should see the complete application stack.

Setting up TLS communication

Virtual node certificate sources: ACM

In App Mesh, traffic encryption works between virtual nodes, and thus between Envoys in your service mesh. This means your application code is not responsible for negotiating a TLS-encrypted session. Instead, it allows the local proxy to negotiate and terminate TLS on your application’s behalf.

With ACM, you can host some or all of your Public Key Infrastructure (PKI) in AWS, and App Mesh will automatically distribute the certificates to the Envoys configured by your virtual nodes. App Mesh also automatically distributes the appropriate TLS validation context to other virtual nodes which depend on your service by way of a virtual service.
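To make the two halves of this concrete, the sketch below prints the kind of JSON an App Mesh virtual node spec uses for TLS: a listener certificate from ACM on the server side, and a client policy that trusts a certificate authority on the client side. The function names, the service name argument, and the ARNs are placeholders for illustration; the mesh.yml template in the repository remains the source of truth for what this walkthrough actually deploys.

```shell
# backend_node_spec CERT_ARN SERVICE_NAME
# Print a virtual node spec whose listener terminates TLS with an ACM
# certificate. Port 3000 is the backend port mentioned in this post.
backend_node_spec() {
  cat <<EOF
{
  "listeners": [
    {
      "portMapping": { "port": 3000, "protocol": "http" },
      "tls": {
        "mode": "STRICT",
        "certificate": { "acm": { "certificateArn": "$1" } }
      }
    }
  ],
  "serviceDiscovery": {
    "awsCloudMap": {
      "namespaceName": "appmeshworkshop.hosted.local",
      "serviceName": "$2"
    }
  }
}
EOF
}

# client_tls_policy CA_ARN
# Print a backendDefaults fragment that makes a client node require TLS
# and validate the server certificate against the given CA.
client_tls_policy() {
  cat <<EOF
{
  "clientPolicy": {
    "tls": {
      "enforce": true,
      "validation": {
        "trust": { "acm": { "certificateAuthorityArns": ["$1"] } }
      }
    }
  }
}
EOF
}
```

The output of backend_node_spec could be passed to `aws appmesh update-virtual-node` through its --spec option, while the client_tls_policy fragment belongs under backendDefaults in the client node's spec.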

In the step below, we create a CA certificate and a wildcard certificate for *.appmeshworkshop.hosted.local. The front end Ruby application uses the CA certificate to validate the backends during the TLS handshake. The backends act as the server and present the wildcard certificate.

aws cloudformation deploy --template-file acm.yml --stack-name appmesh-acm --capabilities CAPABILITY_IAM

Deploying App Mesh components

aws cloudformation deploy --template-file mesh.yml --stack-name appmesh-components --capabilities CAPABILITY_IAM

Next, run the frontend-envoy.sh script to create an SSM document that defines the actions SSM will perform on the managed instances. SSM documents are written in JavaScript Object Notation (JSON) or YAML, and associations are used to apply the document to the Ruby instances.

sh frontend-envoy.sh

We have now added the services to App Mesh, and it’s time to test the application.

Test the application

Let’s curl the external load balancer. The response shows the Envoy proxy intercepting the traffic.

curl -v $(jq -r .ExternalLoadBalancerDNS cfn-crystal.json)

OUTPUT:

Rebuilt URL to: ExtLB-appmesh-frontend-crystal-1689116176.eu-west-2.elb.amazonaws.com/

* Trying 3.9.15.217...

* TCP_NODELAY set

* Connected to ExtLB-appmesh-frontend-crystal-1689116176.eu-west-2.elb.amazonaws.com (3.9.15.217) port 80 (#0)

> GET / HTTP/1.1

> Host: ExtLB-appmesh-frontend-crystal-1689116176.eu-west-2.elb.amazonaws.com

> User-Agent: curl/7.61.1

> Accept: */*

>

< HTTP/1.1 200 OK

< Date: Sat, 03 Oct 2020 15:19:57 GMT

< Content-Type: text/html; charset=utf-8

< Transfer-Encoding: chunked

< Connection: keep-alive

< x-frame-options: SAMEORIGIN

< x-xss-protection: 1; mode=block

< x-content-type-options: nosniff

< etag: W/"c5625f8ec4f17d5fe9a19478a061914c"

< cache-control: max-age=0, private, must-revalidate

< x-request-id: e12dd957-9faf-95a9-8206-1e9dacaf4d07

< x-runtime: 0.098909

< server: envoy

< x-envoy-upstream-service-time: 99

<

Notice the server: envoy header in the response from the external load balancer. The applications are now set up with App Mesh, and the header indicates that Envoy is intercepting the traffic when a request is made.
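The same check can be scripted rather than read by eye. The sketch below uses hypothetical function names; it fetches only the response headers and looks for a server header whose value is envoy.

```shell
# has_envoy_server_header: read HTTP response headers on stdin and succeed
# if one of them is "server: envoy" (case-insensitive).
has_envoy_server_header() {
  tr -d '\r' | grep -qi '^server:[[:space:]]*envoy$'
}

# check_envoy URL: request URL, discard the body, and inspect the headers.
check_envoy() {
  curl -s -o /dev/null -D - "$1" | has_envoy_server_header
}

# Usage, with the load balancer DNS name from the stack outputs:
#   check_envoy "http://$(jq -r .ExternalLoadBalancerDNS cfn-crystal.json)" \
#     && echo "response was served through Envoy"
```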

We were also able to encrypt the traffic from our front end application to the backends using a certificate from ACM.
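One way to gain some confidence that TLS is actually being negotiated is to look at Envoy's ssl.handshake counters, which Envoy exposes through its admin interface. The sketch below assumes the admin interface is reachable on localhost port 9901 (the App Mesh Envoy image default) from wherever the command is run, such as a front end EC2 instance. The function name is hypothetical.

```shell
# sum_ssl_handshakes: read Envoy stats text on stdin and print the total of
# all counters whose name ends in "ssl.handshake".
sum_ssl_handshakes() {
  awk -F': ' '$1 ~ /ssl\.handshake$/ { total += $2 } END { print total + 0 }'
}

# Usage on a host running the Envoy proxy (port 9901 is an assumption):
#   curl -s http://localhost:9901/stats | sum_ssl_handshakes
```

A total that grows as requests flow through the application suggests that TLS sessions are being established. A total of zero suggests traffic is not encrypted or that the stats were read from the wrong proxy.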

Conclusion

This solution shows the ease of using App Mesh for connecting, securing, and observing your cross-compute, cross-cluster, and cross-VPC workloads. When customers are tasked with adopting new technology, they ask us how to connect and discover services across different clusters and VPCs in an easy way. This solution addresses that problem using VPC peering and Cloud Map.

Clean Up

To tear down the environment, execute the following commands:

aws cloudformation delete-stack --stack-name appmesh-components

aws cloudformation delete-stack --stack-name appmesh-acm

aws cloudformation delete-stack --stack-name appmesh-nodejs

aws cloudformation delete-stack --stack-name appmesh-frontend-crystal
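Because delete-stack returns before the deletion finishes, a later stack may still be referenced by an earlier one when its own deletion starts. A cautious variant, sketched below with a hypothetical function name, waits for each stack to be gone before deleting the next, in the same order as the commands above.

```shell
# teardown: delete the four stacks in order, waiting for each deletion to
# complete before starting the next one.
teardown() {
  for stack in appmesh-components appmesh-acm appmesh-nodejs appmesh-frontend-crystal; do
    echo "deleting $stack"
    aws cloudformation delete-stack --stack-name "$stack" || return 1
    aws cloudformation wait stack-delete-complete --stack-name "$stack" || return 1
  done
}

# Usage (requires AWS credentials):
#   teardown
```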

Next Steps

Here are a few links that you can check out for more hands-on tutorials. Also, please refer to the AWS App Mesh documentation for more information about the service, as well as the App Mesh user guide, which provides sections on Getting Started, Best Practices, and Troubleshooting.