AWS Developer Tools Blog

Tag: .NET

Working with dependency injection in .NET Standard: inject your AWS clients – part 2

In part 1 of this blog post, we explored using the lightweight dependency injection (DI) provided by Microsoft.Extensions.DependencyInjection. By itself, this is great for libraries and small programs, but if you’re building a nontrivial application, you have other problems to contend with: You might have complex configuration needs (development versus production, multiple sources, etc.). How […]

Working with dependency injection in .NET Standard: inject your AWS clients – part 1

Dependency injection (DI) is a central part of any nontrivial application today. .NET developers can use libraries like Ninject to implement inversion of control (IoC) and, as of .NET Core 1.0 (specifically, .NET Standard 1.1), lightweight DI can be provided by Microsoft.Extensions.DependencyInjection. This was used primarily in the context of developing .NET Core web applications, but it can be […]

AWS CDK for .NET

The AWS Cloud Development Kit (AWS CDK) Developer Preview now has support for .NET! The AWS CDK is a software development framework to define cloud infrastructure as code and provision it through AWS CloudFormation. This provides familiarity, IDE and tools support, and flexibility that are unavailable when using static text definitions. […]

Amazon S3 Select Support in the AWS SDK for .NET

We are releasing support for Amazon S3 Select in the AWS SDK for .NET. This feature enables developers to run simple SQL queries against objects in Amazon S3. Today, if you’re frequently pulling entire objects to use portions of them, this functionality could dramatically improve performance. S3 Select works on objects stored in CSV format or JSON […]

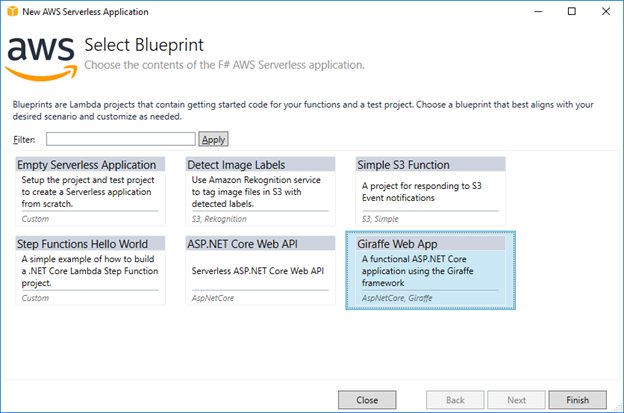

F# Tooling Support for AWS Lambda

F# is a functional language that runs on .NET and enables you to use packages written in other .NET languages, like the AWS SDK for .NET that’s written in C#. Today we have released a new version of the AWS Toolkit for Visual Studio 2017 with support for writing AWS Lambda functions in F#. These […]

Serverless ASP.NET Core 2.0 Applications

In our previous post, we announced the release of the .NET Core 2.0 AWS Lambda runtime and new versions of our .NET tooling to help you develop .NET Core 2.0-based serverless applications. Also, with the new .NET Core 2.0 Lambda runtime, we’ve released our ASP.NET Core NuGet Package, Amazon.Lambda.AspNetCoreServer, for general availability. Version 2.0.0 of […]

AWS Lambda .NET Core 2.0 Support Released

Today we’ve released the highly anticipated .NET Core 2.0 AWS Lambda runtime that is available in all Lambda-supported regions. With .NET Core 2.0, it’s easier to move existing .NET Framework code to .NET Core with the much larger API defined in .NET Standard 2.0, which .NET Core 2.0 implements. Using Visual Studio 2017: The easiest […]

Deploy an Amazon ECS Cluster Running Windows Server with AWS Tools for PowerShell – Part 1

This is a guest post from Trevor Sullivan, a Seattle-based Solutions Architect at Amazon Web Services (AWS). In this blog post, Trevor shows you how to deploy a Windows Server-based container cluster using the AWS Tools for PowerShell. Building and deploying applications on the Windows Server platform is becoming a significantly lighter-weight process. Although you […]

Writing and Archiving Custom Metrics using Amazon CloudWatch and AWS Tools for PowerShell

This is a guest post from Trevor Sullivan, a Seattle-based Solutions Architect at Amazon Web Services (AWS). Since 2004, Trevor has worked intimately with Microsoft technologies, including PowerShell since its release in 2006. In this article, Trevor takes you through the process of using the AWS Tools for PowerShell to write and export metrics data […]

Deploying .NET Web Applications Using AWS CodeDeploy with Visual Studio Team Services

Today’s post is from AWS Solutions Architect Aravind Kodandaramaiah. We recently announced the new AWS Tools for Microsoft Visual Studio Team Services. In this post, we show you how you can use these tools to deploy your .NET web applications from Team Services to Amazon EC2 instances by using AWS CodeDeploy. We don’t cover setting up the tools in […]