AWS for Industries

Enabling mainframe automated code build and deployment for financial institutions using AWS and Micro Focus solutions

Financial institutions use mainframes for critical applications, batch data processing, online transaction processing, and mixed concurrent workloads. These workloads have non-functional requirements such as performance, security, and resource availability that must be met even in development environments. However, resources and parallelism can become constrained while new programs are being developed and tested.

This blog post presents a solution to the resource availability issue in the COBOL development process, using continuous integration/continuous delivery (CI/CD) services from AWS connected to an IDE, such as Eclipse or Visual Studio. In the same development pipeline, the user can connect the Micro Focus solution called Enterprise Developer to compile the code and run unit and functional tests.

Micro Focus is an Advanced Technology Partner in the AWS Partner Network. With AWS and Micro Focus solutions, financial institutions can migrate mainframe workloads to AWS or deploy the Micro Focus cloud solutions portfolio.

Benefits for financial institutions

The combined AWS and Micro Focus approach to agile development offers financial institutions the following benefits, even with legacy programming languages such as COBOL:

- Possibility of creating a CI/CD pipeline outside the legacy mainframe environment for each project.

- EC2 instances can run as Spot Instances or with On-Demand Capacity Reservations for cost reduction.

- Greater autonomy for parallel development of new programs and resources, avoiding deadlocks.

- Improved developer productivity.

- Possibility of horizontally scaling the number of development teams.

- Reduction of a significant amount of MIPS used, reducing mainframe infrastructure costs.

- Test automation: possibility of functional and unit testing outside the mainframe environment.

- Automated code review and impact analysis using Micro Focus Analyzer.

- Faster time-to-market (TTM) with agile development.

DevOps and CI/CD using AWS services

AWS provides a suite of flexible services designed to enable financial institutions to create and distribute products faster and more securely using best practices and DevOps. These services simplify infrastructure provisioning and management, application code deployment, automation of software release processes, and monitoring the performance of your application and infrastructure.

DevOps is the combination of cultural philosophies, practices, and tools that enhances a financial institution’s ability to deliver applications and services at high speed, optimizing and improving products faster than organizations that use traditional software development and infrastructure management processes. This speed enables financial institutions to better serve their customers and compete more effectively in the market.

AWS Developer Tools help you safely store and control the source code of your application, and automatically create, test, and deploy applications on AWS. Figure 1 shows the software release steps that we used in our solution.

Figure 1 – Software release steps

1. AWS CodeCommit is a fully managed source control service that hosts protected Git-based repositories. It allows developers to easily collaborate on code in a secure and highly scalable ecosystem. AWS CodeCommit eliminates the need to operate your own source control system or worry about infrastructure scalability. With the AWS CodeCommit service, COBOL developers can securely store anything from source code to binary files. Another advantage is the ability to use the Eclipse or Visual Studio IDEs to make code changes to an AWS CodeCommit repository. Integration with these toolkits is designed to work with Git credentials and an AWS Identity and Access Management (IAM) user.

2. AWS CodeBuild is a fully managed service that compiles source code, runs tests, and produces ready-to-deploy software packages. With AWS CodeBuild, the COBOL developer does not have to provision, manage, and scale their own build servers. AWS CodeBuild scales continuously and processes multiple builds at the same time, so builds do not wait in a queue. Optionally, during the CodeBuild process, the user may include third-party tools for testing code quality, such as SonarQube. This option avoids unnecessary compilation of unapproved code.

3. AWS CodeDeploy automates code deployments to any instance, including Amazon EC2 instances and on-premises servers. AWS CodeDeploy enables the rapid launch of new features, helps you avoid downtime during COBOL application deployment, and handles the complexity of updating applications.

4. AWS CodePipeline is a continuous integration and continuous delivery service for fast and reliable application and infrastructure updates. CodePipeline builds, tests, and deploys code whenever a code change occurs, according to the release process models you define. This enables you to make features and updates available quickly and reliably.

5. Amazon CloudWatch is a monitoring and observability service built for DevOps engineers, developers, and other operations roles. CloudWatch provides data and actionable insights to monitor applications, respond to system-wide performance changes, optimize resource utilization, and gain a unified view of operational health throughout the process of developing new programs, modules, or features in COBOL.

Micro Focus Enterprise Developer

Micro Focus Enterprise Developer provides a contemporary development suite for mainframe application development and maintenance, regardless of whether the target deployment is inside or outside the mainframe. With this architecture, COBOL programs can be developed outside the mainframe, which gives COBOL developers more autonomy and reduces mainframe MIPS/MSU consumption.

Micro Focus Enterprise Developer is for financial institutions looking to develop and modernize mainframe applications in a productive, Windows-based development environment, such as a Windows instance on Amazon EC2. Developers can choose between a Visual Studio or an Eclipse-based IDE, and development and testing tools are provided for all target environments currently supported by Micro Focus.

Micro Focus’ product benefits include:

1. Full desktop application development lifecycle support: From initial application design to unit analysis, development, compilation, testing, and debugging, with support for COBOL and PL/I (Programming Language One).

2. Code analysis and pattern verification: Integrated directly into the IDE (Figure 2) at the point of change, so developers can make changes to existing programs with greater confidence.

Figure 2 – Compatible IDEs (Eclipse and Visual Studio)

3. Mainframe source control integration: Including Micro Focus ChangeMan ZMF. Developers have full access to tools and projects inside and outside the mainframe from a single development environment.

4. Effective teamwork and collaboration: Application work grouping enables developers to share source code, data, and program executables. This ensures secure, centralized management of teams and applications, and greatly simplifies the task of setting up a shared development environment for multiple users.

5. Comprehensive mainframe compatibility: Allows mainframe applications to be developed and tested on the Windows operating system without relying on the mainframe. Support is provided for various IBM mainframe COBOL dialects, including Enterprise COBOL 6.2.

6. Extensive support for mainframe data: For editing, accessing and transforming different types of mainframe data. Developers can locally access their own datasets such as QSAM and VSAM, Generation Data Groups (GDGs), IMSDB, and DB2 database emulators for testing.

7. Efficient application modernization: Tools and processes to support application modernization, extending access via J2EE, COM, Web Services, and SOA.

Solution overview

The user can download the AWS Toolkit for Eclipse to connect to the AWS CodeCommit repository, authenticating with the access key and Git credentials of their AWS IAM user. Once the toolkit is installed and configured, the developer can clone a CodeCommit repository in Eclipse or create a CodeCommit repository from Eclipse (Figure 3) via the AWS Explorer tab.

Figure 3 – CodeCommit repository created via Eclipse IDE

The automated build process uses an EC2 server as its environment. To launch that server and configure it automatically, the process uses launch templates. Launch templates allow you to store launch parameters so that you do not have to specify them each time you launch an instance. For example, a launch template might contain the operating system, instance type, permissions, and network settings that you typically use to launch instances. Figure 4 shows an example of the template used in the process. An example buildspec for this automated build process is available here.

Figure 4 – Instance launch template
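To make the launch template idea concrete, the following is a minimal sketch of how such a template could be described in Python. It assumes the boto3 EC2 client and its `create_launch_template` call; every identifier passed in (AMI ID, instance profile, subnet, security group) is a hypothetical placeholder, not a value from the solution described here.

```python
def build_launch_template_request(name, ami_id, instance_type,
                                  instance_profile, subnet_id, security_group_ids):
    """Return keyword arguments shaped for boto3's ec2.create_launch_template()."""
    return {
        "LaunchTemplateName": name,
        "LaunchTemplateData": {
            # Operating system: an AMI with Enterprise Developer preinstalled (assumption).
            "ImageId": ami_id,
            "InstanceType": instance_type,
            # Permissions: the instance profile the build instance runs under.
            "IamInstanceProfile": {"Name": instance_profile},
            # Network settings: one interface in the chosen subnet and security groups.
            "NetworkInterfaces": [
                {
                    "DeviceIndex": 0,
                    "SubnetId": subnet_id,
                    "Groups": list(security_group_ids),
                }
            ],
        },
    }


# All values below are hypothetical placeholders.
request = build_launch_template_request(
    name="cobol-build",
    ami_id="ami-0example",
    instance_type="m5.large",
    instance_profile="cobol-build-profile",
    subnet_id="subnet-0example",
    security_group_ids=["sg-0example"],
)

# With boto3 installed and credentials configured, the request could be sent with:
#   import boto3
#   boto3.client("ec2").create_launch_template(**request)
```

Keeping the request as plain data makes it easy to review the operating system, instance type, permissions, and network settings before anything is created.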

Using the launch template described above, the process creates an EC2 instance as shown in Figure 5. This instance is used to compile the code and to emulate the mainframe environment. It has Micro Focus Enterprise Developer installed, which contains the COBOL compiler and Enterprise Server to perform the required unit and functional tests. The addendum to this blog post lists the main commands used to compile COBOL code.

Figure 5 – EC2 instance created with launch template

The process launches the EC2 instance using the launch template and waits until the new instance is connected to the AWS Systems Manager management platform. When the connection is complete, the process uses the Systems Manager Run Command capability to remotely begin the validation and compilation phase of the COBOL code. Figure 6 shows the log of this process in Amazon CloudWatch. The process is driven by code that runs on AWS Lambda, the AWS serverless compute platform. An example of this Lambda code is available here.

Figure 6 – EC2 instance execution log
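The launch, wait, and remote-command sequence described above can be sketched as follows. This is an illustrative outline under stated assumptions, not the Lambda code referenced in this post: it assumes boto3-style EC2 and Systems Manager clients passed in by the caller, and the choice of the `AWS-RunPowerShellScript` document, the polling interval, and the function name are assumptions.

```python
import time


def launch_and_build(ec2, ssm, template_name, commands,
                     poll_seconds=10, max_polls=60):
    """Launch one instance from a launch template, wait until it is
    registered with Systems Manager, then send the build commands."""
    reservation = ec2.run_instances(
        LaunchTemplate={"LaunchTemplateName": template_name},
        MinCount=1,
        MaxCount=1,
    )
    instance_id = reservation["Instances"][0]["InstanceId"]

    # Poll until the SSM agent on the new instance reports as online.
    for _ in range(max_polls):
        info = ssm.describe_instance_information(
            Filters=[{"Key": "InstanceIds", "Values": [instance_id]}]
        )
        if any(item.get("PingStatus") == "Online"
               for item in info["InstanceInformationList"]):
            break
        time.sleep(poll_seconds)
    else:
        raise TimeoutError(f"{instance_id} did not register with Systems Manager")

    # Run the build remotely; command output is also sent to CloudWatch Logs.
    response = ssm.send_command(
        InstanceIds=[instance_id],
        DocumentName="AWS-RunPowerShellScript",  # assumption: Windows build instance
        Parameters={"commands": list(commands)},
        CloudWatchOutputConfig={"CloudWatchOutputEnabled": True},
    )
    return instance_id, response["Command"]["CommandId"]
```

Inside a Lambda function, the two clients would typically be created with `boto3.client("ec2")` and `boto3.client("ssm")`. Passing them in as arguments keeps the logic testable without AWS access.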

Remote command execution, used to perform the COBOL code validation and compilation process, can send its records to Amazon CloudWatch. This keeps process execution records on the same platform, which unifies and simplifies monitoring and troubleshooting, as shown in Figure 7. An example for automating this process using a batch file is available here.

Figure 7 – Command execution log within EC2 instance

When the process is finished, the script sends the compiled files and processing logs to an Amazon Simple Storage Service (Amazon S3) bucket, where other processes can use them. These files are listed in Figure 8 below.

Figure 8 – S3 bucket with artifacts resulting from the process
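As an illustration of this upload step, the sketch below collects build outputs from a directory and sends them to a bucket. It assumes a boto3-style S3 client with `upload_file`; the key layout (`builds/<build-id>/...`) and the list of file extensions are hypothetical choices, not the ones used by the script in this post.

```python
from pathlib import Path


def upload_build_outputs(s3, output_dir, bucket, build_id,
                         suffixes=(".dll", ".lst", ".log")):
    """Upload compiled files and logs from output_dir; return the S3 keys used."""
    uploaded = []
    for path in sorted(Path(output_dir).iterdir()):
        # Only compiled artifacts and logs are kept; other files are skipped.
        if path.is_file() and path.suffix.lower() in suffixes:
            key = f"builds/{build_id}/{path.name}"  # hypothetical key layout
            s3.upload_file(str(path), bucket, key)
            uploaded.append(key)
    return uploaded
```

Grouping the keys under a per-build prefix lets later pipeline stages find all artifacts of one build with a single prefix listing.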

In the next step, the process waits for the user to perform unit and functional tests. The user connects with Micro Focus Enterprise Developer to the already running EC2 instance and sets up the emulation environment, including the newly compiled application version. To make this possible, the developer receives an email announcement (Figure 9) sent by Amazon Simple Notification Service (Amazon SNS) together with Amazon Simple Email Service (Amazon SES).

Figure 9 – Build and deploy process completion notification
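A notification of this kind could be published as in the following sketch. It covers only the Amazon SNS side and assumes a boto3-style SNS client and a topic that already has an email subscription; the topic ARN, message wording, and function name are hypothetical.

```python
def notify_build_complete(sns, topic_arn, project, instance_ip, succeeded):
    """Publish a build-result message to an SNS topic with email subscribers."""
    status = "succeeded" if succeeded else "failed"
    subject = f"COBOL build {status}: {project}"
    message = (
        f"The build for {project} has {status}.\n"
        f"Connect to the EC2 instance at {instance_ip} "
        "to run unit and functional tests."
    )
    return sns.publish(TopicArn=topic_arn, Subject=subject, Message=message)
```

Including the instance address in the message saves the developer a lookup in the console before starting the tests.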

One of the features CodePipeline provides is to pause pipeline execution pending user approval (Figure 10). Once the user approves, the pipeline continues to perform the next steps.

Figure 10 – Pipeline awaiting user approval
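Approval is usually given in the CodePipeline console, but it can also be scripted. The sketch below is one possible way to do that, assuming a boto3-style CodePipeline client: it looks for an action that carries an approval token in the pipeline state and approves it. The function name and the summary text are hypothetical.

```python
def approve_pending_action(codepipeline, pipeline_name, summary):
    """Approve the first action that is waiting for manual approval.

    Returns (stage_name, action_name), or None if nothing is pending.
    """
    state = codepipeline.get_pipeline_state(name=pipeline_name)
    for stage in state["stageStates"]:
        for action in stage.get("actionStates", []):
            # A token is present only while an approval request is open.
            token = action.get("latestExecution", {}).get("token")
            if token:
                codepipeline.put_approval_result(
                    pipelineName=pipeline_name,
                    stageName=stage["stageName"],
                    actionName=action["actionName"],
                    result={"summary": summary, "status": "Approved"},
                    token=token,
                )
                return stage["stageName"], action["actionName"]
    return None
```

Using `"Rejected"` as the status instead would stop the pipeline at the approval stage.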

All pipeline steps as well as their status and execution times are available for consultation and follow up in the CodePipeline console (Figure 11).

Figure 11 – Pipeline steps with execution status

Figure 12 shows each AWS service in the proposed architecture. The developer can use Git-compliant IDEs such as Eclipse or Visual Studio to make changes to COBOL code. As an alternative to local desktops, the developer can use an Amazon EC2 instance with the desired operating system, or Amazon WorkSpaces, a managed AWS desktop-as-a-service offering. In this case, each developer can have their own secure, scalable, and elastic desktop on which to install their preferred COBOL development IDEs.

Figure 12 – Proposed solution architecture

Below is the summary of the execution flow:

- User connects and commits changes to AWS CodeCommit repository.

- AWS CodePipeline starts build pipeline.

- AWS CodeBuild sends build instructions to an AWS Lambda function (addendum).

- AWS Lambda stores source code in an Amazon S3 bucket.

- AWS Lambda starts an Amazon EC2 instance.

- AWS Lambda sends build instructions to AWS Systems Manager (addendum).

- AWS Systems Manager sends the build instructions to the Amazon EC2 instance (addendum).

- The Amazon EC2 instance downloads the source code from the Amazon S3 bucket.

- The Amazon EC2 instance builds the artifacts and uploads them to the Amazon S3 bucket.

- The Amazon EC2 instance sends build status to AWS CodeBuild.

- AWS CodePipeline sends an email via Amazon SNS to inform the developer that the build is complete and to provide the IP address of the EC2 instance to connect to.

- AWS CodePipeline begins the deployment process.

- AWS CodeDeploy sends approved source code to S3 bucket.

The approved source code can then be sent back to the mainframe for the final recompilation, for example through the connection with Micro Focus ChangeMan ZMF. Another alternative is to use Micro Focus Enterprise Test Server for integration testing between programs before sending the code back to the mainframe.

Why use AWS for CI/CD in COBOL development?

A pillar of modern application development, Continuous Delivery expands upon Continuous Integration by deploying all code changes to a testing environment and/or a production environment after the build stage. When properly implemented, developers will always have a deployment-ready build artifact that has passed through a standardized test process.

Running on AWS, Continuous Delivery lets COBOL developers automate testing beyond just unit tests so they can verify application updates across multiple dimensions before deploying to customers. With AWS, it is easy and cost-effective to automate the creation and replication of multiple environments for testing, which was previously difficult to do on-premises.

The main advantages of developing COBOL applications on AWS are:

- Update applications/programs quickly;

- Reduce the impact of code changes;

- Standardize and automate delivery operations;

- Simplify infrastructure management;

- Operate and manage your infrastructure and development processes at scale;

- Build more effective teams under a DevOps cultural model, which emphasizes values such as ownership and accountability;

- Move quickly while retaining control and preserving compliance. You can adopt a DevOps model without sacrificing security by using automated compliance policies, fine-grained controls, and configuration management techniques.

In addition, the AWS Well-Architected Framework is designed to help cloud architects build infrastructure with the highest possible levels of security, performance, resiliency, and efficiency for their applications, based on five pillars (Table 1): Operational Excellence, Security, Reliability, Performance Efficiency, and Cost Optimization.

The AWS Well-Architected Framework provides a consistent approach for financial institutions to evaluate architectures and implement designs that scale over time and can meet the needs of the most demanding mainframe applications, in production or development environments.

Table 1 – Pillars of the AWS Well-Architected Framework

| Name | Description |
| --- | --- |
| Operational Excellence | The ability to run and monitor systems to deliver business value and to continually improve supporting processes and procedures. |
| Security | The ability to protect information, systems, and assets while delivering business value through risk assessments and mitigation strategies. |
| Reliability | The ability of a system to recover from infrastructure or service disruptions, dynamically acquire computing resources to meet demand, and mitigate disruptions. |
| Performance Efficiency | The ability to use computing resources efficiently to meet system requirements, and to maintain that efficiency as demand changes and technologies evolve. |
| Cost Optimization | The ability to run systems to deliver business value at the lowest price point. |

Operational Excellence

Of the six design principles for operational excellence in AWS, at least three were used in the proposed solution:

- Perform operations as code: By running the COBOL development environment for mainframe on AWS, you can apply the same engineering discipline that you use for application code to your entire environment. You can script your operations procedures and automate their execution by triggering them in response to events. For automation pipelines, infrastructure as code, and CI/CD, AWS CloudFormation describes and provisions resources, and Amazon Machine Images (AMIs) can preconfigure Enterprise Developer on AWS instances.

- Make frequent, small, reversible changes: Design development workloads so that components can be updated regularly, increasing the flow of beneficial changes into your workload. Make changes in small increments that can be reversed if they fail, which aids in the identification and resolution of issues introduced to your COBOL development environment.

- Anticipate failure: With the complete pipeline running on AWS together with the Micro Focus solutions, you can test your failure scenarios and validate your understanding of their impact. Test your response procedures to ensure they are effective and that teams are familiar with their execution.

Security

Security is AWS’s top priority, and one of the advantages of the AWS Cloud is that financial institutions inherit best practices, policies, and operational processes designed to meet their security requirements.

The COBOL developer, rather than just focusing on protection of a single outer layer, can apply a defense-in-depth approach with other security controls like Amazon Virtual Private Cloud (VPC), subnets, and network access control lists (ACL).

Because Enterprise Developer integrates with the AWS Cloud through AWS IAM, the user can implement the principle of least privilege and enforce separation of duties with appropriate authorization for each interaction with AWS resources. In addition, the user can leverage full auditing via AWS CloudTrail and notifications with Amazon CloudWatch alarms.

Reliability

A development environment that closely mirrors the production environment must be reliable. To achieve this, the environment must have a well-planned foundation and monitoring in place, with mechanisms for handling changes in demand or requirements. The system should be designed to detect failure and automatically heal itself.

For change management, AWS CloudTrail records AWS API calls for your account and delivers log files to you for auditing. AWS Auto Scaling provides automated demand management for a deployed workload. Amazon CloudWatch provides the ability to alert on metrics, including custom metrics. Amazon CloudWatch also has a logging feature that can be used to aggregate log files from your resources.

By setting up a continuous integration and continuous delivery (CI/CD) pipeline on AWS for COBOL, the user can stop guessing capacity, which is a common cause of failure in on-premises systems due to resource saturation. On AWS, the user can monitor demand and system utilization, and automate the addition or removal of resources to maintain the optimal level to satisfy demand without over- or under-provisioning.

Performance Efficiency

Of the five design principles for performance efficiency in AWS, the COBOL developer can take advantage of at least three:

- Democratize advanced technologies: Technologies that are difficult to implement can become easier to consume by pushing that knowledge and complexity into the cloud vendor’s domain. In AWS, these technologies become services that your development team can consume. This lets your team focus on product development rather than resource provisioning and management.

- Go global in minutes: Easily deploy the development pipeline in multiple AWS Regions around the world with just a few clicks. This provides lower latency and a better experience for developers at minimal cost.

- Experiment more often: With virtual and automatable resources, the user can quickly carry out comparative testing using different types of instances or configurations.

Cost Optimization

Using the appropriate instances and resources for your workload is key to cost savings. AWS offers on-demand, pay-as-you-go pricing, enabling you to obtain the best return on your investment for each specific development case. AWS services do not have complex dependencies or licensing requirements, so you can get exactly what you need to build innovative, cost-effective solutions using the latest technology.

Amazon EC2 and Amazon S3 are building-block AWS services, whereas AWS CodeCommit, AWS CodeBuild, AWS CodeDeploy, and AWS CodePipeline are managed services. By selecting the appropriate building blocks and managed services, the COBOL developer can optimize the development workload for cost. In the proposed architecture, using managed services for CI/CD makes it possible to reduce or remove much of the administrative and operational overhead, freeing developers to work on new applications.

Another way to control costs is to apply tags to your AWS resources (such as EC2 instances or S3 buckets). AWS then generates a cost and usage report that breaks down your usage by tag. You can apply tags that represent organization categories (such as cost centers, programs, projects, workload names, or owners) to organize your costs across multiple services.
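As a small illustration of tagging, the sketch below builds a set of tags and applies them to a build instance. It assumes a boto3-style EC2 client with `create_tags`; the tag keys (`CostCenter`, `Project`, `Owner`) and values are hypothetical examples of the organization categories mentioned above.

```python
def cost_allocation_tags(cost_center, project, owner):
    """Build a tag list in the Key/Value shape that AWS tagging calls expect."""
    pairs = {"CostCenter": cost_center, "Project": project, "Owner": owner}
    return [{"Key": key, "Value": value} for key, value in pairs.items()]


def tag_build_instance(ec2, instance_id, tags):
    """Apply the tags to one EC2 instance."""
    ec2.create_tags(Resources=[instance_id], Tags=tags)


# Hypothetical values for illustration only.
tags = cost_allocation_tags("CC-1234", "cobol-modernization", "dev-team-a")
```

For the tags to appear in cost reports, they also need to be activated as cost allocation tags in the billing settings of the account.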

Resources

- All AWS resources of the demonstrated architecture (Figure 12) are available on GitHub, including the Lambda code, batch script, and buildspec files used in the automated build and deploy process.

- You also need to request a Micro Focus Enterprise Developer license to compile the code and run all unit and functional tests. For integration testing between programs, you can use the Micro Focus Enterprise Test Server.

- Want to work with Micro Focus? Click here to connect.

- Check back on the Industry blog for a continuation on this topic, addressing agile mainframe development, testing, and CI/CD with AWS and Micro Focus.

Micro Focus – APN Partner Spotlight

Micro Focus is an AWS Competency Partner. They enable financial institutions to utilize new technology solutions while maximizing the value of their investments in critical IT infrastructure and business applications.


Addendum

COBOL compilation instructions

Following are the commands the developer can use to compile COBOL programs via command line using Micro Focus Enterprise Developer. All commands have been entered into the CodeBuild build script:

For Windows:

cobol <program-name>.cbl,,, preprocess(EXCI) USE(diretivas_compilacao.dir);

The above command references the file named “diretivas_compilacao.dir”. This file must contain the compiler directives needed for COBOL/CICS programs, for example, those of the BANKDEMO server.

Below is the content of the diretivas_compilacao.dir file:

NOOBJ

DIALECT"ENTCOBOL"

COPYEXT"cpy,cbl"

SOURCETABSTOP"4"

COLLECTION"BANKTEST"

NOCOBOLDIR

MAX-ERROR"100"

LIST()

NOPANVALET NOLIBRARIAN

WARNING"1"

EXITPROGRAM"GOBACK"

SOURCEFORMAT"fixed"

CHARSET"EBCDIC"

CICSECM()

ANIM

ERRFORMAT(2)

NOQUERY

NOERRQ

STDERR

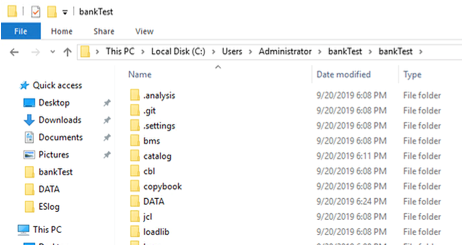

If using Windows, the developer needs to run the cobol command in the directory where the source code is. The developer needs to copy to the same directory the copybooks used by these programs (.cpy extension files), or point to the directory containing the copybooks through the COBCPY environment variable.

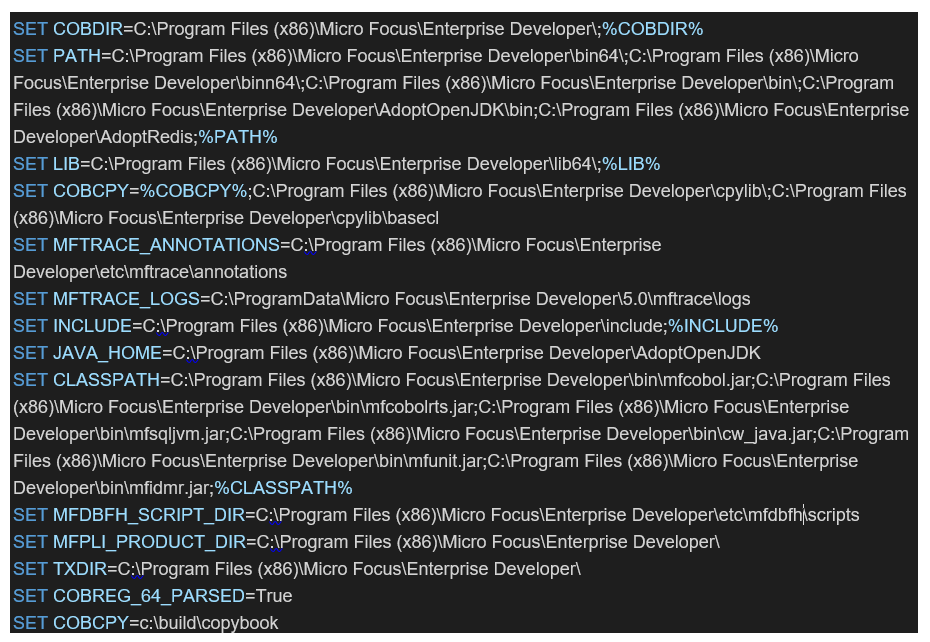

The build script contains the environment variables required to run the BANKDEMO server:

For some non-COBOL/CICS programs, such as BANKDEMO’s UDATECNV.CBL and SSSECUREP.CBL, simply run the commands without the “preprocess(EXCI)” directive. This directive is responsible for calling the CICS precompiler.
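The compile rules above (CICS programs use `preprocess(EXCI)`, other programs omit it, and copybooks are located through `COBCPY`) can be captured in a short helper. This is a sketch under stated assumptions: the helper names are hypothetical, and joining several copybook directories with the platform path separator is an assumption about how `COBCPY` is read.

```python
import os


def compile_command(program, directives_file="diretivas_compilacao.dir", cics=True):
    """Build the cobol command line for one program (name without extension)."""
    # CICS programs need the precompiler; plain COBOL programs skip it.
    preprocess = " preprocess(EXCI)" if cics else ""
    return f"cobol {program}.cbl,,,{preprocess} USE({directives_file});"


def build_environment(copybook_dirs, base_env=None):
    """Return an environment where COBCPY points at the copybook directories."""
    env = dict(os.environ if base_env is None else base_env)
    # Assumption: multiple directories are joined with the OS path separator.
    env["COBCPY"] = os.pathsep.join(copybook_dirs)
    return env


# The command would be run from the source directory, for example with:
#   import subprocess
#   subprocess.run(compile_command("UDATECNV", cics=False), shell=True,
#                  cwd=source_dir, env=build_environment([copybook_dir]), check=True)
```

For example, `compile_command("UDATECNV", cics=False)` produces `cobol UDATECNV.cbl,,, USE(diretivas_compilacao.dir);`.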

Commands for generating DLL files

The developer must execute the command “cbllink” to produce a dynamic link library file of programs. Example:

C:\>cbllink -d name_pgm.obj

The “-d” parameter indicates that a .DLL file is generated. The output from compilation “name_pgm.obj” will be used as input to the link.

After that, the developer needs to copy only the result of the link, i.e., the name_pgm.DLL file, to the destination directory (… \ loadlib).

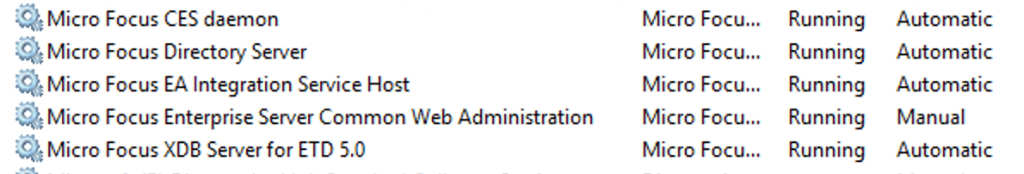
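The link-and-copy step can be sketched in the same way. The helper names are hypothetical, and the sketch assumes the DLL is written next to the .obj file in the build directory; the destination directory is passed in by the caller.

```python
import shutil
from pathlib import Path


def link_command(program):
    """Build the cbllink command that produces a DLL from the compiled .obj."""
    return f"cbllink -d {program}.obj"


def publish_dll(build_dir, program, loadlib_dir):
    """Copy only the linked DLL into the load library directory."""
    dll = Path(build_dir) / f"{program}.dll"
    target = Path(loadlib_dir)
    target.mkdir(parents=True, exist_ok=True)
    # copy2 keeps the file timestamps, which helps when comparing builds.
    return Path(shutil.copy2(dll, target))
```

Intermediate files such as the .obj stay in the build directory, so the load library contains only what the server needs to run.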

Commands to start Micro Focus services

After the files are compiled, you can start Micro Focus services and the BANKDEMO server on the EC2 instance to run the tests because Micro Focus Enterprise Developer already contains the Enterprise Server.

Micro Focus CES daemon and Directory Server services must be started:

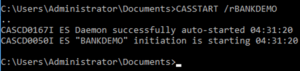

The command lines that activate the server, for example BANKDEMO, are entered in the build script. The general form of the command is: > casstart /r<name-of-server>. For the BANKDEMO example, the command is: > casstart /rBANKDEMO

This documentation gives more details on command lines.

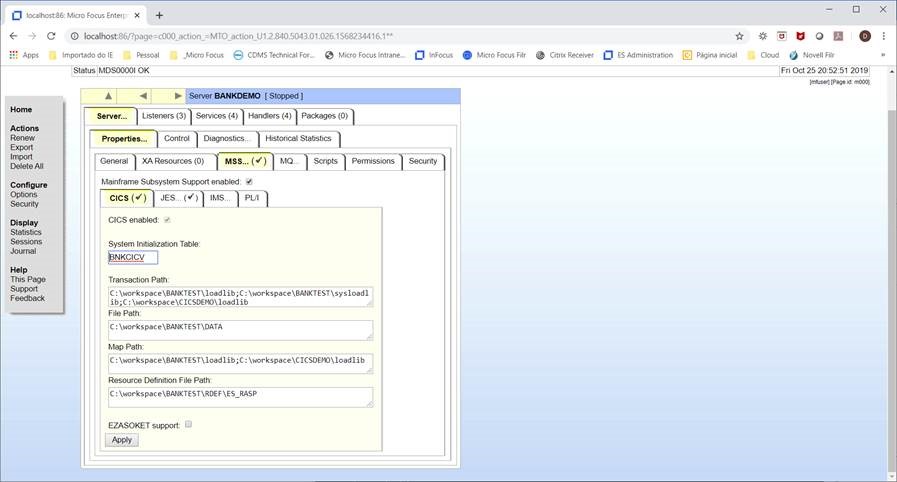

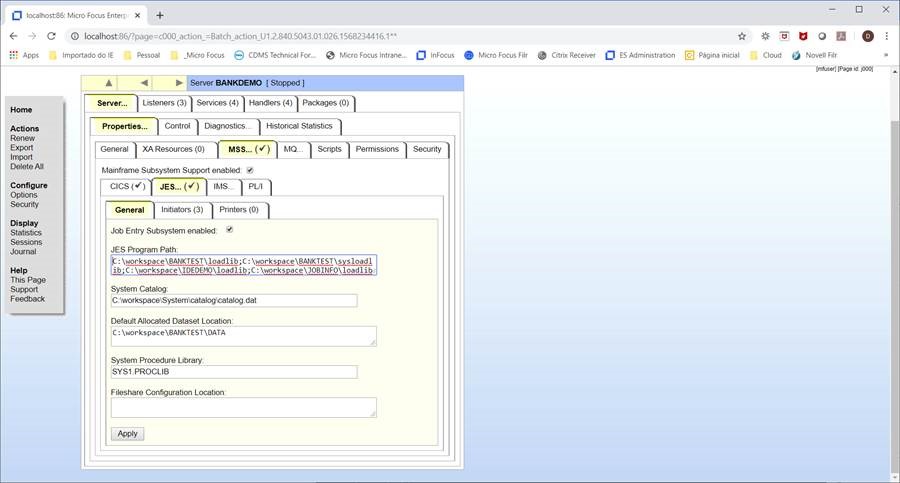

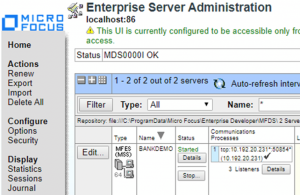

In the browser, check that the BANKDEMO server has started (https://localhost:86).

Set up the directory structure with the “Transaction Path” and “Map Path” fields for CICS programs and the “JES Program path” field for batch programs. In these fields, the developer needs to point to the directories where the programs’ .DLL files were copied.