Artificial Intelligence

Understanding Amazon Bedrock model lifecycle

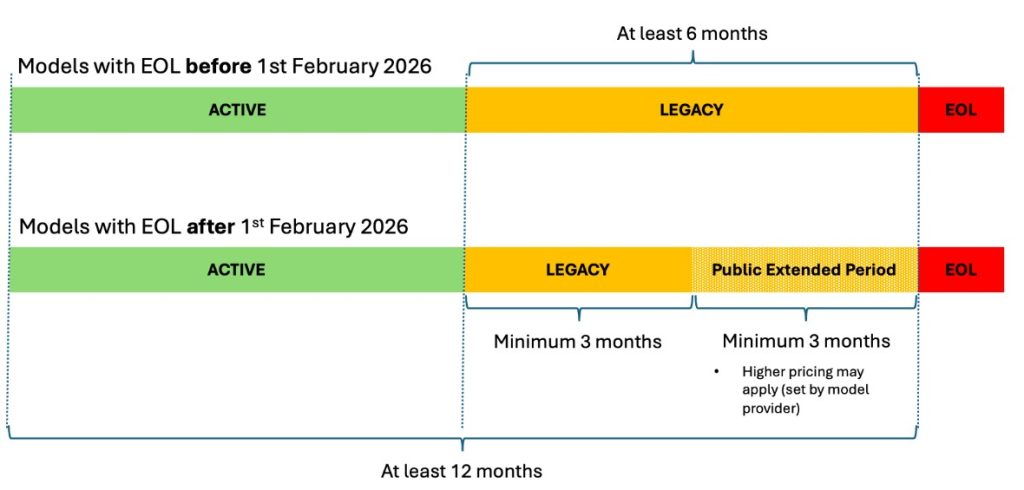

This post shows you how to manage foundation model (FM) transitions in Amazon Bedrock so that your AI applications remain operational as models evolve. We discuss the three lifecycle states, how to plan migrations with the new extended access feature, and practical strategies for transitioning your applications to newer models without disruption.

Accelerate Generative AI Inference with NVIDIA NIM Microservices on Amazon SageMaker

In this post, we walk through how customers can run generative artificial intelligence (AI) models, including large language models (LLMs), through the NVIDIA NIM integration with SageMaker. We demonstrate how this integration works and how you can deploy these state-of-the-art models on SageMaker while optimizing their performance and cost.

Llama 3.1 models are now available in Amazon SageMaker JumpStart

Today, we are excited to announce that the state-of-the-art Llama 3.1 collection of multilingual large language models (LLMs), which includes pre-trained and instruction-tuned generative AI models in 8B, 70B, and 405B sizes, is available through Amazon SageMaker JumpStart to deploy for inference. Llama is a publicly accessible LLM designed for developers, researchers, and businesses to build, experiment with, and responsibly scale their generative AI ideas. In this post, we walk through how to discover and deploy Llama 3.1 models using SageMaker JumpStart.

Deploy thousands of model ensembles with Amazon SageMaker multi-model endpoints on GPU to minimize your hosting costs

Artificial intelligence (AI) adoption is accelerating across industries and use cases. Recent scientific breakthroughs in deep learning (DL), large language models (LLMs), and generative AI are allowing customers to use state-of-the-art solutions with near-human performance. These complex models often require hardware acceleration because it enables not only faster training but also faster inference […]