Artificial Intelligence

Deploy LLMs on Amazon EKS using vLLM Deep Learning Containers

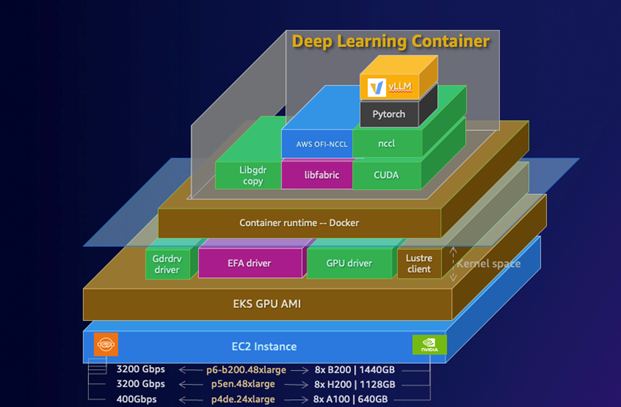

In this post, we demonstrate how to deploy the DeepSeek-R1-Distill-Qwen-32B model using AWS Deep Learning Containers (DLCs) for vLLM on Amazon EKS, showing how these purpose-built containers simplify deployment of the vLLM inference engine. This solution can help you address the infrastructure challenges of deploying LLMs while maintaining performance and cost-efficiency.

Security best practices to consider while fine-tuning models in Amazon Bedrock

In this post, we implemented secure fine-tuning jobs in Amazon Bedrock. Securing these jobs is crucial for protecting sensitive data and maintaining the integrity of your AI models. By following the best practices outlined here, including proper IAM role configuration, encryption at rest and in transit, and network isolation, you can significantly strengthen the security posture of your fine-tuning processes.

Use Amazon SageMaker Model Cards sharing to improve model governance

One of the tools available as part of ML governance is Amazon SageMaker Model Cards, which creates a single source of truth for model information by centralizing and standardizing documentation throughout the model lifecycle.

SageMaker model cards let you standardize how models are documented, giving you visibility into the lifecycle of a model, from design and building through training and evaluation. Model cards are intended to be a single source of truth for business and technical metadata about the model that can reliably be used for auditing and documentation purposes. Each card serves as a fact sheet for the model, which supports model governance.