AWS for M&E Blog

EngineLab AI: Production-ready AI for studios and creators on AWS

Studios face a critical dilemma: AI tools promise to accelerate production workflows, but adopting them means confronting real concerns about security, intellectual property (IP) protection, and production stability. This post introduces a solution designed to resolve that trade-off.

AI tools such as ComfyUI, an open source node-based interface for AI content generation, let you graphically design and orchestrate complex AI-driven functionality by connecting models, processing tools, and creative operations into production-grade workflows. But as often happens with nascent technology that promises to accelerate production or drive new efficiencies, these tools can also introduce risk. The rapidly evolving AI landscape creates challenges around standardization, pipeline integration, and application stability. Security concerns are more complex still: you need to understand the provenance of the models you use in order to meet legal requirements, and you need to keep the intellectual property flowing through AI workflows private, in line with the strict security standards expected when working with IP. Without proper vetting and approval of models and related artifacts, unauthorized and potentially malicious tools and code can be introduced into working environments.

Historically, weighing these benefits against the risks has often kept creators from bringing AI into production.

EngineLab, an AWS media and entertainment specialist partner, has launched a managed deployment platform called EngineLab AI. Built on AWS infrastructure, it packages AI apps like ComfyUI into a stable, secure, production-ready toolset. Purpose-built for the media and entertainment industry, it understands your workflows and puts powerful AI capabilities in the hands of every artist on your team—from the advanced authors of workflows to the general users wanting to benefit from defined processes.

“Studios are telling us they want to use AI in production, but they can’t accept the instability and risk that comes with it today. We’re building the platform that removes that barrier, pipelining AI tools into studio workflows with the security and control the industry demands.” – Sam Reid, Co-Founder and CEO, EngineLab

In this post, we show you how to solve these challenges with EngineLab AI: deploying directly into your AWS account for complete data sovereignty, tapping the global GPU resources provided by AWS for optimal availability and cost, and integrating security controls that protect your IP without sacrificing the creative flexibility your workflows demand.

Enabling stable, scalable AI workflows

Acquiring high-performance GPU hardware is getting harder and more expensive for studios. Component shortages, rising costs, and the overhead of maintaining on-premises infrastructure make it increasingly difficult to build and sustain the GPU capacity that AI workloads demand. Even when hardware can be acquired, studios face the challenge of keeping it stable and efficiently allocated across diverse projects and teams.

ComfyUI’s web-based architecture offers a key advantage over traditional remote desktop solutions. Typical cloud-based workstations use Virtual Desktop Infrastructure (VDI) sessions to stream the desktop from its remote location to a local client, including user interface interactions from keyboard, mouse, and Wacom tablet inputs. ComfyUI instead runs in a local web browser, with the compute-intensive processing handled server-side. Because the user interface isn’t sensitive to network latency in the way a streamed desktop is, compute resources can be provisioned in remote locations without affecting the artist’s experience.

EngineLab AI uses this flexibility to draw on the AWS Global Infrastructure, selecting AWS Regions (geographically isolated clusters of AWS data centers) with available capacity in Amazon Elastic Compute Cloud (Amazon EC2), the scalable AWS virtual server service that provides on-demand GPU instances for compute-intensive workloads. This gives EngineLab AI access to a global pool of GPU-powered Amazon EC2 instance types spanning a range of NVIDIA GPU architectures (including Blackwell, Ada Lovelace, Ampere, and others) with varying vCPU counts and memory configurations. Drawing on multiple Regions expands availability and reduces competition for fixed local hardware. Because Amazon EC2 instances are provisioned on demand, the solution also reduces fixed costs for AI workflows: studios access GPU resources as and when they need them.

This also means studios can match compute to the current task. Not every artist needs the equivalent of a high-end graphics card under their desk when cloud infrastructure can dynamically allocate appropriate resources for each job. GPU resources can also be partitioned across multiple users, further reducing costs without sacrificing performance. The result is stable access to GPU compute at scale, without the fixed costs and operational overhead of maintaining on-premises hardware.
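To make the idea of matching compute to the task concrete, here is a minimal sketch of a selection routine. It is not EngineLab AI's scheduler, which this post does not describe. The profile names, memory sizes, hourly prices, and availability flags are all hypothetical placeholders (not real EC2 instance types or AWS pricing), and the rule of choosing the cheapest available option with enough GPU memory in any Region is an assumption made for illustration.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class GpuOffer:
    """One hypothetical GPU option in one Region (all values are illustrative)."""
    profile: str          # made-up profile name, not a real EC2 instance type
    region: str           # Region where the option is offered
    gpu_memory_gib: int   # GPU memory available to the session
    hourly_cost: float    # placeholder price, not real AWS pricing
    available: bool       # whether capacity is currently free


def pick_offer(offers: list[GpuOffer], required_gib: int) -> Optional[GpuOffer]:
    """Return the cheapest available offer with enough GPU memory, or None."""
    candidates = [
        o for o in offers
        if o.available and o.gpu_memory_gib >= required_gib
    ]
    # Cheapest first; break ties by preferring the smaller GPU.
    return min(
        candidates,
        key=lambda o: (o.hourly_cost, o.gpu_memory_gib),
        default=None,
    )


# Hypothetical snapshot of what is on offer across three Regions.
OFFERS = [
    GpuOffer("small-gpu", "us-east-1", 16, 0.60, True),
    GpuOffer("medium-gpu", "us-east-1", 24, 1.10, False),  # no capacity right now
    GpuOffer("medium-gpu", "eu-west-1", 24, 1.20, True),
    GpuOffer("large-gpu", "us-west-2", 48, 2.40, True),
]

if __name__ == "__main__":
    # A job needing 20 GiB skips the too-small and the unavailable options.
    print(pick_offer(OFFERS, required_gib=20))
```

In this sketch, a job that needs 20 GiB of GPU memory is placed on the available medium profile in another Region rather than waiting for local capacity or paying for the largest GPU. That is the cost and availability argument made above in miniature.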

EngineLab AI: A managed platform for studios and creators

Building on the AWS global infrastructure, EngineLab has developed a managed platform designed from the ground up for media and entertainment, addressing the specific challenges studios face when bringing AI tools into production.

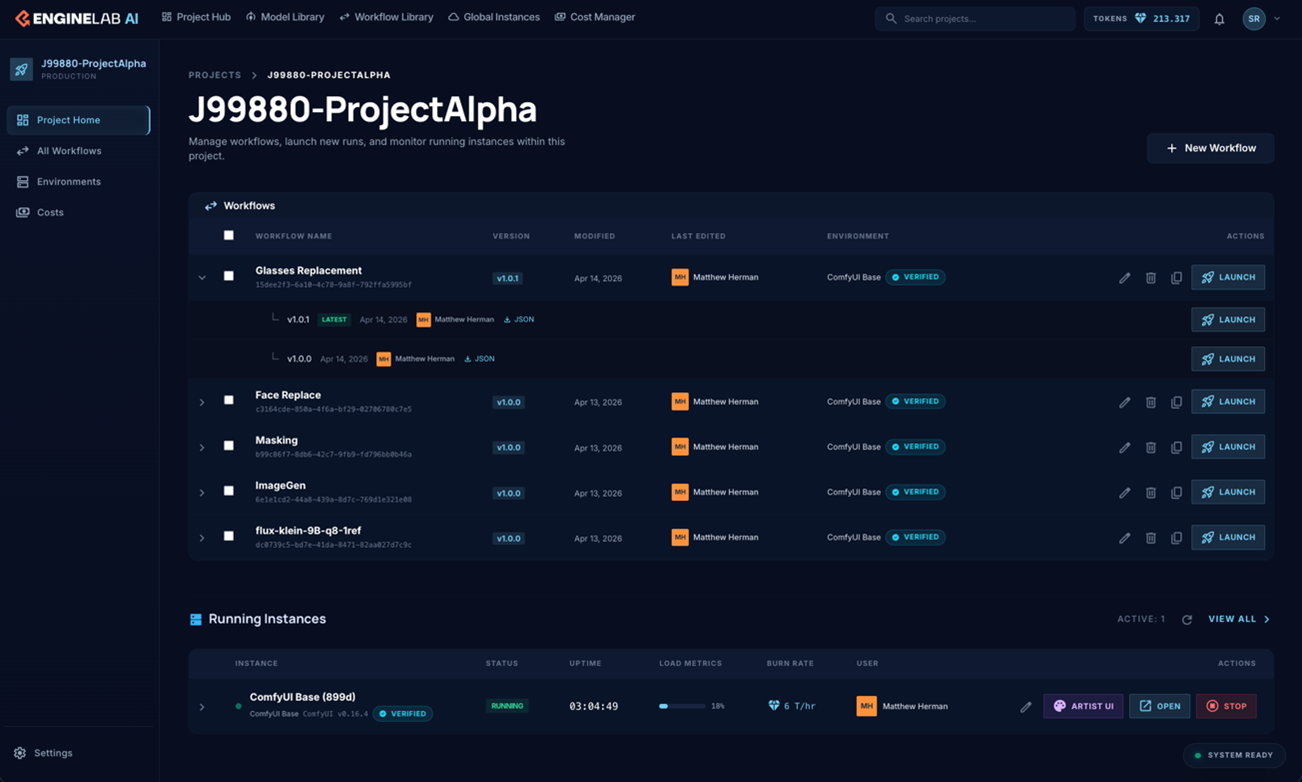

The project management interface provides a centralized view of your workflows, as shown in Figure 1. From here, administrators can track which workflows are active, monitor resource utilization, and manage artist access.

Figure 1: EngineLab AI’s project management interface showing how administrators track workflows, monitor resources, and manage artist access.

Instant sessions with zero complexity

Artists shouldn’t need to understand cloud infrastructure to use AI tools. With EngineLab AI, an artist simply selects an application and launches it. The solution handles everything underneath: provisioning the right compute, loading the right environment, and delivering a stable, ready-to-use session every time. No setup, no troubleshooting, no waiting. Studios organize work into projects, and artists launch the apps they need within them. The experience is consistent and reliable, which matters when working to a deadline, where a session must not fail to start or stop midway.

Artist UI: Expert workflows for every artist

Every studio has its ComfyUI experts (the technical power users) who build sophisticated workflows using complex node graphs. But at scale, artists typically want to benefit from those workflows without becoming technical experts themselves. The Artist UI bridges that gap by exposing basic endpoints, such as an input, a prompt, and an output. Artists can then upload an image, describe what they want, and get results back, powered by the expert workflows running underneath, without needing to navigate the node graph.

This also solves an important consideration for studios: protection of IP. Those custom workflows represent real competitive advantage, and without a layer between the workflow and the user, there’s nothing stopping a freelancer from taking them to their next job. The Artist UI acts as a boundary, giving the whole studio access to the capability without exposing the underlying work.
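The boundary described in this section can be sketched in a few lines. This is an illustration under stated assumptions, not EngineLab AI's implementation: the workflow fragment uses ComfyUI's API (JSON) format, where each node ID maps to a `class_type` and its `inputs`, while the `EXPOSED_FIELDS` mapping and the `build_request` helper are hypothetical names introduced here.

```python
import copy

# A tiny workflow fragment in ComfyUI's API (JSON) format: node id -> node.
# A real expert-authored workflow would contain many more nodes (model loader,
# sampler, save node, and so on); they are omitted to keep the sketch short.
WORKFLOW_TEMPLATE = {
    "1": {"class_type": "LoadImage", "inputs": {"image": ""}},
    "2": {
        "class_type": "CLIPTextEncode",
        # ["4", 1] links to output 1 of node "4" (a checkpoint loader, omitted).
        "inputs": {"text": "", "clip": ["4", 1]},
    },
}

# Hypothetical mapping from artist-facing fields to (node id, input name).
# Only these fields can be set from outside; the rest of the graph stays hidden.
EXPOSED_FIELDS = {
    "image": ("1", "image"),
    "prompt": ("2", "text"),
}


def build_request(template: dict, exposed: dict, values: dict) -> dict:
    """Fill the exposed inputs of a workflow and wrap it as a request body."""
    unknown = set(values) - set(exposed)
    if unknown:
        # Artists cannot reach into nodes the workflow author did not expose.
        raise KeyError(f"fields not exposed to artists: {sorted(unknown)}")

    workflow = copy.deepcopy(template)  # never mutate the shared template
    for field_name, value in values.items():
        node_id, input_name = exposed[field_name]
        workflow[node_id]["inputs"][input_name] = value

    # ComfyUI's HTTP API accepts a JSON body with the workflow under "prompt".
    return {"prompt": workflow}


if __name__ == "__main__":
    body = build_request(
        WORKFLOW_TEMPLATE,
        EXPOSED_FIELDS,
        {"image": "plate_0010.png", "prompt": "overcast sky, soft light"},
    )
    print(body["prompt"]["2"]["inputs"]["text"])
```

A server-side component could then submit the resulting body to a ComfyUI instance. Because the artist only ever supplies the exposed values, the node graph itself never has to leave the server.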

Data sovereignty: Security for you

The solution deploys exclusively into the customer’s own AWS account and environment, providing comprehensive control and data sovereignty. Customer data isn’t used for training: what a studio creates stays theirs, with no ambiguity about how it might be used. In an environment where many platforms are purposefully vague about data handling, EngineLab AI is purposefully explicit. This approach also addresses the security risks inherent in running community-developed AI workflows, including controls designed to help protect against unauthorized and potentially malicious code injection. For studios working with major clients who have strict data requirements, this is essential for production environments.

Model training: Secure environments, full control

The platform includes training apps that allow studios to integrate their own content and train fine-tuned foundation models as well as Low-Rank Adaptations (LoRAs), which train a small set of additional low-rank weights instead of updating the full model, making them faster and more cost-effective for style customization. Training runs within your platform environment, so training data never leaves your private account. Once trained, these custom models become directly available to ComfyUI workflows while the studio maintains full control over both the models and the data used to create them. This addresses an important need for studios that want the power of custom AI models without accepting the risk of their proprietary data being used to train models elsewhere or benefit competitors.
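A short calculation shows why LoRAs are cheaper to train than a full fine-tune. For a single weight matrix of shape `d_out × d_in`, LoRA keeps the original weights frozen and learns two small matrices of shapes `d_out × r` and `r × d_in`, where `r` is the rank. The layer size and rank below are illustrative values chosen for this sketch, not settings taken from EngineLab AI.

```python
def full_params(d_out: int, d_in: int) -> int:
    """Trainable weights when the whole d_out x d_in matrix is fine-tuned."""
    return d_out * d_in


def lora_params(d_out: int, d_in: int, rank: int) -> int:
    """Trainable weights for a LoRA update B @ A with B: d_out x r, A: r x d_in."""
    return rank * (d_out + d_in)


if __name__ == "__main__":
    d_out, d_in, rank = 4096, 4096, 16  # illustrative sizes only

    full = full_params(d_out, d_in)
    lora = lora_params(d_out, d_in, rank)

    print(f"full fine-tune: {full:,} trainable weights")   # 16,777,216
    print(f"LoRA (r={rank}): {lora:,} trainable weights")  # 131,072
    print(f"LoRA share:     {lora / full:.2%}")            # 0.78%
```

For this one layer, the LoRA trains under 1% of the weights a full fine-tune would. Savings on a complete model depend on which layers are adapted and at what rank.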

Full control: Access management and provenance

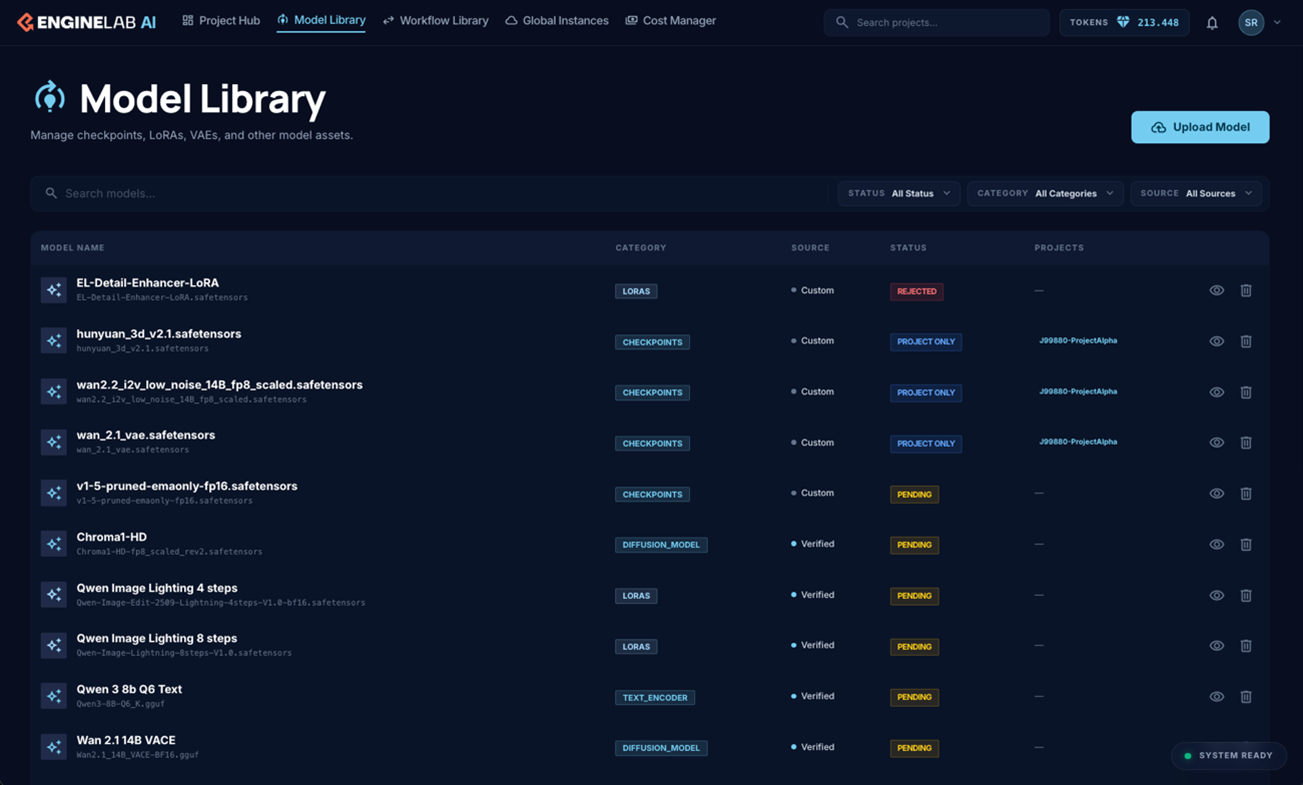

Administrators have granular control over the platform through a least-privilege model, in which users and resources are granted only the access they need to perform their specific tasks. Experts and administrators can upload and approve models, while other users retain restricted access. The system supports vendor-specific approvals, so that only approved models are used on specific projects, a crucial requirement for studios working with clients who have strict compliance requirements. Comprehensive audit trails track model usage per project for client reporting and compliance verification, while provenance tracking helps studios understand where models originate and exactly how they’re being used across the organization.

The AI Model Library interface in Figure 2 provides a centralized view to categorize, approve, and manage your models and LoRAs.

Figure 2: A screenshot of EngineLab AI’s AI Model Library management pane.
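The access model in this section combines three ideas: role-restricted approval, per-project allowlists, and an audit trail. The sketch below shows one way those pieces can fit together. It is a simplified illustration, not EngineLab AI's data model: the `ModelRegistry` class, the role names, and the log fields are all hypothetical.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Hypothetical roles that are allowed to approve models for a project.
APPROVER_ROLES = {"admin", "expert"}


@dataclass
class ModelRegistry:
    """Per-project model allowlist with an append-only audit log (illustrative)."""
    approvals: dict[str, set[str]] = field(default_factory=dict)  # project -> models
    audit_log: list[dict] = field(default_factory=list)

    def _record(self, action: str, actor: str, project: str, model: str, allowed: bool) -> None:
        self.audit_log.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "action": action,
            "actor": actor,
            "project": project,
            "model": model,
            "allowed": allowed,
        })

    def approve(self, actor: str, role: str, project: str, model: str) -> None:
        """Approve a model for one project; only approver roles may do this."""
        if role not in APPROVER_ROLES:
            raise PermissionError(f"role {role!r} cannot approve models")
        self.approvals.setdefault(project, set()).add(model)
        self._record("approve", actor, project, model, True)

    def request_model(self, actor: str, project: str, model: str) -> bool:
        """Check whether a model may be used on a project, logging the attempt."""
        allowed = model in self.approvals.get(project, set())
        self._record("use", actor, project, model, allowed)
        return allowed


if __name__ == "__main__":
    registry = ModelRegistry()
    registry.approve("lead-1", "admin", "project-a", "style-lora-v1")
    print(registry.request_model("artist-1", "project-a", "style-lora-v1"))  # True
    print(registry.request_model("artist-1", "project-b", "style-lora-v1"))  # False
```

Because approvals are keyed by project, a model cleared for one client's work is not automatically usable on another's. Every allowed or denied request also leaves a record that can feed client reporting.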

Pipeline integration: Compute across studio workflows

EngineLab brings a team with deep pipeline expertise and extensive experience in high-end creative workflows to the platform. Recognizing that artist time is paramount, the team is actively working to integrate ComfyUI and its related GPU and CPU compute directly into existing render farm workflows using AWS Deadline Cloud, a fully managed render farm service that automatically scales compute resources to match workflow demands. This integration allows the ComfyUI frontend to run on lightweight machines while heavy GPU tasks are offloaded to a render farm, decoupling the interface from the processing power. It is a natural progression that draws on EngineLab’s understanding of how tools need to integrate into production environments, handling the complexity so studios don’t have to.

“We’ve been partnering with EngineLab as they develop the platform. Our team already has strong ComfyUI expertise in-house, but having a managed, secure environment that lets us scale that across the whole studio—with the control and governance we need for client work—is exactly what we’ve been looking for.” – Sean Costelloe, Managing Director, Selected Works

Conclusion

EngineLab AI transforms ComfyUI from experimental tool to production-grade platform, addressing the critical challenges studios face when adopting AI tools. By deploying directly into a studio’s AWS account, the solution provides comprehensive data sovereignty and security while using the global GPU resources provided by AWS for optimal availability and pricing. Studios gain production-grade AI capabilities without the risks and costs of traditional approaches: stable, secure access to AI generation with the control required for client work, integrated into familiar workflows.

Learn more about the AWS infrastructure

EngineLab AI is built on proven AWS services designed for compute-intensive creative workloads:

- Amazon EC2 – Scalable virtual servers with GPU instances for AI and rendering workloads

- AWS Deadline Cloud – Managed render scheduling service for scaling compute resources

Next steps

For more information about AWS and how to get started using cloud infrastructure for your creative workflows, contact your AWS account team or visit AWS for Media & Entertainment.

Visit EngineLab AI to discover how your studio can leverage secure, scalable AI workflows on AWS.