
Dual-stack IPv6 architectures for AWS and hybrid networks

Introduction

An increasing number of organizations are adopting IPv6 in their environments, driven by public IPv4 address exhaustion, private IPv4 scarcity (especially within large-scale networks), and the need to provide service availability to IPv6-only clients. An intermediate step on the path to fully supporting IPv6 is a dual-stack IPv4/IPv6 design, which runs both versions of the IP protocol in parallel.

This blog post explores some of the dual-stack IPv6 architectures you can use today for AWS and hybrid networks. We go through the networking and content delivery services that support IPv6, focusing on the dual-stack architectures and constructs that enable the IPv6 adoption path for your AWS environment. For the full configuration options of each referenced service, we recommend following the documentation links in each section.

The following dual-stack architectures are in scope for this blog post:

- Dual-stack Amazon Virtual Private Cloud (VPC) and Elastic Compute Cloud (EC2) instances

- Dual-stack Internet connectivity for the Amazon VPC

- Dual-stack public endpoints for your applications using Elastic Load Balancing

- Dual-stack VPC connectivity with VPC peering

- Dual-stack VPC connectivity at scale using AWS Transit Gateway

- AWS VPN and Direct Connect in dual-stack hybrid networks

- Amazon CloudFront dual-stack distributions

We assume you are familiar with the Amazon VPC constructs and with the IPv4 functionality and configuration options for the services mentioned. You should also be familiar with the IPv6 protocol definition, its address types, and its configuration mechanisms. We discuss dual-stack implementations that use both IPv4 and IPv6, focusing on interoperability with IPv4-only networks and on the additional functionality you gain by enabling dual-stack support.

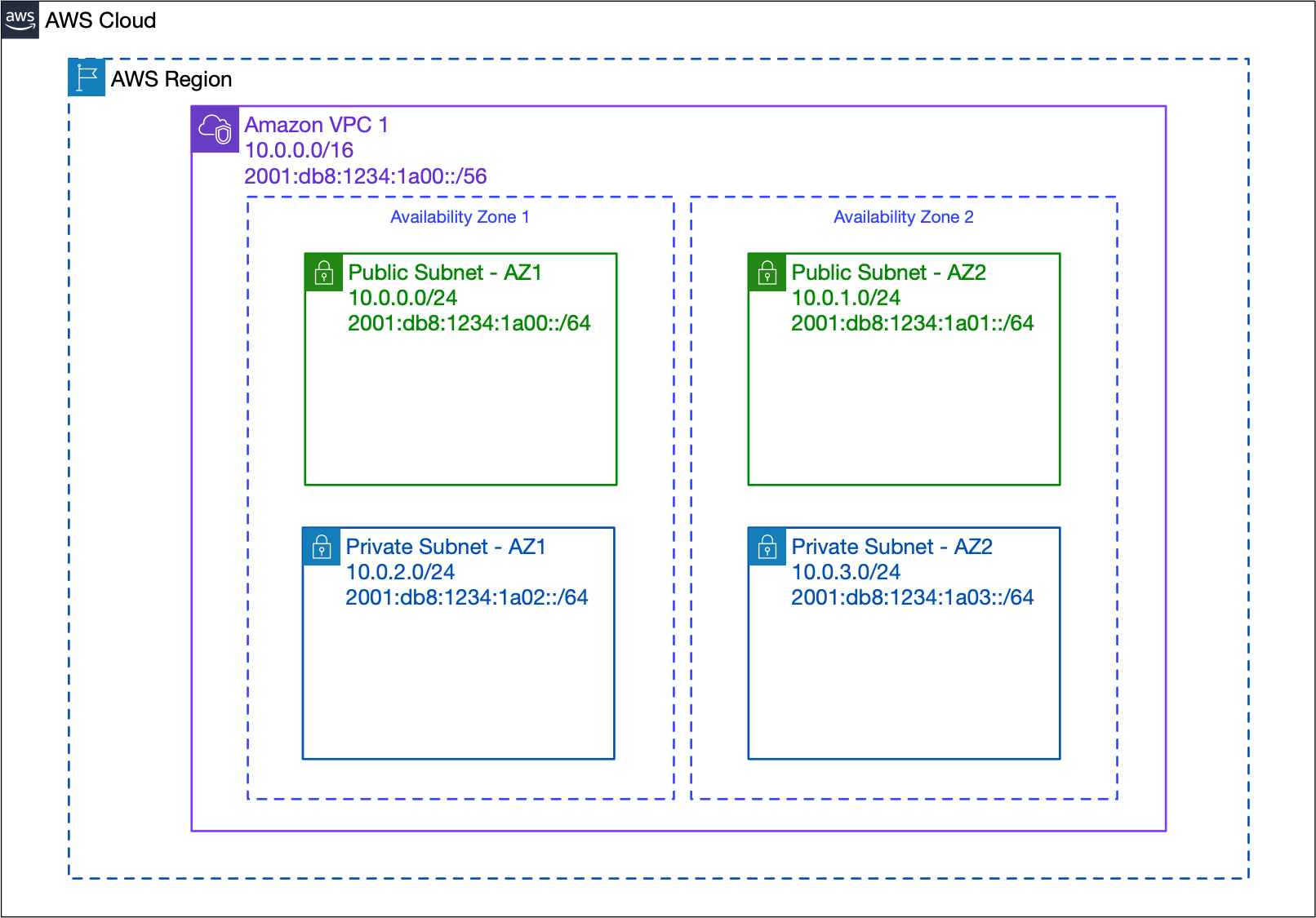

1. Dual-stack Amazon VPC

When you create your Amazon VPC, you define its primary IPv4 Classless Inter-Domain Routing (CIDR) block. To enable IPv6 on an existing or new VPC, you associate one /56 IPv6 CIDR block with it, either by editing the VPC CIDRs or at VPC creation time. You can allocate the IPv6 CIDR from the Amazon pool of IPv6 addresses, or you can use one /56 from a BYOIPv6 (Bring Your Own IPv6) pool that you define with IPv6 addresses you own. AWS IPv6 addresses are globally unique and routed over the Internet, while for BYOIPv6 pools you can decide whether AWS advertises the CIDRs to the Internet. We take a closer look at the difference between the two options in section 2 below.

Once you’ve allocated the /56 IPv6 CIDR to your VPC, you can start assigning /64 CIDRs to your dual-stack subnets. Because you choose which subnets are dual-stack and which aren’t, you can have a mix of subnets with and without IPv6 within the same VPC. This allows you to pace your IPv6 adoption journey by gradually enabling IPv6 in different subnets, based on your use case.
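The /56-to-/64 arithmetic can be sketched with Python's standard `ipaddress` module. This is a minimal illustration, not an AWS API call: the CIDR `2001:db8:1234:1a00::/56` is a placeholder from the IPv6 documentation range, not a real AWS allocation.

```python
import ipaddress

# Hypothetical VPC CIDR taken from the documentation range 2001:db8::/32.
vpc_cidr = ipaddress.ip_network("2001:db8:1234:1a00::/56")

# A /56 splits into 2**(64 - 56) = 256 possible /64 subnet CIDRs.
subnet_cidrs = list(vpc_cidr.subnets(new_prefix=64))

print(len(subnet_cidrs))   # 256
print(subnet_cidrs[0])     # 2001:db8:1234:1a00::/64
print(subnet_cidrs[-1])    # 2001:db8:1234:1aff::/64
```

Only the subnets you choose to make dual-stack consume one of these /64 blocks; the rest of the VPC can stay IPv4-only.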

Fig. 1: Dual-stack Amazon VPC

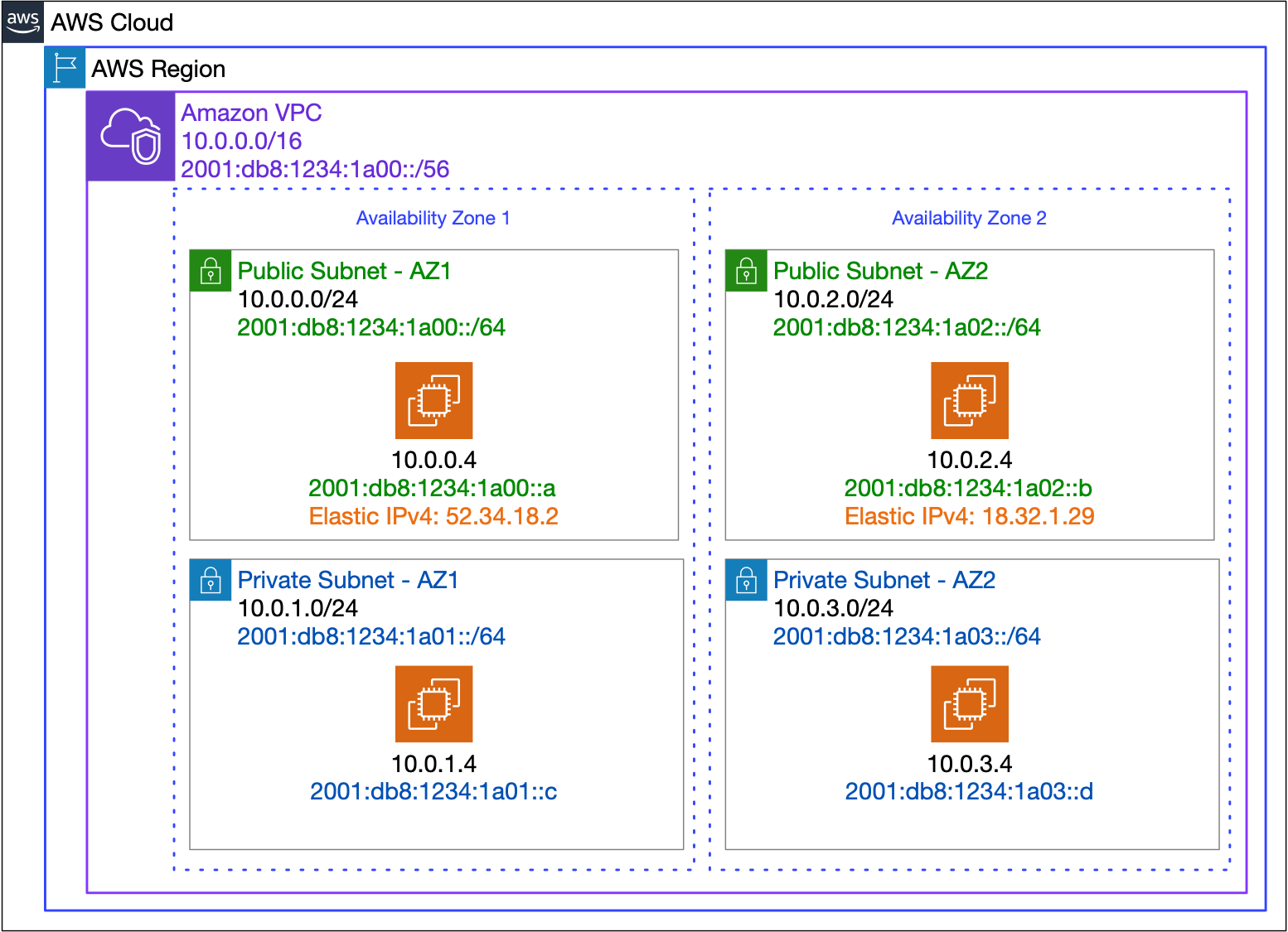

Now you can launch dual-stack EC2 instances in the VPC. An EC2 instance placed in an IPv6-enabled subnet can be created with an IPv6 address, or modified later to have one assigned. You configure this behavior per elastic network interface (ENI), and you can either have AWS automatically assign the IPv6 address for you, by means of DHCPv6, or manually specify an unused address in the subnet’s allocated range.

Fig. 2: Dual-stack EC2 instances

IPv6 addressing is supported on all current generation instance types and on the C3, R3, and I2 previous generation instance types, while the number of network interfaces you can attach varies by instance type (for more information, see Instance types and the number of available IPv6 addresses per network interface). IPv6 is automatically supported on AMIs that are configured for DHCPv6, while for other AMIs you must manually configure your instance to recognize any assigned IPv6 addresses. You can disassociate the IPv6 address from a network interface; unless you do so, the IPv6 address persists when you stop and start your instance, and is released when you terminate your instance. For simplified VPC management and IPv6 allocation, especially in container environments, you can also assign entire /80 prefixes to your network interfaces.

The IPv6 addresses for VPCs are by default routable on the Internet, but that doesn’t mean that all the IPv6 addresses used inside your VPC can reach, or be reached from, the Internet. Instead, you have full control over the Internet connectivity of your subnets using a combination of gateway types, routes, security groups, and network access control lists (ACLs). Let’s look at how Internet connectivity can be achieved for private and public subnets in the dual-stack VPC.
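To get a feel for the size of a /80 prefix relative to a /64 subnet, here is a small sketch using Python's `ipaddress` module. The subnet CIDR is a hypothetical documentation-range value, and the code only does address arithmetic; it does not assign anything to a network interface.

```python
import ipaddress

# Hypothetical dual-stack subnet CIDR (documentation range).
subnet = ipaddress.ip_network("2001:db8:1234:1a00::/64")

# Number of /80 prefixes that fit in the /64 subnet: 2**(80 - 64).
prefixes_per_subnet = 2 ** (80 - subnet.prefixlen)

# Take the first /80 lazily instead of materializing all of them.
first_prefix = next(subnet.subnets(new_prefix=80))

print(prefixes_per_subnet)         # 65536
print(first_prefix)                # 2001:db8:1234:1a00::/80
print(first_prefix.num_addresses)  # 281474976710656, i.e. 2**48
```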

2. Dual-stack VPC Internet connectivity

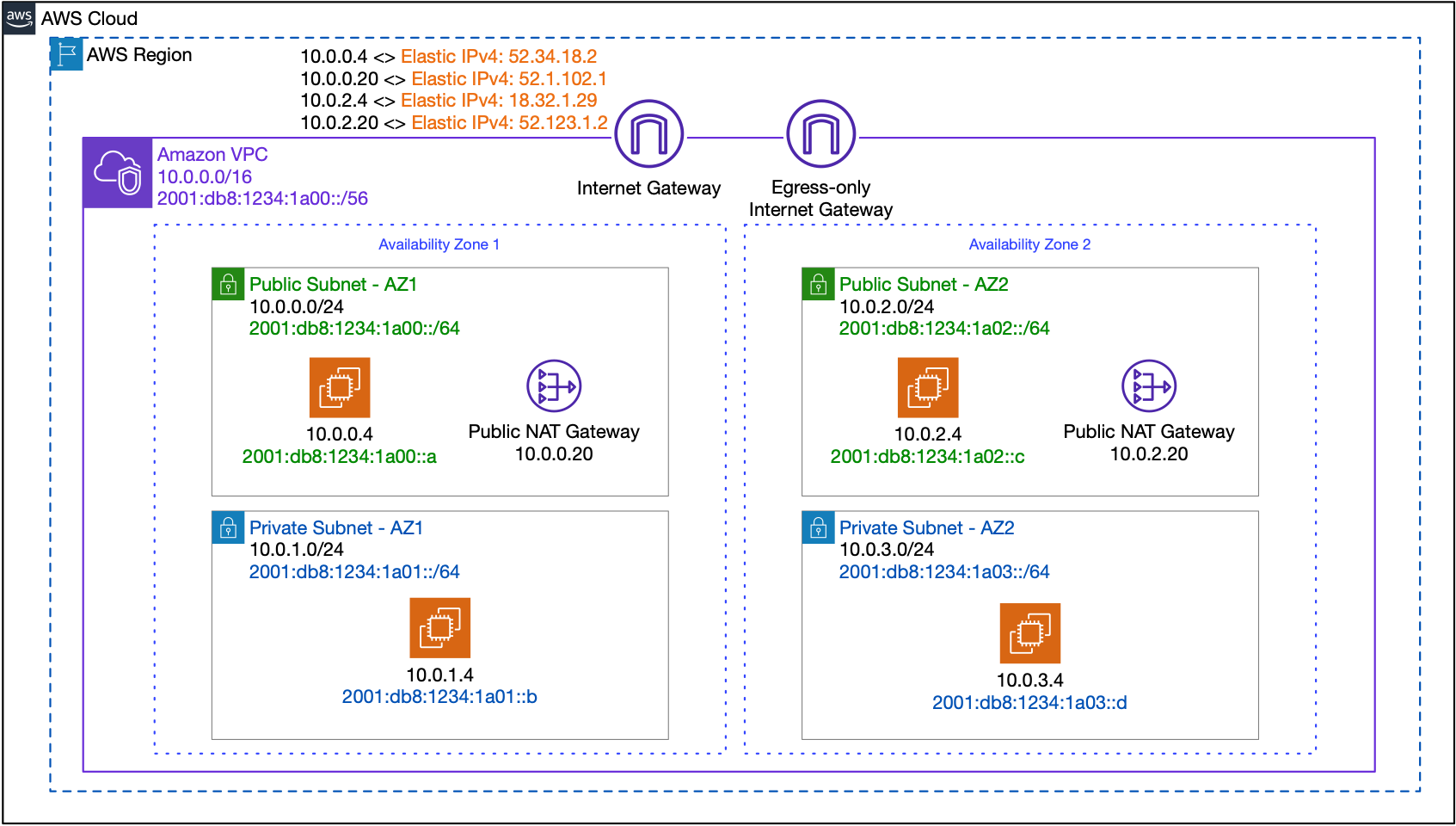

In a dual-stack VPC, we maintain the same Internet connectivity constructs for the IPv4 stack, and we add the Egress-only Internet Gateway (EIGW) for the IPv6 stack. An Egress-only Internet Gateway is a horizontally scaled, redundant, and highly available VPC component that allows outbound communication over IPv6 from instances in your VPC to the Internet. The role of the EIGW is to keep the concept of private subnets consistent across both IPv4 and IPv6 stacks. It is logically very similar to a NAT Gateway: it prevents the Internet from initiating IPv6 connections to your instances and allows only return traffic to reach them, but it does not rely on address or port translation. The diagram below shows the dual-stack VPC-related concepts mentioned above:

Fig. 3: Dual-stack VPC Internet connectivity

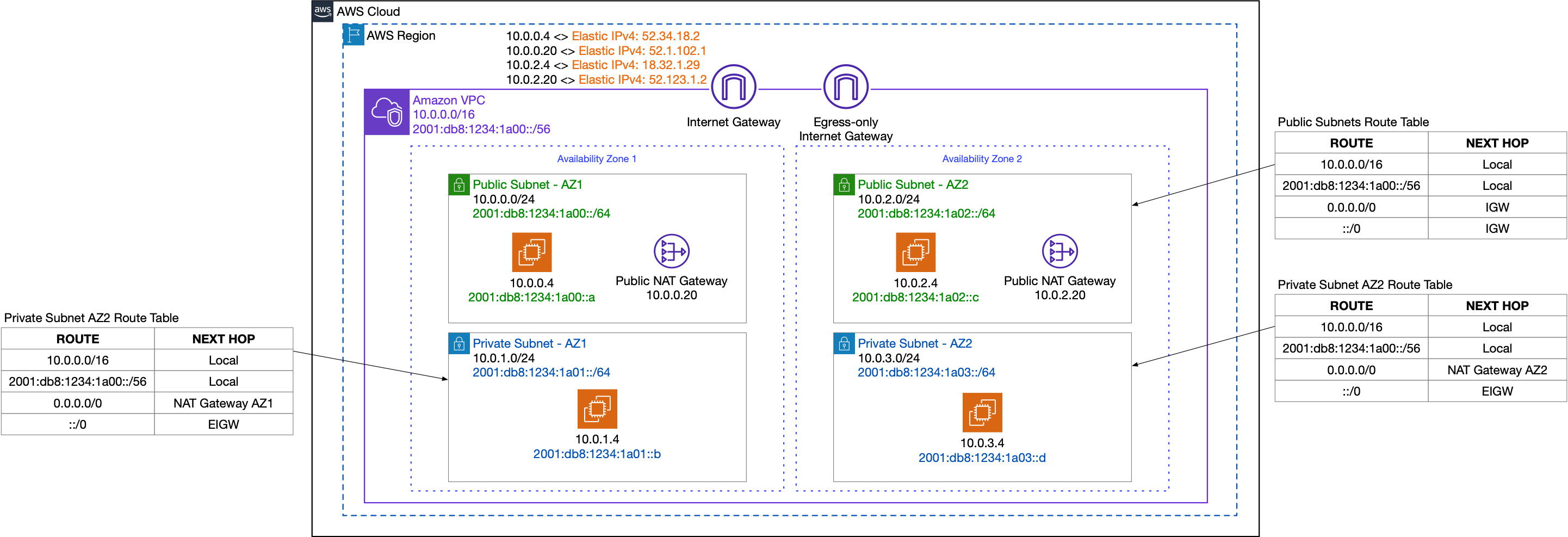

Once the Egress-only Internet Gateway is attached to the VPC, you need to configure the route tables for the public and private subnets accordingly. For both the IPv4 and IPv6 stacks, the default route of public subnets typically points to the Internet Gateway. For private subnets, the IPv4 default route usually points to the NAT Gateway, while the IPv6 default route points to the Egress-only Internet Gateway. The following diagram depicts the route tables for the dual-stack public and private subnets:

Fig. 4: VPC route tables configuration for Internet connectivity
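The route table layout described above can be modeled as a small longest-prefix-match lookup. This is a sketch under stated assumptions: the CIDRs are placeholders, and the target labels (`igw`, `nat-gateway`, `eigw`, `local`) are illustrative names rather than real resource IDs.

```python
import ipaddress

# Hypothetical route tables for a dual-stack VPC (placeholder CIDRs and targets).
PUBLIC_ROUTES = {
    "10.0.0.0/16": "local",
    "2001:db8:1234:1a00::/56": "local",
    "0.0.0.0/0": "igw",
    "::/0": "igw",
}
PRIVATE_ROUTES = {
    "10.0.0.0/16": "local",
    "2001:db8:1234:1a00::/56": "local",
    "0.0.0.0/0": "nat-gateway",
    "::/0": "eigw",
}

def lookup(route_table, destination):
    """Return the target of the most specific route that matches destination."""
    addr = ipaddress.ip_address(destination)
    best = None
    for cidr, target in route_table.items():
        net = ipaddress.ip_network(cidr)
        # Only compare routes of the same IP version as the destination.
        if net.version == addr.version and addr in net:
            if best is None or net.prefixlen > best[0].prefixlen:
                best = (net, target)
    return best[1] if best else None

print(lookup(PRIVATE_ROUTES, "198.51.100.7"))      # nat-gateway
print(lookup(PRIVATE_ROUTES, "2001:db8:ffff::1"))  # eigw
print(lookup(PUBLIC_ROUTES, "2001:db8:ffff::1"))   # igw
```

Each stack is resolved independently, which is why a private subnet needs both an IPv4 default route and an IPv6 default route.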

Recall the two BYOIPv6 options mentioned in section 1: pools that AWS advertises and pools that it does not. If you choose to have your IPv6 CIDR block advertised by AWS, you must use the Internet Gateway or the Egress-only Internet Gateway for your VPC to achieve Internet connectivity, just as with AWS-provided IPv6 CIDRs. Otherwise, if you choose to keep the IPv6 CIDR routing under your control, Internet connectivity for your VPC must be provided from your on-premises advertisement location, over VPN or Direct Connect, as AWS will not be advertising these CIDRs publicly. The table below summarizes the above options for the different types of IPv6 CIDRs:

3. Dual-stack public endpoints for your applications using Elastic Load Balancing

Let’s continue with enabling scalable public-facing application delivery with dual-stack Elastic Load Balancing. While Application Load Balancer (ALB) is best suited for load balancing of HTTP and HTTPS, the Network Load Balancer (NLB) is best suited for load balancing of Transmission Control Protocol (TCP), User Datagram Protocol (UDP), and Transport Layer Security (TLS) traffic where extreme performance is required (for detailed configuration options and supported features, check the ALB documentation and NLB documentation pages).

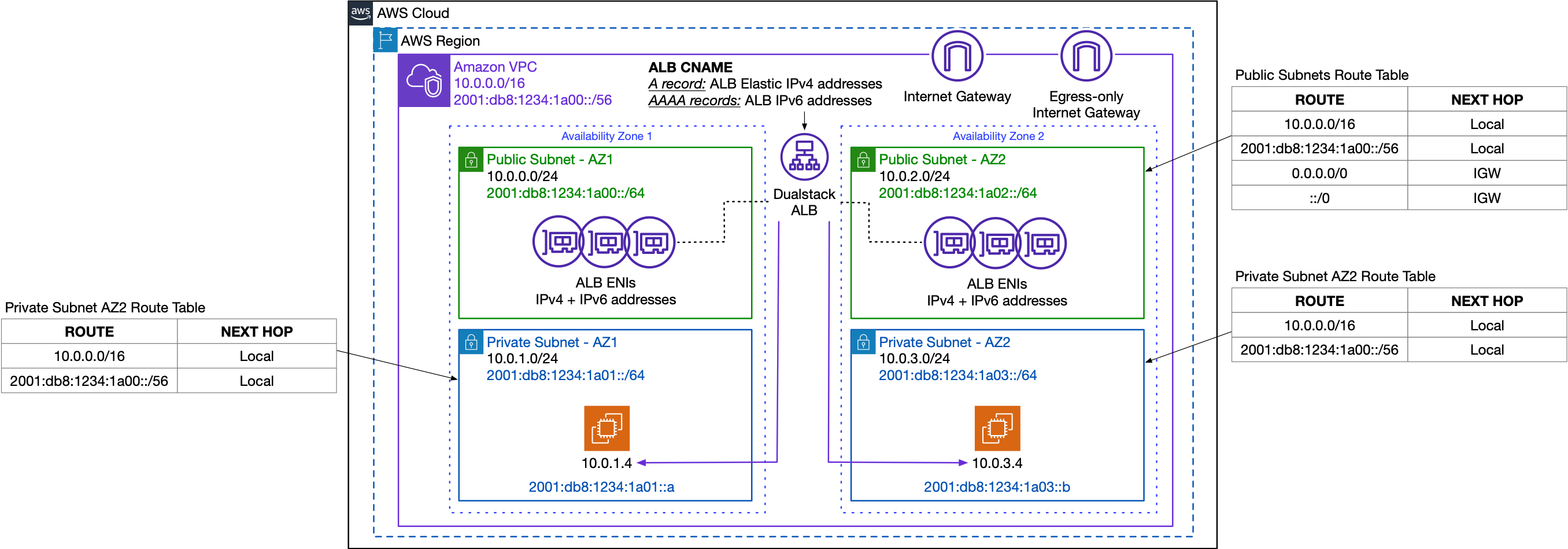

When you configure your public-facing load balancer, you can choose dual-stack for the IP addresses that the load balancer will use in the selected public subnets. This enables your application clients to use both IPv4 and IPv6 addresses to communicate with the load balancer. When you choose dual-stack, the load balancer subnets must have IPv6 CIDR blocks associated with them. You can manually assign the IPv6 addresses to your load balancer interfaces, or let AWS assign them randomly. The diagram below shows the deployment and route table configuration for a dual-stack, internet-facing ALB:

Fig. 5: Dual-stack ALB

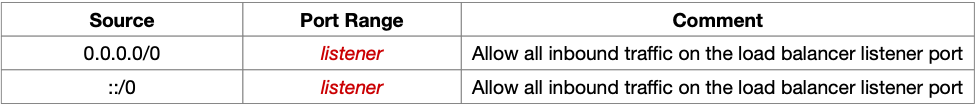

Since you have now created a dual-stack IPv4 and IPv6 application endpoint, the ALB inbound security groups need to allow traffic from both IPv4 and IPv6 clients:

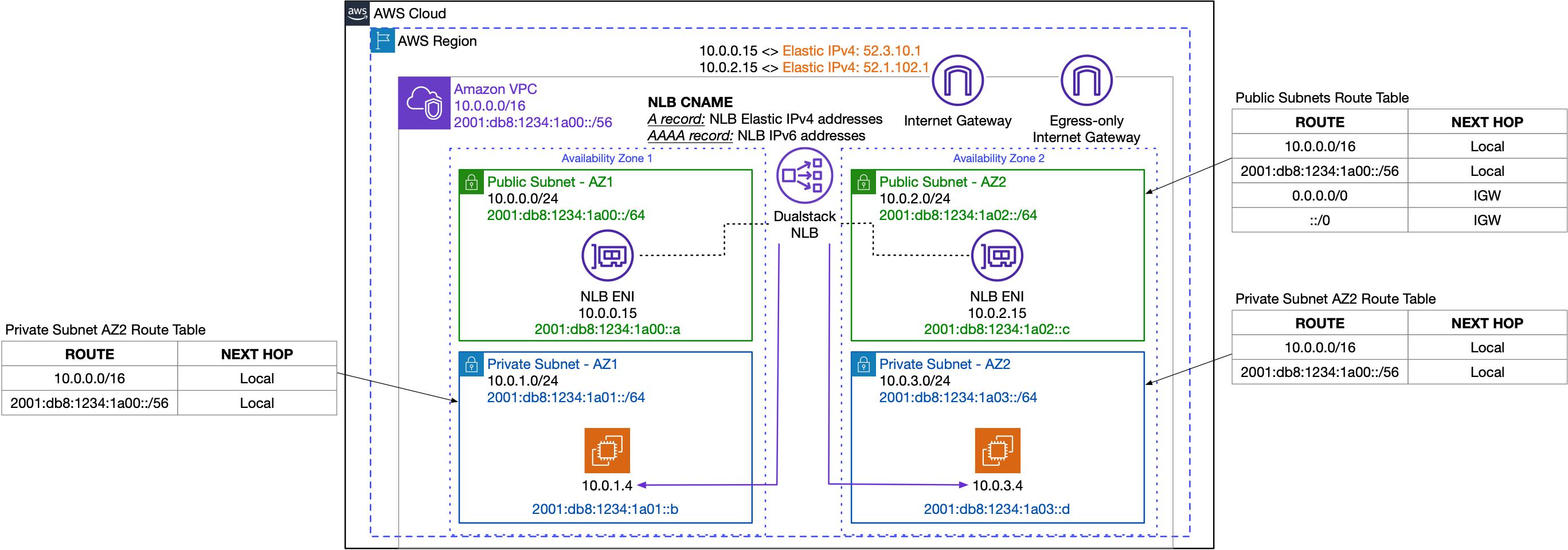
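As a rough illustration of why both address families need an inbound rule, the sketch below evaluates a client address against a list of rules. The rule set is an assumption made for this example (HTTPS from any IPv4 and any IPv6 source); it is not copied from the figure above, and the dictionary layout is hypothetical rather than an AWS data structure.

```python
import ipaddress

# Assumed inbound rules for illustration: HTTPS from any IPv4 and any IPv6 client.
INBOUND_RULES = [
    {"protocol": "tcp", "port": 443, "cidr": "0.0.0.0/0"},
    {"protocol": "tcp", "port": 443, "cidr": "::/0"},
]

def is_allowed(rules, client_ip, port, protocol="tcp"):
    """Return True if any rule permits the client on the given protocol and port."""
    addr = ipaddress.ip_address(client_ip)
    for rule in rules:
        net = ipaddress.ip_network(rule["cidr"])
        if (rule["protocol"] == protocol and rule["port"] == port
                and net.version == addr.version and addr in net):
            return True
    return False

# The same rule set with the IPv6 entry left out.
ipv4_only_rules = [r for r in INBOUND_RULES if ":" not in r["cidr"]]

print(is_allowed(INBOUND_RULES, "203.0.113.10", 443))   # True
print(is_allowed(INBOUND_RULES, "2001:db8::10", 443))   # True
print(is_allowed(ipv4_only_rules, "2001:db8::10", 443)) # False
```

An IPv4 `0.0.0.0/0` rule never matches an IPv6 client, so a dual-stack endpoint with only the IPv4 rule would silently drop IPv6 traffic.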

The diagram below shows the dual-stack NLB deployment and route tables configuration:

Fig. 6: Dual-stack NLB

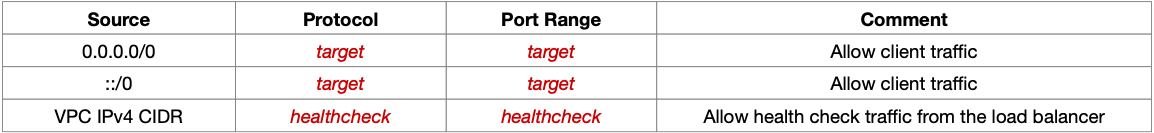

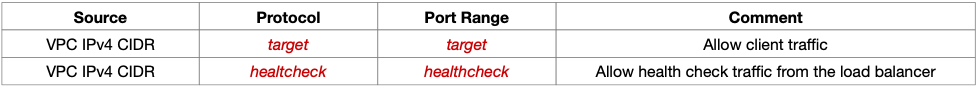

Now that your NLB endpoint is dual-stack, the security groups for your targets need to support both IPv4 and IPv6 addresses, to allow traffic from the load balancer, depending on the target type – instance or IPv4 address. For instance-type targets, the recommended security group settings are:

For IP targets, the recommended security group settings are:

4. Dual-stack VPC connectivity with VPC peering

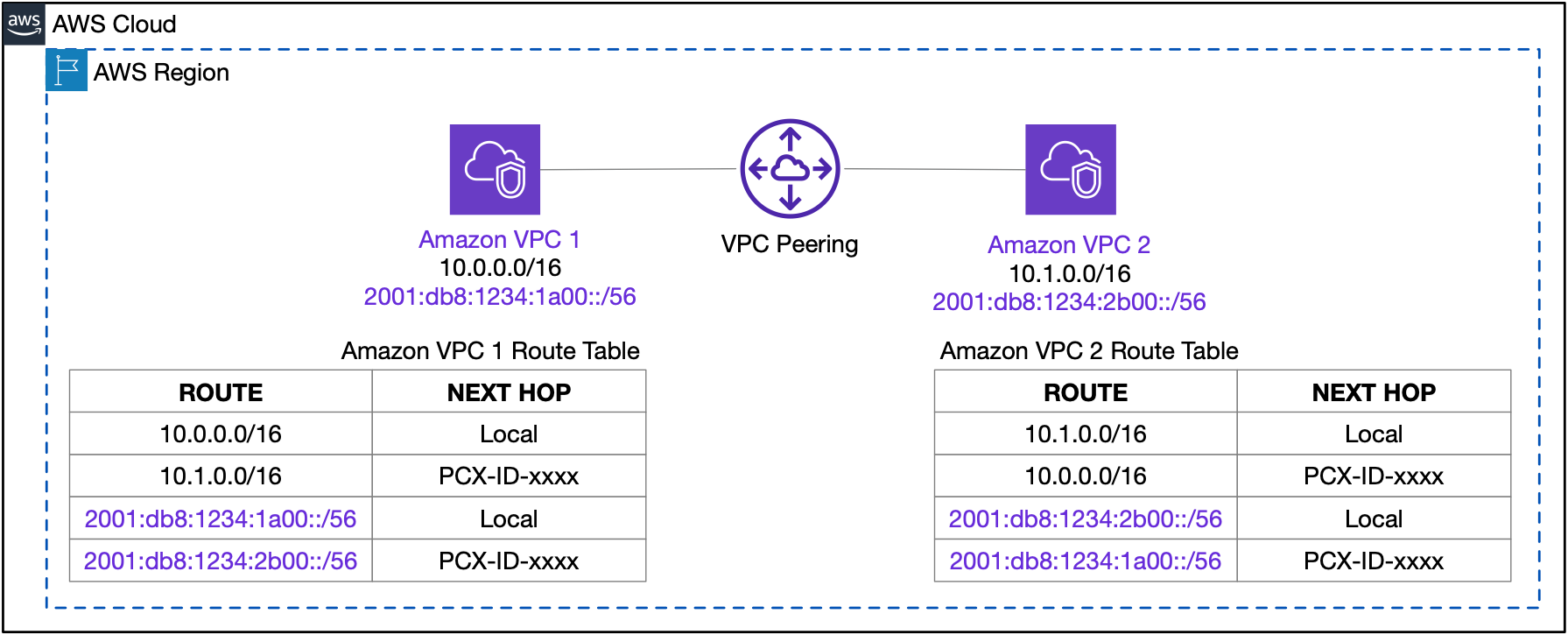

So, given your VPC is now dual-stack enabled and your application endpoints are publicly accessible over both IPv4 and IPv6 addresses, let’s have a look at dual-stack VPC connectivity options. The simplest way to connect two dual-stack VPCs together is through VPC peering (for more information, please check the VPC peering documentation). You can peer together two VPCs with non-overlapping IPv4 CIDR blocks, and you can choose to route either a single stack or both stacks over the peering connection. The following diagram shows the dual-stack VPC peering connection and associated routing:

Fig. 7: Dual-stack VPC Peering
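The two peering decisions described above, checking for overlapping CIDRs and choosing which stacks to route, can be sketched as follows. The CIDRs and the `pcx-example` target are placeholders. Checking IPv6 blocks for overlap as well is a defensive assumption of this sketch; the text above only calls out IPv4.

```python
import ipaddress

# Hypothetical CIDRs for two dual-stack VPCs.
VPC_A = ["10.0.0.0/16", "2001:db8:1234:1a00::/56"]
VPC_B = ["10.1.0.0/16", "2001:db8:1234:1b00::/56"]

def can_peer(cidrs_a, cidrs_b):
    """True when no CIDR of one VPC overlaps a same-family CIDR of the other."""
    for a in map(ipaddress.ip_network, cidrs_a):
        for b in map(ipaddress.ip_network, cidrs_b):
            if a.version == b.version and a.overlaps(b):
                return False
    return True

def peering_routes(remote_cidrs, stacks=(4, 6), target="pcx-example"):
    """Routes to add toward the peer, limited to the chosen IP versions."""
    return {
        cidr: target
        for cidr in remote_cidrs
        if ipaddress.ip_network(cidr).version in stacks
    }

print(can_peer(VPC_A, VPC_B))               # True
print(peering_routes(VPC_B, stacks=(6,)))   # {'2001:db8:1234:1b00::/56': 'pcx-example'}
```

Passing `stacks=(4,)` or `stacks=(6,)` corresponds to routing a single stack over the peering connection, while the default routes both.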

5. Dual-stack VPC connectivity at scale using AWS Transit Gateway

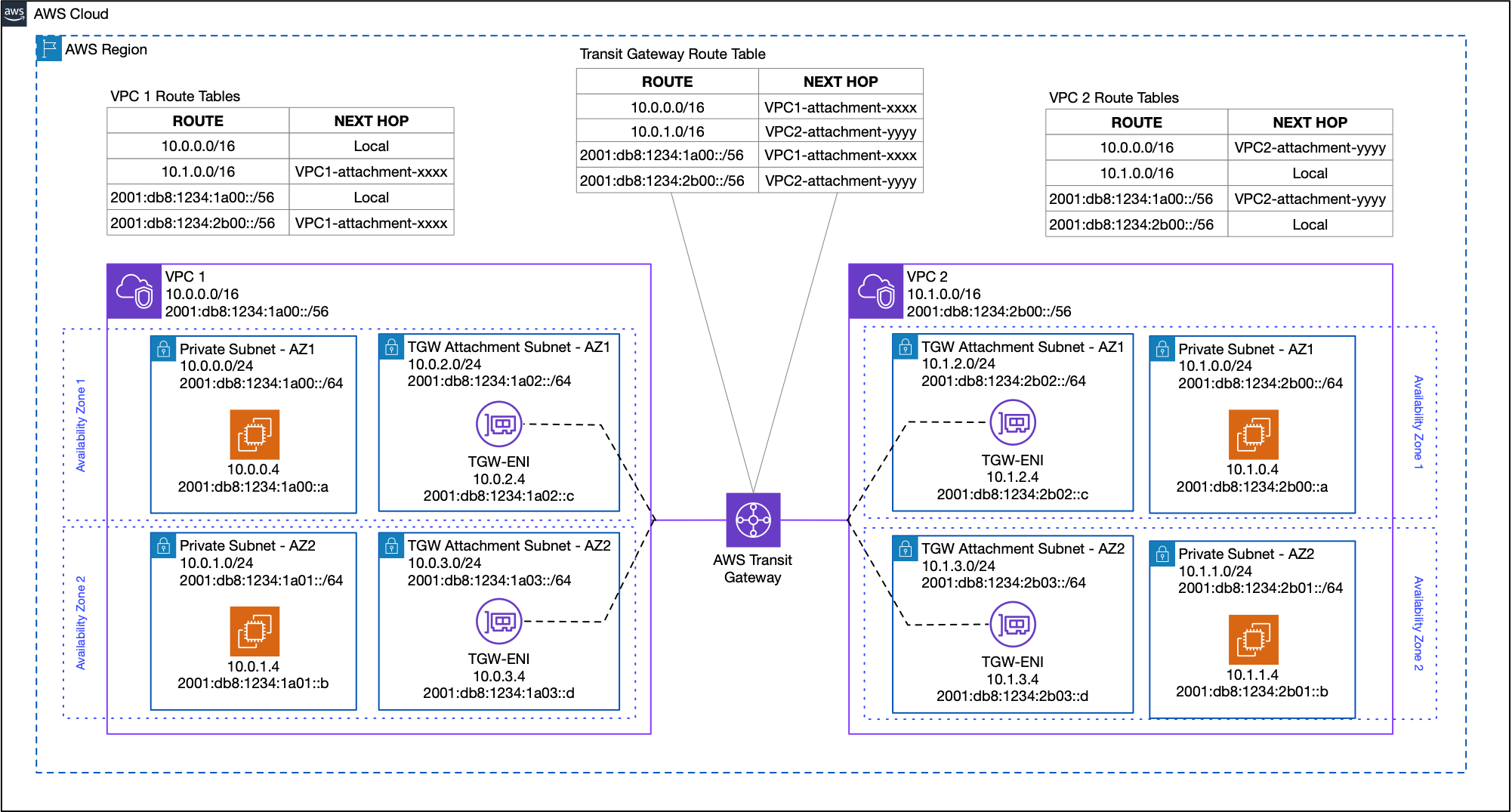

Going beyond the scale of a single VPC, AWS Transit Gateway is a network transit hub that you can use to interconnect your VPCs and hybrid networks for both IPv4 and IPv6 stacks. All Transit Gateway concepts and constructs – attachments, peering connections, associations, propagations and routing – continue to have the same functionalities across both stacks. Note that in order to configure an IPv6-enabled VPC attachment to the Transit Gateway, the VPC and attachment subnets need to have associated IPv6 CIDRs. Also, Transit Gateway peering connections support both IPv4 and IPv6 CIDR routing. The diagram below shows the dual-stack Transit Gateway VPC attachments:

Fig. 8: Dual-stack AWS Transit Gateway Routing
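The precondition for an IPv6-enabled VPC attachment can be expressed as a simple check. This is only a sketch: the dictionary describing the VPC, its field names, and the subnet IDs are hypothetical and do not mirror any AWS API response.

```python
# Hypothetical in-memory description of a VPC; field names are illustrative only.
vpc = {
    "ipv6_cidr": "2001:db8:1234:1a00::/56",
    "subnets": {
        "subnet-a": {"ipv6_cidr": "2001:db8:1234:1a00::/64"},
        "subnet-b": {"ipv6_cidr": None},  # IPv4-only subnet
    },
}

def ipv6_attachment_ready(vpc, attachment_subnet_ids):
    """True when the VPC and every attachment subnet have an IPv6 CIDR."""
    if not vpc.get("ipv6_cidr"):
        return False
    return all(
        vpc["subnets"][subnet_id].get("ipv6_cidr")
        for subnet_id in attachment_subnet_ids
    )

print(ipv6_attachment_ready(vpc, ["subnet-a"]))              # True
print(ipv6_attachment_ready(vpc, ["subnet-a", "subnet-b"]))  # False
```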

6. AWS VPN and Direct Connect in dual-stack hybrid networks

Hybrid connectivity for AWS networks requires us to look at the AWS Site-to-Site VPN and AWS Direct Connect dual-stack configurations.

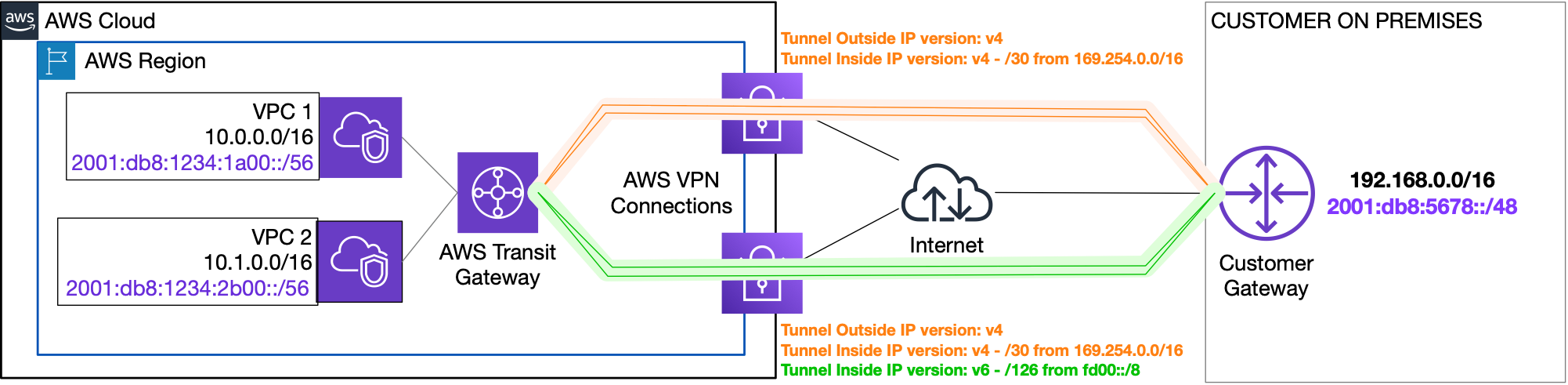

Your Site-to-Site VPN connection on a Transit Gateway can support either IPv4 traffic or IPv6 traffic inside the VPN tunnels. This means that for dual-stack connectivity, you need to create two VPN connections, one for the IPv4 stack and one for the IPv6 stack. For the Site-to-Site VPN connection you intend to use for IPv4 routing, each tunnel will have a /30 IPv4 CIDR in the 169.254.0.0/16 range, while for the VPN connection intended for IPv6 routing, each VPN tunnel will have two CIDR blocks: one /30 IPv4 CIDR in the 169.254.0.0/16 range and one /126 IPv6 CIDR in the fd00::/8 range. The diagram below shows the two VPN connections needed for dual-stack routing:

Fig. 9: Dual-stack AWS Site-to-Site VPN

The outside tunnel IP addresses for the AWS endpoints are IPv4 addresses, and the public IP address of your customer gateway must also be IPv4 (for further information on tunnel configuration options, please refer to the AWS Site-to-Site VPN configuration guide).
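The inside tunnel CIDR constraints described above (a /30 within 169.254.0.0/16 for IPv4, a /126 within fd00::/8 for IPv6) can be checked with a few lines of Python. This is a minimal sketch of the size and range rules only; it does not account for any specific ranges that AWS may reserve, and the sample CIDRs are placeholders.

```python
import ipaddress

LINK_LOCAL_V4 = ipaddress.ip_network("169.254.0.0/16")
UNIQUE_LOCAL_V6 = ipaddress.ip_network("fd00::/8")

def valid_inside_cidr(cidr):
    """Check the prefix length and parent range of a tunnel inside CIDR."""
    net = ipaddress.ip_network(cidr)
    if net.version == 4:
        return net.prefixlen == 30 and net.subnet_of(LINK_LOCAL_V4)
    return net.prefixlen == 126 and net.subnet_of(UNIQUE_LOCAL_V6)

print(valid_inside_cidr("169.254.10.0/30"))  # True
print(valid_inside_cidr("fd00:1234::/126"))  # True
print(valid_inside_cidr("10.0.0.0/30"))      # False, outside 169.254.0.0/16
print(valid_inside_cidr("2001:db8::/126"))   # False, outside fd00::/8
```

For the VPN connection that carries IPv6, each tunnel would need one CIDR passing the IPv4 branch and one passing the IPv6 branch.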

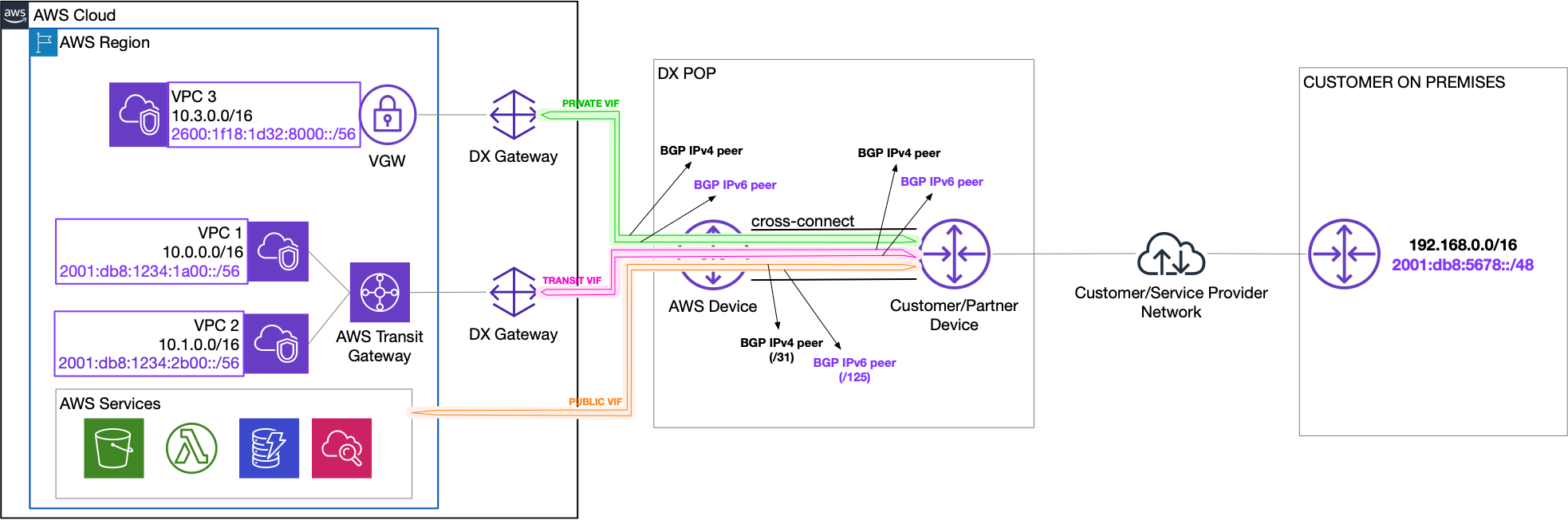

AWS Direct Connect enables you to configure private and dedicated connectivity to your on-premises network, and natively supports both IPv4 and IPv6 routing. To use your Direct Connect connection for dual-stack traffic, you first need to create one of the following virtual interfaces (VIFs): Private VIF, Public VIF, or Transit VIF. Alternatively, you can reuse your existing VIFs and enable them for dual-stack support. The following considerations apply to dual-stack VIFs:

- A virtual interface can support a BGP peering session for IPv4, IPv6, or both (dual-stack).

- For the IPv6 stack, Amazon automatically assigns a /125 IPv6 CIDR for the BGP peering connection.

- On Public VIFs, the IPv6 prefixes you advertise to AWS must be /64 or larger blocks (that is, a prefix length of /64 or shorter).

Fig. 10: Dual-stack AWS Direct Connect
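The prefix sizes in the considerations above can be sanity-checked with a short sketch. It reads "/64 or larger" as a larger address block, meaning a prefix length of 64 or less; that reading, the function name, and the sample prefixes are assumptions of this example.

```python
import ipaddress

def valid_ipv6_public_vif_prefix(prefix):
    """True for IPv6 prefixes with a length of /64 or shorter."""
    net = ipaddress.ip_network(prefix)
    return net.version == 6 and net.prefixlen <= 64

print(valid_ipv6_public_vif_prefix("2001:db8::/48"))  # True
print(valid_ipv6_public_vif_prefix("2001:db8::/64"))  # True
print(valid_ipv6_public_vif_prefix("2001:db8::/80"))  # False

# A /125 BGP peering CIDR (placeholder value) holds 2**(128 - 125) addresses.
peering_cidr = ipaddress.ip_network("2001:db8:0:1::/125")
print(peering_cidr.num_addresses)  # 8
```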

7. Amazon CloudFront dual-stack distributions

If global content delivery for your dual-stack application relies on Amazon CloudFront using HTTP/HTTPS, you get the same security, availability, performance, and scalability for both stacks. CloudFront communicates with the origin server using IPv4, so you don’t need to modify your application stack to enable IPv6 support. This applies to all types of origins for a CloudFront distribution, including an Amazon Simple Storage Service (S3) bucket, a MediaStore container, a MediaPackage channel, or a custom origin such as an Amazon EC2 instance or your own HTTP web server. If you have turned on Amazon CloudFront access logs, once you enable IPv6 you will start seeing your viewers’ IPv6 addresses in the “c-ip” field. You will also get IPv6 addresses in the “X-Forwarded-For” header that is sent to your origins. Additionally, if you use IP allow lists for Trusted Signers, you should use an IPv4-only distribution for your Trusted Signer URLs with IP allow lists and a dual-stack distribution for all other content.
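Origin code that inspects client addresses should be prepared for both address families once IPv6 is enabled. The sketch below classifies the viewer address from an `X-Forwarded-For` value; treating the leftmost entry as the viewer is a common convention assumed here, and the header values are made-up examples.

```python
import ipaddress

def viewer_ip_version(x_forwarded_for):
    """Return 4 or 6 for the leftmost address in an X-Forwarded-For value."""
    first_hop = x_forwarded_for.split(",")[0].strip()
    return ipaddress.ip_address(first_hop).version

print(viewer_ip_version("2001:db8::1, 203.0.113.5"))  # 6
print(viewer_ip_version("198.51.100.7"))              # 4
```

Parsing with `ipaddress` rather than a dotted-quad regular expression avoids rejecting valid IPv6 viewers; the same function works for the “c-ip” field of an access log entry.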

IPv6 is enabled by default for all newly created Amazon CloudFront web distributions, and for existing web distributions, you can enable IPv6 through the Amazon CloudFront console or API.

Conclusion

This blog post summarizes some of the network architectures and configuration options available for enabling dual-stack IPv4 and IPv6 in AWS environments. You can easily enable dual-stack VPCs and networking resources in your environment and, while there are multiple IPv6 adoption strategies with different drivers, you can start your IPv6 journey on AWS today. If you have questions about this post, start a new thread on the Amazon Networking and Content Delivery Forum or contact AWS Support.