AWS Big Data Blog

Designing centralized and distributed network connectivity patterns for Amazon OpenSearch Serverless – Part 2

This post is Part 2 of our two-part series on hybrid multi-account access patterns for Amazon OpenSearch Serverless. In Part 1, we explored a centralized architecture where a single account hosts multiple OpenSearch Serverless collections and a shared VPC endpoint. This approach works well when a single business unit or team manages collections on behalf of the organization.

However, many enterprises have multiple business units that need independent ownership of their OpenSearch Serverless infrastructure. When each business unit wants to manage their own collections, security policies, and VPC endpoints within their own AWS accounts, the centralized model from Part 1 no longer fits.

In this post, we address this multi-business unit scenario by introducing a pattern where the central networking account manages a custom private hosted zone (PHZ) with CNAME records pointing to each business unit’s VPC endpoint. This approach maintains centralized DNS management and connectivity while giving each business unit full autonomy over their collections and infrastructure.

The challenge with multiple business units

When multiple business units independently manage their own OpenSearch Serverless collections in separate AWS accounts, each account has its own VPC endpoint with its own private hosted zones. These private hosted zones only work within their respective VPCs, creating DNS fragmentation across the organization. Consumers in spoke accounts and on-premises environments can’t resolve collection endpoints in other accounts without additional DNS configuration.

Managing individual PHZ associations for each consumer VPC doesn’t scale, and asking each business unit to coordinate DNS with every consumer creates operational overhead. You need a network architecture that gives each team autonomy while keeping DNS management and connectivity centralized.

Solution overview

This architecture solves the problem by centralizing DNS management in the networking account while leaving collection and VPC endpoint ownership with each business unit. The networking account maintains a custom PHZ with CNAME records that map each collection endpoint to the regional DNS name of its corresponding VPC endpoint. This custom PHZ is associated with a Route 53 Profile and shared through AWS Resource Access Manager (AWS RAM) to spoke accounts. On-premises DNS resolution flows through the Route 53 Resolver inbound endpoint in the central networking VPC, which uses the same custom PHZ.
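The networking account's part of this setup can be sketched with AWS SDK for Python (Boto3) calls for Route 53, Route 53 Profiles, and AWS RAM. This is a minimal sketch of the order of operations, not a production deployment. The clients are passed in so the logic can be exercised without AWS credentials. The function name `set_up_central_dns`, the resource names, and `allowExternalPrincipals=False` (which assumes every consumer is in the same AWS Organization) are illustrative assumptions.

```python
import uuid
from typing import Any, Dict, List

# The custom PHZ reuses the OpenSearch Serverless regional domain used in this post.
AOSS_DOMAIN = "us-east-1.aoss.amazonaws.com"


def set_up_central_dns(route53: Any, profiles: Any, ram: Any, *, vpc_id: str,
                       region: str, principals: List[str]) -> Dict[str, str]:
    """Create the custom PHZ, attach it to a Route 53 Profile, and share the Profile.

    route53, profiles and ram are expected to behave like Boto3 clients for
    "route53", "route53profiles" and "ram" in the central networking account.
    """
    # 1. Private hosted zone, associated at creation with the central networking VPC
    #    so the Resolver inbound endpoint in that VPC can answer on-premises queries.
    zone = route53.create_hosted_zone(
        Name=AOSS_DOMAIN,
        CallerReference=str(uuid.uuid4()),
        VPC={"VPCRegion": region, "VPCId": vpc_id},
        HostedZoneConfig={"Comment": "Custom PHZ for OpenSearch Serverless",
                          "PrivateZone": True},
    )
    # The returned Id has the form "/hostedzone/<zone-id>".
    zone_id = zone["HostedZone"]["Id"].split("/")[-1]
    zone_arn = f"arn:aws:route53:::hostedzone/{zone_id}"

    # 2. Route 53 Profile that carries the PHZ to every VPC the Profile is associated with.
    profile = profiles.create_profile(Name="aoss-dns-profile",
                                      ClientToken=str(uuid.uuid4()))["Profile"]
    profiles.associate_resource_to_profile(ProfileId=profile["Id"],
                                           ResourceArn=zone_arn,
                                           Name="aoss-custom-phz")

    # 3. Share the Profile through AWS RAM with the consuming accounts.
    share = ram.create_resource_share(
        name="aoss-dns-profile-share",
        resourceArns=[profile["Arn"]],
        principals=principals,
        allowExternalPrincipals=False,  # assumption: all consumers are in one organization
    )["resourceShare"]

    return {"zone_id": zone_id, "profile_id": profile["Id"],
            "share_arn": share["resourceShareArn"]}
```

With real credentials in the networking account, you would pass `boto3.client("route53")`, `boto3.client("route53profiles")`, and `boto3.client("ram")`, and list the spoke account IDs (and the OpenSearch Serverless account) as `principals`.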

We cover two complementary patterns: Pattern 1 for on-premises access to collections across multiple business unit accounts, and Pattern 2 for spoke account access to those same collections. Both patterns rely on the centralized custom PHZ managed by your networking team.

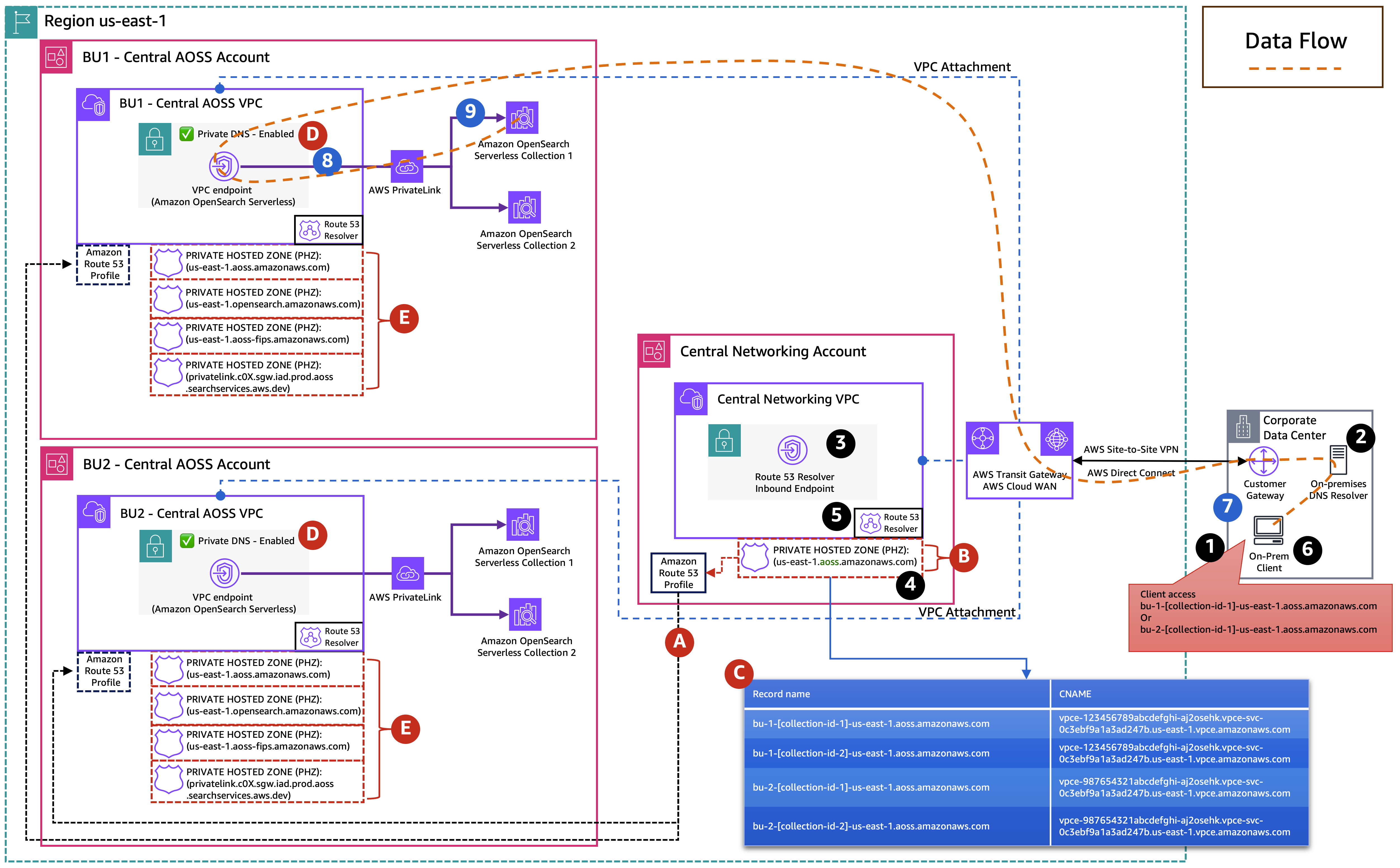

Pattern 1: On-premises access to OpenSearch Serverless collections across multiple business unit accounts

With this pattern, your on-premises clients can privately access OpenSearch Serverless collections hosted across multiple business unit accounts, each with its own VPC endpoint. The following diagram illustrates this multi-business-unit architecture. It shows how on-premises DNS queries are resolved through the custom PHZ in the central networking account and routed to the correct business unit’s VPC endpoint through AWS PrivateLink.

(A) The Route 53 Profiles are created in the central networking account and shared through AWS Resource Access Manager (AWS RAM) with the central OpenSearch Serverless account.

(B) The central networking account has a custom PHZ for the domain us-east-1.aoss.amazonaws.com, associated with the central networking account VPC and with the Route 53 Profiles.

(C) This PHZ contains CNAME records pointing to each business unit’s VPC endpoints.

(D) Each business unit account has Private DNS enabled on its VPC endpoint.

(E) Enabling Private DNS automatically creates the following PHZs during VPC endpoint creation and associates them with the business unit’s VPC, so DNS resolution works locally within that VPC without depending on the Route 53 Profiles:

- us-east-1.aoss.amazonaws.com
- us-east-1.opensearch.amazonaws.com
- us-east-1.aoss-fips.amazonaws.com
- privatelink.c0X.sgw.iad.prod.aoss.searchservices.aws.dev

DNS resolution flow

- Your on-premises client initiates a request to bu-1-collection-id-1.us-east-1.aoss.amazonaws.com.
- The on-premises DNS resolver has a conditional forwarder for us-east-1.aoss.amazonaws.com and forwards the query over AWS Direct Connect or AWS Site-to-Site VPN to the Route 53 Resolver inbound endpoint IP addresses in the central networking VPC.
- The inbound Resolver endpoint passes the query to the Route 53 VPC Resolver in the central networking VPC.
- The VPC Resolver finds the custom PHZ (us-east-1.aoss.amazonaws.com) associated with the central networking VPC. The CNAME record for bu-1-collection-id-1.us-east-1.aoss.amazonaws.com resolves to the regional DNS name of BU1’s VPC endpoint (for example, vpce-1234567890abcdefghi.a2oselk.vpce-svc-0c3ebf9a1a3ad247b.us-east-1.vpce.amazonaws.com).
- The VPC Resolver then resolves the VPC endpoint’s regional DNS name to the private IP addresses of its elastic network interfaces (ENIs).
- Traffic reaches BU1’s VPC endpoint ENIs over private network connectivity, because the on-premises client connects over AWS Direct Connect or AWS Site-to-Site VPN through AWS Transit Gateway or AWS Cloud WAN.
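To make the resolution chain concrete, the following toy model walks the same steps. A conditional forwarder sends only names under us-east-1.aoss.amazonaws.com to the inbound endpoint, the custom PHZ answers with a CNAME, and the endpoint’s regional name resolves to ENI addresses. It is a simulation under stated assumptions, not Route 53 code. The endpoint DNS names and IP addresses are hypothetical. It also models one behavior worth keeping in mind. Because the custom PHZ is authoritative for us-east-1.aoss.amazonaws.com, a collection with no CNAME record resolves to NXDOMAIN rather than falling back to public DNS.

```python
from typing import Dict, List, Tuple

FORWARDED_ZONE = "us-east-1.aoss.amazonaws.com"

# Hypothetical regional DNS names of each business unit's VPC endpoint.
BU1_VPCE = "vpce-0bu1example.vpce-svc-0example.us-east-1.vpce.amazonaws.com"
BU2_VPCE = "vpce-0bu2example.vpce-svc-0example.us-east-1.vpce.amazonaws.com"

# CNAME records in the custom PHZ: collection hostname -> VPC endpoint regional DNS name.
CUSTOM_PHZ: Dict[str, str] = {
    "bu-1-collection-id-1.us-east-1.aoss.amazonaws.com": BU1_VPCE,
    "bu-2-collection-id-1.us-east-1.aoss.amazonaws.com": BU2_VPCE,
}

# Hypothetical ENI private IPs behind each endpoint's regional DNS name.
ENDPOINT_ENIS: Dict[str, List[str]] = {
    BU1_VPCE: ["10.1.1.10", "10.1.2.10"],
    BU2_VPCE: ["10.2.1.10", "10.2.2.10"],
}


def _norm(name: str) -> str:
    """DNS names are case-insensitive; also drop a trailing root dot."""
    return name.rstrip(".").lower()


def in_zone(name: str, zone: str) -> bool:
    name, zone = _norm(name), _norm(zone)
    return name == zone or name.endswith("." + zone)


def vpc_resolver(name: str, max_hops: int = 8) -> Tuple[List[str], List[str]]:
    """Follow CNAMEs in the custom PHZ, then return the endpoint's ENI IPs."""
    chain = [_norm(name)]
    for _ in range(max_hops):
        current = chain[-1]
        if current in CUSTOM_PHZ:
            chain.append(CUSTOM_PHZ[current])
        elif current in ENDPOINT_ENIS:
            return chain, ENDPOINT_ENIS[current]
        elif in_zone(current, FORWARDED_ZONE):
            # The PHZ is authoritative for its domain: a missing record is NXDOMAIN.
            raise LookupError(f"NXDOMAIN: {current}")
        else:
            raise LookupError(f"no answer in this model for {current}")
    raise LookupError("CNAME chain too long")


def on_prem_resolver(name: str) -> Tuple[List[str], List[str]]:
    """Conditional forwarder: only the forwarded zone goes to the inbound endpoint."""
    if not in_zone(name, FORWARDED_ZONE):
        raise LookupError(f"{name} is resolved on premises, not forwarded")
    return vpc_resolver(name)
```

For example, `on_prem_resolver("bu-1-collection-id-1.us-east-1.aoss.amazonaws.com")` returns the CNAME chain ending at `BU1_VPCE` together with BU1's two ENI addresses.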

Data flow

- Your on-premises client sends an HTTPS request to the resolved IP address with the TLS Server Name Indication (SNI) extension set to bu-1-collection-id-1.us-east-1.aoss.amazonaws.com, over AWS Direct Connect or AWS Site-to-Site VPN through AWS Transit Gateway or AWS Cloud WAN.
- Traffic reaches the VPC endpoint ENIs in BU1’s OpenSearch Serverless VPC.
- The VPC endpoint forwards the request to the OpenSearch Serverless service, which inspects the hostname and routes the request to BU1 Collection 1.

To access a collection in BU2, your client follows the same flow using bu-2-collection-id-1.us-east-1.aoss.amazonaws.com. The custom PHZ contains a separate CNAME record pointing to BU2’s VPC endpoint, and the OpenSearch Serverless service routes to the correct collection based on the hostname.
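In this data flow, the client connects to an IP address but still presents the collection hostname during the TLS handshake. HTTP and OpenSearch client libraries do this automatically when you give them the hostname. The following sketch with Python's standard `ssl` module makes it explicit. The helper names `wrap_with_sni` and `connect_to_collection` are hypothetical. The sketch covers only the TLS layer. Real requests to OpenSearch Serverless must also be signed with AWS Signature Version 4 for the `aoss` service, which is not shown here.

```python
import socket
import ssl


def wrap_with_sni(sock: socket.socket, hostname: str, *,
                  handshake: bool = True) -> ssl.SSLSocket:
    """Wrap a connected socket in TLS, sending `hostname` as the SNI value."""
    # create_default_context() enables certificate and hostname verification, so the
    # endpoint's certificate is checked against `hostname`, not against the IP address.
    context = ssl.create_default_context()
    return context.wrap_socket(sock, server_hostname=hostname,
                               do_handshake_on_connect=handshake)


def connect_to_collection(ip: str, hostname: str, port: int = 443,
                          timeout: float = 5.0) -> ssl.SSLSocket:
    """Open TCP to a resolved endpoint IP, then start TLS with the collection hostname."""
    raw = socket.create_connection((ip, port), timeout=timeout)
    try:
        return wrap_with_sni(raw, hostname)
    except Exception:
        raw.close()
        raise
```

A call such as `connect_to_collection("10.1.1.10", "bu-1-collection-id-1.us-east-1.aoss.amazonaws.com")` (the IP is a placeholder) would reach BU1's endpoint ENI while the service sees the BU1 hostname. The same call with the BU2 hostname and one of BU2's ENI addresses targets BU2's collection.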

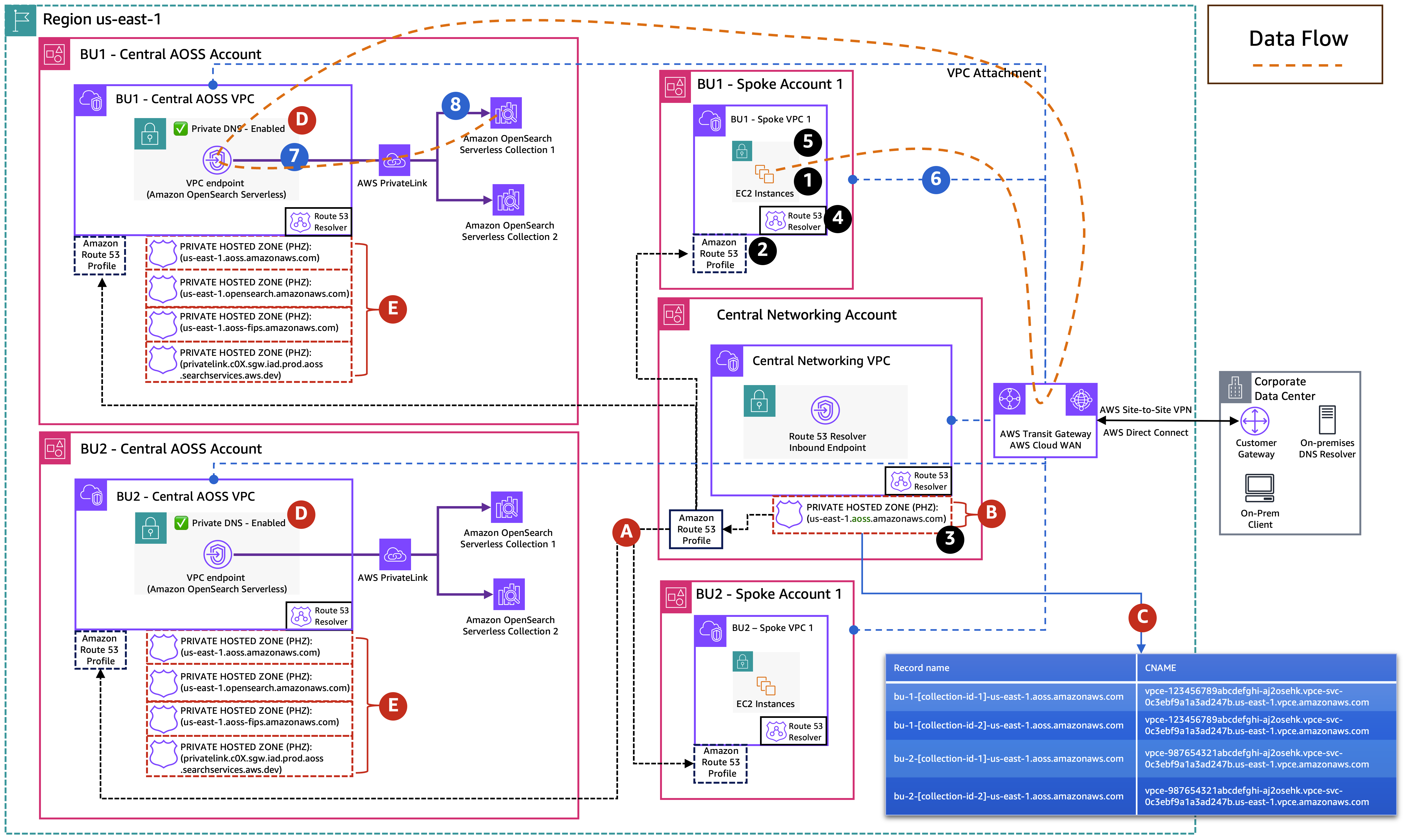

Pattern 2: Spoke account access to OpenSearch Serverless collections across multiple business unit accounts

While Pattern 1 addresses on-premises access, you might also need to provide access from compute resources and distributed applications in spoke accounts to OpenSearch Serverless collections across multiple business unit accounts. With this pattern, compute resources in spoke account VPCs can privately access OpenSearch Serverless collections across multiple business unit accounts through the centralized private hosted zone (PHZ) in the networking account.

In the following example, we use an Amazon Elastic Compute Cloud (Amazon EC2) instance as a compute resource to illustrate the pattern. However, the same approach applies to any compute resource within the spoke VPC. The following diagram illustrates this multi-business-unit, multi-spoke architecture, showing how spoke VPCs resolve DNS through the shared Route 53 Profile and custom PHZ, then route traffic to the correct business unit’s OpenSearch Serverless collections through AWS PrivateLink.

(A) The Route 53 Profiles, created in the central networking account, are shared through AWS RAM with all spoke accounts and with the central OpenSearch Serverless account.

(B) The central networking account has a custom PHZ for the domain us-east-1.aoss.amazonaws.com, associated with the central networking account VPC and with the Route 53 Profiles.

(C) This PHZ contains CNAME records pointing to each business unit’s VPC endpoints.

(D) Each business unit account has Private DNS enabled on its VPC endpoint.

(E) Enabling Private DNS automatically creates the following PHZs during VPC endpoint creation and associates them with the business unit’s VPC, so DNS resolution works locally within that VPC without depending on the Route 53 Profiles:

- us-east-1.aoss.amazonaws.com
- us-east-1.opensearch.amazonaws.com
- us-east-1.aoss-fips.amazonaws.com
- privatelink.c0X.sgw.iad.prod.aoss.searchservices.aws.dev
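On the spoke side, the shared Profile takes effect only after it is associated with each consuming VPC. The following is a minimal sketch of that step, run in the spoke account with injected Boto3-style clients for `route53profiles` and `ram`. The function name and the association-naming scheme are hypothetical.

```python
from typing import Any, Optional


def attach_profile_to_spoke_vpc(profiles: Any, ram: Any, *, profile_id: str,
                                vpc_id: str,
                                invitation_arn: Optional[str] = None) -> str:
    """Run in a spoke account: accept the RAM share if needed, then associate the Profile."""
    # Shares inside an AWS Organization with RAM sharing enabled need no invitation;
    # otherwise the spoke account must accept the invitation before using the Profile.
    if invitation_arn is not None:
        ram.accept_resource_share_invitation(resourceShareInvitationArn=invitation_arn)

    # Associating the shared Profile with the VPC makes the VPC Resolver consult the
    # Profile's PHZs, including the custom us-east-1.aoss.amazonaws.com zone.
    response = profiles.associate_profile(ProfileId=profile_id, ResourceId=vpc_id,
                                          Name=f"aoss-dns-{vpc_id}")
    return response["ProfileAssociation"]["Id"]
```

Repeat the call for each spoke VPC that needs access. The Profile ID comes from the networking account's share.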

DNS resolution flow

- An Amazon EC2 instance in BU1 Spoke VPC 1 initiates a request to bu-1-collection-id-1.us-east-1.aoss.amazonaws.com and sends a DNS query to the Route 53 VPC Resolver.
- The VPC Resolver finds the Route 53 Profiles associated with the spoke VPC.
- The Profiles reference the custom PHZ (us-east-1.aoss.amazonaws.com) managed in the central networking account. The CNAME record for bu-1-collection-id-1.us-east-1.aoss.amazonaws.com resolves to the regional DNS name of BU1’s VPC endpoint.
- The VPC Resolver then resolves the VPC endpoint’s regional DNS name to the private IP addresses of its elastic network interfaces (ENIs).
- Traffic reaches the VPC endpoint ENIs over private network connectivity, because the spoke VPC connects to BU1’s VPC through AWS Transit Gateway or AWS Cloud WAN.
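From an EC2 instance in a spoke VPC, you can confirm this flow by resolving the collection hostname and checking that every returned address falls inside the business unit VPC's CIDR range. The following sketch uses only the standard library. `check_private_resolution` is a hypothetical helper, and `10.1.0.0/16` in the usage example is an assumed BU1 VPC range.

```python
import ipaddress
import socket
from typing import Callable, Iterable, List


def resolved_ips(hostname: str, port: int = 443,
                 getaddrinfo: Callable = socket.getaddrinfo) -> List[str]:
    """Resolve hostname with the instance's configured resolver (the VPC Resolver on EC2)."""
    infos = getaddrinfo(hostname, port, proto=socket.IPPROTO_TCP)
    # Each entry is (family, type, proto, canonname, sockaddr); sockaddr[0] is the address.
    return sorted({info[4][0] for info in infos})


def check_private_resolution(hostname: str, expected_cidrs: Iterable[str],
                             **kwargs) -> List[str]:
    """Raise unless every address for hostname falls inside one of expected_cidrs."""
    ips = resolved_ips(hostname, **kwargs)
    networks = [ipaddress.ip_network(cidr) for cidr in expected_cidrs]
    outside = [ip for ip in ips
               if not any(ipaddress.ip_address(ip) in net for net in networks)]
    if not ips or outside:
        # A public address here likely means the Profile (and so the custom PHZ)
        # is not associated with this VPC, so public DNS answered instead.
        raise RuntimeError(f"{hostname} resolved to {ips}; outside expected ranges: {outside}")
    return ips
```

On the instance, `check_private_resolution("bu-1-collection-id-1.us-east-1.aoss.amazonaws.com", ["10.1.0.0/16"])` returns the ENI addresses when the Profile and CNAME record are in place.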

Data flow

- Your Amazon EC2 instance sends an HTTPS request to the resolved IP address with the TLS SNI extension set to bu-1-collection-id-1.us-east-1.aoss.amazonaws.com, routed through AWS Transit Gateway or AWS Cloud WAN to BU1’s OpenSearch Serverless VPC.
- The request arrives at the VPC endpoint ENIs in BU1’s OpenSearch Serverless VPC.
- The VPC endpoint forwards the request to the OpenSearch Serverless service, which inspects the hostname and routes the request to BU1 Collection 1.

To access a collection in BU2, the same flow applies using bu-2-collection-id-1.us-east-1.aoss.amazonaws.com. The CNAME record in the custom PHZ resolves to BU2’s VPC endpoint, and traffic is routed through AWS Transit Gateway or AWS Cloud WAN to BU2’s VPC. The same applies to resources in other spoke accounts whose VPCs are associated with the Route 53 Profiles.

Custom PHZ record structure

The custom PHZ in the central networking account uses the domain us-east-1.aoss.amazonaws.com and contains CNAME records that map each collection endpoint to the regional DNS name of its corresponding VPC endpoint. Note that collections within the same business unit account share the same VPC endpoint, so their CNAME records point to the same regional DNS name. Collections in different business unit accounts point to different VPC endpoints.
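The following sketch illustrates this record structure. It builds a Route 53 `ChangeBatch` from a mapping of VPC endpoint regional DNS names to collection IDs, so collections that share an endpoint produce CNAME records with the same value. The function name, the 300-second TTL, and the example names are assumptions.

```python
from typing import Dict, List

ZONE = "us-east-1.aoss.amazonaws.com"


def build_change_batch(collections_by_endpoint: Dict[str, List[str]],
                       ttl: int = 300) -> Dict[str, object]:
    """Build a Route 53 ChangeBatch with one CNAME record per collection.

    collections_by_endpoint maps a VPC endpoint's regional DNS name to the IDs of
    the collections in the business unit account that owns that endpoint.
    """
    changes = []
    for endpoint_dns, collection_ids in sorted(collections_by_endpoint.items()):
        for collection_id in sorted(collection_ids):
            changes.append({
                "Action": "UPSERT",  # create the record, or overwrite it if it exists
                "ResourceRecordSet": {
                    "Name": f"{collection_id}.{ZONE}",
                    "Type": "CNAME",
                    "TTL": ttl,
                    "ResourceRecords": [{"Value": endpoint_dns}],
                },
            })
    return {"Comment": "OpenSearch Serverless collection CNAMEs", "Changes": changes}
```

The resulting batch can be passed to `boto3.client("route53").change_resource_record_sets(HostedZoneId=zone_id, ChangeBatch=batch)`, where `zone_id` is the custom PHZ's ID.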

Custom PHZ management

Unlike Part 1, where the auto-created PHZs from the VPC endpoint handle DNS resolution, this pattern requires your networking team to manually maintain the custom PHZ. When a business unit adds a new collection, the networking team must add a corresponding CNAME record to the custom PHZ.
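One way to reduce this manual effort is a small reconciliation script. It compares the collection hostnames the business units report against the CNAME records already in the custom PHZ. It then upserts missing or changed records and deletes stale ones. The following is a minimal sketch, assuming the desired hostname-to-endpoint mapping comes from an inventory your business units provide (hypothetical). `plan_record_changes` is an illustrative name.

```python
from typing import Dict, List


def _norm(name: str) -> str:
    """Compare DNS names case-insensitively and without the trailing root dot."""
    return name.rstrip(".").lower()


def plan_record_changes(desired: Dict[str, str], existing: List[dict],
                        ttl: int = 300) -> List[dict]:
    """Diff the desired CNAME records against the custom PHZ's current record sets.

    desired maps collection hostname -> VPC endpoint regional DNS name.
    existing is the list of ResourceRecordSets returned by ListResourceRecordSets.
    """
    # Only CNAME records are managed here; the zone's SOA and NS records are left alone.
    current = {_norm(rr["Name"]): rr for rr in existing if rr.get("Type") == "CNAME"}
    wanted = {_norm(host): target for host, target in desired.items()}

    changes: List[dict] = []
    for host, target in sorted(wanted.items()):
        rr = current.get(host)
        values = [_norm(r["Value"]) for r in rr.get("ResourceRecords", [])] if rr else []
        if values != [_norm(target)]:
            changes.append({"Action": "UPSERT", "ResourceRecordSet": {
                "Name": host, "Type": "CNAME", "TTL": ttl,
                "ResourceRecords": [{"Value": target}]}})
    for host, rr in sorted(current.items()):
        if host not in wanted:
            # DELETE must match the existing record set exactly, so reuse it as returned.
            changes.append({"Action": "DELETE", "ResourceRecordSet": rr})
    return changes
```

To gather `existing`, you could use `route53.get_paginator("list_resource_record_sets").paginate(HostedZoneId=zone_id)` and collect each page's `ResourceRecordSets`. Any non-empty plan can then be applied with `change_resource_record_sets(HostedZoneId=zone_id, ChangeBatch={"Changes": changes})`. Review the planned DELETE actions before applying them, because a missing inventory entry would remove a live collection's record.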

Cost considerations

The architecture patterns described in this post use several AWS services that can contribute to your overall costs, including Amazon Route 53 (hosted zones, DNS queries, and Resolver endpoints) and Route 53 Profiles. We recommend reviewing the official AWS pricing pages for these services for the most current rates.

For a full cost estimate tailored to your workload, use the AWS Pricing Calculator.

Conclusion

In this post, we showed how you can give on-premises clients and spoke account resources private access to OpenSearch Serverless collections distributed across multiple business unit accounts. By centralizing DNS management through a custom PHZ in the networking account and sharing it through Route 53 Profiles, you avoid coordinating PHZ associations across accounts while giving each business unit full ownership of their collections and VPC endpoints.

Combined with Part 1, you now have two architectural approaches for hybrid multi-account access to OpenSearch Serverless: a centralized model where one account owns all collections and a shared VPC endpoint, and a distributed model where multiple business units each manage their own collections and VPC endpoints. Choose the centralized model when a single team manages collections on behalf of the organization. Choose the distributed model when business units need independent ownership of their OpenSearch Serverless infrastructure.

For additional details, refer to the Amazon OpenSearch Serverless VPC endpoint documentation and Route 53 Profiles documentation.