AWS Database Blog

How Cloudability boosted performance, simplified tuning, and lowered costs with Amazon Aurora

Cloudability is a cloud cost-management platform that empowers enterprises to run with known cloud financials and full accountability. Our platform collects an avalanche of billing and utilization data points—33 million per resource, with over 275,000 services and options from each cloud vendor and more than 1,000 new services rolled out annually per cloud provider.

Using analytics, automation, and machine learning, Cloudability’s True Cost Engine augments and transforms this data based on a company’s discounts, credits, commitments, reservations, allocations, and amortization.

Cloudability’s TrueCost Explorer lets you analyze your billing data and understand how your usage translates into costs.

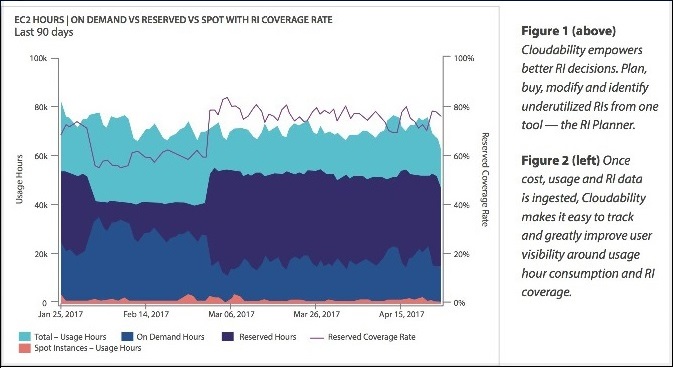

Cloudability enables better Reserved Instance (RI) decisions. You can use the Reserved Instance Planner to plan, buy, modify, and identify underused RIs.

After the cost, usage, and RI data are ingested, Cloudability makes it easy to track usage-hour consumption and RI coverage, giving users better visibility into both.

The situation

In spring 2017, we embarked on a project to roll out a new predictive model for the True Cost Engine. The goal was to develop predictive models that would deliver even greater cost efficiency to customers running in AWS.

To support this kind of analysis, we needed a database that was performant, scalable, cost-effective, and easy to maintain and tune. In this post, we’ll show how we found Amazon Aurora with PostgreSQL compatibility to be the best choice to meet these requirements.

The project

Early last year, Cloudability’s data science team decided to use petabytes of customer cost optimization data to develop new predictive models. These models would take into account historical Spot pricing trends, vacancies in customers’ RI portfolios, instance usage patterns, and customer-specified adjustments to price lists, among other factors.

The models needed to be trained on hundreds of millions of data points from historical AWS Spot price datasets. These data points had to be retained to support continued development of the models. The models also had to adjust in real time to changes in the Spot Instance data feed, a data stream provided by AWS that describes customers’ Amazon EC2 Spot Instance usage and pricing.

Finally, with the additional complexity of unique models for different regions, instance families, and instance types, the team realized that the underlying database would be under significant, continuous stress.

Scalability with multiple reader nodes

The majority of stress on the database was from handling the Spot Instance data feed. The feed generated roughly 1 million new data points per day, which would, in turn, force updates to the prediction models. Although the feed did not generate an extremely high write load, the updates would trigger a flood of read traffic on the database. Because the updates were occurring continuously, we needed to ensure that readers and writers didn’t contend with each other and further exacerbate latency challenges.
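To put the feed volume in perspective, the short sketch below converts the daily figure quoted above into an average per-second rate. It assumes, purely for illustration, that data points arrive evenly throughout the day; the real feed is likely burstier, and the function name is our own.

```python
SECONDS_PER_DAY = 24 * 60 * 60  # 86,400 seconds


def average_rate_per_second(points_per_day: float) -> float:
    """Average arrival rate, assuming points are spread evenly over a day."""
    return points_per_day / SECONDS_PER_DAY


# Roughly 1 million new data points per day, as described above.
rate = average_rate_per_second(1_000_000)
print(f"{rate:.1f} new data points per second on average")
```

An average on the order of a dozen writes per second is modest, which is consistent with the read traffic triggered by model updates, rather than the writes themselves, being the main source of load.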

Because Aurora clusters have a single writer and multiple readers sharing the same underlying storage, it was easy to implement read-side scalability. We were also confident that we could accommodate increases in write traffic for the foreseeable future by vertically scaling the writer. We had great success in the past scaling an OLAP and reporting database on Amazon Aurora MySQL to a very large cluster with a single writer and many readers, so we expected the same experience with Aurora PostgreSQL.
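One common way to take advantage of this layout is to send read-only statements to the cluster's reader endpoint and everything else to the writer (cluster) endpoint. The following is a minimal sketch of that idea, not a description of Cloudability's actual code: the `AuroraEndpoints` class, the `pick_endpoint` helper, and the hostnames are hypothetical placeholders, and the statement check is deliberately simplistic.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AuroraEndpoints:
    """Hostnames for one cluster (placeholder values, not real endpoints)."""
    writer: str  # cluster endpoint, which points at the single writer
    reader: str  # reader endpoint, which balances across the readers


# Only statements that start with these keywords go to the readers.
# Anything else (INSERT, UPDATE, WITH ..., etc.) defaults to the writer.
READ_ONLY_KEYWORDS = ("select", "show")


def pick_endpoint(endpoints: AuroraEndpoints, sql: str) -> str:
    """Return the hostname a statement should be sent to."""
    words = sql.lstrip().split(None, 1)
    first_word = words[0].lower() if words else ""
    if first_word in READ_ONLY_KEYWORDS:
        return endpoints.reader
    return endpoints.writer


endpoints = AuroraEndpoints(
    writer="example.cluster-abc123.us-west-2.rds.amazonaws.com",
    reader="example.cluster-ro-abc123.us-west-2.rds.amazonaws.com",
)
print(pick_endpoint(endpoints, "SELECT price FROM spot_prices LIMIT 10"))
print(pick_endpoint(endpoints, "INSERT INTO spot_prices VALUES (1, 0.05)"))
```

Defaulting to the writer keeps the sketch safe for statements it does not recognize. A real router would also need to handle cases this check misses, such as `SELECT ... FOR UPDATE`, and reads that must see the most recent write, because readers can lag slightly behind the writer.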

Ease of tuning with Performance Insights

A perpetual management chore in our large production database clusters is monitoring, tracking, and isolating slow-running or load-intensive queries. Our application performance monitoring (APM) solution can provide clues. However, for our backend services that use multiple language runtimes with calls across process and host boundaries, such APM frameworks aren’t easily integrated.

Our database engines can also generate a large amount of statistics, which can be overwhelming. For slow query logs, someone still has to go through the process of aggregating data from logs, correlating them with database load, and isolating the queries—an ad hoc and tedious process.

Thus, we were thrilled when Performance Insights was announced for Aurora PostgreSQL. With Performance Insights, we have been able to quickly isolate slow and underperforming queries. This feature helped us detect important performance issues in development and avoid problems that would have affected our customers and our business in production. As shown in the following screenshots, Performance Insights provides a simple dashboard that visualizes database load and allows you to easily identify sources of bottlenecks.

Surprisingly cost-effective

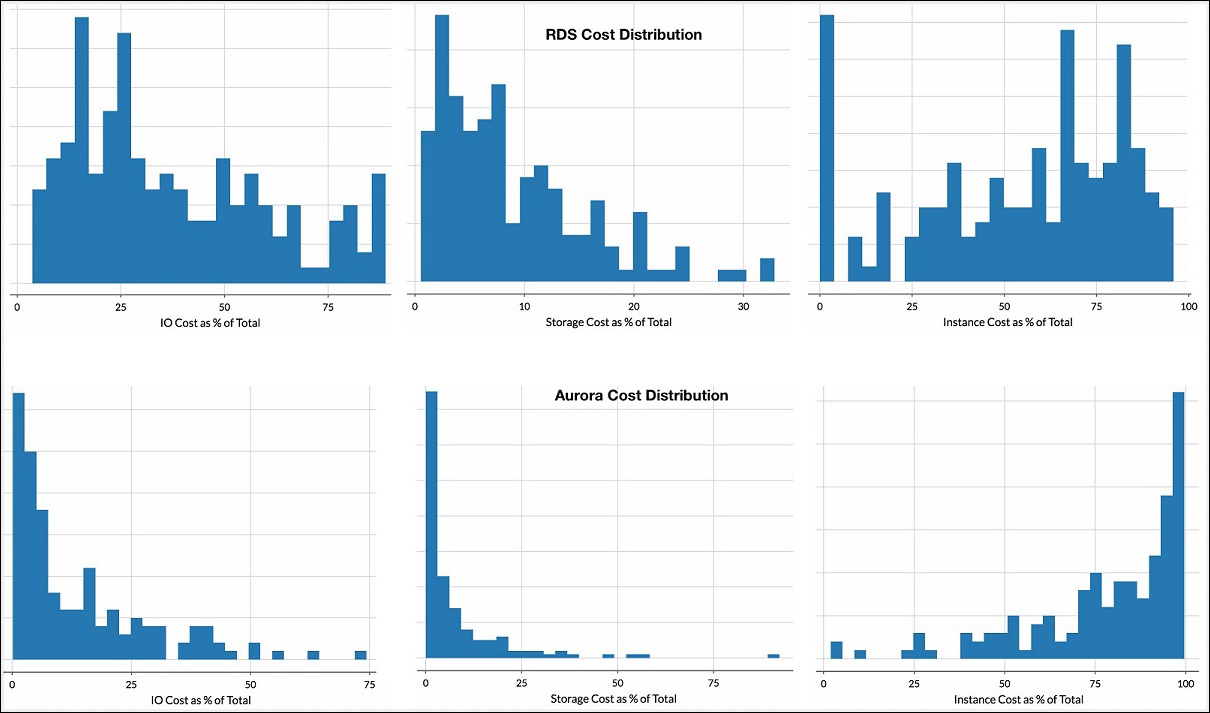

There can be significant cost savings when using Aurora compared to similar Amazon RDS deployments. A production Amazon RDS cluster usually incurs costs for Provisioned IOPS and Multi-AZ storage. In large production clusters, these costs can often exceed the Amazon RDS instance costs. With Aurora, these costs are almost entirely eliminated, as shown in the following cost distribution charts.

We found that some large AWS customers could potentially save $50k per month just in Multi-AZ Provisioned IOPS costs. Add in the Multi-AZ storage rates, and the potential savings are even higher. In general, if your Amazon RDS cluster I/O costs exceed 30 percent of the total, you should evaluate Amazon Aurora.
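The 30 percent rule of thumb can be checked with a few lines of arithmetic. The sketch below is illustrative only: the function names are our own, the dollar amounts are made up, and which line items on your bill count as "I/O costs" (for example, Provisioned IOPS charges) is an assumption you would need to settle for your own account.

```python
def io_cost_share(io_cost: float, other_costs: float) -> float:
    """Fraction of the total monthly database bill that goes to I/O."""
    total = io_cost + other_costs
    if total <= 0:
        raise ValueError("total cost must be positive")
    return io_cost / total


def should_evaluate_aurora(io_cost: float, other_costs: float,
                           threshold: float = 0.30) -> bool:
    """Apply the rule of thumb: evaluate Aurora if I/O exceeds the threshold."""
    return io_cost_share(io_cost, other_costs) > threshold


# Hypothetical monthly figures in USD, for illustration only.
io_cost = 4_000.0       # e.g. Provisioned IOPS charges
other_costs = 6_000.0   # e.g. instance and storage charges

print(f"I/O share of total: {io_cost_share(io_cost, other_costs):.0%}")
print("Evaluate Aurora:", should_evaluate_aurora(io_cost, other_costs))
```

The threshold is left as a parameter so that you can apply a stricter or looser cutoff than the 30 percent suggested here.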

Thus, you could lower your database costs by moving to Amazon Aurora. How amazing is that?

Conclusion

We needed an enterprise-grade database solution for our newest offering, and we found an ideal match in Amazon Aurora PostgreSQL. The scalability, cost, and ease of maintenance and tuning really stood out to us. In fact, we like Aurora so much that we are standardizing our future database deployments on the Aurora platform—both PostgreSQL and MySQL. As we head into the future, we look forward to helping our customers find more and more ways to optimize their costs in the cloud. Aurora will be an integral part of that effort.

About the Authors

Rich Hua is a business development manager for Aurora and RDS for PostgreSQL at Amazon Web Services.

Alok Singh is the Principal Data Science Engineer at Cloudability. He is responsible for building models for real-time predictive pricing, cross-cloud workload optimization, and cloud usage and trends analysis.
