AWS Database Blog

Valkey turns two

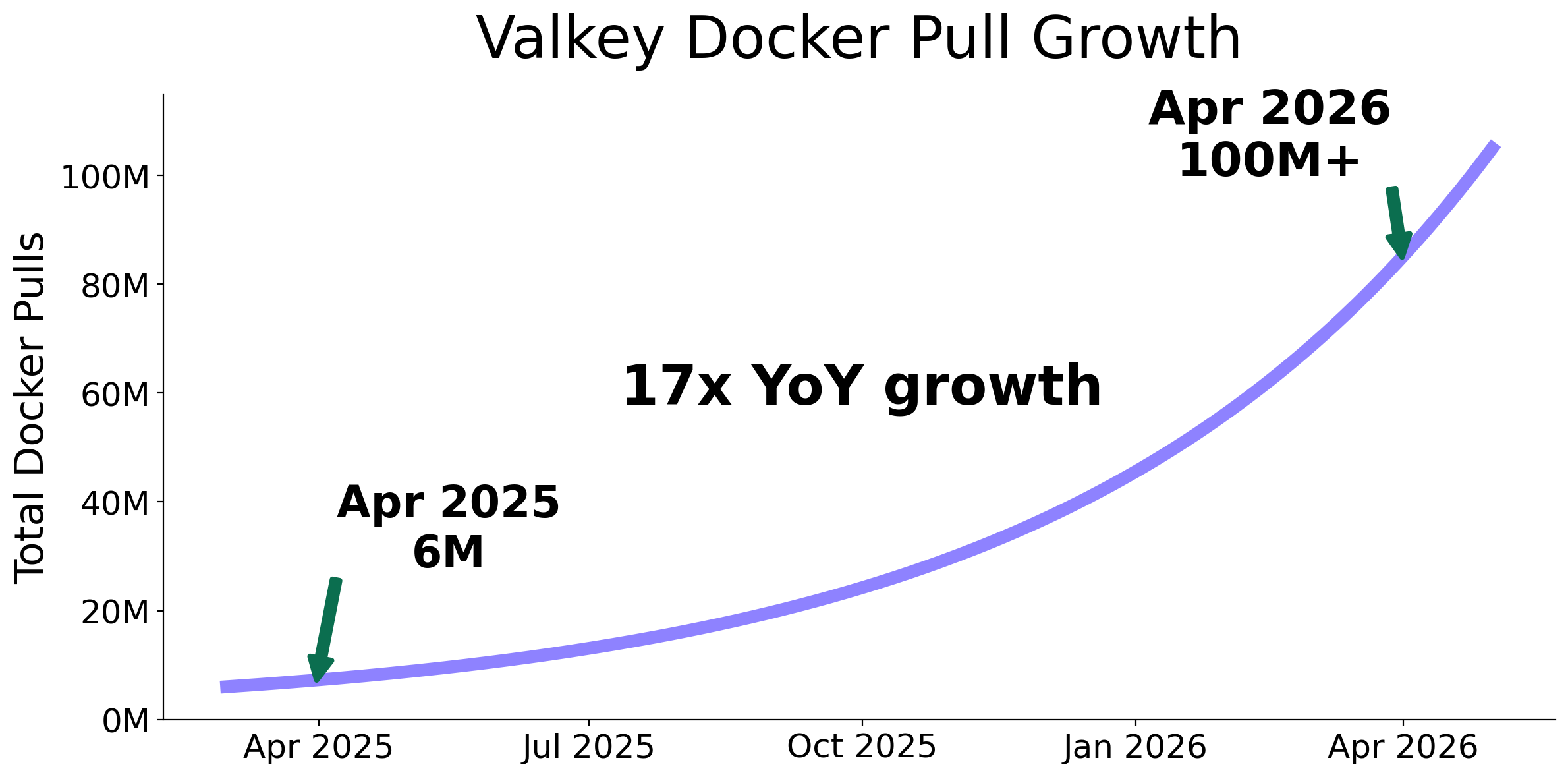

Two years ago, Valkey emerged as a community-driven response to the need for a truly open, vendor-neutral alternative to Redis. In that short time, Valkey has become the default high-performance key-value datastore across nearly every major cloud provider and open source distribution, and the center of gravity for community-led innovation in the caching space. Valkey has surpassed 100 million Docker pulls (up 17x year over year) and attracted more than 225 contributors who have submitted over 1,500 pull requests, roughly double the development pace of Redis over the same period. In this post, we look back at two years of progress, highlighting Valkey’s rapid adoption, the innovations delivered by the community, and what these developments mean for the future of modern caching and real-time data infrastructure.

This momentum has created a powerful innovation flywheel. As adoption has expanded across cloud providers, enterprises, and developers, contributions from across the Valkey open source ecosystem have driven improvements in security, reliability, performance, cost efficiency, and functionality. In production, these contributions show up as measurable gains, such as up to 60% better price-performance, and as new capabilities including full-text search, aggregations, vector similarity search, and Bloom filters. Those gains have in turn led tens of thousands of Amazon ElastiCache customers to move hundreds of thousands of clusters to Valkey. Organizations such as Intuit, Airbnb, Nextdoor, Tinder, Peloton, Amazon Ads, and Amazon Music now rely on Valkey to power some of their most latency-sensitive and business-critical systems.

This innovation flywheel is made possible by Valkey’s open governance and community-driven development model. As organizations adopt Valkey and run it at scale, their real-world experience feeds directly back into the project through contributions, benchmarks, and operational feedback. This accelerates the pace of innovation for the entire Valkey ecosystem.

“What makes Valkey remarkable isn’t hero stats like 100 million Docker pulls or over 25 thousand GitHub stars, it’s the community behind them. A diverse group of developers from Ericsson, ByteDance, Intel, Salesforce, Snap, and dozens of other organizations along with nearly every cloud provider are building Valkey together. That kind of broad, open collaboration with community-led governance is how the best technology gets made over the long term.”

— Matt Wilson, Vice President and Distinguished Engineer, AWS

Cache me if you can: Valkey adoption

Valkey’s growth is visible not only in usage metrics, but in the breadth of the ecosystem forming around it. In just two years, Valkey has moved from a new open source project to a widely supported foundation for caching, real-time data access, search, and artificial intelligence (AI) workloads. Participation spans cloud providers, commercial service providers, application frameworks, and developer communities across the industry.

That ecosystem now includes more than ten managed Valkey offerings from cloud providers such as AWS, Google, Alibaba, Oracle, Tencent, and DigitalOcean. Service providers and commercial distributors including Percona, Aiven, and Momento are building managed services, enterprise support models, and value-added offerings around the project. At the application layer, frameworks such as Spring Data Valkey and Laravel have added support for Valkey. AI and large language model (LLM) frameworks including LangChain, Haystack, Cognee, and Recall have introduced Valkey integrations for vector storage, retrieval, and agent memory. Together, these integrations are expanding Valkey’s role from a high-performance cache to a core data layer for modern real-time and AI applications.

On Amazon ElastiCache, Valkey adoption reflects the same industry momentum. Real-world production deployments from some of ElastiCache’s largest customers highlight the scale at which Valkey is running today, powering internet-scale applications that demand extreme throughput, low latency, and continuous availability:

- Intuit reported up to an 80% reduction in downstream service calls and approximately 95% lower latency after upgrading to ElastiCache for Valkey for critical identity workloads.

- Sanoma uses ElastiCache for Valkey to improve AI-assisted comment moderation across its news brands by storing human moderator overrides as vectors. Today, 30% of comments are matched against past moderator decisions. In 6.5% of all cases, that context directly changes the AI’s call, improving accuracy without retraining models or operating a separate vector database.

- Tinder relies on the low-latency performance of Amazon ElastiCache to process billions of daily user actions and support real-time interactions across its global dating platform.

- Peloton uses ElastiCache to power its live leaderboards, allowing riders around the world to compete in real time with sub-millisecond updates.

- Amazon Ads uses ElastiCache for Valkey to power the Sponsored Products advertising platform. The system runs clusters that span hundreds of nodes and delivers billions of ad impressions per day while processing tens of millions of requests per second. Even at this scale, the system maintains p99 latencies under 5 ms and 99.99% availability across global infrastructure.

- Nextdoor upgraded more than 800 nodes to Valkey to reduce operating costs while continuing to power its neighborhood-based social platform.

“It’s exciting to see projects like Valkey gain momentum so quickly as organizations adopt them to run mission-critical workloads at global scale. By contributing engineering expertise and operational experience back to the community, we help accelerate innovation across the ecosystem. Our customers benefit from a faster pace of improvement in performance, reliability, and capabilities.”

— G2 Krishnamoorthy, Vice President, Databases, AWS

The work behind the momentum

Rapid adoption has been matched by rapid innovation. Contributions from organizations across the Valkey community have produced a surge of improvements in performance, reliability, cost efficiency, and functionality. Since the project began, more than 225 contributors have submitted over 1,500 pull requests. Measured by both active contributors and merged pull requests, that is nearly double the development pace of Redis over the same period. This pace highlights the strength of open source collaboration compared with traditional single-vendor development models.

Many of the world’s leading technology companies actively contribute to the project, including ByteDance, Intel, Salesforce, and Snap, alongside nearly every major cloud provider. The pace of development reflects an open, industry-wide collaboration model in which organizations jointly invest in improving the core infrastructure they depend on. More important than the numbers, though, is the impact of what the community is building. Two years of innovation is difficult to capture fully in one post, but the following highlights illustrate several of the improvements that have emerged from this growing community. They include the latest innovations from Valkey 9, which was recently announced as generally available on Amazon ElastiCache in a separate blog post.

Performance

Valkey 9 continues to push the boundaries of what in-memory data stores can achieve. Performance improvements include up to 40% higher throughput from pipelined command memory prefetching, up to 20% higher throughput from zero-copy responses, and up to 25% lower latency with Multipath TCP. These gains build on earlier Valkey performance improvements, including up to 230% higher throughput and up to 70% better latency from the redesigned I/O threading architecture introduced in Valkey 8. ElastiCache also achieved up to 5 million requests per second per cache with Amazon ElastiCache Serverless. But performance only matters if it remains predictable under real-world conditions, so the Valkey community has also invested heavily in making sure these gains hold up as consistent, reliable behavior at scale.
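Pipelining is what gives the server something to prefetch: a client writes several commands in a single buffer, so the server receives a batch whose keys it can, roughly speaking, start loading into cache before executing each command. The sketch below shows only the client side of that exchange, encoding a pipeline in RESP, the request format Valkey clients use (each command is an array of bulk strings). The function names are hypothetical, this is not Valkey's prefetching code, and in practice a client library does this encoding for you.

```python
from typing import Iterable, Sequence, Union

# A command argument may be text or raw bytes.
Arg = Union[str, bytes]


def encode_command(args: Sequence[Arg]) -> bytes:
    """Encode one command as a RESP array of bulk strings."""
    parts = [b"*%d\r\n" % len(args)]  # array header: number of elements
    for arg in args:
        data = arg.encode("utf-8") if isinstance(arg, str) else arg
        # Each bulk string is: $<byte length>\r\n<payload>\r\n
        parts.append(b"$%d\r\n%s\r\n" % (len(data), data))
    return b"".join(parts)


def encode_pipeline(commands: Iterable[Sequence[Arg]]) -> bytes:
    """Concatenate several encoded commands so they can go out in one write."""
    return b"".join(encode_command(cmd) for cmd in commands)
```

A client would send the result of `encode_pipeline([("SET", "user:1", "alice"), ("GET", "user:1")])` with a single socket write and then read the replies back in the same order the commands were sent, paying one network round trip instead of two.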

Reliability

Reliability work in Valkey aims to keep behavior steady under real production conditions. Recent Valkey releases have introduced Active Defragmentation improvements that reduce p99 latency spikes, and dual-channel replication, which can cut full synchronization times by up to 50% while reducing memory pressure on primaries. TLS full-sync optimizations deliver up to 18% faster synchronization. Together, these enhancements help keep latency predictable and recovery fast as customers run larger and more demanding production workloads.

Cost efficiency

Valkey 9 improves cost efficiency with numbered databases in cluster mode. Teams can logically separate applications, tenants, or environments within the same distributed cluster instead of over-provisioning separate infrastructure for every namespace. This builds on earlier Valkey efficiency gains, including core data-structure optimizations in Valkey 8 and 8.1 that can deliver up to 60% better price-performance compared to Redis OSS on ElastiCache. The result is smaller clusters, lower infrastructure costs, and more headroom for growth. As the core engine becomes more efficient, Valkey helps customers run larger workloads with less infrastructure while continuing to expand into new categories of applications beyond traditional caching.
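To see how several namespaces can share one cluster, it helps to recall how cluster mode routes keys. Per the cluster specification, a key's slot is the CRC16 (XMODEM variant) of the key, or of its non-empty `{hash tag}` if one is present, modulo 16384. The sketch below implements that routing function and pairs it with a toy model in which each database number selects a separate keyspace. Assumption for illustration: the database number does not enter the slot calculation, so the same key name in two databases routes to the same slot while holding independent values. The `ToyClusterKeyspace` class and its methods are hypothetical.

```python
from typing import Dict, List, Optional

NUM_SLOTS = 16384


def crc16_xmodem(data: bytes) -> int:
    """CRC-16/XMODEM: poly 0x1021, init 0, no reflection, no final XOR."""
    crc = 0
    for byte in data:
        crc ^= byte << 8
        for _ in range(8):
            if crc & 0x8000:
                crc = ((crc << 1) ^ 0x1021) & 0xFFFF
            else:
                crc = (crc << 1) & 0xFFFF
    return crc


def key_hash_slot(key: bytes) -> int:
    """Map a key to a cluster slot, hashing only a non-empty {tag} if present."""
    start = key.find(b"{")
    if start != -1:
        end = key.find(b"}", start + 1)
        if end > start + 1:  # ignore an empty tag such as "{}"
            key = key[start + 1:end]
    return crc16_xmodem(key) % NUM_SLOTS


class ToyClusterKeyspace:
    """Hypothetical model: one dict per numbered database; routing uses the key only."""

    def __init__(self, num_databases: int = 16) -> None:
        self._dbs: List[Dict[bytes, bytes]] = [{} for _ in range(num_databases)]

    def set(self, db: int, key: bytes, value: bytes) -> int:
        """Store value in database db and return the slot the key routes to."""
        self._dbs[db][key] = value
        return key_hash_slot(key)

    def get(self, db: int, key: bytes) -> Optional[bytes]:
        return self._dbs[db].get(key)
```

Under these assumptions, two tenants mapped to databases 0 and 1 can both use a key named `user:42` without colliding, and neither needs its own set of shards.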

New capabilities

With Valkey 9 on Amazon ElastiCache, customers can access the latest Valkey search capabilities. These extend the previously added vector search with full-text search, server-side aggregations, and hybrid queries, enabling real-time search, semantic retrieval, filtering, and analytics directly inside the cache. These capabilities help customers search and analyze terabytes of continuously updated data with microsecond latency and throughput up to millions of requests per second. Use cases include product catalog search, document discovery, recommendation systems, anomaly detection, log analytics, conversational memory, and retrieval-augmented generation (RAG).
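At the core of vector similarity search is a nearest-neighbor query: given a query embedding, return the stored vectors most similar to it. The Sanoma use case described earlier follows this pattern, matching a new comment's embedding against stored moderator decisions. The sketch below is an exact, brute-force k-nearest-neighbor search using cosine similarity in plain Python, with made-up IDs and two-dimensional vectors. It illustrates the query semantics only; a search engine typically builds an index (for example, an approximate HNSW graph) instead of scanning every vector, and Valkey search is queried through its own commands rather than code like this.

```python
import heapq
import math
from typing import Dict, List, Sequence, Tuple


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine of the angle between a and b; 0.0 if either vector is all zeros.

    Assumes both vectors have the same dimension.
    """
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


def knn(
    query: Sequence[float], corpus: Dict[str, Sequence[float]], k: int
) -> List[Tuple[str, float]]:
    """Exact k-nearest neighbors by linear scan, best match first."""
    scored = ((doc_id, cosine_similarity(query, vec)) for doc_id, vec in corpus.items())
    return heapq.nlargest(k, scored, key=lambda item: item[1])
```

For example, with stored vectors `{"override:1": [1.0, 0.0], "override:2": [0.0, 1.0], "override:3": [0.9, 0.1]}`, the query `[1.0, 0.0]` with `k=2` returns `override:1` and then `override:3`. The linear scan costs time proportional to the number of stored vectors per query, which is why indexes matter at the scale described above.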

Valkey 9 also introduces hash field expiration, giving teams fine-grained TTL control over individual fields within a hash. This removes the need to expire an entire key at once or add application-side expiration logic. These innovations build on other capabilities added across recent Valkey engine releases and modules, including Bloom filters, JSON support, LDAP integrations, and geospatial polygon queries.
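Hash field expiration attaches a TTL to an individual field rather than to the whole key; in Valkey 9 it is exposed through commands such as `HEXPIRE` and `HTTL` (see the Valkey command reference for exact syntax and options). The toy model below is not Valkey's implementation. It illustrates the semantics in plain Python: each field may carry its own deadline, and an expired field disappears while its siblings in the same hash remain. The `ExpiringHash` class and its method names are hypothetical, and the model only checks expiry when a field is accessed; it does not model any background reclamation a server may perform.

```python
import time
from typing import Callable, Dict, Optional


class ExpiringHash:
    """Toy in-memory hash in which each field can carry its own TTL."""

    def __init__(self, clock: Callable[[], float] = time.monotonic) -> None:
        self._clock = clock  # injectable so time can be controlled in tests
        self._values: Dict[str, str] = {}
        self._deadlines: Dict[str, float] = {}

    def hset(self, field: str, value: str, ttl_seconds: Optional[float] = None) -> None:
        """Set a field; pass ttl_seconds to give it an expiry, or None for no TTL."""
        self._values[field] = value
        if ttl_seconds is None:
            self._deadlines.pop(field, None)
        else:
            self._deadlines[field] = self._clock() + ttl_seconds

    def expire_field(self, field: str, ttl_seconds: float) -> bool:
        """Attach a TTL to an existing field; return False if the field is absent."""
        self._drop_if_expired(field)
        if field not in self._values:
            return False
        self._deadlines[field] = self._clock() + ttl_seconds
        return True

    def hget(self, field: str) -> Optional[str]:
        self._drop_if_expired(field)
        return self._values.get(field)

    def _drop_if_expired(self, field: str) -> None:
        # Lazy expiry: a field is removed only when it is next accessed.
        deadline = self._deadlines.get(field)
        if deadline is not None and self._clock() >= deadline:
            del self._values[field]
            del self._deadlines[field]
```

With this model, a user name and a one-time code can share one hash: `hset("otp", "123456", ttl_seconds=30)` lets the code lapse after 30 seconds while the name stays, the case that previously required expiring the entire key or tracking deadlines in application code.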

Building with our key values

Valkey’s rapid adoption over the past two years highlights the power of open collaboration at industry scale. Widespread developer usage, broad participation from cloud providers and technology companies, and neutral open governance have created a powerful flywheel: as more organizations deploy Valkey in production, their operational experience feeds directly back into the project, driving continuous improvements in performance, reliability, scalability, and cost efficiency.

Running Valkey across hundreds of thousands of clusters and some of the world’s most demanding real-time applications continues to surface new opportunities for innovation. Contributions from across the Valkey open source ecosystem strengthen the core engine while expanding what developers can build. Use cases now range from traditional caching and session management to real-time analytics, search, and AI-driven workloads.

We invite developers, operators, and organizations of all sizes to participate on Valkey’s GitHub and Slack, whether by contributing code, providing feedback, or adopting Valkey in production. Check out the latest innovations on Valkey’s blog and join us at upcoming community events and technical sessions that continue to bring together contributors and users from around the world.

Two years in, Valkey stands as proof that open, community-driven technology innovates faster, scales further, and delivers more value than any single-vendor model. The journey is just beginning.