Artificial Intelligence

AI agents in enterprises: Best practices with Amazon Bedrock AgentCore

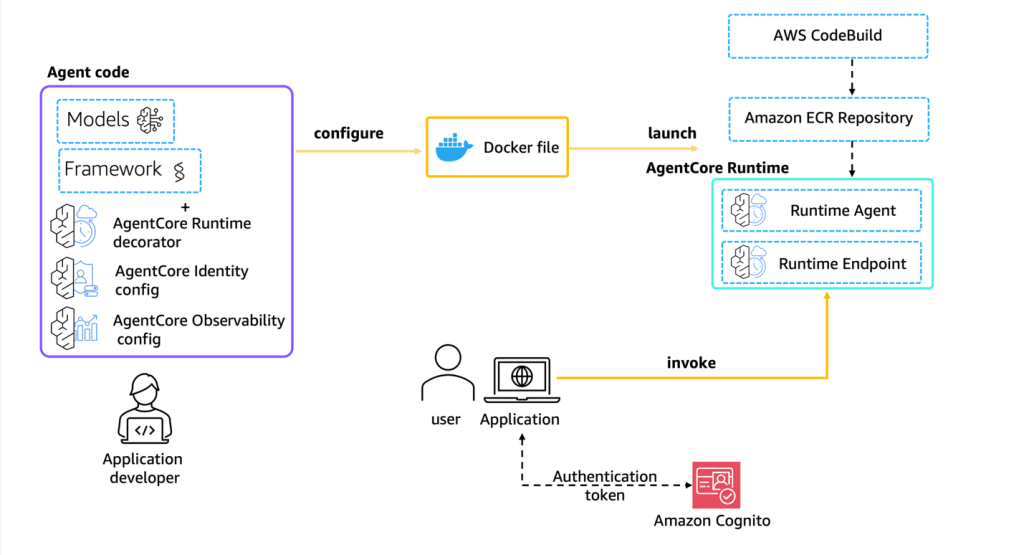

This post explores nine essential best practices for building enterprise AI agents using Amazon Bedrock AgentCore. Amazon Bedrock AgentCore is an agentic platform that provides the services you need to create, deploy, and manage AI agents at scale. In this post, we cover everything from initial scoping to organizational scaling, with practical guidance that you can apply immediately.

Move your AI agents from proof of concept to production with Amazon Bedrock AgentCore

This post explores how Amazon Bedrock AgentCore helps you transition your agentic applications from experimental proof of concept to production-ready systems. We follow the journey of a customer support agent that evolves from a simple local prototype to a comprehensive, enterprise-grade solution capable of handling multiple concurrent users while maintaining security and performance standards.

Best practices for building robust generative AI applications with Amazon Bedrock Agents – Part 2

In this post, we dive into the architectural considerations and development lifecycle practices that can help you build robust, scalable, and secure intelligent agents.

Best practices for building robust generative AI applications with Amazon Bedrock Agents – Part 1

In this post, we show you how to create accurate and reliable agents. Agents help you accelerate generative AI application development by orchestrating multistep tasks. They use the reasoning capability of foundation models (FMs) to break down user-requested tasks into multiple steps.

Fine-tune your Amazon Titan Image Generator G1 model using Amazon Bedrock model customization

Amazon Titan Image Generator G1 is a cutting-edge text-to-image model, available via Amazon Bedrock, that is able to understand prompts describing multiple objects in various contexts and captures these relevant details in the images it generates. It is available in US East (N. Virginia) and US West (Oregon) AWS Regions and can perform advanced image […]

MLOps deployment best practices for real-time inference model serving endpoints with Amazon SageMaker

After you build, train, and evaluate your machine learning (ML) model to ensure it’s solving the intended business problem, you want to deploy that model to enable decision-making in business operations. Models that support business-critical functions are deployed to a production environment where a model release strategy is put in place. Given the nature […]

Next generation Amazon SageMaker Experiments – Organize, track, and compare your machine learning trainings at scale

Today, we’re happy to announce updates to our Amazon SageMaker Experiments capability of Amazon SageMaker that lets you organize, track, compare and evaluate machine learning (ML) experiments and model versions from any integrated development environment (IDE) using the SageMaker Python SDK or boto3, including local Jupyter Notebooks. Machine learning (ML) is an iterative process. When solving […]

Part 1: How NatWest Group built a scalable, secure, and sustainable MLOps platform

This is the first post of a four-part series detailing how NatWest Group, a major financial services institution, partnered with AWS to build a scalable, secure, and sustainable machine learning operations (MLOps) platform. This initial post provides an overview of how the AWS and NatWest Group joint team implemented Amazon SageMaker Studio as the standard for […]