AWS for M&E Blog

Live streaming localization and accessibility using AWS Media Services

Live streaming has transformed how we consume content, from corporate communications to sports broadcasting. With 82–85% of sports fans using streaming services, the demand for accessible, multilingual content is higher than ever. However, traditional approaches to live captioning and translation are prohibitively expensive, requiring significant human resources and technical infrastructure. This cost barrier often forces organizations to limit their language offerings, excluding millions of potential viewers who are deaf or hard of hearing, non-native speakers, or international audiences. AWS Media Services makes it possible to automatically generate high-quality captions and translations in multiple languages at scale, dramatically reducing costs while expanding global reach.

This blog post provides a comprehensive overview of current streaming technology for localization and accessibility. It helps you evaluate live streaming localization technology and choose cost-effective, proven, integrated, deployable, and production-ready solutions.

Target audience

This post is designed for professionals involved in business decisions, technical evaluation, and implementation of live streaming localization and accessibility projects where multiple language captions, subtitles, and live dubbing are required. If you’re interested in learning about current industry developments in accessible live streaming, this serves as an excellent reference resource.

Business benefits

If you own content rights or create content, reaching global audiences is increasingly important. Offering multi-language subtitles and dubbed audio significantly expands market reach and unlocks new revenue opportunities. Content creators that provide multilingual options see measurable impact: broader international viewership, increased engagement across diverse demographics, and enhanced brand reputation. By removing language barriers, businesses can monetize content in previously untapped markets and maximize return on content investment.

Cost considerations and market expansion

Traditional audio transcription, translation, and dubbing services have restricted accessible content because of high costs, leaving many creators unable to reach global audiences. This guide demonstrates how you can use cloud-based services and generative AI to dramatically reduce these barriers to entry. The significantly lower costs enable organizations of all sizes—from independent content creators to large enterprises—to make their live streams accessible across languages and abilities. This transformation in localization technology helps create new opportunities for content producers while improving capital efficiency and delighting viewers worldwide.

Architecture overview and prerequisites

Before implementing this solution, you should have a basic understanding of live streaming architectures. The post "Build a resilient cross-region live streaming architecture on AWS" provides the foundational knowledge you’ll need.

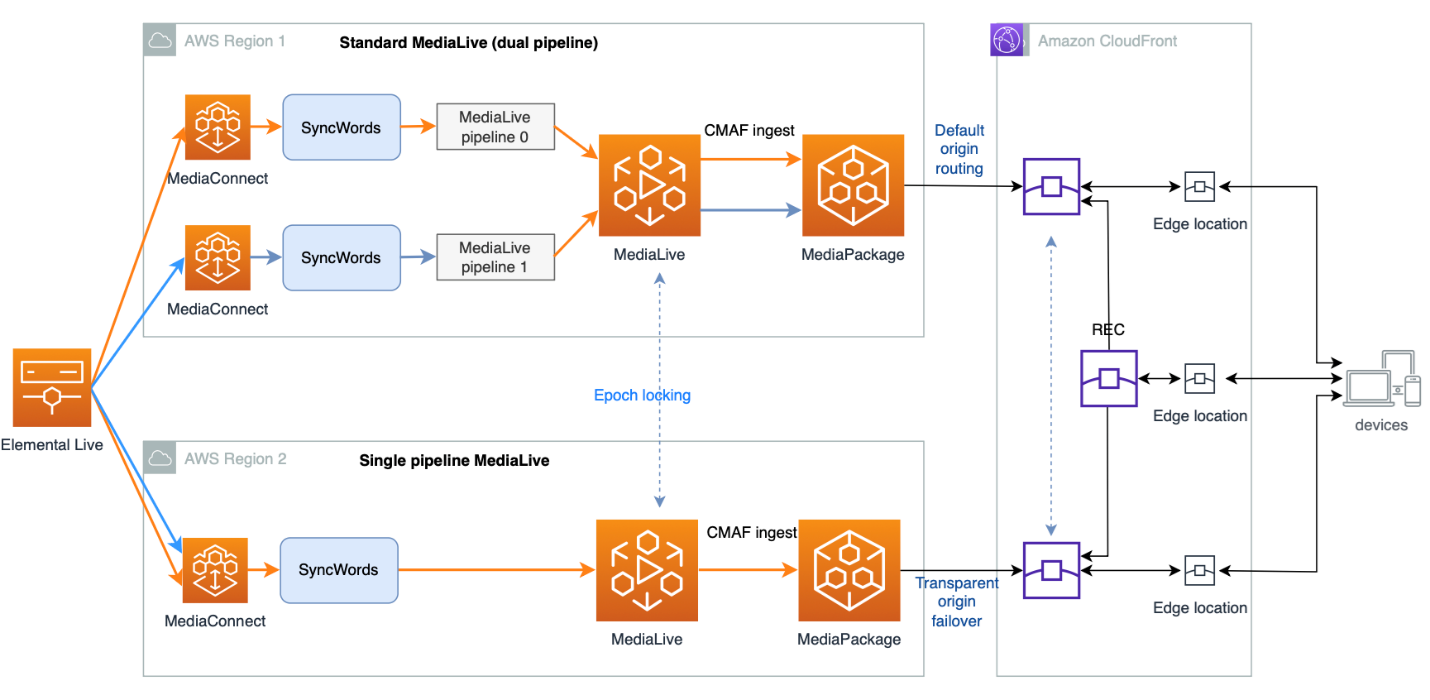

For high-value live streaming events, a resilient and redundant architecture is crucial. While the reference architecture demonstrates a comprehensive cross-AWS Region solution with full redundancy, you can adapt it to match your specific needs and budget:

- Scale down to a single Region

- Use a single-pipeline channel instead of a standard (dual-pipeline) channel

This guide focuses on adding localization capabilities—including captioning, subtitles, and audio dubbing—as modular components to your streaming architecture. We reference industry standards like CEA-608/708 (the North American closed captioning standard), DVB Subtitle, DVB Teletext, and HTTP Live Streaming (HLS) protocols, providing context for these technical elements throughout the guide.

Design considerations

To choose the right localization technologies, consider these key aspects:

- Start with your current live streaming architecture workflow

- Determine language requirements

- Assess whether languages are supported by the 608 protocol

- Evaluate live dubbing needs

- Define latency requirements

- Determine if the streaming is for live events or 24×7 broadcast channels

These answers help determine the best architecture and services and how to integrate the technology with your current live streaming workflow. The caption, subtitle, and dubbing processes rely heavily on Automatic Speech Recognition (ASR) technologies, machine learning, and generative AI technologies to transcribe, translate, and generate audio dubbing. These technologies sit at the forefront of innovation, rapidly evolving and continuously improving. An architecture that allows customers to choose the right technology for the right job is important and helps protect your investment.

How to choose an architecture to implement localization

Choose the right integration point to reduce complexity when integrating localization features into your live streaming workflow. A typical live streaming workflow includes video transportation using AWS Elemental MediaConnect, video transcoding using AWS Elemental MediaLive, video origin service using AWS Elemental MediaPackage, and final distribution to users through a content distribution network (CDN) such as Amazon CloudFront.

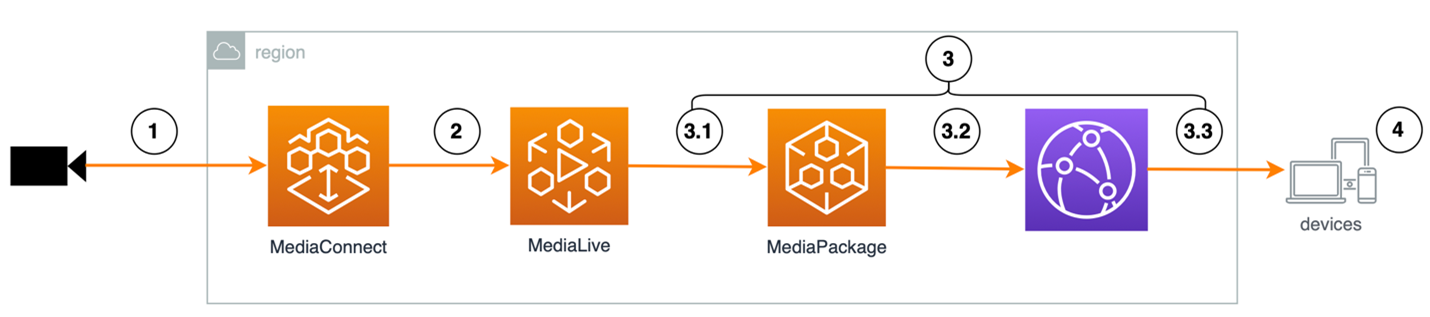

Following the video processing pipeline, the workflow has the following integration points (shown in Figure 1):

Figure 1: Integrations points in the media processing pipeline

- Integration point 1: Video is sent from the video source to MediaConnect. This step is optional, and you can use an alternative protocol such as RTMP to send video from the source directly into MediaLive. MediaConnect provides secure transport with optional video encryption using the SRT protocol.

- Integration point 2: This integration point is positioned immediately before video ingestion into MediaLive. MediaLive can convert embedded 608 captions into WebVTT for HTTP Live Streaming (HLS). This option is commonly used when the targeted languages are supported by 608, such as English, Spanish, French, Dutch, German, Portuguese, and Italian. MediaLive can also process embedded captions such as DVB Subtitle and DVB Teletext if present.

- Integration point 3: This phase of integration includes points 3.1, 3.2, and 3.3. At these points, the video has been transcoded and processed, and a main manifest playlist and multiple bitrate video segments are produced, ready for distribution. At this point, you can augment the playlist with additional subtitle and audio dubbing tracks, align the timing with the original video, and insert them into the main manifest or produce an alternative manifest to include the subtitles and audio dubbing tracks.

- Integration point 4: This is the last integration point, on the client side. This method requires client-side development such as iFrame embedding for browser-based players or application development to integrate SDKs or APIs to retrieve subtitles or audio dubbing tracks from an endpoint other than the original video.

Let’s examine each integration point for a detailed analysis of pros and cons.

Integration point analysis

Integration point 1: Choose this integration point when you already have a captioning solution. The live stream starts from an on-premises video camera or a live production feed, passes through the captioning solution, and is then sent to the cloud for transcoding and distribution. You must provide your own captioning solution when choosing this option.

Integration point 2: At this point, the live stream is already in the cloud and needs captions added. The common solution is to embed 608 captions into the live stream before MediaLive transcoding. The captioning service ingests your live stream, transcribes and translates captions, generates audio dubbing, and embeds the captions and dubbed audio into the live stream, then forwards it to MediaLive for processing. The available options are CEA-608 embedding, DVB Subtitle, and DVB Teletext.

608 embedded captions are limited to Latin-based languages such as English, Spanish, French, Dutch, German, Portuguese, and Italian. The total number of caption tracks is limited to CC1, CC2, CC3, and CC4.
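The two 608 constraints above (a fixed language set and at most four caption tracks) can be checked before committing to an embedding workflow. The following is a minimal sketch, assuming the language list and the CC1 to CC4 limit exactly as stated in this post; the function name `plan_608_channels` is hypothetical and not part of any AWS or partner API.

```python
# Languages this post lists as supported by 608 embedded captions.
CEA608_LANGUAGES = {
    "English", "Spanish", "French", "Dutch", "German", "Portuguese", "Italian",
}
# The post limits 608 caption tracks to these four channels.
CEA608_CHANNELS = ["CC1", "CC2", "CC3", "CC4"]


def plan_608_channels(target_languages):
    """Map each target language to a 608 caption channel.

    Raises ValueError when the request cannot be carried by 608 embedding,
    either because a language is outside the supported set or because more
    than four tracks are requested.
    """
    unsupported = [lang for lang in target_languages if lang not in CEA608_LANGUAGES]
    if unsupported:
        raise ValueError(f"Not supported by 608: {', '.join(unsupported)}")
    if len(target_languages) > len(CEA608_CHANNELS):
        raise ValueError("608 embedding carries at most four caption tracks")
    return dict(zip(CEA608_CHANNELS, target_languages))


if __name__ == "__main__":
    print(plan_608_channels(["English", "Spanish", "French"]))
```

If the function raises, the request is a candidate for integration point 3 rather than 608 embedding.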

Embedded DVB subtitles and DVB teletext aren’t readily available, often come with limited language support, and the downstream encoder might also have limited support for DVB subtitle and DVB teletext depending on the use case.

Embedding has several advantages: it adds little latency, it causes the least interruption to the video processing and distribution pipeline, and it works with software as a service (SaaS) based captioning services or with solutions deployed into your virtual private cloud (VPC).

Integration point 3: A major integration task here is to append additional subtitle and audio dubbing tracks into the existing main manifest and perform time alignment with the main video. When properly done, the main video playlist has caption or subtitle tracks and audio dubbing tracks natively integrated with the original video and audio. This method avoids downstream changes and provides seamless integration with the origin server, CDN, and client player.

The advantage of integration point 3 is that there are no limitations on language support. This solution can use SaaS captioning services and uses HLS WebVTT to integrate subtitles with no limitation on the total number of languages.

The disadvantage is the processing latency added by the SaaS service to transcribe, translate, and audio dub, and to align the subtitle and audio dubbing tracks with the original video.
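To make the time alignment step more concrete, the sketch below builds one HLS WebVTT subtitle segment whose `X-TIMESTAMP-MAP` header ties local cue times to the video's 90 kHz MPEG-TS presentation timestamps, which is the mechanism the HLS specification defines for this purpose. It is an illustration only, not a description of how any particular SaaS service performs alignment; the function names and the sample timestamp are hypothetical.

```python
def format_vtt_time(seconds):
    """Format a time in seconds as a WebVTT timestamp (HH:MM:SS.mmm)."""
    ms_total = round(seconds * 1000)
    hours, rem = divmod(ms_total, 3_600_000)
    minutes, rem = divmod(rem, 60_000)
    secs, ms = divmod(rem, 1000)
    return f"{hours:02d}:{minutes:02d}:{secs:02d}.{ms:03d}"


def build_webvtt_segment(cues, video_pts_at_zero):
    """Build the text of one WebVTT segment for HLS.

    cues: list of (start_seconds, end_seconds, text) in local cue time.
    video_pts_at_zero: 90 kHz MPEG-TS timestamp of the video frame that
        corresponds to local cue time 00:00:00.000 (hypothetical input,
        obtained from the transcoded video segments).
    """
    lines = [
        "WEBVTT",
        f"X-TIMESTAMP-MAP=LOCAL:00:00:00.000,MPEGTS:{video_pts_at_zero}",
        "",
    ]
    for start, end, text in cues:
        lines.append(f"{format_vtt_time(start)} --> {format_vtt_time(end)}")
        lines.append(text)
        lines.append("")
    return "\n".join(lines)


if __name__ == "__main__":
    print(build_webvtt_segment([(1.0, 3.5, "Welcome to the broadcast.")], 900000))
```

The player uses the header to place each cue on the video timeline, so subtitle segments produced later than the video can still display in sync.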

Integration point 4: This integration point occurs on the client side. The main video and subtitle and audio dubbing are delivered through separate paths and origins. The timing synchronization occurs at the client side.

The advantage is that there are no changes to the original video pipeline. The disadvantage is that it requires changing the video player to include additional code to process subtitles and audio dubbing at the client side.

Integration comparison

| Integration point | 1 | 2 | 3 | 4 |
| --- | --- | --- | --- | --- |
| Cost | High* | Low | Low | Low |
| Use SaaS | No | Yes | Yes | Yes |
| Low latency | Yes | Yes | No | Depends* |
| Client agnostic | Yes | Yes | Yes | No |
| Any language | No | No | Yes | Yes |

High*: On-premises caption solutions require a captioning hardware encoder and are optionally paired with a SaaS solution to provide captioning embedding. They most likely cost more than SaaS-based solutions.

Depends*: The latency depends on two factors. First, the transcription and translation processing delay differs by language, so not all languages are delivered with the same delay. For example, for an English-language live stream, English captions need only transcription, whereas Japanese subtitles also need translation from English to Japanese, which adds delay. Second, it depends on whether you need to deliver a synchronized multi-language viewing experience. For example, if you choose to deliver each language independently, the delay can vary from one language to the next.
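The effect of the second factor can be illustrated with a small calculation. This sketch assumes that a synchronized experience holds every language back to the delay of the slowest one, which is an interpretation rather than something stated by any service; the delay figures are hypothetical placeholders, not measurements.

```python
def delivery_delays(per_language_delay_s, synchronized):
    """Return the effective delay, in seconds, for each language.

    per_language_delay_s: {language: processing delay in seconds}.
    synchronized: when True, every language waits for the slowest one
        (an assumption made for this illustration).
    """
    if synchronized:
        slowest = max(per_language_delay_s.values())
        return {lang: slowest for lang in per_language_delay_s}
    return dict(per_language_delay_s)


if __name__ == "__main__":
    # Hypothetical delays: transcription only vs. transcription plus translation.
    delays = {"English": 3.0, "Japanese": 5.0}
    print(delivery_delays(delays, synchronized=False))
    print(delivery_delays(delays, synchronized=True))
```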

Integration architecture recommendation

Integration points 2 and 3 are recommended because they allow ease of integration, ease of deployment with minimum integration touch points, and a converged video delivery path for caption, subtitle, and audio dubbing along with the main video.

- For low latency needs where 608 embedding technology works for your targeted languages, use integration point 2.

- For situations where DVB Subtitle and DVB Teletext work for your workflow and the transcoder can support your desired workflow, use integration point 2.

- For multiple language subtitle support where embedded 608 isn’t an option, use integration point 3.

Integration point 4 provides a solution when you can’t modify your main video distribution pipeline. This applies when you can’t change your video processing pipeline or lack the development resources to make changes to your application.

Integration point 1 depends on the customer’s choice. If you already have an existing captioning solution but need additional subtitle languages not supported by embedded captions, or want to expand caption or subtitle solutions to additional services, we recommend using a SaaS service where the cost is significantly lower compared to hardware-based solutions.
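The recommendations for integration points 2, 3, and 4 can be summarized as a small decision helper. This is a simplified sketch under stated assumptions: it covers only the 608 path (not DVB Subtitle or DVB Teletext), it uses the 608 language list and four-track limit given earlier in this post, and the function name and parameters are hypothetical.

```python
# Languages this post lists as supported by 608 embedded captions.
CEA608_LANGUAGES = {
    "English", "Spanish", "French", "Dutch", "German", "Portuguese", "Italian",
}
MAX_608_TRACKS = 4  # CC1 through CC4


def recommend_integration_point(languages, can_modify_pipeline=True):
    """Return the integration point (2, 3, or 4) suggested by the guidance above."""
    if not can_modify_pipeline:
        # The video pipeline stays untouched; subtitles are bound in the client.
        return 4
    fits_608 = len(languages) <= MAX_608_TRACKS and set(languages) <= CEA608_LANGUAGES
    # 608 embedding gives low latency when it can carry every target language;
    # otherwise fall back to augmenting the manifest after transcoding.
    return 2 if fits_608 else 3


if __name__ == "__main__":
    print(recommend_integration_point(["English", "Spanish"]))
    print(recommend_integration_point(["English", "Japanese"]))
    print(recommend_integration_point(["English"], can_modify_pipeline=False))
```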

Implementation case studies

Let’s examine a live captioning and dubbing service from SyncWords to see how it helps integrate localization features into live streaming using AWS Media Services. SyncWords specializes in building the integration pipeline and removes the heavy lifting to enable localization, so you can choose the most suitable services for specific tasks. For example, you can choose cloned voice to provide rich emotion, voice separation, and tone-matching dubbed audio, or Amazon Nova or other generative AI services for subtitle translation.

Low latency secure 608 embedding workflow

The first option uses 608 embedding to deliver up to four language captions simultaneously while taking advantage of the CC1, CC2, CC3, and CC4 caption channels. This option offers low latency caption insertion. MediaLive converts the embedded captions into WebVTT for HLS live streaming.
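As a rough illustration of the MediaLive side of this workflow, the sketch below builds the caption selector and caption description fragments that map embedded 608 channels to WebVTT outputs. The field names follow the MediaLive channel API as we understand it (`CaptionSelectors` under the input settings, `CaptionDescriptions` under the encoder settings); verify them against the current API reference before use. The helper name and the naming scheme for selectors are hypothetical, and a complete channel definition needs additional settings, such as referencing each caption description from an HLS output.

```python
def build_608_to_webvtt_settings(channel_languages):
    """Build MediaLive-style caption settings fragments.

    channel_languages: {608 channel number (1-4): (ISO 639-2 code, display name)}.
    Returns (caption_selectors, caption_descriptions) as lists of dicts.
    """
    selectors, descriptions = [], []
    for channel, (code, name) in sorted(channel_languages.items()):
        if channel not in (1, 2, 3, 4):
            raise ValueError("608 channel number must be 1, 2, 3, or 4")
        selector_name = f"cc{channel}-{code}"  # hypothetical naming scheme
        selectors.append({
            "Name": selector_name,
            "LanguageCode": code,
            "SelectorSettings": {
                "EmbeddedSourceSettings": {"Source608ChannelNumber": channel},
            },
        })
        descriptions.append({
            "Name": f"webvtt-{code}",
            "CaptionSelectorName": selector_name,
            "LanguageCode": code,
            "LanguageDescription": name,
            "DestinationSettings": {"WebvttDestinationSettings": {}},
        })
    return selectors, descriptions


if __name__ == "__main__":
    sels, descs = build_608_to_webvtt_settings(
        {1: ("eng", "English"), 2: ("spa", "Spanish")}
    )
    print(sels)
    print(descs)
```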

Figure 2: SyncWords – SRT-based workflow

The SRT-based workflow has the following limitations:

- Limited character set: CEA-608 supports only the basic Latin character set, plus a few extended characters and special symbols

- Restricted language options: Because of these character constraints, 608 captions support only English, Spanish, French, Portuguese, Italian, German, and Dutch

- Supports up to four simultaneous languages

The SRT-based workflow benefits from widespread support in current broadcast and live streaming systems. 608 is widely supported, and the embedded captions can use the SRT protocol for secure transport, enabling flexible transport of live streaming production, contribution, and distribution.

DVB Subtitle and DVB Teletext support in SRT workflow

SyncWords extended their output subtitle support with DVB Subtitle, DVB Teletext, and DVB TTML options. With DVB Subtitle and DVB Teletext, customers can use MediaLive to process DVB Subtitle for pass-through or burn-in applications, and use DVB Teletext to convert to WebVTT for OTT streaming.

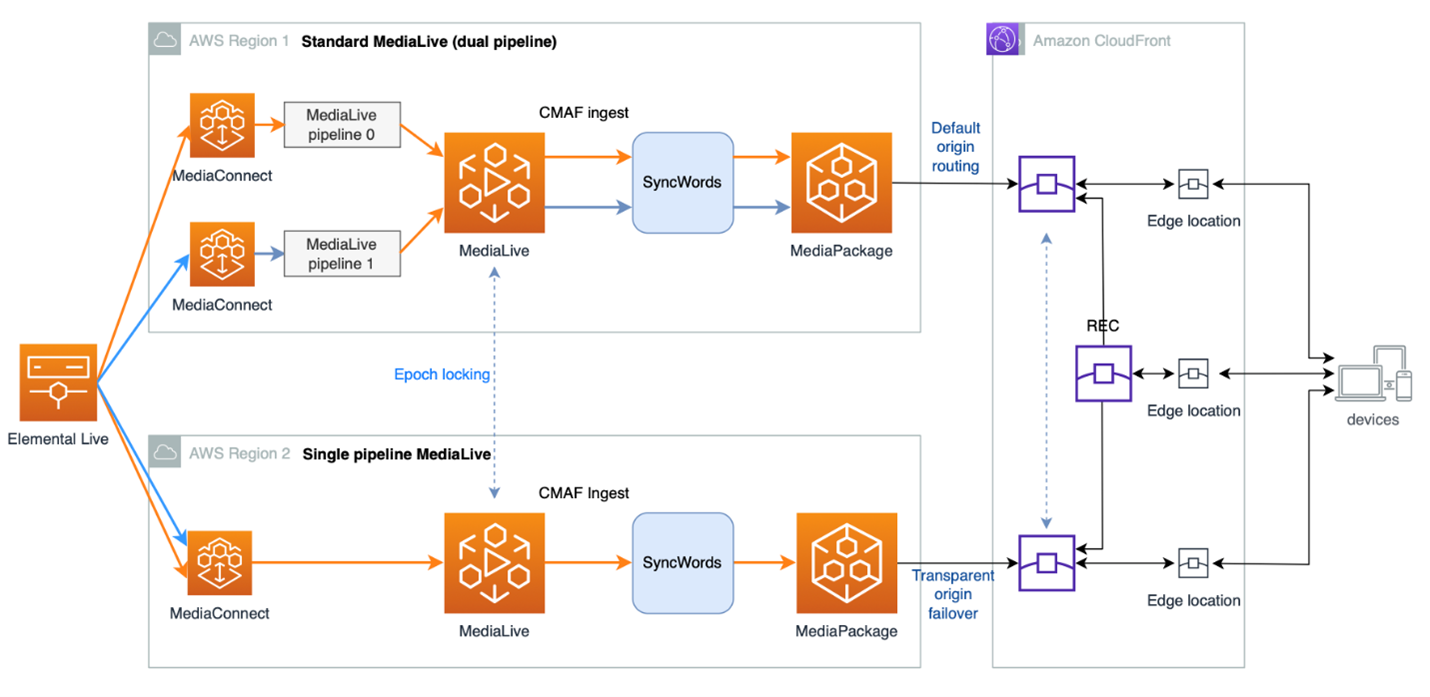

Late binding localization workflow

As demand grows for localized live streaming in more languages, another option for integrating subtitles is to add subtitle tracks after transcoding. For example, in HTTP Live Streaming (HLS), the encoder continuously writes the manifest file and media segments. The top manifest file updates only after at least 2–3 segments to ensure smooth playback and buffering on the client side. Here you can augment the playlist with multiple language subtitle tracks. Binding subtitles with transcoded media assets produces an integrated HLS streaming media asset. Compared to the SRT-based workflow, this is a “late binding” mechanism that combines subtitles and transcoded media assets after transcoding.
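The playlist augmentation step can be sketched in a few lines. The function below adds `EXT-X-MEDIA` subtitle renditions to an HLS multivariant playlist and links each video variant to them through the `SUBTITLES` attribute, as defined in the HLS specification. It is a minimal illustration that assumes a simple playlist layout, not the implementation used by SyncWords or any AWS service; the function name, group ID, and URIs are hypothetical.

```python
def add_subtitle_tracks(multivariant_playlist, subtitles, group_id="subs"):
    """Return the playlist text with subtitle renditions added.

    subtitles: list of (language_code, display_name, uri) tuples, where uri
        points to a WebVTT media playlist for that language.
    """
    media_lines = [
        f'#EXT-X-MEDIA:TYPE=SUBTITLES,GROUP-ID="{group_id}",NAME="{name}",'
        f'LANGUAGE="{code}",AUTOSELECT=YES,DEFAULT=NO,URI="{uri}"'
        for code, name, uri in subtitles
    ]
    out = []
    inserted = False
    for line in multivariant_playlist.splitlines():
        if line.startswith("#EXT-X-STREAM-INF:"):
            if not inserted:
                # Declare the renditions once, before the first variant.
                out.extend(media_lines)
                inserted = True
            if "SUBTITLES=" not in line:
                # Associate this variant with the subtitle group.
                line = f'{line},SUBTITLES="{group_id}"'
        out.append(line)
    return "\n".join(out) + "\n"


if __name__ == "__main__":
    playlist = (
        "#EXTM3U\n"
        "#EXT-X-VERSION:3\n"
        "#EXT-X-STREAM-INF:BANDWIDTH=2000000,RESOLUTION=1280x720\n"
        "video_720p.m3u8\n"
    )
    print(add_subtitle_tracks(playlist, [("ja", "Japanese", "subs_ja.m3u8")]))
```

Because the subtitles become part of the playlist itself, the origin, CDN, and player treat them like any other HLS rendition.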

The late binding workflow provides these benefits:

- Supports many languages with no real limitation on the total number of subtitle languages

- The combined media assets can be ingested by any standard origin service, such as MediaPackage

- Makes the distribution CDN-agnostic and player-safe

- Requires no changes on CDN and player to support multiple language subtitles

Figure 3: Late binding caption insertion workflow


We discussed two different implementation methods to enable live streaming localization and accessibility; these are production-ready, deployable solutions that many AWS customers deploy today. As the industry and technology evolve, there will be improvements to further simplify and streamline this workflow.

Conclusion

In this guide, we’ve presented comprehensive insights into how you can implement live streaming localization and accessibility using AWS Media Services and partner services. You learned about multiple integration approaches, with integration points 2 and 3 emerging as the recommended solutions for most use cases. You also learned why SaaS-based, currently deployable solutions matter, and how they build on established caption and subtitle standards such as CEA-608, DVB Subtitle, DVB Teletext, and WebVTT. While current solutions face some limitations, particularly the limited language support of CEA-608, emerging technologies and AI advancements promise to simplify implementation and expand language support capabilities.

Use this guide as a resource for organizations seeking to expand their global reach through localized streaming content for syndication, free-to-air, direct publishing, and multilingual live OTT streaming.