Amazon Web Services Blog

AI-driven Business Process Re-Engineering: The Day We Stopped Asking “What’s Your Problem?”

Find the “bottleneck” in operations, replace it with an AI agent, measure the results with KPIs, and report. It took us a while to realize that this perfectly rational approach was a breeding ground for failure.

In this post, we share the insights we gained over three months of developing AI BPR (AI-driven Business Process Re-Engineering), a program offered by AWS. AI BPR is a 4–5 hour program designed to restructure business models and operational processes around AI agents. Because AI agents drive the entire process, including facilitation, technical validation, and deliverable creation, it enables rapid and effective business transformation.

This post is a translation of the original Japanese article: AI 駆動の業務変革手法：「課題は何ですか?」と聞くのをやめた日 (AI-Driven Business Transformation: The Day We Stopped Asking “What’s Your Problem?”)

Why “Doing It Right” Doesn’t Change Organizations

AI BPR was born from a simple idea: could we apply AI-DLC (AI-Driven Development Life Cycle), a methodology for optimizing the entire software development lifecycle, to business operations? We initially launched it as a framework to accelerate the standard steps of business process optimization (designing target outcomes, analyzing workflows, identifying bottlenecks, implementing solutions with AI agents, measuring results, and compiling recommendations), all driven by AI.

| Step | Description | Duration |
|---|---|---|
| Step 1: Goal | Identify stakeholders, document adoption decision criteria | 30 min |
| Step 2: Focus | Visualize scope of focus areas, identify bottlenecks, set constraints | 30 min |
| Step 3: Solution | Generate and evaluate multiple options, create FAQ | 30 min |
| Step 4: Simulation | Create scenarios, validate AI agent effectiveness via Kiro Power | 30 min |
| Step 5: Challenge | Anticipate friction and plan resolution timelines | 30 min |

When we began delivering the program, participants told us that generative AI accelerated deliverable creation and let them focus on decision-making. We saw tangible results. But there were also reactions we couldn’t ignore:

- Delegating operations to AI agents is dangerous from a BCP standpoint. Our business cannot afford downtime.

- We ran AI BPR, but the solutions stayed within the range of what we’d already considered. It doesn’t feel like a significant leap from our previous analysis.

Both seem like legitimate concerns. Yet on business continuity, human-dependent processes also carry risks from illness or absence; if downtime is truly unacceptable, AI agents would arguably be the more rational choice. The second reaction is a fair assessment from practitioners who think about problems and solutions every day. What concerned us, however, was that they were evaluating generative AI’s proposals as critics rather than engaging with it as a co-creation partner.

The surface-level feedback varied, but we noticed a common underlying structure.

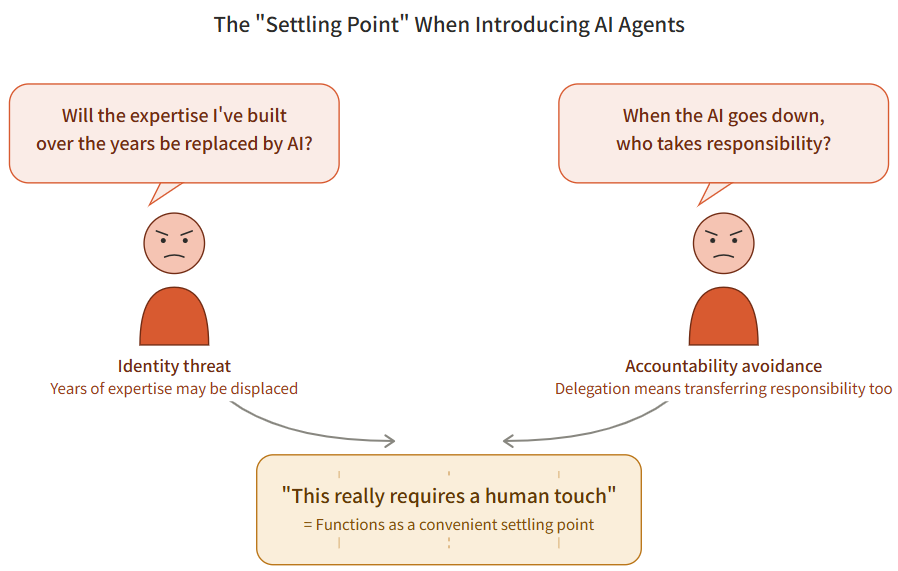

The first element is a visceral pushback rooted in deep expertise and experience with one’s own work. The suggestion that years of honed expertise might be replaced by AI is received not as a threat to capability, but as a threat to identity. This tendency is consistent with Stanford research (Shao et al., 2025): among those using generative AI in the workplace, 45% cite concerns about AI accuracy and reliability, while 23% express fear of job displacement. In our conversations with efficiency-focused teams across various companies, this anxiety surfaced regardless of industry.

The second element is the psychological desire to maintain trust in “people” as a way to stabilize interpersonal relationships and accountability structures within the organization. The notion that “so-and-so is the expert on this” reflects the organization’s division of responsibility. Delegating to AI agents isn’t merely about replacing tasks — it entails a transfer of accountability. In other words, when operations fail, the responsibility now extends more than ever to the IT function that oversees the AI agents.

When these two forces combine, phrases like “this really requires a human touch” or “we need people in charge for crisis situations” function as a convenient “settling point” — one that simultaneously honors experienced practitioners and avoids the transfer of accountability. There are genuinely many tasks that are difficult without human involvement, but this seemingly rational settling point tends to be chosen over the harder challenge of actually confronting that difficulty.

What these reactions made clear was that the current AI BPR could not address the real problem. The problem-solving process had simply gotten faster with AI, but the outcomes still landed at the “settling point” with no meaningful change. Speed has value, of course, but accelerating treatment while the diagnosis remains wrong will never lead to a cure.

Tear Down, Investigate, Rebuild

The first decision we made was to tear down the AI BPR framework. We chose to question the conventional problem-solving and efficiency optimization frameworks and commit to uncovering root causes and pursuing genuine solutions.

We weren’t anxious about this, because we had a strong intuition that the nagging sense that “the right approach isn’t working” could not possibly be unexplored territory. The failure of business transformation has been a recurring structural challenge since BPR was popularized in 1993. In an IBM survey of 1,532 transformation practitioners, only 41% reported that their projects fully achieved their objectives (IBM, 2008). A 2021 McKinsey survey of 1,034 respondents found transformation success rates hovering around 30% (De Smet et al., 2021). With success rates stuck at 30–40% for decades, we reasoned that an enormous body of root-cause analysis and proposed countermeasures must already exist.

After each AI BPR delivery, we deconstructed participant reactions across four layers: Surface (the words spoken), Experience (what happened during the AI BPR session), Psychology (prior expectations and biases), and Habit (personal experience and disposition). We analyzed which layer each piece of feedback originated from and cross-referenced it with precedents and research. The generative AI–powered analysis reports identifying specific improvements sometimes ran to tens of thousands of characters.
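To make the four-layer deconstruction concrete, here is a minimal Python sketch of how feedback items might be tagged and grouped by layer. The `Layer`, `Feedback`, and `group_by_layer` names are hypothetical and not part of AI BPR. In practice, assigning the layer is the hard, judgment-heavy part; this sketch simply assumes that a reviewer or a generative AI classifier has already made that call.

```python
from collections import defaultdict
from dataclasses import dataclass
from enum import Enum


class Layer(Enum):
    """The four layers used to deconstruct participant reactions."""
    SURFACE = "surface"        # the words spoken
    EXPERIENCE = "experience"  # what happened during the session
    PSYCHOLOGY = "psychology"  # prior expectations and biases
    HABIT = "habit"            # personal experience and disposition


@dataclass(frozen=True)
class Feedback:
    quote: str
    layer: Layer  # hypothetical: assigned beforehand by a reviewer or classifier


def group_by_layer(items: list[Feedback]) -> dict[Layer, list[str]]:
    """Group feedback quotes by the layer they were judged to originate from."""
    grouped: dict[Layer, list[str]] = defaultdict(list)
    for item in items:
        grouped[item.layer].append(item.quote)
    # Return every layer, including empty ones, so gaps stay visible.
    return {layer: grouped.get(layer, []) for layer in Layer}


if __name__ == "__main__":
    # Illustrative tagging only; real layer assignments require analysis.
    sample = [
        Feedback("Delegating operations to AI agents is dangerous from a BCP standpoint.", Layer.SURFACE),
        Feedback("Expected to receive answers rather than questions.", Layer.PSYCHOLOGY),
    ]
    for layer, quotes in group_by_layer(sample).items():
        print(layer.value, len(quotes))
```

Returning every layer, including those with no feedback attributed to them, makes it easy to see which layers have not yet been examined for a given delivery.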

The result: the reactions described in the first section turned out to be phenomena that existing theory predicts. Below, we describe how we investigated and rebuilt the program.

Theoretical Grounding for Our Observations

(This section is somewhat academic, with citations from the literature. If you’re primarily interested in “what we actually changed,” feel free to skip ahead.)

The “settling point” of “this really requires a human touch” described in the first section has a name in the literature: organizational defensive routines, as discussed by Argyris (1990, 1991). Defensive routines are the actions, policies, and practices unconsciously adopted when individuals encounter situations that could cause embarrassment or threat; in this case, the prospect of job displacement by generative AI or of expanded accountability from AI agent adoption. Argyris further noted that this avoidance operates as a refined skill, which he termed skilled incompetence. The problem is that, precisely because the avoidance is so skillfully executed, people become unaware that they are avoiding the problem at all. The desire for change on one hand and the desire to protect the current stable state on the other are opposing motivations that cancel each other out, resulting in stasis. This dynamic of settling into equilibrium at the “settling point” is further corroborated by Kegan & Lahey’s (2001, 2009) concept of Immunity to Change.

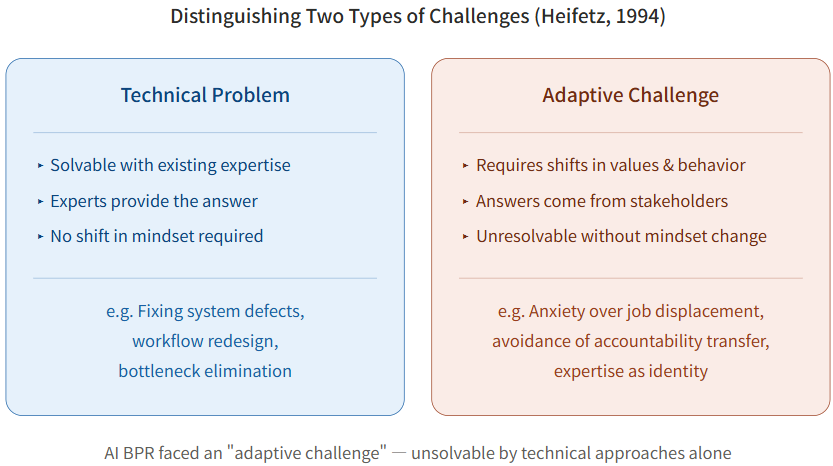

We also learned that breaking through the “settling point” in AI BPR would be difficult with the conventional “Solution”-proposal approach. In the step literally called “Solution,” generative AI “proposes and creates” while humans “review.” This approach is effective for technical problems, those solvable with established expertise and methodologies. However, challenges like anxiety over job displacement or avoidance of accountability transfer, where what is required is a transformation of organizational values and behavioral norms themselves, fall under what Heifetz calls adaptive challenges. Technical problems can be solved when an expert provides the answer, but adaptive challenges cannot be resolved unless the stakeholders themselves undergo a shift in perception. As Heifetz, Grashow, and Linsky (2009) put it:

“The most common cause of failure in leadership is produced by treating adaptive challenges as if they were technical problems.”

It was inevitable that AI BPR — built on an approach rooted in software development, a fundamentally technical discipline — would hit this wall.

Schein (1999), in his work on Process Consultation, classified problem-solving approaches into three models: 1) the “expert model,” which provides answers (prescriptions); 2) the “doctor-patient model,” which diagnoses and then prescribes; and 3) the “process consultation model,” which develops the client’s own problem-solving capacity. The third model is the best fit for adaptive challenges.

Our investigation of the theoretical literature yielded two key findings. First, the “settling point” is not a problem unique to specific individuals or companies — it is a structural defensive response widely observed across organizations. Second, to move beyond the settling point, the entire program needed to be redesigned from a format where participants “receive proposals” to one that elicits their own awareness and judgment. This is the fundamental difference from the similarly named “AI BPO” — simply outsourcing work to AI.

Rebuilding AI BPR

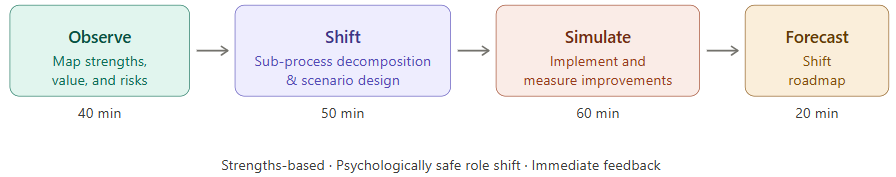

Following our analysis, AI BPR at the time of writing is structured as a four-step framework characterized by three principles:

- Strengths-based starting point: Rather than asking “What’s wrong with your current operations?”, we discover the customer value being delivered and the strengths recognized in the market and internally. The question becomes: “How can we amplify these strengths to further enhance customer value?”

- Psychologically safe role shift: For each element of the business process, participants assess — based on the presence or absence of strengths — whether to “delegate to an AI agent or elevate to excellence.” They actively decide “what to let go of and what to focus on.”

- Immediate feedback: Through interactive dialogue with AI, deliverables are completed during the session itself, with “zero take-home time.”
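The delegate-or-elevate judgment in the second principle can be pictured as a binary partition of sub-processes. The following Python sketch is illustrative only: `SubProcess`, `Choice`, `decide`, and `shift_plan` are hypothetical names, and the `is_strength` flag stands in for a judgment that participants make themselves in AI BPR rather than one computed by code.

```python
from dataclasses import dataclass
from enum import Enum


class Choice(Enum):
    DELEGATE = "delegate to an AI agent"
    ELEVATE = "elevate to excellence"


@dataclass(frozen=True)
class SubProcess:
    name: str
    is_strength: bool  # hypothetical flag: decided by participants, not by the AI


def decide(sub: SubProcess) -> Choice:
    """Binary rule sketched from the text: strengths are elevated, the rest delegated."""
    return Choice.ELEVATE if sub.is_strength else Choice.DELEGATE


def shift_plan(subs: list[SubProcess]) -> dict[Choice, list[str]]:
    """Examine sub-processes one by one and record each binary judgment."""
    plan: dict[Choice, list[str]] = {choice: [] for choice in Choice}
    for sub in subs:
        plan[decide(sub)].append(sub.name)
    return plan


if __name__ == "__main__":
    # Hypothetical sub-processes loosely modeled on the logistics episode below.
    plan = shift_plan([
        SubProcess("Gather and relay hub status information", is_strength=False),
        SubProcess("Keep delivery promises to customers", is_strength=True),
    ])
    for choice, names in plan.items():
        print(f"{choice.value}: {names}")
```

Forcing every sub-process into exactly one of two buckets mirrors the deliberate “binary choice” framing: there is no “keep as is” option to settle into.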

The four rebuilt steps are as follows:

| Step | Description | Duration |
|---|---|---|
| Step 1: Observe | Map strengths, value, and risks based on operational interviews and documentation | 40 min |
| Step 2: Shift | Decompose sub-processes and design transformation scenarios for operations and customer experience based on deliberate judgment | 50 min |
| Step 3: Simulate | Implement the Shift and measure evaluation improvements | 60 min |
| Step 4: Forecast | Develop a Shift roadmap based on the velocity of evaluation improvements | 20 min |

Getting started with AI BPR is simple. Each step is driven by prompts and a set of MCP (Model Context Protocol) servers, so you can begin by simply telling Kiro: “Start AI BPR.”

In the redesign, we again drew on prior research. As a concrete process consultation approach to adaptive challenges — which require shifts in mindset — we adopted Appreciative Inquiry, proposed by Cooperrider & Srivastva (1987). Rather than analyzing problems and fixing them, this methodology discovers an organization’s existing strengths and success experiences and amplifies them to drive transformation. Bushe (2007, 2013) argued that Appreciative Inquiry is particularly effective because of its generativity — the capacity for generative dialogue that produces new perspectives, vocabulary, and possibilities that participants did not previously hold, which in turn facilitates “adaptation.”

Unexpectedly, what helped us implement generative dialogue in AI BPR, in the form of prompt-driven dialogue with generative AI, was the well-known KonMari Method (Kondo, 2011). In the KonMari Method, the focus is not on what to discard but on what “sparks joy.” Reframing the question from “What should we eliminate?” (which triggers defensive routines) to “What sparks joy?” (a generative question rooted in success experiences) has prompted behavioral change around tidying up for people around the world. The subject of judgment is always the individual or the organization itself. Items are examined one by one, and the accumulation of judgments transforms self-perception. No other familiar success story was better suited to translating these principles into a concrete methodology.

That said, the Appreciative Inquiry approach adopted here is not a silver bullet, and it has its critics. Bushe (2007) in particular noted that this approach risks being trivialized into merely “looking at the positive side,” potentially suppressing structural organizational issues and relational dynamics. This parallels how Edmondson’s (1999) concept of psychological safety has long been misunderstood as simply “having a friendly team.” Affirmative intervention is easily misread as superficial “niceness,” causing it to lose its true transformative power. Bushe responded to this critique by redefining the core of Appreciative Inquiry as generativity (Bushe, 2013), and in 2015, together with Marshak, systematized it as Dialogic Organization Development (Bushe & Marshak, 2015). AI BPR’s design — centering on inquiry through AI and deliberately posing “binary choices” of delegate-or-elevate to elicit new perspectives — stands in this lineage of Dialogic OD.

Automating Hypothesis Validation for the “Rebuild”

There was no guarantee that the radical changes to AI BPR would succeed. At the same time, when delivering to actual customers, we needed to deliver impact in a single session. To reconcile disruptive change with consistent quality, we implemented AI agent–driven dry runs of AI BPR. We created scenarios based on the target customer’s business, participants, and themes — outlining anticipated personas and potential risks. Using these scenarios alongside the AI BPR prompts, we had AI agents execute dry runs and predict reactions at each stage. This is analogous to automated testing (CI/CD) in software development. By validating in advance whether the intended changes, experiences, and effects would materialize, we stabilized quality.
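Since the dry runs are compared to automated testing, a test-harness shape is one way to picture them. This is a minimal sketch under stated assumptions: `Scenario`, `Persona`, `dry_run`, and the injected `predict` callable are hypothetical, with `predict` standing in for an AI agent that runs the AI BPR prompts against a persona. The substring check is a deliberately crude placeholder for judging whether the intended effect appeared.

```python
from dataclasses import dataclass, field
from typing import Callable

STEPS = ["Observe", "Shift", "Simulate", "Forecast"]


@dataclass
class Persona:
    role: str
    risk: str  # anticipated risk, e.g. a defensive reaction


@dataclass
class Scenario:
    business: str
    theme: str
    personas: list[Persona]
    # Intended effect per step, checked like a test assertion.
    expected: dict[str, str] = field(default_factory=dict)


# Hypothetical agent interface: (step, scenario, persona) -> predicted reaction.
PredictFn = Callable[[str, Scenario, Persona], str]


def dry_run(scenario: Scenario, predict: PredictFn) -> list[str]:
    """Run every step for every persona; return failures (empty list means pass)."""
    failures: list[str] = []
    for step in STEPS:
        for persona in scenario.personas:
            reaction = predict(step, scenario, persona)
            want = scenario.expected.get(step)
            # Placeholder check; a real harness would need a richer judgment.
            if want and want not in reaction:
                failures.append(f"{step}/{persona.role}: expected '{want}'")
    return failures


if __name__ == "__main__":
    def stub_agent(step: str, scenario: Scenario, persona: Persona) -> str:
        # Deterministic stand-in for an AI agent, like a mock in a unit test.
        return "defensive" if step == "Shift" else "engaged"

    scenario = Scenario(
        business="logistics",
        theme="hub operations",
        personas=[Persona("branch manager", "fear of job displacement")],
        expected={"Observe": "engaged", "Shift": "engaged"},
    )
    print(dry_run(scenario, stub_agent))
```

Injecting `predict` as a parameter keeps the harness independent of any particular model or agent runtime, so a failing step can be traced back to a specific persona and risk before the real session.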

We ran a semi-automated cycle of pre-delivery simulation, deep four-layer analysis of actual delivery feedback, and theory-grounded improvement, and used this cycle to refine a framework we had rebuilt from scratch. From the initial implementation in February, the framework underwent more than 40 changes, large and small, over approximately two months to reach its current form, and the refinement continues.

The Results of No Longer Asking “What’s Your Problem?”

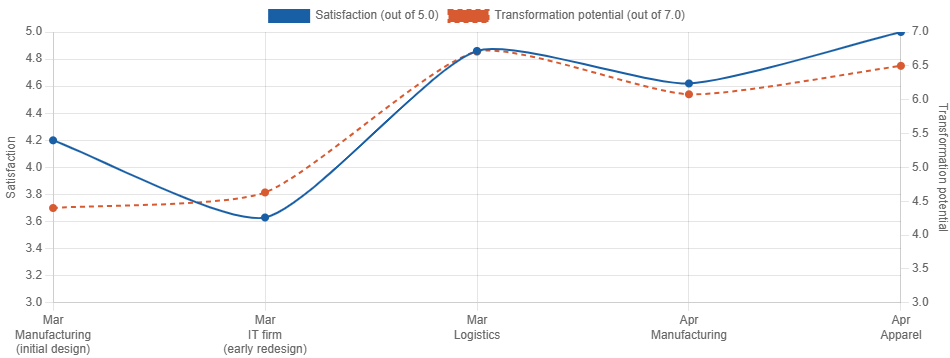

The results showed up in the numbers. Below is a table of satisfaction scores and perceived transformation potential from key deliveries.

| Client | Date | Satisfaction | Transformation Potential | Delivered by |
|---|---|---|---|---|
| Large manufacturer (initial design) | March | 4.2 / 5.0 | 4.40 / 7.0 | DevRel |
| IT company (early post-change) | March | 3.63 / 5.0 | 4.63 / 7.0 | DevRel |
| Major logistics company | March | 4.86 / 5.0 | 6.71 / 7.0 | DevRel |
| Large manufacturer | April | 4.62 / 5.0 | 6.08 / 7.0 | DevRel |
| Apparel company | April | 5.00 / 5.0 | 6.50 / 7.0 | Account team |

From the initial design through the rebuild, satisfaction dipped to 3.63 at one point, but the latest delivery achieved a perfect score of 5.00/5.0, and transformation potential rose from 4.63 to above 6.0 in every subsequent delivery. Notably, the latest result came from a delivery led not by the DevRel team that developed the program but by the account team responsible for the customer, an early indication that the AI BPR approach does not depend on particular individuals. Here are a few notable episodes from our deliveries.

Why a Logistics Executive Volunteered to “Replace” His Own Role

In our delivery to a major logistics company, we discovered an AI agent capable of substantially replacing the information gathering and dissemination tasks performed by branch managers at logistics hubs. An executive actively expressed willingness to drive the replacement. Two factors prevented this from triggering defensive reactions. First, the source of the company’s strength was identified as a deep commitment to “keeping promises to customers.” Second, the idea was not proposed by generative AI — it was a “generative” idea that participants arrived at themselves through dialogue and Simulate-based validation. The framing of “What should we focus on to keep our promises?” worked effectively. The feedback we received confirmed this: “If we had approached this from a problem-first perspective, we probably wouldn’t have embraced it this positively.”

Visualizing Business Processes in 40 Minutes — Earning Executive Praise at a Manufacturer

During a delivery to a major manufacturer, an executive commented that “AI is overwhelmingly better than human consultants for business analysis and visualization.” The time spent organizing business process flows in the Observe step was just 40 minutes. What typically takes hours to days was completed in a fraction of the time. Having experienced this speed and the simplicity of progressing through Q&A, participants spontaneously requested to launch a second team. The experience of immediately conveying their intentions and walking away with deliverables — “zero take-home time” — was the moment that prompted participants’ own behavioral change.

Perfect Scores Without the AI BPR Developer Present

The delivery to an apparel company was the first case led by the account team rather than the DevRel team that developed the program. The result was the highest satisfaction score to date, 5.00/5.0 (every participant gave a perfect score), with transformation potential reaching 6.50/7.0. Feedback included: “It felt like we accomplished three days’ worth of tasks in four hours today” and “The fact that you can drive process reform through a chat interface is remarkable.” A report to the CEO was scheduled for the same day. The core facilitation was embedded in the AI agent, allowing the human facilitator to focus solely on course corrections, and that made all the difference.

A Refinement Still in Progress: Beyond 40 Years of Research

Appreciative Inquiry, the theoretical core of AI BPR, has been supported by theory and has produced results for approximately 40 years. Yet two factors have been cited for why it never achieved widespread adoption: the difficulty of facilitation and clients’ instinctive demand for technical solutions.

- “Asking questions rather than giving answers” demands real-time responsiveness, unlike the expert model where “answers” can be prepared in advance. This requires high facilitation skill and is difficult to standardize.

- When organizations face adaptive challenges, they instinctively seek technical solutions. By demanding “Give us the answer” or “Show us the plan,” they externalize the psychological cost of decision-making.

AI BPR offers a clear answer to both challenges. By designing the inquiry “in advance” through prompts, it reduces dependence on the facilitator. And through generative AI–powered Simulate and implementation, it can deliver proof of technical feasibility and a plan (Forecast) within hours. As AI BPR becomes more refined, the empirical validation of the theory will accelerate. To get there, we need more experience and more feedback.

Co-Creating AI BPR

We have begun co-creation activities with AWS Partners who share our conviction in the AI BPR concept and its evolution. With Accel Universe Corporation, we are hosting workshops that integrate data-driven AI agents into business processes through AI BPR. In March, five customers participated, and a second workshop is planned for May.

Accel Universe Corporation is a partner with deep strengths in AWS and AI/ML, and we are collaborating on more advanced implementations of AI BPR. In the March session, a major change to AI BPR landed just days before the event — a testament to the kind of partnership that embraces rapid change as a given. This is fundamentally different from a traditional SI engagement of running standardized workshops and implementing fixed use cases. Our ultimate goal is for partners to lead end-to-end process optimization — from executive engagement through AI BPR proposals to enterprise-wide rollout.

Additionally, with Stockmark, we are developing a more time-efficient and impactful approach that combines Stockmark’s strengths in manufacturing-focused solutions and models with AI BPR. We have already delivered AI BPR to Stockmark itself, gathering feedback and jointly refining the methodology.

AWS positions this activity — delivering AI BPR alongside solution providers like Stockmark to refine both the methodology and the solutions — as the “Forward Deployment Support Program.”

Internally at AWS, we have conducted AI BPR training so that account teams can propose and deliver it when they see the need, with over 60 participants to date. For large-scale organizations, this requires designing company-wide KPIs and orchestrating across stakeholders in each division. In some cases, enterprise-wide governance of the agents being built is also necessary. To address these needs, we are working with the ProServe team to develop a dedicated service offering.

Amazon founder Jeff Bezos famously said that the key is to focus on “what won’t change in ten years.” As noted earlier, the success rate of business transformation has remained stuck at 30–40% for decades; it hasn’t changed. We aim for the moment when this “thing that hasn’t changed” finally does change, through the convergence of the theories that organizational transformation researchers have built over decades, generative AI, and partnerships with AWS Partners who embrace change and challenge.

If you’re interested in applying AI BPR to your own business transformation, or if you’re motivated to co-create with AWS, please reach out to your AWS account team. If you’d like to discuss the theories and knowledge behind AI BPR presented in this article, I’d be happy to hear from you directly.

References

- Argyris, C. (1990). Overcoming Organizational Defenses: Facilitating Organizational Learning. Allyn & Bacon.

- Argyris, C. (1991). Teaching smart people how to learn. Harvard Business Review, 69(3), 99–109. https://hbr.org/1991/05/teaching-smart-people-how-to-learn

- Bushe, G. R. (2007). Appreciative Inquiry is not (just) about the positive. OD Practitioner, 39(4), 30–35. https://www.gervasebushe.ca/AI_pos.pdf

- Bushe, G. R. (2013). Generative process, generative outcome: The transformational potential of Appreciative Inquiry. In D. L. Cooperrider, D. P. Zandee, L. N. Godwin, M. Avital, & B. Boland (Eds.), Organizational Generativity: The Appreciative Inquiry Summit and a Scholarship of Transformation (pp. 89–113). Emerald. https://gervasebushe.ca/AI_generativity.pdf

- Bushe, G. R., & Marshak, R. J. (Eds.). (2015). Dialogic Organization Development: The Theory and Practice of Transformational Change. Berrett-Koehler. https://www.bkconnection.com/books/title/dialogic-organization-development

- Cooperrider, D. L., & Srivastva, S. (1987). Appreciative Inquiry in organizational life. Research in Organizational Change and Development, 1, 129–169. https://www.researchgate.net/publication/265225217_Appreciative_Inquiry_in_Organizational_Life

- De Smet, A., Pacthod, D., Relyea, C., & Sternfels, B. (2021, December 7). Losing from day one: Why even successful transformations fall short. McKinsey & Company. https://www.mckinsey.com/capabilities/people-and-organizational-performance/our-insights/successful-transformations

- Edmondson, A. (1999). Psychological safety and learning behavior in work teams. Administrative Science Quarterly, 44(2), 350–383. https://doi.org/10.2307/2666999

- Heifetz, R. A., Grashow, A., & Linsky, M. (2009). The Practice of Adaptive Leadership. Harvard Business Review Press. https://www.hks.harvard.edu/publications/practice-adaptive-leadership-tools-and-tactics-changing-your-organization-and-world

- IBM Global Business Services. (2008). Making Change Work: Continuing the Enterprise of the Future Conversation. IBM Corporation. https://public.dhe.ibm.com/software/be/Making_Change_Work_eff.pdf

- Kegan, R., & Lahey, L. L. (2001). The real reason people won’t change. Harvard Business Review, 79(10), 84–92. https://hbr.org/2001/11/the-real-reason-people-wont-change

- Kegan, R., & Lahey, L. L. (2009). Immunity to Change: How to Overcome It and Unlock the Potential in Yourself and Your Organization. Harvard Business Press. https://store.hbr.org/product/immunity-to-change-how-to-overcome-it-and-unlock-the-potential-in-yourself-and-your-organization/1736

- Kondo, M. (2011). The Life-Changing Magic of Tidying Up (English ed., 2014). Ten Speed Press. https://www.penguinrandomhouse.com/books/240981/the-life-changing-magic-of-tidying-up-by-marie-kondo/

- Schein, E. H. (1999). Process Consultation Revisited: Building the Helping Relationship. Addison-Wesley.

- Shao, Y., et al. (2025). Future of work with AI agents: Auditing automation and augmentation potential across the U.S. workforce. arXiv:2506.06576. Stanford SALT Lab. https://arxiv.org/abs/2506.06576