Pathway's BDH: a new post-transformer approach to enterprise AI, on AWS

Transformer models, the architecture underpinning modern LLMs, have revolutionized how we live, work, create, and compete. They’ve allowed startups to experiment and launch ideas with lean teams, and have been a game changer in B2B verticals such as coding, analysis, and content creation. For both founders and enterprises, they’ve unlocked productivity gains that would have been unimaginable a few years ago.

Transformer-based AI has evolved through brute-force scaling: bigger models, more data, and more compute have improved LLMs. If this is the case, then why is there a 95 percent failure rate for enterprise AI projects, according to an MIT study? The answer lies in the architecture of transformer models. They begin every task in the same state and, with no memory, they’re stuck in a Groundhog Day loop, unable to learn from the past. This technical limitation has real business implications: the very infrastructure that enabled growth and empowered organizations is now a roadblock to further progress.

A radically different approach to models

As AI has evolved, the stakes have risen. Enterprises are now looking to apply AI to proprietary internal use cases and workflows, requiring models that can learn and adapt behavior over time, support long-horizon reasoning, and produce observable, auditable inferences. Transformer models used to mean business transformation, but now, a radically different approach is needed.

Pathway, an AWS frontier model partner, is pioneering this next generation of AI with Dragon Hatchling (BDH). Developed on Amazon SageMaker and optimized for AWS infrastructure, BDH is a post-transformer frontier model that powers continuous learning, infinite context, and real-time adaptation. It solves the core challenge for enterprise AI adoption, memory and learning on the fly, allowing organizations to unlock critical new functionalities and capitalize on personalized enterprise intelligence.

The limitations of transformers in enterprise

Transformer models remain the default choice for many tasks and have fundamentally changed business operations. However, after nearly a decade of existence, the transformer is starting to reach its practical and mathematical limits in enterprise.

- Transformers were not designed to achieve continuous learning. They are trained once and then fixed: they cannot learn new skills or become smarter over time, and can only accumulate text in an external database.

- Transformers are incredibly resource-intensive. They use massive amounts of computing power because the cost of how they process information grows very quickly as data increases, they need very fast memory to run, and they must constantly adjust and account for a model's parameters.

- Transformers face architectural constraints in delivering what's required for true enterprise-native AI. Enterprises contain specialized knowledge and processes that need to be internalized and learned. They also require governance and observability. Today’s transformer-based models act as black boxes with opaque reasoning, fall back on pre-trained weights, and lack mechanisms to model ‘what happens next.’
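To make the resource point concrete: in standard self-attention, every token is compared with every other token, so a sequence of n tokens produces an n × n score matrix. The sketch below is a back-of-the-envelope illustration of that growth for a single attention head in a single layer. It is not a measurement of any particular model, and the function name `attention_score_entries` is our own.

```python
def attention_score_entries(num_tokens: int) -> int:
    """Number of pairwise scores in one attention matrix (one head, one layer)."""
    return num_tokens * num_tokens


# Ten times more tokens means one hundred times more pairwise scores.
for n in (1_000, 10_000, 100_000):
    print(f"{n:>7,} tokens -> {attention_score_entries(n):>15,} pairwise scores")
```

Doubling the input quadruples the number of scores, which is why long contexts become expensive so quickly.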

What this boils down to in reality

The technological limitations of transformers translate directly into real business implications, creating additional costs and complexities for enterprises and startups. Rather than serving as a tool for empowerment, transformers have become a source of bottlenecks, forcing teams to spend more time managing workarounds than driving value.

- Extra work is needed to fit the model to how a business actually works. Aligning off-the-shelf models to how an organization operates often involves managing extra workloads or fixes, such as building custom LLMs, re-sizing models, and using agentic workflows to build in processes.

- Adapting or contextualizing specialized knowledge requires teams to manage extensive fine-tuning and/or complex deployments of agentic workflows.

- Differentiation becomes a challenge. Getting unique outputs means teams either have to customize models on their own, knowing those models can lose relevance and accuracy over time, or use the same off-the-shelf models as their competitors.

- Cost forces tricky trade-offs. Expensive training and inference force teams to pick and choose where AI is deployed, missing out on potential opportunities.

BDH: hatching a new paradigm of enterprise intelligence

We’ve seen the evolution of transformer models, but for enterprises looking to differentiate their offering and add value, this is no longer enough. Instead of providing an incremental improvement to the transformer stack, BDH marks a paradigm shift, ushering in a new wave of enterprise AI.

Unlike transformer-based models, which use fixed weights, BDH’s architecture is structured more like a network of neurons and synapses. Connections are continuously updated as new information arrives, allowing the model to retain and refine knowledge over time rather than resetting for each task. The result is a view of enterprise intelligence as memory-based, autonomous, and observable. This unlocks new capabilities for enterprises, including:

1. Continuous learning: BDH learns from every interaction and internalizes that learning over time for reasoning, durable expertise, and increasingly autonomous problem-solving.

Why it matters

- BDH doesn’t just ingest and regurgitate more information. It develops intelligence and judgment over time.

- Domain expertise accumulates, creating a compounding advantage through personalization.

- Establishes enterprise stickiness that can’t be replicated via data or training. AI becomes irreplaceable as it learns your business.

- Reasons across long time horizons.

- Learns from scarce, specialized data (vs. transformers' data hunger).
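As a rough intuition for connections that are "continuously updated as new information arrives," the toy sketch below applies a textbook Hebbian-style rule: a connection strengthens when the neurons on both ends are active together, and slowly decays otherwise. This is an illustration under our own assumptions, not Pathway's implementation. The function name `hebbian_update` and the `learning_rate` and `decay` values are hypothetical.

```python
def hebbian_update(weights, pre, post, learning_rate=0.1, decay=0.01):
    """Return updated weights: w[i][j] grows when pre[i] and post[j] fire together.

    Illustrative only: a textbook Hebbian rule with decay, not BDH's actual update.
    """
    return [
        [
            (1.0 - decay) * w_ij + learning_rate * pre_i * post_j
            for w_ij, post_j in zip(row, post)
        ]
        for row, pre_i in zip(weights, pre)
    ]


# Two input neurons and two output neurons; every connection starts at zero.
weights = [[0.0, 0.0], [0.0, 0.0]]

# The same pattern arrives three times: input 0 and output 1 are active together.
for _ in range(3):
    weights = hebbian_update(weights, pre=[1.0, 0.0], post=[0.0, 1.0])

print(weights)  # only the connection from input 0 to output 1 has grown
```

The point of the sketch is that the state carried between interactions lives in the connections themselves, so repeated experience leaves a lasting trace instead of being discarded after each task.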

2. Efficiency: BDH works like a brain on GPU with efficiency built in, whether the model is in training or in inference mode. Intelligence starts from local interactions that strengthen or weaken over time and engage when needed, not all at once. Only 5 percent of neurons fire per token versus transformers firing the entire model at once.

Furthermore, BDH is a truly native reasoning model. By creating a larger internal reasoning space called the latent reasoning space, it has intrinsic memory mechanisms that support learning and adaptation during use. BDH keeps what transformers are great at, specifically language understanding and generation, while adding the ability to solve non-language problems that stump standard LLMs. For example, BDH reaches 97.4 percent accuracy on Extreme Sudoku benchmarks, a collection of roughly 250,000 of the toughest Sudoku puzzles available, while leading LLMs struggle to perform at all.

Why it matters

- 10x improvement in efficiency, across training efforts and inference tokens.

- Reduced energy and power usage.

- Faster time to market, without massive upfront training (thin data sets, learning over time).

- A single architecture that excels at both language and non-language tasks (e.g., games), indicative of strong decision-making potential.
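The claim that only about 5 percent of neurons fire per token describes sparse activation. The sketch below shows the general idea with simple top-k masking: keep the strongest 5 percent of activations and zero out the rest, so downstream work only touches the active units. Top-k masking is a generic technique we chose for illustration. The article does not say how BDH achieves its sparsity, and the name `sparsify` and its parameters are hypothetical.

```python
import random


def sparsify(activations, active_fraction=0.05):
    """Keep the largest `active_fraction` of activations and zero the rest."""
    k = max(1, round(len(activations) * active_fraction))
    threshold = sorted(activations, reverse=True)[k - 1]
    sparse, kept = [], 0
    for value in activations:
        # The `kept < k` guard keeps exactly k units even if values tie.
        if value >= threshold and kept < k:
            sparse.append(value)
            kept += 1
        else:
            sparse.append(0.0)
    return sparse


random.seed(0)
activations = [random.random() for _ in range(1000)]
sparse = sparsify(activations)
active = sum(1 for value in sparse if value != 0.0)
print(f"{active} of {len(sparse)} neurons active")
```

With 1,000 simulated neurons, 50 stay active and 950 can be skipped for that token.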

3. Enterprise grade: With BDH, enterprises can run AI that understands their business, continuously adapts, and is easy to monitor and explain, all without extra workarounds or fixes.

Why it matters

- AI is embedded with the context of an organization’s experience.

- There’s confidence in deploying systems that continue learning in production with auditability.

- AI accounts for time and can therefore handle process awareness, digital twinning, and next best action.

- Governance over how and why a model is changing over time.

- Available and clear mechanisms to pause, isolate, or roll back learning when needed.
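The last two points, governance over change and the ability to pause or roll back learning, are commonly implemented with checkpoints of learned state. The sketch below shows that pattern in miniature. It is a hypothetical illustration, not BDH's API: the class `CheckpointedLearner`, its methods, and the `refund_limit` example value are all our own.

```python
import copy


class CheckpointedLearner:
    """Hypothetical wrapper: snapshots learned state so updates can be paused or undone."""

    def __init__(self, state):
        self.state = state
        self.learning_enabled = True
        self._checkpoints = {}

    def checkpoint(self, label):
        # Deep copy so later learning cannot mutate the saved snapshot.
        self._checkpoints[label] = copy.deepcopy(self.state)

    def learn(self, key, value):
        # Updates are silently skipped while learning is paused.
        if self.learning_enabled:
            self.state[key] = value

    def pause(self):
        self.learning_enabled = False

    def resume(self):
        self.learning_enabled = True

    def rollback(self, label):
        self.state = copy.deepcopy(self._checkpoints[label])


learner = CheckpointedLearner({"refund_limit": 100})
learner.checkpoint("before-update")
learner.learn("refund_limit", 500)   # applied
learner.pause()
learner.learn("refund_limit", 900)   # ignored while paused
learner.rollback("before-update")    # restore the saved state
print(learner.state)
```

Labelled checkpoints also give auditors a fixed reference for comparing how the learned state has changed between two points in time.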

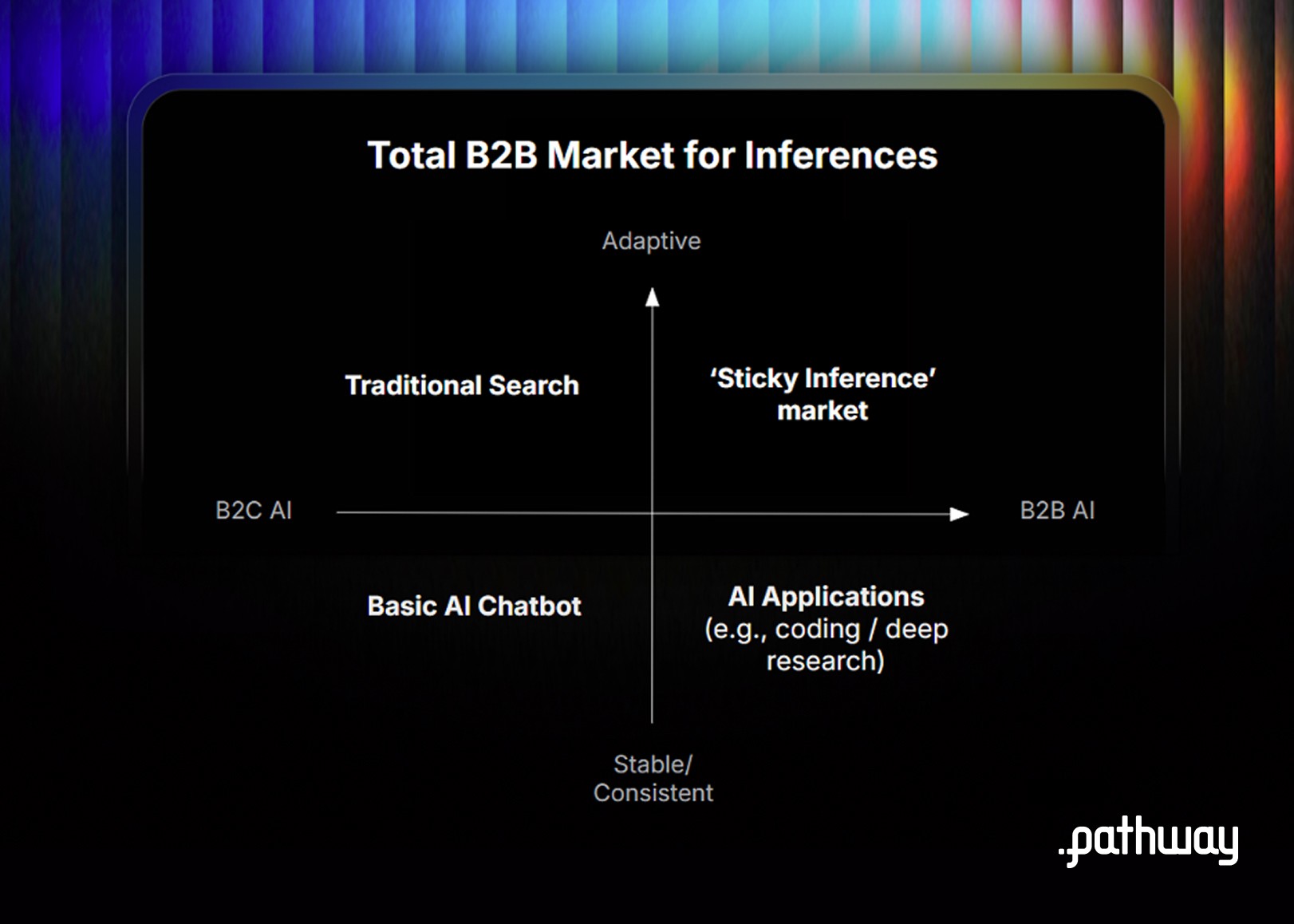

‘Sticky inference’ unlocks critical AI functionality

What’s your moat? When deploying off-the-shelf AI and one-time training no longer deliver competitive advantage, a different approach is needed. BDH is designed for ‘sticky inference’ use cases that create the ultimate enterprise moat. BDH learns continuously from proprietary data, building domain expertise over time and creating real competitive advantage.

Sticky inference use cases are deeply tied to an enterprise’s most valuable asset: its proprietary data. They demand constant access to the freshest, most immediate information to remain accurate and valuable, and they depend on the ability to reason observably over long durations.

So, where do these ‘sticky’ use cases show up? BDH serves sticky inference use cases across horizontals and verticals. Horizontally, we see these emerge in areas where new functionality is critical. Vertically, we see these across retail, insurance, tech, financial services, and healthcare, where data and context truly drive businesses.

Horizontal

BDH automates a lengthy quarter-end accounting process for a global, multi-discipline company by reasoning across regions and business departments over several months. Rather than treating financial close as an isolated event, BDH spans the full quarter, performing early forecasts, assessing mid‑cycle variances, analyzing exposures to guide hedging, coordinating reconciliations, and ending with a clean, auditable trial close. This long‑horizon intelligence improves accuracy and foresight over time.

Vertical: Agnostic

Horizontal: Management

Primary differentiator: Long-horizon reasoning

Retail

BDH ensures a retail company keeps sales on target based on dynamic conditions. By taking into account changing internal and external data, BDH suggests next best actions such as where to focus in the product personalization workflow, opportunities for dynamic pricing, or areas where tailored promotions make sense.

Vertical: Retail

Horizontal: Sales

Primary differentiator: Next best action

Insurance

BDH increases automation of pre-authorization and subsequent medical claim verification for an insurance services company, reasoning through complex, uncharted scenarios in real time. It reduces cost per claim by automating a higher percentage of claims, while improving accuracy and providing a built-in audit trail for each decision.

Vertical: Insurance

Horizontal: Operations

Primary differentiator: Interoperability

Big tech

BDH runs a live digital twin of each enterprise customer’s network for a telecom provider. By continuously ingesting telemetry, topology, and live customer data, BDH compares actual versus probable usage and recommends preemptive reconfiguration, maintenance, and service next best actions.

Vertical: High Tech

Horizontal: Service

Primary differentiator: Live digital twinning

Healthcare

BDH supports complex clinical research by continuously integrating patient histories to suggest more informed treatment options. Unlike traditional LLMs, which are not built to learn from a thin data set and struggle with rare or unique cases, BDH can learn from ‘examples of one’ and turn these into actionable insights.

Vertical: Healthcare

Horizontal: Research

Primary differentiator: Thin data sets

Financial Services

BDH runs hyper-personalized deal prospecting by learning a bank’s proprietary risk playbook and investment thesis. By continuously analyzing real-time market data, it generates new deal opportunities that match that playbook and thesis, and identifies opportunities that are more profitable than standard market options.

Vertical: Financial Services

Horizontal: Risk

Primary differentiator: Continuous learning

Developed on Amazon SageMaker HyperPod, optimized for AWS

AWS and Pathway are making this technology accessible today, bringing frontier AI innovation to the use cases where context, memory, and long-horizon reasoning matter most. BDH is developed on Amazon SageMaker HyperPod, which is designed for large-scale distributed training and helps teams provision and operate GPU clusters with managed workflows.

It’s also optimized for AWS infrastructure, meaning it operates efficiently and with maximum performance. BDH is accessible via API to existing AWS applications and workflows, and continuously improves based on the data that organizations already store on AWS.

Get in touch with Pathway (dragon@pathway.com) to begin exploring and implementing BDH. Customers can get started with reduced upfront investment through AWS-backed POC programs. You can also access Pathway’s research on arXiv and their open-source repositories on GitHub.
