AWS Partner Network (APN) Blog

Accelerate Machine Learning Initiatives Using DXC’s MLOps Quick Start on AWS

By Sebastian Kloeser, Offering Owner, MLOps – DXC Technology

By Robin Reuben, Head of MLOps CoE – DXC Technology

By Dhiraj Thakur, Solution Architect – AWS


Artificial intelligence (AI) is at an interesting point on its path to wide-scale adoption and broad value generation for everyday businesses and enterprises.

Until recently, many businesses engaging with AI were focused primarily on questions such as:

- Are there areas or use cases where we can create value from AI?

- What is the right model approach for our identified use cases?

- Which algorithm should we use?

- Which data do we need to collect?

All of these questions belong to the early “experimental” stage of an AI application’s lifecycle. It’s natural to think about them first, and resolving them can be time-consuming and complex. However, this should not distract from an important fact: these are all still scientific lab questions.

To use a manufacturing analogy: it’s one thing to craft an impressive prototype in the research and development (R&D) phase, but quite another to produce it in highly automated assembly lines in modern factories.

Unfortunately, with AI, many organizations have invested in an AI R&D lab and hoped it would deliver fully functional AI software developed in-house. But they forgot to build the factory and to introduce processes that allow a smooth transition from prototype to mass production and product aftercare.

As a result, only a small number of companies today manage to capture the true value of their machine learning (ML) proofs of concept, and most are still struggling to close the gap between experiment and production for their ML- and data-driven AI applications.

In this post, we describe what MLOps is, why organizations should care about it on their AI journey, and how DXC Technology and Amazon Web Services (AWS) can help you quickly integrate MLOps best practices into your daily business using the DXC MLOps Quick Start for AWS.

DXC is an AWS Premier Tier Services Partner and Managed Cloud Service Provider (MSP) that understands the complexities of migrating workloads to AWS in large-scale environments, and the skills needed for success.

MLOps and its Benefits

Many businesses are coming to realize that the key to getting ML projects into the profit zone is MLOps, short for machine learning operations.

Just as DevOps revolutionized the way software is developed and maintained, MLOps brings the same mindset to the data science and machine learning world. Like DevOps, MLOps is not just a tool or methodology but a combination of cultural practices, collaboration schemes, and end-to-end processes supported by a technological framework.

MLOps provides structure and automation to the lifecycle management of machine learning systems, and thus enables companies to safely and quickly develop, test, deploy, monitor, and operate ML models that are integrated into daily business.

It is organized to achieve five core values:

- Collaboration across involved business units.

- Quick time to market for new AI use cases.

- Transparency and auditability for machine learning.

- Robustness and reliability.

- Seamless scalability.

Figure 1 – Profit over time: naïve approach vs. MLOps.

Figure 2 below summarizes best practices for MLOps, but more important than the technical details is understanding their implications at the business level. Professionalizing the way ML models are developed, deployed, and operated has a significant impact on the “profit over time” curve depicted in Figure 1.

As you can see, proceeding in a naïve fashion without MLOps best practices and infrastructure will result in long deployment times and various operations incidents that will need to be addressed regularly and at great cost.

MLOps best practices, by contrast, streamline deployment and build in the handling of the operations incidents that are inherent to the ML lifecycle. This allows companies to reach the break-even point more quickly and raise the profitability of ML applications as a whole.

Figure 2 – MLOps best practices.

With faster deployment times and more stable operations, MLOps unleashes the business value of AI in a threefold fashion:

- The use case benefit is finally achieved, since MLOps enables operationalization.

- Costs for deployment and operations of ML models are drastically reduced, often by as much as 75%.

- Reliable AI applications lead to a culture that embraces AI, which drives innovation and the development of new products.

DXC’s Quick Start for MLOps on AWS

If MLOps is the key to sustainably harvesting the real benefits and power of AI, how do you get started?

Most organizations need assistance with this, and they require support at two levels: 1) a professional MLOps environment, and 2) an experienced services partner. To fulfill these needs, AWS and DXC Technology jointly developed a combined offering to provide the best technology and services for MLOps.

DXC is one of the largest global IT service providers with a dedicated center of excellence (CoE) for MLOps. It helps clients around the globe adopt MLOps methodology and technology, and offers services around advisory, implementation, and machine learning engineering as a service.

DXC’s services are based on a large repository of reusable assets, such as organizational and process blueprints, reference architectures, infrastructure as code (IaC) deployments, monitoring packages, standard dashboards, and explainable AI modules.

The jointly developed DXC MLOps Quick Start for AWS is a standardized yet customizable MLOps environment that is built from AWS-native services and can be deployed quickly as IaC.

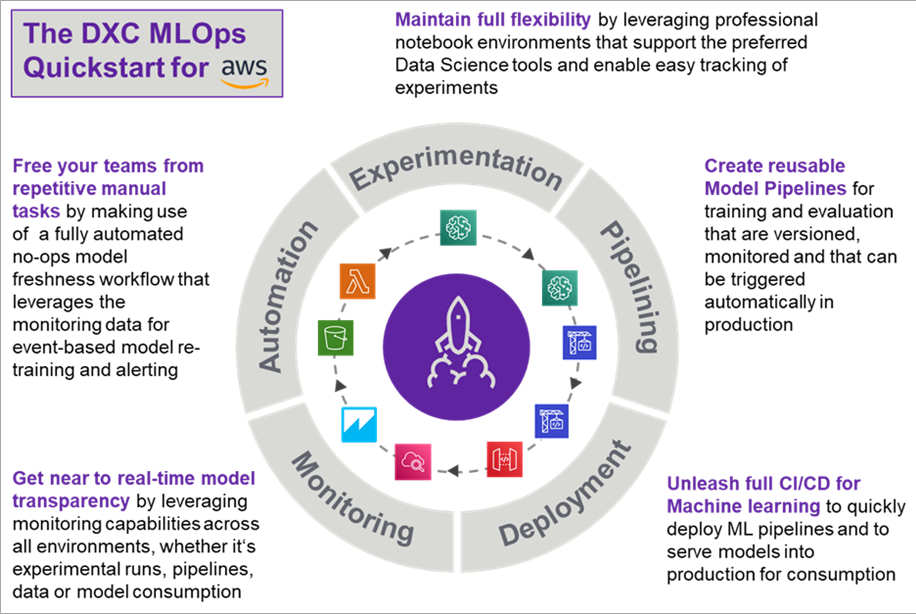

Figure 3 – Core functionality provided by the DXC MLOps Quick Start for AWS.

DXC MLOps Quick Start for AWS provides an enterprise-ready base environment for MLOps and enables the operationalization of ML use cases in a short amount of time. It was intentionally designed to be fully customizable to the specific needs, requirements, and constraints of any company, reflecting the complex requirements that MLOps environments face.

This solution consists of the following elements:

Feature Storage

With data being the fuel of AI applications, end-to-end AI development involves designing and implementing stable, monitored data pipelines. To keep the architecture modular and separate data from MLOps, feature stores serve as the interface between the two domains.

For large AI initiatives, feature stores offer a single source of data for machine learning development and ensure that developed features are reusable rather than embedded in the code of a particular use case.
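To illustrate the interface role of a feature store, here is a minimal sketch in Python. The `FeatureStore` class and its `register`, `ingest`, and `get_features` methods are hypothetical names chosen for this example, not the API of Amazon SageMaker Feature Store or any other product; a production store would add persistence, versioning, and point-in-time lookups.

```python
from dataclasses import dataclass, field
from typing import Any, Callable, Dict, List

Record = Dict[str, Any]


@dataclass
class FeatureStore:
    """Hypothetical in-memory feature store; not an AWS API."""

    # Feature name -> function that derives the feature from a raw record.
    definitions: Dict[str, Callable[[Record], Any]] = field(default_factory=dict)
    # Entity ID -> materialized feature values.
    rows: Dict[str, Record] = field(default_factory=dict)

    def register(self, name: str, fn: Callable[[Record], Any]) -> None:
        # Each feature is defined once, centrally, so every use case reuses it.
        if name in self.definitions:
            raise ValueError(f"feature {name!r} is already registered")
        self.definitions[name] = fn

    def ingest(self, entity_id: str, raw: Record) -> None:
        # The data pipeline writes raw records; the store computes all features.
        self.rows[entity_id] = {name: fn(raw) for name, fn in self.definitions.items()}

    def get_features(self, entity_id: str, names: List[str]) -> Record:
        # Training jobs and prediction services read through the same call.
        row = self.rows[entity_id]
        return {name: row[name] for name in names}


store = FeatureStore()
store.register("order_count", lambda r: len(r["orders"]))
store.register(
    "avg_order_value",
    lambda r: sum(r["orders"]) / len(r["orders"]) if r["orders"] else 0.0,
)
store.ingest("customer-1", {"orders": [20.0, 40.0]})
print(store.get_features("customer-1", ["order_count", "avg_order_value"]))
# {'order_count': 2, 'avg_order_value': 30.0}
```

Because training and serving both call `get_features`, a feature computed for one use case is available to every other use case without copying its logic.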

Flexible Experimentation

Although MLOps focuses on deployment and operations, data scientists need the flexibility to run experiments in their preferred environments and tool frameworks. To enable this while also ensuring that data scientists work in production-ready environments, DXC’s architecture uses containerized notebooks and integrated development environments (IDEs) that are versioned, shareable, and scalable.

Model Repositories

Reproducibility is critical to maintaining model quality in large solutions over time. Therefore, model training runs are orchestrated and fully logged, both in development and in production. This ensures that all aspects of a model are stored regardless of whether it is later used in production, and it enables proper model comparison over time and across various training and validation sets.
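The following sketch shows the core idea of fully logged runs: every training run is recorded with its parameters, metrics, a fingerprint of its training data, and the environment it ran in, so runs can be compared later. `ModelRepository`, `TrainingRun`, and the metric values in the usage lines are hypothetical placeholders, not outputs of a real training job or the interface of any specific model registry.

```python
import hashlib
import json
from dataclasses import dataclass, field
from typing import Any, Dict, List, Optional


@dataclass
class TrainingRun:
    run_id: int
    params: Dict[str, Any]
    metrics: Dict[str, float]
    data_fingerprint: str  # hash of the exact training data used
    environment: str  # for example "dev" or "prod"


@dataclass
class ModelRepository:
    """Hypothetical run log; every run is kept, whether promoted or not."""

    runs: List[TrainingRun] = field(default_factory=list)

    @staticmethod
    def fingerprint(data: Any) -> str:
        # Deterministic hash of JSON-serializable data, so runs on identical
        # data can be recognized and compared later.
        payload = json.dumps(data, sort_keys=True).encode("utf-8")
        return hashlib.sha256(payload).hexdigest()[:12]

    def log_run(self, params: Dict[str, Any], metrics: Dict[str, float],
                data: Any, environment: str) -> TrainingRun:
        run = TrainingRun(len(self.runs) + 1, dict(params), dict(metrics),
                          self.fingerprint(data), environment)
        self.runs.append(run)
        return run

    def best_run(self, metric: str,
                 data_fingerprint: Optional[str] = None) -> TrainingRun:
        # Compare runs on one metric, optionally restricted to the same data.
        candidates = [r for r in self.runs
                      if data_fingerprint is None
                      or r.data_fingerprint == data_fingerprint]
        return max(candidates, key=lambda r: r.metrics[metric])


repo = ModelRepository()
train_set = [[1.0, 0], [2.0, 1], [3.0, 1]]
repo.log_run({"max_depth": 3}, {"auc": 0.81}, train_set, "dev")
repo.log_run({"max_depth": 5}, {"auc": 0.84}, train_set, "dev")
best = repo.best_run("auc", repo.fingerprint(train_set))
print(best.run_id, best.params)  # 2 {'max_depth': 5}
```

Filtering by data fingerprint keeps comparisons fair: two runs are only ranked against each other when they were trained on identical data.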

ML Pipeline Development

Naïve model deployment focuses on directly pushing individual models into production. This approach ignores the fact that model deterioration is not a rarity but the norm.

Frequent model retraining should always be expected. Therefore, what needs to be pushed to production is not a model but a model training pipeline. These pipelines are triggered by specific conditions, such as the arrival of new labeled data, and automatically train, test, and compare new model versions when needed.
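A minimal sketch of such a trigger-based pipeline follows, assuming a toy one-feature threshold classifier in place of a real model and a hypothetical rule that retraining starts once at least `min_new_examples` new labeled examples have arrived. The pipeline trains a candidate, tests it on a holdout set, and promotes it only if it beats the current production model. All names here are illustrative.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple

Example = Tuple[float, int]  # (feature value, label)


@dataclass
class ThresholdModel:
    """Toy stand-in for a real model: predicts 1 when x >= threshold."""

    threshold: float

    def predict(self, x: float) -> int:
        return int(x >= self.threshold)


def accuracy(model: ThresholdModel, data: List[Example]) -> float:
    return sum(model.predict(x) == y for x, y in data) / len(data)


def train(data: List[Example]) -> ThresholdModel:
    # Try each observed feature value as a threshold; keep the most accurate.
    candidates = sorted({x for x, _ in data})
    best = max(candidates, key=lambda t: accuracy(ThresholdModel(t), data))
    return ThresholdModel(best)


class RetrainingPipeline:
    def __init__(self, min_new_examples: int = 4) -> None:
        self.min_new_examples = min_new_examples
        self.training_data: List[Example] = []
        self.pending: List[Example] = []
        self.production: Optional[ThresholdModel] = None

    def on_new_labeled_data(self, batch: List[Example],
                            holdout: List[Example]) -> bool:
        """Trigger handler. Returns True if a new version was promoted."""
        self.pending.extend(batch)
        if len(self.pending) < self.min_new_examples:
            return False  # not enough new labels yet
        self.training_data.extend(self.pending)
        self.pending.clear()
        candidate = train(self.training_data)  # train
        candidate_acc = accuracy(candidate, holdout)  # test
        production_acc = (accuracy(self.production, holdout)
                          if self.production is not None else -1.0)
        if candidate_acc > production_acc:  # compare
            self.production = candidate
            return True
        return False


pipeline = RetrainingPipeline(min_new_examples=4)
holdout = [(0.5, 0), (1.0, 0), (2.5, 1), (3.5, 1)]
print(pipeline.on_new_labeled_data([(0.2, 0), (3.0, 1)], holdout))  # False
print(pipeline.on_new_labeled_data([(0.8, 0), (2.0, 1)], holdout))  # True
print(pipeline.production)  # ThresholdModel(threshold=2.0)
```

The promotion rule uses a strict "better than production" comparison, so a retrained candidate that merely matches the current model does not replace it.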

CI/CD for ML Pipeline Deployment

To minimize the manual effort, model pipeline deployment itself is performed with the help of CI/CD pipelines.
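As a rough illustration, a CI/CD stage for pipeline deployment can be reduced to a gate that runs automated checks against a pipeline definition and deploys only when all of them pass. The two checks below (required steps, pinned dependencies), the `ci_deploy` function, and the dictionary format of the pipeline definition are hypothetical examples; real setups would typically also run unit tests and a smoke run of the pipeline.

```python
from typing import Callable, Dict, List, Tuple

PipelineDef = Dict[str, List[str]]
CheckResult = Tuple[bool, str]

REQUIRED_STEPS = ["train", "evaluate", "register"]  # hypothetical convention


def has_required_steps(pipeline: PipelineDef) -> CheckResult:
    missing = [s for s in REQUIRED_STEPS if s not in pipeline["steps"]]
    return (not missing, f"missing steps: {missing}" if missing else "ok")


def pins_dependencies(pipeline: PipelineDef) -> CheckResult:
    # Pinned versions keep pipeline runs reproducible across deployments.
    unpinned = [d for d in pipeline["dependencies"] if "==" not in d]
    return (not unpinned, f"unpinned: {unpinned}" if unpinned else "ok")


CHECKS: List[Callable[[PipelineDef], CheckResult]] = [
    has_required_steps,
    pins_dependencies,
]


def ci_deploy(pipeline: PipelineDef, deployed: List[PipelineDef]) -> List[str]:
    """Run every check; deploy only if all pass. Returns the failures."""
    failures = []
    for check in CHECKS:
        ok, message = check(pipeline)
        if not ok:
            failures.append(f"{check.__name__}: {message}")
    if not failures:
        deployed.append(pipeline)  # stand-in for the real deployment step
    return failures


deployed: List[PipelineDef] = []
good = {"steps": ["train", "evaluate", "register"],
        "dependencies": ["scikit-learn==1.4.2"]}
bad = {"steps": ["train"], "dependencies": ["pandas"]}
print(ci_deploy(good, deployed))  # []
print(ci_deploy(bad, deployed))
```

Collecting all failures instead of stopping at the first one gives the pipeline author the full list of problems in a single CI run.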

Model Serving

With automatically triggered training pipelines, new, fully logged model versions become available in the model repository. Moving them into consumable prediction services can be either automated or performed after manual approval.

This approach lets users design A/B tests by serving multiple model versions in production and comparing their real-life performance over time. DXC offers standard serving patterns for models deployed as microservices, for precomputed prediction results, and for models running at the edge.
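One common way to implement an A/B split is to hash a stable request or user identifier into a bucket, so each caller consistently reaches the same model version while traffic is divided by configurable weights. The sketch below assumes that approach; `ABRouter` and the two lambda "models" are hypothetical stand-ins, not one of DXC’s serving patterns.

```python
import hashlib
from collections import Counter
from typing import Callable, List, Tuple

Variant = Tuple[str, float, Callable[[float], float]]  # (name, weight, model)


class ABRouter:
    """Hypothetical router that splits traffic across model versions."""

    def __init__(self, variants: List[Variant]) -> None:
        if abs(sum(weight for _, weight, _ in variants) - 1.0) > 1e-9:
            raise ValueError("traffic weights must sum to 1")
        self.variants = variants

    @staticmethod
    def _bucket(request_id: str) -> float:
        # Map the ID to a stable value in [0, 1) so the same caller always
        # lands on the same version for the duration of the test.
        digest = hashlib.sha256(request_id.encode("utf-8")).digest()
        return int.from_bytes(digest[:8], "big") / 2**64

    def predict(self, request_id: str, x: float) -> Tuple[str, float]:
        u = self._bucket(request_id)
        cumulative = 0.0
        for name, weight, model in self.variants:
            cumulative += weight
            if u < cumulative:
                return name, model(x)
        name, _, model = self.variants[-1]  # guard against rounding at 1.0
        return name, model(x)


router = ABRouter([
    ("v1", 0.9, lambda x: 2 * x),      # current production version
    ("v2", 0.1, lambda x: 2 * x + 1),  # challenger version
])
counts = Counter(router.predict(f"user-{i}", 1.0)[0] for i in range(10_000))
print(counts)  # per-version request counts, close to the 90/10 weights
```

Logging the returned version name alongside each prediction and its eventual outcome is what makes the real-life comparison between versions possible.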

Model Monitoring

Operating ML models in production requires close monitoring of their performance. Which monitoring services need to be established depends on the use case and serving pattern.

In general, model monitoring involves at least two levels:

- Monitoring of the prediction service requires logging the status of the service, the number of requests, and the output of the model.

- Monitoring of the model performance, once new labeled data arrives, requires evaluating the current production model on the new data. Monitoring the involved data pipelines additionally validates data quality in real time.
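The two monitoring levels, plus the data-quality check, can be sketched as follows. `ModelMonitor`, `validate_rows`, the baseline accuracy, and the 5-percentage-point tolerance are hypothetical choices for illustration; a real deployment would feed these signals into a metrics and alerting service rather than returning dictionaries.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional, Tuple


@dataclass
class ModelMonitor:
    """Hypothetical monitor covering both monitoring levels."""

    baseline_accuracy: float
    tolerance: float = 0.05  # hypothetical allowed drop before alerting
    request_count: int = 0
    error_count: int = 0
    outputs: List[int] = field(default_factory=list)

    # Level 1: the prediction service (status, request volume, outputs).
    def log_request(self, output: Optional[int], ok: bool = True) -> None:
        self.request_count += 1
        if ok and output is not None:
            self.outputs.append(output)
        else:
            self.error_count += 1

    def service_status(self) -> Dict[str, float]:
        n = self.request_count
        return {
            "requests": n,
            "error_rate": self.error_count / n if n else 0.0,
            "positive_rate": (sum(self.outputs) / len(self.outputs)
                              if self.outputs else 0.0),
        }

    # Level 2: model performance, once labels for past predictions arrive.
    def evaluate(self, predictions: List[int], labels: List[int]) -> Dict[str, object]:
        acc = sum(p == y for p, y in zip(predictions, labels)) / len(labels)
        return {"accuracy": acc,
                "degraded": acc < self.baseline_accuracy - self.tolerance}


def validate_rows(rows: List[Dict[str, float]],
                  ranges: Dict[str, Tuple[float, float]]) -> List[str]:
    """Data-quality check for incoming pipeline data: presence and range."""
    issues = []
    for i, row in enumerate(rows):
        for column, (low, high) in ranges.items():
            value = row.get(column)
            if value is None:
                issues.append(f"row {i}: missing {column}")
            elif not low <= value <= high:
                issues.append(f"row {i}: {column}={value} out of range")
    return issues


monitor = ModelMonitor(baseline_accuracy=0.90)
for output in [1, 0, 1, 1]:
    monitor.log_request(output)
monitor.log_request(None, ok=False)  # a failed request
print(monitor.service_status())  # {'requests': 5, 'error_rate': 0.2, 'positive_rate': 0.75}
print(monitor.evaluate([1, 0, 1, 1], [1, 1, 0, 1]))  # {'accuracy': 0.5, 'degraded': True}
print(validate_rows([{"age": 34}, {"age": -3}, {}], {"age": (0, 120)}))
```

A `degraded` result is a natural condition for triggering the retraining pipeline, which connects monitoring back to the automated training loop.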

Conclusion

As AI increases competitive pressure, MLOps enables companies to unleash the potential of artificial intelligence in real-world applications. However, building a professional MLOps solution requires a complex set of interconnected capabilities.

The DXC MLOps Quick Start for AWS is a proven MLOps solution that is built from AWS-native services and enables users to scale seamlessly.

DXC offers standardized services to advise and coach people, change organizational structures, and implement and run professional MLOps platforms at scale.

The DXC CoE for MLOps ensures the highest level of quality across many global clients. Contact DXC to request a demo and a deeper conversation with experts about the right path towards successful machine learning.
