AWS Partner Network (APN) Blog

How to Build a Secure-by-Default Kubernetes Cluster with Basic CI/CD Pipeline on AWS


By Matthew Sheppard, Solution Principal at Slalom

By Donald Carnegie, Consultant at Slalom

As users and engineers of modern web applications, we expect them to be available 24 hours a day, seven days a week, and we expect to be able to deploy new versions of them many times a day.

Kubernetes is an open-source platform for automating the deployment, scaling, and operation of application containers. On its own, however, Kubernetes is not enough to achieve this goal. It ensures our containerized applications run when and where we want, and that they can find the tools and resources they require, but to fully empower our engineers we need to build a CI/CD pipeline around it.

At Slalom Consulting, an AWS Partner Network (APN) Premier Consulting Partner, we have not come across a tutorial that brings together all the pieces needed to set up a Kubernetes cluster on Amazon Web Services (AWS) that could be made production ready.

The documentation is mostly there, but it’s a treasure hunt to track it down and work out how to make it work in each particular situation. This makes it particularly challenging for anyone embarking on their first Kubernetes pilot, or making the step up from a local minikube cluster.

In this tutorial, we build a Kubernetes cluster with a good set of security defaults and then wrap a simple CI/CD pipeline around it. We then use Infrastructure-as-Code to specify how a simple containerized application should be deployed, and let our CI/CD pipeline and Kubernetes cluster deploy it as defined, automatically.
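The automatic deployment step can be as small as a guarded `kubectl apply`. The tutorial's pipeline uses Travis CI to apply configuration committed to the master branch; the Python sketch below shows one way such a step might decide whether to deploy, using Travis CI's built-in `TRAVIS_BRANCH` and `TRAVIS_PULL_REQUEST` environment variables. The `k8s` manifest directory and the script itself are hypothetical, not the tutorial's actual setup, and the sketch assumes kubectl is already installed and authenticated against the cluster earlier in the CI job.

```python
import os
import subprocess
import sys


def should_deploy(env):
    """Deploy only for pushes to master, never for pull-request builds.

    Travis CI sets TRAVIS_PULL_REQUEST to "false" for non-PR builds. For PR
    builds, TRAVIS_BRANCH holds the *target* branch (often master), so the
    pull-request check is needed as well as the branch check.
    """
    return (
        env.get("TRAVIS_BRANCH") == "master"
        and env.get("TRAVIS_PULL_REQUEST") == "false"
    )


def apply_command(manifest_dir):
    """kubectl invocation that applies every manifest under manifest_dir."""
    return ["kubectl", "apply", "--recursive", "-f", manifest_dir]


def main(manifest_dir="k8s"):
    # "k8s" is a hypothetical directory holding the committed manifests.
    if not should_deploy(os.environ):
        print("Not a push to master; skipping deploy.")
        return 0
    # Assumes kubectl and its cluster credentials were set up earlier in the job.
    return subprocess.call(apply_command(manifest_dir))


if __name__ == "__main__":
    manifest_dir = sys.argv[1] if len(sys.argv) > 1 else "k8s"
    status = main(manifest_dir)
    if status != 0:
        # Propagate kubectl's failure so the CI build is marked as failed.
        sys.exit(status)
```

In a Travis CI job this might be invoked as `python deploy.py k8s` (script name hypothetical); pull-request builds then finish without touching the cluster.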

Check out the full tutorial from Slalom >>

What’s in the Tutorial?

The aim of this tutorial is to close this gap and step through the setup of a Kubernetes cluster that:

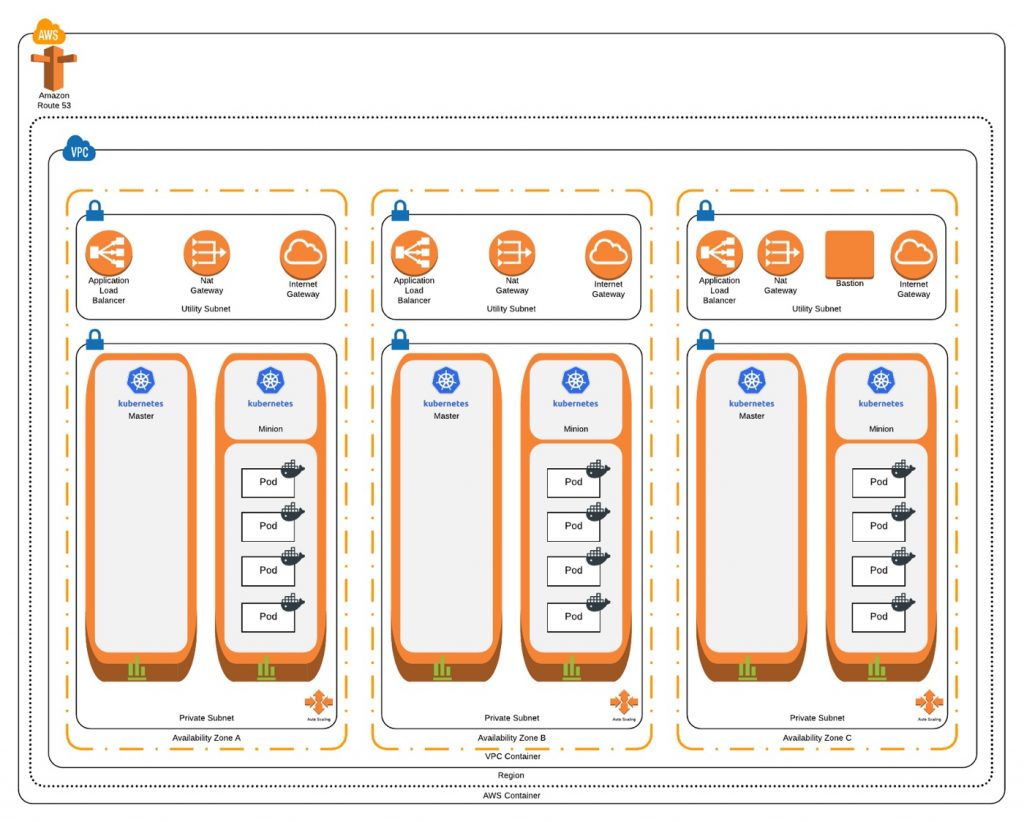

- Is highly available: We want to ensure our environments can deal with failure and that our containerized applications will carry on running if some of our nodes fail or an AWS Availability Zone (AZ) experiences an outage. To achieve this, we’ll run Kubernetes masters and nodes across three AZs.

- Enforces the “principle of least privilege”: By default, all pods should run in a restrictive security context; they should not be able to make changes to the Kubernetes cluster or the underlying AWS environment. Any pod that needs to make changes to the Kubernetes cluster should use a named service account with the appropriate role and policies attached. If a pod needs to make calls to the AWS API, the calls should be brokered to ensure the pod is authorized to make them, and the pod should use only temporary AWS Identity and Access Management (IAM) credentials.

  - We’ll achieve this by using Kubernetes Role-Based Access Control (RBAC) to ensure that, by default, pods run with no ability to change the cluster configuration. Where specific cluster services need permissions, we will create a dedicated service account, bind it to the required permission scope (either cluster-wide or a single namespace), and grant the required permissions to that service account.

  - Access to the AWS API will be brokered through kube2iam: all traffic from pods destined for the EC2 instance metadata API, where AWS SDKs look up credentials, will be redirected to kube2iam. Based on an annotation in each pod’s configuration, kube2iam will call the AWS API to retrieve temporary credentials for the role named in the annotation and return these to the caller. All other metadata API calls will be proxied through kube2iam, so the principle of least privilege is enforced and policy cannot be bypassed.

- Integrates with Amazon Route 53 and Classic Load Balancers: When we deploy an application, we want to declare in its configuration how it’s exposed to the world and where it can be found, and have this automated for us. Kubernetes will automatically provision a Classic Load Balancer for an application, and external-dns allows us to assign it a friendly Fully Qualified Domain Name (FQDN), all through Infrastructure-as-Code.

- Has a basic CI/CD pipeline wrapped around it: We want to automate the way we make changes to the cluster and the way we deploy or update applications. The configuration files specifying our cluster configuration will be committed to a Git repository, and the CI/CD pipeline will apply them to the cluster. To achieve this, we’ll use Travis CI to apply the configuration committed to our master branch to the Kubernetes cluster.
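To make the least-privilege defaults described above more concrete, here is a minimal Python sketch that builds the relevant Kubernetes objects as JSON, which `kubectl apply` accepts alongside YAML: a named service account, a namespace-scoped Role and RoleBinding, and a pod that runs under a restrictive security context and requests an IAM role through kube2iam's `iam.amazonaws.com/role` annotation. The namespace, object names, image, and IAM role name are hypothetical placeholders, and this is an illustration rather than the tutorial's own configuration.

```python
import json

# Hypothetical names; substitute your own namespace, account, and IAM role.
NAMESPACE = "demo"
SERVICE_ACCOUNT = "config-reader"
IAM_ROLE = "demo-s3-read-only"  # hypothetical IAM role assumed via kube2iam


def service_account(name, namespace):
    """A named service account for pods that need cluster permissions."""
    return {"apiVersion": "v1", "kind": "ServiceAccount",
            "metadata": {"name": name, "namespace": namespace}}


def scoped_role(name, namespace, resources, verbs):
    """A namespaced Role granting only the listed verbs on core-API resources."""
    return {"apiVersion": "rbac.authorization.k8s.io/v1", "kind": "Role",
            "metadata": {"name": name, "namespace": namespace},
            "rules": [{"apiGroups": [""], "resources": resources, "verbs": verbs}]}


def role_binding(name, namespace, role_name, sa_name):
    """Binds the Role to the service account within the same namespace."""
    return {"apiVersion": "rbac.authorization.k8s.io/v1", "kind": "RoleBinding",
            "metadata": {"name": name, "namespace": namespace},
            "subjects": [{"kind": "ServiceAccount", "name": sa_name,
                          "namespace": namespace}],
            "roleRef": {"apiGroup": "rbac.authorization.k8s.io",
                        "kind": "Role", "name": role_name}}


def restricted_pod(name, namespace, image, sa_name, iam_role):
    """A pod with a restrictive security context and a kube2iam role annotation."""
    return {
        "apiVersion": "v1", "kind": "Pod",
        "metadata": {
            "name": name, "namespace": namespace,
            # kube2iam reads this annotation to decide which IAM role's
            # temporary credentials this pod may receive.
            "annotations": {"iam.amazonaws.com/role": iam_role},
        },
        "spec": {
            "serviceAccountName": sa_name,
            "containers": [{
                "name": name, "image": image,
                # The image must run as a non-root user and must not write
                # to its root filesystem, or the container will fail to start.
                "securityContext": {"runAsNonRoot": True,
                                    "allowPrivilegeEscalation": False,
                                    "readOnlyRootFilesystem": True},
            }],
        },
    }


manifests = [
    service_account(SERVICE_ACCOUNT, NAMESPACE),
    scoped_role("configmap-reader", NAMESPACE, ["configmaps"], ["get", "list", "watch"]),
    role_binding("config-reader-binding", NAMESPACE, "configmap-reader", SERVICE_ACCOUNT),
    restricted_pod("demo-app", NAMESPACE, "example/demo-app:1.0", SERVICE_ACCOUNT, IAM_ROLE),
]

if __name__ == "__main__":
    # e.g. `python rbac.py | kubectl apply -f -` (script name hypothetical)
    print(json.dumps({"apiVersion": "v1", "kind": "List", "items": manifests}, indent=2))
```

Swapping the Role and RoleBinding for a ClusterRole and ClusterRoleBinding would widen the scope to the whole cluster; the namespaced variant shown here is the narrower default.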

At the end of the tutorial, we’ll end up with a Kubernetes cluster that looks like this:

Figure 1 – Slalom’s end-state Kubernetes cluster.
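The Route 53 and load balancer integration described above largely comes down to a single Service manifest. The Python sketch below, again emitting JSON for `kubectl apply`, assumes the cluster runs with the AWS cloud provider (so a Service of type `LoadBalancer` gets a Classic Load Balancer) and that external-dns is deployed with permission to manage the relevant hosted zone. The application name, hostname, and ports are hypothetical.

```python
import json

# Hypothetical application name and hostname; use a name in your own
# Route 53 hosted zone.
APP = "demo-app"
HOSTNAME = "demo.example.com"


def load_balanced_service(app, hostname, port=80, target_port=8080):
    """Service of type LoadBalancer with an external-dns hostname annotation.

    With the AWS cloud provider, Kubernetes provisions a Classic Load Balancer
    for this Service; external-dns then creates a Route 53 record for the
    annotated hostname pointing at that load balancer.
    """
    return {
        "apiVersion": "v1",
        "kind": "Service",
        "metadata": {
            "name": app,
            # Read by external-dns to decide which DNS record to manage.
            "annotations": {"external-dns.alpha.kubernetes.io/hostname": hostname},
        },
        "spec": {
            "type": "LoadBalancer",
            # Traffic is routed to pods carrying this label.
            "selector": {"app": app},
            "ports": [{"protocol": "TCP", "port": port, "targetPort": target_port}],
        },
    }


service = load_balanced_service(APP, HOSTNAME)

if __name__ == "__main__":
    print(json.dumps(service, indent=2))
```

Because the hostname lives in the same versioned manifest as the application, the friendly name is deployed by the CI/CD pipeline along with everything else.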

Next Steps

This tutorial provides a simple illustration of the rich benefits Kubernetes can bring to your developers and DevOps capabilities when included as part of your toolchain.

Check out the full tutorial from Slalom >>

You may also be interested in Slalom’s webinar recording about DevOps with Kubernetes on AWS >>

The content and opinions in this blog are those of the third party author and AWS is not responsible for the content or accuracy of this post.


Slalom Consulting – APN Partner Spotlight

Slalom is an APN Premier Partner. They focus on helping customers design, build, migrate, and manage AWS deployments to reduce complexity and maximize value. Slalom’s expertise extends across next-generation infrastructure, custom development, advanced analytics, enterprise data management, and beyond.
