AWS Developer Tools Blog

Introducing the ‘aws-rails-provisioner’ gem developer preview

AWS is happy to announce that the aws-rails-provisioner gem for Ruby is now in developer preview and available for you to try! What is aws-rails-provisioner? The new aws-rails-provisioner gem is a tool that helps you define and deploy your containerized Ruby on Rails applications on AWS. It currently supports only AWS Fargate. aws-rails-provisioner is a […]

Real-time streaming transcription with the AWS C++ SDK

Today, I’d like to walk you through how to use the AWS C++ SDK to leverage Amazon Transcribe streaming transcription. This service allows you to do speech-to-text processing in real time. Streaming transcription uses HTTP/2 technology to communicate efficiently with clients. In this walkthrough, you build a command line application that captures audio from the […]

Announcing AWS Toolkit for Visual Studio Code

Visual Studio Code has become an enormously popular tool for serverless developers, partly because of its intuitive user interface and partly because of its rich ecosystem of extensions that can customize and automate so much of the development experience. We are excited to announce that the AWS Toolkit for Visual Studio Code extension is now […]

Serverless data engineering at Zalando with the AWS CDK

This post was authored by Viacheslav Inozemtsev, Data Engineer at Zalando, an active user of serverless technologies on AWS, and an early adopter of the AWS Cloud Development Kit. Infrastructure is extremely important for any system, but it usually doesn't carry business logic. It's also hard to manage and track. Scripts and templates […]

Referencing the AWS SDK for .NET Standard 2.0 from Unity, Xamarin, or UWP

In March 2019, AWS announced support for .NET Standard 2.0 in the AWS SDK for .NET. AWS also announced plans to remove the Portable Class Library (PCL) assemblies from NuGet packages in favor of the .NET Standard 2.0 binaries. If you're starting a new project targeting a platform supported by .NET Standard 2.0, especially recent versions of Unity, Xamarin and […]

Getting started with the AWS Cloud Development Kit and Python

This post introduces you to the new Python bindings for the AWS Cloud Development Kit (AWS CDK). What’s the AWS CDK, you might ask? Good question! You are probably familiar with the concept of infrastructure as code (IaC). When you think of IaC, you might think of things like AWS CloudFormation. AWS CloudFormation allows you […]

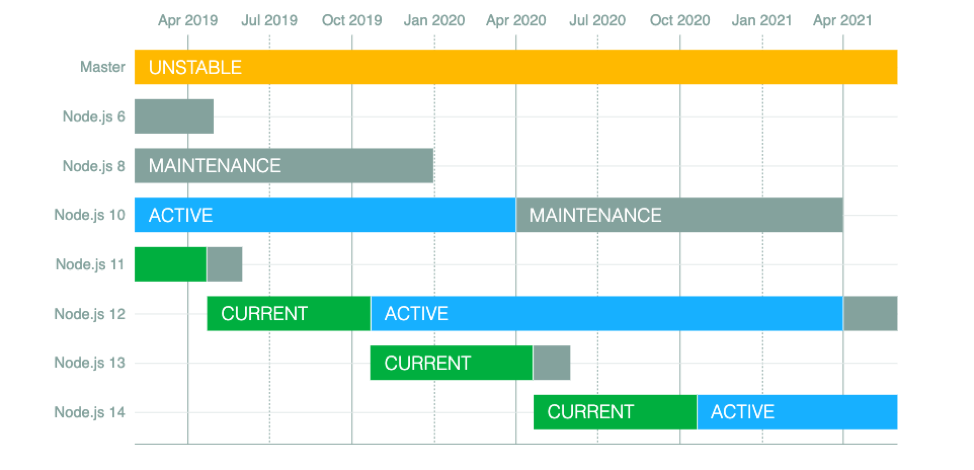

Node.js 6 is approaching End-of-Life – upgrade your AWS Lambda functions to the Node.js 10 LTS

This post was authored by Liz Parody, Developer Relations Manager at NodeSource. Node.js 6.x ("Boron"), which has been maintained as a long-term support (LTS) release line since fall 2016, is reaching its scheduled end-of-life (EOL) on April 30, 2019. After the maintenance period ends, Node.js 6 will no longer be included in Node.js […]

V2 AWS SDK for Go adds Context to API operations

As of January 19th, 2021, the AWS SDK for Go, version 2 (v2) is generally available. The v2 AWS SDK for Go developer preview made a breaking change in the release of v0.8.0. The v0.8.0 release added a new parameter, context.Context, to the SDK’s Send and Paginate Next methods. Context was added as a required […]

New — Analyze and debug distributed applications interactively using AWS X-Ray Analytics

Developers spend a lot of time searching through application logs, service logs, metrics, and traces to understand performance bottlenecks and pinpoint their root causes. Correlating this information to identify its impact on end users brings its own challenges in mining and analyzing the data. This adds to the triaging time when using […]

Query Systems Manager Parameter Store for AWS Regions, endpoints and more using PowerShell

In a recent blog post, Jeff Barr announced support for querying AWS Region and service availability programmatically by using AWS Systems Manager Parameter Store. The examples in that post all used the AWS CLI, but the post noted that you can also use the AWS Tools for PowerShell. In this post I'll show you […]