AWS for Industries

EDA Scale with FSx for NetApp ONTAP and IBM LSF

Introduction

Silicon design teams are constantly under pressure to deliver more efficient products faster, while keeping costs low. Electronic Design Automation (EDA) infrastructure teams, which provide compute, storage, and other IT resources to silicon design teams, can play a critical role in shrinking the RTL-to-GDSII cycle.

Historically, compute capacity for EDA workloads has come from on-premises data center deployments. However, advanced-node designs, which require more complex checks and more operations per check, are exposing the limitations of on-premises EDA setups. These setups are usually sized either to minimize cost, which limits capacity for bursty EDA workloads, or for peak demand, which leaves resources underutilized the rest of the time. Scaling on-premises EDA compute capacity indefinitely is cost-prohibitive, time-consuming, and impractical.

AWS offers massively scalable compute capacity in a pay-as-you-go model, which helps reduce turnaround times for EDA workloads, maximize the value of EDA licenses, and achieve faster time to tape-out. Because compute on AWS scales elastically up or down with demand, extensive compute demand forecasting is no longer necessary, which makes AWS a natural fit for EDA. The pay-as-you-go model and per-second billing for compute help attain cost efficiency, and reduced operational complexity lets EDA infrastructure teams focus more on innovation.

On-demand Scalability with AWS

A well-designed hybrid EDA solution that bursts into the cloud to provide on-demand capacity beyond on-premises compute should forward jobs seamlessly when needed, without changing the end-user experience, existing tools, or flows. Efficient, dynamic management of compute hosts and data ensures good performance and optimized costs.

IBM LSF is among the most widely used job schedulers in EDA, and NetApp ONTAP is a popular choice for EDA storage. For EDA environments that use IBM LSF and NetApp on premises, we have published a reference architecture that seamlessly scales the current environment into AWS, using LSF's multicluster capabilities for job forwarding and Amazon FSx for NetApp ONTAP for efficient data management. This collateral is intended to expedite Proof of Concept (PoC) implementations and help scale EDA workloads seamlessly into AWS.

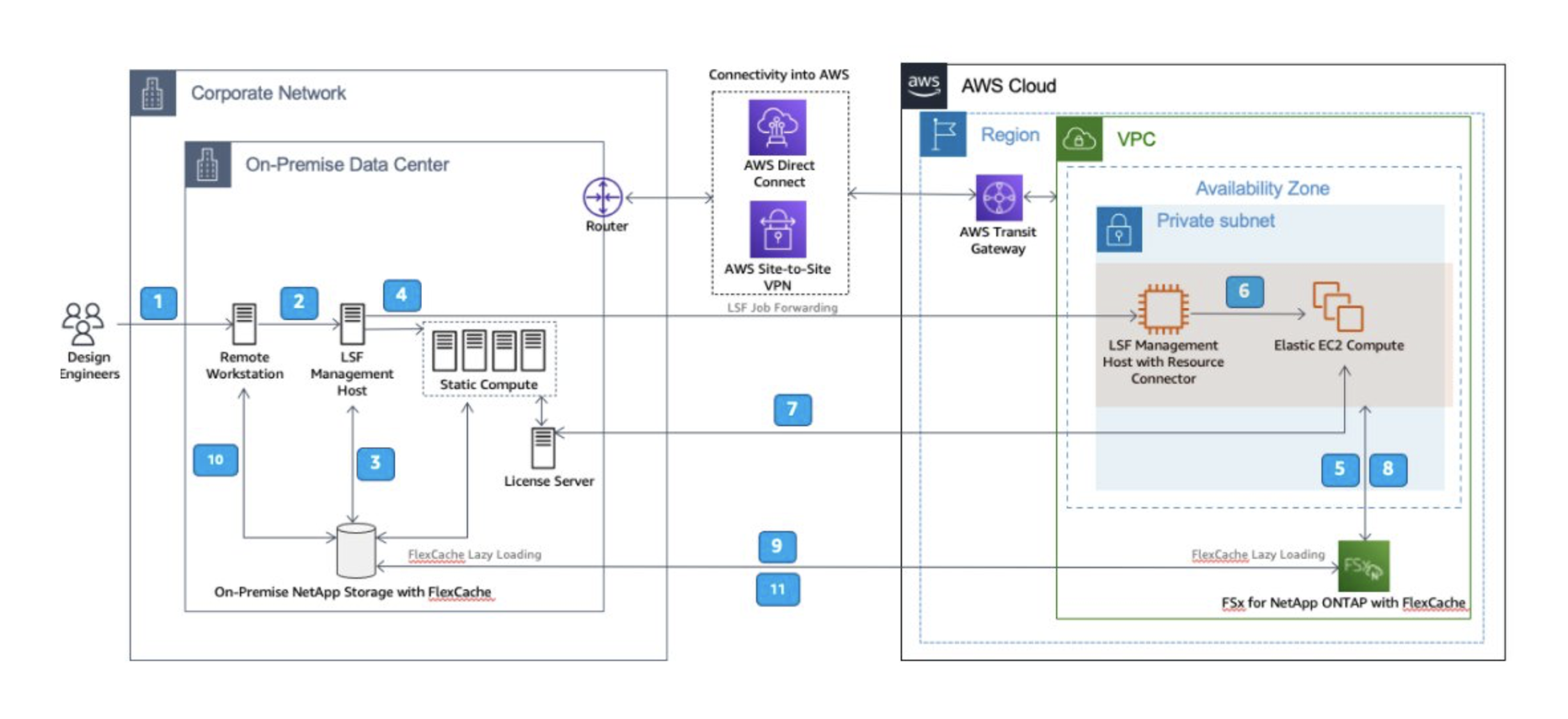

The figure shows an on-premises EDA environment with the LSF scheduler and NetApp storage, along with a typical design engineer workflow.

As we scale into AWS for EDA burst capacity, compatibility with existing tools, flows, and processes is paramount. The reference architecture for EDA scale uses Amazon FSx for NetApp ONTAP and IBM Spectrum LSF in multicluster mode.

LSF is configured in multicluster mode. The LSF management host on AWS uses IBM Spectrum LSF Resource Connector to dynamically provision Amazon Elastic Compute Cloud (EC2) instances to run EDA jobs. Infrastructure and cost efficiency come from provisioning compute servers only when they are needed and only for as long as they are needed. NetApp FlexCache lazily loads active data on demand and provides low-latency read access to it. EDA scale into AWS is achieved with minimal changes to the user experience.

Scaling into AWS with FSx for NetApp ONTAP and IBM LSF Multicluster takes four simple steps:

- Setting up an EDA cluster on AWS

- Connecting the on-premises data center to AWS

- Setting up NetApp volume peering and FlexCache

- Enabling IBM LSF Multicluster

Architecture Deep Dive

An EDA cluster on AWS is no different from one on premises, with the added advantages of agility, flexibility, and dynamic scaling. Cluster setup starts with deploying an Amazon Virtual Private Cloud (VPC) in the AWS Region closest to the on-premises data center, to reduce latency and maximize performance. The VPC is an extension of existing EDA compute capacity, so diligent IP planning is needed to ensure that the VPC CIDR range does not overlap with on-premises IP ranges. A subnet is deployed in a chosen Availability Zone within the VPC. For EDA scale on AWS, the compute entities are the LSF management host and the dynamically provisioned LSF execution hosts, both running on Amazon EC2. Neither needs to be reachable from the internet, and both can reach the internet through the on-premises gateway if needed, so they reside in a private subnet. The principle of least privilege is enforced with security groups, network access control lists (NACLs), and route tables.
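Because the VPC CIDR range must not overlap with on-premises addresses, it can help to check a candidate range before creating the VPC. The following is a minimal Python sketch using the standard-library `ipaddress` module; `find_overlaps` is an illustrative helper, not part of any AWS tooling, and the on-premises ranges in `ONPREM` and the candidate CIDRs are hypothetical placeholders for your own address plan.

```python
import ipaddress


def find_overlaps(vpc_cidr, onprem_cidrs):
    """Return the on-premises CIDR blocks that overlap a proposed VPC CIDR."""
    vpc = ipaddress.ip_network(vpc_cidr)
    overlaps = []
    for cidr in onprem_cidrs:
        net = ipaddress.ip_network(cidr)
        if vpc.overlaps(net):  # True if the two ranges share any address
            overlaps.append(str(net))
    return overlaps


# Hypothetical on-premises address plan: replace with your real ranges.
ONPREM = ["10.0.0.0/16", "10.1.0.0/16", "172.16.0.0/12"]

if __name__ == "__main__":
    for candidate in ["10.1.128.0/17", "10.20.0.0/16"]:
        clash = find_overlaps(candidate, ONPREM)
        status = "overlaps " + ", ".join(clash) if clash else "OK"
        print(f"{candidate}: {status}")
```

With these placeholder ranges, `10.1.128.0/17` is flagged because it falls inside `10.1.0.0/16`, while `10.20.0.0/16` is accepted.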

LSF Resource Connector is enabled on the management host on AWS to dynamically provision EC2 execution hosts as capacity demand grows. These elastic instances are terminated when idle, keeping the EDA setup cost-optimized. Amazon FSx for NetApp ONTAP provides fully managed, reliable, scalable, performant, feature-rich NetApp storage for EDA on AWS. Volumes created on FSx for NetApp ONTAP store tool binaries, configuration files, and design data for EDA workloads.
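The scale-up and idle scale-down behavior described above can be illustrated with a small decision function. This is a simplified, hypothetical model, not LSF Resource Connector's actual algorithm or configuration: `Host`, `plan_scaling`, the slots per host, the host cap, and the idle timeout are all assumptions chosen for illustration. In a real deployment, Resource Connector makes these decisions according to its own configuration.

```python
import math
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass
class Host:
    """Hypothetical view of a dynamically provisioned execution host."""
    name: str
    running_jobs: int
    idle_since: Optional[float] = None  # epoch seconds when the host went idle


def plan_scaling(pending_slots: int, hosts: List[Host], now: float,
                 slots_per_host: int = 4, max_hosts: int = 100,
                 idle_timeout_s: float = 600.0) -> Tuple[int, List[str]]:
    """Return (number of hosts to launch, names of hosts to terminate)."""
    idle = [h for h in hosts if h.running_jobs == 0]
    if pending_slots > 0:
        # Simplification: idle hosts are fully free, busy hosts fully used.
        shortfall = pending_slots - len(idle) * slots_per_host
        needed = math.ceil(shortfall / slots_per_host) if shortfall > 0 else 0
        headroom = max(0, max_hosts - len(hosts))  # respect the host cap
        return min(needed, headroom), []
    # No pending work: release hosts that have been idle past the timeout.
    expired = [h.name for h in idle
               if h.idle_since is not None and now - h.idle_since >= idle_timeout_s]
    return 0, expired


if __name__ == "__main__":
    fleet = [Host("exec-a", running_jobs=4),
             Host("exec-b", running_jobs=0, idle_since=0.0),
             Host("exec-c", running_jobs=0, idle_since=500.0)]
    print(plan_scaling(10, fleet, now=1000.0, max_hosts=5))  # (1, [])
    print(plan_scaling(0, fleet, now=1000.0))                # (0, ['exec-b'])
```

Holding idle hosts while jobs are still pending avoids terminating an instance only to launch a replacement moments later, and the `max_hosts` cap stands in for whatever limit you set to bound cost.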

Connectivity between the on-premises network and the VPC is achieved using AWS Site-to-Site VPN and AWS Direct Connect. Site-to-Site VPN over the internet provides secure, redundant IPsec tunnels between endpoints, but packets traversing the internet are subject to unpredictable latency. AWS Direct Connect provides private, layer 2 connectivity using 802.1Q VLANs between on-premises infrastructure and the AWS fabric, for higher throughput and predictable latency. The two services can be used together to provide a secure, highly available connection between the on-premises data center and AWS. AWS Transit Gateway acts as the VPN concentrator on the AWS side, connecting to the customer gateway configured on premises. As a fully managed service, Transit Gateway provides a hub-and-spoke design for connecting VPCs and on-premises networks without requiring you to provision virtual appliances.
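To see why sustained throughput matters, a back-of-the-envelope estimate of how long it takes to move a given amount of design data over a link can help. The sketch below is plain arithmetic; `transfer_hours`, the 500 GiB data set, the link speeds, and the 80% efficiency factor (standing in for protocol overhead and contention) are assumptions, not AWS-published figures for Site-to-Site VPN or Direct Connect.

```python
def transfer_hours(data_gib: float, link_gbps: float, efficiency: float = 0.8) -> float:
    """Estimate hours to move data_gib GiB over a link of link_gbps Gbit/s.

    efficiency is an assumed fraction of the line rate actually achieved.
    """
    if link_gbps <= 0 or not 0 < efficiency <= 1:
        raise ValueError("link_gbps must be > 0 and 0 < efficiency <= 1")
    bits = data_gib * 2**30 * 8                  # GiB -> bits
    effective_bps = link_gbps * 1e9 * efficiency  # usable bits per second
    return bits / effective_bps / 3600            # seconds -> hours


if __name__ == "__main__":
    # Hypothetical scenario: 500 GiB of design data over two assumed link speeds.
    for label, gbps in [("1 Gbps link", 1.0), ("10 Gbps link", 10.0)]:
        print(f"{label}: {transfer_hours(500, gbps):.2f} h")
```

Under these assumptions, the same 500 GiB takes roughly 1.5 hours at 1 Gbps and about 9 minutes at 10 Gbps, which is why the amount of data that actually has to cross the link matters as much as the link itself.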

Amazon FSx for NetApp ONTAP allows customers to launch and run a fully managed ONTAP file system on AWS. It provides the familiar features, performance, and APIs of on-premises NetApp file systems with the agility, scalability, and operational simplicity of an AWS service. To enable the on-premises and AWS NetApp volumes to communicate with each other, NetApp cluster peering and Storage Virtual Machine (SVM) peering must be set up. NetApp FlexCache lazily loads only the required active data, providing low-latency data access for EDA. A bidirectional FlexCache configuration helps bring job output back to on-premises NetApp storage for viewing and debugging from on-premises user workstations.
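The lazy-loading behavior of FlexCache can be pictured as a read-through cache in front of an origin volume. The Python sketch below is a conceptual model only: `OriginVolume` and `CacheVolume` are hypothetical classes, no NetApp API is used, and it simplifies how ONTAP actually tracks and invalidates cached data. Writes are modeled as write-around: they are forwarded to the origin, and the local copy is dropped so the next read fetches the current version.

```python
class OriginVolume:
    """Hypothetical stand-in for the origin volume holding the authoritative data."""

    def __init__(self, files):
        self.files = dict(files)
        self.reads = 0  # counts reads that had to cross the network

    def read(self, path):
        self.reads += 1
        return self.files[path]

    def write(self, path, data):
        self.files[path] = data


class CacheVolume:
    """Conceptual read-through cache: data is fetched from the origin on first use."""

    def __init__(self, origin):
        self.origin = origin
        self.cached = {}

    def read(self, path):
        if path not in self.cached:               # miss: lazily load from origin
            self.cached[path] = self.origin.read(path)
        return self.cached[path]                  # hit: served locally

    def write(self, path, data):
        self.origin.write(path, data)             # write-around to the origin
        self.cached.pop(path, None)               # next read re-fetches current data


if __name__ == "__main__":
    origin = OriginVolume({"/tools/bin/sim": b"binary", "/design/top.v": b"module top; endmodule"})
    cache = CacheVolume(origin)
    for _ in range(3):
        cache.read("/design/top.v")
    print(origin.reads)                      # 1: only the first read went to the origin
    print("/tools/bin/sim" in cache.cached)  # False: never read, never transferred
```

In this model, a file that no job reads is never transferred, so cross-site traffic tracks the active working set rather than the full volume.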

IBM Spectrum LSF is deployed in multicluster mode, in which an LSF management host runs both on premises and on AWS. Dynamic scaling of compute instances on AWS is achieved with LSF Resource Connector, enabled on the management host deployed on AWS. The EDA Workshop with IBM Spectrum LSF provides automation code to deploy IBM LSF on AWS with Resource Connector. The two LSF clusters are made aware of each other through entries in the lsf.shared file, and job forwarding on the on-premises cluster and job receiving on the AWS cluster are configured in the lsb.queues file, per the LSF documentation.
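The lsf.shared and lsb.queues entries can be generated from a small description of the two clusters. The Python sketch below renders a `Cluster` section for lsf.shared and a pair of queues using the MultiCluster `SNDJOBS_TO` and `RCVJOBS_FROM` parameters. The helper functions and the cluster, host, and queue names are hypothetical, and a production queue definition normally carries further parameters (priority, hosts, limits) that should be taken from the LSF documentation.

```python
def lsf_shared_cluster_section(clusters):
    """Render the lsf.shared Cluster section.

    clusters maps a cluster name to its list of management host names.
    """
    lines = ["Begin Cluster", "ClusterName  Servers"]
    for name, hosts in clusters.items():
        lines.append(f"{name}  ({' '.join(hosts)})")
    lines.append("End Cluster")
    return "\n".join(lines)


def send_queue(queue, remote_queue, remote_cluster):
    """On-premises lsb.queues entry that forwards jobs to a queue on the AWS cluster."""
    return "\n".join([
        "Begin Queue",
        f"QUEUE_NAME = {queue}",
        f"SNDJOBS_TO = {remote_queue}@{remote_cluster}",
        "End Queue",
    ])


def receive_queue(queue, from_cluster):
    """AWS-side lsb.queues entry that accepts jobs forwarded from the on-premises cluster."""
    return "\n".join([
        "Begin Queue",
        f"QUEUE_NAME = {queue}",
        f"RCVJOBS_FROM = {from_cluster}",
        "End Queue",
    ])


if __name__ == "__main__":
    # Hypothetical names: substitute your own clusters, hosts, and queues.
    print(lsf_shared_cluster_section({"onprem": ["mgmt01"], "aws": ["aws-mgmt01"]}))
    print(send_queue("to_aws", "from_onprem", "aws"))  # on-premises lsb.queues
    print(receive_queue("from_onprem", "onprem"))      # AWS lsb.queues
```

The same Cluster section is typically present in lsf.shared on both clusters, while each cluster's lsb.queues holds only its own side of the forwarding relationship.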

Conclusion

As process nodes advance from 7 nm to 5 nm to 3 nm, the virtually unlimited compute capacity of AWS can substantially reduce silicon development time. IBM Spectrum LSF Multicluster extends an on-premises EDA cluster seamlessly into AWS, while Amazon FSx for NetApp ONTAP provides fully managed, performant, scalable NetApp storage on AWS for EDA. EDA scale with FSx for NetApp ONTAP and IBM LSF is an implementable reference architecture for extending your existing EDA infrastructure to AWS with minimal changes to existing tools and flows, enabling a shorter RTL-to-GDSII cycle.

Learn more about Semiconductors and Electronics on AWS, and try the AWS EDA Workshops.