AWS for M&E Blog

Deliver device-sensitive stream renditions with FireTV

Have you ever wondered how providers like Amazon Prime Video deliver video content optimized for your television’s features and settings? How do providers know whether your device supports Ultra High Definition (UHD) or High Dynamic Range (HDR), and how do they use this information to deliver the corresponding live or on-demand content you have requested?

Keep reading to learn how to deliver device-sensitive stream renditions with FireTV. To get the most out of this post, we recommend having a basic knowledge of FireTV app development, AWS Elemental MediaLive, and AWS Elemental MediaPackage.

As a streaming service provider, delivering the stream each customer expects is fundamental. Imagine sitting on the couch, turning on your 4K HDR television, and expecting a visual feast, only to receive a rendition with the wrong resolution or dynamic range.

There are two requirements to ensure the right streaming rendition is served to the viewer. First, you need a way to get hardware information, such as supported resolutions and color space, from the end user’s device. Second, you need to design a rendition for each device-specific viewing request. I will now walk you through the steps to meet both requirements with Amazon’s FireTV Stick 4K device.

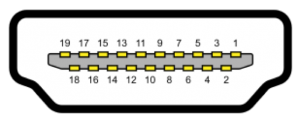

Before we look at the code snippet that retrieves device hardware information, let’s brush up on how a display reports its capabilities over HDMI. Pin 16 of the HDMI connector carries SDA (the I²C serial data line for DDC), as shown in the following picture. This data protocol is designed by VESA (Video Electronics Standards Association), and its standard for metadata describing display device capabilities to the video source is called DisplayID. The specification defines display device information such as the manufacturer, product ID, color space, color depth, and EOTF (electro-optical transfer function).

FireTV runs on FireOS, which is a fork of Android; therefore, developing a FireTV app is much like developing any other Android app.

Fortunately, you don’t need to implement your own display driver to get this information from monitors or television sets. You can take advantage of the Android SDK and its APIs instead. The image below shows how you can use Display.Mode (added in Android 6.0, API level 23) to query the physical display size. You can also use Display.HdrCapabilities (added in API level 24) to retrieve the HDR types your display supports.

Using the code below, you can retrieve the HDR capabilities and resolutions of the connected television. Once that information is ready, append it to the feed list’s URL variable as an HTTP query parameter. If you are developing the FireTV app with Fire App Builder, make this change in the fetchData method, which is implemented in BasicHttpBasedDownloader.java.
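The app-side change is written in Java, but the shape of the resulting URL is easy to illustrate on its own. The following Python sketch shows one way the query parameters could be appended; the parameter names `hdr`, `width`, and `height`, the helper name, and the example URL are assumptions for illustration, not names defined by Fire App Builder.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit


def append_display_params(feed_url, hdr_types, width, height):
    """Return feed_url with display capabilities added as query parameters.

    The parameter names (hdr, width, height) are hypothetical; use whatever
    names your backend expects.
    """
    parts = urlsplit(feed_url)
    # Preserve any query parameters already present on the feed URL.
    query = parse_qsl(parts.query, keep_blank_values=True)
    query.append(("hdr", ",".join(hdr_types) if hdr_types else "none"))
    query.append(("width", str(width)))
    query.append(("height", str(height)))
    return urlunsplit(parts._replace(query=urlencode(query)))


# Example: a 4K display that reports HDR10 and HLG support.
feed_url = append_display_params(
    "https://example.com/feed?lang=en", ["hdr10", "hlg"], 3840, 2160
)
```

Sending the full list of supported HDR types, rather than a single flag, leaves the choice of rendition to the backend.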

Next, I will show you how you can respond and deliver the corresponding video feed list to different devices. Let’s look at the architecture.

Focus on the right part of the architecture diagram.

1. When a user switches on their TV and opens your FireTV app, the app detects the HDR capability of the device and appends a query parameter to the content feed list URL.

2. The FireTV app calls the backend behind that URL, which in this case is an Amazon API Gateway endpoint that triggers an AWS Lambda function.

3. The Lambda function selects the feed list to deliver to the device based on the HDR query parameter.

4. The FireTV app receives the video content feed list.

5. The user selects a live or VoD video to watch, and Amazon CloudFront serves the right video stream renditions to the end device.

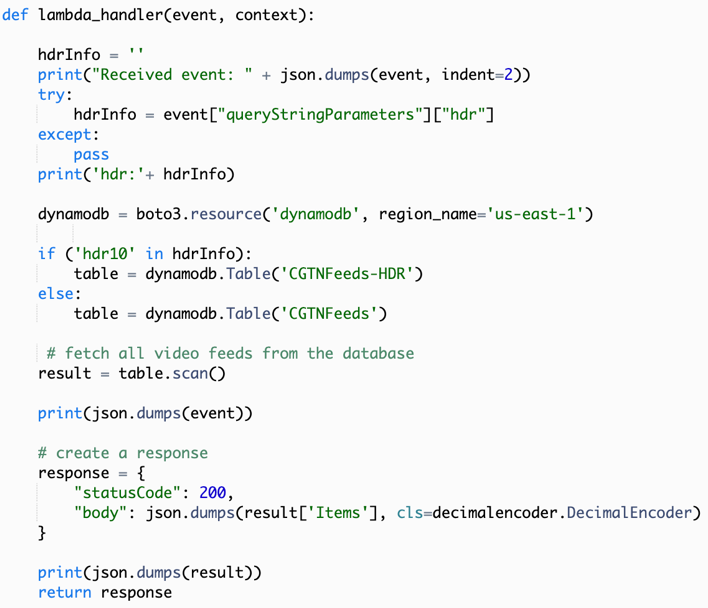

Here is the code snippet for the Lambda function referenced in step 3:
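A minimal sketch of such a function in Python, not necessarily the exact code used in this solution, might look like the following. It assumes an API Gateway proxy integration (so query parameters arrive in `queryStringParameters`), a hypothetical `hdr` parameter holding a comma-separated list of supported HDR types, and two placeholder feed lists with example URLs.

```python
import json

# Hypothetical feed lists keyed by dynamic range. In a real deployment the
# URLs would point at your CloudFront distribution.
FEEDS = {
    "hdr10": [
        {"title": "Live channel (UHD HDR10)",
         "url": "https://example.com/hdr10/index.m3u8"},
    ],
    "sdr": [
        {"title": "Live channel (HD SDR)",
         "url": "https://example.com/sdr/index.m3u8"},
    ],
}


def select_feed(hdr_param):
    """Pick the HDR10 feed only if the device reports HDR10 support."""
    supported = {
        t.strip().lower() for t in (hdr_param or "").split(",") if t.strip()
    }
    return FEEDS["hdr10"] if "hdr10" in supported else FEEDS["sdr"]


def lambda_handler(event, context):
    # queryStringParameters is None when the request has no query string.
    params = event.get("queryStringParameters") or {}
    feed = select_feed(params.get("hdr"))
    return {
        "statusCode": 200,
        "headers": {"Content-Type": "application/json"},
        "body": json.dumps(feed),
    }
```

Falling back to the SDR feed when the parameter is missing or unrecognized means older app versions and non-HDR displays still receive a playable list.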

Something to note: when you develop a FireTV app, you can edit urlFile.json (in the Android Studio ‘Android’ view, under app->assets->urlFile.json) to define the endpoint of the video content feed list, as shown in the image below.

To this point, we have managed to retrieve the display device’s HDR information, append it to the feed list’s URL, send it to API Gateway, and get back the right video content list. You can imagine this as an HDR10-capable television receiving an HDR10 video stream rather than a Dolby Vision or HLG stream.

Finally, we will walk through how to design stream renditions using MediaLive and MediaPackage.

1. Define HLS renditions under the same output group in MediaLive. In this case, the following renditions are defined:

2. In MediaPackage, filter renditions by bitrate. The output endpoint shown below accepts all streams from 0 bit/s to 10 Mbit/s, which means all renditions except the 4K version.

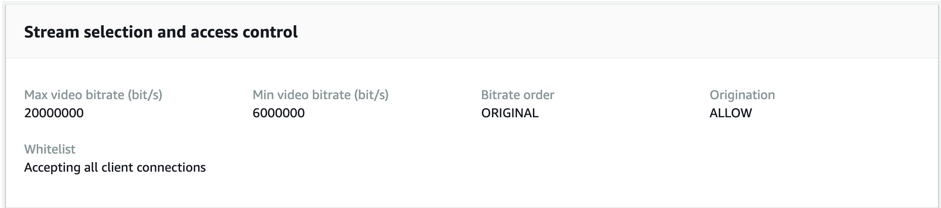

This output endpoint accepts all streams from 6 Mbit/s to 20 Mbit/s, which means both the Full HD and 4K HDR renditions.
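To see how the two bitrate windows split a ladder, here is a small Python sketch that mimics the filtering. The rendition names and bitrates are hypothetical values chosen to match the description above (they are not the actual MediaLive settings), and the sketch assumes both bounds are inclusive.

```python
# Hypothetical rendition ladder: (name, video bitrate in bit/s).
RENDITIONS = [
    ("540p SDR", 2_000_000),
    ("720p SDR", 4_000_000),
    ("1080p Full HD", 8_000_000),
    ("2160p 4K HDR", 18_000_000),
]


def filter_by_bitrate(renditions, min_bps, max_bps):
    """Return the names of renditions whose bitrate lies in [min_bps, max_bps]."""
    return [name for name, bps in renditions if min_bps <= bps <= max_bps]


# Endpoint 1: 0 bit/s to 10 Mbit/s, everything except the 4K rendition.
sdr_endpoint = filter_by_bitrate(RENDITIONS, 0, 10_000_000)

# Endpoint 2: 6 Mbit/s to 20 Mbit/s, Full HD and 4K HDR only.
hdr_endpoint = filter_by_bitrate(RENDITIONS, 6_000_000, 20_000_000)
```

With this ladder, the Full HD rendition falls inside both windows, so an HDR-capable device can still step down to it when bandwidth drops.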

Here is a test case of the endpoints serving different streams:

You can then store the CloudFront distribution endpoint URLs in Amazon DynamoDB, where the Lambda function can look them up.
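As a rough illustration of that lookup, the sketch below reads an endpoint URL by HDR type. The partition key `hdr_type`, the attribute `endpoint_url`, and the example URLs are assumptions. In Lambda you would pass a boto3 DynamoDB `Table` resource; the in-memory stand-in exists only so the sketch runs without AWS access.

```python
def lookup_endpoint(table, hdr_type, default_type="sdr"):
    """Return the endpoint URL stored for hdr_type, falling back to default_type.

    `table` is expected to behave like a boto3 DynamoDB Table resource:
    get_item(Key=...) returns a dict that contains "Item" only on a hit.
    """
    item = table.get_item(Key={"hdr_type": hdr_type}).get("Item")
    if item is None:
        item = table.get_item(Key={"hdr_type": default_type}).get("Item")
    return item["endpoint_url"] if item else None


class InMemoryTable:
    """Stand-in for a DynamoDB table, for local experimentation only."""

    def __init__(self, items):
        self._items = {item["hdr_type"]: item for item in items}

    def get_item(self, Key):
        item = self._items.get(Key["hdr_type"])
        return {"Item": item} if item else {}


# Hypothetical table contents with placeholder URLs.
table = InMemoryTable([
    {"hdr_type": "hdr10", "endpoint_url": "https://example.com/hdr10/index.m3u8"},
    {"hdr_type": "sdr", "endpoint_url": "https://example.com/sdr/index.m3u8"},
])
```

Keeping the URLs in a table rather than in the function code lets you change endpoints without redeploying the Lambda function.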

In this article, you have learned how to retrieve display device information such as resolution and HDR capability, and how to define stream renditions through MediaLive and MediaPackage to deliver device-sensitive stream renditions with FireTV. To learn more about MediaLive and MediaPackage, read more below or visit the AWS Media Services web page.

AWS Media Services used in this tutorial

AWS Elemental MediaLive is a broadcast-grade live video processing service. It lets you create high-quality video streams for delivery to broadcast televisions and internet-connected multiscreen devices, like connected TVs, tablets, smartphones, and set-top boxes. As of September 2019, MediaLive supports ultra-high-definition (UHD) encoding with HDR, which lets customers prepare for the next generation of UHD and HDR content. With MediaLive, you can either upconvert the input or take incoming UHD streams directly as input, and then assign MediaPackage as the output group, under which the renditions you designed are delivered once the channel starts.

AWS Elemental MediaPackage reliably prepares and protects your video for delivery over the internet. From a single video input, AWS Elemental MediaPackage creates video streams formatted to play on connected TVs, mobile phones, computers, tablets, and game consoles. Just-in-time packaging enables MediaPackage to filter stream renditions based on bitrate, and then serve as the origin for content delivery using CloudFront.