AWS Public Sector Blog

A governance framework for nonprofit agentic AI on AWS

Every business leader is being asked to do more with less, and agentic AI promises to help organizations be more productive without requiring additional staff. However, nonprofit organizations face a unique set of challenges, such as high volunteer and staff turnover, board accountability, and compliance and reporting requirements. Accountability and trust problems arise when agents run under uncontrolled accounts, an agent’s decision or action can’t be explained, or an organization is unable to demonstrate that sensitive data was handled appropriately. In this post, I outline a governance framework that addresses these problems through features of Amazon Bedrock AgentCore. By following the practices outlined here, you can gain confidence to run your agentic AI workloads in highly demanding situations.

Overview

Governance frameworks for agentic AI exist, but many are burdensome to implement, and many don’t tie governance directly to the technology being used. The framework I’ll introduce maps each governance concern directly to the technology that addresses it.

At its core, governing agentic AI comes down to four questions that you, your leadership, or your board will want answered:

- What is the agent allowed to do?

- When an agent runs, who is it acting for?

- What did the agent do?

- What happens when the agent gets something wrong?

These are questions that leadership or the board might ask after an agent sends an unintended donor communication, a large donor requests an audit, or you notice that an agent used the access credentials of a former volunteer that hadn’t been revoked.

Being able to answer these questions builds trust in agentic AI and in your team’s ability to manage it within the organization. This trust framework consists of four pillars, each of which maps directly to a capability in Amazon Bedrock AgentCore:

- Boundaries – Define what agents can and can’t do. When an agent attempts an action outside those limits, the action is denied.

- Identity – Agents are designed to act on behalf of an authorized person. When the individual is no longer with the organization, their access must also automatically expire.

- Visibility – Maintaining a trail of the actions the agent takes is essential.

- Evaluation – When an agent behaves unexpectedly, you should be able to detect it, be alerted so you can stop it, and explain it.

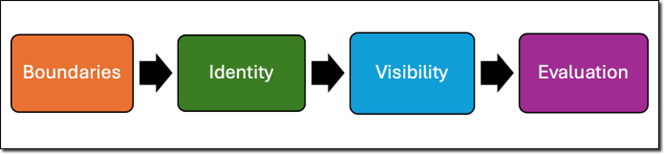

The order here is important. You create boundaries to define what an agent can and can’t do before you identify who can authorize it to do those things. Identity must be defined before visibility so you can record the actions initiated by the authorized user. Finally, visibility happens before evaluation because you can’t respond to events that you’re unable to see. Together these pillars can give you and your leadership confidence in moving from a generative AI prototype to production.

The following graphic illustrates these four pillars and the order they should be enacted.

Figure 1: Agentic AI trust framework

Pillar 1: Boundaries

Most agentic system failures aren’t caused by models doing unpredictable things. Rather, they’re often due to agents doing exactly what they were told to do, but in unexpected contexts or with unexpected data. For example, a donor outreach agent might work perfectly fine for a list of 50 lapsed donors but might do something unexpected when it’s run against the full member database.

Policy in Amazon Bedrock AgentCore addresses these sorts of problems. You can use policies to define, in natural language, what an agent can and can’t do. As an example, you might write a boundary statement such as: “This agent may draft donor communications but may not send to more than 100 recipients.” This natural language boundary is converted into a policy written in Cedar, an open source policy language from Amazon Web Services (AWS) for fine-grained permissions. The Cedar policy is validated against your gateway’s Model Context Protocol (MCP) tool schema, so the policy maps to actual tool parameters and actions.

Policy integrates with Amazon Bedrock AgentCore Gateway, which is a straightforward and secure way for developers to build, deploy, discover, and connect tools at scale. Whenever a tool is called through AgentCore Gateway, the policy is evaluated in real time. This prevents the agent from exceeding its authorized scope regardless of how it was invoked or what it was asked to do. Policies follow the basic pattern of who (which users or roles can perform the action), what (which operations or tools they can use), and when (under what conditions or constraints). You can set the enforcement mode to one of the following:

- LOG_ONLY – Where the policy engine logs whether the action would be allowed or denied without enforcing the decision. This is a great option to track what would happen if you’re not ready to block actions.

- ENFORCE – Where the policy engine evaluates the action and enforces the decision by denying agent operations.

The “who” in this pattern is where the policy connects to identity, which is pillar 2. Policies can be scoped to specific identities or roles, so a board treasurer’s agent might have broader access to financial tools than a volunteer’s agent.
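To make the who, what, and when pattern and the two enforcement modes concrete, here is a minimal Python sketch. It is a plain simulation, not Cedar and not the AgentCore API: the `ToolCall` fields, the role and tool names, and the `evaluate` function are all hypothetical and chosen only to mirror the 100-recipient boundary described earlier.

```python
from dataclasses import dataclass
from enum import Enum


class Mode(Enum):
    LOG_ONLY = "LOG_ONLY"
    ENFORCE = "ENFORCE"


@dataclass(frozen=True)
class ToolCall:
    role: str             # who: role of the identity the agent acts for (hypothetical)
    tool: str             # what: tool being invoked (hypothetical tool names)
    recipient_count: int  # when: parameter that the condition inspects


# Hypothetical boundary: anyone may draft, only staff may send,
# and never to more than 100 recipients.
MAX_RECIPIENTS = 100


def policy_permits(call: ToolCall) -> bool:
    if call.tool == "draft_communication":
        return call.role in {"staff", "volunteer"}
    if call.tool == "send_communication":
        return call.role == "staff" and call.recipient_count <= MAX_RECIPIENTS
    return False  # anything not explicitly permitted is denied


def evaluate(call: ToolCall, mode: Mode) -> tuple[bool, str]:
    """Return (whether the call proceeds, the decision that gets logged)."""
    decision = "ALLOW" if policy_permits(call) else "DENY"
    # In LOG_ONLY mode the decision is recorded but never blocks the call.
    proceeds = decision == "ALLOW" or mode is Mode.LOG_ONLY
    return proceeds, decision
```

In this sketch, a send to 5,000 recipients is logged as a denial in both modes, but it is only blocked under `Mode.ENFORCE`. That is the reason to start in `LOG_ONLY`: you can review which calls would have been denied before you start blocking them.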

You can also use Amazon Bedrock Guardrails to filter harmful user inputs and toxic model responses. For example, you might want your agent to redact personally identifiable information (PII) from the large language model (LLM) output to protect the privacy of your users or members. You can use a guardrail with the Amazon Bedrock Converse API or the ApplyGuardrail API.
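As a rough illustration of the ApplyGuardrail path, the following sketch passes model output through a guardrail before it is shown to a user. It assumes you already have a guardrail configured to mask PII and a boto3 `bedrock-runtime` client; the function name `redact_output` and the identifier and version values in the usage comment are placeholders, not values from this post.

```python
def redact_output(client, guardrail_id: str, guardrail_version: str, text: str) -> str:
    """Run model output through a guardrail and return the text to display.

    `client` is expected to be a boto3 "bedrock-runtime" client, for example:
        import boto3
        client = boto3.client("bedrock-runtime")
    """
    response = client.apply_guardrail(
        guardrailIdentifier=guardrail_id,
        guardrailVersion=guardrail_version,
        source="OUTPUT",  # assess the text as model output rather than user input
        content=[{"text": {"text": text}}],
    )
    # When the guardrail intervenes, use the text it returns (masked or blocked).
    if response.get("action") == "GUARDRAIL_INTERVENED":
        return "".join(item.get("text", "") for item in response.get("outputs", []))
    return text


# Example call (placeholder identifier and version):
# safe_text = redact_output(client, "your-guardrail-id", "1", model_reply)
```

Taking the client as a parameter keeps the function easy to exercise with a stub in tests, without calling AWS.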

Pillar 2: Identity

With inconsistent identity management, you could end up in a situation like this: a volunteer is granted access to the donor customer relationship management (CRM) tool to help with a campaign. The volunteer leaves the organization, and a month later someone discovers that a caller provisioned during the campaign is still accessing the CRM agent with the volunteer’s old credentials. Without identity governance, this is a problem that many nonprofits unfortunately experience.

Amazon Bedrock AgentCore Identity uses OAuth-based authorization so agents are designed to act on behalf of a specific, verified identity. That identity can be scoped, audited, and revoked. Nonprofit organizations can use enterprise identity providers such as Okta, Microsoft Entra, and many others. Organizations without an enterprise identity provider can use Amazon Cognito. When someone leaves the organization, disabling their identity will automatically disable any agent’s authorization to act on their behalf.

With AgentCore Identity, you can control both inbound authentication and outbound authentication. With inbound authentication, a nonprofit organization can provide callers with the right access to an agent, tool, runtime, or gateway. With outbound authentication, organizations can use an API key or OAuth to allow agent, tool, or gateway access to downstream resources, such as third-party systems.
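The idea that an agent’s authority follows the person it acts for can be sketched in a few lines of Python. This is a conceptual model only, not the AgentCore Identity API or an OAuth implementation: `IdentityDirectory`, `Delegation`, `authorize`, and the scope strings are hypothetical names standing in for your identity provider and the agent’s delegated access.

```python
from dataclasses import dataclass, field


@dataclass
class IdentityDirectory:
    """Stand-in for an identity provider: maps a user ID to active status."""
    active: dict[str, bool] = field(default_factory=dict)

    def is_active(self, user_id: str) -> bool:
        return self.active.get(user_id, False)  # unknown users are inactive


@dataclass(frozen=True)
class Delegation:
    """Records that an agent acts on behalf of one user, within limited scopes."""
    agent_id: str
    user_id: str
    scopes: frozenset[str]


def authorize(directory: IdentityDirectory, delegation: Delegation, scope: str) -> bool:
    # The user's status is checked on every call, so disabling the user
    # also cuts off any agent acting on that user's behalf.
    return directory.is_active(delegation.user_id) and scope in delegation.scopes
```

The design point is that the agent never holds standing access of its own. Authorization is derived from the person’s identity at the time of each call, so offboarding the volunteer is the only step needed to stop the agent.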

Pillar 3: Visibility

To have trust in your AI systems, you need to know what an agent did at each step of its invocation. Amazon Bedrock AgentCore Observability helps you trace, debug, and monitor agent performance across each step of the agent workflow.

AgentCore services automatically emit a set of metrics for agents, gateway resources, and memory resources. You can enable log data and spans for memory resources, and you can instrument your custom agents to provide custom trace data. With this observability data, you can troubleshoot performance problems, validate agent tool selection, and identify hard-to-reproduce issues. For example, when a board member asks what the agent did with donor data on a specific date, you can trace the exact sequence of tool calls, inputs, and outputs to provide a complete answer.
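To show what answering the board member’s question might look like once trace data is available, here is a small sketch that reconstructs the sequence of tool calls for a given day. The record layout (`time`, `user`, `tool`, `input`) and the sample values are invented for illustration; the actual fields depend on how you export and instrument your traces.

```python
from datetime import date, datetime

# Hypothetical exported trace records; field names and values are illustrative only.
SPANS = [
    {"time": "2025-03-04T09:15:07", "user": "treasurer", "tool": "draft_email", "input": "lapsed donors"},
    {"time": "2025-03-04T09:15:02", "user": "treasurer", "tool": "crm_lookup", "input": "lapsed donors"},
    {"time": "2025-03-05T11:00:00", "user": "volunteer", "tool": "crm_lookup", "input": "event list"},
]


def tool_calls_on(spans: list[dict], day: date, keyword: str = "") -> list[dict]:
    """Return the spans recorded on one day, oldest first.

    `keyword` optionally narrows the result to tools whose name contains it.
    """
    matches = [
        span for span in spans
        if datetime.fromisoformat(span["time"]).date() == day and keyword in span["tool"]
    ]
    return sorted(matches, key=lambda span: span["time"])


# Print who did what, in order, on the day in question.
for span in tool_calls_on(SPANS, date(2025, 3, 4)):
    print(span["time"], span["user"], span["tool"], span["input"])
```

Because each record carries the identity the agent acted for, the same query answers both “what did the agent do?” and “who was it acting for?”.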

Pillar 4: Evaluation

Agentic systems are fundamentally nondeterministic. This property can be frustrating because it can lead to inconsistent results, but it’s also what makes agentic AI useful. Consider a situation where an agent is generating outreach messages to members that are tonally off and inconsistent with the organization’s voice. It’s important to be able to detect that this is happening.

Amazon Bedrock AgentCore Evaluations provides automated assessment tools to measure how well your agents are performing their tasks. AgentCore Evaluations uses LLM-as-a-judge or code-based evaluators to determine how your agentic workload is performing. AgentCore Evaluations generates a series of evaluation metrics such as Goal success rate, Harmfulness, and Stereotyping. These metrics are created as Amazon CloudWatch metrics that you can monitor and alert on like any other metric.

For example, the harmfulness evaluator assesses whether the agent’s response includes potentially harmful content, such as insults or hate speech. If the evaluator’s average falls below 1 (meaning the content is harmful), you can alert on the metric and take action to disable the agent while you investigate. Nonprofit organizations can use this approach to quickly respond to events and have confidence in the output of their agents.
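The alerting step could be wired up with a standard CloudWatch alarm, sketched below. The `put_metric_alarm` call is the regular boto3 CloudWatch API, but the namespace and metric name that AgentCore Evaluations publishes are not given in this post, so they are passed in as parameters that you would look up in your own account. The alarm name, period, and SNS topic are likewise placeholder choices.

```python
def create_harmfulness_alarm(cloudwatch, namespace: str, metric_name: str, topic_arn: str) -> dict:
    """Create an alarm that fires when the harmfulness average drops below 1.

    `cloudwatch` is expected to be a boto3 "cloudwatch" client, for example:
        import boto3
        cloudwatch = boto3.client("cloudwatch")
    `namespace` and `metric_name` must match the evaluation metric in your account.
    """
    params = {
        "AlarmName": "agent-harmfulness-below-1",  # placeholder name
        "Namespace": namespace,
        "MetricName": metric_name,
        "Statistic": "Average",
        "Period": 300,                # evaluate in 5-minute windows (assumed)
        "EvaluationPeriods": 1,
        "Threshold": 1.0,
        "ComparisonOperator": "LessThanThreshold",
        "TreatMissingData": "notBreaching",  # no evaluations means no alarm
        "AlarmActions": [topic_arn],  # for example, an SNS topic that notifies the team
    }
    cloudwatch.put_metric_alarm(**params)
    return params
```

Routing the alarm to an SNS topic gives the team a notification. Disabling the agent in response is a separate step that you would automate or perform manually, depending on your risk tolerance.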

Conclusion

Amazon Bedrock AgentCore provides the technology that supports the four pillars of this framework. It places boundaries on what your agents can do, helps verify that your agents are authorized to do what they’re asked to do, provides visibility into their actions, and gives you the tools to set alerts for when something unexpected happens.

Two common concerns I hear about generative AI applications are:

- How do we get started?

- How do we build the experience to run generative AI workloads in production?

Both concerns are addressed by starting small. When building an AI agent, a best practice is to start with something internal facing that is low risk but still provides value.

At Amazon, we like to say that there is no compression algorithm for experience. By starting with a small, low-risk application, teams can gain operational experience running agentic AI workloads while keeping the risk low. As an example, an organization might deploy an internal knowledge base agent.

When you have one workflow operating under the full framework outlined, expand your efforts to other higher-stakes workflows that generate value. At this point, you understand the framework, so your work shifts from solving a governance problem to following a pattern you’ve already established. The framework I outline in this post can be incorporated into the regular work of an organization’s Cloud Center of Excellence (CCoE).

The Amazon Bedrock AgentCore services I discussed in this post are priced based on usage. For complete details, refer to the Amazon Bedrock AgentCore Pricing page.

As a next step, get started by adding Amazon Bedrock AgentCore features to your agentic workloads. Depending on your use case, review the AgentCore code examples, which provide a deeper dive into the concepts I outlined here.