AWS Big Data Blog

Category: Industries

How Razorpay achieved 11% performance improvement and 21% cost reduction with Amazon EMR

In this post, we explore how Razorpay, India’s leading FinTech company, transformed their data platform by migrating from a third-party solution to Amazon EMR, unlocking improved performance and significant cost savings. We’ll walk through the architectural decisions that guided this migration, the implementation strategy, and the measurable benefits Razorpay achieved.

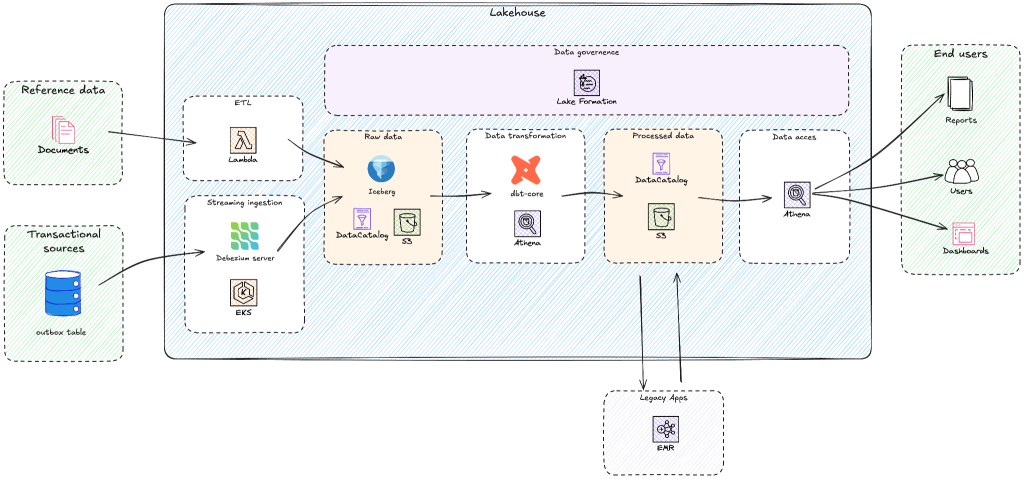

Building a modern lakehouse architecture: Yggdrasil Gaming’s journey from BigQuery to AWS

Yggdrasil Gaming develops and publishes casino games globally, processing massive amounts of real-time gaming data for game performance analytics, player behavior insights, and industry intelligence. Yggdrasil Gaming reduced multi-cloud complexity and built a scalable analytics foundation by migrating from Google BigQuery to AWS analytics services. In this post, you’ll discover how Yggdrasil Gaming transformed their data architecture to meet growing business demands. You will learn practical strategies for migrating from proprietary systems to open table formats such as Apache Iceberg while maintaining business continuity. Yggdrasil worked with GOStack, an AWS Partner, to migrate to an Apache Iceberg-based lakehouse architecture. The migration helped reduce operational complexity and enabled real-time gaming analytics and machine learning.

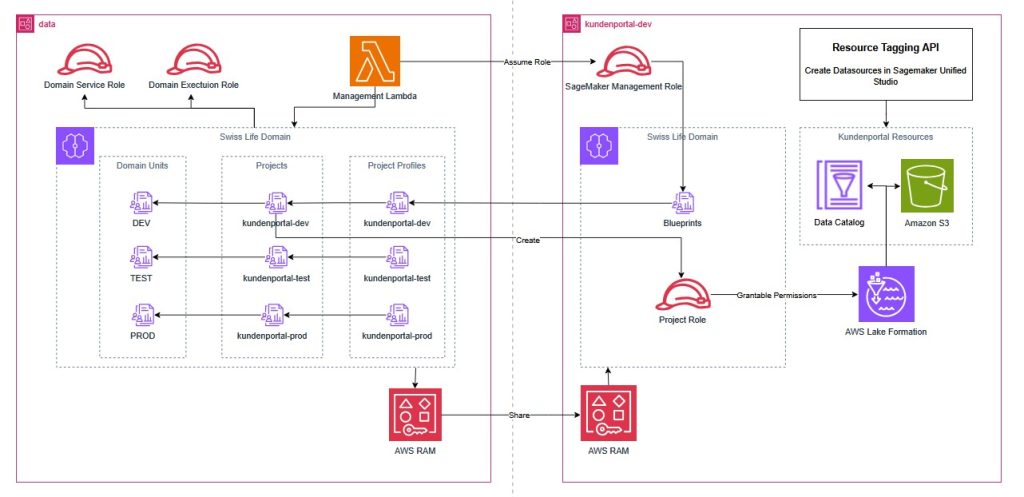

How Swiss Life Germany automated data governance and collaboration with Amazon SageMaker

Swiss Life Germany, a leading provider of customized pension products with over 100 years of experience, recently transitioned from legacy on-premises infrastructure to a modern cloud architecture. To enable secure data sharing and cross-departmental collaboration in this regulated environment, they implemented Amazon SageMaker with a custom Terraform pattern. This post demonstrates how Swiss Life Germany aligned SageMaker’s agility with their rigorous infrastructure-as-code standards, providing a blueprint for platform engineers and data architects in highly regulated enterprises.

Verisk cuts processing time and storage costs with Amazon Redshift and lakehouse

Verisk, a catastrophe modeling SaaS provider serving insurance and reinsurance companies worldwide, cut aggregation processing time from hours to minutes while reducing storage costs by implementing a lakehouse architecture with Amazon Redshift and Apache Iceberg. If you’re managing billions of catastrophe modeling records across hurricanes, earthquakes, and wildfires, this approach eliminates the traditional compute-versus-cost trade-off by separating storage from processing power. In this post, we examine Verisk’s lakehouse implementation, focusing on four architectural decisions that delivered measurable improvements.

Modernize game intelligence with generative AI on Amazon Redshift

In this post, we discuss how you can use Amazon Redshift as a knowledge base to provide additional context to your LLM. We also share best practices for improving the accuracy of responses from the knowledge base.

Breaking down data silos: Volkswagen’s approach with Amazon DataZone

In this post, we introduce Amazon DataZone and explore how Volkswagen used it to build their data mesh, tackle the challenges they encountered, and break down data silos.

Announcing SageMaker Unified Studio Workshops for Financial Services

In this post, we’re excited to announce the release of four publicly available Amazon SageMaker Unified Studio workshops, each specific to a financial services industry (FSI) segment: insurance, banking, capital markets, and payments. These workshops can help you learn how to deploy Amazon SageMaker Unified Studio effectively for business use cases.

Integrate scientific data management and analytics with the next generation of Amazon SageMaker, Part 1

In this blog post, AWS introduces a solution to a common challenge in scientific research: the inefficient management of fragmented scientific data. The post demonstrates how the next generation of Amazon SageMaker, through its Unified Studio and Catalog features, helps scientists streamline their workflow by integrating data management and analytics capabilities.

Develop and deploy a generative AI application using Amazon SageMaker Unified Studio

In this post, we demonstrate how to use Amazon Bedrock Flows in SageMaker Unified Studio to build a sophisticated generative AI application for financial analysis and investment decision-making.

Near real-time baggage operational insights for airlines using Amazon Kinesis Data Streams

This post explores a framework developed by IBM to modernize baggage analytics using AWS managed services such as Amazon Kinesis Data Streams and Amazon DynamoDB Streams within a serverless architecture. The solution enables near real-time baggage operational insights for airlines, delivering cost savings, enhanced scalability, and improved performance while providing better security and operational efficiency to meet evolving airline needs.