AWS for Industries

Accelerating physical AI with AWS and NVIDIA: building production-ready applications with simulation and real-world learning

Defining physical AI beyond digital intelligence

Physical AI represents a transformative evolution in artificial intelligence, extending beyond purely computational systems to intelligent agents that perceive, reason about, and interact directly with the physical world. Unlike traditional AI systems that process information in digital domains (such as chatbots or recommendation engines), physical AI embeds intelligence in systems equipped with sensors and actuators, enabling them to take meaningful, adaptive, and autonomous actions in real-world environments.

Robotics is among physical AI’s most sophisticated applications, with machines performing complex manipulation, navigation, and assembly tasks. However, physical AI also extends across diverse domains, including autonomous vehicles navigating dynamic traffic conditions, drones conducting infrastructure inspections, and smart manufacturing assets like conveyors that autonomously adjust speeds to prevent package jams. These applications share a common requirement: the ability to sense environmental conditions, process physical data in real time, and execute adaptive responses.

This emerging field represents a market opportunity projected by Morgan Stanley to reach $5 trillion by 2050. The growth is driven by zero-shot manufacturing capabilities, where physically trained AI humanoids work autonomously and intuitively like humans, with potential to automate 30-40% of global labor costs. However, even when these humanoids are trained for fundamental capabilities, such as self-balancing, they still require physical AI tuning for specific real-world applications. Organizations deploying robotic arms and humanoids for manufacturing operations, using physical AI, need practical pathways to solve real business problems.

The development to deployment challenge

Recent research from DHL Supply Chain highlights the implementation and management challenges of integrating robotics in warehouses. While 44% have already deployed robotics, only 34% of supply chain executives believe their technology deployments are performing adequately. This reinforces that real-world deployment, monitoring, distribution, and governance of physical AI models are critical for successful performance and business outcomes. Amazon Web Services (AWS) has demonstrated proven capabilities in these areas through extensive deployments of robotics with physical AI across Amazon warehouses and supply chains.

Traditional physical AI development faces significant barriers: building autonomous systems requires substantial capital investment in physical prototypes, poses safety concerns during trial-and-error learning, and limits iteration speed. Much of this process can be replaced with physics- and environment-based simulation, enabling training against a wide array of scenarios in parallel. Yet simulation alone cannot capture the full complexity of real-world physics, including friction variations, material deformations, sensor noise, and environmental unpredictability.

This post presents a comprehensive reference architecture that bridges the simulation-to-reality gap, combining the speed and safety of simulation-based training with the fidelity of real-world learning. Built on AWS infrastructure and NVIDIA Isaac, an open robotics development platform, this approach enables organizations to accelerate the development, deployment, and continuous improvement of physical AI applications at scale.

The dual-path approach: simulation and real-world learning

While simulation enables rapid, safe experimentation at scale, real-world deployment demands systems that can handle unpredictable physical conditions. NVIDIA Isaac enables organizations to thoroughly train and test robot policies in physics-accurate virtual environments, preparing them for successful edge deployment.

NVIDIA Isaac comprises open models, libraries and open source frameworks such as NVIDIA Isaac Sim and NVIDIA Isaac Lab. Isaac Sim is an open source robotics simulation reference framework built on NVIDIA Omniverse libraries, providing a physically accurate, GPU-accelerated virtual environment for designing, testing, and generating synthetic training data for AI-driven robots. NVIDIA Isaac Lab is an open source, unified robot learning framework built on Isaac Sim, for training advanced robot policies using reinforcement and imitation learning methods.

Isaac Sim provides a physics-accurate simulation environment, while Isaac Lab scales that environment across thousands of parallel training scenarios. Together, they enable rapid policy development before real-world deployment.

The power of simulation-based training

Simulation provides an efficient starting point for physical AI development. Using Isaac Sim, teams can create digital twins of their robotic systems and operational environments, enabling rapid experimentation without the cost and time of building multiple physical prototypes. Running Isaac Sim on AWS infrastructure provides physical AI developers with several key advantages:

Rapid iteration and cost efficiency: Engineers can now test thousands of scenarios in parallel without risking expensive hardware or creating safety hazards. Instead of building multiple physical prototypes, teams evaluate design alternatives virtually. A robotic arm learning to grasp fragile objects can fail countless times in simulation at no additional cost.

Physics-based learning at scale: Simulations provide sufficient physics understanding for initial policy learning. Simulation enables massive parallel training, compressing weeks of physical robot learning into hours by running hundreds of virtual environments simultaneously. Techniques like domain randomization, where physics parameters are systematically varied during training, help models generalize to real-world conditions.
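To make domain randomization concrete, here is a minimal sketch in plain Python of drawing a different set of physics parameters for each parallel environment. It does not use the Isaac Lab API; the `PhysicsParams` class, the parameter names, and the numeric ranges are all assumptions chosen for illustration.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class PhysicsParams:
    """Hypothetical per-environment physics settings (illustrative only)."""
    friction: float          # coefficient of friction (unitless)
    payload_mass_kg: float   # mass of the grasped object
    sensor_noise_std: float  # standard deviation of additive sensor noise


# Assumed ranges for illustration; real ranges come from the actual system.
RANGES = {
    "friction": (0.4, 1.0),
    "payload_mass_kg": (0.1, 0.5),
    "sensor_noise_std": (0.0, 0.02),
}


def sample_params(rng: random.Random) -> PhysicsParams:
    """Draw one parameter set uniformly from RANGES."""
    return PhysicsParams(
        **{name: rng.uniform(lo, hi) for name, (lo, hi) in RANGES.items()}
    )


def sample_batch(num_envs: int, seed: int = 0) -> list:
    """Return one independently sampled parameter set per parallel environment."""
    rng = random.Random(seed)
    return [sample_params(rng) for _ in range(num_envs)]


if __name__ == "__main__":
    for params in sample_batch(3):
        print(params)
```

Seeding the generator keeps a training run reproducible, while each environment still sees different physics.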

The necessity of real-world validation

Despite the advantages of simulation, real-world deployment remains essential for production-ready physical AI applications. The “sim-to-real” gap, meaning the differences between simulated and actual physics, can significantly impact performance, safety, reliability, and operational effectiveness.

Physics fidelity and environmental complexity: Real sensors capture nuances that simulation can only approximate, including surface texture variations, lighting conditions, material compliance, and dynamic environmental factors. Production environments present unpredictable scenarios such as human workers moving nearby, varying ambient conditions, and other edge cases difficult to anticipate in simulation.

Continuous improvement: As systems operate in production, they encounter new situations that inform model refinement. Operational data reveals edge cases and performance gaps that guide targeted model improvements. Real-world testing with comprehensive sensor feedback (force sensors, joint encoders, cameras, accelerometers) provides ground truth for model effectiveness, with millisecond data streaming enabling continuous performance monitoring.

End-to-end architecture overview

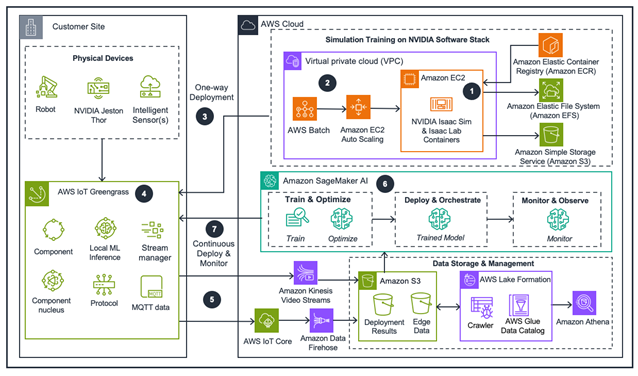

The following architectural guidance enables physical AI robotics application development through two complementary paths: simulation-based and real-world reinforcement learning. The solution manages physics either through NVIDIA Isaac or through real-world intelligent sensor data such as force, vision, position, and motion sensors. The simulation path allows model training in virtual environments before real-world implementation, while the real-world path captures actual physical interactions through sensor data. Trained models deploy to edge devices that implement inference-based control policies and ingest real-time sensor data for iterative learning. This enables systems that emulate human-like intelligence and autonomously perform designated tasks.

The reference architecture implements two complementary learning loops working in parallel.

Figure 1: This guidance architecture shows how to integrate advanced AI capabilities with physical robotics systems on AWS, enabling autonomous operation in real-world environments.

Simulation training loop – build and train

- The journey begins with Isaac Sim running in containers on GPU-powered Amazon Elastic Compute Cloud (Amazon EC2) instances. Engineers model system kinematics, define physics constraints, and create virtual environments representing operational scenarios. Isaac Lab scales this training across multiple parallel scenarios, testing variations in physics parameters, environmental conditions, and task complexities.

- AWS Batch orchestrates simulation workloads, dynamically provisioning GPU compute resources through Amazon EC2 Auto Scaling groups. As training demand fluctuates, infrastructure scales automatically, spinning up additional instances during intensive phases and scaling down during idle periods, optimizing cost efficiency. Trained models and associated policies are stored in Amazon Simple Storage Service (Amazon S3), providing durable, versioned storage. Amazon Elastic Container Registry (Amazon ECR) manages container images for consistent deployment across environments.
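As a rough illustration of the orchestration step above, the sketch below builds the keyword arguments for an AWS Batch `SubmitJob` request that fans training out as an array job. The queue name, job definition name, environment variable names, and S3 prefix layout are hypothetical placeholders, not values from the reference architecture.

```python
def build_training_job_request(
    run_id: str,
    num_workers: int,
    model_bucket: str,
    job_queue: str = "isaac-lab-gpu-queue",        # hypothetical queue name
    job_definition: str = "isaac-lab-training:1",  # hypothetical job definition
    gpus_per_worker: int = 1,
) -> dict:
    """Build keyword arguments for the AWS Batch SubmitJob API (array job)."""
    if not 2 <= num_workers <= 10_000:
        raise ValueError("AWS Batch array jobs accept a size from 2 to 10,000")
    return {
        "jobName": f"policy-training-{run_id}",
        "jobQueue": job_queue,
        "jobDefinition": job_definition,
        # One child job per worker; AWS Batch sets an array index in each child.
        "arrayProperties": {"size": num_workers},
        "containerOverrides": {
            "environment": [
                # Assumed variable names that the training container would read.
                {"name": "RUN_ID", "value": run_id},
                {
                    "name": "MODEL_OUTPUT_URI",
                    "value": f"s3://{model_bucket}/policies/{run_id}/",
                },
            ],
            "resourceRequirements": [
                {"type": "GPU", "value": str(gpus_per_worker)}
            ],
        },
    }


if __name__ == "__main__":
    request = build_training_job_request("run-001", 8, "example-model-bucket")
    print(request["jobName"], request["arrayProperties"])
```

With AWS credentials configured, the resulting dictionary could be passed to `boto3.client("batch").submit_job(**request)`. Building the request as a plain dictionary keeps it easy to inspect and test without calling AWS.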

Real-world learning loop – deploy and monitor

- Once simulation training produces a candidate model, engineers deploy it to edge infrastructure running AWS IoT Greengrass on physical robot controllers, such as NVIDIA Jetson Thor for real-time reasoning. These edge devices serve dual purposes, executing ML inference for real-time control and collecting comprehensive sensor data.

- AWS IoT Greengrass components process real-time feedback from multiple sensor types.

- The multi-modal data streams through two pathways. Structured sensor data (MQTT messages containing time-series telemetry) flows through AWS IoT Core and Amazon Data Firehose to an Amazon S3 data lake, while video streams from cameras are captured via Amazon Kinesis Video Streams. AWS Glue crawlers catalog operational data, making it queryable through Amazon Athena and manageable via AWS Lake Formation.

- Amazon SageMaker AI processes batches of real-world operational data to retrain and optimize models, explicitly bridging the sim-to-real gap.

- Refined models are deployed to AWS IoT Greengrass running on edge devices, driving improved behavior. A monitoring layer continuously tracks performance metrics, detects model drift, and triggers retraining workflows when performance degrades. This creates continuous improvement, as systems generate operational data, models are refined based on real-world performance, improved models redeploy, and the cycle repeats.
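The monitoring step in this loop can be pictured as a rolling check on task outcomes. The following is a minimal sketch, assuming that success rate over a fixed window is the tracked metric; the class name, window size, and threshold are illustrative assumptions rather than part of the guidance.

```python
from collections import deque


class SuccessRateMonitor:
    """Rolling success-rate tracker that flags when retraining may be needed."""

    def __init__(self, window: int = 200, min_success_rate: float = 0.95):
        # Only the most recent `window` outcomes are kept.
        self.outcomes = deque(maxlen=window)
        self.min_success_rate = min_success_rate

    def success_rate(self) -> float:
        """Fraction of successful tasks in the window (1.0 if no data yet)."""
        if not self.outcomes:
            return 1.0
        return sum(self.outcomes) / len(self.outcomes)

    def record(self, success: bool) -> bool:
        """Record one task outcome; return True if retraining should trigger."""
        self.outcomes.append(success)
        # Wait for a full window so a few early failures do not trigger.
        window_full = len(self.outcomes) == self.outcomes.maxlen
        return window_full and self.success_rate() < self.min_success_rate


if __name__ == "__main__":
    monitor = SuccessRateMonitor(window=4, min_success_rate=0.75)
    for outcome in [True, True, True, True, False, False]:
        print(outcome, monitor.record(outcome))
```

In a deployment, a `True` result would start whatever retraining workflow the team has defined; this sketch only shows the decision logic.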

Real-world application in industrial assembly

Consider a common industrial challenge involving contact-rich manipulation tasks like inserting gear components with tight tolerances, required in electronics manufacturing, automotive assembly, and precision engineering. These tasks demand sophisticated control strategies that respond to contact forces in real time. Universal Robots (UR) demonstrates this capability through their integration of Isaac libraries for contact-rich assembly. Their robotic arms insert pegs into holes with micron-level precision, using adaptive force feedback and control strategies.

Simulation phase: Engineers model the UR robot arm, workpiece geometry, and assembly fixtures in Isaac Sim, defining physics parameters including material properties, friction coefficients, and contact dynamics. Using reinforcement learning in Isaac Lab, the system trains across thousands of parallel scenarios with domain randomization, varying insertion angles, initial positions, friction parameters, and part tolerances. This develops initial policies for force-controlled insertion, teaching the robot to sense contact, adjust approach angles, and apply appropriate forces.

Deployment and refinement: The trained policy model is deployed via AWS IoT Greengrass on the robot controller. During production testing, force sensors, joint encoders, and position sensors stream real-time data to AWS, revealing sim-to-real gaps. For example, real friction exceeds simulated values, or actual part tolerances vary more than modeled.
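To illustrate what the streamed sensor data might look like, here is a minimal sketch that packages one force and joint reading as a JSON payload for an MQTT topic. The topic layout and field names are hypothetical; an actual deployment would define its own schema.

```python
import json
import time
from typing import List, Optional, Tuple


def build_telemetry_message(
    robot_id: str,
    force_n: List[float],
    joint_positions_rad: List[float],
    timestamp_ms: Optional[int] = None,
) -> Tuple[str, str]:
    """Return an (MQTT topic, JSON payload) pair for one sensor sample."""
    if timestamp_ms is None:
        timestamp_ms = int(time.time() * 1000)
    topic = f"robots/{robot_id}/telemetry"  # hypothetical topic layout
    payload = json.dumps(
        {
            "robot_id": robot_id,
            "timestamp_ms": timestamp_ms,
            "force_n": force_n,                          # force reading, newtons
            "joint_positions_rad": joint_positions_rad,  # joint encoder angles
        },
        sort_keys=True,
    )
    return topic, payload


if __name__ == "__main__":
    topic, payload = build_telemetry_message(
        "ur-arm-01", [0.1, -0.2, 5.0], [0.0] * 6
    )
    print(topic)
    print(payload)
```

Including a timestamp and robot identifier in every message makes it possible to partition and query the records once they land in the data lake.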

Amazon SageMaker AI processes this operational data, retraining the model to account for real-world physics. Engineers may discover that insertion failures correlate with specific force profiles, enabling targeted improvements. The refined model can then be redeployed to the edge, improving success rates. This iterative process continues as the robot encounters new variations. Monitoring systems track key performance indicators and trigger retraining when metrics drift outside acceptable ranges.

Figure 2: Robot Arm Gear Assembly

This dual-path architecture extends across diverse physical AI applications. Organizations can apply these principles when developing dexterous manipulation systems for pharmaceutical handling, mobile robots for dynamic warehouse navigation, and humanoid robots in logistics facilities.

Best practices for success

Start with robust simulation: Invest in defining a physics model, preferably based on real prototypes. Users achieve the best results when they develop proper reward functions for reinforcement learning and iteratively tune physics parameters within simulation loops, using prototypes to validate accuracy. Applying domain randomization before real-world deployment is another way to support robust training results. Multiple simulation iterations are far cheaper than physical testing.
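As one possible shape for a reward function in a contact-rich insertion task, the sketch below rewards insertion progress, penalizes contact force above a limit, and adds a bonus on completion. The terms, weights, and force limit are assumptions for illustration and would need tuning against a real system.

```python
def insertion_reward(
    depth_m: float,
    target_depth_m: float,
    contact_force_n: float,
    max_force_n: float = 20.0,          # assumed safe contact-force limit
    force_penalty_weight: float = 0.1,  # assumed penalty per newton of excess
    success_bonus: float = 10.0,        # assumed bonus for full insertion
) -> float:
    """Illustrative shaped reward for a force-controlled insertion step."""
    if target_depth_m <= 0:
        raise ValueError("target_depth_m must be positive")
    # Fraction of the target depth reached, clamped to [0, 1].
    progress = min(max(depth_m / target_depth_m, 0.0), 1.0)
    # Only force above the limit is penalized.
    excess_force = max(contact_force_n - max_force_n, 0.0)
    reward = progress - force_penalty_weight * excess_force
    if progress >= 1.0:
        reward += success_bonus
    return reward


if __name__ == "__main__":
    print(insertion_reward(0.005, 0.01, 5.0))   # halfway in, gentle contact
    print(insertion_reward(0.005, 0.01, 30.0))  # halfway in, excessive force
    print(insertion_reward(0.01, 0.01, 5.0))    # fully inserted
```

Penalizing only the force above a limit lets the policy make normal contact without being discouraged, while still steering it away from jamming the part.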

Deploy incrementally: Begin real-world testing in controlled environments before full production. Use initial data to validate simulation assumptions and identify critical gaps.

Instrument comprehensively: Deploy diverse sensors to capture multi-modal data and validate physics. Richer real-world feedback enables more effective model refinement and continuous monitoring with automated retraining triggers.

Maintain simulation-real parity: As real-world data reveals physics insights, update simulation models to improve future training iterations, creating a virtuous cycle where each domain informs the other.
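One simple way to feed real-world findings back into simulation is to widen a randomization range so it covers what was measured. The sketch below does this for a friction coefficient; the function name and the choice of mean plus or minus two standard deviations are assumptions, not a prescribed method.

```python
import statistics
from typing import Sequence, Tuple


def widen_friction_range(
    sim_range: Tuple[float, float],
    measured: Sequence[float],
    num_std: float = 2.0,  # assumed coverage: mean +/- 2 standard deviations
) -> Tuple[float, float]:
    """Widen a simulated friction range to cover real-world measurements."""
    if len(measured) < 2:
        raise ValueError("need at least two measurements to estimate spread")
    mean = statistics.fmean(measured)
    std = statistics.stdev(measured)  # sample standard deviation
    lo, hi = sim_range
    # Friction coefficients cannot be negative, so clamp the lower bound at 0.
    new_lo = min(lo, max(0.0, mean - num_std * std))
    new_hi = max(hi, mean + num_std * std)
    return new_lo, new_hi


if __name__ == "__main__":
    # Simulation assumed friction in [0.4, 0.6]; real parts measured higher.
    print(widen_friction_range((0.4, 0.6), [0.7, 0.8, 0.9]))
```

The range only ever grows, so scenarios that were already covered in simulation stay covered in the next training iteration.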

Practical physical AI at scale

Physical AI applications, spanning robotics and other autonomous systems, have moved beyond research environments into production use. This reference architecture provides a practical, scalable pathway for organizations to develop autonomous systems that solve real business problems across manufacturing, logistics, healthcare, and beyond.

By combining the speed and safety of simulation-based training with the fidelity of real-world learning, organizations can accelerate development cycles, reduce costs, and deploy systems that continuously improve through operational experience. The architecture’s flexibility, supporting both simulation-first and real-world-first approaches, accommodates diverse use cases and organizational readiness levels.

As physical AI adoption accelerates, successful organizations will be those that effectively bridge simulation and reality, using the strengths of each to build production-ready applications. With AWS’s scalable infrastructure and NVIDIA physics simulation platforms, that future is available today.

Ready to get started? Access the reference architecture at AWS Guidance for Physical AI for Robotics. For additional resources, visit the NVIDIA Isaac Sim documentation for simulation, testing, and synthetic data generation in physically based virtual environments, AWS IoT Greengrass documentation for edge deployment, Amazon SageMaker AI documentation for model development and continuous refinement, and AWS Batch documentation for GPU-accelerated compute.