Artificial Intelligence

Operationalizing Agentic AI Part 1: A Stakeholder’s Guide

Agentic AI isn’t a feature you turn on. It’s a shift in how work is defined, who does it, and how decisions get made.

Most enterprises learn this the hard way. They launch pilots that stall the moment they hit real processes, systems, and governance. The pattern repeats: vague use cases, prototypes that can’t survive messy data, autonomy outpacing controls, compliance blocking launch dates, datasets too weak for autonomous decisions. Underneath all of it, the same root problem—no one agreed on what success looks like.

The AWS Generative AI Innovation Center has helped 1,000+ customers move AI into production, delivering millions in documented productivity gains. Our cross-functional teams—scientists, strategists, and machine learning experts—work side-by-side with customers from ideation through deployment. Increasingly, that work involves agents.

In this post, we share guidance for leaders across the C-suite: CTOs, CISOs, CDOs, and Chief Data Science/AI officers, as well as business owners and compliance leads. Our core observation: when agentic AI works, it looks less like magic software and more like a well-run team—each agent with a clear job, a supervisor, a playbook, and a way to improve over time.

If you sit in an executive meeting and ask, “Are we investing enough in AI?”, the answer is almost always yes. If you then ask, “Which specific workflows are materially better today because of AI agents, and how do we know?”, the room gets quiet.

This is Part I of a two-part series. Here we establish the foundation: why the value gap is mostly an execution problem, and what makes work truly agent-shaped. Part II will speak directly to each C-suite persona, in the language of their responsibilities.

The shared problem as an enterprise

The value gap is mostly about how you work

If you sit in an executive meeting and ask, “Are we investing enough in AI?”, the answer is almost always yes. If you then ask, “Which specific workflows are materially better today because of AI agents, and how do we know?”, the room gets quiet.

What sits between those two answers isn’t a missing foundation model or a missing vendor. It’s a missing operating model. In organizations where agents create visible value, three things tend to be true:

- The work is defined in painful detail. People can describe, step by step, what arrives, what happens, and what “done” means. They can also describe what happens when things go wrong.

- Autonomy is bounded. Agents are given clear authority limits, explicit escalation rules, and surfaces where humans can see and override decisions.

- Improvement is a habit, not a project. There’s a regular cadence where teams look at how agents behaved last week, where they helped, where they caused friction, and what to change next.

Where those things are missing, the same symptoms appear: impressive proofs of concept that do not leave the lab, pilots that quietly die after a few months, and leaders who stop asking, “What can we do next?” and start asking, “Why are we spending so much on this?”

What makes work agent-shaped

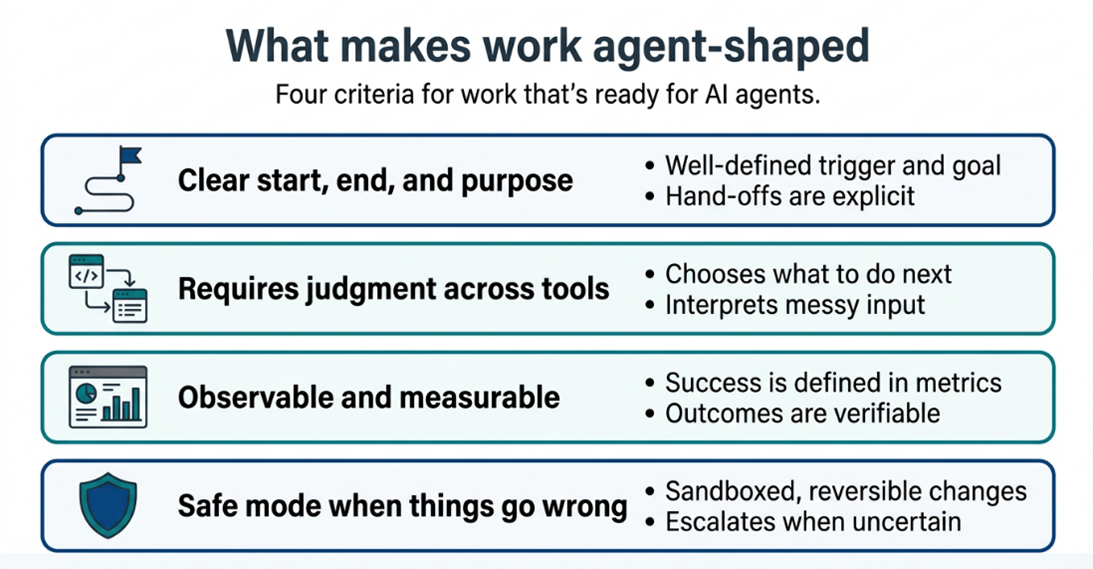

Most organizations start with the question, “Where can we use an agent?” A better starting point is, “Where is the work already structured like a job an agent could do?” In practice, that means four things.

First, the work has a clear start, end, and purpose. A claim arrives. An invoice appears. A support ticket is opened. The agent can recognize when it has enough information to begin, what goal it’s working toward, and when the task is complete or needs to be handed off. This is more than just a trigger and a finish line. The agent needs to understand the intent behind the work well enough to handle reasonable variations without being explicitly told what to do for each one. If your team can’t articulate what done well looks like for a given task, including how to handle exceptions and edge cases, the work isn’t yet ready for an agent.

Second, the work requires judgment across tools. The agent doesn’t follow a fixed script. It reasons about what information it needs, decides which systems to query, interprets what it finds, and determines the right action based on context. The difference from traditional automation is that the path isn’t hard-coded: the agent adapts its approach, handles variations, and knows when a situation falls outside its competence. But agents act through tools, and those tools must exist before the agent does. Your systems need well-defined, secure, and reliable interfaces that an agent can call to read data, write updates, trigger transactions, or send communications. If the process today is humans reasoning in email and spreadsheets, you have both process design and tooling work to do before you have a viable agent use case.

Third, success is observable and measurable. Someone who doesn’t work in the team can look at the output and say, “This is correct,” or “This needs fixing” without reading minds. That might mean checking whether a ticket was resolved on time, whether a form is complete and consistent, whether a transaction balances, or whether a customer got the response they needed. But observability goes beyond spot-checking outputs. You need to see how the agent arrived at its answer: what data it used, what tools it called, what options it considered, and why it chose one over another. If you can’t evaluate the reasoning, you can’t improve the agent, and you can’t defend its decisions when something goes wrong.

Start with work where actions are reversible or where the agent’s output is a recommendation that a human acts on. As trust, controls, and evaluation mature, you earn the right to move into higher-stakes work where the agent closes the loop on its own.

Fourth, the work has a safe mode when things go wrong. The best early agent candidates are tasks where mistakes are caught quickly, corrected cheaply, and don’t create irreversible harm. If an agent misclassifies a support ticket, it can be rerouted. If it drafts an incorrect response, a human can edit before it’s sent. But if an agent approves a payment, executes a trade, or sends a legally binding communication, the cost of being wrong is fundamentally different. Start with work where actions are reversible or where the agent’s output is a recommendation that a human acts on. As trust, controls, and evaluation mature, you earn the right to move into higher-stakes work where the agent closes the loop on its own.

When these four ingredients are present, you have something that can become a job for an agent. When they’re missing, the conversation drifts back into vague labels like assistant, copilot, or automation that mean different things to every person in the room.

Call to Action

Ready to Close the Execution Gap?

The patterns described in Part I aren’t theoretical. They show up in organizations of every size, across every industry. The good news: the gap between where you are and where you want to be is not a technology gap. It is an execution gap, and execution gaps are solvable.

Here are three things you can do this week:

- Name the work, not the wish. Pick one workflow in your organization that has a clear start, a clear end, and a measurable definition of “done.” That’s your first candidate for an agent.

- Ask the hard question in the room. In your next leadership meeting, don’t ask, “Are we investing enough in AI?” Ask, “Which specific workflows are materially better today because of AI agents, and how do we know?” The silence that follows is your roadmap.

- Start the job description. Before any technology decision, write down what the agent would do, what tools it would need, what success looks like, and what happens when it fails. If you can’t fill in that page, you’re not ready to build, and that’s valuable information.

Coming up in Part II: Guidance by Persona

Knowing that agentic AI is an execution problem is one thing. Knowing your role in solving it is another.

In Part II, we speak directly to the leaders who need to make this work in practice: the line-of-business owner who needs agents tied to KPIs, the CTO deciding between ten one-off agents or a platform for one hundred, the CISO who must treat agents like colleagues rather than code, the CDO who needs to make data boring in the best possible way, the Chief AI Officer for whom evaluation is the product, and the compliance leader who must design for audits before they happen.

Each persona. Each responsibility. Each concrete move.

Partner with the Generative AI Innovation Center

You don’t have to navigate this journey alone. Whether you are planning your first agentic pilot or scaling to an enterprise-wide capability, reach out to the Generative AI Innovation Center team to start a conversation grounded in your workflows, your data, and your business outcomes.