AWS Storage Blog

AWS re:Invent recap: Modernize your on-premises backup strategy with AWS

While re:Invent 2020-2021 is a departure in format from previous years, the purpose is still the same: to educate and inspire you to build, simplify, and modernize with AWS. This year I was happy to present in a re:Invent session on modernizing your on-premises backup strategy with AWS. In my session, I discussed how organizations of all sizes, in all industries, continue to see huge growth in their data, both on premises and in cloud-native applications. I also discussed how AWS can help organizations modernize on-premises backup strategies to store data backups more efficiently, safely, and at a lower cost.

In this post, I provide some of the key takeaways from my re:Invent session. If you missed it or haven’t gotten to check it out, remember that you can always view on-demand re:Invent sessions.

Challenges with on-premises backup storage

Customers who have accumulated tremendous amounts of data frequently reach out to AWS about their struggles with existing on-premises backup strategies. If you’ve ever had to purchase, provision, manage, and replace on-premises backup storage infrastructure, you know how much work it takes to keep up with backup data growth. Even getting the right amount of backup storage can be a challenge. If you underestimate or overestimate your backup storage needs, you may have to start moving backup data around, possibly leading to backup failures and missed SLAs. To gain higher levels of availability and durability for backup data, you must add more and more backup storage locally, and then remotely as a replication target. These additional appliances multiply the burden of planning, monitoring, managing, securing, and updating them, now in multiple locations. Multiple appliances also typically require additional management software that must be deployed as well, increasing both your fixed operational costs and your capital costs.

Here are some challenges of on-premises backup storage:

- Configuring, managing, and monitoring your backup storage consumption.

- Pre-paying, typically, for at least a portion of your backup storage, with no easy way to scale up or down if needs change.

- Settling for less than 11 9’s of data durability, and buying two or more appliances for higher availability and redundancy, with no SLAs.

- Managing daily maintenance tasks to keep the backup storage up to date and healthy.

- Repeating the process when you need to add a second backup storage appliance or tape library for secondary copies of your backups.

This has led to customers looking for a more modern way of procuring, paying for, and managing the storage for their backup data without necessarily changing their backup application.

As in my re:Invent session, this post highlights the steps involved in modernizing your on-premises backup strategy and how AWS can help along the way.

Storing your backup data

There are many choices when it comes to where to store your backup data. Today, backup storage can take the form of a variety of media including disk, tape, virtual tape, physical and virtual dedicated backup storage appliances. Organizations have stored backups using physical tape for many years, partly due to its ability to scale through purchasing more and more tapes. Customers have been moving away from tape due to the effort involved in managing and handling the physical tapes and their rotation offsite and back onsite. As a result, many on-premises backups now are sent to disk-based backup locations or to dedicated disk-based backup storage appliances.

However, while these disk-based appliances have removed the need to handle physical tape media, they typically give up the scalability of tape. Leading backup storage appliances scale to less than 2 PB of physical capacity, which must be purchased upfront. To scale further, they rely on data compression and deduplication to squeeze more data into that physical space. This works well for some data types. However, as more data is encrypted and compressed before it reaches the appliance, your ability to deduplicate it further, and so to achieve the storage efficiencies needed to justify the cost of these appliances, decreases. When space runs out on these backup appliances, customers have a decision to make: add more capacity to the existing appliance, add a second appliance, or relocate data through a tediously managed migration process. At AWS, we recognize that this on-premises approach to backup storage can be complex, costly, and rigid. When you want to modernize your backup strategy and the infrastructure that supports it, you need a range of options to choose from so that you can pick the best fit for your organization. Let’s explore some of those options.

Accelerate the modernization of your backups

Today, many organizations spend too many of their resources on backup storage that requires lengthy acquisition, setup, management, patching, and eventual replacement. This lack of elasticity in traditional backup storage appliances is leading customers to look for alternatives with lower or no fixed costs. Organizations also want alternatives that don’t require separate management software to acquire, install, configure, and patch just for a growing fleet of backup storage appliances. As the number of appliances grows, so does the work of managing and monitoring them.

AWS is different because cloud storage is elastic: you acquire, configure, and manage it on demand. As you transition to cloud storage for your backups, you don’t have to spend capital on backup storage appliances, or on the data centers to house and power them. Instead, you pay for storage as you consume it, as a variable expense. Fixed costs for backup storage appliances, by contrast, rarely decrease. This is another reason customers are choosing cloud storage for their backups: AWS has lowered prices 80 times since AWS launched in 2006 (as of Feb 6, 2020).

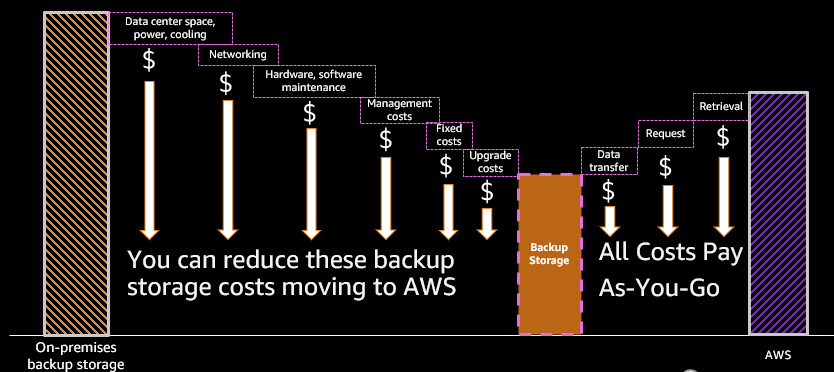

How much has your backup storage decreased in cost over the same period, even with deduplication? There are many cost advantages when you modernize your backup storage with AWS instead of relying on traditional backup storage appliances. This diagram illustrates the comparison of those costs:

Traditional backup storage appliances and physical tape libraries cannot match the flexibility and pay-as-you-go cost structure of AWS Storage. With large upfront fixed costs, plus ongoing costs for data center space, networking, and maintenance that rarely, if ever, decrease, traditional backup storage can be significantly more expensive.
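To make the fixed-versus-variable difference concrete, here is a minimal cost sketch in Python. It is a simplified model under stated assumptions: every price is a hypothetical placeholder you supply (not an AWS or vendor list price), the appliance is sized for its expected peak on day one, and the model ignores data center space, power, networking, request, retrieval, and data transfer charges.

```python
from typing import Sequence

# Simplified, hypothetical cost model. All prices are placeholders supplied by
# the caller; they are not AWS or vendor list prices.


def appliance_cost(peak_tb: float, upfront_per_tb: float,
                   yearly_support_rate: float, years: int) -> float:
    """Fixed model: capacity for the expected peak is bought on day one,
    plus a yearly support charge expressed as a fraction of the purchase."""
    upfront = peak_tb * upfront_per_tb
    return upfront + upfront * yearly_support_rate * years


def pay_as_you_go_cost(monthly_tb: Sequence[float], per_tb_month: float) -> float:
    """Variable model: each month is billed only for the TB actually stored."""
    return sum(tb * per_tb_month for tb in monthly_tb)


if __name__ == "__main__":
    # Hypothetical growth: backups grow linearly from 100 TB to 200 TB over 36 months.
    usage = [100 + 100 * month / 35 for month in range(36)]
    fixed = appliance_cost(peak_tb=200, upfront_per_tb=500.0,
                           yearly_support_rate=0.15, years=3)
    variable = pay_as_you_go_cost(usage, per_tb_month=10.0)
    print(f"appliance: {fixed:,.0f}  pay-as-you-go: {variable:,.0f}")
```

The point of the sketch is the shape of the two models rather than the totals: the appliance is paid for at peak capacity from day one, while the variable cost tracks what is actually stored each month. Plug in your own quotes to compare.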

With AWS Storage you never have to:

- Pay for fixed storage costs upfront, even a portion of it. You only pay for what you use.

- Worry about running out of space. AWS Storage can scale to whatever amount you need when you need it for your backups.

- Settle for less than 11 9’s of data durability or less than 99.5% data availability (with Amazon S3 and Amazon EFS), or buy multiple backup appliances to increase the availability of your backups. Compare that to your current backup storage.

- Manage daily or weekly maintenance tasks, including patching, upgrading, and deduplication garbage collection.

Use the right tool for the job

Customers looking to modernize their backup storage with AWS often ask where they should begin. Which AWS Storage service should they use? Should they use Amazon Simple Storage Service (Amazon S3) for object storage, or a file storage service like Amazon EFS? The answer does not have to be complicated: your backup application already knows which type of storage it needs.

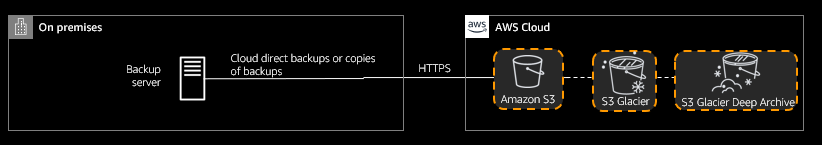

The most widely used AWS storage service for backups is Amazon S3. Amazon S3 is an object storage service that offers industry-leading scalability, data availability, security, and performance at low cost. AWS designed S3 for 99.999999999% (11 9’s) of durability for your backup data, and the service provides robust capabilities to manage access, cost, replication, versioning, and data protection. Amazon S3 Glacier and S3 Glacier Deep Archive are secure, durable, and low-cost Amazon S3 storage classes for even lower-cost data archiving and long-term backups that do not require a low Recovery Time Objective (RTO).
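If your backup application does not tier older backups itself, an S3 Lifecycle rule can move them into S3 Glacier and S3 Glacier Deep Archive on a schedule. The following is a minimal sketch, assuming Python with boto3; the prefix, day counts, and bucket name are hypothetical examples, not recommendations. It builds the `LifecycleConfiguration` structure accepted by boto3’s `put_bucket_lifecycle_configuration`, with the API call itself left in a comment so the sketch runs without AWS credentials.

```python
def backup_lifecycle_configuration(prefix: str, glacier_after_days: int,
                                   deep_archive_after_days: int,
                                   expire_after_days: int) -> dict:
    """Build an S3 Lifecycle configuration that tiers backups under `prefix`
    to S3 Glacier, then S3 Glacier Deep Archive, then deletes them."""
    if not 0 <= glacier_after_days < deep_archive_after_days < expire_after_days:
        raise ValueError("expected 0 <= glacier < deep archive < expiration (days)")
    return {
        "Rules": [
            {
                "ID": "tier-and-expire-backups",
                "Filter": {"Prefix": prefix},
                "Status": "Enabled",
                "Transitions": [
                    {"Days": glacier_after_days, "StorageClass": "GLACIER"},
                    {"Days": deep_archive_after_days, "StorageClass": "DEEP_ARCHIVE"},
                ],
                "Expiration": {"Days": expire_after_days},
            }
        ]
    }


# Hypothetical values: tier after 30 and 180 days, keep for about 7 years.
config = backup_lifecycle_configuration("backups/", 30, 180, 2555)

# To apply it (requires boto3 and AWS credentials; bucket name is hypothetical):
# import boto3
# boto3.client("s3").put_bucket_lifecycle_configuration(
#     Bucket="example-backup-bucket", LifecycleConfiguration=config)
```

Keep in mind that S3 Glacier and S3 Glacier Deep Archive have minimum storage duration charges (90 and 180 days, respectively), so the day counts should reflect how long your backups are really retained.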

In general, most modern backup applications can write directly to Amazon S3 and S3 Glacier: you create a backup storage destination that points to S3 instead of to an on-premises tape library or backup appliance. Additionally, most backup applications can use their own native deduplication, compression, and encryption to reduce the overall size of backups and the S3 storage needed to hold them.
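Backup applications handle this natively, but the flow is easy to see in a small sketch. The following Python example assumes boto3’s `upload_file` method on an S3 client and uses hypothetical file, bucket, and key names: it gzip-compresses a backup file locally, then uploads it with a chosen storage class and S3-managed server-side encryption (SSE-S3). Compressing on the client, before the data is encrypted, matters because encrypted data is effectively incompressible.

```python
import gzip
import shutil
from pathlib import Path


def compress_backup(src: Path) -> Path:
    """Gzip-compress a backup file next to the original and return the new path."""
    dst = src.with_name(src.name + ".gz")
    with src.open("rb") as fin, gzip.open(dst, "wb") as fout:
        shutil.copyfileobj(fin, fout)  # streams in chunks, so large files are fine
    return dst


def upload_backup(s3_client, path: Path, bucket: str, key: str,
                  storage_class: str = "STANDARD_IA") -> None:
    """Upload with the S3 client's managed transfer (multipart for large files),
    setting the storage class and SSE-S3 encryption on the stored object."""
    s3_client.upload_file(
        str(path), bucket, key,
        ExtraArgs={"StorageClass": storage_class, "ServerSideEncryption": "AES256"},
    )


# Example usage (requires boto3 and AWS credentials; names are hypothetical):
# import boto3
# archive = compress_backup(Path("nightly-full.bak"))
# upload_backup(boto3.client("s3"), archive, "example-backup-bucket",
#               "sql/nightly-full.bak.gz")
```

Passing the client in as a parameter keeps the upload step easy to test with a stand-in object and lets you reuse one configured client across many backup files.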

Consider the question: “How do I use Amazon S3 with on-premises backup applications that don’t natively work with an object store, but instead need file-based SMB or NFS access to write to?” There are several options for this.

The first is AWS Storage Gateway. Customers can use AWS Storage Gateway as a Virtual Tape Library (VTL) or as a file storage target for their backup infrastructure. Practically every enterprise backup and information management application available integrates directly with AWS Storage Gateway. This reduces the move to a configuration change: in your backup application, you point the backup destination at the Tape Gateway configuration of AWS Storage Gateway as a virtual tape target instead of at physical tape.

For file-based backups, you have the same choice: write directly to the AWS Cloud, or write to a local intermediary File Gateway that offers an SMB- or NFS-based backup location on premises. For example, you can back up your Oracle or SQL Server databases locally to File Gateway, which holds them on premises temporarily before archiving them into Amazon S3. Alternatively, you can write backups directly to NFS- or SMB-compatible file storage hosted in AWS, without an intermediary gateway device, much as described earlier in this post for writing directly to Amazon S3. Let’s explore those backup storage options next.

Amazon EFS

For backup and archival data that you want to write directly to a cloud-based NFS file system, changing your backup storage destination to Amazon EFS, our NFS-based file storage service, is just as easy. Most backup applications can write backups to NFS-compatible backup targets. Applications such as Oracle, MySQL, and SAP can use their native backup capabilities to write to Amazon EFS as an NFS-compatible backup location. EFS is built to scale on demand to petabytes without disrupting applications, growing and shrinking automatically as you add and remove files. This eliminates the need to provision and manage capacity to accommodate growth.
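Before a database’s native backup tool can write to Amazon EFS, the file system has to be mounted on the backup host. The following Python helper is a minimal sketch that builds the standard Linux NFS mount command using the `<file-system-id>.efs.<region>.amazonaws.com` DNS name and the NFS mount options AWS recommends for EFS; the file system ID, Region, and mount point are hypothetical placeholders. It only builds and prints the command, so you can review it before running it yourself.

```python
import shlex

# NFS client options that the Amazon EFS documentation recommends for the
# standard Linux NFS client (NFSv4.1, 1 MiB read/write sizes, hard mounts).
EFS_MOUNT_OPTIONS = ("nfsvers=4.1,rsize=1048576,wsize=1048576,"
                     "hard,timeo=600,retrans=2,noresvport")


def efs_mount_command(file_system_id: str, region: str, mount_point: str) -> list:
    """Return the argv for mounting the root of an EFS file system over NFS."""
    dns_name = f"{file_system_id}.efs.{region}.amazonaws.com"
    return ["sudo", "mount", "-t", "nfs4", "-o", EFS_MOUNT_OPTIONS,
            f"{dns_name}:/", mount_point]


if __name__ == "__main__":
    # Hypothetical IDs. After mounting, point the application's native backup
    # destination (for example, an Oracle RMAN disk channel) at the mount point.
    cmd = efs_mount_command("fs-12345678", "us-east-1", "/mnt/efs-backups")
    print(shlex.join(cmd))
```

If the `amazon-efs-utils` package is installed on the host, `mount -t efs` is an alternative that also supports encryption in transit.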

Amazon FSx for Windows File Server

You can use Amazon FSx for Windows File Server (Amazon FSx) for backup storage for Windows applications that have native backup capabilities that look for SMB-compatible backup storage to write their backups to. Applications such as Microsoft SQL Server and its native backup capabilities can use Amazon FSx directly for backups.

Amazon FSx provides SMB-compatible storage with optional data deduplication to further reduce backup storage consumption and costs. Backups often contain redundant data, which increases storage costs. You can reduce those costs by turning on data deduplication for your file system. Data deduplication reduces or eliminates redundant data by storing duplicated portions of the dataset only once. Once enabled, deduplication continually and automatically scans and optimizes your file system in the background. Just as with on-premises backup storage appliances that deduplicate, the savings you achieve with Amazon FSx data deduplication depend on how much duplication exists across your backups; typical savings average 50–60 percent.
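To see why savings depend on how much duplication exists across backups, here is a simplified sketch of chunk-level deduplication in Python. It uses fixed-size chunks and SHA-256 fingerprints purely for illustration; Amazon FSx relies on the Windows Server Data Deduplication feature, which uses its own, more sophisticated chunking, so this is not how FSx is implemented. Random bytes stand in for already encrypted or compressed data, which is why deduplicating appliances see diminishing returns on such data.

```python
import hashlib
import os
from typing import Dict, List, Tuple

CHUNK_SIZE = 4096  # illustrative fixed chunk size in bytes


def deduplicate(data: bytes) -> Tuple[Dict[str, bytes], List[str]]:
    """Split data into fixed-size chunks and keep each unique chunk once.

    Returns (store, recipe): store maps SHA-256 digest -> chunk bytes, and the
    recipe is the ordered list of digests needed to rebuild the original data.
    """
    store: Dict[str, bytes] = {}
    recipe: List[str] = []
    for offset in range(0, len(data), CHUNK_SIZE):
        chunk = data[offset:offset + CHUNK_SIZE]
        digest = hashlib.sha256(chunk).hexdigest()
        store.setdefault(digest, chunk)  # a repeated chunk is stored only once
        recipe.append(digest)
    return store, recipe


def rebuild(store: Dict[str, bytes], recipe: List[str]) -> bytes:
    """Reassemble the original bytes from the unique chunks and the recipe."""
    return b"".join(store[digest] for digest in recipe)


def savings(data: bytes, store: Dict[str, bytes]) -> float:
    """Fraction of the logical size that deduplication avoided storing."""
    stored = sum(len(chunk) for chunk in store.values())
    return 1 - stored / len(data) if data else 0.0


if __name__ == "__main__":
    week1 = os.urandom(1024 * 1024)  # 1 MiB "full backup" of random, encrypted-like data
    week2 = os.urandom(CHUNK_SIZE) + week1[CHUNK_SIZE:]  # next full backup, first chunk changed
    both = week1 + week2
    store, recipe = deduplicate(both)
    assert rebuild(store, recipe) == both
    print(f"two similar full backups: {savings(both, store):.1%} saved")
    single_store, _ = deduplicate(week1)
    print(f"one backup of random data: {savings(week1, single_store):.1%} saved")
```

The first line reports roughly half the logical size saved, because the second full backup adds only one new chunk; the second line reports nothing saved, because random data has no repeated chunks to remove.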

Benefits of modernizing your backup storage with AWS

In the cloud, you just provision what you need – if it turns out you need less, you give it back to us and stop paying for it. That variable expense is lower than what virtually every company can do on its own with traditional backup storage appliances. The scale of AWS benefits customers in the form of lower prices, amongst many other ways. Amazon S3 addresses the common challenges of managing traditional backup storage infrastructure, while still giving choice on which backup product organizations use to protect their data. Moving to the cloud enables you to reduce the significant costs in securing, storing, and managing that data in on-premises arrays. For example, a large financial services customer eliminated 30,000 tapes and reduced monthly disaster recovery (DR) expenses by more than 50%.

The combination of S3-integrated backup software with Amazon S3 storage offers the following advantages over traditional on-premises backup storage and purpose-built backup appliances:

- Backup storage scalability: Amazon S3 offers industry-leading scalability into multiple exabytes without performance degradation.

- Backup storage elasticity: Scale backup storage resources up and down to meet fluctuating retention demands, without upfront investments or procurement, deployment, and management cycles of a traditional physical backup appliance.

- Backup storage security: Amazon S3 maintains compliance programs, such as PCI-DSS, HIPAA/HITECH, FedRAMP, EU Data Protection Directive, and FISMA, to help you meet regulatory requirements. AWS also supports numerous auditing capabilities to monitor access requests to your backups in S3, and you can use S3 Object Lock to prevent unauthorized deletion of backups in S3.

- Backup storage availability: AWS designed the S3 Standard storage class for 99.99% availability, the S3 Standard-IA storage class for 99.9% availability, the S3 One Zone-IA storage class for 99.5% availability, and the S3 Glacier and S3 Glacier Deep Archive storage classes for 99.99% availability with an SLA of 99.9%.

- Backup storage durability: AWS designed Amazon S3 Standard for 99.999999999% (11 9’s) of data durability. S3 automatically creates and stores copies of all S3 objects across multiple Availability Zones within a selected Region for backup storage.
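It can help to translate these design percentages into concrete terms. The following Python arithmetic is a rough sketch: it converts an availability percentage into the maximum unavailable time per year (using a 365-day year) and treats 11 9’s of durability as a per-object annual loss probability of 10^-11, which simplifies how the design target is stated. The object count is a hypothetical example.

```python
HOURS_PER_YEAR = 365 * 24  # 8,760 hours; ignores leap years


def max_downtime_hours_per_year(availability_pct: float) -> float:
    """Upper bound on unavailable hours per year at a given availability."""
    return (1 - availability_pct / 100) * HOURS_PER_YEAR


def expected_objects_lost_per_year(num_objects: int, durability_nines: int) -> float:
    """Expected annual object loss, treating durability as a per-object probability."""
    return num_objects * 10.0 ** -durability_nines


if __name__ == "__main__":
    for name, pct in [("S3 Standard", 99.99), ("S3 Standard-IA", 99.9),
                      ("S3 One Zone-IA", 99.5)]:
        print(f"{name}: up to {max_downtime_hours_per_year(pct):.2f} hours/year")
    lost = expected_objects_lost_per_year(10_000_000, 11)
    print(f"10 million objects: about one object lost every {1 / lost:,.0f} years")
```

At 99.99% availability, the bound works out to about 53 minutes per year; for 10 million objects at 11 9’s, the last line works out to one object every 10,000 years on average, which matches how AWS describes the S3 durability design.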

Key takeaways and conclusion

As you already know, backup storage will continue to grow and will need to be maintained in ever larger quantities. In this post, and in my re:Invent session, I explored why and how organizations should begin their transition to AWS for their backup storage. My goal is to help organizations modernize their backup strategy and backup storage infrastructure.

I hope this post has made you eager to explore modernizing your on-premises backup strategies and storage with AWS. To begin your journey, here are some helpful links and key takeaways:

- Check to see if your backup application can write backups directly to Amazon S3 and Amazon S3 Glacier, or if it would require an AWS Storage Gateway as an intermediary. You can find a list of AWS Storage Competency Partners here if you need help from backup software vendors.

- Backups are an increasing target for ransomware. Amazon S3 Object Lock can help protect your backup files: you can use it to prevent unauthorized deletion or modification of your backups. Many backup vendors support it natively today.

- For Windows-based backup applications, such as Microsoft SQL Server, you can begin writing your backups directly to Amazon FSx for Windows File Server with optional deduplication.

- You can learn more about our backup solutions here.

- For applications such as Oracle, MySQL, and SAP where an NFS-compatible backup storage destination is necessary, Amazon EFS provides a simple, scalable, fully managed elastic NFS file system for those backups.

- For protecting AWS services natively in-cloud, a re:Invent session on AWS Backup is here.

- A TCO analysis calculator is available here to help you compare storage costs on AWS with on-premises storage.
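For the S3 Object Lock takeaway, the following is a minimal sketch, assuming Python with boto3, of the default-retention configuration accepted by `put_object_lock_configuration`. The retention period and bucket name are hypothetical. Object Lock has to be enabled on the bucket (for example, with `ObjectLockEnabledForBucket=True` when the bucket is created, which also turns on versioning). In governance mode, users with the `s3:BypassGovernanceRetention` permission can still shorten or remove retention; in compliance mode, no user, including the root user, can do so until the retention period ends.

```python
VALID_MODES = {"GOVERNANCE", "COMPLIANCE"}


def object_lock_configuration(mode: str, retention_days: int) -> dict:
    """Build a bucket-level default retention rule for S3 Object Lock.

    New object versions written to the bucket get this retention unless the
    writer specifies its own retention settings.
    """
    if mode not in VALID_MODES:
        raise ValueError(f"mode must be one of {sorted(VALID_MODES)}")
    if retention_days < 1:
        raise ValueError("retention_days must be a positive number of days")
    return {
        "ObjectLockEnabled": "Enabled",
        "Rule": {"DefaultRetention": {"Mode": mode, "Days": retention_days}},
    }


# Hypothetical: keep each backup immutable for 35 days.
lock_config = object_lock_configuration("COMPLIANCE", 35)

# To apply it (requires boto3, AWS credentials, and a bucket created with
# Object Lock enabled; the bucket name is hypothetical):
# import boto3
# boto3.client("s3").put_object_lock_configuration(
#     Bucket="example-backup-bucket", ObjectLockConfiguration=lock_config)
```

Compliance mode offers the stronger protection against ransomware, but be sure of the retention period before you rely on it, because objects written under it cannot have their retention shortened.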

If you have any comments or questions about my re:Invent session or this blog post, please don’t hesitate to reply in the comments section. Thanks again for reading!