Artificial Intelligence


Governing the ML lifecycle at scale, Part 4: Scaling MLOps with security and governance controls

This post provides detailed steps for setting up the key components of a multi-account ML platform. This includes configuring the ML Shared Services Account, which manages the central templates, model registry, and deployment pipelines; sharing the ML Admin and SageMaker Projects Portfolios from the central Service Catalog; and setting up the individual ML Development Accounts where data scientists can build and train models.

How Untold Studios empowers artists with an AI assistant built on Amazon Bedrock

Untold Studios is a tech-driven, leading creative studio specializing in high-end visual effects and animation. This post details how we used Amazon Bedrock to create an AI assistant (Untold Assistant), providing artists with a straightforward way to access our internal resources through a natural language interface integrated directly into their existing Slack workflow.

Protect your DeepSeek model deployments with Amazon Bedrock Guardrails

This blog post provides a comprehensive guide to implementing robust safety protections for DeepSeek-R1 and other open weight models using Amazon Bedrock Guardrails. By following this guide, you’ll learn how to use the advanced capabilities of DeepSeek models while maintaining strong security controls and promoting ethical AI practices.

Fine-tune and host SDXL models cost-effectively with AWS Inferentia2

As technology continues to evolve, newer models are emerging, offering higher quality, increased flexibility, and faster image generation capabilities. One such model is Stable Diffusion XL (SDXL), released by Stability AI, which advances text-to-image generative AI. In this post, we demonstrate how to efficiently fine-tune the SDXL model using SageMaker Studio. We then show how to prepare the fine-tuned model to run on AWS Inferentia2-powered Amazon EC2 Inf2 instances, unlocking superior price performance for your inference workloads.

How Aetion is using generative AI and Amazon Bedrock to translate scientific intent to results

Aetion is a leading provider of decision-grade real-world evidence software to biopharma, payors, and regulatory agencies. In this post, we review how Aetion is using Amazon Bedrock to help streamline the analytical process toward producing decision-grade real-world evidence and enable users without data science expertise to interact with complex real-world datasets.

Trellix lowers cost, increases speed, and adds delivery flexibility with cost-effective and performant Amazon Nova Micro and Amazon Nova Lite models

This post discusses the adoption and evaluation of Amazon Nova foundation models by Trellix, a leading company delivering cybersecurity’s broadest AI-powered platform to over 53,000 customers worldwide.

OfferUp improved local results by 54% and relevance recall by 27% with multimodal search on Amazon Bedrock and Amazon OpenSearch Service

In this post, we demonstrate how OfferUp transformed its foundational search architecture using Amazon Titan Multimodal Embeddings and OpenSearch Service, significantly increasing user engagement, improving search quality and offering users the ability to search with both text and images. OfferUp selected Amazon Titan Multimodal Embeddings and Amazon OpenSearch Service for their fully managed capabilities, enabling the development of a robust multimodal search solution with high accuracy and a faster time to market for search and recommendation use cases.

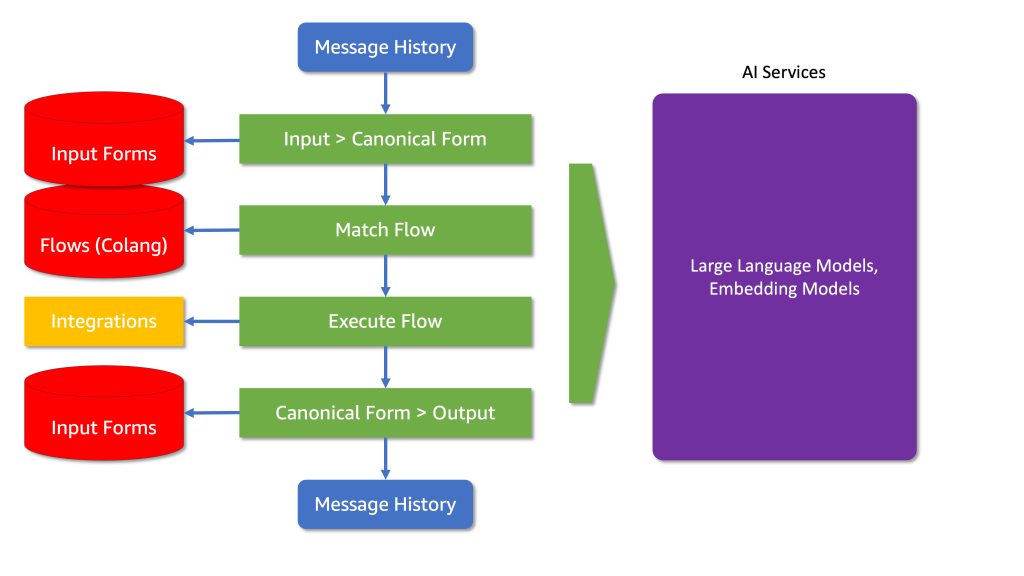

Enhancing LLM Capabilities with NeMo Guardrails on Amazon SageMaker JumpStart

Integrating NeMo Guardrails with large language models (LLMs) is a powerful step forward in deploying AI in customer-facing applications. The example of AnyCompany Pet Supplies illustrates how these technologies can enhance customer interactions while handling refusals and guiding the conversation toward the intended outcomes. This journey toward ethical AI deployment is crucial for building sustainable, trust-based relationships with customers and shaping a future where technology aligns with human values.

Build a multi-interface AI assistant using Amazon Q and Slack with Amazon CloudFront clickable references from an Amazon S3 bucket

Customers consistently report that AI assistants are most useful when users can interact with them within the productivity tools they already use daily, which avoids switching applications and context. Web applications like Amazon Q Business and Slack have become essential environments for modern AI assistant deployment. This post explores how diverse interfaces enhance user interaction, improve accessibility, and cater to varying preferences.

Orchestrate seamless business systems integrations using Amazon Bedrock Agents

This post showcases how generative AI can be used to apply logic, reason, and orchestrate integrations, using a fictitious business process as the example. It demonstrates strategies and techniques for orchestrating Amazon Bedrock agents and action groups to integrate generative AI with existing business systems, enabling efficient data access.