Artificial Intelligence

Category: Amazon Elastic Kubernetes Service

How Reco transforms security alerts using Amazon Bedrock

In this blog post, we show you how Reco implemented Amazon Bedrock to help transform security alerts and achieve significant improvements in incident response times.

Introducing Disaggregated Inference on AWS powered by llm-d

In this blog post, we introduce the concepts behind next-generation inference capabilities, including disaggregated serving, intelligent request scheduling, and expert parallelism. We discuss their benefits and walk through how you can implement them on Amazon SageMaker HyperPod EKS to achieve significant improvements in inference performance, resource utilization, and operational efficiency.

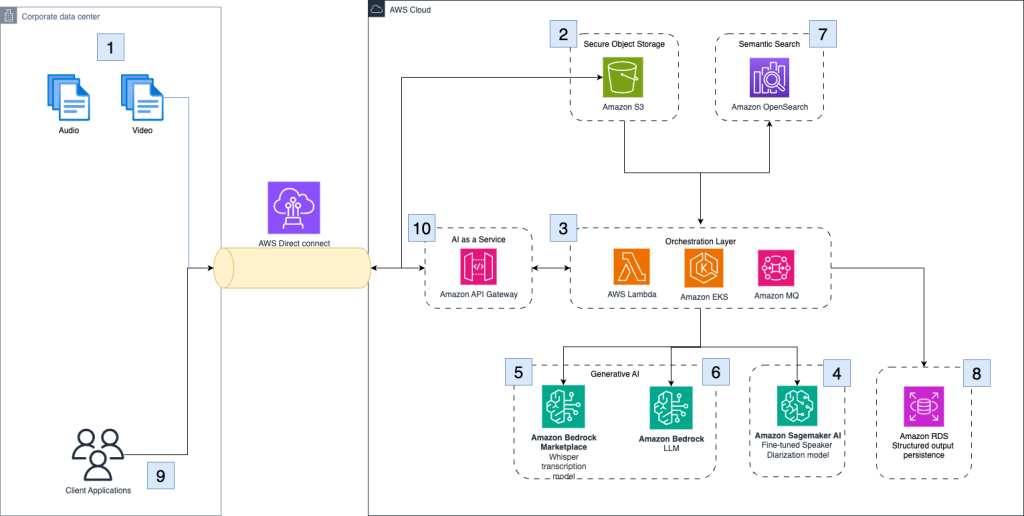

Fine-tuning NVIDIA Nemotron Speech ASR on Amazon EC2 for domain adaptation

In this post, we explore how to fine-tune a leaderboard-topping NVIDIA Nemotron Speech Automatic Speech Recognition (ASR) model, Parakeet TDT 0.6B V2, using synthetic speech data to improve transcription results for specialized applications. We walk through an end-to-end workflow that combines AWS infrastructure with popular open-source frameworks.

Build AI workflows on Amazon EKS with Union.ai and Flyte

In this post, we explain how you can use the Flyte Python SDK to orchestrate and scale AI/ML workflows. We explore how the Union.ai 2.0 system enables deployment of Flyte on Amazon Elastic Kubernetes Service (Amazon EKS), integrating seamlessly with AWS services like Amazon Simple Storage Service (Amazon S3), Amazon Aurora, AWS Identity and Access Management (IAM), and Amazon CloudWatch. We explore the solution through an AI workflow example, using the new Amazon S3 Vectors service.

Manage Amazon SageMaker HyperPod clusters using the HyperPod CLI and SDK

In this post, we demonstrate how to use the CLI and the SDK to create and manage SageMaker HyperPod clusters in your AWS account. We walk through a practical example and dive deeper into the user workflow and parameter choices.

How Clario automates clinical research analysis using generative AI on AWS

In this post, we demonstrate how Clario has used Amazon Bedrock and other AWS services to build an AI-powered solution that automates and improves the analysis of clinical outcome assessment (COA) interviews.

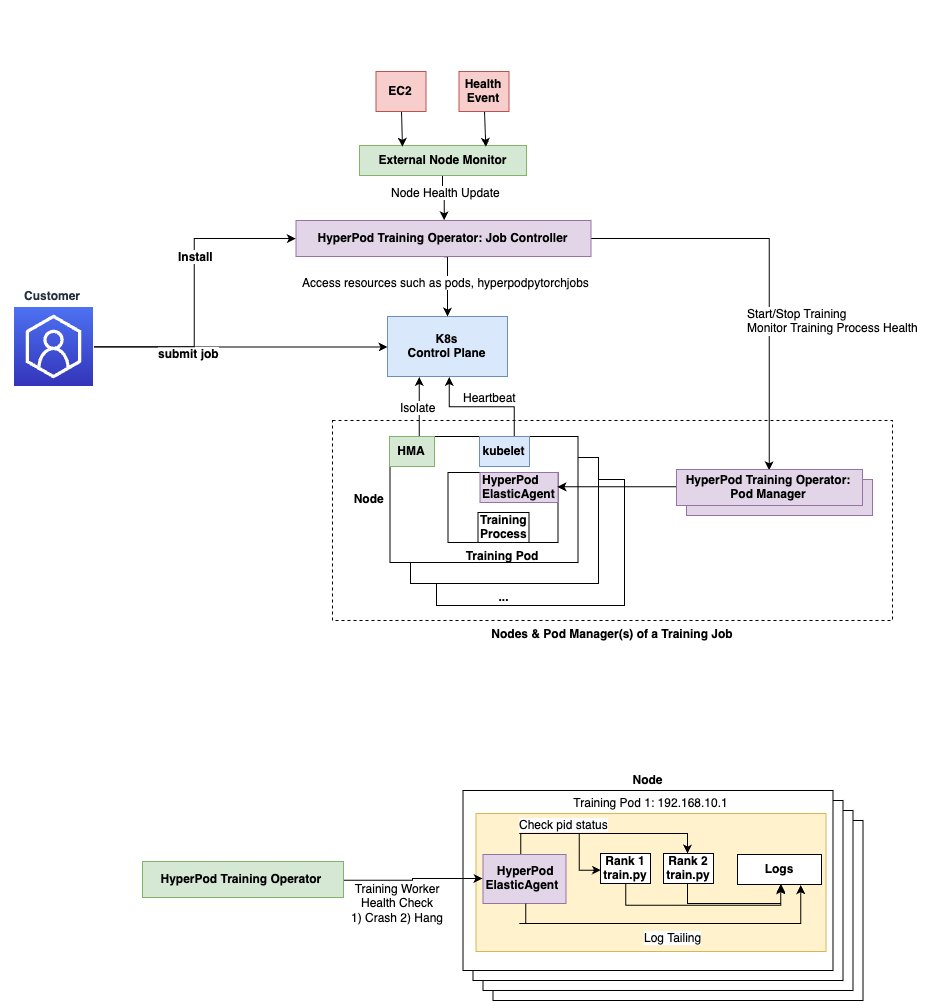

Accelerate large-scale AI training with Amazon SageMaker HyperPod training operator

In this post, we demonstrate how to deploy and manage machine learning training workloads using the Amazon SageMaker HyperPod training operator, which enhances training resilience for Kubernetes workloads through pinpoint recovery and customizable monitoring capabilities. The Amazon SageMaker HyperPod training operator helps accelerate generative AI model development by efficiently managing distributed training across large GPU clusters, offering benefits like centralized training process monitoring, granular process recovery, and hanging job detection that can reduce recovery times from tens of minutes to seconds.

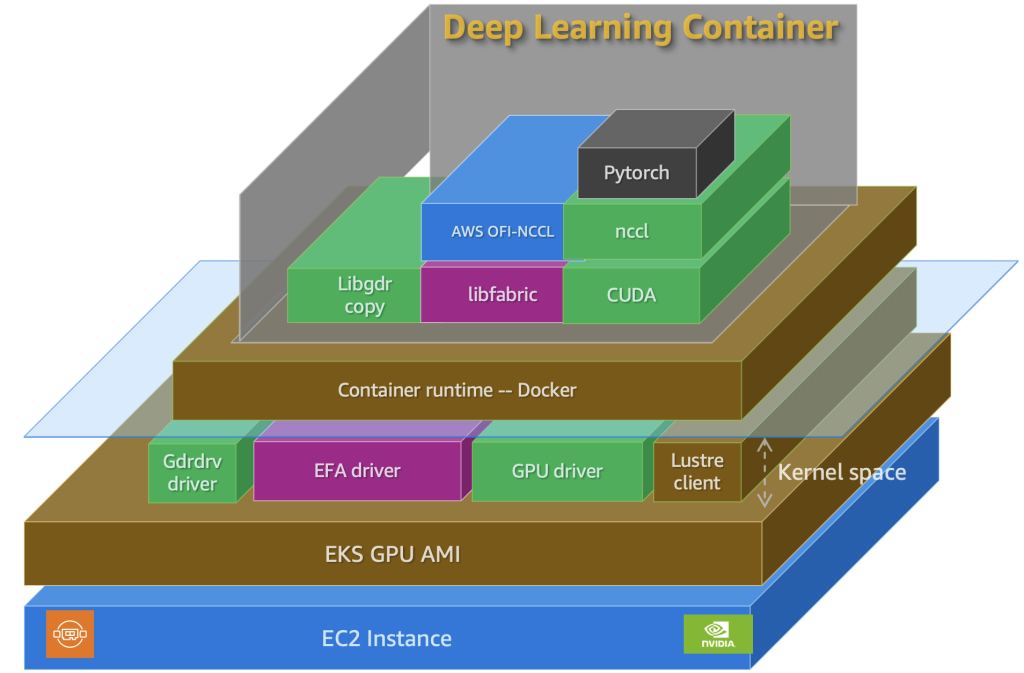

Configure and verify a distributed training cluster with AWS Deep Learning Containers on Amazon EKS

Misconfiguration issues in distributed training on Amazon EKS can be prevented by following a systematic approach to launching the required components and verifying their configuration. This post walks through the steps to set up and verify an EKS cluster for training large models using AWS Deep Learning Containers (DLCs).

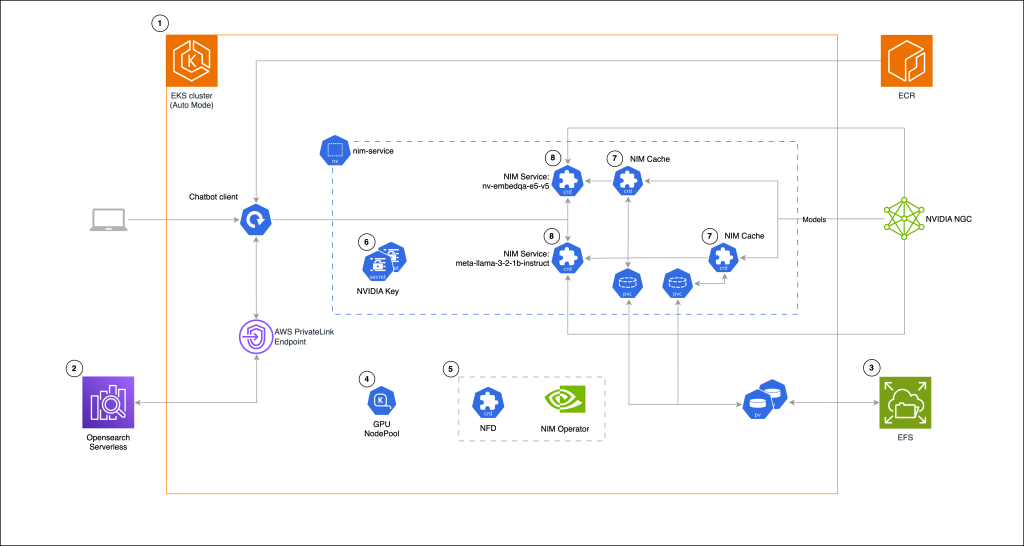

Building a RAG chat-based assistant on Amazon EKS Auto Mode and NVIDIA NIMs

In this post, we demonstrate the implementation of a practical RAG chat-based assistant using a comprehensive stack of modern technologies. The solution uses NVIDIA NIMs for both LLM inference and text embedding services, with the NIM Operator handling their deployment and management. The architecture incorporates Amazon OpenSearch Serverless to store and query high-dimensional vector embeddings for similarity search.

Containerize legacy Spring Boot application using Amazon Q Developer CLI and MCP server

In this post, you’ll learn how to use the Amazon Q Developer command line interface (CLI) with Model Context Protocol (MCP) server integration to modernize a legacy Java Spring Boot application running on premises, and then migrate it to Amazon Web Services (AWS) by deploying it on Amazon Elastic Kubernetes Service (Amazon EKS).