AWS Partner Network (APN) Blog

Bring Your Own Public IP (BYOIP) Addresses to VMware Cloud on AWS

By Rene van den Bedem, Sr. Specialist Solutions Architect – AWS


This post describes how a customer can migrate legacy applications with Bring Your Own Public IPv4 Addresses (BYOIP) into VMware Cloud on AWS.

VMware Cloud on AWS is an integrated hybrid cloud offering jointly developed by Amazon Web Services (AWS) and VMware.

VMware Cloud on AWS does not currently support bringing your own publicly routable IPv4 address ranges from your on-premises network to VMware Cloud on AWS. However, AWS allows you to bring part or all of your publicly routable IPv4 or IPv6 address range from your on-premises network to your AWS account.

You continue to own the address range, but AWS advertises it on the internet for you. After you bring the address range to AWS, it appears in your AWS account as an address pool.

In this post, I will describe how to support a multi-region, business-critical legacy monolithic application with customer-owned static public IPv4 addresses running on VMware Cloud on AWS. You can do this whilst using the AWS Cloud to deliver the BYOIP function with Amazon Virtual Private Cloud (VPC), Network Load Balancer (NLB), Elastic IP address (EIP), Amazon Route 53, and VMware Transit Connect (vTGW).

Multi-Site Three-Tier Application Topology

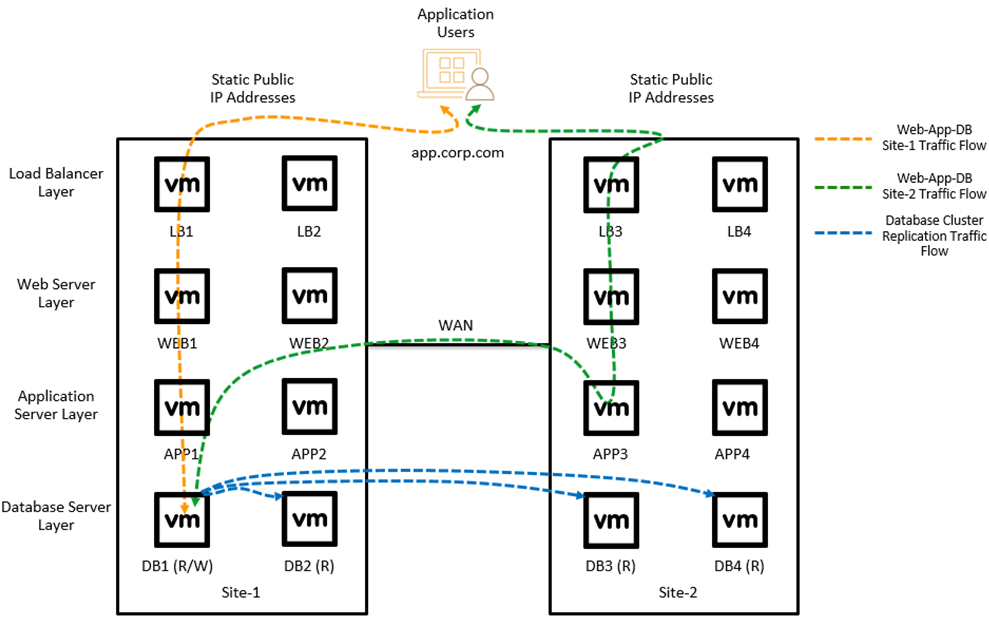

The diagram below describes a multi-site legacy three-tier business-critical application architecture.

Figure 1 – Multi-site legacy business-critical application topology.

The application is hosted in two data centers that are connected by a high-speed Wide Area Network (WAN) link. Application users access the service via the app.corp.com uniform resource locator (URL), which is backed by a Global Server Load Balancer (GSLB) in the load balancer layer. The GSLB resolves the hostname in the URL to a static public IP address, and traffic sent to that address is forwarded to the private IP address of the selected web server via Network Address Translation (NAT).

The web server layer connects to the application server layer, which accesses the database server layer. The application server layer also uses the WAN link to remotely access the read/write node of the database cluster.

The database server layer is a multi-site relational database management system (RDBMS) cluster, where the active read/write node replicates database changes to the other read-only nodes in the database cluster via the Local Area Network (LAN) and WAN links.

VMware Transit Connect

VMware Transit Connect provides high bandwidth, low latency, and resilient connectivity to software-defined data centers (SDDCs) within an SDDC group. VMware Transit Connect uses AWS Transit Gateway (TGW) for this service.

An SDDC group is a logical entity that leverages vTGW through automated provisioning and controls to interconnect SDDCs, Amazon VPCs, and a customer-managed TGW. It also establishes hybrid connectivity to on-premises data centers using an AWS Direct Connect Gateway (DXGW).

In addition, VMware Transit Connect helps customers simplify management at scale through automatic route propagations of SDDC networks to the route tables in each SDDC and the vTGW. SDDCs automatically learn routes advertised by other SDDCs within the same SDDC group, and networks advertised from other VPCs, TGWs, and on-premises networks via the vTGW and DXGW. Customers can configure the specific routes they wish to share with VMware Transit Connect.

Furthermore, with the recent M15 release from VMware, customers can integrate up to 20 SDDCs from up to three AWS Regions into the same SDDC group. Multi-region VMware Transit Connect provides simplified routing and connectivity and is the foundation of the BYOIP use case. The next section describes this architecture.

BYOIP VPC Architecture

This reference architecture has been developed for multiple AWS customers that want to migrate hundreds of legacy applications into VMware Cloud on AWS, where a static public IPv4 address is a constraint of the service. These customers cannot use the public IPv4 addresses that VMware Cloud on AWS assigns from Amazon’s pool.

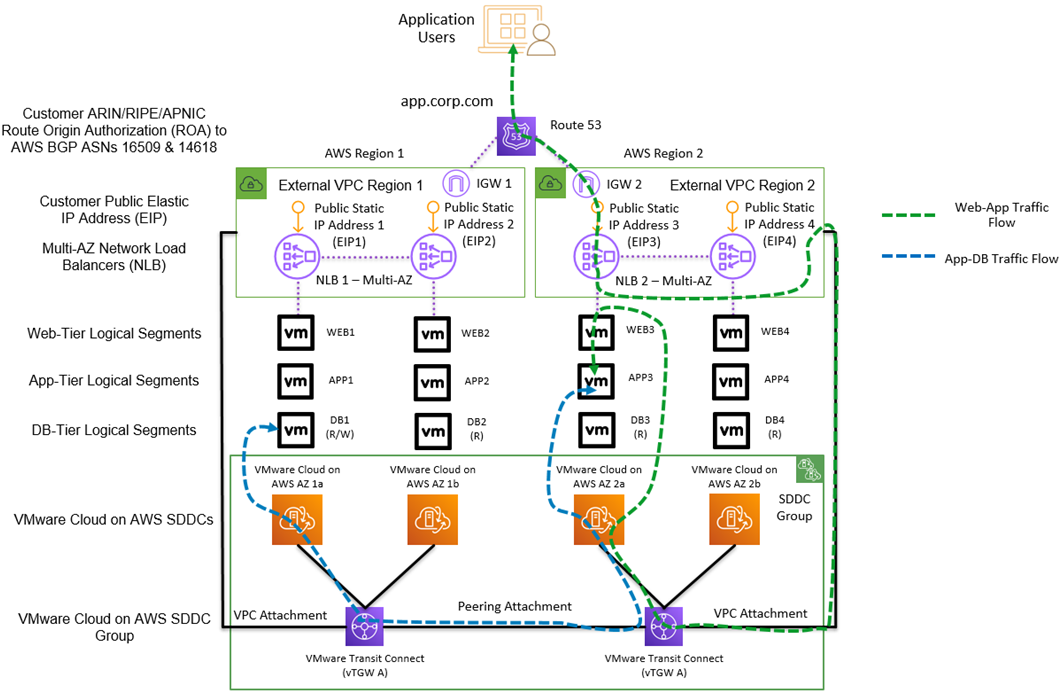

The diagram below describes a multi-site legacy three-tier business-critical application architecture running in VMware Cloud on AWS with multi-region VMware Transit Connect and BYOIP VPCs.

Figure 2 – BYOIP with VMware Cloud on AWS.

Amazon Route 53, EIP, and NLB are used to create a multi-region load balancing solution for the legacy business-critical virtual machines (VMs) running in VMware Cloud on AWS. The customer route origin authorization (ROA) is used to give AWS permission from the relevant regional internet registry to advertise the customer-owned IPv4 networks from AWS.

Route 53 provides DNS resolution and multi-region traffic control with a latency or geoproximity traffic policy.

ROAs are used to allow AWS to advertise the customer-owned IPv4 networks from the AWS BGP ASNs 16509 and 14618. The customer BYOIP networks become available in the customer’s AWS account as “BYOIP” Public IPv4 Address Pools for Elastic IP Address assignment.

The EIPs from the “BYOIP” Public IPv4 Address Pools are assigned to VPC resources such as Network Load Balancers, including target groups with the IP addresses of the web server VMs running in VMware Cloud on AWS.

The SDDC group uses vTGW to provide high-bandwidth, low-latency connectivity between:

- SDDCs in an SDDC group.

- SDDCs and one or more VPCs.

- SDDCs and one or more native TGWs (intra- and inter-region).

- SDDCs and on-premises via DXGW.

- SDDCs in other regions (inter-region).

Amazon VPC uses VPC attachments to connect to the vTGW to establish communication with networks connected to the vTGW.

The vTGW peering attachment enables cross-region communication between SDDC compute and management networks connected to vTGW A and vTGW B.

Setup

In the following example, we’ll deploy BYOIP into AWS and integrate with VMware Cloud on AWS using VMware Transit Connect.

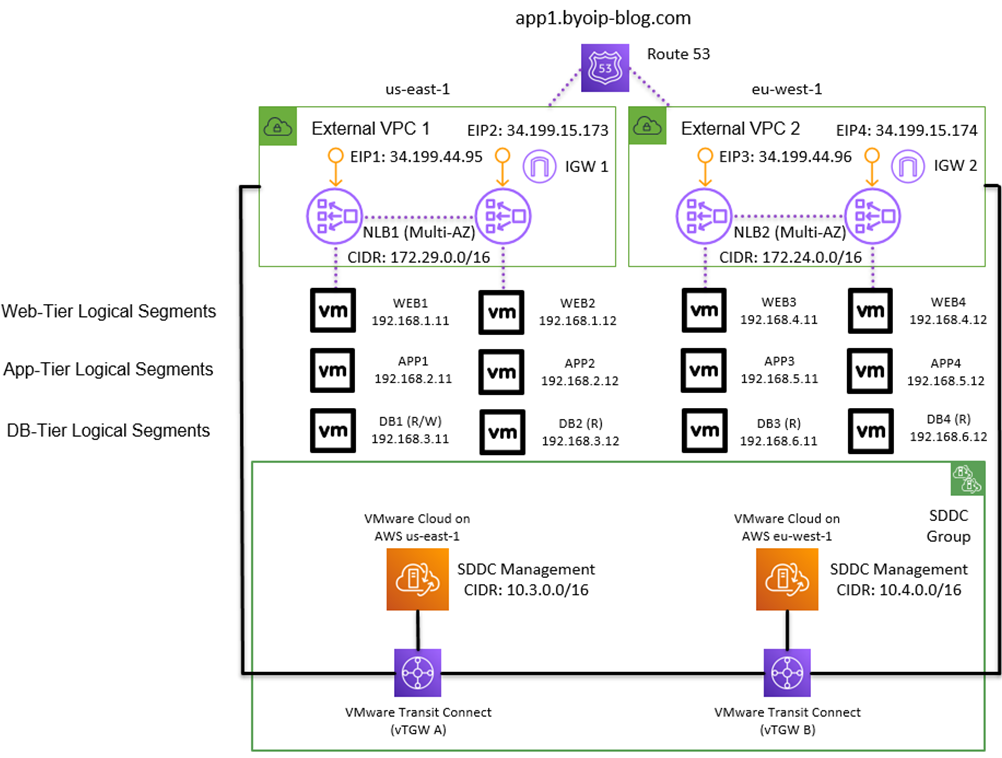

Figure 3 – Lab configuration.

Before we get started, I have provisioned and prepared the following items as prerequisites to this lab:

- Two SDDCs deployed, one in each AWS Region with M15 release or higher.

- AWS account with one VPC configured per AWS Region that will be connected as an “external” VPC to the SDDC group. Each VPC has a public subnet configured.

- A pair of web, application, and database VMs running in each SDDC.

- Customer-owned IPv4 subnets imported into the designated “external” VPC in each AWS Region.

- Amazon Route 53 public hosted zone is configured.

- Each SDDC has north-south customer gateway network security policies configured to allow web, application, and database tier traffic.

- Each VPC has security group and network access control list (NACL) security policies configured to allow internet, NLB and web tier traffic.

I will walk through the configuration of the following steps:

- VMware Transit Connect connected with Amazon VPCs

- BYOIP with Amazon NLB

- Amazon Route 53 with Amazon NLB

Part 1: VMware Transit Connect and Amazon VPC

From the VMware Cloud on AWS console, we’ll begin by creating an SDDC group to include the existing SDDCs as members. This triggers the automatic deployment of the vTGWs (one per AWS Region) that connects to the SDDCs via high-speed VPC attachments.

Once the VMware Transit Connect and SDDC connectivity status are showing “CONNECTED,” we can associate the AWS account containing the external VPCs to the SDDC group. The external VPC account status will be listed as “ASSOCIATING.”

Figure 4 – Associate AWS account to the SDDC group.

In the AWS Management Console, we’ll see the vTGW resource being shared under the Resource Access Manager (RAM). Accept the share for each AWS Region and the external VPC account status will change to “ASSOCIATED.”

Figure 5 – Accept the vTGW resource share in both AWS Regions.
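The share acceptance step can also be scripted. The following is a minimal sketch, assuming the response shape returned by the AWS RAM `get_resource_share_invitations` API; the function name `pending_invitation_arns` and the sample ARNs are hypothetical and exist only for illustration.

```python
def pending_invitation_arns(response):
    """Return the ARNs of resource share invitations that still await acceptance."""
    return [
        invitation["resourceShareInvitationArn"]
        for invitation in response.get("resourceShareInvitations", [])
        if invitation.get("status") == "PENDING"
    ]


# Hypothetical response, shaped like the output of
# boto3.client("ram").get_resource_share_invitations().
sample_response = {
    "resourceShareInvitations": [
        {
            "resourceShareInvitationArn": "arn:aws:ram:us-east-1:111122223333:resource-share-invitation/example-1",
            "status": "PENDING",
        },
        {
            "resourceShareInvitationArn": "arn:aws:ram:us-east-1:111122223333:resource-share-invitation/example-2",
            "status": "ACCEPTED",
        },
    ]
}

for arn in pending_invitation_arns(sample_response):
    print("would accept:", arn)
```

With boto3 installed and credentials configured, each returned ARN could be passed to `accept_resource_share_invitation(resourceShareInvitationArn=arn)` on a RAM client created for the relevant AWS Region.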

In the AWS console, create a Transit Gateway attachment to connect the vTGW to the external VPC in each AWS Region.

Figure 6 – Create a Transit Gateway attachment for the external VPCs to the vTGWs.
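For readers who prefer to script this step, here is a minimal sketch that builds the arguments for the EC2 `create_transit_gateway_vpc_attachment` call, one per AWS Region. The Region names and all resource IDs are placeholder assumptions, not values from this lab.

```python
def vpc_attachment_request(transit_gateway_id, vpc_id, subnet_ids):
    """Build keyword arguments for EC2 create_transit_gateway_vpc_attachment."""
    if not subnet_ids:
        raise ValueError("at least one subnet is required for the attachment")
    return {
        "TransitGatewayId": transit_gateway_id,
        "VpcId": vpc_id,
        "SubnetIds": list(subnet_ids),
    }


# Placeholder Regions and IDs (assumptions for illustration only).
attachment_requests = {
    "us-east-1": vpc_attachment_request("tgw-0aaa", "vpc-0aaa", ["subnet-0a1", "subnet-0a2"]),
    "eu-west-1": vpc_attachment_request("tgw-0bbb", "vpc-0bbb", ["subnet-0b1", "subnet-0b2"]),
}

for region, kwargs in attachment_requests.items():
    print(region, kwargs)

# With boto3 installed and credentials configured, each request could be sent with:
#   boto3.client("ec2", region_name=region).create_transit_gateway_vpc_attachment(**kwargs)
```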

Back in the SDDC group console, accept the newly created TGW attachment. The TGW attachment status for each external VPC will change from “PENDING ACCEPTANCE” to “AVAILABLE.”

Figure 7 – Accept the external VPC attachment for each AWS Region.

We can now add a static route for each TGW attachment specifying the CIDR range of each respective external VPC. The static route will be automatically propagated to both member SDDCs, and this can be verified in the “Routes Learned” section under Networking and Security > Transit Connect.

Figure 8 – Add the external VPC CIDR ranges as static routes.

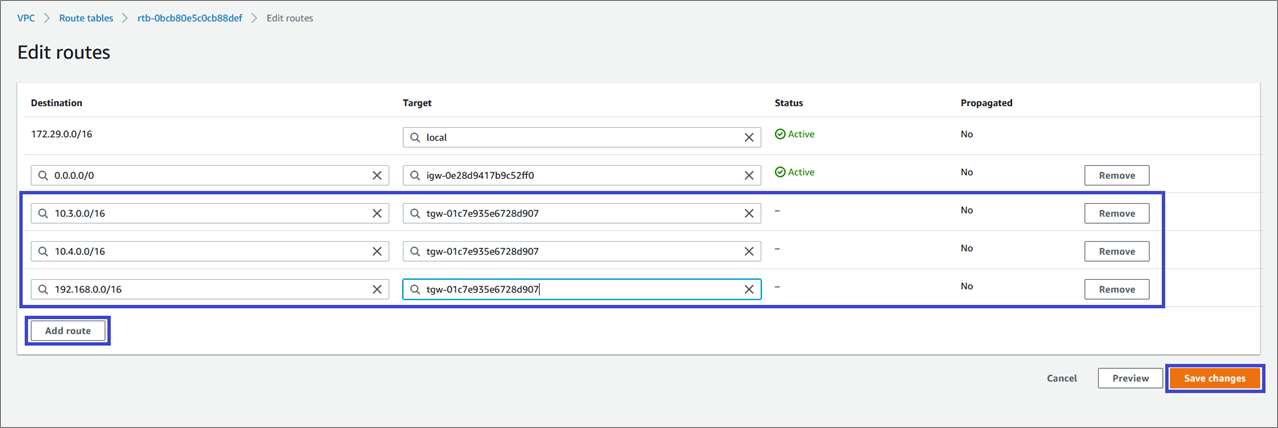

Next, we’ll configure static routes for each of the external VPCs. This ensures all internet traffic terminating on the NLBs can be forwarded to the web servers running in the SDDCs. We’ll add the SDDC management and compute CIDR ranges with the vTGW as the target in each external VPC route table.

Figure 9 – Add the SDDC CIDR ranges as static routes for each external VPC.
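The VPC route table entries can be sketched as follows. This example builds one EC2 `create_route` request per SDDC CIDR range with the vTGW as the target, and rejects a default route, since this design does not send RFC 1918 traffic via a default route. The CIDR ranges and resource IDs are hypothetical.

```python
import ipaddress


def sddc_route_requests(route_table_id, transit_gateway_id, sddc_cidrs):
    """Build one EC2 create_route request per SDDC CIDR, targeting the vTGW."""
    requests = []
    for cidr in sddc_cidrs:
        # ip_network raises ValueError for malformed input or set host bits.
        network = ipaddress.ip_network(cidr)
        if network.prefixlen == 0:
            raise ValueError("a default route towards the vTGW is not expected here")
        requests.append(
            {
                "RouteTableId": route_table_id,
                "DestinationCidrBlock": str(network),
                "TransitGatewayId": transit_gateway_id,
            }
        )
    return requests


# Hypothetical SDDC management and compute ranges.
routes = sddc_route_requests("rtb-0aaa", "tgw-0aaa", ["10.2.0.0/16", "192.168.10.0/24"])
for route in routes:
    print(route)

# With boto3: boto3.client("ec2", region_name=...).create_route(**route)
```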

Part 2: BYOIP with Network Load Balancers

In the AWS console, assign the static customer-owned IPv4 addresses to EIPs from the Amazon Elastic Compute Cloud (Amazon EC2) console. Select the “Customer owned Pool of IPv4 addresses” to consume the IPv4 addresses that were migrated from the on-premises network. Perform this task for both AWS Regions.

Figure 10 – Allocate two static public IPv4 addresses as EIPs for each AWS Region.
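The same allocation can be expressed through the EC2 `allocate_address` API, which accepts a `PublicIpv4Pool` and an optional specific `Address`. The sketch below only builds the request arguments and checks that each requested address falls inside the pool range. The pool ID, the range (taken from the documentation block 203.0.113.0/24), and the addresses are assumptions for illustration.

```python
import ipaddress


def eip_allocation_requests(pool_id, pool_cidr, addresses):
    """Build EC2 allocate_address requests for specific addresses in a BYOIP pool."""
    pool = ipaddress.ip_network(pool_cidr)
    requests = []
    for address in addresses:
        if ipaddress.ip_address(address) not in pool:
            raise ValueError(f"{address} is not part of {pool_cidr}")
        requests.append(
            {"Domain": "vpc", "PublicIpv4Pool": pool_id, "Address": address}
        )
    return requests


# Hypothetical pool ID and addresses: two EIPs for one AWS Region.
allocations = eip_allocation_requests(
    "ipv4pool-ec2-0123456789abcdef0",
    "203.0.113.0/24",
    ["203.0.113.10", "203.0.113.11"],
)
for allocation in allocations:
    print(allocation)

# With boto3: boto3.client("ec2", region_name=...).allocate_address(**allocation)
```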

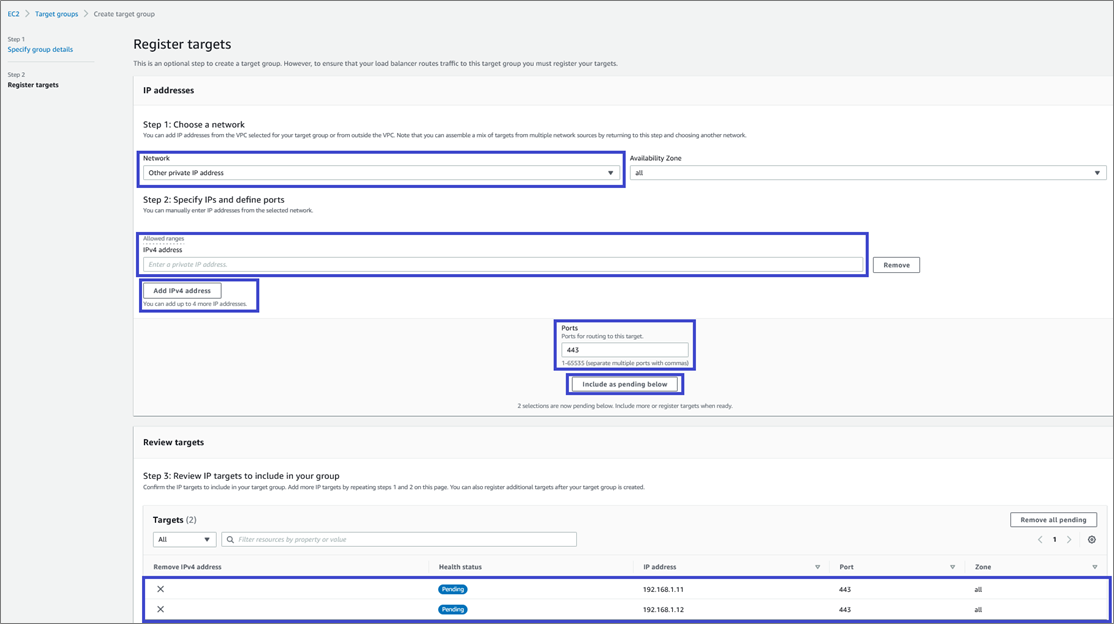

We then create the Load Balancer target groups from the Amazon EC2 console. Use the “IP Addresses” target type and select the external VPC.

Figure 11 – Create the Load Balancer target group for each AWS Region.

Choose the “Other private IP address” network option and specify the VMware Cloud on AWS web server IP addresses as targets. Perform this task for both AWS Regions.

Figure 12 – Add the web server IP addresses to each Load Balancer target group.
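As a rough equivalent of the console steps, the sketch below builds the arguments for the Elastic Load Balancing `create_target_group` and `register_targets` calls. Targets outside the VPC CIDR range, such as the SDDC web servers, are registered with `AvailabilityZone` set to `all`, which the API requires for IP targets outside the VPC. The TCP port 80 listener, the CIDR ranges, and all names and ARNs are assumptions, not values from the lab.

```python
import ipaddress


def target_group_request(name, vpc_id, port=80):
    """Build arguments for elbv2 create_target_group with IP address targets."""
    return {
        "Name": name,
        "Protocol": "TCP",
        "Port": port,
        "VpcId": vpc_id,
        "TargetType": "ip",
    }


def register_targets_request(target_group_arn, vpc_cidr, target_ips, port=80):
    """Build arguments for elbv2 register_targets."""
    vpc = ipaddress.ip_network(vpc_cidr)
    targets = []
    for ip in target_ips:
        target = {"Id": ip, "Port": port}
        if ipaddress.ip_address(ip) not in vpc:
            # IP targets outside the VPC must use the literal value "all".
            target["AvailabilityZone"] = "all"
        targets.append(target)
    return {"TargetGroupArn": target_group_arn, "Targets": targets}


# Hypothetical values: external VPC 172.16.0.0/16, web servers in the SDDC.
group = target_group_request("web-sddc-a", "vpc-0aaa")
registration = register_targets_request(
    "arn:aws:elasticloadbalancing:example:targetgroup/web-sddc-a",
    "172.16.0.0/16",
    ["192.168.10.11", "192.168.10.12"],
)
print(group)
print(registration)
```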

Next, create the Network Load Balancer from the Amazon EC2 console. Use the “Network Load Balancer” type with the “Internet-facing” scheme and “IPv4” address type.

Choose the external VPC and map to two AWS Availability Zones (AZs), and then select “Use an Elastic IP address” as the IPv4 address. Select the BYOIP IP address from the IP address drop-down menu for each AZ.

Finally, configure the listener to forward traffic to the target group created earlier. Perform this task for both AWS Regions.

Figure 13 – Configure the NLB for each AWS Region.
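The NLB settings described above map onto the Elastic Load Balancing `create_load_balancer` and `create_listener` calls. The following is a minimal sketch that builds those arguments, pairing each subnet (one per AZ) with the allocation ID of a BYOIP EIP. The TCP port 80 listener and all IDs and ARNs are placeholder assumptions.

```python
def nlb_request(name, eip_allocation_by_subnet):
    """Build arguments for elbv2 create_load_balancer (internet-facing NLB)."""
    return {
        "Name": name,
        "Type": "network",
        "Scheme": "internet-facing",
        "IpAddressType": "ipv4",
        # One mapping per Availability Zone: subnet plus the BYOIP EIP allocation.
        "SubnetMappings": [
            {"SubnetId": subnet_id, "AllocationId": allocation_id}
            for subnet_id, allocation_id in eip_allocation_by_subnet.items()
        ],
    }


def listener_request(load_balancer_arn, target_group_arn, port=80):
    """Build arguments for elbv2 create_listener that forwards to the target group."""
    return {
        "LoadBalancerArn": load_balancer_arn,
        "Protocol": "TCP",
        "Port": port,
        "DefaultActions": [{"Type": "forward", "TargetGroupArn": target_group_arn}],
    }


# Hypothetical subnet and EIP allocation IDs for two Availability Zones.
nlb = nlb_request(
    "byoip-nlb-a",
    {"subnet-0a1": "eipalloc-0a1", "subnet-0a2": "eipalloc-0a2"},
)
listener = listener_request("arn:example:loadbalancer/byoip-nlb-a", "arn:example:targetgroup/web-sddc-a")
print(nlb)
print(listener)
```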

Part 3: Amazon Route 53

Now, we’ll add two “A” records to the Amazon Route 53 public hosted zone with “Alias” enabled. Route traffic to “Alias to Network Load Balancer” and select the NLB name created earlier. Select the appropriate routing policy; one “A” record is required per AWS Region.

Figure 14 – Add “A” records to the Amazon Route 53 public hosted zone.
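The two alias records can be sketched as a Route 53 change batch. This example assumes a latency routing policy (the post also mentions geoproximity as an option) and uses placeholder Regions, NLB DNS names, and hosted zone IDs. In practice the alias `HostedZoneId` is the canonical hosted zone ID reported for each NLB, not the ID of your own public hosted zone.

```python
def latency_alias_change(record_name, region, nlb_dns_name, nlb_hosted_zone_id):
    """Build one UPSERT change for a latency-routed alias A record."""
    return {
        "Action": "UPSERT",
        "ResourceRecordSet": {
            "Name": record_name,
            "Type": "A",
            "SetIdentifier": f"nlb-{region}",
            "Region": region,
            "AliasTarget": {
                "HostedZoneId": nlb_hosted_zone_id,
                "DNSName": nlb_dns_name,
                "EvaluateTargetHealth": True,
            },
        },
    }


def alias_change_batch(record_name, nlbs_by_region):
    """Build a ChangeBatch with one alias record per AWS Region."""
    return {
        "Changes": [
            latency_alias_change(record_name, region, dns_name, zone_id)
            for region, (dns_name, zone_id) in nlbs_by_region.items()
        ]
    }


# Placeholder NLB DNS names and hosted zone IDs (assumptions for illustration).
batch = alias_change_batch(
    "app.corp.com.",
    {
        "us-east-1": ("byoip-nlb-a.example.elb.us-east-1.amazonaws.com.", "ZEXAMPLEA"),
        "eu-west-1": ("byoip-nlb-b.example.elb.eu-west-1.amazonaws.com.", "ZEXAMPLEB"),
    },
)
print(batch)

# With boto3:
#   boto3.client("route53").change_resource_record_sets(
#       HostedZoneId="<public hosted zone ID>", ChangeBatch=batch)
```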

We can now enter the application service URL in a web browser. Amazon Route 53 resolves the name to the NLB in one of the two AWS Regions, and the NLB forwards the traffic to a web server running in VMware Cloud on AWS.

The Network Load Balancer performs the NAT function from public IPv4 to RFC 1918 private IPv4. The web server sends traffic to the application server and from there to the database cluster.

Additional Considerations

Design Considerations

- AWS supports a maximum of five BYOIP subnets per AWS Region.

- AWS supports a minimum BYOIP subnet size of /24 (for example, /25 is not supported, /23 is supported).

- One VMware Transit Connect can connect a maximum of three AWS Regions.

- One VMware Transit Connect can support a maximum of 64 external VPCs.

- One VMware Transit Connect can support a maximum of 20 SDDCs.
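The limits listed above can be checked against a planned design before deployment. The sketch below encodes them as constants and reports any violations; the function name, input shape, Region labels, and example ranges (from the documentation address blocks) are assumptions, and the limits themselves should be confirmed against current AWS and VMware documentation.

```python
import ipaddress

MAX_BYOIP_RANGES_PER_REGION = 5
MOST_SPECIFIC_IPV4_PREFIX = 24
MAX_REGIONS = 3
MAX_EXTERNAL_VPCS = 64
MAX_SDDCS = 20


def check_design(byoip_ranges_by_region, sddc_count, external_vpc_count):
    """Return a list of human-readable problems; an empty list means no violations."""
    problems = []
    if len(byoip_ranges_by_region) > MAX_REGIONS:
        problems.append(f"{len(byoip_ranges_by_region)} Regions exceeds the limit of {MAX_REGIONS}")
    if sddc_count > MAX_SDDCS:
        problems.append(f"{sddc_count} SDDCs exceeds the limit of {MAX_SDDCS}")
    if external_vpc_count > MAX_EXTERNAL_VPCS:
        problems.append(f"{external_vpc_count} external VPCs exceeds the limit of {MAX_EXTERNAL_VPCS}")
    for region, ranges in byoip_ranges_by_region.items():
        if len(ranges) > MAX_BYOIP_RANGES_PER_REGION:
            problems.append(f"{region} has more than {MAX_BYOIP_RANGES_PER_REGION} BYOIP ranges")
        for cidr in ranges:
            network = ipaddress.ip_network(cidr)
            if network.version == 4 and network.prefixlen > MOST_SPECIFIC_IPV4_PREFIX:
                problems.append(f"{cidr} in {region} is smaller than /{MOST_SPECIFIC_IPV4_PREFIX}")
    return problems


# Hypothetical plan: one valid /24 and one /25 that is too small.
plan = {"region-a": ["203.0.113.0/24"], "region-b": ["198.51.100.0/25"]}
for problem in check_design(plan, sddc_count=2, external_vpc_count=2):
    print(problem)
```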

Asymmetric Traffic Flows

One of the risks of using VMware Cloud on AWS with multiple routing domains and multiple default routes (DXGW, SDDC, vTGW, VPC) is asymmetric traffic flows.

This reference architecture follows the best practice of symmetric traffic flows, with the NLB terminating each incoming TCP connection from the internet and creating a new TCP connection to each web server. None of the RFC 1918 private IP addresses use a default route.

Migration Planning

VMware HCX (included in the VMware Cloud on AWS service) can be used to extend a Layer 2 network from on-premises to VMware Cloud on AWS while the customer-owned IPv4 subnet is still hosted by the customer on-premises routers. This allows the application to be migrated to VMware Cloud on AWS while the network cutover planning is in progress.

Summary

In this post, we took a closer look at Bring Your Own IPv4 Address (BYOIP) to VMware Cloud on AWS using VMware Transit Connect.

We also went through an example of running a multi-region legacy business-critical application in VMware Cloud on AWS using static customer-owned public IPv4 addresses in a dual AWS Region configuration.
