AWS Architecture Blog

Architecting for agentic AI development on AWS

If you’re architecting cloud systems for AI development on AWS, you’ve likely discovered that traditional architectures create friction for AI agents. Many cloud teams are experimenting with AI coding assistants but quickly discover a gap between what these tools promise and what their architectures allow. When an AI agent generates code, it often takes minutes—or hours—before you can validate whether that change actually works. Slow deployment cycles, tightly coupled services, and opaque code bases turn every iteration into a high-friction exercise. As a result, AI agents struggle to operate autonomously, and developers are forced back into manual validation loops.

This article is written for cloud architects who want to remove that friction. It focuses on agentic development, a model where an AI agent does more than suggest snippets—it writes, tests, deploys, and refines code through rapid feedback cycles. To make that possible, both your system architecture and your code base architecture must be designed to support fast validation, safe iteration, and clear intent.

In this post, we present design patterns, for both system architecture and code base structure, that enable AI agents to iterate rapidly on AWS. We first examine the architectural problems that limit agentic development today. We then walk through system architecture patterns that support rapid experimentation, followed by code base patterns that help AI agents understand, modify, and validate your applications with confidence.

Why traditional architectures hinder agentic AI

Most cloud architectures were designed for human-driven development. They assume long-lived environments, manual testing, and infrequent deployments. In an agentic workflow, those assumptions break down.

AI agents must validate changes continuously. When every test requires provisioning cloud resources, waiting for pipelines, or debugging deployment-only failures, feedback loops become too slow. Tight coupling between business logic and cloud services further complicates local testing, while inconsistent project structures make it difficult for an agent to understand where changes belong.

Without architectural support, agentic AI produces more risk than value. This friction is not merely inconvenient; it fundamentally limits how effective AI agents can be. The solution is not better prompts but an architecture that treats fast feedback and clear boundaries as first-class concerns. The following sections show how to redesign your architecture to help unlock the potential of agentic AI.

System architecture for fast agentic feedback loops

Agentic development depends on feedback speed. The faster an agent can observe the impact of a change, the more effectively it can refine its output. System architecture plays a decisive role here.

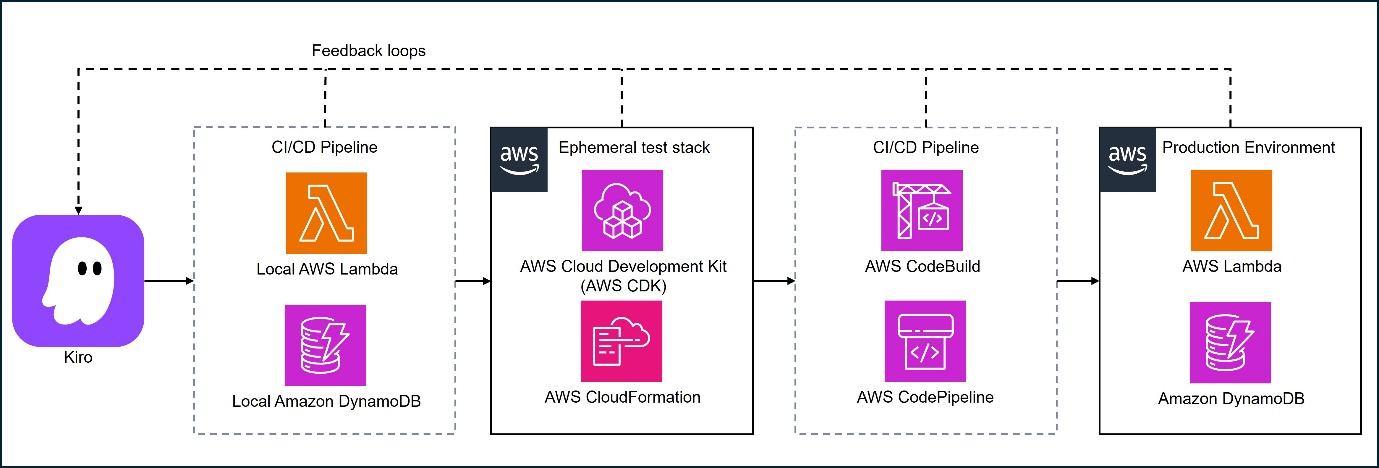

Figure 1: High-level architecture enabling agentic development: local test loops, ephemeral test stack, and continuous integration and continuous delivery (CI/CD) pipeline triggered by AI

Local emulation as the default feedback path

Whenever possible, your architecture should allow AI agents to test changes locally before touching cloud resources. AWS provides several tools that make this practical.

For example, serverless applications built with AWS Lambda and Amazon API Gateway can be emulated locally using the AWS Serverless Application Model (AWS SAM). With the sam local start-api command, an AI agent can invoke Lambda functions through a locally emulated API Gateway, observe responses immediately, and iterate in seconds rather than minutes.
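As a minimal sketch of what an agent might iterate on, the handler below accepts an event in the API Gateway proxy integration format and returns a JSON response. The function name `handler`, the `name` query parameter, and the greeting payload are illustrative assumptions, not part of any particular application.

```python
import json


def handler(event, context):
    """Return a greeting for an API Gateway proxy event (illustrative only)."""
    # queryStringParameters is None when the request has no query string.
    params = event.get("queryStringParameters") or {}
    name = params.get("name", "world")
    return {
        "statusCode": 200,
        "headers": {"Content-Type": "application/json"},
        "body": json.dumps({"message": f"Hello, {name}!"}),
    }


if __name__ == "__main__":
    # Calling the function directly is an even faster check than the emulator.
    print(handler({"queryStringParameters": {"name": "agent"}}, None))
```

Once a function like this is wired to an API event in a SAM template, sam local start-api serves it over HTTP on a local port (3000 by default), so the agent can exercise the same code path through the emulated API Gateway.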

Containers offer similar benefits for services that run on Amazon Elastic Container Service (Amazon ECS) or AWS Fargate. By building and running the same container images locally, an agent can validate application behavior before deploying to the cloud. For data persistence, Amazon DynamoDB Local allows the agent to test create, read, update, and delete (CRUD) operations against a local database that mirrors the DynamoDB API.
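One way to let the same code talk to either DynamoDB Local or the managed service is to make the endpoint configurable. The sketch below assumes a hypothetical environment variable named `DYNAMODB_ENDPOINT` and a default Region of `us-east-1`; neither comes from a specific project. The AWS SDK for Python (boto3) is imported lazily so the configuration logic can be tested on its own.

```python
import os


def dynamodb_client_kwargs(env=None):
    """Build keyword arguments for a DynamoDB client or resource.

    DYNAMODB_ENDPOINT is a hypothetical variable name chosen for this sketch.
    When it is set, calls go to that endpoint (for example DynamoDB Local);
    when it is absent, the SDK resolves the regular AWS endpoint.
    """
    env = os.environ if env is None else env
    kwargs = {"region_name": env.get("AWS_REGION", "us-east-1")}
    endpoint = env.get("DYNAMODB_ENDPOINT")
    if endpoint:
        kwargs["endpoint_url"] = endpoint
    return kwargs


def get_table(table_name, env=None):
    """Return a boto3 Table handle for the configured endpoint.

    boto3 is imported here, not at module level, so the function above
    can be exercised without the SDK installed.
    """
    import boto3

    resource = boto3.resource("dynamodb", **dynamodb_client_kwargs(env))
    return resource.Table(table_name)
```

With DynamoDB Local listening on its default port, setting `DYNAMODB_ENDPOINT=http://localhost:8000` redirects reads and writes to the local database; leaving the variable unset keeps the deployed behavior unchanged.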

Note: Local emulation reduces iteration time, allowing AI-generated code to be validated in seconds and potentially reducing the cost and risk of experimentation.

Offline development for data and analytics workloads

Many workloads fit neatly into request-response testing, but data processing pipelines often involve large datasets and distributed execution. Even here, agentic workflows benefit from local feedback.

AWS Glue provides Docker images that allow AWS Glue jobs to run locally with the AWS Glue ETL libraries. An AI agent can validate transformations against sample datasets, inspect intermediate results, and only move to the cloud for scale testing. The same pattern applies to other data and machine learning (ML) workloads: isolate logic, test locally with reduced data, and promote validated code to managed services later.
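The "isolate logic, test locally with reduced data" step can be sketched in plain Python. The example deliberately avoids the AWS Glue libraries: the function `total_by_customer`, the field names, and the sample rows are all hypothetical, and a real job would apply equivalent logic to its own data structures.

```python
from collections import defaultdict


def total_by_customer(rows):
    """Sum order amounts per customer, skipping rows without a valid amount."""
    totals = defaultdict(float)
    for row in rows:
        try:
            amount = float(row["amount"])
        except (KeyError, TypeError, ValueError):
            continue  # malformed record: ignored in this sketch
        totals[row.get("customer_id", "unknown")] += amount
    return dict(totals)


# A reduced sample dataset, including one malformed record.
SAMPLE_ROWS = [
    {"customer_id": "c1", "amount": "10.50"},
    {"customer_id": "c2", "amount": "4"},
    {"customer_id": "c1", "amount": "2.25"},
    {"customer_id": "c3", "amount": None},
]

if __name__ == "__main__":
    print(total_by_customer(SAMPLE_ROWS))
```

Because the transformation is a pure function, an agent can check it against a handful of rows in milliseconds and reserve cloud runs for scale testing.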

Note: Offline development shortens feedback loops for data workloads and reduces unnecessary cloud runs during early iteration.

Hybrid testing with lightweight cloud resources

Some AWS services cannot be fully emulated locally. In these cases, the goal is not to avoid the cloud, but to keep cloud feedback lightweight.

For event-driven systems using Amazon Simple Notification Service (Amazon SNS) or Amazon Simple Queue Service (Amazon SQS), you can define minimal development stacks using infrastructure as code (IaC) tools such as AWS CloudFormation or the AWS Cloud Development Kit (AWS CDK). An AI agent can deploy small, isolated resources, invoke them through the AWS SDK, and validate behavior without provisioning full environments.
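A minimal development stack for this pattern might contain only a topic, a queue, and the wiring between them. The sketch below builds such an AWS CloudFormation template as a Python dictionary; the logical IDs (`DevTopic`, `DevQueue`, and so on) and the function name are assumptions made for illustration.

```python
import json


def minimal_messaging_template():
    """Build a CloudFormation template with one SNS topic feeding one SQS queue."""
    queue_arn = {"Fn::GetAtt": ["DevQueue", "Arn"]}
    return {
        "AWSTemplateFormatVersion": "2010-09-09",
        "Description": "Minimal dev stack for event-flow tests (illustrative)",
        "Resources": {
            "DevTopic": {"Type": "AWS::SNS::Topic"},
            "DevQueue": {"Type": "AWS::SQS::Queue"},
            "DevSubscription": {
                "Type": "AWS::SNS::Subscription",
                "Properties": {
                    "TopicArn": {"Ref": "DevTopic"},
                    "Protocol": "sqs",
                    "Endpoint": queue_arn,
                },
            },
            # Without this policy the topic is not allowed to deliver to the queue.
            "DevQueuePolicy": {
                "Type": "AWS::SQS::QueuePolicy",
                "Properties": {
                    "Queues": [{"Ref": "DevQueue"}],
                    "PolicyDocument": {
                        "Version": "2012-10-17",
                        "Statement": [{
                            "Effect": "Allow",
                            "Principal": {"Service": "sns.amazonaws.com"},
                            "Action": "sqs:SendMessage",
                            "Resource": queue_arn,
                            "Condition": {
                                "ArnEquals": {"aws:SourceArn": {"Ref": "DevTopic"}}
                            },
                        }],
                    },
                },
            },
        },
        "Outputs": {
            "TopicArn": {"Value": {"Ref": "DevTopic"}},
            "QueueUrl": {"Value": {"Ref": "DevQueue"}},
        },
    }


if __name__ == "__main__":
    print(json.dumps(minimal_messaging_template(), indent=2))
```

The stack outputs give the agent the two identifiers it needs to publish a test message and poll the queue through the AWS SDK, and the whole stack can be deleted once the check completes.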

This hybrid approach treats the cloud as another test dependency—used sparingly and predictably.

Note: Hybrid testing confirms real service behavior early while keeping cloud usage focused and controlled.

Preview environments and contract-first design

Fast feedback does not stop at local testing. End-to-end validation still matters, especially when multiple services interact.

Preview environments are short-lived stacks deployed on demand for validation. Defined through IaC, they allow an AI agent to deploy a complete application, run smoke tests, and tear everything down when finished. When combined with contract-first design—where APIs are defined upfront using OpenAPI specifications—agents can validate integrations even before all services are implemented.
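To illustrate how a contract can be checked before a service exists, the sketch below validates a response payload against a schema fragment of the kind found in an OpenAPI document. It covers only required fields and a few primitive types, and the `ORDER_SCHEMA` fragment and function names are hypothetical; a real project would more likely rely on a full OpenAPI or JSON Schema validator.

```python
# Fragment of a response schema as it might appear in an OpenAPI document.
ORDER_SCHEMA = {
    "type": "object",
    "required": ["orderId", "status"],
    "properties": {
        "orderId": {"type": "string"},
        "status": {"type": "string"},
        "total": {"type": "number"},
    },
}

_PYTHON_TYPES = {
    "string": str,
    "number": (int, float),
    "integer": int,
    "boolean": bool,
}


def _matches(value, type_name):
    """Check a value against one primitive schema type."""
    expected = _PYTHON_TYPES.get(type_name)
    if expected is None:
        return True  # type not covered by this sketch
    if isinstance(value, bool) and type_name != "boolean":
        return False  # bool is a subclass of int in Python
    return isinstance(value, expected)


def contract_violations(payload, schema):
    """Return a list of human-readable contract violations (empty if none)."""
    problems = []
    for field in schema.get("required", []):
        if field not in payload:
            problems.append(f"missing required field: {field}")
    for field, spec in schema.get("properties", {}).items():
        if field in payload and not _matches(payload[field], spec.get("type")):
            problems.append(f"{field}: expected {spec.get('type')}")
    return problems
```

An agent can run the same check against a stubbed response during local work and against the real endpoint in a preview environment, which keeps both sides of an integration tied to one definition.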

Note: Preview environments can reduce integration risk and allow AI-generated changes to be validated safely before reaching production.

Code base architecture for AI-friendly development

System architecture accelerates feedback, but code base architecture determines whether an AI agent can make sense of what it is changing.

Domain-driven structure with explicit boundaries

Agentic development works best when your repository reflects clear architectural intent. A domain-driven structure inspired by Domain-Driven Design (DDD) separates core business logic from application orchestration and infrastructure concerns.

In practice, this often means organizing code into predictable layers such as /domain, /application, and /infrastructure. The domain layer contains business rules with no AWS dependencies. Infrastructure code handles integrations with services such as Amazon DynamoDB or Amazon SNS. This separation allows AI agents to modify business logic and validate it locally without touching cloud-specific code.

Patterns like hexagonal architecture reinforce this separation by treating external systems as adapters rather than dependencies.
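The layering can be sketched with a port and an adapter. Everything here is hypothetical: the `Order` entity, the `OrderRepository` port, and the `place_order` service are names invented for the example, and the in-memory adapter stands in for a DynamoDB-backed one that would live under /infrastructure.

```python
from dataclasses import dataclass
from typing import Dict, Optional, Protocol


@dataclass(frozen=True)
class Order:
    """Domain entity with no cloud-specific fields."""
    order_id: str
    total: float


class OrderRepository(Protocol):
    """Port: what the domain needs from storage, with no AWS types involved."""

    def save(self, order: Order) -> None: ...

    def get(self, order_id: str) -> Optional[Order]: ...


class InMemoryOrderRepository:
    """Adapter used for local tests."""

    def __init__(self) -> None:
        self._items: Dict[str, Order] = {}

    def save(self, order: Order) -> None:
        self._items[order.order_id] = order

    def get(self, order_id: str) -> Optional[Order]:
        return self._items.get(order_id)


def place_order(repo: OrderRepository, order_id: str, total: float) -> Order:
    """Application service: enforce a business rule, then persist through the port."""
    if total <= 0:
        raise ValueError("order total must be positive")
    order = Order(order_id, total)
    repo.save(order)
    return order
```

Because `place_order` depends only on the port, an agent can change the business rule and rerun the tests against the in-memory adapter without deploying anything.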

Note: Clear boundaries can reduce unintended side effects and make AI-generated changes more straightforward to reason about and test.

Encoding architectural intent with project rules

Even well-structured repositories benefit from explicit guidance. Kiro supports steering files—Markdown files stored under .kiro/steering/—that describe architectural constraints and coding conventions.

For example, a rule might state that database access must go through repository classes in the infrastructure layer. The agent consults these rules automatically, reducing the need to restate constraints in every prompt and helping to keep generated code aligned with your architecture.

Note: Project rules reduce architectural drift and help maintain consistency as AI agents operate more autonomously.

Tests as executable specifications

In agentic workflows, tests do more than catch regressions; they define acceptable behavior. A layered testing strategy works particularly well:

- Unit tests validate domain logic in isolation and run quickly, making them ideal for frequent AI-driven iterations.

- Contract tests verify that services honor agreed interfaces, catching breaking changes early.

- Smoke tests run against deployed environments to surface configuration or permission issues that only appear at runtime, such as missing AWS Identity and Access Management (IAM) permissions.

Well-written tests also act as documentation. When a test fails, the agent can infer what behavior is expected and refine its changes accordingly.
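Tests can also encode structural expectations, not only functional ones. As one possible sketch, the check below parses a module's source and reports imports of cloud SDK packages, which a unit test could run over every file in the domain layer. The forbidden package set and the function name are assumptions for this example.

```python
import ast

# Packages the domain layer should not import (an assumption for this sketch).
FORBIDDEN_IN_DOMAIN = {"boto3", "botocore"}


def forbidden_imports(source, forbidden=FORBIDDEN_IN_DOMAIN):
    """Return the sorted forbidden top-level packages imported by `source`."""
    found = set()
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Import):
            names = [alias.name for alias in node.names]
        elif isinstance(node, ast.ImportFrom) and node.module and node.level == 0:
            # level == 0 means an absolute import; relative imports are skipped.
            names = [node.module]
        else:
            continue
        for name in names:
            root = name.split(".")[0]
            if root in forbidden:
                found.add(root)
    return sorted(found)
```

A failing result names the offending package, which gives the agent a concrete signal that a change crossed a layer boundary and where to move the code.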

Note: Tests provide fast, objective validation of AI-generated code and reduce the risk of subtle integration failures.

Monorepos and machine-readable documentation

AI agents work more effectively when they have broad context. A monorepo allows the agent to navigate across services, understand shared patterns, and evaluate the impact of changes system-wide. Within that repository, concise and structured documentation is essential. Files such as AGENT.md can explain architectural principles and constraints, while RUNBOOK.md and CONTRIBUTING.md describe operational and development workflows. Machine-readable formats, such as YAML or configuration files, are more straightforward for agents to interpret than lengthy prose.

Kiro can use foundational steering documents—summaries of structure, technology, and product guidelines—to help the agent maintain situational awareness as the project evolves.

Note: Shared context improves the quality of AI-generated changes and reduces the need for manual correction.

Integrating agents safely into delivery pipelines

As AI agents become more capable, governance remains essential. Continuous integration and continuous delivery (CI/CD) pipelines should include guardrails such as required test execution, automated reviews, and branch protections. Over time, as confidence grows, you can expand the agent’s autonomy while keeping humans in the loop for high-impact decisions. This balance allows AI to accelerate routine work without increasing operational risk.
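The guardrail logic can be expressed as a small policy function that a pipeline step might call. This is a sketch under stated assumptions: the `ChangeSet` fields, the decision strings, and the path prefixes treated as high impact are all hypothetical, and real pipelines would usually enforce the same rules through branch protections and required checks.

```python
from dataclasses import dataclass
from typing import List, Tuple

# Hypothetical paths whose changes always require a human decision.
HIGH_IMPACT_PREFIXES: Tuple[str, ...] = ("infrastructure/", "iam/")


@dataclass(frozen=True)
class ChangeSet:
    """Summary of an agent-authored change as seen by the pipeline."""
    changed_paths: List[str]
    tests_passed: bool
    automated_review_passed: bool


def merge_decision(change, high_impact_prefixes=HIGH_IMPACT_PREFIXES):
    """Decide how far an agent-authored change may proceed without a human."""
    if not change.tests_passed:
        return "blocked: tests failed"
    if not change.automated_review_passed:
        return "blocked: automated review failed"
    if any(path.startswith(high_impact_prefixes) for path in change.changed_paths):
        return "needs human approval"
    return "auto-merge"
```

Expanding the agent's autonomy over time then amounts to shrinking the list of high-impact prefixes, a change that is itself reviewable.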

Conclusion

Agentic AI development does not succeed by accident. It requires architectures that prioritize fast feedback, clear boundaries, and explicit intent. Combining local emulation, lightweight cloud testing, and preview environments with domain-driven structure, layered testing, and machine-readable documentation creates an environment where AI agents can operate effectively and safely. Tools like Kiro help bridge the gap between human design decisions and autonomous AI execution. When architecture aligns with agentic workflows, AI agents become true force multipliers, handling iterative development at speed while your team focuses on higher-level design and innovation.

To learn more about how AWS can help your organization implement agentic solutions, visit AWS Agentic AI.