AWS Cloud Operations Blog

Creating and hydrating self-service data lakes with AWS Service Catalog

Organizations are evolving IT processes to include data lakes and supporting services. Your organization might start by looking to extend the self-service portals you built using AWS Service Catalog to create data lakes as well. A self-service portal lets users vend required AWS resources within the guardrails defined by your cloud center of excellence (CCOE) team. This removes the heavy lifting from the CCOE team and lets users build their own environments. With AWS Service Catalog, you can also define the constraints on which AWS resources your users can and can’t deploy.

For example, with an appropriately configured self-service portal that supports creation and hydration of data lakes for structured relational data, your users could do the following:

- Vend an Amazon RDS database that they can launch only in private subnets.

- Create an Amazon S3 bucket with versioning and encryption enabled.

- Create an AWS DMS task that can hydrate only the chosen S3 bucket.

- Launch an AWS Glue crawler that populates an AWS Glue Data Catalog for data from that chosen S3 bucket.

With adequately configured constraints and templates, you can be confident that your users follow the best practices around these services (that is, private subnets, encrypted buckets, and specific security groups).

In this post, I show you how to use AWS Service Catalog to create an IT self-service portal that lets you create a data lake and populate your Data Catalog.

Data lake basics

A data lake is a central repository of your structured and unstructured data that you use to store information in a separate environment from your active or compute space. A data lake enables diverse query capabilities, data science use cases, and discovery of new information models. For more information on data lakes, see Data Lakes and Analytics on AWS. Amazon S3 is an excellent choice for building your data lake on AWS because it offers multiple integrations. For more information, see Amazon S3 as the Data Lake Storage Platform.

Before you use your data lake for analytics and machine learning, you must first hydrate it (fill it with data) and create a Data Catalog containing metadata. AWS DMS works well for hydrating your data lake with structured data from your database. With that in place, you can use AWS Glue to automatically discover and categorize data, making it immediately searchable and queryable from services such as AWS Glue ETL, Amazon Athena, Amazon Redshift Spectrum, and Amazon EMR.

Other AWS services, such as AWS Data Pipeline, can also hydrate a data lake from a structured or unstructured database. This post demonstrates a specific solution that uses AWS DMS.

The manual data lake hydration process

The following diagram shows the typical data lake hydration and cataloging process for databases.

- Create a database, which various applications populate with data.

- Create an S3 bucket to which you can export a copy of the data.

- Create a DMS replication task that migrates the data from your database to your S3 bucket. You can also create an ongoing replication task that captures ongoing changes after you complete your initial migration. This process is called ongoing replication or change data capture (CDC).

- Run the DMS replication task.

- Create an AWS Glue crawler to crawl your S3 bucket and populate your AWS Glue Data Catalog. AWS Glue can crawl RDS too, for populating your Data Catalog; in this example, I focus on a data lake that uses S3 as its primary data source.

- Run the crawler.

For more information, see the following resources:

- Build a Data Lake Foundation with AWS Glue and Amazon S3

- Using AWS Database Migration Service and Amazon Athena to Replicate and Run Ad Hoc Queries on a SQL Server Database

The automated, self-service data lake hydration process

Using AWS Service Catalog, you can set up a self-service portal that lets your end users request the components of a data lake, along with tools to hydrate it with data and create a Data Catalog.

The diagram below shows the data lake hydration process using a self-service portal.

The automated hydration process using AWS Service Catalog consists of the following:

- An Amazon RDS database product. Because the CCOE team controls the CloudFormation template that enables resource vending, your organization can maintain appropriate security measures. You can also tag subnets for specific teams and configure the catalog so that self-service portal users can choose only from an allowed list of subnets. You can codify RDS database best practices (such as Multi-AZ) in your CloudFormation template and still leave decision points such as the size, engine, and number of read replicas for the database up to the user. With self-service actions, you can further extend the RDS product to let your users start, stop, restart, and otherwise manage their own RDS database.

- An S3 bucket. By controlling the CloudFormation template that creates the S3 bucket, you can enable encryption at the source, as well as versioning, replication, and tags. Along with the S3 bucket, you can also allow your users to vend service-specific IAM roles configured to grant access to the S3 bucket. Your users can then use these roles for tasks such as:

  - AWS Glue crawler task

  - DMS replication task

  - Amazon SageMaker execution role with access only to this bucket

  - Amazon Elastic Compute Cloud (Amazon EC2) role for Amazon EMR

- A DMS replication task, which copies the data from your database into your S3 bucket. Users can then go to the console and start the replication task to hydrate the data lake at will.

- An AWS Glue crawler to populate the AWS Glue Data Catalog with metadata of files read from the S3 bucket.

To allow users from specific teams to vend these resources, you must associate an AWSServiceCatalogEndUserFullAccess managed policy with them. Your users also need IAM permissions to stop or start a crawler and a DMS task.

You can also configure the catalog to use launch constraints. With a launch constraint, AWS Service Catalog assumes the IAM role that you preconfigured and uses it to execute your CloudFormation template whenever a user launches a product. This lets your users perform specific tasks, such as creating a DMS task or an S3 bucket, within the guardrails you define.
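As a rough illustration of what a launch constraint looks like when it is declared in a CloudFormation template, the sketch below builds an `AWS::ServiceCatalog::LaunchRoleConstraint` resource as a Python dictionary. The portfolio ID, product ID, role ARN, and logical resource name are hypothetical placeholders, not values from the sample catalog.

```python
import json
from typing import Dict


def launch_role_constraint(portfolio_id: str, product_id: str, role_arn: str) -> Dict:
    """Return a CloudFormation resource that ties a launch role to a product.

    AWS Service Catalog assumes `role_arn` when an end user launches the
    product, so the end user does not need the underlying permissions.
    """
    return {
        "Type": "AWS::ServiceCatalog::LaunchRoleConstraint",
        "Properties": {
            "PortfolioId": portfolio_id,
            "ProductId": product_id,
            "RoleArn": role_arn,
        },
    }


if __name__ == "__main__":
    # All identifiers below are made-up placeholders for illustration.
    resource = launch_role_constraint(
        "port-EXAMPLE",
        "prod-EXAMPLE",
        "arn:aws:iam::123456789012:role/ExampleLaunchRole",
    )
    print(json.dumps({"Resources": {"ExampleLaunchConstraint": resource}}, indent=2))
```

In a real template you would more likely pass the portfolio and product IDs with `Ref` to resources defined in the same template instead of hard-coding them.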

After creating these resources, users can run the DMS task and AWS Glue crawler using the AWS console, finally hydrating and populating the Data Catalog.

You can try the above solution by deploying a sample catalog. The sample catalog solution creates a VPC, subnets, and IAM roles. It sets up a sample catalog with service products such as AWS Glue crawlers, DMS tasks, RDS, S3, and corresponding IAM roles for the AWS Glue crawler and DMS target. It also creates an end user and demonstrates how to allow that user to deploy RDS and DMS tasks using only the subnets created for them. The sample catalog also teaches you to configure launch constraints, so you don’t have to grant additional permissions to users. It contains an S3 product that vends service-specific IAM roles with access restricted to a specific S3 bucket.
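To make the idea of a role "with access restricted to a specific S3 bucket" concrete, here is a minimal sketch of an IAM policy document scoped to one bucket. The function name, statement IDs, and the exact set of S3 actions are assumptions for illustration. The policy in the sample catalog may grant a different set of actions.

```python
import json
from typing import Dict


def bucket_scoped_policy(bucket_name: str) -> Dict:
    """Build an IAM policy document that only reaches one S3 bucket."""
    bucket_arn = f"arn:aws:s3:::{bucket_name}"
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                # Listing is a bucket-level action, so it targets the bucket ARN.
                "Sid": "ListTeamBucket",
                "Effect": "Allow",
                "Action": ["s3:ListBucket"],
                "Resource": [bucket_arn],
            },
            {
                # Object-level actions target the objects under the bucket.
                "Sid": "ReadWriteTeamObjects",
                "Effect": "Allow",
                "Action": ["s3:GetObject", "s3:PutObject", "s3:DeleteObject"],
                "Resource": [f"{bucket_arn}/*"],
            },
        ],
    }


if __name__ == "__main__":
    # "example-team-bucket" is a placeholder bucket name.
    print(json.dumps(bucket_scoped_policy("example-team-bucket"), indent=2))
```

Because every `Resource` entry is derived from the one bucket name, a role that carries only this policy cannot read or write any other bucket.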

Best practices for data lake hydration at scale

By configuring the catalog in this manner, you can standardize and automate the following best practices, which makes them easier to implement at scale:

- Grant the least amount of privilege possible. IAM users should have an appropriate level of permissions to only do the task they must do.

- Create resources such as S3 buckets with appropriate read/write permissions, with encryption and versioning enabled.

- Use a team-specific key for database and DMS replication tasks, and do not spin up either in public subnets.

- Give team-specific DMS and AWS Glue roles access to only the S3 bucket created for their individual team.

- Do not enable users to spin up RDS and DMS resources in VPCs or subnets that do not belong to their teams.

With AWS CloudFormation, you can automate the manual work by writing a CloudFormation template. With AWS Service Catalog, you can make templates available to end users like data curators, who might not know all the AWS services in detail. With the self-service portal, your users would vend AWS resources only using the CloudFormation template that you standardize and into which you implement your security best practices.
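The sketch below shows how a standardized template can bake in the bucket best practices listed above. It builds a small CloudFormation template as a Python dictionary with versioning and default encryption turned on. The logical name `DataLakeBucket`, the `Team` tag, and the choice of `AES256` are assumptions for illustration. The sample catalog's own template may differ.

```python
import json
from typing import Dict


def s3_bucket_template(team: str) -> Dict:
    """Return a CloudFormation template for a versioned, encrypted S3 bucket."""
    return {
        "AWSTemplateFormatVersion": "2010-09-09",
        "Resources": {
            "DataLakeBucket": {
                "Type": "AWS::S3::Bucket",
                "Properties": {
                    # Keep earlier versions of objects that get overwritten.
                    "VersioningConfiguration": {"Status": "Enabled"},
                    # Encrypt every new object by default (SSE-S3 here).
                    "BucketEncryption": {
                        "ServerSideEncryptionConfiguration": [
                            {"ServerSideEncryptionByDefault": {"SSEAlgorithm": "AES256"}}
                        ]
                    },
                    "Tags": [{"Key": "Team", "Value": team}],
                },
            }
        },
        # Ref on an S3 bucket resolves to the bucket name.
        "Outputs": {"BucketName": {"Value": {"Ref": "DataLakeBucket"}}},
    }


if __name__ == "__main__":
    print(json.dumps(s3_bucket_template("analytics"), indent=2))
```

Users who launch a product backed by a template like this get an encrypted, versioned bucket without having to know either setting exists.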

With a self-service portal created using AWS Service Catalog, you can automate the process and leave decision points like RDS engine type, size of the database, VPC, and other configurations to your users. This helps you maintain an appropriate level of security, keeping the nuts and bolts of that security automated behind the scenes.

Make sure that you understand how to control the AWS resources you deploy using AWS Service Catalog, as well as the general vocabulary, before you begin. In this post, you populate a self-service portal using a sample RDS database blueprint from AWS Service Catalog reference blueprints.

How to deploy a sample catalog

To deploy the sample catalog solution discussed earlier, follow these steps.

Prerequisites

To deploy this solution, you need administrator access to the AWS account.

Step 1: Deploy the AWS CloudFormation template

A CloudFormation template handles most of the heavy lifting of sample catalog setup:

- Download the sample CloudFormation template (raw file) to your computer.

- Log in to your AWS account using a user account or role that has administrator access.

- In the AWS CloudFormation console, create a new stack in the us-east-1 Region.

- Under Choose a template, choose Choose File, and select the YAML file that you downloaded earlier. Choose Next.

- Complete the wizard and choose Create.

- When the stack status changes to CREATE_COMPLETE, select the stack and choose Outputs. Note the link in the output (SwitchRoleSCEndUser) for switching to the AWS Service Catalog end-user role.

The templates this post provides are samples and not intended for production use. However, you can review the CloudFormation template to understand the infrastructure it creates.

Step 2: View the catalog

Next, you can view the catalog:

- In the AWS Service Catalog console, in the left navigation pane, under Admin, choose Portfolio List.

- Choose the Analytics Team portfolio.

- The sample catalog automatically populates the following for you:

  - An RDS database (MySQL) for vending a database instance

  - An S3 bucket and appropriate roles for vending the S3 bucket and IAM roles for the AWS Glue crawler and the DMS task

  - An AWS Glue crawler

  - A DMS task

Step 3: Create an RDS database using AWS Service Catalog

For this post, set up an RDS database. To do so:

- Switch to the my_service_catalog_end_user role by opening the link you noted in the Outputs section during Step 1.

- Open this console to see the products available for you to launch as an end-user.

- Choose RDS Database (Mysql).

- Choose Launch Product. Specify the name as my-db.

- Choose v1.0, and choose Next.

- On the Parameters page, specify the following parameters and choose Next.

  - DBVPC: Choose the one with SC-Data-lake-portfolio in its name.

  - DBSecurityGroupName: Specify dbsecgrp.

  - DBSubnets: Choose private subnet 1 and private subnet 2.

  - DBSubnetGroupName: Specify dbsubgrp.

  - DBInputCIDR: Specify the CIDR block of the VPC. If you did not modify the defaults in Step 1, this value is 10.0.0.0/16.

  - DBMasterUsername: Specify master.

  - DBMasterUserPassword: Specify a password that is at least 12 characters long. The password must include an uppercase letter, a lowercase letter, a special character, and a number. For example, dAtaLakeWorkshop123_.

  - Leave the remaining parameters as they are.

- On the Tag options page, choose Next (AWS Service Catalog automatically generates a mandatory tag here).

- On the Notifications page, choose Next.

- On the Review page, choose Launch.

- The status changes to Under Change/In progress. After AWS Service Catalog provisions the database, the status changes to Available/Succeeded. You can see the RDS connection string available in the output.
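If you would rather script this launch than click through the console, the parameters above map naturally onto a list of key/value pairs, which is the shape the Service Catalog `ProvisionProduct` API accepts for `ProvisioningParameters`. The sketch below only builds and checks that list; it does not call AWS. The helper names and the VPC and subnet IDs are hypothetical. Treating "special character" as any ASCII punctuation and joining subnet IDs with commas are both assumptions about how the product's template defines its parameters.

```python
import string
from typing import Dict, List


def password_meets_rules(password: str) -> bool:
    """Check the rule from this step: 12+ chars with upper, lower, digit, special."""
    return (
        len(password) >= 12
        and any(c.isupper() for c in password)
        and any(c.islower() for c in password)
        and any(c.isdigit() for c in password)
        and any(c in string.punctuation for c in password)
    )


def build_rds_parameters(
    vpc_id: str,
    subnet_ids: List[str],
    password: str,
    vpc_cidr: str = "10.0.0.0/16",
) -> List[Dict[str, str]]:
    """Return the Step 3 parameters as a list of Key/Value dictionaries."""
    if not password_meets_rules(password):
        raise ValueError("password does not meet the stated complexity rule")
    values = {
        "DBVPC": vpc_id,
        "DBSecurityGroupName": "dbsecgrp",
        "DBSubnets": ",".join(subnet_ids),  # assumed comma-delimited list
        "DBSubnetGroupName": "dbsubgrp",
        "DBInputCIDR": vpc_cidr,
        "DBMasterUsername": "master",
        "DBMasterUserPassword": password,
    }
    return [{"Key": key, "Value": value} for key, value in values.items()]


if __name__ == "__main__":
    # The VPC and subnet IDs are placeholders; use the ones from your account.
    params = build_rds_parameters(
        "vpc-0example", ["subnet-0aaa", "subnet-0bbb"], "dAtaLakeWorkshop123_"
    )
    for item in params:
        shown = "********" if "Password" in item["Key"] else item["Value"]
        print(f'{item["Key"]} = {shown}')
```

Checking the password locally gives faster feedback than waiting for a provisioning failure, but the product's template remains the authority on what it accepts.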

The output contains the MasterJDBCConnectionString connection string, which includes the RDS endpoint (the underlined portion in the following example). You can use the same endpoint to connect to the database and create sample data.

Sample output:

jdbc:mysql://<u>XXXX.XXXX.us-east-1.rds.amazonaws.com:</u>3306/mysqldb

This example vends an RDS database, but you can also automate the creation of an Amazon DynamoDB table, an Amazon Redshift cluster, an Amazon Kinesis Data Firehose delivery stream, and other necessary AWS resources.
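Later steps need only the endpoint portion of MasterJDBCConnectionString. As a small convenience sketch, the helper below (a hypothetical name, not part of the sample catalog) splits a MySQL JDBC string into host, port, and database name using the Python standard library. The host in the usage example is a made-up placeholder.

```python
from typing import Tuple
from urllib.parse import urlsplit


def parse_jdbc_mysql(jdbc_url: str) -> Tuple[str, int, str]:
    """Split 'jdbc:mysql://host:port/db' into (host, port, database)."""
    prefix = "jdbc:"
    if not jdbc_url.startswith(prefix):
        raise ValueError("expected a string that starts with 'jdbc:'")
    # Dropping the 'jdbc:' prefix leaves an ordinary URL that urlsplit understands.
    parts = urlsplit(jdbc_url[len(prefix):])
    if parts.scheme != "mysql" or not parts.hostname:
        raise ValueError("expected a jdbc:mysql:// connection string")
    # Fall back to the default MySQL port when the string omits it.
    return parts.hostname, parts.port or 3306, parts.path.lstrip("/")


if __name__ == "__main__":
    host, port, database = parse_jdbc_mysql(
        "jdbc:mysql://example-db.abc123.us-east-1.rds.amazonaws.com:3306/mysqldb"
    )
    print(host, port, database)
```

The returned host is the value to use as the RDS endpoint when you connect with the MySQL client and when you fill in the server name for the DMS task.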

Step 4: Load sample data into your database (optional)

For security reasons, I provisioned the database in a private subnet. Unless you have VPN or AWS Direct Connect access, you must set up a bastion host to connect to your database. You can provision an Amazon Linux 2-based bastion host in the public subnet and then log on to it. For more information, see Launch an Amazon EC2 instance.

The my_service_catalog_end_user role does not have access to the Amazon EC2 console. Do this step with an alternate user that has permissions to launch an EC2 instance. After you launch an EC2 instance, connect to it.

Next, execute the following commands to create a simple database and a table with two rows:

- Install the MySQL client:

sudo yum install mysql

- Connect to the RDS database that you provisioned:

mysql -h <RDS_endpoint_name> -P 3306 -u master -p

- Create a database:

create database mydb;

- Use the newly created database:

use mydb;

- Create a table called client_balance and populate it with two rows:

CREATE TABLE `client_balance` ( `client_id` varchar(36) NOT NULL, `balance_amount` float NOT NULL DEFAULT '0', PRIMARY KEY (`client_id`) );

INSERT INTO `client_balance` VALUES ('123',0),('124',1.0);

COMMIT;

SELECT * FROM client_balance;

EXIT

Step 5: Create an S3 bucket using AWS Service Catalog

Switch to the my_service_catalog_end_user role and follow the process outlined in Step 3 to provision the S3 bucket and appropriate roles product. If you are using a new AWS account that does not have the dms-vpc-role and dms-cloudwatch-logs-role IAM roles, select N for the corresponding parameters; otherwise, leave the default parameter values.

After you provision the product, you can see the output and find details of the S3 bucket and DMS/AWS Glue roles that can access the newly created bucket. Make a note of the output, as you need the S3 bucket information and IAM roles in subsequent steps.

Step 6: Launch a DMS task

Follow the same process as in Step 3 and provision the DMS Task product. When you provision the DMS task, on the Parameters page:

- Specify the S3 bucket from the output that you noted earlier.

- Specify servername as the server endpoint of your RDS database (for example, XXX.us-east-1.rds.amazonaws.com).

- Specify data as bucketfolder.

- Specify mydb as the database.

- Specify S3TargetDMSRole from the output you noted earlier.

- Specify Private subnet 1 as DBSubnet1.

- Specify Private subnet 2 as DBSubnet2.

- Specify the dbUsername and dbPassword you noted after creating the RDS database.
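Behind parameters like these, a DMS task that writes to S3 generally needs two pieces of configuration: the S3 target endpoint settings and a table-mapping document that says which tables to copy. The sketch below builds both as plain Python data. The key names follow the DMS `S3Settings` structure and the DMS table-mapping JSON format. The function names, bucket name, role ARN, and the choice to include every table in the schema are assumptions, and the sample catalog's template may configure the task differently.

```python
import json
from typing import Dict


def s3_target_settings(bucket_name: str, bucket_folder: str, role_arn: str) -> Dict[str, str]:
    """Settings for a DMS S3 target endpoint (shape of the S3Settings structure)."""
    return {
        "BucketName": bucket_name,
        "BucketFolder": bucket_folder,
        "ServiceAccessRoleArn": role_arn,
    }


def table_mappings(schema_name: str) -> str:
    """Return a DMS table-mapping document that includes every table in a schema."""
    rules = {
        "rules": [
            {
                "rule-type": "selection",
                "rule-id": "1",
                "rule-name": "include-all-tables",
                "object-locator": {"schema-name": schema_name, "table-name": "%"},
                "rule-action": "include",
            }
        ]
    }
    # DMS expects the mappings as a JSON string, not as a nested object.
    return json.dumps(rules)


if __name__ == "__main__":
    # Placeholder bucket and role values; use the outputs you noted in Step 5.
    settings = s3_target_settings(
        "example-team-bucket",
        "data",
        "arn:aws:iam::123456789012:role/ExampleS3TargetDMSRole",
    )
    print(json.dumps(settings, indent=2))
    print(table_mappings("mydb"))
```

With `mydb` as the schema and `data` as the bucket folder, the exported files land under the `data` prefix of the bucket, which is the path the AWS Glue crawler reads in Step 7.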

Next, open the tasks section of the DMS console and locate the newly created task; its status should read Ready. Select the task and choose Restart/Resume. This starts your DMS replication task, which hydrates the S3 bucket you specified earlier with an extract of the database you chose.

I granted the my_service_catalog_end_user IAM role two additional permissions, dms:StartReplicationTask and dms:StopReplicationTask, so that users can start and stop DMS tasks. This shows how you can combine minimal permissions granted outside AWS Service Catalog with the catalog itself to enable your users to perform tasks.
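For reference, a policy that grants only these run-time permissions can be quite small. The sketch below builds one as a Python dictionary, adding the equivalent AWS Glue crawler actions because users also need to start the crawler in Step 7. The function name, statement IDs, and ARNs are hypothetical, and the policy attached by the sample template may be scoped differently.

```python
import json
from typing import Dict, List


def run_permissions_policy(dms_task_arns: List[str], crawler_arns: List[str]) -> Dict:
    """Allow starting and stopping specific DMS tasks and AWS Glue crawlers only."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "StartStopDmsTasks",
                "Effect": "Allow",
                "Action": ["dms:StartReplicationTask", "dms:StopReplicationTask"],
                "Resource": dms_task_arns,
            },
            {
                "Sid": "StartStopCrawlers",
                "Effect": "Allow",
                "Action": ["glue:StartCrawler", "glue:StopCrawler"],
                "Resource": crawler_arns,
            },
        ],
    }


if __name__ == "__main__":
    # Placeholder ARNs for illustration only.
    policy = run_permissions_policy(
        ["arn:aws:dms:us-east-1:123456789012:task:EXAMPLETASKID"],
        ["arn:aws:glue:us-east-1:123456789012:crawler/example-crawler"],
    )
    print(json.dumps(policy, indent=2))
```

Neither statement lets the user create or modify tasks and crawlers. Creation still goes through the catalog and its launch constraints.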

After the task completes, its status changes to Load Complete and the S3 bucket you created earlier now contains files filled with data from your database.

Step 7: Launch an AWS Glue crawler task

Now that you have hydrated your data lake with sample data, you can run an AWS Glue crawler to populate your AWS Glue Data Catalog. To do so, follow the process outlined in Step 3 and provision the AWS Glue crawler product. On the Parameters page, specify the following parameters:

- For S3Path, specify the complete path to your S3 bucket, for example: s3://<your_bucket_name>/data

- Specify IAMRoleARN as the value of GlueCrawlerIAMRoleARN from the output you noted at the end of Step 5.

- Specify the DatabaseName as mydb.
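The three crawler parameters can be assembled the same way as any other product's parameters. This short sketch derives the S3 path from the bucket name and the `data` folder used for the DMS task, then returns the parameters as key/value pairs. The helper names, bucket name, and role ARN are placeholders, not values from the sample catalog.

```python
from typing import Dict, List


def s3_path(bucket_name: str, folder: str = "data") -> str:
    """Build the s3://bucket/folder path that the crawler should read."""
    return f"s3://{bucket_name}/{folder.strip('/')}"


def build_crawler_parameters(
    bucket_name: str, crawler_role_arn: str, database_name: str = "mydb"
) -> List[Dict[str, str]]:
    """Return the Step 7 parameters as a list of Key/Value dictionaries."""
    return [
        {"Key": "S3Path", "Value": s3_path(bucket_name)},
        {"Key": "IAMRoleARN", "Value": crawler_role_arn},
        {"Key": "DatabaseName", "Value": database_name},
    ]


if __name__ == "__main__":
    # Placeholder values; use the bucket and GlueCrawlerIAMRoleARN from Step 5.
    for item in build_crawler_parameters(
        "example-team-bucket",
        "arn:aws:iam::123456789012:role/ExampleGlueCrawlerRole",
    ):
        print(f'{item["Key"]} = {item["Value"]}')
```

Deriving the path from the same folder name you gave the DMS task avoids the most likely mistake here, which is pointing the crawler at a prefix that contains no files.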

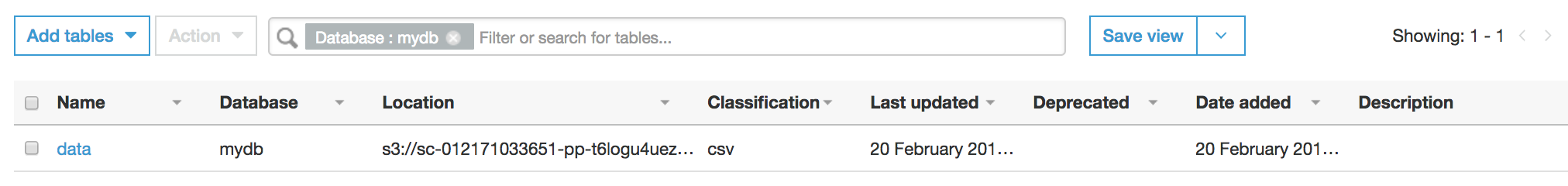

Next, open the crawlers section of the AWS Glue console to locate the crawler created by AWS Service Catalog. The crawler’s status should read Ready. Select the crawler and then choose Run crawler. When the crawler finishes, you can review the tables it added to your database from the databases section of the console.

As shown in the following diagram, you can select the mydb database and see tables to explore the AWS Glue Data Catalog populated by the AWS Glue crawler.

The principles discussed in this post can be extended to logs, streams, and files. You can use various BI tools to extract useful knowledge from your data lake. For information about how to visualize your data, see Harmonize, Query, and Visualize Data from Various Providers using AWS Glue, Amazon Athena, and Amazon QuickSight. You can query your data lake from Amazon SageMaker notebooks. For more information, see Access Amazon S3 data managed by AWS Glue Data Catalog from Amazon SageMaker notebooks.

Conclusion

AWS Service Catalog enables you to build and distribute catalogs of IT services to your organization. In this post, I demonstrated how you can set up a catalog that lets your users vend tools to support creation and hydration of data lakes and maintain tight security standards. You can extend this idea of self-service by supporting resources such as DynamoDB databases, Kinesis Data Firehose delivery streams, Amazon Redshift clusters, and Amazon SageMaker notebooks, granting your users more flexibility and utility in their data lakes within guardrails you define.

If you have questions about implementing the solution described in this post, you can start a new thread on the AWS Service Catalog Forum or contact AWS Support.

About the Author

Kanchan Waikar is a Senior Solutions Architect at Amazon Web Services. She enjoys helping customers build architectures using AWS Marketplace for machine learning, AWS Service Catalog, and other AWS services.