AWS Glue

Discover, prepare, and integrate all your data at any scale

Why AWS Glue?

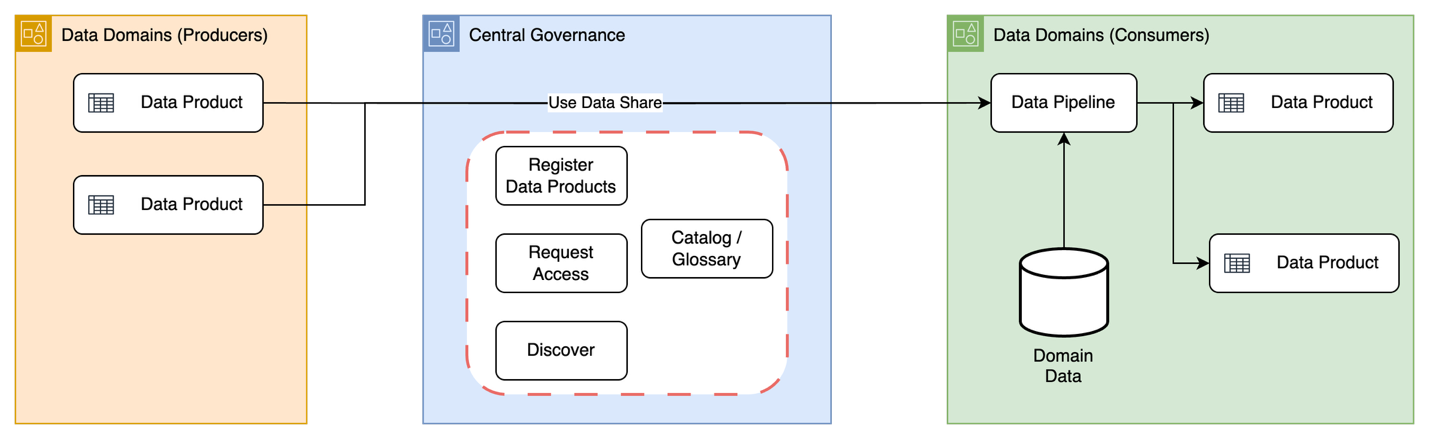

Preparing your data to obtain quality results is the first step in an analytics or AI project. AWS Glue is a serverless service that makes data integration simpler, faster, and cheaper. You can discover and connect to more than 100 diverse data sources, manage your data in a centralized data catalog, and visually create, run, and monitor data pipelines to load data into your data lakes, data warehouses, and lakehouses. With built-in generative AI capabilities, you can modernize Apache Spark jobs and develop faster with intelligent assistance for ETL authoring and Spark troubleshooting.

Integrate your data with AWS Glue in the next generation of Amazon SageMaker

With AWS Glue in the next generation of Amazon SageMaker, you can manage and build your workloads in one place with cost-effective, serverless, and scalable data integration.

Benefits

- AWS Glue provides a fully managed, serverless toolkit for designing and automating data pipelines, with built-in ETL, schema discovery, and cross-service integration, so you can gain insights and put your data to work quickly. It automatically scales resource-intensive data processing jobs from gigabytes to petabytes with no infrastructure to manage, and you pay only for the resources used.

- AWS Glue eliminates infrastructure management by providing serverless data pipelines with built-in scheduling and monitoring, so teams can focus on building data workflows rather than maintaining servers.

- Get AI-powered help throughout your data integration work, from automatically generating ETL code to modernizing your Spark jobs. AWS Glue provides intelligent code generation, AI-assisted Spark upgrades, and built-in Spark troubleshooting.

- Integrate your data, wherever it lives, with fast and easy connectivity to data sources in the next generation of Amazon SageMaker. Create a data processing project that combines AWS Glue, Amazon Athena, Amazon EMR, and Amazon Managed Workflows for Apache Airflow (MWAA), all within Amazon SageMaker, and benefit from a shared management and monitoring experience. AWS Glue data processing capabilities are available in Amazon SageMaker notebooks and Amazon SageMaker visual ETL.

Use Cases

Simplify ETL pipeline management

Remove infrastructure management with automatic provisioning and worker management, and consolidate all your data integration needs into a single service.

Interactively explore, experiment on, and process data

Using AWS Glue interactive sessions, data engineers can interactively explore and prepare data using the integrated development environment (IDE) or notebook of their choice.

Discover data efficiently

Quickly identify data across AWS, on premises, and other clouds, and then make it instantly available for querying and transforming.

Support various processing frameworks and workloads

More easily support various data processing frameworks, such as ETL and ELT, and various workloads, including batch, micro-batch, and streaming.
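As a rough illustration of the use cases above, the sketch below assembles the arguments for a Glue job definition, picking the worker count and the job command for a batch or streaming workload. The helper names (`build_job_definition`, `submit_job`), the role ARN, and the S3 script path are hypothetical placeholders, and the Glue version and worker type are example values rather than recommendations. The dictionary keys follow the parameters of the boto3 Glue `create_job` call.

```python
from typing import Any, Dict

# Glue job command names per workload type (values used by the CreateJob API).
COMMAND_NAMES = {
    "batch": "glueetl",            # Apache Spark batch ETL job
    "streaming": "gluestreaming",  # Spark streaming ETL job
}


def build_job_definition(
    name: str,
    role_arn: str,
    script_location: str,
    workload: str = "batch",
    workers: int = 2,
) -> Dict[str, Any]:
    """Return keyword arguments for a Glue job definition (hypothetical helper)."""
    if workload not in COMMAND_NAMES:
        raise ValueError(f"unsupported workload: {workload!r}")
    return {
        "Name": name,
        "Role": role_arn,
        "Command": {
            "Name": COMMAND_NAMES[workload],
            "ScriptLocation": script_location,
            "PythonVersion": "3",
        },
        "GlueVersion": "4.0",        # example value; choose the version you target
        "WorkerType": "G.1X",        # example value; Glue provisions the workers
        "NumberOfWorkers": workers,
    }


def submit_job(definition: Dict[str, Any]) -> str:
    """Create the job in your AWS account; needs boto3 and valid credentials."""
    import boto3  # imported lazily so the builder above works without boto3

    glue = boto3.client("glue")
    return glue.create_job(**definition)["Name"]
```

Calling `build_job_definition("nightly-load", role_arn, script_location, workload="streaming")` only builds the dictionary. Nothing is sent to AWS until `submit_job` is called with it.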
