AWS Big Data Blog

Enable real-time mainframe analytics with Precisely Connect and Amazon S3

This is a guest post by Supreet Padhi, Technology Architect, Strategic Technologies, and Rochelle Grubbs, Senior Director, Solution Architect at Precisely in partnership with AWS.

Business leaders face a critical challenge in enabling real-time analytics: their most valuable data sits in mainframe systems that reliably process billions of transactions daily, but extracting value from it for modern analytics and AI remains complex and costly. Traditional mainframe-to-cloud integration approaches require multi-step replication with intermediary systems, creating operational overhead, latency, and data integrity risks. This complexity delays insights, increases infrastructure costs, limits agility, and blocks organizations from using AI and machine learning on their mainframe data.

Precisely, a global leader in data integrity with over 12,000 customers including 95 of the Fortune 100, has announced an expansion of its collaboration with AWS through new enhancements to Precisely Connect. Precisely is an AWS Data and Analytics ISV Competency and AWS Migration and Modernization ISV Competency partner. Precisely has service specializations in Amazon Redshift and Amazon Relational Database Service (Amazon RDS).

In Stream mainframe data to AWS in near-real time with Precisely and Amazon MSK, we showed you how to set up mainframe CDC and the AWS Mainframe Modernization – Data Replication for IBM z/OS Amazon Machine Image (AMI) available in AWS Marketplace. In this post, we discuss how you can use Precisely Connect to enable real-time, direct replication of mainframe data to Amazon Simple Storage Service (Amazon S3), and how your organization can extend this foundation using Amazon S3 Tables for advanced analytics.

Real-time mainframe data access

Organizations that can connect their mainframe environments with modern cloud platforms can gain advantages through improved agility, reduced operational costs, and enhanced analytics capabilities. For example, moving appropriate analytics and reporting workloads to the cloud can significantly reduce mainframe operational costs while maintaining performance and reliability. Real-time data access makes insights available within seconds rather than waiting for batch processing cycles, enabling faster responses to market changes and customer needs. Eliminating bulk data extracts and intermediary systems also reduces infrastructure and maintenance expenses. This frees IT resources to focus on higher-value initiatives.

However, implementing mainframe-to-cloud integrations presents unique technical challenges that require specialized solutions. These include converting mainframe character encoding (EBCDIC) to standard ASCII format and handling mainframe-specific data types such as packed decimal (COMP-3) fields. You also need to manage the complexity of VSAM (Virtual Storage Access Method) files that can store multiple record types in a single file, and maintain real-time synchronization without impacting mainframe performance.
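To make the encoding and data type challenges concrete, the following is a minimal Python sketch of the two conversions mentioned above. It is illustrative only and is not how Precisely Connect performs these conversions. The function names are hypothetical, and the code page `cp037` (EBCDIC US/Canada) is an assumption; your mainframe might use a different code page.

```python
from decimal import Decimal


def decode_ebcdic(raw: bytes, codec: str = "cp037") -> str:
    """Decode an EBCDIC text field and strip trailing pad spaces.

    cp037 is an assumed code page; substitute the one your system uses.
    """
    return raw.decode(codec).rstrip()


def unpack_comp3(raw: bytes, scale: int = 0) -> Decimal:
    """Decode a packed decimal (COMP-3) field.

    Each byte holds two decimal digits, except the last byte, whose low
    nibble holds the sign (0xB or 0xD = negative, 0xA/0xC/0xE/0xF = positive).
    `scale` is the number of implied decimal places from the copybook.
    """
    if not raw:
        raise ValueError("empty packed decimal field")
    digits = []
    for byte in raw[:-1]:
        digits.extend((byte >> 4, byte & 0x0F))
    digits.append(raw[-1] >> 4)
    sign_nibble = raw[-1] & 0x0F
    if any(d > 9 for d in digits) or sign_nibble < 0x0A:
        raise ValueError("invalid packed decimal data")
    value = Decimal(int("".join(str(d) for d in digits)))
    if sign_nibble in (0x0B, 0x0D):
        value = -value
    # Shift the decimal point left by the implied scale.
    return value.scaleb(-scale)


name = decode_ebcdic(bytes.fromhex("C8C5D3D3D64040"))
amount = unpack_comp3(bytes.fromhex("12345C"), scale=2)
```

In this sketch, the scale comes from the COBOL copybook (for example, a field declared with two implied decimal places), because the packed bytes themselves carry no decimal point.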

Change Data Capture (CDC) technology addresses these challenges through incremental data movement that eliminates disruptive bulk extracts by streaming only changed data to cloud targets, minimizing system impact and ensuring data currency. Real-time synchronization keeps cloud applications in sync with mainframe systems, enabling immediate insights and responsive operations.
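The idea of incremental data movement can be sketched in a few lines of Python. This is a simplified illustration, not the Precisely Connect implementation: the event shape (`op`, `key`, `after`) and the function name `apply_changes` are hypothetical, and a real CDC stream carries additional metadata such as log positions and timestamps.

```python
from typing import Any


def apply_changes(target: dict, events: list) -> dict:
    """Apply an ordered list of change events to a keyed target.

    Only changed rows are touched; unchanged rows are never re-read
    or re-written, which is what avoids bulk extracts.
    """
    for event in events:
        op, key = event["op"], event["key"]
        if op in ("insert", "update"):
            # "after" is assumed to be the full row image after the change.
            target[key] = dict(event["after"])
        elif op == "delete":
            target.pop(key, None)
        else:
            raise ValueError(f"unknown operation: {op!r}")
    return target


events: list[dict[str, Any]] = [
    {"op": "insert", "key": 1, "after": {"balance": 100}},
    {"op": "insert", "key": 2, "after": {"balance": 50}},
    {"op": "update", "key": 1, "after": {"balance": 80}},
    {"op": "delete", "key": 2},
]
state = apply_changes({}, events)
```

Events must be applied in the order they were captured. Reordering the update and insert for the same key would leave the target with stale data.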

Precisely Connect: Real-time data replication to Amazon S3

With Precisely Connect, you can replicate data from mainframe sources, including Db2 z/OS, IMS, and VSAM, directly to Amazon S3 in real time. Removing intermediary steps reduces both latency and operational complexity and simplifies modernization. You can move mainframe data into Amazon S3 data lakes and analytics platforms without managing complex, multi-step replication processes.

The simplicity of this approach reduces maintenance overhead and integration complexity by removing the need for staging servers, middleware, or batch processing systems. After data lands in Amazon S3, it becomes immediately available for downstream AWS workloads. You can use Amazon Athena for SQL queries, AWS Glue for ETL and data cataloging, Amazon EMR for big data processing, Amazon SageMaker AI for machine learning, and Amazon Quick Sight for business intelligence dashboards.

Solution overview

Here we present a solution architecture for streaming mainframe data changes from Db2 z/OS through the AWS Mainframe Modernization – Data Replication for IBM z/OS AMI directly to Amazon S3, and then using Amazon S3 Tables for advanced analytics capabilities.

By introducing direct S3 replication and streamlining deployment through the pre-configured AWS Marketplace AMI, you can deploy in minutes rather than weeks. This creates new possibilities for data distribution, transformation, and consumption. This architecture offers several key benefits:

- Simplified deployment – Accelerate implementation using the pre-configured AWS Marketplace AMI

- Direct replication – Eliminate intermediary systems by streaming data directly to Amazon S3, reducing latency and operational overhead

- Real-time synchronization – Capture changes as they occur on the mainframe, ensuring downstream applications operate on current data

- Flexible analytics options – Use S3 Tables for Iceberg-compatible tabular data storage

- Comprehensive AWS integration – Gain immediate access to Amazon EMR, Amazon Athena, AWS Glue, Amazon SageMaker AI, and Amazon Quick Sight

- Natural language data access – Through the MCP Server for Amazon S3 Tables, AI assistants can interact with structured data using conversational interfaces without needing to write SQL queries

Prerequisites

To complete the solution, you need the following prerequisites:

Precisely components

- AWS Mainframe Modernization – Data Replication for IBM z/OS – Deploy this Precisely Connect AMI from AWS Marketplace. This pre-configured image contains the Apply Engine and Controller Daemon components required for replicating mainframe data changes to Amazon S3.

- Precisely Connect CDC Capture/Publisher – Deploy the Precisely Connect CDC Capture/Publisher on your mainframe environment. This component captures changes from Db2 z/OS logs and streams them to the Apply Engine over TCP/IP.

For detailed setup and configuration steps for Precisely components, refer to our previous post Stream mainframe data to AWS in near-real time with Precisely and Amazon MSK.

Connectivity requirements

- Establish network connectivity between your mainframe environment and AWS using your organization’s approved connectivity method (such as AWS Direct Connect or VPN).

- Verify that firewall rules allow TCP/IP communication between the mainframe Capture/Publisher and the Apply Engine.
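One way to check the firewall requirement is a basic TCP reachability test. The following Python sketch is a generic check that uses only the standard library; it is not a Precisely tool. The function name `can_connect` is hypothetical, and the host and port in the commented example are placeholders to replace with the values from your own configuration.

```python
import socket


def can_connect(host: str, port: int, timeout: float = 3.0) -> bool:
    """Return True if a TCP connection to host:port can be opened."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and name resolution errors.
        return False


# Example (placeholder host and port, not real defaults):
# print(can_connect("mainframe.example.internal", 12345))
```

Run the check from the machine that initiates the connection in your deployment. A successful result confirms only that the port is reachable from that machine, not that the replication components are configured correctly.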

AWS analytics components (optional extension)

After mainframe data lands in Amazon S3, your organization can extend its analytics capabilities using AWS services. One approach is to use Amazon EMR streaming jobs to process and write data to Amazon S3 Tables. After the data is stored in S3 Tables, the data can be queried directly using Amazon Athena for ad-hoc SQL analysis. This extension is optional and represents one of several ways to consume and analyze mainframe data after it reaches Amazon S3.

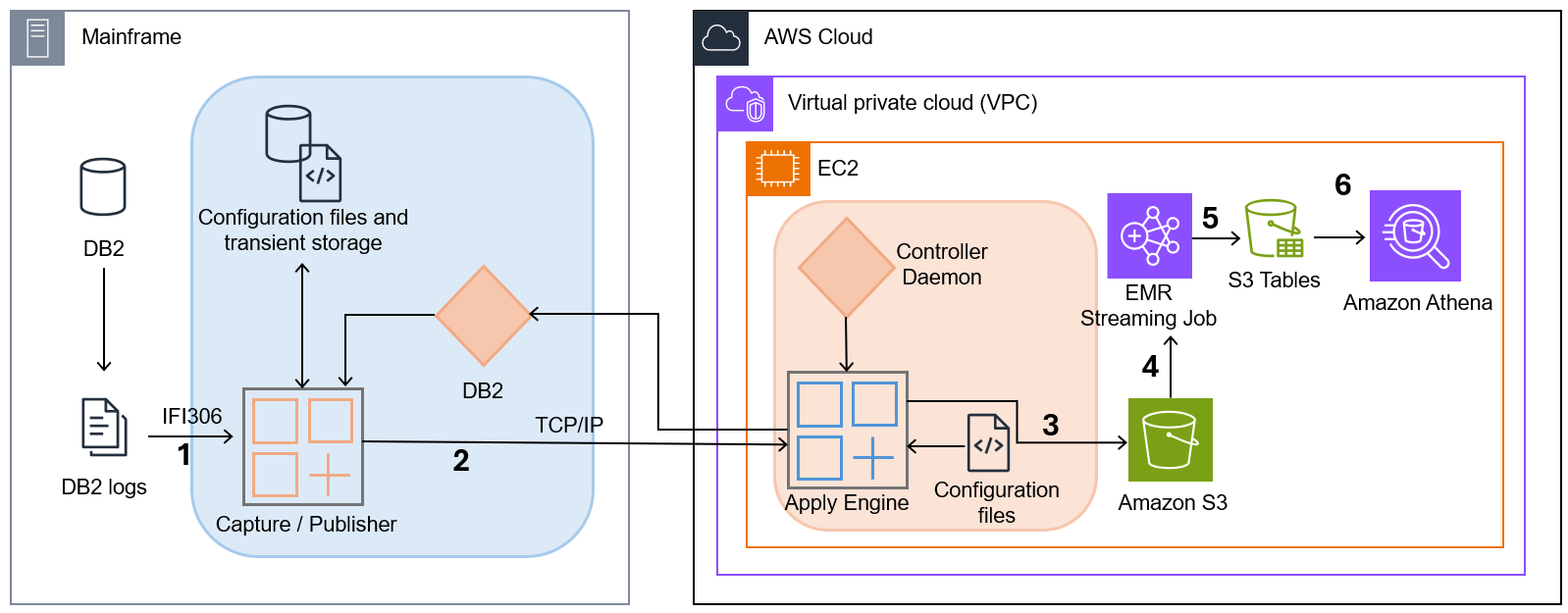

The following diagram illustrates the solution architecture.

- Capture/Publisher – Connect CDC Capture/Publisher captures Db2 changes from Db2 logs using IFI 306 Read and communicates captured data changes to a target engine through TCP/IP.

- Controller Daemon – The Controller Daemon authenticates all connection requests, managing secure communication between the source and target environments.

- Apply Engine – The Apply Engine receives the changes from the Capture/Publisher and applies the changed data to the target Amazon S3 bucket.

- Amazon S3 – Serves as the scalable data lake foundation where replicated mainframe data lands.

- Amazon EMR streaming job – As data arrives, an instance of the Amazon EMR streaming job writes the data to target tables in Amazon S3 Tables.

- Amazon Athena – Queries data stored in Amazon S3 Tables using standard SQL.

This architecture provides a clean separation between the data capture process and the data consumption process, allowing each to scale independently. When CDC data arrives in Amazon S3, you can use Amazon S3 Tables to store Db2 z/OS, VSAM, and IMS data in an open table format (Apache Iceberg) that is ready for analytics, providing a flexible path to mainframe modernization.
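As an illustration of the consumption side, the following Python sketch builds the request parameters for an Amazon Athena query against a table in Amazon S3 Tables. It is a sketch under stated assumptions: the function name and all bucket, namespace, and table names are hypothetical, and the `s3tablescatalog/<table-bucket>` catalog naming assumes that your table bucket is integrated with AWS analytics services. Verify the catalog name in your own account before relying on it.

```python
import re

_IDENTIFIER = re.compile(r"^[a-z0-9_]+$")


def build_athena_request(table_bucket: str, namespace: str, table: str,
                         output_location: str, limit: int = 10) -> dict:
    """Build keyword arguments for Athena's StartQueryExecution API."""
    for name in (namespace, table):
        # Reject anything that is not a simple lowercase identifier.
        if not _IDENTIFIER.match(name):
            raise ValueError(f"unexpected identifier: {name!r}")
    query = f'SELECT * FROM "{namespace}"."{table}" LIMIT {int(limit)}'
    return {
        "QueryString": query,
        "QueryExecutionContext": {
            # Assumed catalog naming for S3 Tables; confirm in your account.
            "Catalog": f"s3tablescatalog/{table_bucket}",
            "Database": namespace,
        },
        "ResultConfiguration": {"OutputLocation": output_location},
    }


request = build_athena_request(
    "demo-table-bucket", "mainframe", "accounts", "s3://demo-results/athena/"
)
```

With boto3 installed and credentials configured, the request could be submitted with `boto3.client("athena").start_query_execution(**request)`. Keeping the request construction separate from the API call makes the query easy to inspect before it runs.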

Quantifiable business value

Organizations implementing this solution typically see significant reductions in mainframe operational costs by offloading analytics and reporting workloads to the cloud. The elimination of intermediary infrastructure reduces both capital and operational expenses. The reduced maintenance burden frees IT resources to focus on strategic initiatives rather than managing complex replication systems.

Speed and agility improvements are equally significant. Near real-time data availability, measured in seconds to minutes rather than hours to days, enables organizations to respond rapidly to market changes and operational events. The rapid deployment of new analytics use cases without requiring mainframe changes accelerates innovation. Organizations gain access to the full breadth of AWS services that can be used immediately after data lands in Amazon S3.

From an analytics and AI perspective, the solution creates a unified data platform that brings together mainframe, cloud-native, and third-party data sources. This unified view enables advanced machine learning on historical and current data, delivering predictive insights that drive proactive decision-making across the organization.

Customer story

A leading global payments provider put this into practice. The company was struggling to generate timely analytics and insights from Point of Sale (POS) transaction data. As one of the world’s largest payment providers, it processes hundreds of thousands of transactions per second, and users expect to swipe their card and have their transaction approved in seconds. A new architecture was needed to keep up with customer demand and transaction volume. By streaming mission-critical mainframe data directly to AWS in real time using Precisely Connect and landing it in Amazon S3 Tables, the company adopted storage built on the Apache Iceberg open standard. This approach enables high-performance analytics directly on mainframe data alongside cloud-native sources.

Conclusion

In this post, we demonstrated how Precisely Connect enables real-time, direct data replication from mainframes to Amazon S3, eliminating intermediaries and simplifying mainframe modernization.

Your organization can further extend this foundation with Amazon S3 Tables, purpose-built storage for Apache Iceberg tables in S3, enabling analytical applications to query the most current mainframe data using tools such as Amazon Athena, Amazon EMR, and Amazon Redshift.

Get started by deploying AWS Mainframe Modernization – Data Replication for IBM z/OS from AWS Marketplace and use Amazon S3 as a target for your mainframe use cases. Learn more about Precisely’s mainframe data integration capabilities at precisely.com. Contact AWS and Precisely experts to discuss your specific modernization challenges and design a proof-of-concept that demonstrates business value quickly.