Identifying landmarks with Amazon Rekognition Custom Labels

Amazon Rekognition is a computer vision service that makes it simple to add image and video analysis to your applications using proven, highly scalable, deep learning technology that does not require machine learning (ML) expertise. With Amazon Rekognition, you can identify objects, people, text, scenes, and activities in images and videos and detect inappropriate content. Amazon Rekognition also provides highly accurate facial analysis and facial search capabilities that you can use to detect, analyze, and compare faces for a wide variety of use cases.

Amazon Rekognition Custom Labels is a feature of Amazon Rekognition that makes it simple to build your own specialized ML-based image analysis capabilities to detect unique objects and scenes integral to your specific use case.

Some common use cases of Rekognition Custom Labels include finding your logo in social media posts, identifying your products on store shelves, classifying machine parts in an assembly line, distinguishing between healthy and infected plants, and more.

Amazon Rekognition Labels supports popular landmarks like the Brooklyn Bridge, Colosseum, Eiffel Tower, Machu Picchu, Taj Mahal, and more. If you have other landmarks or buildings not yet supported by Amazon Rekognition, you can still use Amazon Rekognition Custom Labels.

In this post, we demonstrate using Rekognition Custom Labels to detect the Amazon Spheres building in Seattle.

With Rekognition Custom Labels, AWS takes care of the heavy lifting for you. Rekognition Custom Labels builds on the existing capabilities of Amazon Rekognition, which is already trained on tens of millions of images across many categories. Instead of thousands of images, you only need to upload a small set of training images (typically a few hundred images or fewer) that are specific to your use case through the straightforward console. Amazon Rekognition can begin training in just a few clicks. After training starts, it can produce a custom image analysis model for you within a few minutes or hours. Behind the scenes, Rekognition Custom Labels automatically loads and inspects the training data, selects suitable ML algorithms, trains a model, and provides model performance metrics. You can then use your custom model through the Rekognition Custom Labels API and integrate it into your applications.

Solution overview

For our example, we use the Amazon Spheres building in Seattle. We train a model using Rekognition Custom Labels so that, when given similar images, it identifies the building as Amazon Spheres instead of generic labels such as Dome, Architecture, or Glass building.

Let’s first look at an example of the standard label detection feature of Amazon Rekognition, where we feed in the image of Amazon Spheres without any custom training. We use the Amazon Rekognition console to open the label detection demo and upload our photo.

After the image is uploaded and analyzed, we see labels with their confidence scores under Results. In this case, Dome was detected with a confidence score of 99.2%, Architecture with 99.2%, Building with 99.2%, Metropolis with 79.4%, and so on.
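You can reproduce this baseline programmatically with the DetectLabels API. The following is a minimal Python sketch, assuming boto3 is installed, AWS credentials and a Region are configured, and the test image is stored in S3. The function names, the bucket and key in the usage comment, and the `MaxLabels`/`MinConfidence` defaults are illustrative assumptions.

```python
def summarize_labels(response):
    """Return (label name, confidence rounded to 0.1) pairs, highest confidence first."""
    pairs = [
        (label["Name"], round(label["Confidence"], 1))
        for label in response.get("Labels", [])
    ]
    return sorted(pairs, key=lambda pair: pair[1], reverse=True)


def detect_labels(client, bucket, key, max_labels=10, min_confidence=70.0):
    """Run the built-in (non-custom) DetectLabels API on an image stored in S3."""
    response = client.detect_labels(
        Image={"S3Object": {"Bucket": bucket, "Name": key}},
        MaxLabels=max_labels,
        MinConfidence=min_confidence,
    )
    return summarize_labels(response)


# Usage (bucket and key are hypothetical placeholders):
# import boto3
# rekognition = boto3.client("rekognition")
# for name, confidence in detect_labels(rekognition, "my-spheres-bucket", "test/spheres.jpg"):
#     print(f"{name}: {confidence}%")
```

Passing the client in as an argument keeps the functions easy to reuse with any configured boto3 session.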

We want to use custom labeling to produce a computer vision model that can label the image Amazon Spheres.

In the following sections, we walk you through preparing your dataset, creating a Rekognition Custom Labels project, training the model, evaluating the results, and testing it with additional images.

Prerequisites

Before you start, be aware of the quotas that apply to Rekognition Custom Labels. If you need higher limits, you can request a service limit increase.

Create your dataset

If this is your first time using Rekognition Custom Labels, you’ll be prompted to create an Amazon Simple Storage Service (Amazon S3) bucket to store your dataset.

For this post, we used images of the Amazon Spheres that we captured while visiting Seattle, WA. Feel free to use your own images for your use case.

Copy your dataset to the newly created bucket, storing each label's images under its own prefix (folder).

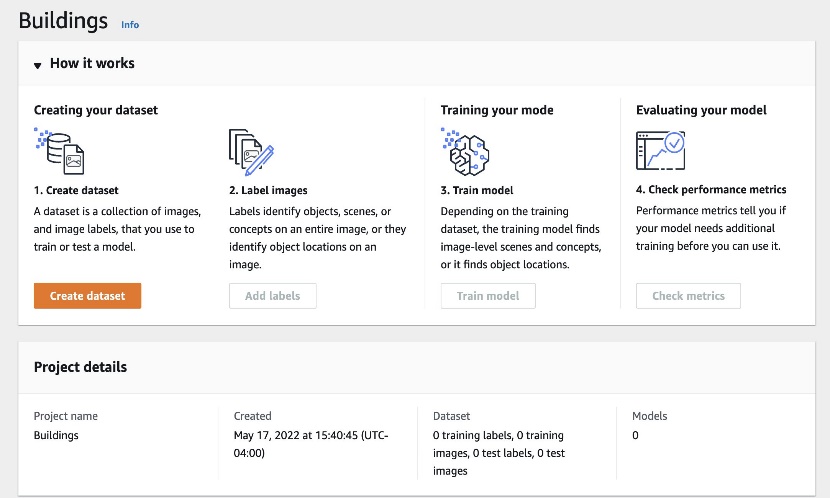
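If you prefer to script the upload, the following Python sketch copies a local folder tree into the bucket, keeping one prefix per label. It assumes boto3 is installed and that your images are organized locally with one subfolder per label (for example, a hypothetical `Amazon Spheres/` folder). The helper names and the optional `prefix` argument are illustrative, not part of any AWS API.

```python
from pathlib import Path

# Rekognition Custom Labels accepts JPEG and PNG training images.
IMAGE_SUFFIXES = {".jpg", ".jpeg", ".png"}


def is_image(path):
    """True if the file name has a supported image extension (case-insensitive)."""
    return Path(path).suffix.lower() in IMAGE_SUFFIXES


def s3_key_for(local_root, file_path, prefix=""):
    """Build an S3 key that keeps the label folder, e.g. 'Amazon Spheres/img1.jpg'."""
    relative = Path(file_path).relative_to(Path(local_root)).as_posix()
    return f"{prefix.rstrip('/')}/{relative}" if prefix else relative


def upload_dataset(s3_client, local_root, bucket, prefix=""):
    """Upload every image under local_root, one S3 prefix per label folder."""
    uploaded = []
    for path in sorted(Path(local_root).rglob("*")):
        if path.is_file() and is_image(path):
            key = s3_key_for(local_root, path, prefix)
            s3_client.upload_file(str(path), bucket, key)
            uploaded.append(key)
    return uploaded
```

You would pass `boto3.client("s3")` as `s3_client`; `upload_file(Filename, Bucket, Key)` is the standard boto3 S3 client method for single-file uploads.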

Create a project

To create your Rekognition Custom Labels project, complete the following steps:

- On the Rekognition Custom Labels console, choose Create a project.

- For Project name, enter a name.

- Choose Create project.

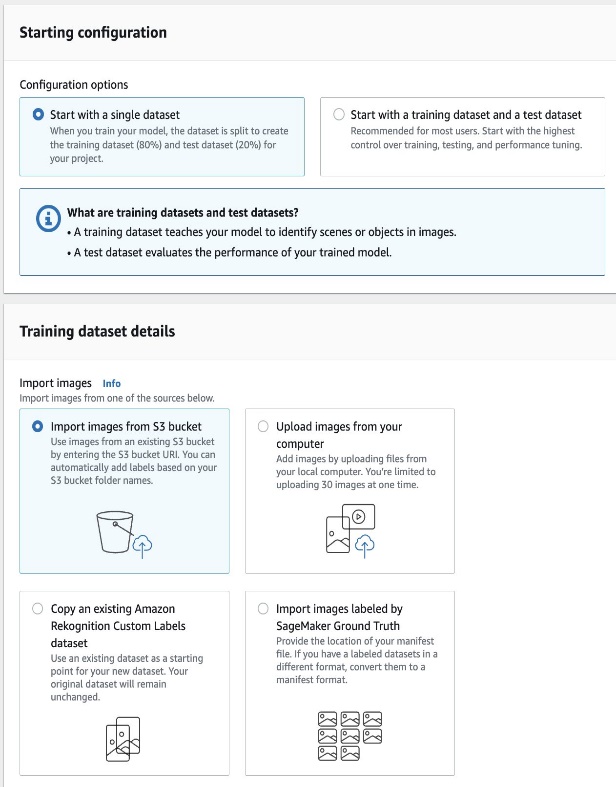
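The same step can be done with the CreateProject API. This is a minimal boto3 sketch under the same assumptions (boto3 installed, credentials and Region configured). The function names are hypothetical, and `project_status` simply reads the `Status` field that DescribeProjects returns for the named project.

```python
def create_project(client, project_name):
    """Create a Rekognition Custom Labels project and return its ARN."""
    response = client.create_project(ProjectName=project_name)
    return response["ProjectArn"]


def project_status(client, project_name):
    """Look up a project's status (for example, CREATED) by name, or None if not found."""
    response = client.describe_projects(ProjectNames=[project_name])
    descriptions = response.get("ProjectDescriptions", [])
    return descriptions[0]["Status"] if descriptions else None


# Usage (project name is a hypothetical placeholder):
# import boto3
# rekognition = boto3.client("rekognition")
# project_arn = create_project(rekognition, "amazon-spheres")
```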

Now you specify the configuration and path of your training and test datasets.

- Choose Create dataset.

You can start with a project that has a single dataset, or a project that has separate training and test datasets. If you start with a single dataset, Rekognition Custom Labels splits your dataset during training to create a training dataset (80%) and a test dataset (20%) for your project.

Additionally, you can create training and test datasets for a project by importing images from one of the following locations:

- An S3 bucket

- A local computer

- Amazon SageMaker Ground Truth

- An existing dataset

For this post, we use our own custom dataset of Amazon Spheres.

- Select Start with a single dataset.

- Select Import images from S3 bucket.

- For S3 URI, enter the path to your S3 bucket.

- If you want Rekognition Custom Labels to automatically label the images for you based on the folder names in your S3 bucket, select Automatically assign image-level labels to images based on the folder name.

- Choose Create dataset.

A page opens that shows you the images with their labels. If you see any errors in the labels, refer to Debugging datasets.
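Through the SDK there is no folder-name checkbox; instead, a dataset is created from a manifest file. The following sketch generates an image-level manifest that treats each image's parent folder as its label and then calls CreateDataset. It is an illustration under several assumptions: the manifest fields are modeled on the SageMaker Ground Truth image-classification format that Rekognition Custom Labels accepts, so verify them against the current documentation; the attribute name `landmark`, the manifest key, and the function names are hypothetical; and only a TRAIN dataset is created, so the console's automatic 80/20 split is not reproduced here.

```python
import json
from datetime import datetime, timezone


def build_manifest(bucket, keys, attribute="landmark"):
    """Return JSON Lines for image-level labels; each key's parent folder is its label."""
    # Pass only image keys; keys without a folder component are skipped.
    labelled = [(key, key.split("/")[-2]) for key in keys if "/" in key]
    class_ids = {name: i for i, name in enumerate(sorted({name for _, name in labelled}))}
    created = datetime.now(timezone.utc).strftime("%Y-%m-%dT%H:%M:%S.%f")
    lines = []
    for key, class_name in labelled:
        entry = {
            "source-ref": f"s3://{bucket}/{key}",
            attribute: class_ids[class_name],
            f"{attribute}-metadata": {
                "confidence": 1,
                "job-name": f"labeling-job/{attribute}",
                "class-name": class_name,
                "human-annotated": "yes",
                "creation-date": created,
                "type": "groundtruth/image-classification",
            },
        }
        lines.append(json.dumps(entry))
    return "".join(line + "\n" for line in lines)


def create_training_dataset(rekognition, s3, project_arn, bucket, keys,
                            manifest_key="manifests/train.manifest"):
    """Upload a generated manifest and create a TRAIN dataset from it."""
    manifest = build_manifest(bucket, keys)
    s3.put_object(Bucket=bucket, Key=manifest_key, Body=manifest.encode("utf-8"))
    response = rekognition.create_dataset(
        ProjectArn=project_arn,
        DatasetType="TRAIN",
        DatasetSource={"GroundTruthManifest": {"S3Object": {"Bucket": bucket, "Name": manifest_key}}},
    )
    return response["DatasetArn"]
```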

Train the model

After you have reviewed your dataset, you can now train the model.

- Choose Train model.

- For Choose project, enter the ARN for your project if it’s not already listed.

- Choose Train model.

In the Models section of the project page, you can check the current status in the Model status column while training is in progress. Training typically takes 30 minutes to 24 hours to complete, depending on factors such as the number of images and labels in the training set and the types of ML algorithms used to train your model.

When the model training is complete, you can see the model status as TRAINING_COMPLETED. If the training fails, refer to Debugging a failed model training.
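Training can also be started and monitored from code. The following boto3 sketch calls CreateProjectVersion and then polls DescribeProjectVersions until the status is TRAINING_COMPLETED or TRAINING_FAILED. It assumes the project already has its datasets attached, so no training or testing data is passed explicitly. The output bucket, prefix, polling interval, 24-hour timeout, and function names are illustrative choices.

```python
import time

TERMINAL_TRAINING_STATES = {"TRAINING_COMPLETED", "TRAINING_FAILED"}


def start_training(client, project_arn, version_name, output_bucket, output_prefix):
    """Start training a new model version and return its ARN."""
    # Assumes the project has datasets attached, so none are passed here.
    response = client.create_project_version(
        ProjectArn=project_arn,
        VersionName=version_name,
        OutputConfig={"S3Bucket": output_bucket, "S3KeyPrefix": output_prefix},
    )
    return response["ProjectVersionArn"]


def wait_for_training(client, project_arn, version_name,
                      poll_seconds=120, timeout_seconds=24 * 3600):
    """Poll DescribeProjectVersions until training completes or fails."""
    deadline = time.monotonic() + timeout_seconds
    while True:
        response = client.describe_project_versions(
            ProjectArn=project_arn, VersionNames=[version_name]
        )
        status = response["ProjectVersionDescriptions"][0]["Status"]
        if status in TERMINAL_TRAINING_STATES:
            return status
        if time.monotonic() >= deadline:
            raise TimeoutError(f"Training still {status} after {timeout_seconds} seconds")
        time.sleep(poll_seconds)
```

boto3 also provides a `project_version_training_completed` waiter for Rekognition; an explicit loop is used here so the polling interval and timeout are easy to see and adjust.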

Evaluate the model

Open the model details page. The Evaluation tab shows metrics for each label, and the average metric for the entire test dataset.

The Rekognition Custom Labels console provides metrics such as precision, recall, and F1 score, both as a summary of the training results and for each label.

You can view the results of your trained model for individual images, as shown in the following screenshot.
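The same metrics are also available programmatically. DescribeProjectVersions returns an EvaluationResult with the overall F1 score and the S3 location of a summary file containing the detailed results. The following sketch assumes boto3 clients for Rekognition and S3; the function names are hypothetical, and the summary file is returned as parsed JSON without assuming its exact schema.

```python
import json


def evaluation_location(version_description):
    """Pull the overall F1 score and the S3 location of the evaluation summary file."""
    result = version_description.get("EvaluationResult", {})
    s3_object = result.get("Summary", {}).get("S3Object", {})
    return result.get("F1Score"), s3_object.get("Bucket"), s3_object.get("Name")


def load_evaluation(rekognition, s3, project_arn, version_name):
    """Return (F1 score, parsed summary JSON) for a trained model version."""
    response = rekognition.describe_project_versions(
        ProjectArn=project_arn, VersionNames=[version_name]
    )
    f1_score, bucket, key = evaluation_location(response["ProjectVersionDescriptions"][0])
    if bucket is None:
        return f1_score, None  # no summary yet, e.g. training still in progress
    body = s3.get_object(Bucket=bucket, Key=key)["Body"].read()
    return f1_score, json.loads(body)
```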

Test the model

Now that we’ve viewed the evaluation results, we’re ready to start the model and analyze new images.

You can start the model on the Use model tab on the Rekognition Custom Labels console, or by using the StartProjectVersion operation via the AWS Command Line Interface (AWS CLI) or Python SDK.
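The following boto3 sketch starts a model with StartProjectVersion and polls until it reports RUNNING or FAILED. `MinInferenceUnits=1` is an illustrative minimum; keep in mind that a running model incurs charges until you stop it. The function name and polling interval are hypothetical.

```python
import time


def start_model(client, project_arn, project_version_arn, version_name,
                min_inference_units=1, poll_seconds=30):
    """Start a trained model and wait until it is RUNNING (or has FAILED)."""
    client.start_project_version(
        ProjectVersionArn=project_version_arn,
        MinInferenceUnits=min_inference_units,
    )
    while True:
        response = client.describe_project_versions(
            ProjectArn=project_arn, VersionNames=[version_name]
        )
        status = response["ProjectVersionDescriptions"][0]["Status"]
        if status in ("RUNNING", "FAILED"):
            return status
        time.sleep(poll_seconds)
```

boto3 also includes a `project_version_running` waiter for Rekognition that serves the same purpose.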

When the model is running, we can analyze new images using the DetectCustomLabels API. The result from DetectCustomLabels is a prediction that the image contains specific objects, scenes, or concepts.
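A minimal sketch of the DetectCustomLabels call with boto3 follows, assuming the model is running and you have its project version ARN. It shows both an S3-hosted image and a local file sent as raw bytes. The function names and the default `MinConfidence` of 50 are illustrative assumptions, not values from this walkthrough.

```python
def _label_pairs(response):
    """Convert a DetectCustomLabels response into (label, confidence) pairs."""
    return [
        (label["Name"], round(label["Confidence"], 1))
        for label in response.get("CustomLabels", [])
    ]


def detect_custom_labels(client, project_version_arn, bucket, key, min_confidence=50.0):
    """Analyze an image stored in S3 with a running custom model."""
    response = client.detect_custom_labels(
        ProjectVersionArn=project_version_arn,
        Image={"S3Object": {"Bucket": bucket, "Name": key}},
        MinConfidence=min_confidence,
    )
    return _label_pairs(response)


def detect_custom_labels_local(client, project_version_arn, image_path, min_confidence=50.0):
    """Same call, but sends a local image's bytes instead of an S3 reference."""
    with open(image_path, "rb") as image_file:
        response = client.detect_custom_labels(
            ProjectVersionArn=project_version_arn,
            Image={"Bytes": image_file.read()},
            MinConfidence=min_confidence,
        )
    return _label_pairs(response)
```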

In the output, you can see the label with its confidence score.

As the result shows, with just a few simple clicks you can use Rekognition Custom Labels to achieve accurate labeling outcomes. You can apply this approach to a multitude of image use cases, such as custom labeling of food products, pets, machine parts, and more.

Clean up

To clean up the resources you created as part of this post and avoid any potential recurring costs, complete the following steps:

- On the Use model tab, stop the model.

Alternatively, you can stop the model using the StopProjectVersion operation via the AWS CLI or Python SDK. Wait until the model is in the Stopped state before continuing to the next steps.

- Delete the model.

- Delete the project.

- Delete the dataset.

- Empty the S3 bucket contents and delete the bucket.
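These cleanup steps can also be scripted. The following boto3 sketch stops the model, deletes the model version, deletes the project's datasets, deletes the project, and finally empties and deletes the bucket. It deletes the datasets before the project as a conservative ordering, waits on the asynchronous deletions by polling, and assumes an unversioned bucket (a versioned bucket would also need its object versions removed). Function names and polling intervals are hypothetical.

```python
import time


def stop_model(client, project_arn, project_version_arn, version_name, poll_seconds=30):
    """Stop a running model and wait until it leaves RUNNING/STOPPING."""
    client.stop_project_version(ProjectVersionArn=project_version_arn)
    while True:
        response = client.describe_project_versions(
            ProjectArn=project_arn, VersionNames=[version_name]
        )
        status = response["ProjectVersionDescriptions"][0]["Status"]
        if status not in ("RUNNING", "STOPPING"):
            return status  # normally STOPPED
        time.sleep(poll_seconds)


def dataset_arns(describe_projects_response):
    """Collect the ARNs of all datasets listed in a DescribeProjects response."""
    return [
        dataset["DatasetArn"]
        for project in describe_projects_response.get("ProjectDescriptions", [])
        for dataset in project.get("Datasets", [])
    ]


def _version_exists(client, project_arn, project_version_arn):
    """True while the given model version still appears in the project (first page only)."""
    response = client.describe_project_versions(ProjectArn=project_arn)
    return any(
        version.get("ProjectVersionArn") == project_version_arn
        for version in response.get("ProjectVersionDescriptions", [])
    )


def delete_project_resources(client, project_name, project_arn, project_version_arn,
                             poll_seconds=10):
    """Delete the model version, then the project's datasets, then the project."""
    client.delete_project_version(ProjectVersionArn=project_version_arn)
    while _version_exists(client, project_arn, project_version_arn):
        time.sleep(poll_seconds)  # model deletion is asynchronous
    for arn in dataset_arns(client.describe_projects(ProjectNames=[project_name])):
        client.delete_dataset(DatasetArn=arn)
    while dataset_arns(client.describe_projects(ProjectNames=[project_name])):
        time.sleep(poll_seconds)  # dataset deletion is asynchronous too
    client.delete_project(ProjectArn=project_arn)


def empty_and_delete_bucket(s3_resource, bucket_name):
    """Delete every object in an unversioned bucket, then the bucket itself."""
    bucket = s3_resource.Bucket(bucket_name)
    bucket.objects.all().delete()
    bucket.delete()
```

Here `client` would be `boto3.client("rekognition")` and `s3_resource` would be `boto3.resource("s3")`.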

Conclusion

In this post, we showed how to use Rekognition Custom Labels to detect a specific building in images.

You can get started with your custom image datasets, and with a few simple clicks on the Rekognition Custom Labels console, you can train your model and detect objects in images. Rekognition Custom Labels can automatically load and inspect the data, select the right ML algorithms, train a model, and provide model performance metrics. You can review detailed performance metrics such as precision, recall, F1 scores, and confidence scores.

Amazon Rekognition can already identify popular buildings such as the Empire State Building in New York City, the Taj Mahal in India, and many others across the world, pre-labeled and ready to use in your applications. If you have landmarks that Amazon Rekognition Labels doesn't support yet, try Amazon Rekognition Custom Labels.

For more information about using custom labels, see What Is Amazon Rekognition Custom Labels? Also, visit our GitHub repo for an end-to-end workflow of Amazon Rekognition custom brand detection.

About the Authors:

Suresh Patnam is a Principal BDM – GTM AI/ML Leader at AWS. He works with customers to build IT strategy, making digital transformation through the cloud more accessible by leveraging Data & AI/ML. In his spare time, Suresh enjoys playing tennis and spending time with his family.

Bunny Kaushik is a Solutions Architect at AWS. He is passionate about building AI/ML solutions on AWS and helping customers innovate on the AWS platform. Outside of work, he enjoys hiking, climbing, and swimming.
