Artificial Intelligence

Category: Amazon Machine Learning

Claude Code deployment patterns and best practices with Amazon Bedrock

In this post, we explore deployment patterns and best practices for Claude Code with Amazon Bedrock, covering authentication methods, infrastructure decisions, and monitoring strategies to help enterprises deploy securely at scale. We recommend using Direct IdP integration for authentication, a dedicated AWS account for infrastructure, and OpenTelemetry with CloudWatch dashboards for comprehensive monitoring to ensure secure access, capacity management, and visibility into costs and developer productivity.

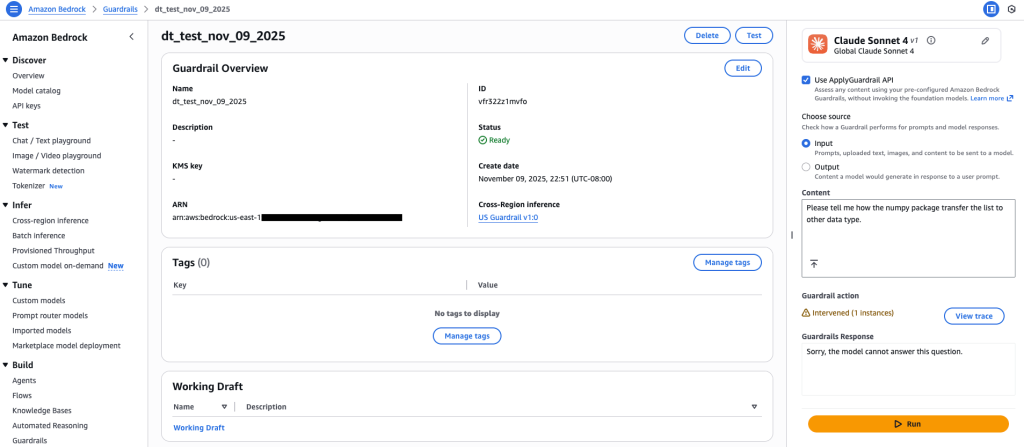

Amazon Bedrock Guardrails expands support for code domain

Amazon Bedrock Guardrails now extends its safety controls to protect code generation across twelve programming languages, addressing critical security challenges in AI-assisted software development. In this post, we explore how to configure content filters, prompt attack detection, denied topics, and sensitive information filters to safeguard against threats like prompt injection, data exfiltration, and malicious code generation while maintaining developer productivity.

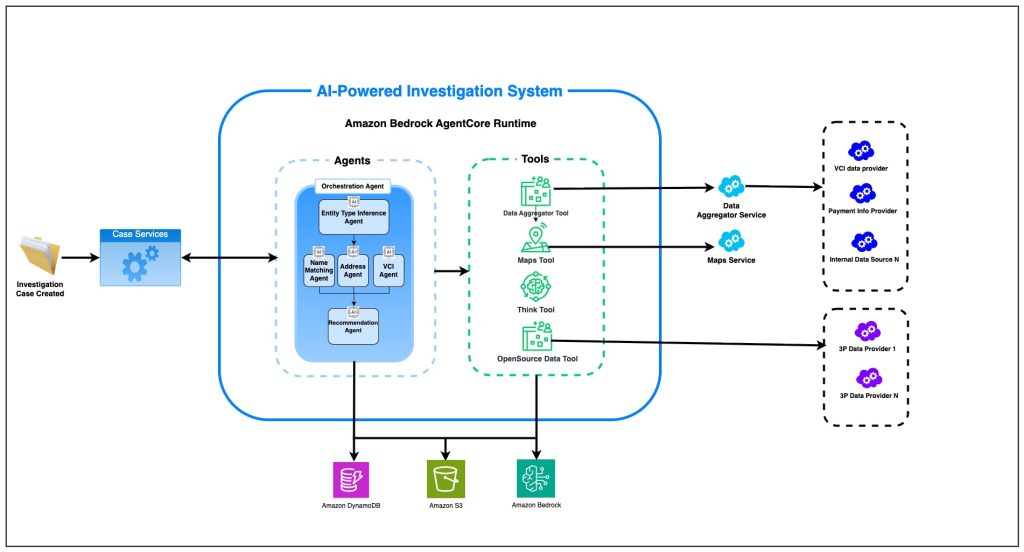

How Amazon uses AI agents to support compliance screening of billions of transactions per day

Amazon’s AI-powered Compliance Screening system tackles complex compliance challenges through autonomous agents that analyze, reason through, and resolve cases with precision. This blog post explores how Amazon’s Compliance team built its AI-powered investigation system using a series of AI agents built on AWS.

Build an agentic solution with Amazon Nova, Snowflake, and LangGraph

In this post, we cover how you can use tools from Snowflake AI Data Cloud and Amazon Web Services (AWS) to build generative AI solutions that organizations can use to make data-driven decisions, increase operational efficiency, and ultimately gain a competitive edge.

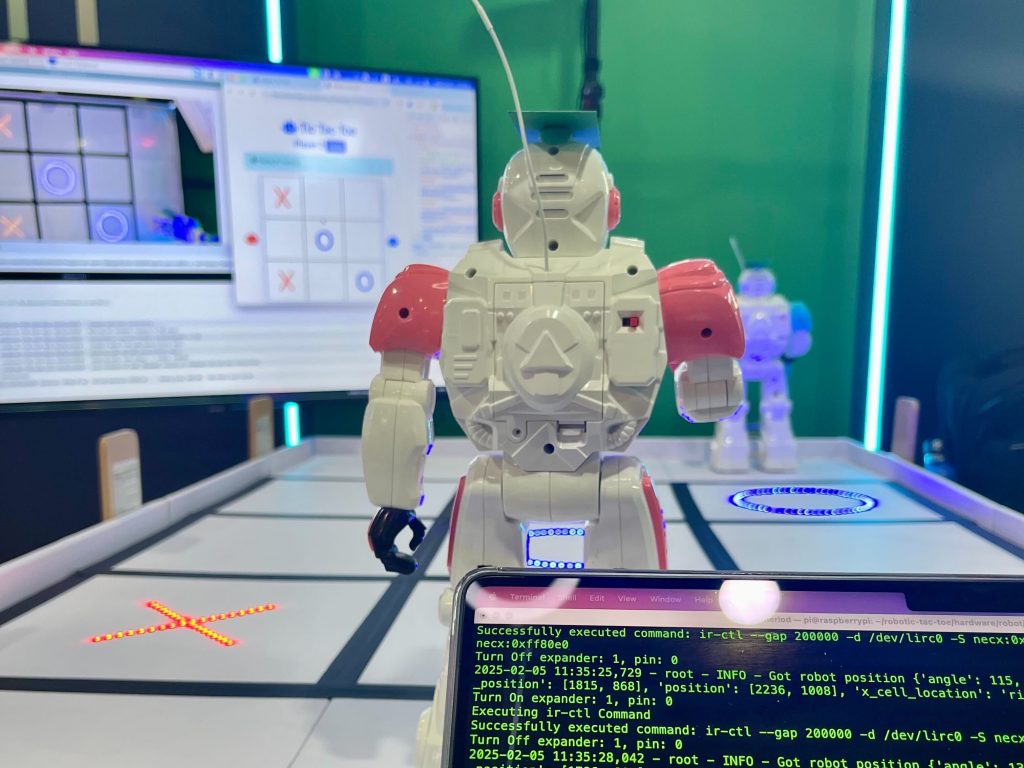

Bringing tic-tac-toe to life with AWS AI services

RoboTic-Tac-Toe is an interactive game where two physical robots move around a tic-tac-toe board, with both the gameplay and robots’ movements orchestrated by LLMs. Players can control the robots using natural language commands, directing them to place their markers on the game board. In this post, we explore the architecture and prompt engineering techniques used to reason about a tic-tac-toe game and decide the next best game strategy and movement plan for the current player.

Accelerating generative AI applications with a platform engineering approach

In this post, I will illustrate how applying platform engineering principles to generative AI unlocks faster time-to-value, cost control, and scalable innovation.

Accelerate enterprise solutions with agentic AI-powered consulting: Introducing AWS Professional Service Agents

I’m excited to announce that AWS Professional Services now offers specialized AI agents, including the AWS Professional Services Delivery Agent. This represents a transformation of the consulting experience, embedding intelligent agents throughout the consulting life cycle to deliver better value for customers.

Amazon Bedrock AgentCore and Claude: Transforming business with agentic AI

In this post, we explore how Amazon Bedrock AgentCore and Claude are enabling enterprises like Cox Automotive and Druva to deploy production-ready agentic AI systems that deliver measurable business value, with results including up to 63% autonomous issue resolution and 58% faster response times. We examine the technical foundation combining Claude’s frontier AI capabilities with AgentCore’s enterprise-grade infrastructure that allows organizations to focus on agent logic rather than building complex operational systems from scratch.

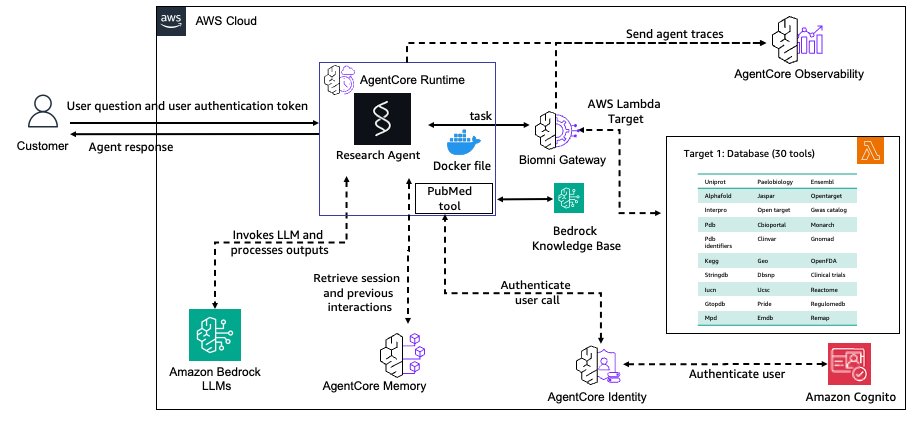

Build a biomedical research agent with Biomni tools and Amazon Bedrock AgentCore Gateway

In this post, we demonstrate how to build a production-ready biomedical research agent by integrating Biomni’s specialized tools with Amazon Bedrock AgentCore Gateway, enabling researchers to access over 30 biomedical databases through a secure, scalable infrastructure. The implementation showcases how to transform research prototypes into enterprise-grade systems with persistent memory, semantic tool discovery, and comprehensive observability for scientific reproducibility.

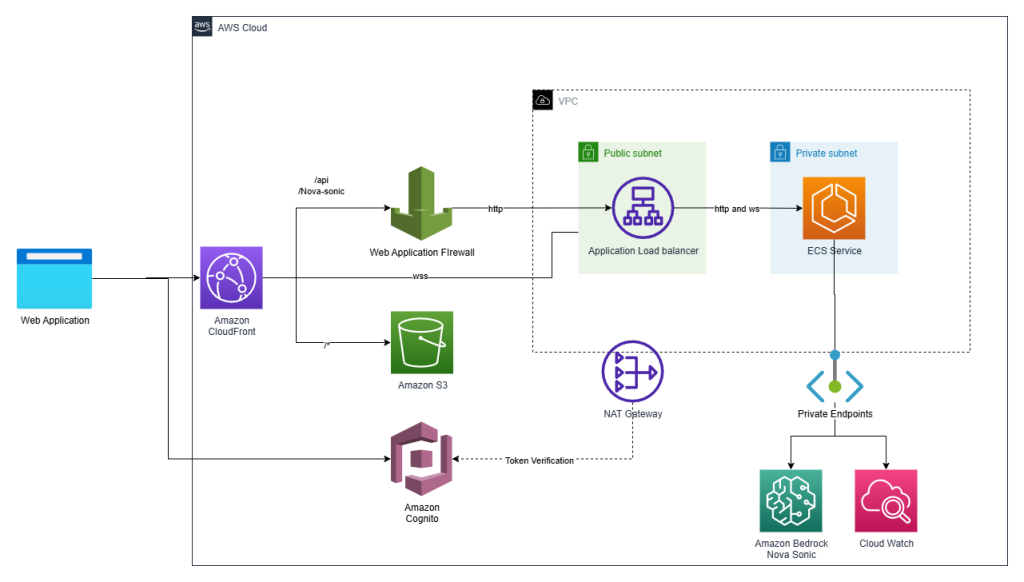

Make your web apps hands-free with Amazon Nova Sonic

Graphical user interfaces have carried the torch for decades, but today’s users increasingly expect to talk to their applications. In this post, we show how we added a true voice-first experience to a reference application, the Smart Todo App, turning routine task management into a fluid, hands-free conversation.