Artificial Intelligence

Accelerated PyTorch inference with torch.compile on AWS Graviton processors

Originally, PyTorch used an eager mode in which each PyTorch operation that forms the model runs independently as soon as it’s reached. PyTorch 2.0 introduced torch.compile to speed up PyTorch code over the default eager mode. In contrast to eager mode, torch.compile pre-compiles the entire model into a single graph in a manner that’s optimal for […]

Access control for vector stores using metadata filtering with Amazon Bedrock Knowledge Bases

In November 2023, we announced Amazon Bedrock Knowledge Bases as generally available. Knowledge bases allow Amazon Bedrock users to unlock the full potential of Retrieval Augmented Generation (RAG) by seamlessly integrating their company data into the language model’s generation process. This feature allows organizations to harness the power of large language models (LLMs) while making […]

Accenture creates a custom memory-persistent conversational user experience using Amazon Q Business

Traditionally, finding relevant information from documents has been a time-consuming and often frustrating process. Manually sifting through pages upon pages of text, searching for specific details, and synthesizing the information into coherent summaries can be a daunting task. This inefficiency not only hinders productivity but also increases the risk of overlooking critical insights buried within […]

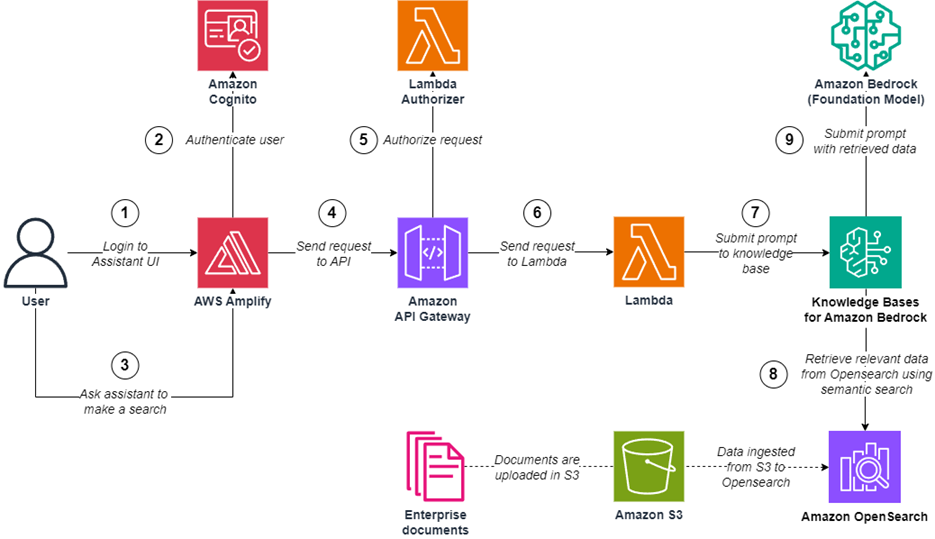

Create an end-to-end serverless digital assistant for semantic search with Amazon Bedrock

With the rise of generative artificial intelligence (AI), an increasing number of organizations use digital assistants to have their end-users ask domain-specific questions, using Retrieval Augmented Generation (RAG) over their enterprise data sources. As organizations transition from proofs of concept to production workloads, they establish objectives to run and scale their workloads with minimal operational […]

Build a self-service digital assistant using Amazon Lex and Amazon Bedrock Knowledge Bases

Organizations strive to implement efficient, scalable, cost-effective, and automated customer support solutions without compromising the customer experience. Generative artificial intelligence (AI)-powered chatbots play a crucial role in delivering human-like interactions by providing responses from a knowledge base without the involvement of live agents. These chatbots can be efficiently utilized for handling generic inquiries, freeing up […]

Identify idle endpoints in Amazon SageMaker

Amazon SageMaker is a machine learning (ML) platform designed to simplify the process of building, training, deploying, and managing ML models at scale. With a comprehensive suite of tools and services, SageMaker offers developers and data scientists the resources they need to accelerate the development and deployment of ML solutions. In today’s fast-paced technological landscape, […]

Indian language RAG with Cohere multilingual embeddings and Anthropic Claude 3 on Amazon Bedrock

Media and entertainment companies serve multilingual audiences with a wide range of content catering to diverse audience segments. These enterprises have access to massive amounts of data collected over their many years of operations. Much of this data is unstructured text and images. Conventional approaches to analyzing unstructured data for generating new content rely on […]

The future of productivity agents with NinjaTech AI and AWS Trainium

NinjaTech AI’s mission is to make everyone more productive by taking care of time-consuming, complex tasks with fast and affordable artificial intelligence (AI) agents. We recently launched MyNinja.ai, one of the world’s first multi-agent personal AI assistants, to drive towards our mission. MyNinja.ai is built from the ground up using specialized agents that are capable of completing tasks on your behalf, including scheduling meetings, conducting deep research from the web, generating code, and helping with writing. These agents can break down complicated, multi-step tasks into branched solutions, and are capable of evaluating the generated solutions dynamically while continually learning from past experiences. All of these tasks are accomplished in a fully autonomous and asynchronous manner, freeing you up to continue your day while Ninja works on these tasks in the background and engages you when your input is required.

Build generative AI applications on Amazon Bedrock — the secure, compliant, and responsible foundation

Generative AI has revolutionized industries by creating content, from text and images to audio and code. Although it can unlock numerous possibilities, integrating generative AI into applications demands meticulous planning. Amazon Bedrock is a fully managed service that provides access to large language models (LLMs) and other foundation models (FMs) from leading AI companies through a […]

Build a conversational chatbot using different LLMs within single interface – Part 1

With the advent of generative artificial intelligence (AI), foundation models (FMs) can generate content, for example by answering questions, summarizing text, and providing highlights from a source document. However, model selection involves a wide choice of providers, such as Amazon, Anthropic, AI21 Labs, Cohere, and Meta, coupled with discrete real-world data formats in PDF, […]