AWS for M&E Blog

Build a compelling eCommerce experience with Amazon Interactive Video Service

Introduction

Amazon Interactive Video Service (Amazon IVS) makes creating live video streams simpler than ever. This means you can spend more time creating live content and features to inspire, engage, and entice viewers.

Amazon IVS helps you build a compelling, interactive platform that goes beyond traditional eCommerce experiences. In this post, I show you how to use Amazon IVS and its timed metadata API to highlight products on a web app interface as they are talked about in the live stream. This makes it easier for viewers to browse product details and make purchases in real time.

In this post, we use the serverless technologies Amazon IVS, AWS Lambda, Amazon API Gateway, and Amazon DynamoDB. The web user interface is a single-page application built with responsive web design frameworks and techniques that provide a native app-like experience tailored to the viewer’s device. This tutorial uses the timed metadata feature covered in a previous blog post that details how to build an interactive poll. Here, I show you how to share more information about the products being discussed in a livestream to create a new level of interactivity with the viewers!

Please note that while the underlying services such as API Gateway are highly scalable and secure, the design of this tutorial is not intended for production use.

Prerequisites

- Node.js (Installing Node.js)

- AWS Command Line Interface (AWS CLI)

- AWS Serverless Application Model (AWS SAM)

- An AWS account with permissions to create AWS Identity and Access Management (AWS IAM) roles, Amazon Simple Storage Service (Amazon S3) buckets, Amazon DynamoDB tables, and AWS Lambda functions

Tutorial

Getting started: clone the repository and use variables

First, let’s clone the Amazon IVS eCommerce demo code from GitHub using your method of choice. The following steps describe how to do this using the Terminal or iTerm2 app on macOS:

- Open the Terminal app.

- Navigate to a directory where you want to store the app code. For example:

cd ~/git

- Clone the demo code repository:

git clone https://github.com/aws-samples/amazon-ivs-ecommerce-web-demo.git

In this tutorial, we begin with the “serverless” directory inside the cloned repository:

cd amazon-ivs-ecommerce-web-demo/serverless

In the next steps, we replace most of the text in the commands and files from the repo’s instructions. Since we are in a Unix/Linux environment, let’s use “bash variables” to our benefit. These variables only work in this session and disappear if you open a new terminal session. We must set three variables:

DEMO_BUCKET="ivs-ecommerce-demo-myname123"

DEMO_REGION="us-west-2"

DEMO_STACK="ivs-ecommerce-demo-stack"

Note: The S3 bucket name has to be globally unique. If you get an error that the name has been taken, you can set that variable again with a new name.

You can test the variables using the echo command:

echo $DEMO_BUCKET

echo $DEMO_REGION

echo $DEMO_STACK
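A malformed bucket name only shows up as an error when the bucket is created, so it can help to check the name locally first. The following is a minimal sketch and not part of the repository: is_valid_bucket_name is a hypothetical helper that checks only the basic S3 naming rules (3 to 63 characters; lowercase letters, digits, dots, and hyphens; starting and ending with a letter or digit). It cannot tell you whether the name is already taken.

```shell
# Hypothetical helper (not part of the repository): basic S3 bucket-name check.
# Exit status 0 means the name passes these basic rules, 1 means it does not.
is_valid_bucket_name() {
  printf '%s\n' "$1" |
    LC_ALL=C grep -Eq '^[a-z0-9][a-z0-9.-]{1,61}[a-z0-9]$'
}

# Usage:
#   is_valid_bucket_name "$DEMO_BUCKET" && echo "name looks valid"
```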

I try to keep the step numbers below the same as the ones in the repository so you can go back and forth and keep pace if needed. I provide slightly different methods than the repository’s instructions, but both give you the same results.

1. Create the S3 bucket

First we follow the instructions from the README.md in the “serverless” directory, using the new variables to speed up the process:

aws s3api create-bucket --bucket $DEMO_BUCKET --region $DEMO_REGION --create-bucket-configuration LocationConstraint=$DEMO_REGION
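One caveat if you pick a different region: us-east-1 is the default S3 location, and create-bucket rejects a LocationConstraint of us-east-1. The sketch below wraps the command so the constraint is only passed for other regions. The function name create_demo_bucket is hypothetical and not part of the repository.

```shell
# Hypothetical wrapper (not part of the repository).
# $1 = bucket name, $2 = region.
create_demo_bucket() {
  if [ "$2" = "us-east-1" ]; then
    # us-east-1 must be created without a LocationConstraint.
    aws s3api create-bucket --bucket "$1" --region "$2"
  else
    aws s3api create-bucket --bucket "$1" --region "$2" \
      --create-bucket-configuration "LocationConstraint=$2"
  fi
}

# Usage:
#   create_demo_bucket "$DEMO_BUCKET" "$DEMO_REGION"
```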

2. Pack template with SAM

Please make sure you have SAM installed and continue:

sam package --template-file template.yaml --output-template-file packaged.yaml --s3-bucket $DEMO_BUCKET

Note: Ignore the “Execute the following command” message. We will create our own in the next step!

3. Deploy AWS CloudFormation with SAM

sam deploy --template-file packaged.yaml --stack-name $DEMO_STACK --capabilities CAPABILITY_IAM

Note: If you already have a DynamoDB table named “products”, refer to the optional step in the repo instructions. That change deviates a bit from this tutorial, so I advise you to follow the instructions in the repo instead.

You can watch the progress of the CloudFormation template as it deploys. When complete, there is a “Successfully created/updated stack” message at the bottom. You need the “SimpleProductEndpointURL” in a later step, so keep that handy.

4a. Import sample product images

There are sample images provided in the “products” directory of this repo. Each of these files is listed under the product “id” it has in the DynamoDB table. We upload these images to an S3 bucket and update the permissions so that the world can access the images via HTTPS.

Notice: I added an --acl public-read parameter to this command to allow “public read” access to these files only, and not to the whole S3 bucket. It’s best practice not to give read permission to a full S3 bucket. The --acl parameter allows us to give public-read access on a per-file basis.

aws s3 cp ./product_images/ s3://$DEMO_BUCKET/products --recursive --exclude "*" --include "*.jpg" --acl public-read

Note: Since we are using the --acl public-read parameter, we do not need to do the put-bucket-policy step from the repo instructions.

Advice: In a production environment, I recommend using an Amazon CloudFront distribution in front of your S3 bucket when it is used as a static file origin.

4b. Import sample product data

Next, we must update the products.json file to point back to this S3 bucket now that we have given it access. Let’s use sed to make the changes for us:

sed -i '' "s/<my-bucket-name>/$DEMO_BUCKET/g" products.json

sed -i '' "s/<my-region>/$DEMO_REGION/g" products.json

Use cat and grep to quickly confirm the changes, then copy one of the URLs into your favorite web browser to confirm we are on the right track.

cat products.json | grep imageUrl

Note: If the sed commands return an error, omit the '' after the -i, as the empty single quotes are only required on macOS.
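If you would rather not remember which form of -i your platform needs, one option is to give sed an explicit backup suffix, which both GNU sed (Linux) and BSD sed (macOS) accept, and then delete the backup. This is a sketch under that assumption. The helper name replace_placeholder is hypothetical and not part of the repository.

```shell
# Hypothetical helper: replace every occurrence of a placeholder in a file.
# "sed -i.bak" works with both GNU sed and BSD sed; the .bak backup it
# leaves behind is removed afterwards.
# Caveat: the placeholder and the value must not contain "/" or other
# characters that are special to sed (such as "&" in the value).
# $1 = file, $2 = placeholder, $3 = replacement value.
replace_placeholder() {
  sed -i.bak "s/$2/$3/g" "$1" && rm -f "$1.bak"
}

# Usage (the same substitutions as the two sed commands above):
#   replace_placeholder products.json "<my-bucket-name>" "$DEMO_BUCKET"
#   replace_placeholder products.json "<my-region>" "$DEMO_REGION"
```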

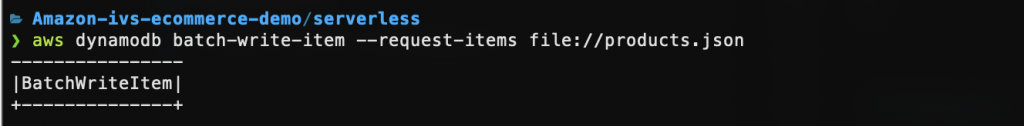

After you have prepared the products.json file, it is time to import it into the DynamoDB table.

aws dynamodb batch-write-item --request-items file://products.json

5. Verify data import

We can use the AWS CLI to confirm that the batch-write-item command was successful by scanning the whole table. A lot of data is returned, so it may not all fit neatly on your screen.

aws dynamodb scan --table-name products

Next, we can do a get-item of a single entry in our database table:

aws dynamodb get-item --table-name products --key '{"id":{"S":"1000567890"}}'
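Typing the nested key JSON by hand is error-prone, so a small helper can build it from a product id. This is a sketch only: product_key is a hypothetical name, and it assumes the key attribute is a string named “id”, as in the command above. The usage comments also show how to ask scan for an item count only, which is easier to read than the full output.

```shell
# Hypothetical helper: print the DynamoDB key JSON for a product id.
# Assumes the key attribute is a string ("S") named "id".
product_key() {
  printf '{"id":{"S":"%s"}}\n' "$1"
}

# Usage:
#   aws dynamodb get-item --table-name products --key "$(product_key 1000567890)"
#
# To print only the number of items in the table:
#   aws dynamodb scan --table-name products --select COUNT --query Count --output text
```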

6. Verify API Gateway

If you did not take note of the “SimpleProductEndpointURL” from step 3, you may use this command to retrieve it:

aws cloudformation describe-stacks --stack-name $DEMO_STACK --query 'Stacks[].Outputs'
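The command above prints every stack output. To capture just one value in a variable, the --query expression can filter by output key. The sketch below assumes that the output key is literally named SimpleProductEndpointURL. The helper name get_stack_output is hypothetical.

```shell
# Hypothetical helper: print a single CloudFormation output value.
# $1 = stack name, $2 = output key.
get_stack_output() {
  aws cloudformation describe-stacks \
    --stack-name "$1" \
    --query "Stacks[0].Outputs[?OutputKey=='$2'].OutputValue" \
    --output text
}

# Usage (assumes the output key is named SimpleProductEndpointURL):
#   API_URL=$(get_stack_output "$DEMO_STACK" SimpleProductEndpointURL)
#   echo "$API_URL"
```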

We can use the curl command or a web browser to verify.

curl "https://abc123.execute-api.us-west-2.amazonaws.com/Prod/"

curl "https://abc123.execute-api.us-west-2.amazonaws.com/Prod/?productId=1000567890"
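If you only want to know whether the endpoint answers, without reading the JSON body, curl can print just the HTTP status code. This is a small sketch: http_status is a hypothetical helper, and API_URL stands for your own SimpleProductEndpointURL value.

```shell
# Hypothetical helper: print only the HTTP status code returned by a URL.
http_status() {
  curl -s -o /dev/null -w '%{http_code}' "$1"
}

# Usage (API_URL is a placeholder for your own endpoint URL):
#   http_status "$API_URL"
#   http_status "${API_URL}?productId=1000567890"
```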

7. Deploy eCommerce web UI

Follow the instructions from the README.md in the “web-ui” directory to make sure that you have “Node.js” installed.

Next, it takes only two steps using the npm command to install and start a webserver that hosts this interactive portion of the demo.

npm install

This command downloads all the dependencies required for this tutorial.

If we run npm start before we edit the configuration files, the demo does not use your newly created environment. This project defaults to a “mock” configuration instead. We must edit the file located at src/config.js and make the following changes:

// Enabling USE_MOCK_DATA will not require an eCommerce demo Backend (see serverless\README.md)

export const USE_MOCK_DATA = false;

// API endpoint for retrieving the products list

export const GET_PRODUCTS_API = "<PASTE SimpleProductEndpointURL HERE>";

You are now ready to do the first true “end-to-end” test of this demo!

npm start

Use your favorite web browser and navigate to http://localhost:3000 if the npm start command did not do that for you. You may also open up “Developer Tools” and use the “Network” tool to confirm the domain name the JPG files are loaded from. You may need to refresh the page if you did not have the “Network” tab open before loading the page. You should see your S3 bucket as the subdomain name.

If you hover your pointer over the video player, you see a black box that shows each timed metadata event as it arrives in the livestream. You may also use the “Console” from the “Developer Tools” to see the same information.

Looking at the metadata, you can see that a productId is sent every second. About every 5 seconds the productId changes. Repeating the productId every second may seem redundant, but keep in mind that the timed metadata payload is only available through the live stream. If a viewer begins watching sometime after the initial change of the productId, they might miss that event. To address that, timed metadata events are sent at regular intervals.
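Because the same productId arrives many times, a viewer-side handler only needs to react when the value differs from the previous event. The sketch below illustrates that logic in shell for consistency with the rest of this tutorial; it is not the web UI’s code. The name product_changes is hypothetical, and it assumes one productId per input line, one line per event.

```shell
# Sketch only: read one productId per line on stdin (one line per timed
# metadata event) and print a productId only when it differs from the
# previous event, that is, when the highlighted product should change.
product_changes() {
  awk 'NR == 1 || ($0 "") != (prev "") { print } { prev = $0 }'
}

# Example: five events, with the product changing twice.
#   printf '%s\n' 1000567890 1000567890 1000567891 1000567891 1000567892 | product_changes
# prints 1000567890, 1000567891, and 1000567892, one per line.
```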

8. Using your own Amazon IVS stream

This demo comes with an Amazon IVS demo stream that already has timed metadata inserted. We encourage you to use your own stream and trigger your own timed metadata events. Please use the following documentation to create a new stream and insert timed metadata into your stream:

- Creating a Channel: https://docs.aws.amazon.com/ivs/latest/userguide/GSIVS-create-channel.html

- Setting up a streaming software: https://docs.aws.amazon.com/ivs/latest/userguide/GSIVS-live-streaming.html

- Embedding timed metadata within a stream: https://docs.aws.amazon.com/ivs/latest/userguide/SEM.html

Tip: If you prefer to use ffmpeg to stream a VOD file from your local machine or from a remote Amazon EC2 instance, use this script and change the variables at the top of the file for your own stream configuration. The script’s ffmpeg parameters are set to use the “Basic” channel type to save on costs. Make sure to also configure your Amazon IVS Channel to “Basic” in the “Channel Type” section!

Note: After creating your Amazon IVS channel, make sure to note its “Playback configuration” as you will need the playback URL in the next step.

When you complete the creation of your channel and are streaming to it, return to the src/config.js file. Replace the following variable with the playback URL of the channel you created:

// Default video stream to play inside the video player

// Replace this with your own Amazon IVS Playback URL

export const DEFAULT_VIDEO_STREAM = "<YOUR CHANNEL's PLAYBACK URL>";

After you save this file, you may need to end the running session by pressing ctrl+c. Now run the npm start command again to confirm that your changes have taken effect.

Next, we must send timed metadata to your channel. I provide a sample AWS CLI command. Please take note of the “ARN” that is in the “General configuration” section of your channel in the AWS Management Console. Use it in the following command:

aws ivs put-metadata --channel-arn "CHANNEL_ARN" --metadata '{"productId":"1000567892"}'

You can replace the --metadata payload with any “productId” present in the products.json file or the DynamoDB table.

Now that we have swapped the channel’s content for your own, this is a perfect time to replace the products with your own! Review previous steps 4a and 4b. Upload your own images to the S3 bucket (step 4a) and modify the products.json file to reflect those changes. Make sure to change each productId to something new so it doesn’t collide with the ones currently in your DynamoDB table. Use the same process from step 4b to run the batch-write-item command. Finally, use the timed metadata commands to reference your new products.
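A single put-metadata call produces a single event, so viewers who join later would miss it. To mirror the demo stream, which repeats the current productId at regular intervals, you could resend the same payload in a loop. The sketch below is one way to do that. The function name send_product_metadata and its default values are assumptions, and you should check the Amazon IVS documentation for the current put-metadata rate limits before shortening the interval.

```shell
# Hypothetical helper: send the same productId several times.
# $1 = channel ARN, $2 = productId,
# $3 = number of sends (default 5), $4 = seconds between sends (default 1).
send_product_metadata() {
  channel_arn="$1"; product_id="$2"; count="${3:-5}"; interval="${4:-1}"
  i=0
  while [ "$i" -lt "$count" ]; do
    aws ivs put-metadata \
      --channel-arn "$channel_arn" \
      --metadata "{\"productId\":\"$product_id\"}" || return 1
    i=$((i + 1))
    sleep "$interval"
  done
}

# Usage:
#   send_product_metadata "CHANNEL_ARN" 1000567892
```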

Note: You may need to refresh your browser page to get the new products after they have been imported.

9. Clean up

After you are done testing the serverless portion of this demo, complete the following steps to avoid any unwanted charges. We encourage you to build upon this demo like a sandbox, but keep in mind that S3, DynamoDB, and API Gateway have costs associated with them, even if you leave the resources idle. Please see the pricing calculator if you have questions about this. You can continue to use the USE_MOCK_DATA = true feature of the “web-ui” section without needing the “serverless” infrastructure if you want to continue developing on this demo without incurring any additional AWS costs.

If you still have the demo frontend running, end the process by pressing ctrl+c in your terminal.

First, use delete-stack to remove the CloudFormation stack:

aws cloudformation delete-stack --stack-name $DEMO_STACK

Before we delete the S3 bucket, we must empty its contents:

aws s3 rm s3://$DEMO_BUCKET --recursive

Now we can delete the bucket with delete-bucket:

aws s3api delete-bucket --bucket $DEMO_BUCKET --region $DEMO_REGION

Double-check that the CloudFormation stack was successfully deleted. You can do so via the AWS Management Console, or by running the following command:

aws cloudformation describe-stacks --stack-name $DEMO_STACK

You should get a “does not exist” response if it was successfully deleted.
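Stack deletion runs in the background, so describe-stacks may still report the stack for a short while. As an alternative to checking by hand, the AWS CLI has a waiter that blocks until the deletion finishes. The sketch below chains the clean-up commands from this step in the same order and adds that waiter. The function name cleanup_demo is hypothetical.

```shell
# Sketch of the clean-up order from this step, plus one addition:
# "aws cloudformation wait stack-delete-complete" blocks until the stack is gone.
# Each command runs only if the previous one succeeded.
# $1 = stack name, $2 = bucket name, $3 = region.
cleanup_demo() {
  aws cloudformation delete-stack --stack-name "$1" &&
  aws cloudformation wait stack-delete-complete --stack-name "$1" &&
  aws s3 rm "s3://$2" --recursive &&
  aws s3api delete-bucket --bucket "$2" --region "$3"
}

# Usage:
#   cleanup_demo "$DEMO_STACK" "$DEMO_BUCKET" "$DEMO_REGION"
```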

About Amazon IVS

Amazon Interactive Video Service (Amazon IVS) is a managed live streaming solution that is quick and easy to set up, and ideal for creating interactive video experiences. Simply send your live streams to Amazon IVS and the service does everything you need to make low-latency live video available to any viewer around the world, letting you focus on building interactive experiences alongside the live video.