Extracting custom entities from documents with Amazon Textract and Amazon Comprehend

July 2024: This post was reviewed and updated for accuracy.

Amazon Textract is a machine learning (ML) service that makes it easy to extract text and data from scanned documents. Textract goes beyond simple optical character recognition (OCR) to identify the contents of fields in forms and information stored in tables. This allows you to use Amazon Textract to instantly “read” virtually any type of document and accurately extract text and data without needing any manual effort or custom code.

Amazon Textract has multiple applications in a variety of fields. For example, talent management companies can use Amazon Textract to automate the process of extracting a candidate’s skill set. Healthcare organizations can extract patient information from documents to fulfill medical claims.

When your organization processes a variety of documents, you sometimes need to extract entities from unstructured text in the documents. A contract, for example, can contain paragraphs in which names and other contract terms appear in running text rather than in a key/value or form structure. Amazon Comprehend is a natural language processing (NLP) service that can extract key phrases, places, names, organizations, events, sentiment, and more from unstructured text. With custom entity recognition, you can identify new entity types that are not among the preset generic entity types. This allows you to extract business-specific entities to address your needs.

In this post, we show how to extract custom entities from scanned documents using Amazon Textract and Amazon Comprehend.

Use case overview

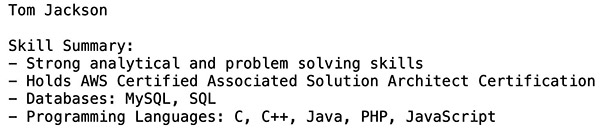

For this post, we process resume documents from the Resume Entities for NER dataset and automate the workflow of extracting insights such as candidates’ skills. We use Amazon Textract to extract text from these resumes and Amazon Comprehend custom entity recognition to detect skills such as AWS, C, and C++ as custom entities. The following screenshot shows a sample input document.

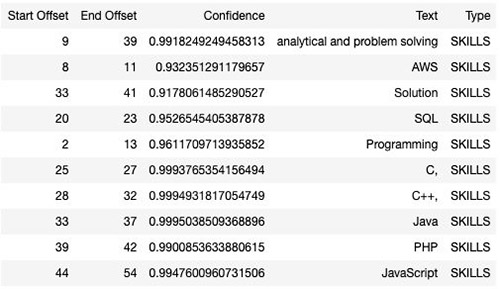

The following screenshot shows the corresponding output generated using Amazon Textract and Amazon Comprehend.

Solution Approaches

There are two main approaches to solve the given use case with Amazon Comprehend, depending on the type of data provided for training and the nature of the documents used for inference.

- Entity List: One approach is to train a custom entity recognition model with Amazon Comprehend by providing an Entity List. With this method, you can supply a list of specific entities that you want the model to learn and identify. This allows Amazon Comprehend to train the model specifically to recognize and extract those custom entities from your data. However, models trained with entity lists can only be used for plaintext documents. If you need to extract custom entities from image files, PDFs, or Word documents, you must first extract the text and convert it to plain text for custom entity extraction.

- Annotations: With this approach, you provide the location of your entities in a number of documents, allowing Amazon Comprehend to train the model on both the entity and its context. This method enables you to pass image files, PDFs, or Word documents directly to the custom entity recognition model for custom entity extraction.

In this post, we walk through the implementation of the entity list approach and outline the steps for the annotations approach to solve the use case.

Leveraging Annotations vs entity lists

Creating annotations for Amazon Comprehend takes more work than making an entity list, but it results in a more accurate model because of the added context. An entity list is quicker and requires less labor; however, the resulting model may be less accurate. Annotations are better for ambiguous or context-dependent entities, such as distinguishing between Amazon the river and Amazon the company. They enable Amazon Comprehend to use the surrounding context during training, so the model identifies entities more accurately and produces fewer false positives. Annotations are also recommended when working with image files, PDFs, or Word documents, and when a process for producing annotations already exists. An entity list, on the other hand, is a good fit when a comprehensive list of entities is readily available or easy to create. It also suits first-time users who want a less effort-intensive option, potentially at some cost in accuracy. To learn more, see when to use annotations vs entity lists.
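To make the difference concrete, the following minimal sketch writes the two plain-text training formats side by side: an entity list (columns `Text,Type`) and an annotations file (columns `File,Line,Begin Offset,End Offset,Type`). The `SKILLS` entity type, the file name, and the sample sentence are hypothetical values chosen for illustration, and the end offset is assumed to be exclusive.

```python
import csv
import io

ENTITY_TYPE = "SKILLS"  # hypothetical entity type name


def build_entity_list_csv(skills):
    """Return entity-list CSV text: one row per entity under the header Text,Type."""
    buffer = io.StringIO()
    writer = csv.writer(buffer, lineterminator="\n")
    writer.writerow(["Text", "Type"])
    for skill in skills:
        writer.writerow([skill, ENTITY_TYPE])
    return buffer.getvalue()


def build_annotations_csv(file_name, lines, skills):
    """Return annotations CSV text that locates each skill occurrence by offsets.

    Uses a naive substring search, so a short skill such as "C" would also
    match inside "C++"; real annotations normally come from a labeling step.
    """
    buffer = io.StringIO()
    writer = csv.writer(buffer, lineterminator="\n")
    writer.writerow(["File", "Line", "Begin Offset", "End Offset", "Type"])
    for line_number, line in enumerate(lines):
        for skill in skills:
            start = line.find(skill)
            while start != -1:
                # End offset assumed exclusive (begin + length of the entity).
                writer.writerow(
                    [file_name, line_number, start, start + len(skill), ENTITY_TYPE]
                )
                start = line.find(skill, start + 1)
    return buffer.getvalue()
```

The entity list only names the entities, whereas each annotation row ties an entity to a position in a specific document, which is the extra context the model learns from.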

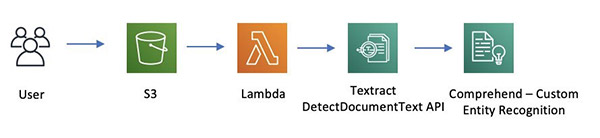

Solution overview – using Entity List

The following diagram shows a serverless architecture that processes incoming documents for custom entity extraction using Amazon Textract and a custom model trained using Amazon Comprehend. When a document is uploaded to an Amazon Simple Storage Service (Amazon S3) bucket, the upload triggers an AWS Lambda function. The function calls the Amazon Textract DetectDocumentText API to extract the text, then calls the Amazon Comprehend DetectEntities API with the extracted text to detect custom entities.
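The flow described above could look roughly like the following Lambda handler. This is a sketch under stated assumptions rather than the code deployed by the stack: it assumes the custom recognizer is served through an Amazon Comprehend endpoint, and the `COMPREHEND_ENDPOINT_ARN` environment variable and the helper names are hypothetical.

```python
import os
import urllib.parse


def lines_from_textract(response):
    """Join the LINE blocks of a DetectDocumentText response into plain text."""
    return "\n".join(
        block["Text"]
        for block in response.get("Blocks", [])
        if block["BlockType"] == "LINE"
    )


def extract_custom_entities(bucket, key, textract, comprehend, endpoint_arn):
    """Run OCR on one S3 object, then detect custom entities in the text."""
    ocr = textract.detect_document_text(
        Document={"S3Object": {"Bucket": bucket, "Name": key}}
    )
    text = lines_from_textract(ocr)
    # Passing EndpointArn routes the request to the custom model.
    result = comprehend.detect_entities(Text=text, EndpointArn=endpoint_arn)
    return [(e["Text"], e["Type"], e["Score"]) for e in result["Entities"]]


def lambda_handler(event, context):
    import boto3  # imported here so the helpers above can be tested offline

    record = event["Records"][0]["s3"]
    bucket = record["bucket"]["name"]
    # Object keys in S3 event notifications are URL-encoded.
    key = urllib.parse.unquote_plus(record["object"]["key"])
    return extract_custom_entities(
        bucket,
        key,
        boto3.client("textract"),
        boto3.client("comprehend"),
        os.environ["COMPREHEND_ENDPOINT_ARN"],  # hypothetical variable name
    )
```

The clients are passed in as arguments so the extraction logic can be exercised with stubs. Both synchronous APIs have size limits, so long or multi-page documents may need the asynchronous calls instead.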

The solution consists of two parts:

- Training:

  - Extract text from PDF documents using Amazon Textract

  - Label the resulting data using Amazon SageMaker Ground Truth

  - Train custom entity recognition using Amazon Comprehend with the labeled data

- Inference:

  - Send the document to Amazon Textract for data extraction

  - Send the extracted data to the Amazon Comprehend custom model for entity extraction

Launching your AWS CloudFormation stack

For this post, we use an AWS CloudFormation stack to deploy the solution and create the resources it needs. These resources include an S3 bucket, Amazon SageMaker instance, and the necessary AWS Identity and Access Management (IAM) roles. For more information about stacks, see Walkthrough: Updating a stack.

- Download the following CloudFormation template and save it to your local disk.

- Sign in to the AWS Management Console with your IAM user name and password.

- On the AWS CloudFormation console, choose Create Stack.

Alternatively, you can choose Launch Stack directly.

- On the Create Stack page, choose Upload a template file and upload the CloudFormation template you downloaded.

- Choose Next.

- On the next page, enter a name for the stack.

- Leave all other settings at their defaults.

- On the Review page, select I acknowledge that AWS CloudFormation might create IAM resources with custom names.

- Choose Create stack.

- Wait for the stack to finish running.

You can examine various events from the stack creation process on the Events tab. After the stack creation is complete, look at the Resources tab to see all the resources the template created.

- On the Outputs tab of the CloudFormation stack, record the Amazon SageMaker instance URL.
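If you prefer to script these console steps, a sketch along the following lines may help. It assumes a boto3 CloudFormation client and a template small enough to pass inline. The `CAPABILITY_NAMED_IAM` capability corresponds to the acknowledgment about IAM resources with custom names. The function names are illustrative, and the output key that holds the Amazon SageMaker instance URL depends on the template, so this sketch returns all outputs.

```python
def create_stack(cloudformation, stack_name, template_body):
    """Create the stack and block until creation completes."""
    cloudformation.create_stack(
        StackName=stack_name,
        TemplateBody=template_body,
        # Equivalent of acknowledging that IAM resources with custom names
        # might be created.
        Capabilities=["CAPABILITY_NAMED_IAM"],
    )
    cloudformation.get_waiter("stack_create_complete").wait(StackName=stack_name)


def stack_outputs(cloudformation, stack_name):
    """Return the stack outputs as a {key: value} dictionary."""
    stack = cloudformation.describe_stacks(StackName=stack_name)["Stacks"][0]
    return {o["OutputKey"]: o["OutputValue"] for o in stack.get("Outputs", [])}
```

A typical call would pass `boto3.client("cloudformation")`, a stack name of your choice, and the contents of the downloaded template file.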

Running the workflow on a Jupyter notebook

To run your workflow, complete the following steps:

- Open the Amazon SageMaker instance URL that you saved from the previous step.

- Under the New drop-down menu, choose Terminal.

- On the terminal, clone the GitHub repository.

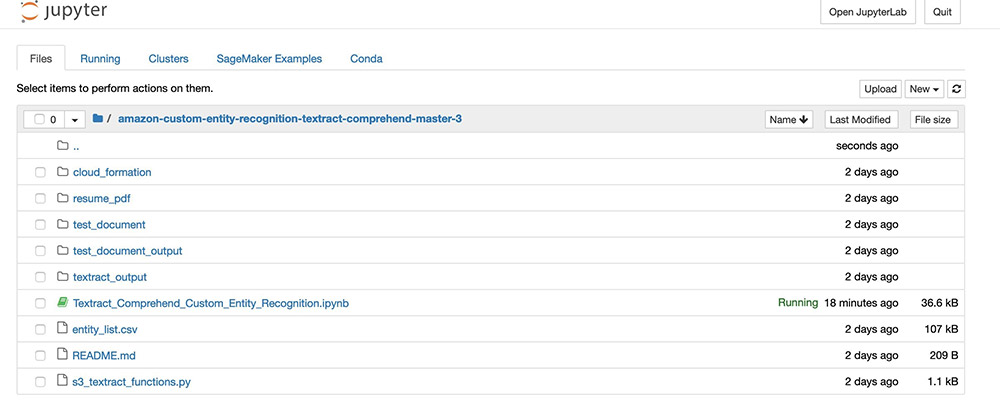

You can check the folder structure (see the following screenshot).

- Open Textract_Comprehend_Custom_Entity_Recognition.ipynb.

- Run the cells.

Code walkthrough

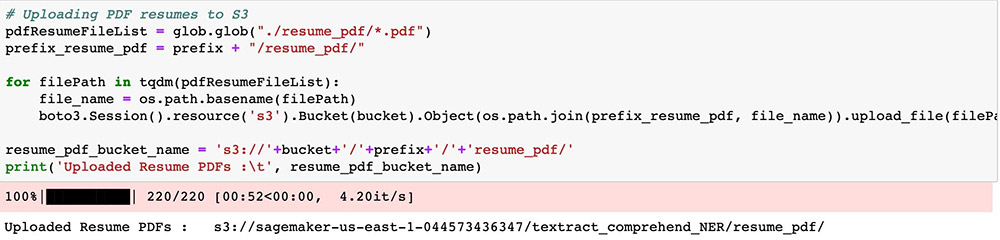

Upload the documents to your S3 bucket.
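As a rough illustration of this step, the following sketch uploads every PDF in a local folder with the boto3 S3 client. The `resumes/` key prefix and the function name are assumptions made for the example, not values taken from the notebook.

```python
from pathlib import Path


def upload_pdfs(s3, local_dir, bucket, prefix="resumes/"):
    """Upload each PDF in local_dir to the bucket and return the object keys."""
    keys = []
    for path in sorted(Path(local_dir).glob("*.pdf")):
        key = f"{prefix}{path.name}"  # hypothetical key layout
        s3.upload_file(str(path), bucket, key)
        keys.append(key)
    return keys
```

With real credentials, `upload_pdfs(boto3.client("s3"), "data", "<your-bucket>")` would return the keys to hand to Amazon Textract in the next step.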

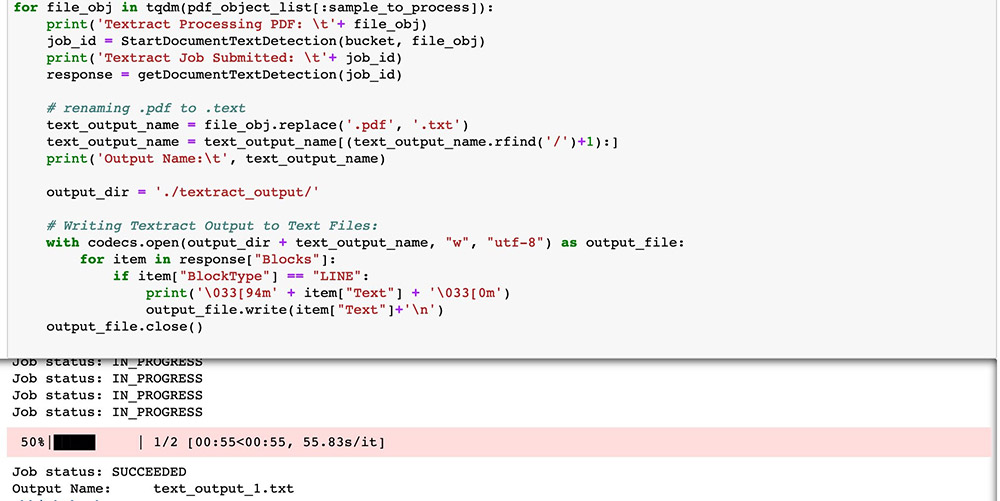

The PDFs are now ready for Amazon Textract to perform OCR. Start the process with a StartDocumentTextDetection asynchronous API call.
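The asynchronous call returns a job ID right away, and the text is fetched later, possibly across several result pages. A minimal sketch of that pattern follows, assuming a boto3 Textract client and simple polling. The function names and polling interval are illustrative, and a production setup might rely on a completion notification instead of polling.

```python
import time


def start_text_detection(textract, bucket, key):
    """Start an asynchronous OCR job for one S3 object and return its job ID."""
    response = textract.start_document_text_detection(
        DocumentLocation={"S3Object": {"Bucket": bucket, "Name": key}}
    )
    return response["JobId"]


def wait_for_lines(textract, job_id, poll_seconds=5):
    """Poll until the job finishes, then collect LINE text from every page."""
    while True:
        page = textract.get_document_text_detection(JobId=job_id)
        if page["JobStatus"] != "IN_PROGRESS":
            break
        time.sleep(poll_seconds)
    if page["JobStatus"] != "SUCCEEDED":
        raise RuntimeError(f"Textract job {job_id} ended as {page['JobStatus']}")

    lines = []
    while True:
        lines += [
            block["Text"]
            for block in page.get("Blocks", [])
            if block["BlockType"] == "LINE"
        ]
        token = page.get("NextToken")
        if not token:
            return lines
        # Results are paginated; follow NextToken until it is absent.
        page = textract.get_document_text_detection(JobId=job_id, NextToken=token)
```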

For this post, we process two resumes in PDF format for demonstration, but you can process all 220 if needed. The results have all been processed and are ready for you to use.

Because we need to train a custom entity recognition model with Amazon Comprehend (as with any ML model), we need training data. In this post, we use Ground Truth to label our entities. By default, Amazon Comprehend can recognize entities like person, title, and organization. For more information, see Detect Entities. To demonstrate custom entity recognition capability, we focus on candidate skills as entities inside these resumes. We have the labeled data from Ground Truth. The data is available in the GitHub repo (see entity_list.csv). For instructions on labeling your data, see Developing NER models with Amazon SageMaker Ground Truth and Amazon Comprehend.

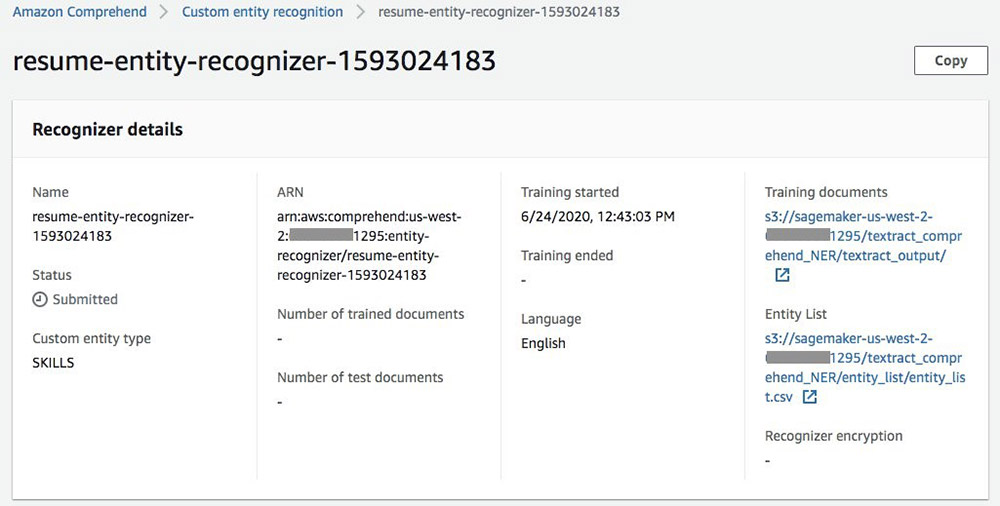

Now we have our raw and labeled data and are ready to train our model. To start the process, use the create_entity_recognizer API call. When the training job is submitted, you can see the recognizer being trained on the Amazon Comprehend console.
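A training request for the entity list approach might be assembled as in the following sketch. It assumes a boto3 Comprehend client. The `SKILLS` entity type, the function names, and the S3 locations passed in are placeholders for illustration and may differ from the values used in the notebook.

```python
def train_recognizer(comprehend, name, role_arn, documents_s3_uri,
                     entity_list_s3_uri, entity_type="SKILLS"):
    """Submit a custom entity recognizer training job and return its ARN."""
    response = comprehend.create_entity_recognizer(
        RecognizerName=name,
        DataAccessRoleArn=role_arn,
        LanguageCode="en",
        InputDataConfig={
            "EntityTypes": [{"Type": entity_type}],   # hypothetical type name
            "Documents": {"S3Uri": documents_s3_uri},  # raw text from Textract
            "EntityList": {"S3Uri": entity_list_s3_uri},
        },
    )
    return response["EntityRecognizerArn"]


def recognizer_status(comprehend, recognizer_arn):
    """Return the current training status, for example TRAINING or TRAINED."""
    props = comprehend.describe_entity_recognizer(
        EntityRecognizerArn=recognizer_arn
    )
    return props["EntityRecognizerProperties"]["Status"]
```

For the annotations approach, the `EntityList` entry would be replaced by an `Annotations` entry that points to the annotations file.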

During training, Amazon Comprehend sets aside some data for testing. When the recognizer is trained, you can see the performance of each entity and the recognizer overall.

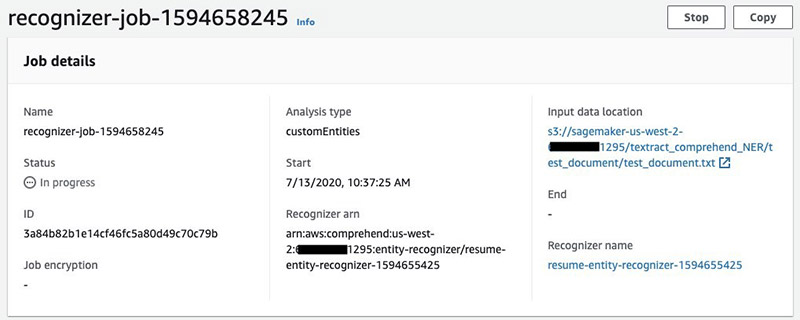

We have prepared a small sample of text to test out the newly trained custom entity recognizer. We run the same step to perform OCR, then upload the Amazon Textract output to Amazon S3 and start a custom recognizer job.

When the job is submitted, you can see the progress on the Amazon Comprehend console under Analysis Jobs.
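Submitting the analysis job and checking on it could be sketched as follows, assuming a boto3 Comprehend client and input text stored with one document per line. The job name, function names, and S3 locations are hypothetical.

```python
def start_custom_entity_job(comprehend, recognizer_arn, role_arn,
                            input_s3_uri, output_s3_uri,
                            job_name="resume-skills-job"):
    """Start an asynchronous entity detection job with the custom recognizer."""
    response = comprehend.start_entities_detection_job(
        JobName=job_name,  # hypothetical job name
        EntityRecognizerArn=recognizer_arn,
        DataAccessRoleArn=role_arn,
        LanguageCode="en",
        InputDataConfig={"S3Uri": input_s3_uri, "InputFormat": "ONE_DOC_PER_LINE"},
        OutputDataConfig={"S3Uri": output_s3_uri},
    )
    return response["JobId"]


def job_output_location(comprehend, job_id):
    """Return the S3 URI of the results once the job completes, else None."""
    props = comprehend.describe_entities_detection_job(JobId=job_id)[
        "EntitiesDetectionJobProperties"
    ]
    if props["JobStatus"] == "COMPLETED":
        return props["OutputDataConfig"]["S3Uri"]
    return None
```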

When the analysis job is complete, you can download the output and see the results. For this post, we converted the JSON result into table format for readability.
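One way to do that conversion is sketched below. It assumes the extracted output file contains one JSON object per line, each with an `Entities` list whose items carry `Text`, `Type`, and `Score`. The column choice and function names are illustrative and may differ from the notebook.

```python
import json


def entities_to_rows(output_text):
    """Flatten JSON-lines job output into (line, text, type, score) tuples."""
    rows = []
    for raw in output_text.splitlines():
        if not raw.strip():
            continue
        record = json.loads(raw)
        for entity in record.get("Entities", []):
            rows.append(
                (record.get("Line"), entity["Text"], entity["Type"], entity["Score"])
            )
    return rows


def format_table(rows):
    """Render the rows as a fixed-width text table with a header line."""
    header = ("Line", "Text", "Type", "Score")
    table = [header] + [tuple(str(value) for value in row) for row in rows]
    widths = [max(len(row[i]) for row in table) for i in range(len(header))]
    return "\n".join(
        "  ".join(cell.ljust(width) for cell, width in zip(row, widths)).rstrip()
        for row in table
    )
```

Calling `print(format_table(entities_to_rows(text)))` on the contents of the extracted output file prints one row per detected entity.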

Solution overview – using Annotations

The following architecture diagram shows a serverless setup that analyzes incoming files for custom entity detection. It uses a custom model trained with Amazon Comprehend on annotations data, together with the Amazon Comprehend parser and Amazon Textract. When documents are uploaded to an Amazon Simple Storage Service (Amazon S3) bucket, an AWS Lambda function is triggered through an Amazon S3 trigger. The function invokes the Amazon Comprehend DetectEntities API to identify custom entities. Within the same API call, you can specify the text extraction method, either the Amazon Comprehend parser or Amazon Textract. For more information, refer to the section on setting text extraction options.

For training, you start by labeling the documents. Comprehend Semi-Structured Documents Annotation Tool provides detailed steps for this task. If you want to set up the custom annotation template and look for examples of how to annotate documents, refer to Custom document annotation for extracting named entities in documents using Amazon Comprehend. Use cases often require this process to be automated, especially where speed is critical. This blog post introduces a solution that takes a manifest file mapping PDF documents to the entities to extract from them. The pre-labeling tool uses the manifest file to automatically annotate the documents with their corresponding entities. You can then use these annotations directly to train an Amazon Comprehend model, which automates the pre-labeling process. The solution uses AWS services such as AWS Lambda, Amazon S3, Amazon SQS, and AWS Step Functions to orchestrate the entire process.

By automating pre-labeling, organizations can save time and effort, enabling them to focus on reviewing and refining the machine-generated labels, leading to more accurate and efficient document processing pipelines. Once the document labelling is done, you can train custom entity recognition using Amazon Comprehend with the labeled data.

For inference, you set the text extraction options in the DetectEntities API call, which determine whether Amazon Comprehend uses Amazon Textract or its own parser. You can then send the document to the Amazon Comprehend custom entity recognition model and view the results.
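As a sketch of what such a call might look like with boto3, the following function sends the raw document bytes to a custom model endpoint and optionally forces text extraction through Amazon Textract. The function name and the `use_textract` flag are illustrative. When the reader configuration is omitted, Amazon Comprehend chooses the extraction method itself.

```python
def detect_entities_in_document(comprehend, document_bytes, endpoint_arn,
                                use_textract=True):
    """Detect custom entities directly in a PDF, image, or Word document."""
    request = {"Bytes": document_bytes, "EndpointArn": endpoint_arn}
    if use_textract:
        # Force Amazon Textract to extract the text instead of the
        # Amazon Comprehend parser.
        request["DocumentReaderConfig"] = {
            "DocumentReadAction": "TEXTRACT_DETECT_DOCUMENT_TEXT",
            "DocumentReadMode": "FORCE_DOCUMENT_READ_ACTION",
        }
    response = comprehend.detect_entities(**request)
    return [(e.get("Text"), e["Type"], e["Score"]) for e in response["Entities"]]
```

The document bytes can come from a local file or from an S3 object read inside the Lambda function.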

Conclusion

ML and artificial intelligence allow organizations to be agile. They can automate manual and operational tasks to improve efficiency. In this post, we demonstrated an end-to-end architecture for extracting entities such as a candidate’s skills from their resume by using Amazon Textract and Amazon Comprehend. We covered how you can use Amazon Textract to extract data from documents such as resumes, and how to train a custom entity recognizer with Amazon Comprehend that is tailored to your dataset. Along the way, we explored two techniques for building custom entity recognition models in Amazon Comprehend. Training a model from an entity list requires less work, but it may yield lower accuracy than a model trained on annotation data. Based on your use case and priorities, you can apply these approaches to a variety of industries, such as healthcare and financial services.

To learn more about the text and data extraction features of Amazon Textract, see How Amazon Textract Works. Similarly, to learn more about how Amazon Comprehend develops insights about the content of documents by recognizing entities, key phrases, language, and sentiment, see How Amazon Comprehend works.

About the Authors

Yuan Jiang is a Solution Architect with a focus on machine learning. He is a member of the Amazon Computer Vision Hero program.

Sonali Sahu is a Solution Architect and a member of Amazon Machine Learning Technical Field Community. She is also a member of the Amazon Computer Vision Hero program.

Kashif Imran is a Principal Solutions Architect at Amazon Web Services. He works with some of the largest AWS customers who are taking advantage of AI/ML to solve complex business problems. He provides technical guidance and design advice to implement computer vision applications at scale. His expertise spans application architecture, serverless, containers, NoSQL and machine learning.

Nishant Dhiman is a Senior Solutions Architect at AWS with an extensive background in serverless, security, and mobile platform offerings. He is a voracious reader and a passionate technologist. He loves to interact with customers and always relishes giving talks or presenting on public forums. Outside of work, he likes to keep himself engaged with podcasts, calligraphy, and music.

Rohit Raj is a Solution Architect at AWS, specializing in Serverless and a member of the Serverless Technical Field Community. He continually explores new trends and technologies. He is passionate about helping customers build highly available, resilient, and scalable solutions on cloud. Outside of work, he enjoys travelling, music, and outdoor sports.

Audit History

Last reviewed and updated in July 2024 by Nishant Dhiman | Sr. Solutions Architect, and Rohit Raj | Solutions Architect