An End-to-End Image Classifier Based on Deep Transfer Learning

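Deep learning needs large amounts of data, compute resources, and training time, and these are often hard to come by in practice. Transfer learning substantially reduces all three, and it lets you adapt a model to the business needs of a new scenario, which makes it a powerful tool.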

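This solution uses Amazon SageMaker to fine-tune a pretrained image classification model on your own data and build a car model classifier that reaches high accuracy. Amazon SageMaker is a fully managed, modular machine learning service that helps developers and data scientists build, train, and deploy machine learning models at scale.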

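This article uses the open-source Cars Dataset provided by Stanford University, which contains 16,185 images covering 196 car makes and models. We use three of those car models to form the training set. See the dataset home page for the full data description and download instructions.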

2. Run the downloaded data-processing script process.sh in a terminal on the SageMaker notebook instance

#!/usr/bin/env bash

## Convert an image classification dataset to the RecordIO format

# The current directory contains only one zip file: my_traindataset.zip

# Get the image-to-RecordIO tool (im2rec.py)
git clone https://github.com/apache/incubator-mxnet.git

source activate amazonei_mxnet_p36

# Unzip your own dataset
unzip *.zip

mkdir train
mkdir validation

# Fill in your own data path (keep the trailing slash)
data_path=/home/ec2-user/SageMaker/yourowndatafile/
echo "data_path: ${data_path}"

train_path=train/
echo "train_path: ${train_path}"

val_path=validation/
echo "val_path: ${val_path}"

# Step 1: generate the data_train.lst and data_val.lst list files (80/20 split)
python incubator-mxnet/tools/im2rec.py \
  --list \
  --train-ratio 0.8 \
  --recursive \
  ${data_path}data ${data_path}

# Step 2: pack the listed images into data_train.rec and data_val.rec
python incubator-mxnet/tools/im2rec.py \
  --resize 224 \
  --center-crop \
  --num-thread 4 \
  ${data_path}data ${data_path}

# Move the .rec files into the train and validation directories
mv ${data_path}data_train.rec $train_path
mv ${data_path}data_val.rec $val_path
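
To make the first im2rec.py call less opaque, here is a minimal Python sketch of what a `--list --recursive --train-ratio 0.8` pass produces conceptually: one numeric label per class subdirectory, one tab-separated line (index, label, relative path) per image, and an 80/20 split. It is an illustration under stated assumptions, not the tool's actual implementation. The function names `build_image_list`, `split_list`, and `format_lst_line` are hypothetical, and the real tool's shuffling and ordering may differ.

```python
import os
import random

# Assumption: only these extensions count as images in this sketch.
IMAGE_EXTS = {".jpg", ".jpeg", ".png"}


def build_image_list(root):
    """Return (index, label, relative_path) tuples, one label per subdirectory."""
    entries = []
    classes = sorted(
        d for d in os.listdir(root) if os.path.isdir(os.path.join(root, d))
    )
    for label, cls in enumerate(classes):
        for name in sorted(os.listdir(os.path.join(root, cls))):
            if os.path.splitext(name)[1].lower() in IMAGE_EXTS:
                entries.append((len(entries), float(label), os.path.join(cls, name)))
    return entries


def split_list(entries, train_ratio=0.8, seed=0):
    """Shuffle a copy of the entries and cut it into train and validation parts."""
    shuffled = entries[:]
    random.Random(seed).shuffle(shuffled)
    cut = int(len(shuffled) * train_ratio)
    return shuffled[:cut], shuffled[cut:]


def format_lst_line(entry):
    """Format one entry as a tab-separated .lst line: index, label, path."""
    index, label, path = entry
    return "{}\t{:f}\t{}".format(index, label, path)
```

With three class folders of ten images each, `split_list` would yield 24 training entries and 6 validation entries, which is the split the `.rec` files then inherit.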

3. Upload the processed data_train.rec and data_val.rec files to an existing S3 bucket so they can be used for model training

import os
import boto3

def upload_to_s3(file):
    # `bucket` must already hold the name of your S3 bucket
    s3 = boto3.resource('s3')
    # the local path is reused as the S3 object key
    key = file
    # open the file in a with-block so the handle is closed after the upload
    with open(file, "rb") as data:
        s3.Bucket(bucket).put_object(Key=key, Body=data)

# S3 key prefixes for the car dataset
s3_train_key = "car_data_sample/train"
s3_validation_key = "car_data_sample/validation"
s3_train = 's3://{}/{}/'.format(bucket, s3_train_key)
s3_validation = 's3://{}/{}/'.format(bucket, s3_validation_key)

upload_to_s3('car_data_sample/train/data_train.rec')
upload_to_s3('car_data_sample/validation/data_val.rec')
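
Because the upload function reuses the local path as the object key, the training channels only find the files when the local directory layout mirrors the S3 prefixes. The following sketch shows one way to decouple the two by building the key from a prefix and the file's base name. The helper names `s3_key_for`, `s3_uri`, and `upload_file` are hypothetical, and `s3_client` is assumed to be a `boto3.client('s3')` instance (only its `upload_file(Filename, Bucket, Key)` method is used).

```python
import posixpath


def s3_key_for(local_path, prefix):
    """Build an object key that places the file's base name under `prefix`."""
    base_name = posixpath.basename(local_path.replace("\\", "/"))
    return posixpath.join(prefix.strip("/"), base_name)


def s3_uri(bucket, key):
    """Return the s3:// URI for a bucket and key."""
    return "s3://{}/{}".format(bucket, key)


def upload_file(s3_client, bucket, local_path, prefix):
    """Upload one local file under `prefix` and return its S3 URI."""
    key = s3_key_for(local_path, prefix)
    s3_client.upload_file(local_path, bucket, key)
    return s3_uri(bucket, key)
```

With this shape, a file that process.sh left in `train/data_train.rec` could be uploaded with the prefix `car_data_sample/train` without first recreating that directory locally.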

2. Configure the hyperparameters for model training in the SageMaker notebook

# The algorithm supports multiple network depths (number of layers): 18, 34, 50, 101, 152 and 200
# For this training, we will use 18 layers
num_layers = 18
# we need to specify the input image shape for the training data
image_shape = "3,224,224"
# we also need to specify the number of training samples in the training set
num_training_samples = 96
# specify the number of output classes
num_classes = 3
# batch size for training
mini_batch_size = 30
# number of epochs
epochs = 100
# learning rate
learning_rate = 0.01
top_k = 2
# Since we are using transfer learning, we set use_pretrained_model to 1 so that weights can be
# initialized with pre-trained weights
use_pretrained_model = 1
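
As a quick sanity check on these values, the sketch below computes how many mini-batches one epoch contains and converts the settings to the string form that the training request expects. The checks on `top_k` and `mini_batch_size` are conservative assumptions made for this example rather than documented constraints, the helper names are hypothetical, and the built-in algorithm may treat the last partial batch differently.

```python
import math


def check_hyperparameters(num_training_samples, mini_batch_size,
                          num_classes, top_k, epochs):
    """Validate a few relationships and report the batch counts."""
    if mini_batch_size > num_training_samples:
        raise ValueError("mini_batch_size exceeds the number of training samples")
    if not 1 <= top_k <= num_classes:
        raise ValueError("top_k must be between 1 and num_classes")
    batches_per_epoch = math.ceil(num_training_samples / mini_batch_size)
    return {
        "batches_per_epoch": batches_per_epoch,
        "total_batches": batches_per_epoch * epochs,
    }


def to_hyperparameter_strings(**values):
    """Convert every hyperparameter value to a string."""
    return {name: str(value) for name, value in values.items()}


summary = check_hyperparameters(
    num_training_samples=96, mini_batch_size=30,
    num_classes=3, top_k=2, epochs=100,
)
print(summary)
```

With 96 samples and a batch size of 30, one epoch takes 4 mini-batches (the last one partial), so 100 epochs amount to roughly 400 updates.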

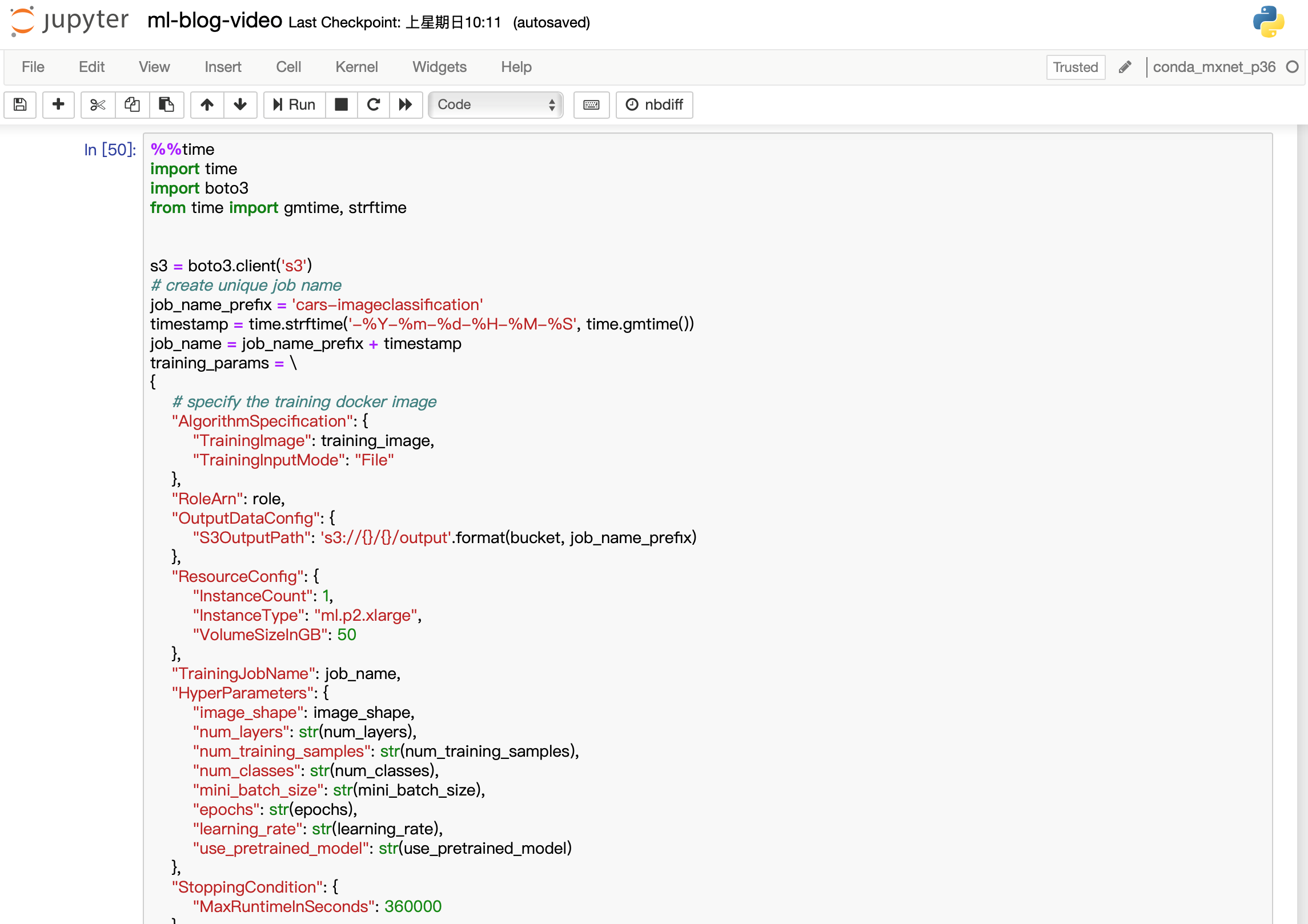

3. Use the SageMaker API in the Amazon SageMaker notebook to build the training job

a) Specify the training input and output

b) Specify the compute instance configuration for training

%%time
import time
import boto3
from time import gmtime, strftime

s3 = boto3.client('s3')

# create unique job name
job_name_prefix = 'cars-imageclassification'
timestamp = time.strftime('-%Y-%m-%d-%H-%M-%S', time.gmtime())
job_name = job_name_prefix + timestamp

training_params = \
{
    # specify the training docker image
    "AlgorithmSpecification": {
        "TrainingImage": training_image,
        "TrainingInputMode": "File"
    },
    "RoleArn": role,
    "OutputDataConfig": {
        "S3OutputPath": 's3://{}/{}/output'.format(bucket, job_name_prefix)
    },
    "ResourceConfig": {
        "InstanceCount": 1,
        "InstanceType": "ml.p2.xlarge",
        "VolumeSizeInGB": 50
    },
    "TrainingJobName": job_name,
    "HyperParameters": {
        "image_shape": image_shape,
        "num_layers": str(num_layers),
        "num_training_samples": str(num_training_samples),
        "num_classes": str(num_classes),
        "mini_batch_size": str(mini_batch_size),
        "epochs": str(epochs),
        "learning_rate": str(learning_rate),
        "use_pretrained_model": str(use_pretrained_model)
    },
    "StoppingCondition": {
        "MaxRuntimeInSeconds": 360000
    },
    # Training data should be inside a subdirectory called "train"
    # Validation data should be inside a subdirectory called "validation"
    # The algorithm currently only supports the FullyReplicated mode (where data is copied onto each machine)
    "InputDataConfig": [
        {
            "ChannelName": "train",
            "DataSource": {
                "S3DataSource": {
                    "S3DataType": "S3Prefix",
                    "S3Uri": s3_train,
                    "S3DataDistributionType": "FullyReplicated"
                }
            },
            "ContentType": "application/x-recordio",
            "CompressionType": "None"
        },
        {
            "ChannelName": "validation",
            "DataSource": {
                "S3DataSource": {
                    "S3DataType": "S3Prefix",
                    "S3Uri": s3_validation,
                    "S3DataDistributionType": "FullyReplicated"
                }
            },
            "ContentType": "application/x-recordio",
            "CompressionType": "None"
        }
    ]
}

print('Training job name: {}'.format(job_name))
print('\nInput Data Location: {}'.format(training_params['InputDataConfig'][0]['DataSource']['S3DataSource']))
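
The listing above only builds the request dictionary; the job still has to be submitted and monitored. A minimal sketch of that step follows, assuming `client` is a `boto3.client('sagemaker')` instance. The function name `run_training_job` and the polling interval are choices made for this example, not part of the original walkthrough.

```python
import time


def run_training_job(client, training_params, poll_seconds=60, sleep=time.sleep):
    """Submit the training job, poll until it finishes, return the model artifact URI."""
    client.create_training_job(**training_params)
    job_name = training_params["TrainingJobName"]
    while True:
        info = client.describe_training_job(TrainingJobName=job_name)
        status = info["TrainingJobStatus"]
        # Completed, Failed and Stopped are terminal states
        if status in ("Completed", "Failed", "Stopped"):
            break
        sleep(poll_seconds)
    if status != "Completed":
        reason = info.get("FailureReason", "no reason given")
        raise RuntimeError(
            "Training job {} ended with status {}: {}".format(job_name, status, reason)
        )
    return info["ModelArtifacts"]["S3ModelArtifacts"]
```

In the notebook this could be called as `run_training_job(boto3.client('sagemaker'), training_params)`. Passing the client in as an argument keeps the function easy to exercise with a stub before any billable job is started.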

2. Create the model for online deployment from the Amazon SageMaker notebook, then configure the endpoint and the instance type used for inference

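%%time
import time
import boto3
from time import gmtime, strftime
# get_image_uri comes from the SageMaker Python SDK (v1-style import);
# this line can be dropped if an earlier cell already imported it
from sagemaker.amazon.amazon_estimator import get_image_uri

sage = boto3.Session().client(service_name='sagemaker')

# the timestamp format already starts with '-', so the prefix needs no trailing hyphen
model_name = "cars-imageclassification" + time.strftime('-%Y-%m-%d-%H-%M-%S', time.gmtime())
print(model_name)

# look up where the finished training job stored its model artifacts
info = sage.describe_training_job(TrainingJobName=job_name)
model_data = info['ModelArtifacts']['S3ModelArtifacts']
print(model_data)

hosting_image = get_image_uri(boto3.Session().region_name, 'image-classification')

primary_container = {
    'Image': hosting_image,
    'ModelDataUrl': model_data,
}

create_model_response = sage.create_model(
    ModelName = model_name,
    ExecutionRoleArn = role,
    PrimaryContainer = primary_container)

print(create_model_response['ModelArn'])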

from time import gmtime, strftime

timestamp = time.strftime('-%Y-%m-%d-%H-%M-%S', time.gmtime())
endpoint_config_name = job_name_prefix + '-epc-' + timestamp

endpoint_config_response = sage.create_endpoint_config(
    EndpointConfigName = endpoint_config_name,
    ProductionVariants=[{
        'InstanceType':'ml.m4.xlarge',
        'InitialInstanceCount':1,
        'ModelName':model_name,
        'VariantName':'AllTraffic'}])

print('Endpoint configuration name: {}'.format(endpoint_config_name))
print('Endpoint configuration arn: {}'.format(endpoint_config_response['EndpointConfigArn']))

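3. Create the endpoint in the Amazon SageMaker notebook using the model and endpoint configuration defined above. This takes about 10 minutes. When the code below prints Endpoint creation ended with EndpointStatus = InService, an endpoint hosted on Amazon SageMaker has been created successfully.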

%%time
import time

timestamp = time.strftime('-%Y-%m-%d-%H-%M-%S', time.gmtime())
endpoint_name = job_name_prefix + '-ep-' + timestamp
print('Endpoint name: {}'.format(endpoint_name))

endpoint_params = {
    'EndpointName': endpoint_name,
    'EndpointConfigName': endpoint_config_name,
}

# `sage` is the boto3 SageMaker client created in the previous step
endpoint_response = sage.create_endpoint(**endpoint_params)
print('EndpointArn = {}'.format(endpoint_response['EndpointArn']))

# get the status of the endpoint
response = sage.describe_endpoint(EndpointName=endpoint_name)
status = response['EndpointStatus']
print('EndpointStatus = {}'.format(status))

# wait until the status has changed
sage.get_waiter('endpoint_in_service').wait(EndpointName=endpoint_name)

# print the status of the endpoint
endpoint_response = sage.describe_endpoint(EndpointName=endpoint_name)
status = endpoint_response['EndpointStatus']
print('Endpoint creation ended with EndpointStatus = {}'.format(status))
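
Once the endpoint reports InService, it can be queried with an image. The sketch below is one way to do that, assuming `runtime_client` is a `boto3.client('sagemaker-runtime')` instance and that the endpoint returns a JSON list with one probability per class. The function name `classify_image` and the `class_names` list are hypothetical and must match the label order used when the training list files were generated.

```python
import json


def classify_image(runtime_client, endpoint_name, image_bytes, class_names):
    """Send raw image bytes to the endpoint and return (best_class, probability)."""
    response = runtime_client.invoke_endpoint(
        EndpointName=endpoint_name,
        ContentType="application/x-image",
        Body=image_bytes,
    )
    # the response body is a stream; decode it into a list of probabilities
    probabilities = json.loads(response["Body"].read())
    best = max(range(len(probabilities)), key=probabilities.__getitem__)
    return class_names[best], probabilities[best]
```

A hosted endpoint is billed while it is running, so when testing is finished it is worth removing it, for example with `delete_endpoint(EndpointName=endpoint_name)` on the SageMaker client.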