AWS for M&E Blog

Live video streaming using Amazon S3

Introduction

Amazon Simple Storage Service (Amazon S3) is an object storage service that offers the scalability, data availability, security, performance, and consistency required of a basic origin for live video streaming workflows. This post outlines best practices for configuration and resiliency when using AWS Elemental Live and AWS Elemental MediaLive to write HLS output groups to Amazon S3. Amazon S3 can now be used instead of AWS Elemental MediaStore as a basic, low-cost live video origin. For live streaming workflows that require a comprehensive range of features to prepare, protect, and distribute video content, see AWS Elemental MediaPackage.

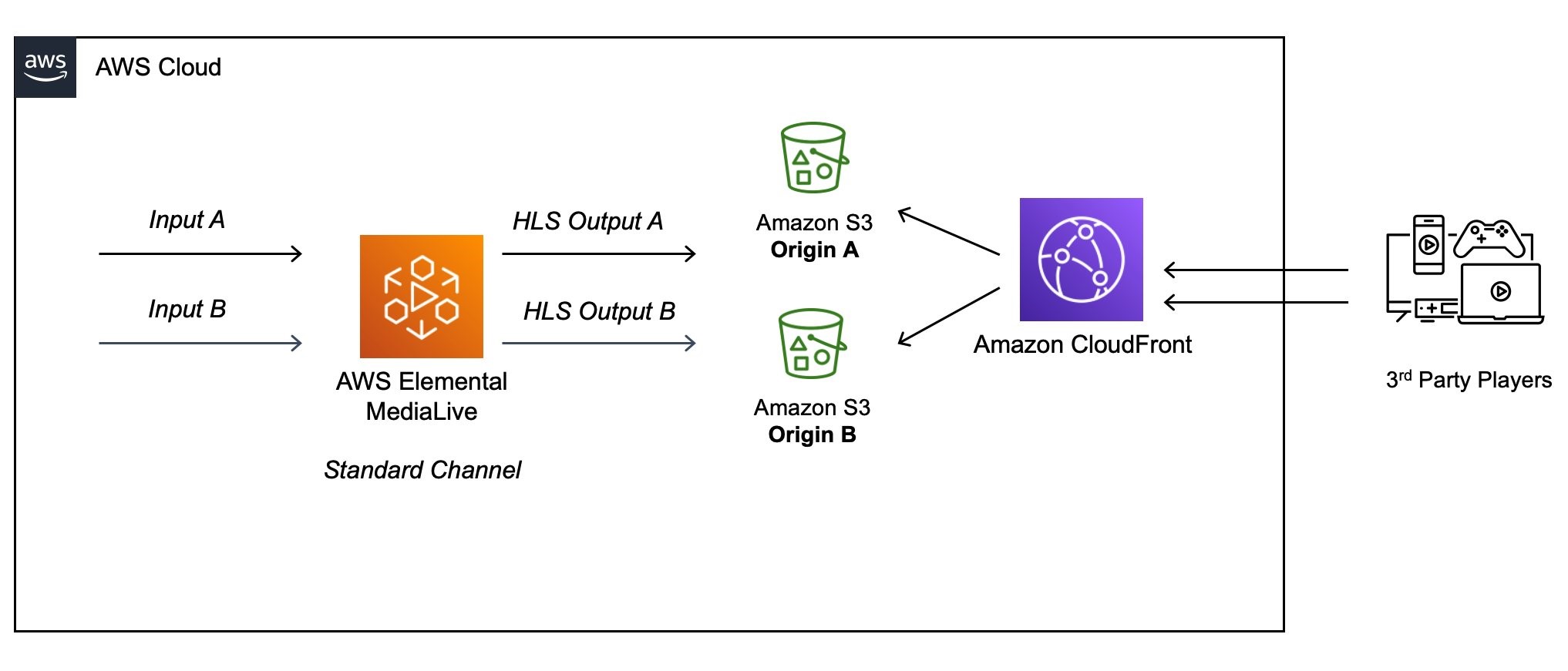

Live origin architecture

A redundant live streaming architecture provides two media processing pipelines with identical outputs. In this example, a MediaLive standard channel uses the redundant HLS manifests feature to write HLS outputs to two separate Amazon S3 bucket locations. By contrast, a MediaLive single-pipeline channel has only one output destination, so any interruption affects the viewing experience.

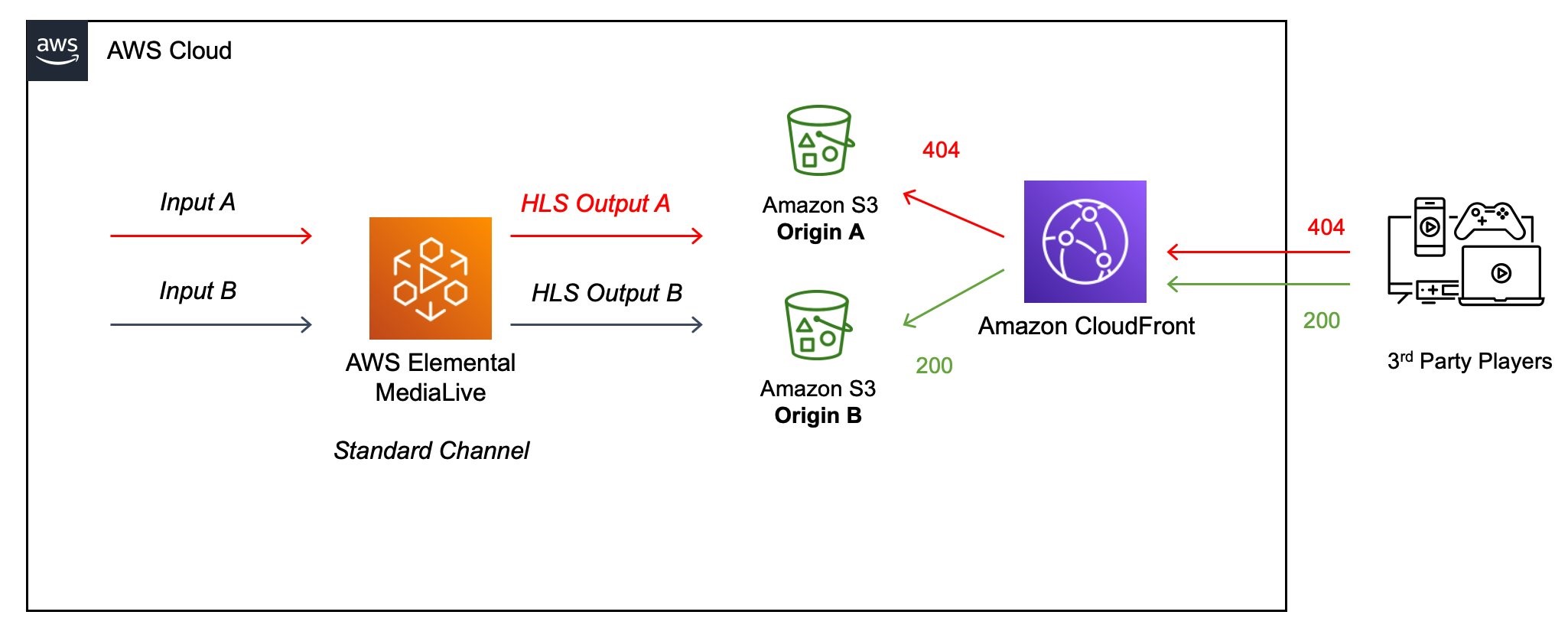

In either architecture, if the encoder stops updating the manifests and segments, the live stream goes “stale”: players still receive HTTP 200 responses from the origin, but typically play the last few segments of video and audio and then stop. This post outlines techniques to detect and invalidate such stale variant manifests. Returning HTTP 404 errors to the downstream Amazon CloudFront content delivery network (CDN) and to video players triggers redundancy failover as described in the HLS specification.

When selecting Amazon S3 as origin storage, consider the following criteria to ensure that the downstream CDN and players handle redundant manifests as depicted in the previous diagram.

Performance

Adaptive bitrate (ABR) media used for over-the-top (OTT) streaming is typically based on a series of sequentially named file segments containing video and audio media. The guide Best practices for optimizing Amazon S3 performance explains that Amazon S3 automatically scales to high request rates, achieving at least 3,500 PUT/COPY/POST/DELETE or 5,500 GET/HEAD requests per second per partitioned prefix.
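To see how far a typical live channel sits below these baselines, the following Python sketch estimates the request rate on one variant prefix. It assumes, for illustration only, one media-segment PUT and one media-playlist PUT per segment interval. The function names are hypothetical, and the read rate passed in should be the GET/HEAD rate that actually reaches Amazon S3 after CloudFront caching.

```python
# Baseline per-prefix request rates quoted in the S3 performance guide.
S3_WRITE_RPS_PER_PREFIX = 3500  # PUT/COPY/POST/DELETE
S3_READ_RPS_PER_PREFIX = 5500   # GET/HEAD


def write_rate_per_prefix(segment_duration_s: float, writes_per_segment: int = 2) -> float:
    """Estimated write requests per second landing on one variant prefix.

    Assumption: each segment interval produces one media-segment PUT and one
    media-playlist PUT. Increase writes_per_segment if the encoder also
    deletes expired segments or writes extra objects.
    """
    if segment_duration_s <= 0:
        raise ValueError("segment duration must be positive")
    return writes_per_segment / segment_duration_s


def fits_baseline(segment_duration_s: float, origin_reads_per_s: float) -> bool:
    """True if both write and read rates stay within the per-prefix baseline.

    origin_reads_per_s is the GET/HEAD rate that reaches S3 for this prefix,
    i.e. only the requests that miss the CloudFront cache.
    """
    return (write_rate_per_prefix(segment_duration_s) <= S3_WRITE_RPS_PER_PREFIX
            and origin_reads_per_s <= S3_READ_RPS_PER_PREFIX)


if __name__ == "__main__":
    print(f"{write_rate_per_prefix(6.0):.3f} writes/s per variant prefix")
    print(fits_baseline(6.0, origin_reads_per_s=200.0))
```

With 6-second segments, a variant prefix receives roughly one write every three seconds, several orders of magnitude below the write baseline; the read side depends mostly on how well the CDN absorbs player requests.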

For ABR workflows, using a slash (/) as the delimiter for each live channel, output format, and quality variant gives each of them its own prefix. The MediaLive documentation describes the path syntax for MediaLive outputs, which provides this prefix structure.
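As an illustration only, the sketch below builds slash-delimited keys with one prefix per channel, pipeline, and quality variant. The live/{channel}/{pipeline}/{variant}/ layout and the helper names are hypothetical and are not MediaLive's actual output path syntax.

```python
def variant_prefix(channel: str, pipeline: str, variant: str) -> str:
    """Return a hypothetical key prefix such as 'live/news/p0/720p/'.

    Each channel, pipeline, and quality variant gets its own slash-delimited
    prefix, giving S3 distinct prefixes across which to scale request rates.
    """
    parts = ["live", channel, pipeline, variant]
    for part in parts:
        # Reject empty components and embedded slashes so the depth stays fixed.
        if not part or "/" in part:
            raise ValueError(f"invalid path component: {part!r}")
    return "/".join(parts) + "/"


def object_key(prefix: str, filename: str) -> str:
    """Playlists and segments for a variant sit directly under its prefix."""
    return prefix + filename


if __name__ == "__main__":
    prefix = variant_prefix("news", "p0", "720p")
    print(object_key(prefix, "index.m3u8"))  # live/news/p0/720p/index.m3u8
```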

Amazon S3 performance is functionally identical to that of AWS Elemental MediaStore when measured at p99.9 latencies for PUT and GET requests to playlist and media segment sized objects.

Consistency

Amazon S3 provides strong read-after-write consistency for PUT and DELETE requests of objects in your Amazon S3 bucket in all AWS Regions. This behavior applies to writes of new objects, to PUT requests that overwrite existing objects, and to DELETE requests. For more information, see Amazon S3 data consistency model.

After a successful write of a new object or an overwrite of an existing object, any subsequent read request immediately receives the latest version of the object. Amazon S3 also provides strong consistency for list operations, so after a write you can immediately list the objects in a bucket and see any changes reflected. If the encoder fails to write a manifest or segment, configure it to retry the write so that playback can continue with the segments that are available.
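The retry itself is configured on the encoder. To illustrate the behavior in general terms, here is a minimal Python sketch of retrying a failed write with exponential backoff and jitter. The upload callable is a hypothetical stand-in for whatever performs the PUT, and the attempt count and delays are placeholder values, not encoder defaults.

```python
import random
import time
from typing import Callable


def put_with_retry(
    upload: Callable[[], None],
    max_attempts: int = 3,
    base_delay_s: float = 0.5,
    sleep: Callable[[float], None] = time.sleep,
) -> int:
    """Call upload() until it succeeds and return the number of attempts used.

    upload is a hypothetical zero-argument function that writes one playlist
    or segment and raises on failure. The last failure is re-raised once
    max_attempts is exhausted.
    """
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(1, max_attempts + 1):
        try:
            upload()
            return attempt
        except Exception:
            if attempt == max_attempts:
                raise
            # Exponential backoff with full jitter: wait up to base * 2^(attempt - 1).
            sleep(random.uniform(0, base_delay_s * 2 ** (attempt - 1)))
    raise AssertionError("unreachable")
```

For live output, a retry window that approaches the segment duration would itself delay the playlist, which is why the sketch keeps the delays short; the sleep parameter exists so the function can be tested without waiting.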

Resiliency

Redundant live encoding pipelines can write their output either to a single Amazon S3 bucket, using a different prefix for each pipeline, or to two separate Amazon S3 buckets. When the redundant manifest feature is enabled, the main playlist for each pipeline references both its own child manifests and the child manifests of the other pipeline. If one pipeline fails, its child manifests stop updating or become unavailable, and the downstream player can refer back to the main manifest to find the corresponding child manifest from the other pipeline.
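The following Python sketch illustrates this player-side logic under stated assumptions. It groups the entries of a redundant multivariant playlist by their #EXT-X-STREAM-INF attribute list, assuming both pipelines advertise identical attributes, and then picks the first child manifest that is available. The p0/ and p1/ URIs and the is_available check are hypothetical; real players implement their own failover rules.

```python
from typing import Callable, Dict, List, Optional

STREAM_INF = "#EXT-X-STREAM-INF:"


def group_redundant_variants(master: str) -> Dict[str, List[str]]:
    """Map each EXT-X-STREAM-INF attribute list to its variant URIs, in playlist order.

    Assumption: with redundant manifests, the same attribute list appears once
    per pipeline, each time followed by a different child-manifest URI.
    """
    groups: Dict[str, List[str]] = {}
    pending: Optional[str] = None
    for raw in master.splitlines():
        line = raw.strip()
        if line.startswith(STREAM_INF):
            pending = line[len(STREAM_INF):]
        elif pending is not None and line and not line.startswith("#"):
            # The first non-comment line after the tag is the variant URI.
            groups.setdefault(pending, []).append(line)
            pending = None
    return groups


def pick_variant_uri(uris: List[str], is_available: Callable[[str], bool]) -> Optional[str]:
    """Return the first URI whose child manifest is available (e.g. not HTTP 404)."""
    for uri in uris:
        if is_available(uri):
            return uri
    return None


if __name__ == "__main__":
    master = "\n".join([
        "#EXTM3U",
        "#EXT-X-STREAM-INF:BANDWIDTH=3000000,RESOLUTION=1280x720",
        "p0/720p.m3u8",
        "#EXT-X-STREAM-INF:BANDWIDTH=3000000,RESOLUTION=1280x720",
        "p1/720p.m3u8",
    ])
    uris = group_redundant_variants(master)["BANDWIDTH=3000000,RESOLUTION=1280x720"]
    # Simulate pipeline 0's child manifest returning 404.
    print(pick_variant_uri(uris, lambda uri: not uri.startswith("p0/")))  # p1/720p.m3u8
```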

Security

Amazon S3 allows customers to store and secure data from unauthorized access with encryption features and access management tools. Amazon S3 maintains compliance programs, such as PCI-DSS, HIPAA/HITECH, FedRAMP, EU Data Protection Directive, and FISMA, to help you meet regulatory requirements. AWS also supports numerous auditing capabilities to monitor access requests to your Amazon S3 resources.

Content security for media delivery is provided via encryption at the live encoder and access tokenization using the Secure Media Delivery at the Edge on AWS solution. However, full Digital Rights Management (DRM) requires the use of other services such as AWS Elemental MediaPackage.

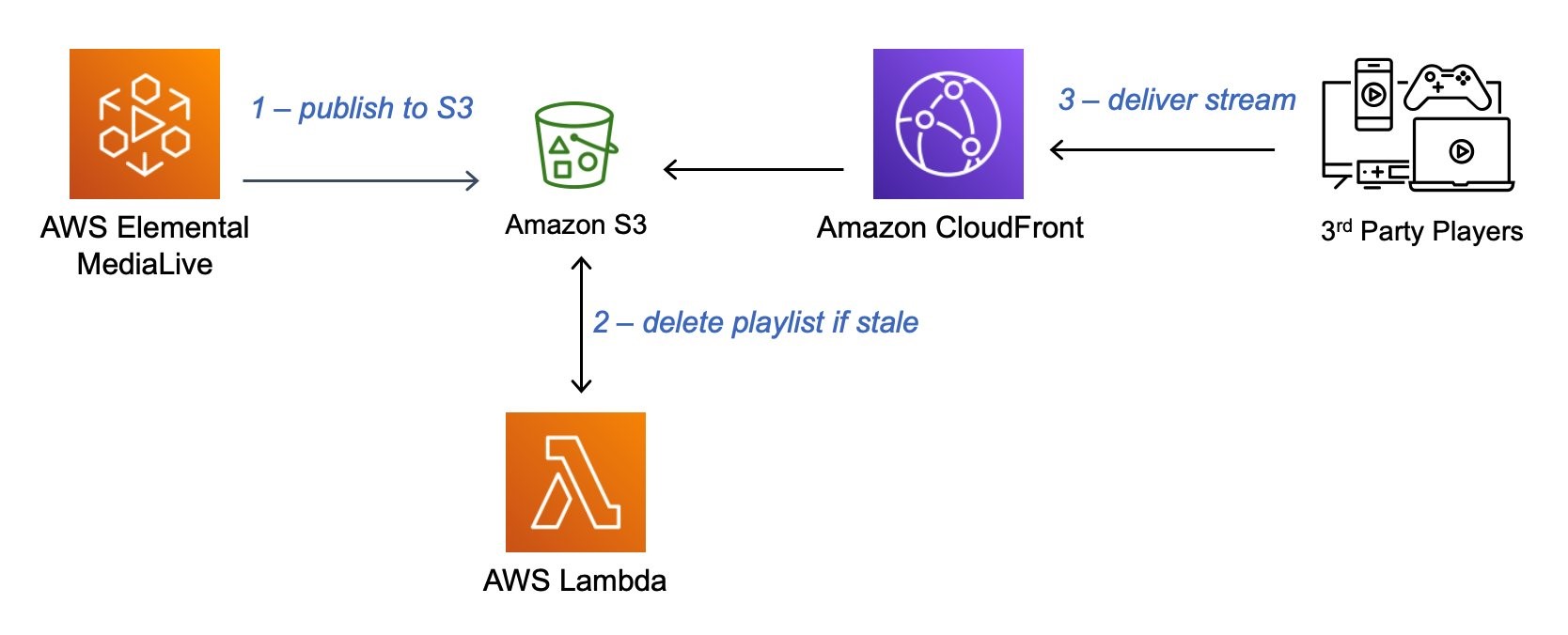

Stale manifest invalidation

When an encoder fails to upload updates to a media playlist, it is often preferable for that playlist to return a 404 once it is considered stale. A stale playlist contains only segments that are behind the live edge and thus may require playback to switch to a different variant playlist. Returning a 404 lets the client playback device make a switching decision and continue playback rather than getting stuck on the stale playlist.

Once deployed, this reference AWS Serverless Application Model (AWS SAM) implementation monitors playlist files uploaded to the nominated Amazon S3 bucket and deletes any playlist it detects as stale: https://github.com/aws-samples/amazon-cloudfront-s3-hls-invalidator.

Only variant playlists that do not contain an endlist, the HLS tag #EXT-X-ENDLIST, need to be checked. The multivariant (master) playlist must remain in the Amazon S3 bucket, because the live encoder typically posts it only when the stream starts and does not update it afterward.
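The decision step of such a checker can be sketched as a pure function. This is not the code in the aws-samples repository; it is a minimal illustration that skips playlists containing #EXT-X-ENDLIST or #EXT-X-STREAM-INF and treats a media playlist as stale once its age exceeds a multiple of #EXT-X-TARGETDURATION. The multiplier of 3 is a hypothetical tuning value, and the caller is assumed to supply the object's last-modified time.

```python
import re

_TARGET_RE = re.compile(r"^#EXT-X-TARGETDURATION:(\d+)\s*$", re.MULTILINE)


def is_stale_media_playlist(
    body: str,
    last_modified_epoch: float,
    now_epoch: float,
    multiplier: float = 3.0,
) -> bool:
    """Return True if a live media playlist appears to have stopped updating.

    multiplier is a hypothetical tuning parameter: the playlist counts as
    stale once its age exceeds multiplier * EXT-X-TARGETDURATION seconds.
    """
    if "#EXT-X-ENDLIST" in body:
        return False  # an ended playlist is complete, not stale
    if "#EXT-X-STREAM-INF" in body:
        return False  # multivariant playlists are written once and must stay
    match = _TARGET_RE.search(body)
    if match is None:
        return False  # not a media playlist this check can evaluate
    target_duration_s = int(match.group(1))
    return (now_epoch - last_modified_epoch) > multiplier * target_duration_s


if __name__ == "__main__":
    body = "\n".join([
        "#EXTM3U",
        "#EXT-X-TARGETDURATION:6",
        "#EXT-X-MEDIA-SEQUENCE:100",
        "#EXTINF:6.000,",
        "seg_100.ts",
    ])
    print(is_stale_media_playlist(body, 1000.0, 1010.0))  # False: 10 s old
    print(is_stale_media_playlist(body, 1000.0, 1020.0))  # True: 20 s > 18 s
```

A deployment would run a check like this on a schedule or on upload events and delete the playlist when it returns True, so that the origin answers with a 404.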

At the live encoder, adding a timestamp to segment names is recommended to prevent segments from overwriting each other if the channel restarts and to ensure that CloudFront uses a different cache key. For MediaLive, see Designing the segmentModifier using Identifiers for variable data.
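MediaLive adds the timestamp itself through segmentModifier identifiers; the Python sketch below only illustrates the naming effect. The name format and the segment_name helper are hypothetical. Embedding the channel start time means that when sequence numbers reset after a restart, every new segment still gets a new object key, and therefore a new CloudFront cache key.

```python
from datetime import datetime, timezone


def segment_name(variant: str, channel_start: datetime, sequence: int, ext: str = "ts") -> str:
    """Build a hypothetical segment file name that embeds the channel start time (UTC)."""
    if channel_start.tzinfo is None:
        raise ValueError("channel_start must be timezone-aware")
    stamp = channel_start.astimezone(timezone.utc).strftime("%Y%m%dT%H%M%S")
    return f"{variant}_{stamp}_{sequence:06d}.{ext}"


if __name__ == "__main__":
    first_run = datetime(2024, 1, 2, 3, 4, 5, tzinfo=timezone.utc)
    restart = datetime(2024, 1, 2, 3, 30, 0, tzinfo=timezone.utc)
    # Sequence 1 exists in both runs, but the keys differ.
    print(segment_name("720p", first_run, 1))  # 720p_20240102T030405_000001.ts
    print(segment_name("720p", restart, 1))    # 720p_20240102T033000_000001.ts
```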

Pricing

As with most AWS services, Amazon S3 and AWS Lambda have no minimum charge. You pay only for what you use, and the cost components to consider for a live streaming application are storage, requests, data transfer, and Lambda execution time. For details, see Amazon S3 pricing and AWS Lambda pricing.
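A rough monthly estimate can be assembled from these four components. In the following sketch all unit prices are placeholders to be filled in from the current pricing pages, transfer_out_gb stands for whatever data transfer is billable in your topology, and the model deliberately ignores free tiers, tiered pricing, and CloudFront charges. Treat it as a starting point rather than a quote.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass
class UnitPrices:
    """Placeholder unit prices: fill in from the current pricing pages."""
    storage_per_gb_month: float
    put_per_1000: float
    get_per_1000: float
    transfer_out_per_gb: float
    lambda_per_gb_second: float
    lambda_per_million_requests: float


@dataclass
class MonthlyUsage:
    avg_stored_gb: float
    put_requests: int
    get_requests: int
    transfer_out_gb: float
    lambda_invocations: int
    lambda_avg_duration_s: float
    lambda_memory_gb: float


def estimate_monthly_cost(u: MonthlyUsage, p: UnitPrices) -> Dict[str, float]:
    """Sum storage, request, transfer, and Lambda costs (no free tier or tiering)."""
    storage = u.avg_stored_gb * p.storage_per_gb_month
    requests = (u.put_requests / 1000) * p.put_per_1000 + (u.get_requests / 1000) * p.get_per_1000
    transfer = u.transfer_out_gb * p.transfer_out_per_gb
    # Lambda compute is billed in GB-seconds plus a per-request charge.
    gb_seconds = u.lambda_invocations * u.lambda_avg_duration_s * u.lambda_memory_gb
    lambda_cost = (gb_seconds * p.lambda_per_gb_second
                   + (u.lambda_invocations / 1e6) * p.lambda_per_million_requests)
    costs = {"storage": storage, "requests": requests, "transfer": transfer, "lambda": lambda_cost}
    costs["total"] = sum(costs.values())
    return costs
```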

Summary

A reference architecture is available to set up Live Streaming on AWS with Amazon S3, and this post outlines best practices for optimizing resilience with stale manifest invalidation. For additional background, see AWS re:Invent 2022 – Deep dive on Amazon S3.