Build AI Agents That Scale: A Practical Lifecycle for Startup Agent Architecture

Most startups overbuild their agents. Before they have 100 users, they jump straight to multi-agent orchestration, memory graphs, runtimes, and policy engines. Agents don't start as platforms; they start as product features. If you think about agent development through a lifecycle lens that tracks customer growth, the architecture becomes clear. It is usually simpler than the ecosystem noise suggests.

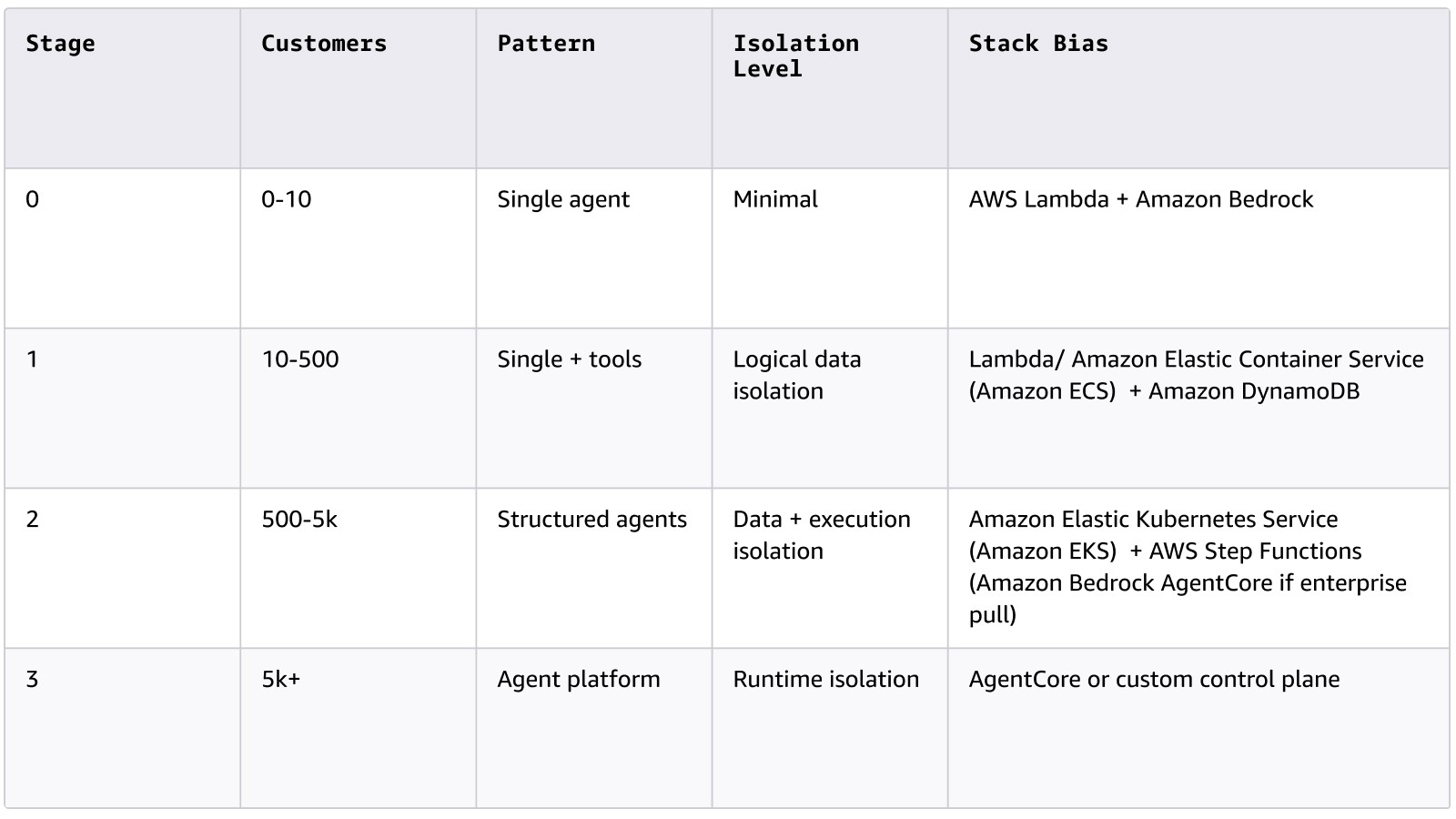

Here is a practical maturity model for building agents without over-engineering too early.

The agent lifecycle at a glance

Stage 0: “Does It Work?”

0–10 customers | Pre-PMF

At this stage, you are not building an agent system; you are building one agent focused on one outcome. It typically relies on only a few tools and runs with stateless execution. At its core, it is a reasoning loop with tool calls.

Architecture

User → API Gateway → Compute (AWS Lambda) → LLM (Amazon Bedrock) → Tools → Response

There is no persistent identity, no long-term memory, and no orchestration engine.
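The flow above can be sketched as a single stateless function. This is a minimal illustration, not a reference implementation: `get_order_status`, the reply format (`type`, `tool`, `args`), and `fake_model` are all hypothetical, and `fake_model` is a scripted stand-in for the real LLM call so the example runs without AWS credentials.

```python
import json
from typing import Callable

# Hypothetical tool: a plain function the agent is allowed to call.
def get_order_status(order_id: str) -> str:
    return f"Order {order_id} has shipped"

TOOLS: dict[str, Callable[..., str]] = {"get_order_status": get_order_status}

def run_agent(user_input: str, call_model: Callable[[list[dict]], dict],
              max_steps: int = 5) -> str:
    """Stateless reasoning loop: every request starts from an empty history."""
    messages = [{"role": "user", "content": user_input}]
    for _ in range(max_steps):
        reply = call_model(messages)
        if reply["type"] == "final":
            return reply["text"]
        # The model asked for a tool: run it and feed the result back.
        result = TOOLS[reply["tool"]](**reply["args"])
        messages.append({"role": "tool", "name": reply["tool"], "content": result})
    return "Stopped: step limit reached"

def fake_model(messages: list[dict]) -> dict:
    """Scripted stand-in for the LLM call (assumed reply format)."""
    if messages[-1]["role"] == "tool":
        return {"type": "final", "text": messages[-1]["content"]}
    return {"type": "tool", "tool": "get_order_status", "args": {"order_id": "A17"}}

def handler(event: dict, context: object = None) -> dict:
    """Lambda-style entry point behind an API gateway."""
    body = json.loads(event["body"])
    answer = run_agent(body["message"], fake_model)
    return {"statusCode": 200, "body": json.dumps({"answer": answer})}
```

Nothing survives between invocations, which is the point at this stage: swapping `fake_model` for a real model client is the only change needed to test whether the loop delivers value.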

Recommended Stack

Model

Use Amazon Bedrock's built-in evaluation tools to compare performance, cost, and accuracy across models, with the flexibility to switch models as you grow.

Execution

- AWS Lambda (default)

- Amazon Elastic Container Service (Amazon ECS)/AWS Fargate if you are container-based

Storage (if needed)

Frameworks

- Raw SDK calls

- Lightweight use of Strands Agents SDK (an open source agent SDK for reasoning loops and tool orchestration) or LangChain for structured tool handling

Avoid multi-agent frameworks and runtimes here.

Goal: Validate that the reasoning loop delivers real value.

Stage 1: “This Is Getting Used”

10–500 customers | Early traction

As real usage begins, new requirements emerge. Users expect session continuity, edge cases surface quickly, prompts prove brittle, and the system has to handle concurrent usage. You probably still have one primary agent, but it now needs structure.

So what needs to change? First, introduce session memory, structured outputs, and clearer tool abstractions. Guardrails and basic observability also become essential for understanding and stabilizing the system under real usage.
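As a rough sketch of the first two changes, the snippet below pairs a session store with a strict output check. Everything here is illustrative: `SessionStore` is an in-memory stand-in for a real session table, and the `answer`/`confidence` schema is an assumed example, not a prescribed format.

```python
import json
from dataclasses import dataclass, field

@dataclass
class SessionStore:
    """In-memory stand-in for a session table (hypothetical interface)."""
    _items: dict[str, list[dict]] = field(default_factory=dict)

    def load(self, session_id: str) -> list[dict]:
        return list(self._items.get(session_id, []))

    def append(self, session_id: str, message: dict) -> None:
        self._items.setdefault(session_id, []).append(message)

# Assumed output schema: field name -> required type.
REQUIRED_FIELDS = {"answer": str, "confidence": float}

def parse_structured_output(raw: str) -> dict:
    """Reject model output that is not a JSON object with the required typed fields."""
    data = json.loads(raw)  # raises ValueError (JSONDecodeError) on invalid JSON
    if not isinstance(data, dict):
        raise ValueError("model output must be a JSON object")
    for name, expected in REQUIRED_FIELDS.items():
        if not isinstance(data.get(name), expected):
            raise ValueError(f"field {name!r} missing or not {expected.__name__}")
    return data

def handle_turn(store: SessionStore, session_id: str, user_text: str, call_model) -> dict:
    """Load prior turns, call the model, and persist only validated output."""
    history = store.load(session_id)
    history.append({"role": "user", "content": user_text})
    parsed = parse_structured_output(call_model(history))
    store.append(session_id, {"role": "user", "content": user_text})
    store.append(session_id, {"role": "assistant", "content": parsed["answer"]})
    return parsed
```

Validating before persisting keeps malformed replies out of the session history, so one brittle prompt does not poison every later turn.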

Recommended Stack

Execution

- AWS Lambda or Amazon ECS

- Amazon Elastic Kubernetes Service (Amazon EKS) only if you are already Kubernetes-native

State

- Amazon DynamoDB (session persistence)

- Amazon S3 (artifacts)

- A vector database, such as Amazon S3 Vectors, only if retrieval is core

Frameworks

- Strands Agents SDK (clean reasoning structure)

- LangChain (tool composition)

- LlamaIndex (retrieval-heavy use cases)

Observability

- Amazon CloudWatch (metrics and logs)

- AWS X-Ray (distributed tracing)

- Amazon Managed Grafana (data visualization)

Still avoid swarms. Most products at this stage benefit from a single disciplined reasoning loop.

Goal: Reliability under real user load.

Stage 2: “Now It Is a System”

500–5,000 customers | Scaling complexity

In stage two, the system starts to behave like real infrastructure. You are dealing with concurrent sessions, long-running workflows, and asynchronous execution. Outputs may now be business-critical, costs become more sensitive, and enterprise customers start asking serious questions. This is the first real inflection point.

To operate effectively at this stage, you need durable workflows, clear tenant and session isolation, versioned prompts and tools, and an evaluation pipeline to continuously test and improve the system.

Isolation: What You Actually Need

At this stage, isolation is not optional. But isolation comes in layers:

1. Data Isolation (Mandatory)

- Tenant-scoped DynamoDB partitions

- Per-tenant vector namespaces

- Per-tenant Amazon S3 prefixes or buckets

- Tool credentials scoped with AWS Identity and Access Management (IAM)

- Encryption with AWS Key Management Service (AWS KMS)

These are table stakes.
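One way to make tenant scoping hard to bypass is to build every storage key through a small set of helpers. The sketch below is an assumption-laden illustration: the `TENANT#`/`SESSION#` key layout, the `tenants/<id>/artifacts/` prefix, and the tenant ID format are all hypothetical conventions, not AWS requirements.

```python
import re

# Hypothetical tenant ID format: lowercase letters, digits, and hyphens.
_TENANT_ID = re.compile(r"[a-z0-9-]{1,64}")

def _check(tenant_id: str) -> str:
    if not _TENANT_ID.fullmatch(tenant_id):
        raise ValueError(f"invalid tenant id: {tenant_id!r}")
    return tenant_id

def session_key(tenant_id: str, session_id: str) -> dict:
    """Composite key so every item lives under its tenant's partition."""
    return {"pk": f"TENANT#{_check(tenant_id)}", "sk": f"SESSION#{session_id}"}

def artifact_key(tenant_id: str, name: str) -> str:
    """Object key under a per-tenant prefix; rejects path tricks in the name."""
    if "/" in name or name in {"", ".", ".."}:
        raise ValueError(f"invalid artifact name: {name!r}")
    return f"tenants/{_check(tenant_id)}/artifacts/{name}"

def assert_owned(tenant_id: str, object_key: str) -> None:
    """Guard for reads: refuse keys outside the caller's prefix."""
    if not object_key.startswith(f"tenants/{_check(tenant_id)}/"):
        raise PermissionError("object belongs to another tenant")
```

Application-level checks like these complement, rather than replace, IAM policies scoped to the same partitions and prefixes.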

2. Execution Isolation (Often Needed)

- Per-tenant concurrency limits

- Separate worker pools for premium tenants

- Rate limiters and circuit breakers

- Possibly separate AWS accounts for large customers

These protect against noisy neighbors.
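To illustrate the first and third items, here is a minimal single-process sketch that combines a per-tenant token bucket with a cap on in-flight requests. `TenantLimiter` and its parameters are hypothetical names; the class is not thread-safe and does not cover circuit breaking, and a production setup would more likely rely on shared state or managed throttling.

```python
import time
from collections import defaultdict

class TenantLimiter:
    """Per-tenant token bucket plus a cap on in-flight requests."""

    def __init__(self, rate_per_sec: float, burst: int, max_in_flight: int,
                 clock=time.monotonic):
        self.rate = rate_per_sec
        self.burst = burst
        self.max_in_flight = max_in_flight
        self.clock = clock  # injectable so tests can control time
        self.tokens = defaultdict(lambda: float(burst))
        self.last_seen = {}
        self.in_flight = defaultdict(int)

    def try_acquire(self, tenant_id: str) -> bool:
        now = self.clock()
        elapsed = now - self.last_seen.get(tenant_id, now)
        self.last_seen[tenant_id] = now
        # Refill this tenant's bucket only; other tenants are unaffected.
        self.tokens[tenant_id] = min(self.burst, self.tokens[tenant_id] + elapsed * self.rate)
        if self.tokens[tenant_id] < 1 or self.in_flight[tenant_id] >= self.max_in_flight:
            return False
        self.tokens[tenant_id] -= 1
        self.in_flight[tenant_id] += 1
        return True

    def release(self, tenant_id: str) -> None:
        """Call when a request finishes, whether it succeeded or failed."""
        self.in_flight[tenant_id] = max(0, self.in_flight[tenant_id] - 1)
```

Because every counter is keyed by tenant, one tenant exhausting its budget never reduces what another tenant can do.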

3. Runtime-Level Isolation (Sometimes Needed)

- Strong sandboxing

- Centralized policy enforcement

- Standardized audit controls

- Clear tenancy boundaries at the execution layer

This is where managed agent runtimes come in.

Default Architecture Path

For most startups in Stage 2:

Workflows

- AWS Step Functions

- Amazon EventBridge

- Temporal (if external orchestration is preferred)

Execution

- Amazon EKS becomes common here

- Amazon ECS for simpler models

Frameworks

- Strands Agents SDK for structured reasoning

- LangGraph for explicit control flow

- CrewAI only if real multi-agent specialization is needed

Workflow primitives are flexible. They let you iterate on product logic quickly while still giving you durable execution and retries.
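As an illustration of what "durable execution and retries" can look like, the sketch below builds an AWS Step Functions definition (Amazon States Language) as a Python dictionary. The state names, the timeout and retry values, and the Lambda ARN are placeholders chosen for the example, not recommendations.

```python
import json

# Hypothetical Lambda ARN; replace with your own function.
AGENT_STEP_ARN = "arn:aws:lambda:us-east-1:123456789012:function:agent-step"

def build_state_machine(agent_step_arn: str) -> dict:
    """Return a one-task workflow with retries and an explicit failure path."""
    return {
        "Comment": "One agent step with retries and a failure path",
        "StartAt": "RunAgentStep",
        "States": {
            "RunAgentStep": {
                "Type": "Task",
                "Resource": agent_step_arn,
                "TimeoutSeconds": 120,
                # Retry transient failures with exponential backoff.
                "Retry": [{
                    "ErrorEquals": ["States.TaskFailed", "States.Timeout"],
                    "IntervalSeconds": 2,
                    "MaxAttempts": 3,
                    "BackoffRate": 2.0,
                }],
                # Anything still failing is routed to a terminal Fail state.
                "Catch": [{"ErrorEquals": ["States.ALL"], "Next": "RecordFailure"}],
                "End": True,
            },
            "RecordFailure": {
                "Type": "Fail",
                "Error": "AgentStepFailed",
                "Cause": "Agent step failed after retries or with a non-retryable error",
            },
        },
    }

definition_json = json.dumps(build_state_machine(AGENT_STEP_ARN), indent=2)
```

The retry policy lives in the workflow definition rather than in agent code, so product logic can change without touching the reliability behavior.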

When to Adopt AgentCore in Stage 2

Amazon Bedrock AgentCore is an agentic platform for building and operating AI agents quickly, securely, and at scale. It provides runtime services such as secure tool access, memory, policy enforcement, and operational monitoring, so your team can focus on agent performance instead of building its own infrastructure layer.

Move to AgentCore earlier if two or more of the following are true:

- Enterprise deals depend on isolation guarantees

- Security reviews demand formal audit and tenancy models

- You are building your own policy enforcement and isolation glue

- Multiple agents or products need a shared runtime layer

- High concurrency requires standardized execution controls
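The checklist above amounts to a simple threshold rule, which can be written down so the team revisits it deliberately. This is only a sketch: the field names are hypothetical paraphrases of the five signals, and the threshold of two comes from the sentence introducing the list.

```python
from dataclasses import dataclass, fields

@dataclass
class AdoptionSignals:
    """One flag per checklist item (field names are illustrative)."""
    enterprise_deals_need_isolation: bool = False
    security_reviews_need_audit_and_tenancy: bool = False
    building_own_policy_and_isolation_glue: bool = False
    multiple_agents_need_shared_runtime: bool = False
    high_concurrency_needs_standard_controls: bool = False

def should_adopt_managed_runtime(signals: AdoptionSignals, threshold: int = 2) -> bool:
    """True when at least `threshold` signals are set."""
    true_count = sum(bool(getattr(signals, f.name)) for f in fields(signals))
    return true_count >= threshold
```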

Rule of thumb:

- Use workflow primitives while you are shaping the product

- Use AgentCore when you are standardizing operations

Goal: Reliable infrastructure with the right isolation.

Stage 3: “You Are Running an Agent Platform”

5,000+ customers | Enterprise exposure

In stage three, you are no longer building an agent; you are operating many agents across many tenants. Compliance requirements, cost attribution, and service level agreement (SLA) expectations are now part of the system. At this point, runtime-level isolation becomes a rational architectural choice.

Recommended Stack

Agent Runtime

- Amazon Bedrock AgentCore Runtime

- Alternatively, a custom control plane on Amazon EKS

Security

- Tool permissions scoped with AWS IAM

- Strong tenant boundaries

- Virtual private cloud (VPC) segmentation

Governance

- Per-tenant cost attribution

- Audit logging

- Centralized policy enforcement
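Per-tenant cost attribution can start as a small aggregation over usage events. The sketch below assumes each model call emits an event with `tenant_id`, `input_tokens`, and `output_tokens` (a hypothetical shape), and the prices are placeholder numbers, not real pricing.

```python
from collections import defaultdict
from decimal import Decimal

# Placeholder prices per 1,000 tokens; not real pricing.
PRICE_PER_1K = {"input": Decimal("0.003"), "output": Decimal("0.015")}

def attribute_costs(usage_events: list[dict]) -> dict[str, Decimal]:
    """Sum model spend per tenant from a list of usage events."""
    totals: dict[str, Decimal] = defaultdict(Decimal)
    for event in usage_events:
        cost = (
            Decimal(event["input_tokens"]) * PRICE_PER_1K["input"]
            + Decimal(event["output_tokens"]) * PRICE_PER_1K["output"]
        ) / 1000
        totals[event["tenant_id"]] += cost
    return dict(totals)
```

`Decimal` is used instead of floats so that many small charges add up without rounding drift.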

You have graduated from feature to platform.

AWS vs. Frameworks: Keep the Boundaries Clean

Use AWS for:

- Durable execution

- Isolation

- Identity

- Observability

- Governance

Use frameworks (Strands Agents SDK, LangChain, LangGraph, CrewAI) for:

- Structuring reasoning

- Tool composition

- Plan/execute patterns

Infrastructure problems belong to cloud primitives, while reasoning problems belong to agent frameworks. Mixing those layers often creates unnecessary complexity.

To learn more about AWS tools designed for building AI and agentic workflows, watch Matt Garman's keynote introducing Amazon Q Developer at AWS re:Invent 2025. Amazon Q is a developer-focused AI agent platform that helps you build and deploy unique applications faster.

Core Principle

Don't build an agent platform. Build an agent that earns the right to become a platform. Isolation, orchestration, and governance should be forced by customer growth, not by architectural ambition. An agent is a distributed system with a reasoning loop inside it. Add complexity only when reality demands it.

If you are an early-stage startup looking to innovate with agentic AI, AWS Activate can help you move from prototype to production. Our flagship startup program provides AWS credits, technical guidance, and architecture support, so you can focus on building agents that deliver value and grow into a platform as your business grows. Join our network of more than 350,000 startups worldwide and start scaling with AI agents today.
