Automate continuous model improvement with Amazon Rekognition Custom Labels and Amazon A2I: Part 1

If you need to integrate image analysis into your business process to detect objects or scenes unique to your business domain, you need to build your own custom machine learning (ML) model. Building a custom model requires advanced ML expertise and can be a technical challenge if you have limited ML knowledge. Because model performance can change over time as inference results change, you also need an automated ML workflow that continuously retrains the model with newly captured, human-labeled images. Incorporating a human review process and having access to a readily available human labeling workforce can pose a business challenge. In addition, you need to build flexibility into the ML workflow so that it can change without development rework as business objectives evolve. Developing a customizable ML workflow that behaves like a business rule engine requires significant upfront investment, which can be a resource challenge.

This post is the first in a two-part series that explains how to implement an automated Amazon Rekognition Custom Labels and Amazon Augmented AI (Amazon A2I) ML workflow that can provide continuous model improvement without requiring ML expertise.

With Amazon Rekognition Custom Labels, you can easily build and deploy ML models to identify custom objects that are specific to your business domain. Because Amazon Rekognition Custom Labels is built on existing Amazon Rekognition models, you need only a small set of training images to build your custom model, and no ML knowledge is required. When combined with Amazon A2I, you can quickly integrate a human review process into your ML workflow to capture and label images for model training. Amazon A2I lets you use your own private workforce, a contracted vendor workforce, or the readily accessible Amazon Mechanical Turk workforce to provide the human label review. With AWS Step Functions, you can create and run a series of checkpoints and event-driven processes to orchestrate the entire ML workflow with minimal upfront development. By incorporating AWS Systems Manager Parameter Store, you can use parameters as variables for Step Functions checkpoints to customize the behavior of the ML workflow as needed.

In this post, we explain how we use Step Functions and Parameter Store to allow a model operator to configure the ML workflow, similar to a business rule engine, without requiring development rework.

For this use case, we want to build an Amazon Rekognition Custom Labels model for custom logo detection from a set of training images. As we start using the model, we capture inference images with low detection confidence for human labeling. Captured images that can be properly labeled are added to the training images for model training as part of the continuous model improvement process.

Solution overview

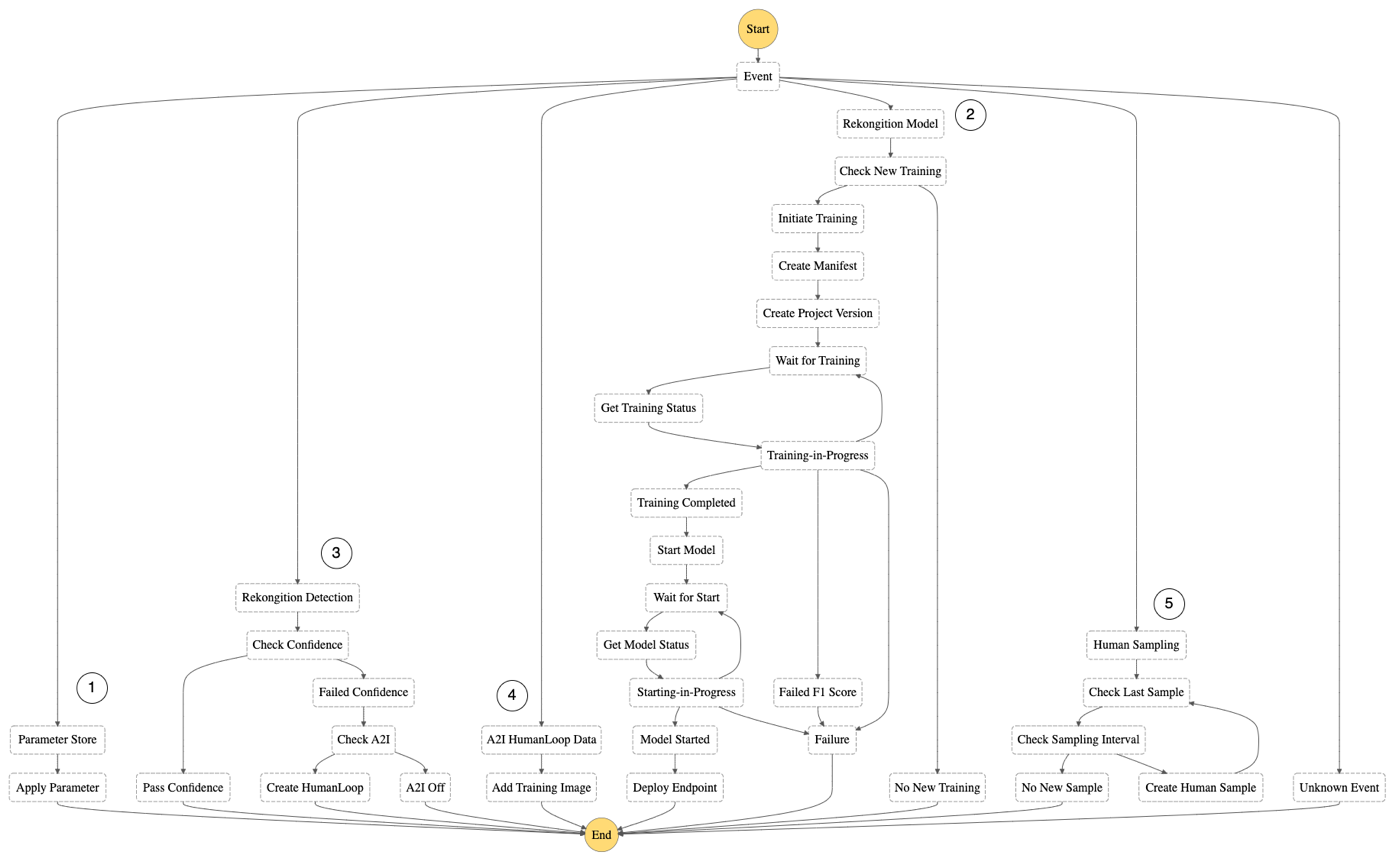

The objective of this ML workflow is to continuously improve the accuracy of the model based on inference performance. Specific to this Amazon Rekognition Custom Labels use case, inference images with a confidence level below the acceptance criteria need to be captured and labeled by a human workforce with Amazon A2I for new model training. The following diagram illustrates this ML workflow.

We add flexibility to this ML workflow by parameterizing some of the processes, as indicated in green in the preceding diagram. Parameterizing the workflow allows a model operator to change the processes without requiring development. We provide the following configurable parameters:

- Enable-Automatic-Training – A toggle switch to enable or disable automatic model training and deployment

- Automatic-Training-Poll-Frequency – The frequency to check for new model training

- Minimum-Untrained-Images – The minimum number of new images added since the last successful training for new training to start

- Minimum-F1-Score – The minimum F1 score for a trained model to be acceptable for deployment

- Minimum-Inference-Units – The minimum number of inference units to use to deploy the model

- Minimum-Label-Detection-Confidence – The minimum confidence of a detection to be considered acceptable

- Enable-A2I-Workflow – A toggle switch to enable or disable the Amazon A2I human labeling process

- Enable-Automatic-Human-Sampling – A toggle switch to enable or disable the human sampling workflow

- Automatic-Human-Sampling-Frequency – A timer to set a schedule to check for the criteria for new human sampling

- Human-Sampling-Interval – The minimum number of new inferences added since the last human sample
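Because Parameter Store holds these values as strings, whatever reads them has to convert and validate them before the workflow can act on them. The following minimal Python sketch shows one way to do that. The parameter names come from the preceding list; the `WorkflowConfig` class, the `load_config` and `parse_toggle` functions, the assumption that both frequency parameters are in minutes, and the assumed 0-100 range for the detection confidence are illustrative choices, not part of the published solution.

```python
from dataclasses import dataclass
from typing import Mapping


def parse_toggle(value: str) -> bool:
    """Interpret a toggle parameter such as Enable-A2I-Workflow."""
    normalized = value.strip().lower()
    if normalized in ("true", "yes", "on"):
        return True
    if normalized in ("false", "no", "off"):
        return False
    raise ValueError(f"Expected a true/false toggle, got {value!r}")


@dataclass(frozen=True)
class WorkflowConfig:
    enable_automatic_training: bool
    automatic_training_poll_frequency: int     # assumed unit: minutes
    minimum_untrained_images: int
    minimum_f1_score: float                    # F1 score, 0.0-1.0
    minimum_inference_units: int
    minimum_label_detection_confidence: float  # assumed 0-100, like Rekognition confidence
    enable_a2i_workflow: bool
    enable_automatic_human_sampling: bool
    automatic_human_sampling_frequency: int    # assumed unit: minutes
    human_sampling_interval: int


def load_config(params: Mapping[str, str]) -> WorkflowConfig:
    """Build a validated config from raw Parameter Store string values keyed by name."""
    config = WorkflowConfig(
        enable_automatic_training=parse_toggle(params["Enable-Automatic-Training"]),
        automatic_training_poll_frequency=int(params["Automatic-Training-Poll-Frequency"]),
        minimum_untrained_images=int(params["Minimum-Untrained-Images"]),
        minimum_f1_score=float(params["Minimum-F1-Score"]),
        minimum_inference_units=int(params["Minimum-Inference-Units"]),
        minimum_label_detection_confidence=float(params["Minimum-Label-Detection-Confidence"]),
        enable_a2i_workflow=parse_toggle(params["Enable-A2I-Workflow"]),
        enable_automatic_human_sampling=parse_toggle(params["Enable-Automatic-Human-Sampling"]),
        automatic_human_sampling_frequency=int(params["Automatic-Human-Sampling-Frequency"]),
        human_sampling_interval=int(params["Human-Sampling-Interval"]),
    )
    # Reject values the downstream checks could not use meaningfully.
    if not 0.0 <= config.minimum_f1_score <= 1.0:
        raise ValueError("Minimum-F1-Score must be between 0 and 1")
    if not 0.0 <= config.minimum_label_detection_confidence <= 100.0:
        raise ValueError("Minimum-Label-Detection-Confidence must be between 0 and 100")
    if config.minimum_inference_units < 1:
        raise ValueError("Minimum-Inference-Units must be at least 1")
    return config
```

In a deployed Lambda function, the `params` mapping could be assembled from the `Parameters` list returned by the boto3 SSM client's `get_parameters` call (each entry carries `Name` and `Value`); the deployed parameter names may also include a path prefix that this sketch does not handle.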

We use Step Functions to deploy the following state machine for the orchestration of the workflow.

The state machine is event-driven and divided into five separate states:

- The Parameter Store state is invoked by an Amazon EventBridge event pattern rule triggered by changes to certain parameters. When the model operator updates a parameter, the state machine invokes an AWS Lambda function to apply the changes to the impacted resources. For example, when the model operator sets the Enable-Automatic-Training parameter value to false, the state machine invokes a Lambda function to disable the automatic model training process.

- The Amazon Rekognition Model state is invoked by an EventBridge schedule rule on a polling schedule set by the model operator as a parameter. The state machine first evaluates whether the new model training criteria, set by the model operator as a parameter, have been met before initiating training. When training is complete, the state machine evaluates the F1 score of the new model against the acceptance criteria for model deployment. If the F1 score meets the acceptance criteria, the state machine initiates a blue/green deployment process, which starts the new model, updates the model inference endpoint to point to the new model, and stops the previously running model.

- The Amazon Rekognition Detection state is invoked by an Amazon Simple Storage Service (Amazon S3) PutObject event. When the model consumer uploads images to Amazon S3, a PutObject event invokes a Lambda function that redirects the required action to the state machine. The state machine invokes another Lambda function to perform the custom label detection. Next, the state machine evaluates the inference confidence against the confidence level set by the model operator as a parameter. If the inference confidence is below that level and the Amazon A2I workflow is enabled, the state machine initiates an Amazon A2I human review workflow.

- The A2I Human Loop Data state is invoked by an S3 PutObject event. When the Amazon A2I human review workflow is complete, Amazon A2I stores the output in Amazon S3 by default. An S3 PutObject event invokes a Lambda function that redirects the required action to the state machine. The state machine invokes another Lambda function to evaluate the human loop response and place a copy of the initial image into the S3 folder corresponding to the evaluated label. This is how new human-labeled images are added to the training dataset.

- The Human Sampling state is invoked by an EventBridge schedule rule on a polling schedule set by the model operator as a parameter. The state machine invokes a Lambda function that first queries the image inference log to find the last human-sampled inference, and then searches for the next qualified inference to sample based on the sampling interval set by the model operator. If an inference qualifies, it is marked as “sampled” and a human review workflow is created. The process repeats until all qualified inferences in the same invocation are marked for human sampling.
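The Choice checkpoints in the Amazon Rekognition Model and Amazon Rekognition Detection states reduce to a few comparisons against the operator's parameters. The following Python sketch expresses those gates as plain functions so the logic is easy to test. The function names and arguments are hypothetical, and we assume that "inference confidence" means the highest confidence among the labels returned for an image, and that an image with no detected labels counts as low confidence.

```python
from typing import Dict, List


def should_start_training(enabled: bool, untrained_images: int,
                          minimum_untrained_images: int, training_in_progress: bool) -> bool:
    """Rekognition Model state: train only when enabled, idle, and enough new images exist."""
    return enabled and not training_in_progress and untrained_images >= minimum_untrained_images


def should_deploy(f1_score: float, minimum_f1_score: float) -> bool:
    """Rekognition Model state: start blue/green deployment only if the F1 threshold is met."""
    return f1_score >= minimum_f1_score


def needs_human_review(custom_labels: List[Dict], minimum_confidence: float,
                       a2i_enabled: bool) -> bool:
    """Rekognition Detection state: decide whether to start an Amazon A2I human loop.

    custom_labels is assumed to look like the CustomLabels list returned by
    DetectCustomLabels, for example [{"Name": "logo-a", "Confidence": 63.2}].
    """
    if not a2i_enabled:
        return False
    if not custom_labels:
        # Assumption: an image with no detected label is treated as low confidence.
        return True
    top_confidence = max(label["Confidence"] for label in custom_labels)
    return top_confidence < minimum_confidence
```

Keeping these checks as pure functions (rather than burying them inside the Lambda handlers) makes it straightforward to unit test how a parameter change alters the workflow's behavior.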

Architecture overview

This solution is built on AWS serverless architecture. The architecture is shown in the following diagram.

We use Amazon Rekognition Custom Labels as the core ML service. An Amazon Rekognition Custom Labels project is created as part of the initial AWS CloudFormation deployment process. Project versions are created, started, and stopped automatically by Lambda functions that the state machine orchestrates.

We use Amazon A2I to provide a human labeling workflow to label captured images during the inference process. A flow definition is created as part of the CloudFormation stack. An Amazon A2I human labeling task is generated by Lambda as part of the custom label detection process.
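To show what generating a human labeling task involves, the following Python sketch builds the request for the Amazon A2I runtime `StartHumanLoop` operation. The function name, the `logo-review` name prefix, and the `taskObject` and `labels` input keys are hypothetical: the input keys must match whatever the worker task template referenced by the flow definition actually reads. The name check reflects the human loop naming constraint (lowercase alphanumerics and hyphens, at most 63 characters) as we understand it; confirm it against the API reference.

```python
import json
import re
import uuid
from typing import List

# Assumed naming rule for human loops: lowercase alphanumerics and hyphens, 1-63 chars.
_HUMAN_LOOP_NAME = re.compile(r"^[a-z0-9](-*[a-z0-9])*$")


def build_human_loop_request(flow_definition_arn: str, image_s3_uri: str,
                             candidate_labels: List[str],
                             name_prefix: str = "logo-review") -> dict:
    """Return keyword arguments for the A2I runtime StartHumanLoop call."""
    # A random suffix keeps each human loop name unique.
    name = f"{name_prefix}-{uuid.uuid4().hex}"
    if len(name) > 63 or not _HUMAN_LOOP_NAME.match(name):
        raise ValueError(f"Invalid human loop name: {name}")
    # Hypothetical keys; they must match the worker task template.
    task_input = {"taskObject": image_s3_uri, "labels": candidate_labels}
    return {
        "HumanLoopName": name,
        "FlowDefinitionArn": flow_definition_arn,
        # InputContent is a JSON string, not a nested object.
        "HumanLoopInput": {"InputContent": json.dumps(task_input)},
    }
```

In a Lambda function, the returned dictionary could be passed as `boto3.client("sagemaker-a2i-runtime").start_human_loop(**request)`.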

We optionally deploy an Amazon SageMaker Ground Truth private workforce and team, if none exists, as part of the CloudFormation stack. The human flow definition depends on the Ground Truth private team to function.

We optionally deploy an Amazon Cognito user pool and app client, if none exists, as part of the CloudFormation stack. The Ground Truth private workforce depends on the user pool and app client to function.

We use Parameter Store in two different ways. First, we provide a set of single-value parameters that the model operator uses to configure the ML workflow. Second, we provide a JSON-based parameter that the system uses to store environment variables and operational data.

We use two EventBridge rules to initiate Step Functions state machine runs. The first rule is based on a Systems Manager event pattern. The Systems Manager rule is triggered by changes to the Parameter Store and initiates the state machine to invoke a Lambda function to apply changes to the impacted resources. The second rule is a schedule rule. The schedule rule is triggered periodically to initiate the state machine to invoke a Lambda function to check for new model training.
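The two rule types can be sketched as the strings EventBridge expects. The following Python sketch builds an event pattern that matches Parameter Store update events (source `aws.ssm`, detail type `Parameter Store Change`) for a given list of parameter names, and converts a polling frequency into a `rate(...)` schedule expression. The function names are hypothetical, and treating Automatic-Training-Poll-Frequency as a number of minutes is our assumption.

```python
import json
from typing import List


def parameter_change_pattern(parameter_names: List[str]) -> str:
    """Event pattern matching updates to the listed Parameter Store parameters."""
    pattern = {
        "source": ["aws.ssm"],
        "detail-type": ["Parameter Store Change"],
        "detail": {"name": parameter_names, "operation": ["Update"]},
    }
    return json.dumps(pattern)


def rate_expression(minutes: int) -> str:
    """Convert a poll frequency in minutes into an EventBridge rate expression."""
    if minutes < 1:
        raise ValueError("Poll frequency must be at least one minute")
    # Use the largest whole unit; EventBridge requires the singular unit for a value of 1.
    if minutes % 1440 == 0:
        value, unit = minutes // 1440, "day"
    elif minutes % 60 == 0:
        value, unit = minutes // 60, "hour"
    else:
        value, unit = minutes, "minute"
    return f"rate({value} {unit if value == 1 else unit + 's'})"
```

These strings could be supplied as the `EventPattern` or `ScheduleExpression` argument of the boto3 EventBridge `put_rule` call; for example, when the operator changes the poll frequency, the Parameter Store state could re-run `put_rule` on the schedule rule with the new expression.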

We use an Amazon DynamoDB NoSQL database to log all Amazon Rekognition and Amazon A2I events and results for performance analysis and model drift detection. Although we did not include an analytics feature in this example, you can use AWS Glue and Amazon Athena to run interactive, ad hoc SQL queries against the inference logs. With Amazon QuickSight, you can create a real-time analytics dashboard to visualize the inference logs.
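The Human Sampling state described earlier searches this inference log for the last sampled inference and then marks every inference that falls a full sampling interval after it. The following Python sketch applies that rule to an in-memory list instead of a DynamoDB query. The `InferenceRecord` type and its monotonically increasing `sequence` field are hypothetical stand-ins for whatever ordering the real log uses.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class InferenceRecord:
    sequence: int          # hypothetical ordering key for inferences in the log
    image_key: str
    sampled: bool = False


def select_for_sampling(records: List[InferenceRecord], interval: int) -> List[InferenceRecord]:
    """Mark and return every inference that is `interval` inferences after the previous sample."""
    if interval < 1:
        raise ValueError("Human-Sampling-Interval must be at least 1")
    ordered = sorted(records, key=lambda record: record.sequence)
    # Position of the most recent human sample, or -1 if nothing has been sampled yet.
    last_index = max((i for i, record in enumerate(ordered) if record.sampled), default=-1)
    selected = []
    next_index = last_index + interval
    # Repeat until no remaining inference qualifies in this invocation.
    while next_index < len(ordered):
        record = ordered[next_index]
        record.sampled = True
        selected.append(record)
        next_index += interval
    return selected
```

For example, with an interval of 3 and seven unsampled inferences, the third and sixth inferences are marked; a later invocation with two more inferences marks only the ninth.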

We use a Step Functions state machine to orchestrate the ML workflow. The state machine initiates different processes based on events received from EventBridge and responses from Lambda. In addition, the state machine uses built-in states such as Wait, to pause while model training and deployment complete, and Choice, to determine the next task.
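A Wait/Choice loop of this kind can be written in Amazon States Language. The following Python sketch builds one such fragment as a dictionary: it waits, calls a status-checking Lambda function, loops until training completes or fails, and then compares the F1 score with the operator's threshold using `NumericGreaterThanEqualsPath`. The Lambda function and its `{"Status": ..., "F1Score": ...}` output shape, the `$.config.MinimumF1Score` input path, and the state names are all hypothetical, and `DeployModel` is only a placeholder for the blue/green deployment tasks.

```python
def build_training_poll_definition(check_status_lambda_arn: str, wait_seconds: int = 300) -> dict:
    """Return an Amazon States Language definition for a training poll loop and F1 gate."""
    return {
        "Comment": "Poll model training, then gate deployment on the F1 score",
        "StartAt": "WaitForTraining",
        "States": {
            "WaitForTraining": {"Type": "Wait", "Seconds": wait_seconds,
                                "Next": "CheckTrainingStatus"},
            "CheckTrainingStatus": {
                "Type": "Task",
                "Resource": check_status_lambda_arn,  # hypothetical status-check Lambda
                "ResultPath": "$.training",
                "Next": "EvaluateTrainingStatus",
            },
            "EvaluateTrainingStatus": {
                "Type": "Choice",
                "Choices": [
                    {"Variable": "$.training.Status", "StringEquals": "TRAINING_COMPLETED",
                     "Next": "EvaluateF1Score"},
                    {"Variable": "$.training.Status", "StringEquals": "TRAINING_FAILED",
                     "Next": "TrainingFailed"},
                ],
                # Any other status (for example TRAINING_IN_PROGRESS) loops back to Wait.
                "Default": "WaitForTraining",
            },
            "EvaluateF1Score": {
                "Type": "Choice",
                "Choices": [
                    {"Variable": "$.training.F1Score",
                     "NumericGreaterThanEqualsPath": "$.config.MinimumF1Score",
                     "Next": "DeployModel"},
                ],
                "Default": "ModelRejected",
            },
            # Placeholder for the blue/green deployment tasks.
            "DeployModel": {"Type": "Pass", "End": True},
            "ModelRejected": {"Type": "Succeed"},
            "TrainingFailed": {"Type": "Fail", "Error": "TrainingFailed",
                               "Cause": "Amazon Rekognition Custom Labels training failed"},
        },
    }
```

Serialized with `json.dumps`, the result could be passed as the `definition` argument of the boto3 Step Functions `create_state_machine` call, although the real solution deploys its state machine through CloudFormation.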

We use an S3 bucket and a set of predefined folders to store training and inference images and model artifacts. Each folder has a dedicated purpose. The model operator uploads new images to the folder images_labeled_by_folder for training, and the model consumer uploads inference images to the folder images_for_detection for custom label detection.
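The folder name images_labeled_by_folder suggests that each training image's label comes from the subfolder it sits in, which is also where the A2I Human Loop Data state copies newly labeled images. The following Python sketch assumes a layout of `images_labeled_by_folder/<label>/<file>` and turns a list of keys into a JSON Lines manifest in the SageMaker Ground Truth image-classification format that Amazon Rekognition Custom Labels accepts for image-level labels. The `logo-label` attribute name, the job name, and the fixed confidence of 1 are our assumptions; check the required fields against the Custom Labels manifest documentation.

```python
import json
from datetime import datetime, timezone
from typing import List

TRAINING_PREFIX = "images_labeled_by_folder/"


def label_from_key(key: str) -> str:
    """Return the label folder from a key shaped like images_labeled_by_folder/<label>/<file>."""
    if not key.startswith(TRAINING_PREFIX):
        raise ValueError(f"{key} is not under {TRAINING_PREFIX}")
    parts = key[len(TRAINING_PREFIX):].split("/")
    if len(parts) != 2 or not all(parts):
        raise ValueError(f"{key} does not match <label>/<file>")
    return parts[0]


def build_manifest(bucket: str, keys: List[str], attribute: str = "logo-label") -> str:
    """Build JSON Lines manifest text with one image-level classification entry per image."""
    labels = sorted({label_from_key(key) for key in keys})
    class_ids = {name: index for index, name in enumerate(labels)}
    created = datetime.now(timezone.utc).strftime("%Y-%m-%dT%H:%M:%S")
    lines = []
    for key in keys:
        label = label_from_key(key)
        entry = {
            "source-ref": f"s3://{bucket}/{key}",
            attribute: class_ids[label],
            f"{attribute}-metadata": {
                "confidence": 1,  # folder labels are treated as certain
                "job-name": f"labeling-job/{attribute}",
                "class-name": label,
                "human-annotated": "yes",
                "creation-date": created,
                "type": "groundtruth/image-classification",
            },
        }
        lines.append(json.dumps(entry))  # one JSON object per line
    return "\n".join(lines) + "\n"
```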

We use three different sets of Lambda functions:

- The first set consists of two Lambda functions that build the Amazon Rekognition Custom Labels project and Amazon A2I human flow definition. These two Lambda functions are only used initially as part of the CloudFormation stack deployment process.

- The second set of Lambda functions are invoked by the state machine to run Amazon Rekognition and Amazon A2I APIs, create manifest files for training, collect labeled images for training, and manage system resources.

- The last set is a single Lambda function that redirects S3 PutObject events to the state machine.
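The redirect function described in the last item mainly has to work out which state an S3 object belongs to. The following Python sketch reads the bucket and key from each record of an S3 event notification, decodes the URL-encoded key, and maps the key prefix to an action for the state machine. The images_for_detection prefix comes from this post; the `a2i_output/` prefix, the action names, and the shape of the state machine input are hypothetical.

```python
import json
from typing import Dict, List
from urllib.parse import unquote_plus

# images_for_detection/ is from the post; a2i_output/ is a hypothetical A2I output prefix.
ROUTES = {
    "images_for_detection/": "RekognitionDetection",
    "a2i_output/": "A2IHumanLoopData",
}


def route_s3_event(event: Dict) -> List[Dict]:
    """Translate S3 event records into state machine inputs, skipping unrelated keys."""
    inputs = []
    for record in event.get("Records", []):
        bucket = record["s3"]["bucket"]["name"]
        # Object keys in S3 event notifications are URL-encoded (spaces arrive as '+').
        key = unquote_plus(record["s3"]["object"]["key"])
        for prefix, action in ROUTES.items():
            if key.startswith(prefix):
                inputs.append({"action": action, "bucket": bucket, "key": key})
                break
    return inputs


def handler(event, context):
    """Lambda entry point; returns the serialized inputs it would start executions with."""
    return {"executions": [json.dumps(item) for item in route_s3_event(event)]}
```

In a deployed function, each serialized input would typically be sent with the boto3 Step Functions `start_execution(stateMachineArn=..., input=...)` call instead of being returned.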

We use Amazon Simple Notification Service (Amazon SNS) as a communication mechanism to alert the model operator and model consumer of relevant model training and detection events. All SNS messages are published by the corresponding Lambda functions.

Conclusion

In this post, we walked through a continuous model improvement ML workflow with Amazon Rekognition Custom Labels and Amazon A2I. We explained how we use Step Functions to orchestrate model training and deployment, as well as custom label detection backed by a private human labeling workforce. We also described how we use Parameter Store to parameterize the ML workflow, which provides flexibility without requiring development rework.

In Part 2 of this series, we provide step-by-step instructions to deploy the solution with AWS CloudFormation.

About the Authors

Les Chan is a Sr. Enterprise Solutions Architect at Amazon Web Services. He helps AWS Partners enable their AWS technical capacities and build solutions around AWS services. His expertise spans application architecture, DevOps, serverless, and machine learning.

Daniel Duplessis is a Principal Partner Solutions Architect at Amazon Web Services, based out of Toronto. He helps AWS Partners and customers in enterprise segments build solutions using AWS services. His favorite technical domains are serverless and machine learning.
