AWS Partner Network (APN) Blog

Migrating ETL Operations from SSIS Packages to AWS Lambda Functions

By Saleha Haider, Sr. Partner Solutions Architect at AWS

By Jeffrey Bramlett, Technical Architect at Cognizant

By Maruthi Turaga, Sr. Full Stack Engineer at Cognizant

|

|

|

Many Windows solutions have used Microsoft SQL Server Integration Services (SSIS) as a method for performing extract, transform, and load (ETL) operations. Legacy SSIS packages that have been handed down to different developers over the years can be complex, cumbersome, and difficult to support.

SSIS packages typically execute on a local machine, and are integral to data integration in organizations. These are part of a bigger process around ETL operations.

A cloud-based solution can provide a simpler way of doing the same work, creating a process for disengaging from specific data systems, and making the development and maintenance of these processes easier to manage.

However, proprietary technologies such as SSIS packages can complicate efforts to move to cloud-native services such as Amazon Relational Database Services (Amazon RDS).

Cognizant is an AWS Partner Network (APN) Premier Consulting Partner and AWS Managed Service Provider. Cognizant has AWS Competencies in Healthcare, Life Sciences, Migration, and Financial Services, in addition to the AWS Lambda Service Delivery designation.

In this post, we’ll describe an architecture and several techniques Cognizant uses to help clients with ETL operations based on legacy SSIS packaging move their applications to the Amazon Web Services (AWS) Cloud.

Migrating from SSIS Packages to AWS Lambda Functions

A web-based toy store was receiving updated promotional prices for children’s toys and related products it sold on its web site. To modernize the company’s web application, Cognizant recommend migrating to an Amazon Aurora database service.

To accomplish this task, the team at Cognizant refactored the SSIS packages using Amazon Simple Storage Service (Amazon S3), Amazon Simple Queue Service (SQS), and AWS Lambda.

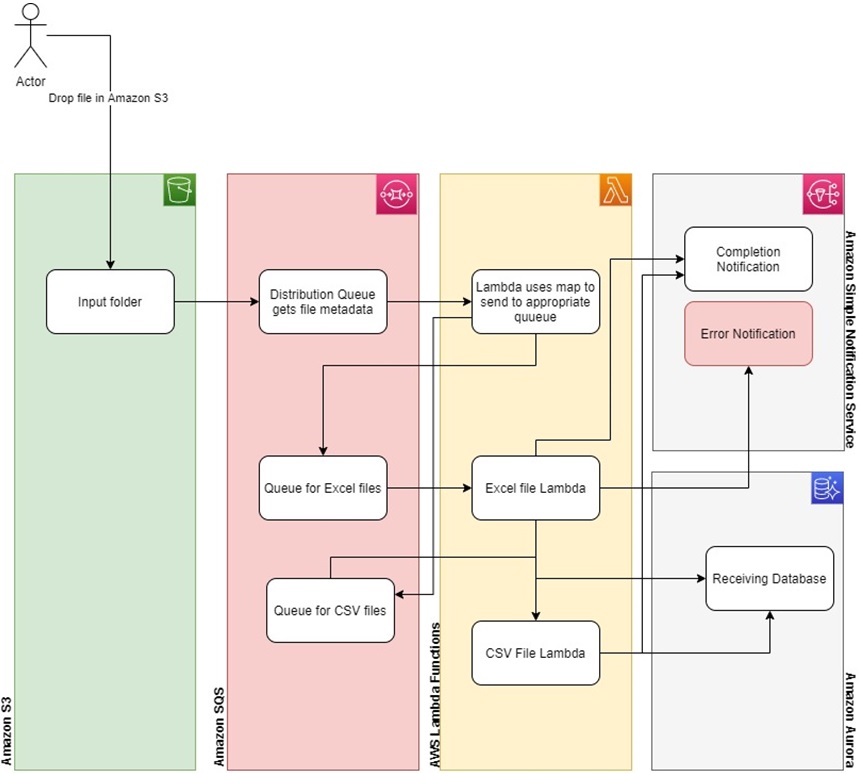

Figure 1 – Flow of new, cloud-native application.

This solution uses the “new/create” event for an Amazon S3 location to start the processing of input files. The file metadata is passed to the listening queue (SQS) and Lambda functions, but the files themselves are not. Queues in Amazon SQS are used to persist the messages and distribute the file metadata to the Lambda functions, which can scale in and out based on policies.

The distribution queue maintains the new file metadata and forwards this to the waiting bus Lambda function. This Lambda function applies a map to the file metadata so it forwards the file metadata to the appropriate queues (SQS), either the Excel file queue or the CSV file queue. Once forwarded, the Lambda function reads the file and takes the appropriate actions.

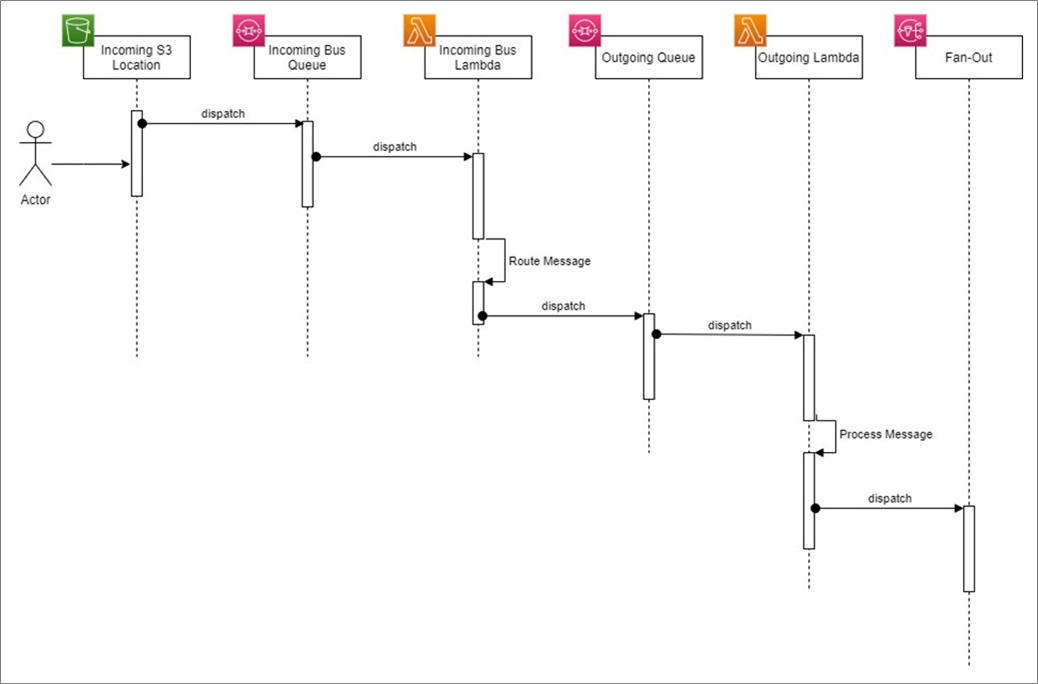

Figure 2 – Application processing sequence.

Handling failure scenarios

- Exceptions can occur in any of the Lambda functions as they execute. Handling these failures can take different forms. Each needs to be considered separately to respond appropriately to the failure.

- The client can send a fan-out notification using Amazon Simple Notification Service (SNS) to initiate multiple actions for each of the events. For example, an SNS message by email can inform of the failure and re-submit to the S3 bucket.

Post-processing recommendations

- The S3 input location only forwards new file information to the routing queue. The file can remain in this location and not be processed again. This location can be cleaned up using a policy to move the files into Amazon S3 Glacier on a pre-determined schedule.

Solution Architecture

Next, we’ll show you the legacy architecture, followed by the new architecture.

Legacy architecture

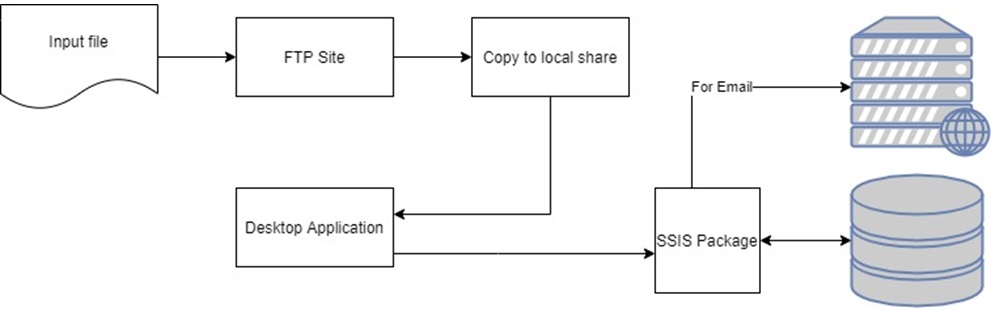

Users at the toy store company posted files into an FTP site for processing. This event triggered a copy of those files to a local share on a pre-determined server. Running on this server was a local service or application that watched the file location and, when the file was detected, engaged the SSIS package run files.

Figure 3 – Legacy SSIS-based architecture.

Cloud-native architecture

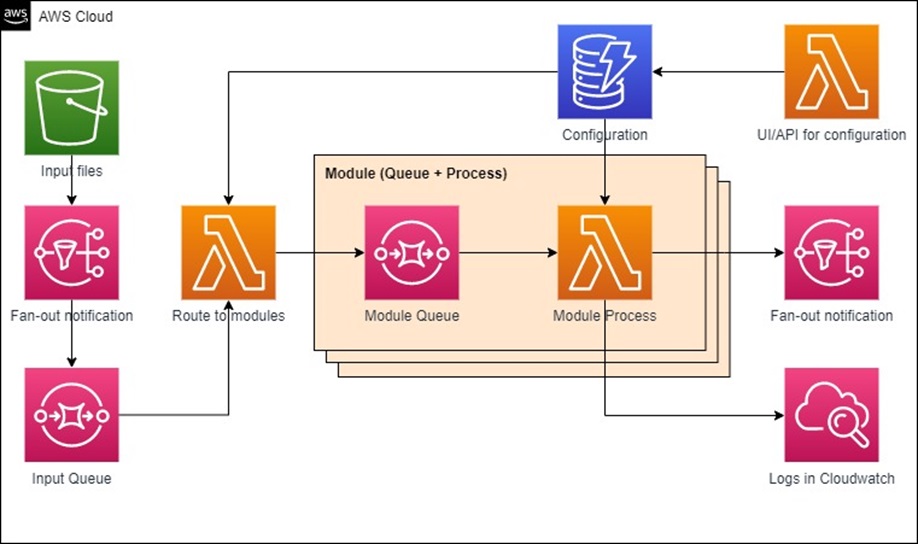

As shown in Figure 4 below, instead of using an FTP site, the new architecture uses an Amazon S3 location, and attaches events for any new files to that location. The new file event pushes the file metadata to either a fan-out notification (which allows for multiple actions), or directly to the queue for the routing Lambda function.

Figure 4 – Cloud-native architecture.

The routing Lambda function gets the file metadata and applies some mapping logic to forward the file metadata to the appropriate queues for processing.

The processing queues (module) forward the file metadata to the processing Lambda function or maybe an AWS Step Function (ordered Lambdas with state) to be processed.

The processing Lambda functions perform the work on the file contents, and send out logs and notifications, as required.

For managing application state, you can use a data store to maintain state info for example in Amazon DynamoDB.

Even though SSIS is supported in Amazon RDS for SQL Server, you may want to modernize your ETL processing using AWS Lambda and .NET Core.

Sample Code for Reading Amazon S3 Messages

In the sample use case scenario, a file is uploaded to Amazon S3, which triggers a message to be written into the SQS queue configured. Based on the incoming SQS message, the configured Lambda function is invoked.

The following sample code from the Lambda function allows you to read the file from the S3 bucket based on message notification from an S3 event, following AWS serverless best practices.

Complete code for the sample is available on GitHub.

Lessons Learned

One of the biggest challenges to the modernization of the client’s application was the complexity of the SSIS processes. The SSIS packages were coupled to SQL Server objects to make a shared system for processing, which created complex dependencies.

When planning the modernization, the client had to consider database object changes and/or running SSIS packages. Their focus was always to simplify the process as they modernized.

Other challenges were deciding where and how to slice the AWS Lambda functions to fit into the requirements of 3GB max memory, and to be mindful of any long-running processes that could take more than 15 minutes to process.

Here are a few more challenges the client faced, followed by Cognizant’s recommendations:

Managing state

SSIS packages manage state values that are changed and updated while the package is being run.

Possible solutions:

- “State” can be managed as an object(s) and into an AWS Lambda function or a Step Function as JSON text.

- “State” can be managed from the API.

- “State” can be managed using Amazon S3.

Branching logic

SSIS packages may contain if/else logic based on values in the package state values.

Possible solutions:

- Branch execution in the Lambda implementation that may include calling other Lambda functions or Step Functions.

- Use declarative branching logic in the Lambda function runtime.

Looping

SSIS packages can perform loops with execution occurring within the loop.

Possible solutions:

- Implementing the loop in the Lambda function.

- Using a Step Function that checks the state before running (“short circuit”).

Local file storage usage

Local files are being used to a variety of purposes, posing a problem when converting an SSIS package. These local files include other SSIS packages or data files.

Possible solutions:

- Design to exclude the local files, possibly using Step Functions or other Lambda functions for other SSIS packages.

- Put data files in S3 instead of local files.

“Look Ups” from existing tables

SSIS packages typically use in-line SQL to get values from an existing database. These values are used for actions including calculations, values in the “state” being used by the SSIS execution, further in-line SQL, and execution of stored procedures and/or user-defined functions.

Possible solutions:

- Design around requesting values, where possible.

- Use an API service (also an AWS Lambda function) to get these values. The implementation could then change independent of the Lambda function.

- Use Amazon S3 to store the data.

- Use Amazon DynamoDB for document-based data.

- Use Amazon Aurora for stored data to query.

- Use Amazon ElastiCache, or memorialize responses that are commonly using stored procedures or user-defined functions.

Conclusion

A cloud-based solution for ETL operations is a simpler way of doing the same work, creating a process for disengaging from specific data systems, and making the development and maintenance of these processes easier to manage. However, legacy SSIS packages that are complex, cumbersome, and difficult to support can complicate migration of ETL processes to cloud-native.

Re-factoring or re-engineering legacy processes based on SSIS packages using the architecture based on AWS Lambda functions, Amazon SQS, and Amazon S3, can simplify your modernization and help you overcome the challenges posed by legacy systems.

Resources

- Sample code on GitHub

- Building Lambda functions with C#

- Announcing AWS Lambda support for .NET Core 3.1

- Configuring Amazon S3 event notifications

.

.

Cognizant – APN Partner Spotlight

Cognizant is an APN Premier Consulting Partner. They transform customers’ business, operating, and technology models for the digital era by helping organizations envision, build, and run more innovative and efficient businesses.

Contact Cognizant | Practice Overview

*Already worked with Cognizant? Rate this Partner

*To review an APN Partner, you must be an AWS customer that has worked with them directly on a project.