Artificial Intelligence

Tag: Natural Language Processing

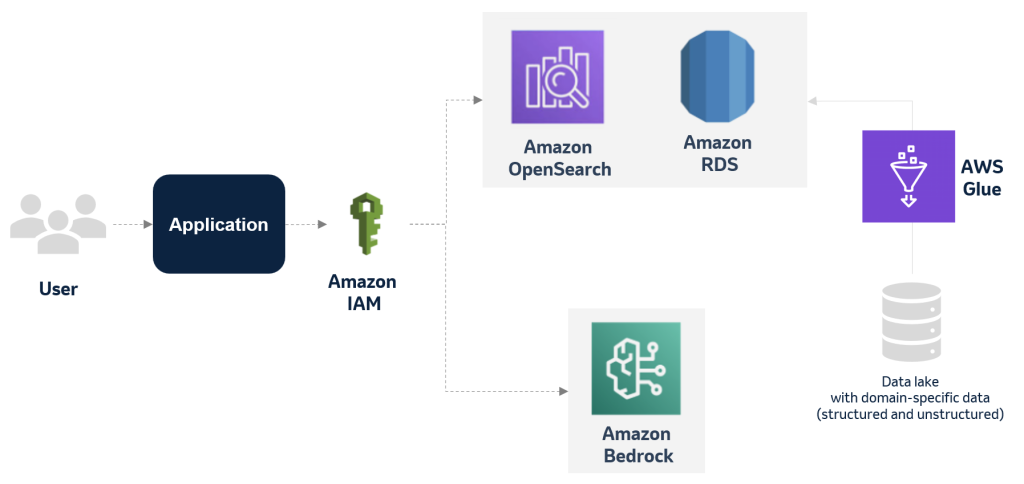

MSD explores applying generative AI to improve the deviation management process using AWS services

This blog post explores how MSD is harnessing the power of generative AI and databases to optimize and transform its manufacturing deviation management process. By creating an accurate and multifaceted knowledge base of past events, deviations, and findings, the company aims to significantly reduce the time and effort required for each new case while maintaining the highest standards of quality and compliance.

Choosing the right approach for generative AI-powered structured data retrieval

In this post, we explore five different patterns for implementing LLM-powered structured data query capabilities on AWS, including direct conversational interfaces, BI tool enhancements, and custom text-to-SQL solutions.

A generative AI prototype with Amazon Bedrock transforms life sciences and the genome analysis process

This post explores deploying a text-to-SQL pipeline using generative AI models and Amazon Bedrock to query a genomics database with natural language questions. We demonstrate how to implement an AI assistant web interface with AWS Amplify and explain the prompt engineering strategies adopted to generate the SQL queries. Finally, we present instructions to deploy the service in your own AWS account.
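As a rough illustration of the text-to-SQL idea summarized above, the following sketch builds a schema-grounded prompt and runs the returned query only if it is a single read-only `SELECT`. It is not the pipeline from the post: the `variants` table, the helper names, and the hard-coded "generated" SQL are all hypothetical, and SQLite stands in for the genomics database.

```python
import sqlite3


def build_text_to_sql_prompt(schema_ddl: str, question: str) -> str:
    """Assemble a prompt that grounds the model in the table schema."""
    return (
        "You are a SQL assistant. Use only the tables and columns below.\n"
        f"Schema:\n{schema_ddl}\n"
        f"Question: {question}\n"
        "Return a single SQLite SELECT statement and nothing else."
    )


def run_generated_sql(conn: sqlite3.Connection, sql: str) -> list:
    """Execute model-generated SQL only if it is one read-only SELECT."""
    statement = sql.strip().rstrip(";")
    # Conservative guard: reject anything that is not a lone SELECT.
    if not statement.lower().startswith("select") or ";" in statement:
        raise ValueError("only a single SELECT statement is allowed")
    return conn.execute(statement).fetchall()


# Hypothetical schema and rows, used only to make the sketch runnable.
SCHEMA = "CREATE TABLE variants (gene TEXT, chromosome TEXT, position INTEGER)"
conn = sqlite3.connect(":memory:")
conn.execute(SCHEMA)
conn.executemany(
    "INSERT INTO variants VALUES (?, ?, ?)",
    [("BRCA1", "17", 43044295), ("TP53", "17", 7668402), ("CFTR", "7", 117559590)],
)

prompt = build_text_to_sql_prompt(SCHEMA, "How many variants are on chromosome 17?")
# In a real pipeline the prompt would be sent to a model; this string is an
# assumed stand-in for the model's reply.
generated_sql = "SELECT COUNT(*) FROM variants WHERE chromosome = '17';"
rows = run_generated_sql(conn, generated_sql)
```

Putting the schema in the prompt and validating the statement before execution are two common safeguards; a production system would likely add more, such as read-only database credentials.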

Build an AI-powered document processing platform with open source NER model and LLM on Amazon SageMaker

In this post, we discuss how you can build an AI-powered document processing platform with open source NER and LLMs on SageMaker.

Reducing hallucinations in LLM agents with a verified semantic cache using Amazon Bedrock Knowledge Bases

This post introduces a solution to reduce hallucinations in Large Language Models (LLMs) by implementing a verified semantic cache using Amazon Bedrock Knowledge Bases, which checks if user questions match curated and verified responses before generating new answers. The solution combines the flexibility of LLMs with reliable, verified answers to improve response accuracy, reduce latency, and lower costs while preventing potential misinformation in critical domains such as healthcare, finance, and legal services.
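The check-before-generate flow described above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not the post's implementation: a toy bag-of-words vector replaces a real embedding model, an in-memory list replaces Amazon Bedrock Knowledge Bases, and the class name, the `0.8` threshold, and the `generate` callback are hypothetical.

```python
import math
from collections import Counter


def embed(text: str) -> Counter:
    """Toy bag-of-words vector; a stand-in for a real embedding model."""
    return Counter(text.lower().replace("?", "").split())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse term-count vectors."""
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


class VerifiedSemanticCache:
    """Holds curated question/answer pairs and matches by similarity."""

    def __init__(self, verified_qa: dict, threshold: float = 0.8):
        self.entries = [(embed(q), a) for q, a in verified_qa.items()]
        self.threshold = threshold  # assumed cutoff; tune for real data

    def lookup(self, question: str):
        """Return the best verified answer, or None below the threshold."""
        query = embed(question)
        best_score, best_answer = 0.0, None
        for vector, answer in self.entries:
            score = cosine(query, vector)
            if score > best_score:
                best_score, best_answer = score, answer
        return best_answer if best_score >= self.threshold else None


def answer(question: str, cache: VerifiedSemanticCache, generate):
    """Serve a verified answer when one matches; otherwise call the model."""
    cached = cache.lookup(question)
    if cached is not None:
        return cached, "cache"
    return generate(question), "llm"
```

A cache hit skips the model call entirely, which is where the latency and cost savings come from; a miss falls through to normal generation.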

How Aetion is using generative AI and Amazon Bedrock to translate scientific intent to results

Aetion is a leading provider of decision-grade real-world evidence software to biopharma, payors, and regulatory agencies. In this post, we review how Aetion is using Amazon Bedrock to help streamline the analytical process toward producing decision-grade real-world evidence and enable users without data science expertise to interact with complex real-world datasets.

How Aetion is using generative AI and Amazon Bedrock to unlock hidden insights about patient populations

In this post, we review how Aetion’s Smart Subgroups Interpreter enables users to interact with Smart Subgroups using natural language queries. Powered by Amazon Bedrock and Anthropic’s Claude 3 large language models (LLMs), the interpreter responds to user questions expressed in conversational language about patient subgroups and provides insights to generate further hypotheses and evidence.

Using natural language in Amazon Q Business: From searching and creating ServiceNow incidents and knowledge articles to generating insights

In this post, we’ll demonstrate how to configure an Amazon Q Business application and add a custom plugin that gives users the ability to use a natural language interface provided by Amazon Q Business to query real-time data and take actions in ServiceNow.

Reducing hallucinations in large language models with custom intervention using Amazon Bedrock Agents

This post demonstrates how to use Amazon Bedrock Agents, Amazon Bedrock Knowledge Bases, and the RAGAS evaluation metrics to build a custom hallucination detector and remediate detected hallucinations with a human in the loop. The agentic workflow can be extended to custom use cases through different hallucination remediation techniques and offers the flexibility to detect and mitigate hallucinations using custom actions.

Build generative AI applications on Amazon Bedrock with the AWS SDK for Python (Boto3)

In this post, we demonstrate how to use Amazon Bedrock with the AWS SDK for Python (Boto3) to programmatically incorporate FMs. We explore invoking a specific FM and processing the generated text, showcasing the potential for developers to use these models in their applications for a variety of use cases.
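For a sense of what invoking an FM and processing the generated text can look like, here is a minimal sketch using the Bedrock Runtime Converse API through Boto3. The post itself may use a different call; the helper name `generate_text`, the inference settings, the Region, and the model ID placeholder are assumptions for illustration.

```python
def generate_text(client, model_id: str, prompt: str, max_tokens: int = 256) -> str:
    """Send one user message via the Converse API and return the reply text."""
    response = client.converse(
        modelId=model_id,
        messages=[{"role": "user", "content": [{"text": prompt}]}],
        # Assumed settings; adjust for the chosen model and use case.
        inferenceConfig={"maxTokens": max_tokens, "temperature": 0.2},
    )
    # The reply is a list of content blocks; join the text blocks together.
    parts = response["output"]["message"]["content"]
    return "".join(part.get("text", "") for part in parts)


# Usage (requires AWS credentials and model access; the Region and the
# model ID below are placeholders):
#   import boto3
#   client = boto3.client("bedrock-runtime", region_name="us-east-1")
#   print(generate_text(client, "<your-model-id>", "Summarize this report."))
```

Passing the client in as an argument keeps the helper easy to exercise with a stub during development, without calling the service.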