What is Amazon SageMaker Inference?

Amazon SageMaker AI makes it easier to deploy ML models, including foundation models (FMs), and serve inference requests at the best price performance for any use case. From low latency and high throughput to long-running inference, you can use SageMaker AI for all your inference needs. SageMaker AI is a fully managed service that integrates with MLOps tools, so you can scale your model deployment, reduce inference cost, manage models more effectively in production, and reduce operational burden.

Benefits of SageMaker Model Deployment

Wide range of inference options

Real-Time Inference

Serverless Inference

Asynchronous Inference

Batch Transform
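
As a rough illustration of how three of these options differ at deployment time, the sketch below builds keyword arguments for the `create_endpoint_config` call in boto3's SageMaker client. The helper name, model name, instance type, memory size, and S3 path are placeholder assumptions, not recommendations. Batch Transform is left out because it runs as a transform job rather than behind an endpoint.

```python
def endpoint_config_kwargs(name, model_name, mode, s3_output_path=None):
    """Build kwargs for sagemaker_client.create_endpoint_config(**kwargs).

    mode is "real-time", "serverless", or "async". Sizes are illustrative
    placeholders chosen for this sketch.
    """
    variant = {"VariantName": "AllTraffic", "ModelName": model_name}
    if mode == "serverless":
        # Serverless variants declare memory and concurrency, not instances.
        variant["ServerlessConfig"] = {"MemorySizeInMB": 2048, "MaxConcurrency": 5}
    elif mode in ("real-time", "async"):
        variant["InstanceType"] = "ml.m5.xlarge"
        variant["InitialInstanceCount"] = 1
    else:
        raise ValueError(f"unknown mode: {mode}")

    kwargs = {"EndpointConfigName": name, "ProductionVariants": [variant]}
    if mode == "async":
        if not s3_output_path:
            raise ValueError("async mode needs an S3 output path")
        # Asynchronous endpoints write results to S3 instead of replying inline.
        kwargs["AsyncInferenceConfig"] = {
            "OutputConfig": {"S3OutputPath": s3_output_path}
        }
    return kwargs

# Usage (needs AWS credentials and an existing SageMaker model):
#   import boto3
#   boto3.client("sagemaker").create_endpoint_config(
#       **endpoint_config_kwargs("my-config", "my-model", "serverless"))
```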

Scalable and cost-effective inference options

Single-model endpoints

One model in a container, hosted on dedicated instances or serverless, for low latency and high throughput.

Multiple models on a single endpoint

Host multiple models on the same instance to better utilize the underlying accelerators, reducing deployment costs by up to 50%. You can control scaling policies for each FM separately, making it easier to adapt to model usage patterns while optimizing infrastructure costs.

Serial inference pipelines

Multiple containers sharing dedicated instances and executing in a sequence. You can use an inference pipeline to combine preprocessing, predictions, and post-processing data science tasks.
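
A minimal sketch of how such a pipeline might be declared, assuming boto3's `create_model` call with an ordered `Containers` list. The helper name, image URIs, and role ARN are hypothetical placeholders.

```python
def pipeline_model_kwargs(model_name, role_arn, container_images):
    """Build kwargs for sagemaker_client.create_model(**kwargs).

    container_images is an ordered list of (image_uri, model_data_url_or_None).
    Requests pass through the containers in list order, for example
    preprocessing, then prediction, then post-processing.
    """
    if len(container_images) < 2:
        raise ValueError("a pipeline needs at least two containers")
    containers = []
    for image_uri, model_data_url in container_images:
        container = {"Image": image_uri}
        if model_data_url:
            # Containers without model artifacts (pure transforms) skip this.
            container["ModelDataUrl"] = model_data_url
        containers.append(container)
    return {
        "ModelName": model_name,
        "ExecutionRoleArn": role_arn,
        "Containers": containers,
        "InferenceExecutionConfig": {"Mode": "Serial"},
    }

# Usage (needs AWS credentials; all names below are placeholders):
#   import boto3
#   boto3.client("sagemaker").create_model(**pipeline_model_kwargs(
#       "my-pipeline", "arn:aws:iam::123456789012:role/ExampleRole",
#       [("<preprocess-image-uri>", None),
#        ("<model-image-uri>", "s3://bucket/model.tar.gz")]))
```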

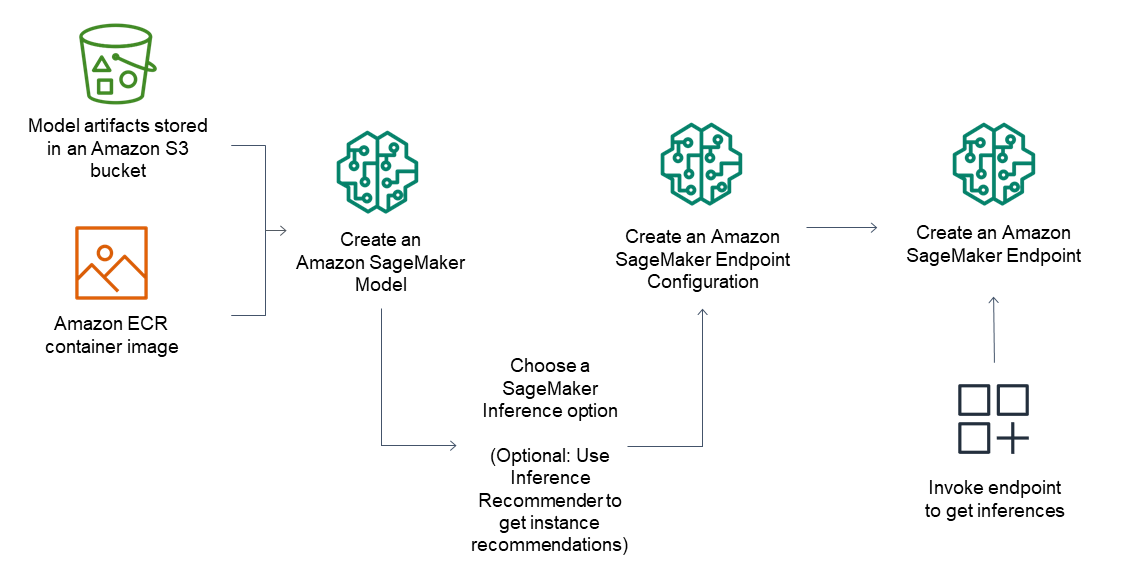

Support for most machine learning frameworks and model servers

Amazon SageMaker inference supports built-in algorithms and prebuilt Docker images for some of the most common machine learning frameworks, such as TensorFlow, PyTorch, ONNX, and XGBoost. If none of the prebuilt Docker images serve your needs, you can build your own container for use with CPU-backed multi-model endpoints. SageMaker inference supports most popular model servers, such as TensorFlow Serving, TorchServe, NVIDIA Triton, and AWS multi-model server.

Amazon SageMaker AI offers specialized deep learning containers (DLCs), libraries, and tooling for model parallelism and large model inference (LMI) to help you improve the performance of foundation models. With these options, you can quickly deploy models, including foundation models (FMs), for virtually any use case.

Achieve high inference performance at low cost

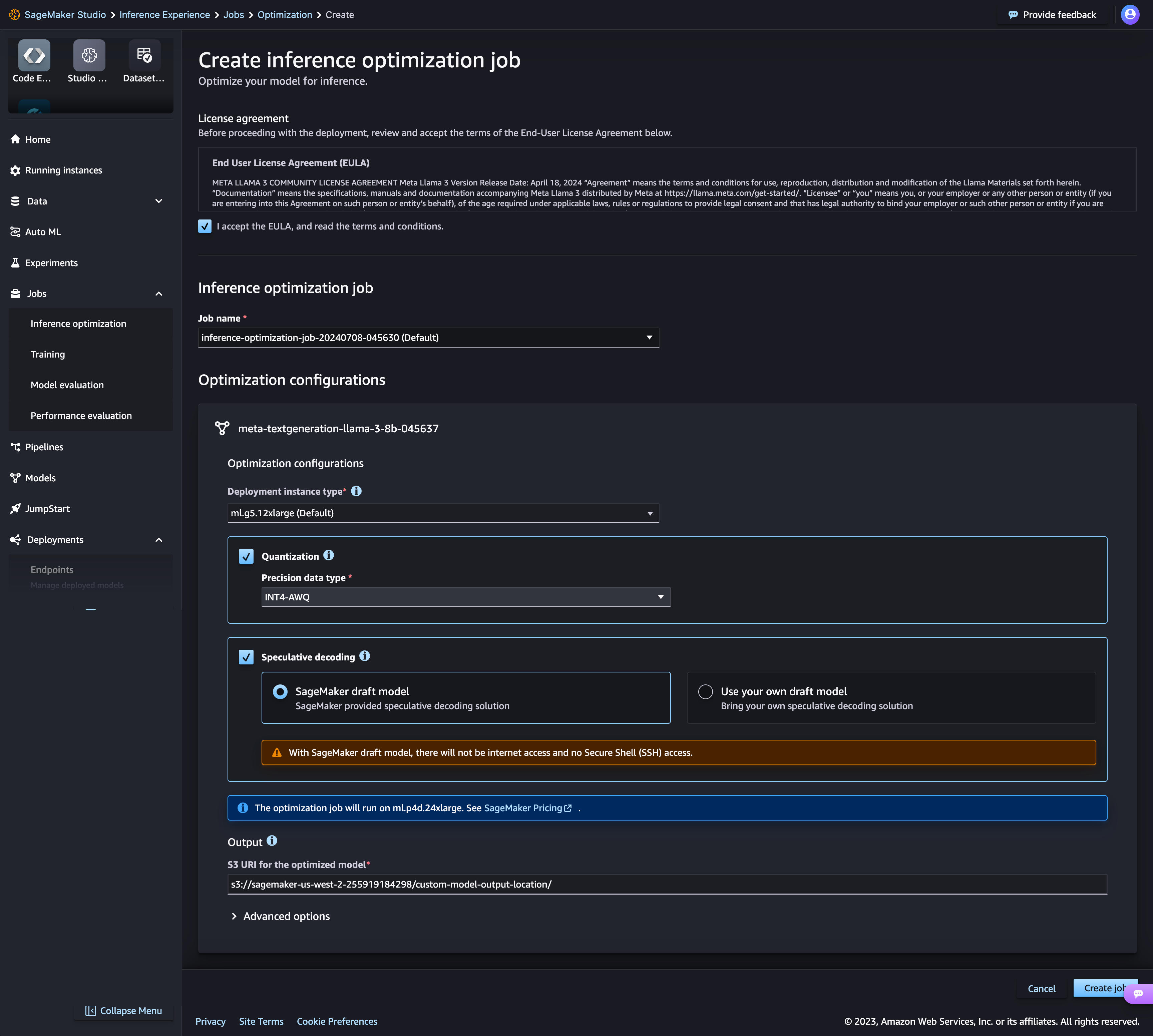

Amazon SageMaker AI's new inference optimization toolkit delivers up to roughly 2x higher throughput while reducing costs by up to roughly 50% for generative AI models such as Llama 3, Mistral, and Mixtral. For example, with a Llama 3-70B model, you can achieve up to ~2400 tokens/sec on an ml.p5.48xlarge instance, versus ~1200 tokens/sec without any optimization. You can select a model optimization technique such as speculative decoding, quantization, or compilation, or combine several techniques. You can then apply them to your models, run benchmarks to evaluate their impact on output quality and inference performance, and deploy models in just a few clicks.
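
The link between the throughput and cost figures can be checked with simple arithmetic: on the same instance at the same hourly price, doubling tokens per second halves the cost per token. The sketch below uses the throughput numbers quoted above. The hourly price is a hypothetical placeholder, not a published rate, and it cancels out of the savings ratio.

```python
def cost_per_million_tokens(tokens_per_sec, hourly_price_usd):
    """Cost in USD to generate one million tokens at a steady throughput."""
    tokens_per_hour = tokens_per_sec * 3600
    return hourly_price_usd / tokens_per_hour * 1_000_000

HOURLY_PRICE_USD = 100.0  # hypothetical placeholder, not an actual AWS price

baseline = cost_per_million_tokens(1200, HOURLY_PRICE_USD)   # without optimization
optimized = cost_per_million_tokens(2400, HOURLY_PRICE_USD)  # with optimization

# Fraction of per-token cost saved; independent of the price chosen above.
savings = 1 - optimized / baseline
print(f"per-token cost reduction: {savings:.0%}")
```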

Deploy models on the most high-performing infrastructure or go serverless

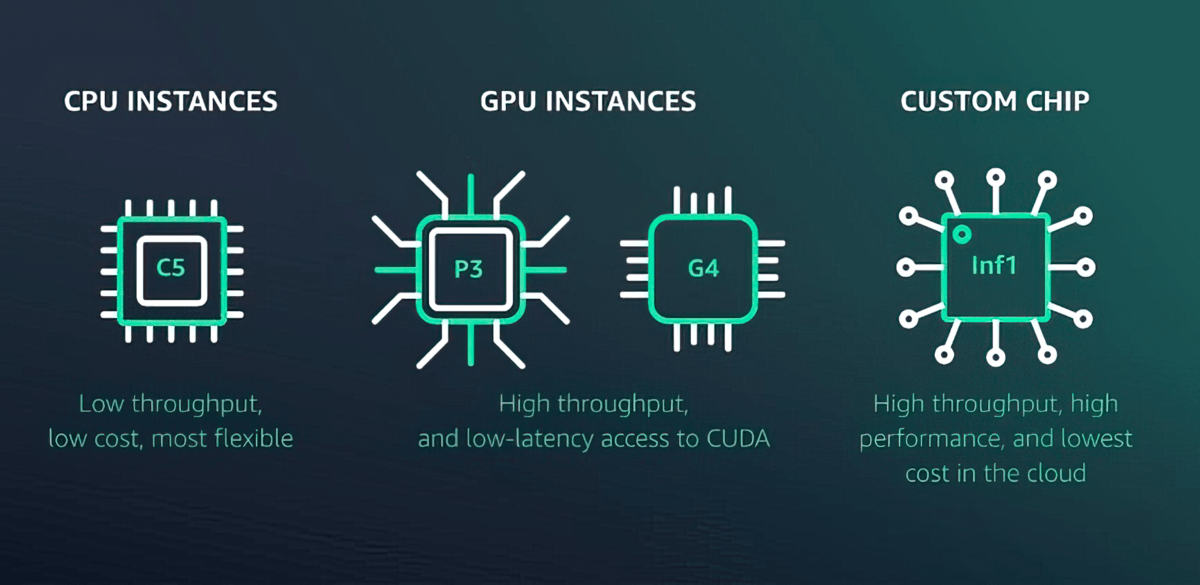

Amazon SageMaker AI offers more than 70 instance types with varying levels of compute and memory, including Amazon EC2 Inf1 instances based on AWS Inferentia, high-performance ML inference chips designed and built by AWS, and GPU instances such as Amazon EC2 G4dn. Or, choose Amazon SageMaker Serverless Inference to easily scale to thousands of models per endpoint, millions of transactions per second (TPS) throughput, and sub-10 millisecond overhead latencies.

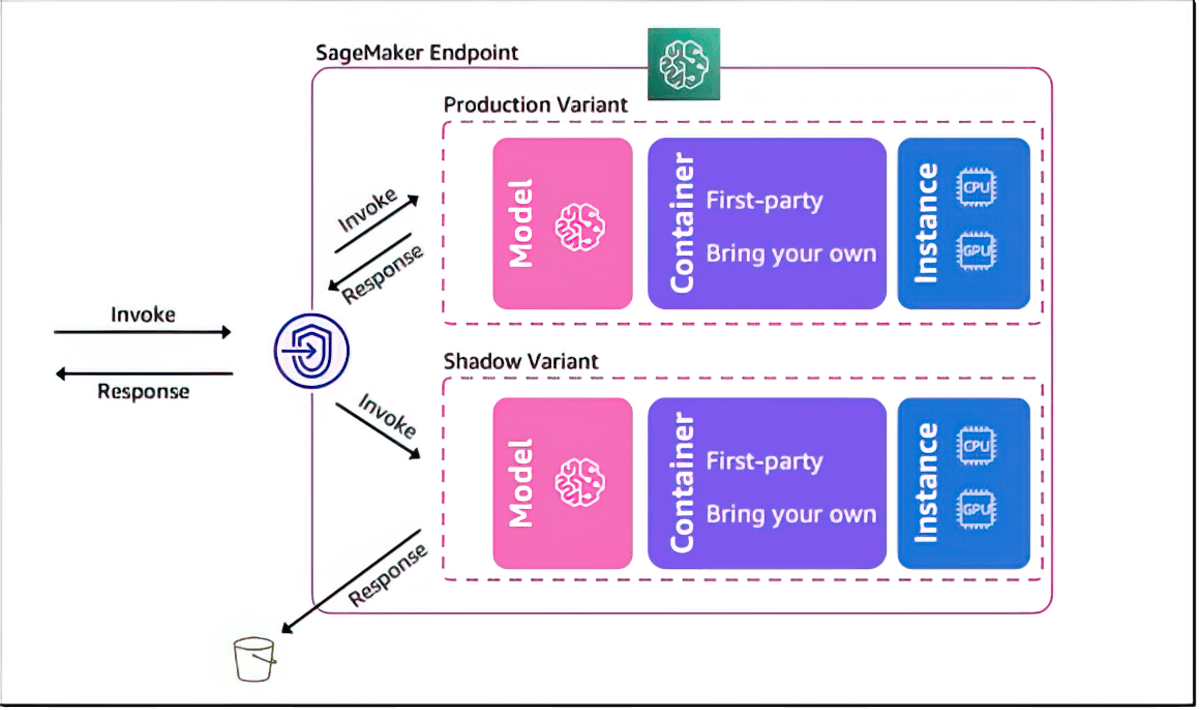

Shadow test to validate performance of ML models

Amazon SageMaker AI helps you evaluate a new model by shadow testing its performance against the model currently deployed on SageMaker, using live inference requests. Shadow testing can help you catch potential configuration errors and performance issues before they impact end users. With SageMaker AI, you don’t need to invest weeks of time building your own shadow testing infrastructure. Just select a production model that you want to test against, and SageMaker AI automatically deploys the new model in shadow mode and routes a copy of the inference requests received by the production model to the new model in real time.
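
The idea behind shadow mode can be sketched in a few lines of plain Python. This is a conceptual illustration only, not how SageMaker implements it: the function and parameter names are hypothetical, and the shadow call is made synchronously here for simplicity.

```python
def handle_with_shadow(request, production_model, shadow_model, shadow_log):
    """Serve from production and mirror the same request to the shadow model.

    Only the production response is returned to the caller. The shadow
    result, or its error, is recorded for later comparison.
    """
    response = production_model(request)
    record = {"request": request, "production": response,
              "shadow": None, "error": None}
    try:
        record["shadow"] = shadow_model(request)
    except Exception as exc:
        # A failing shadow model must never affect the caller.
        record["error"] = repr(exc)
    shadow_log.append(record)
    return response

# Example with stand-in models: callers always see the production answer.
log = []
result = handle_with_shadow(3, lambda x: x * 2, lambda x: x * 2 + 1, log)
```

Comparing the `production` and `shadow` fields across the log is what surfaces regressions before the new model takes real traffic.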

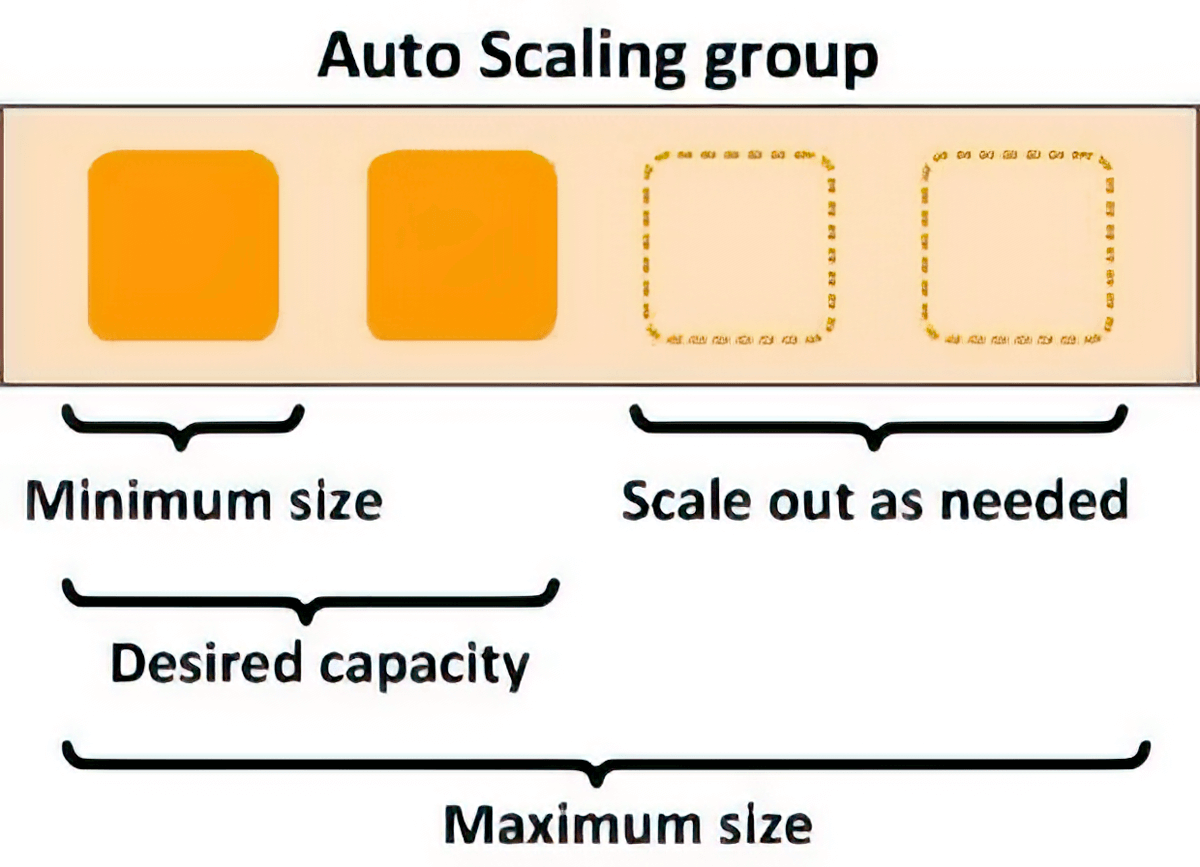

Autoscaling for elasticity

You can use scaling policies to automatically scale the underlying compute resources to accommodate fluctuations in inference requests. You can control scaling policies for each ML model separately to handle the changes in model usage easily, while also optimizing infrastructure costs.
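
One common way to set this up is a target-tracking policy through Application Auto Scaling. The sketch below builds the two request payloads for boto3's `register_scalable_target` and `put_scaling_policy` calls. The helper name, policy name format, and example values are assumptions for illustration.

```python
def autoscaling_requests(endpoint_name, variant_name, min_instances,
                         max_instances, target_invocations_per_instance):
    """Build kwargs for register_scalable_target and put_scaling_policy."""
    common = {
        "ServiceNamespace": "sagemaker",
        "ResourceId": f"endpoint/{endpoint_name}/variant/{variant_name}",
        "ScalableDimension": "sagemaker:variant:DesiredInstanceCount",
    }
    # Bounds within which the instance count may move.
    register = {**common, "MinCapacity": min_instances,
                "MaxCapacity": max_instances}
    # Keep average invocations per instance near the target value.
    policy = {
        **common,
        "PolicyName": f"{endpoint_name}-{variant_name}-target-tracking",
        "PolicyType": "TargetTrackingScaling",
        "TargetTrackingScalingPolicyConfiguration": {
            "TargetValue": float(target_invocations_per_instance),
            "PredefinedMetricSpecification": {
                "PredefinedMetricType": "SageMakerVariantInvocationsPerInstance"
            },
        },
    }
    return register, policy

# Usage (needs AWS credentials and a deployed endpoint):
#   import boto3
#   aas = boto3.client("application-autoscaling")
#   register, policy = autoscaling_requests("my-endpoint", "AllTraffic", 1, 4, 70)
#   aas.register_scalable_target(**register)
#   aas.put_scaling_policy(**policy)
```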

Latency improvement and intelligent routing

You can reduce inference latency for ML models by intelligently routing new inference requests to instances that are available, instead of randomly routing them to instances that are already busy serving other requests. This allows you to achieve 20% lower inference latency on average.
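
The text above does not spell out the routing algorithm. One plausible strategy is to send each request to the instance with the fewest requests in flight, and SageMaker's endpoint configuration does expose a `RoutingConfig` option with a `LEAST_OUTSTANDING_REQUESTS` strategy. The sketch below illustrates that selection rule in plain Python, with hypothetical instance IDs. It is a conceptual example, not the service's implementation.

```python
def pick_instance(outstanding):
    """Return the instance id with the fewest in-flight requests.

    outstanding maps instance id -> number of requests currently in flight.
    Ties are broken by instance id so the choice is deterministic.
    """
    if not outstanding:
        raise ValueError("no instances available")
    return min(sorted(outstanding), key=lambda instance: outstanding[instance])

# Example: "i-b" is idle, so it receives the next request.
in_flight = {"i-a": 3, "i-b": 0, "i-c": 1}
chosen = pick_instance(in_flight)
in_flight[chosen] += 1  # the router records the newly dispatched request
```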

Reduce operational burden and accelerate time to value

Fully managed model hosting and management

As a fully managed service, Amazon SageMaker AI takes care of setting up and managing instances, software version compatibilities, and patching versions. It also provides built-in metrics and logs for endpoints that you can use to monitor and receive alerts.
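
As one example of alerting on these metrics, the sketch below builds a request for boto3's CloudWatch `put_metric_alarm` call on the endpoint's `ModelLatency` metric. The helper name, alarm name format, evaluation window, and threshold are illustrative assumptions.

```python
def model_latency_alarm_kwargs(endpoint_name, variant_name, threshold_ms,
                               sns_topic_arn=None):
    """Build kwargs for cloudwatch_client.put_metric_alarm(**kwargs)."""
    kwargs = {
        "AlarmName": f"{endpoint_name}-{variant_name}-model-latency",
        "Namespace": "AWS/SageMaker",
        "MetricName": "ModelLatency",
        "Dimensions": [
            {"Name": "EndpointName", "Value": endpoint_name},
            {"Name": "VariantName", "Value": variant_name},
        ],
        "Statistic": "Average",
        "Period": 60,             # seconds per evaluation window
        "EvaluationPeriods": 5,   # consecutive windows that must breach
        # ModelLatency is reported in microseconds, so convert from ms.
        "Threshold": threshold_ms * 1000.0,
        "ComparisonOperator": "GreaterThanThreshold",
    }
    if sns_topic_arn:
        # Notify this SNS topic when the alarm fires.
        kwargs["AlarmActions"] = [sns_topic_arn]
    return kwargs

# Usage (needs AWS credentials and a deployed endpoint):
#   import boto3
#   boto3.client("cloudwatch").put_metric_alarm(
#       **model_latency_alarm_kwargs("my-endpoint", "AllTraffic", 200))
```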

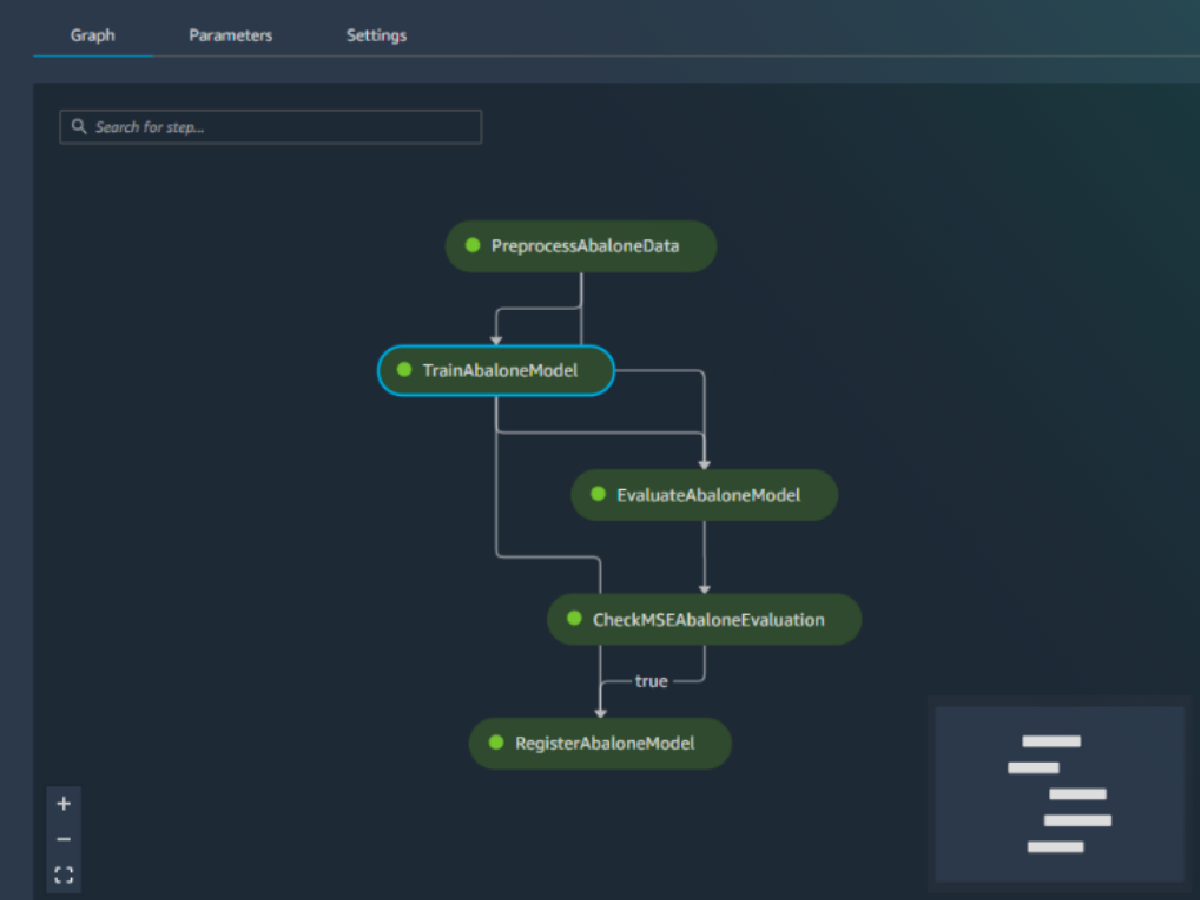

Built-in integration with MLOps features

Amazon SageMaker AI model deployment features are natively integrated with MLOps capabilities, including SageMaker Pipelines (workflow automation and orchestration), SageMaker Projects (CI/CD for ML), SageMaker Feature Store (feature management), SageMaker Model Registry (model and artifact catalog to track lineage and support automated approval workflows), SageMaker Clarify (bias detection), and SageMaker Model Monitor (model and concept drift detection). As a result, whether you deploy one model or tens of thousands, SageMaker AI helps offload the operational overhead of deploying, scaling, and managing ML models while getting them to production faster.