Overview

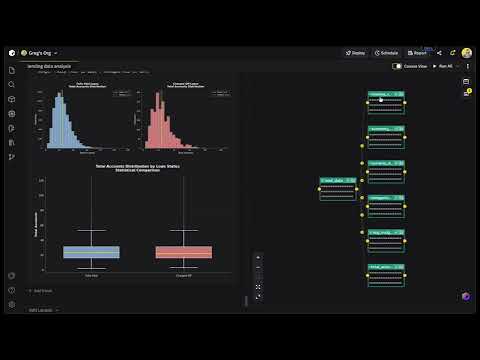

Zerve replaces the typical data science toolchain (notebook for exploration, IDE for cleanup, separate infra for deployment) with a single environment where all three happen together. Deploy Zerve in your own AWS VPC to keep data inside your infrastructure, or run it in Zerve's managed cloud to get started without any setup. Your team can work in either a canvas or notebook-based interface, both of which connect to GitHub and support any external IDE.

Python, R, and SQL run in the same project with full interoperability between languages. If someone writes a transformation in SQL and a colleague picks it up in Python downstream, the serialized artifacts carry over without any manual export step.

Zerve sessions produce deterministic output. The thing that trips up most notebook-based workflows, where results change depending on cell execution order or kernel state, does not happen here. What you run interactively is what runs in production.

Environment setup, dependency management, and cloud orchestration are all handled at the platform level. Starting a new project or onboarding someone to an existing one takes a few clicks. You do not need a DevOps ticket. GPUs and other compute resources get provisioned per task on demand; they are not sitting idle between runs.

You can deploy work as a scheduled job, an API endpoint, or an app. Or just export to whatever CI/CD pipeline your org already uses.

Supported Languages and Compute

Zerve runs Python, R, SQL, GraphQL, PySpark, and Markdown blocks. You pick the compute type per cell: Lambda, Fargate, GPU, or Kubernetes. Each project locks its own dependency versions.

Integrations

Snowflake, PostgreSQL, MySQL, and MariaDB all have native connectors in Zerve. On the AI side, both Hugging Face and AWS Bedrock plug in directly, so your team can work with LLMs (open-source or managed) without having to stand up hosting for them. If you want to trigger Zerve from other tools in your stack, the developer API works with Airflow and GitHub Actions, or really anything that can hit a REST endpoint.

Security and Deployment

If you self-host, everything runs in your AWS account through a CloudFormation template. Your data, your execution outputs, your secrets, all stored on your side. Zerve keeps your AWS credentials in an encrypted vault and only touches them at execution time. Nothing gets stored on Zerve's end. On the access control side, you get RBAC, SSO, and the kind of granular permissions that security teams expect before signing off. Zerve works as a fully self-hosted install or a hybrid setup, whatever fits your org.

Getting Set Up

The CloudFormation path takes about ten minutes. Generate an API key in your Zerve org settings, open the QuickStart template, drop in your key and a domain name, and most of the other parameters are pre-filled. Teams that want to evaluate first can use Zerve's managed cloud, which has a free tier with compute and storage credits.
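The overview notes that anything able to hit a REST endpoint can trigger Zerve through the developer API. The listing does not document that API, so the following is only a rough sketch of what such a call could look like from Python. The URL, the `project_id` payload field, and the bearer-token header are all hypothetical placeholders, not Zerve's actual routes or auth scheme; check the official API documentation for the real values.

```python
import json
import urllib.request

# Hypothetical placeholder: the real Zerve API URL is not given in this listing.
ZERVE_API_URL = "https://zerve.example.invalid/api/runs"


def build_trigger_request(api_key: str, project_id: str) -> urllib.request.Request:
    """Build (but do not send) a POST request asking for a project run."""
    # The payload shape is an assumption made for illustration only.
    body = json.dumps({"project_id": project_id}).encode("utf-8")
    return urllib.request.Request(
        ZERVE_API_URL,
        data=body,
        method="POST",
        headers={
            "Authorization": f"Bearer {api_key}",  # assumed auth scheme
            "Content-Type": "application/json",
        },
    )


def trigger_run(api_key: str, project_id: str, timeout: float = 30.0) -> dict:
    """Send the request and return the decoded JSON response."""
    request = build_trigger_request(api_key, project_id)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return json.loads(response.read().decode("utf-8"))
```

A scheduler such as an Airflow task or a GitHub Actions step could call a function like `trigger_run` with the API key read from its own secret store, which keeps the key out of source control.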

Highlights

- Stable and Interactive: Data scientists typically explore in notebooks, then rewrite everything in an IDE before it can go to production. That rewrite takes time, and sometimes a completely different person does it. Zerve eliminates that second step. The code you write while exploring your data already produces stable, reproducible output. What you build interactively is what you deploy.

- Decoupled Compute and Storage: Zerve separates compute from storage so work is saved, versioned, and available to your whole team automatically. Compute resources like GPUs and extra memory are provisioned per task and released when the task finishes, so you only pay for what you use. Python, R, and SQL share the same storage layer, meaning a data engineer writing SQL and a data scientist in Python contribute to the same pipeline with no file exports.

- Real-Time Coding Collaboration: Multiple people can write and run code in the same Zerve project at the same time, like Google Docs, but for executable code. You see each other's changes live, comment inline, and review before merging. Team members working in Python, R, and SQL all share the same workspace and the same saved outputs, so there are no separate projects or manual data handoffs between roles. GitHub integration keeps everything synced with your existing source control.
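The "Stable and Interactive" highlight rests on a general problem with notebooks: cells that mutate shared kernel state give different answers depending on the order they are run in. The toy example below illustrates that problem in plain Python and contrasts it with a function whose output depends only on its inputs. It is an illustration of the general idea, not a description of how Zerve implements it; the function names and numbers are made up for the example.

```python
def run_cells(order):
    """Simulate notebook cells that mutate one shared kernel namespace."""
    state = {"rate": 0.10}  # kernel state before any cell runs

    def cell_a():
        state["rate"] = 0.25  # cell A updates the rate

    def cell_b():
        # cell B reads whatever rate happens to be in the kernel right now
        state["total"] = round(200 * (1 + state["rate"]), 2)

    cells = {"A": cell_a, "B": cell_b}
    for name in order:
        cells[name]()
    return state["total"]


def run_pipeline(rate):
    """Same computation as a pure step: the result depends only on the input."""
    return round(200 * (1 + rate), 2)


print(run_cells(["A", "B"]))  # 250.0
print(run_cells(["B", "A"]))  # 220.0, same cells, different order
print(run_pipeline(0.25))     # 250.0 every time
```

When each step takes its inputs explicitly and returns its outputs, the interactive result and the production result cannot drift apart because of hidden state.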

Details

Features and programs

Financing for AWS Marketplace purchases

Pricing

Free trial

| Dimension | Description | Cost/month |
|---|---|---|
| Zerve AI Data Science Development Environment | - | $1.00 |

Vendor refund policy

There are no refunds offered.

Custom pricing options

Legal

Vendor terms and conditions

Content disclaimer

Delivery details

Software as a Service (SaaS)

SaaS delivers cloud-based software applications directly to customers over the internet. You can access these applications through a subscription model. You will pay recurring monthly usage fees through your AWS bill, while AWS handles deployment and infrastructure management, ensuring scalability, reliability, and seamless integration with other AWS services.

Resources

Vendor resources

Support

Vendor support

Please reach out to sales@zerve.ai with any questions or for options on contract or pricing terms.

Technical Support: For help setting up your account, technical queries, or exploring the platform, please reach out to support@zerve.ai.

AWS infrastructure support

AWS Support is a one-on-one, fast-response support channel that is staffed 24x7x365 with experienced and technical support engineers. The service helps customers of all sizes and technical abilities to successfully utilize the products and features provided by Amazon Web Services.