Overview

How it works

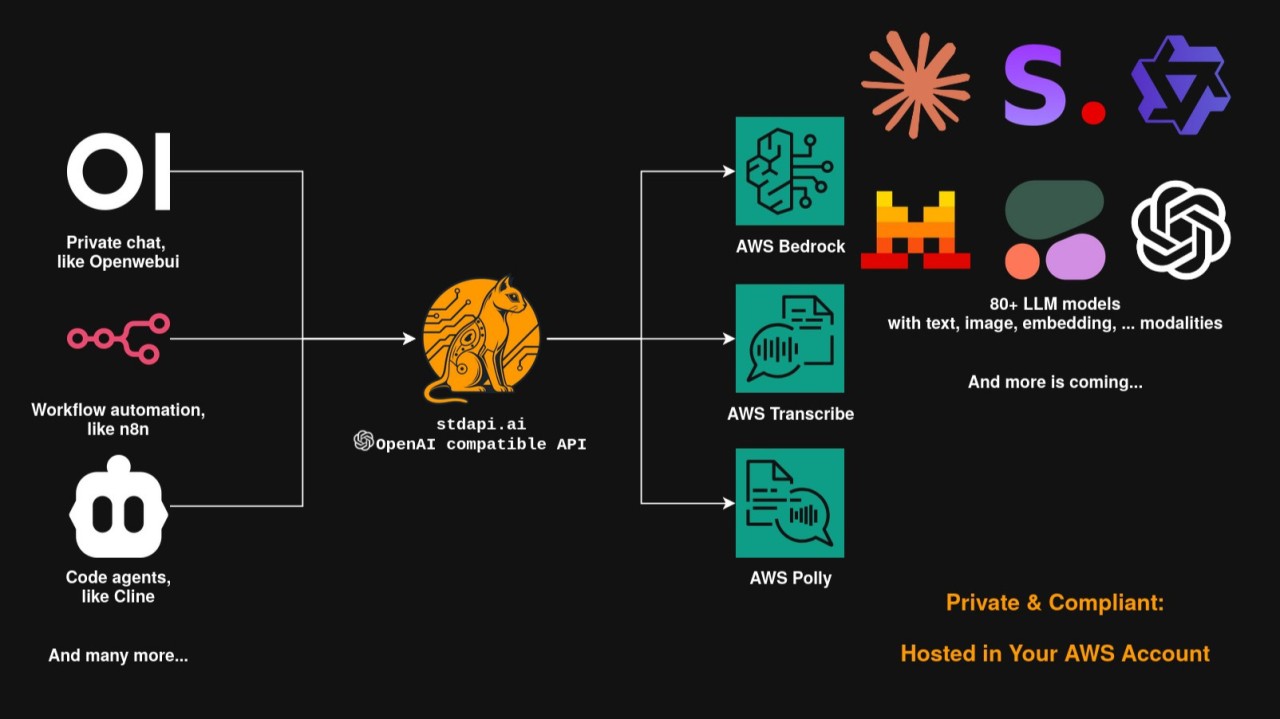

Drop-in API gateway for AWS Bedrock and AI services.

Most AI tools and applications support only the OpenAI or Anthropic APIs, so they cannot connect to AWS Bedrock or to AWS AI services such as Transcribe, Polly, and Translate. stdapi.ai solves this with a production-ready, OpenAI- and Anthropic-compatible AI gateway. It makes AWS Bedrock and AI services instantly accessible to existing tools such as Open WebUI, n8n, Continue.dev, Cursor, LangChain, and 1000+ other applications. No custom integration required.

WHY CHOOSE STDAPI.AI

Complete OpenAI & Anthropic API Support - Chat, embeddings, images, audio (speech/transcription/translation). Works with your existing applications out of the box.
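As a rough illustration of what "works out of the box" means, an OpenAI-style client only needs its base URL pointed at the gateway. The sketch below builds, but does not send, a chat completion request using only the Python standard library. The host name, API key, and model ID are hypothetical placeholders. Bearer-token authentication is an assumption based on the OpenAI convention.

```python
import json
import urllib.request

# Hypothetical values: replace with your deployment URL, API key, and a real model ID.
BASE_URL = "https://stdapi.example.com/v1"
API_KEY = "sk-example"
MODEL_ID = "example-model-id"


def build_chat_request(prompt: str) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat completion request."""
    body = {
        "model": MODEL_ID,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{BASE_URL}/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            # Assumes OpenAI-style bearer-token authentication.
            "Authorization": f"Bearer {API_KEY}",
        },
        method="POST",
    )


req = build_chat_request("Summarize this paragraph in one sentence.")
print(req.get_method(), req.full_url)
# To send it, pass req to urllib.request.urlopen() and decode the JSON response.
```

The same idea applies to existing SDKs and tools: changing the base URL and key is usually the only configuration step.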

80+ AI Models - Access Claude, Moonshot Kimi, MiniMax, Nova, Llama, Qwen, and more. Switch models instantly with no vendor lock-in.

Enterprise Compliance - All data stays in your AWS account. Restrict processing to allowed regions to support HIPAA, GDPR, and FedRAMP compliance, backed by AWS Bedrock certifications.

Purpose-Built for AWS Bedrock - Not a generic proxy. Deep integration with reasoning modes, prompt caching, guardrails, service tiers, inference profiles, and prompt routers. Built to leverage every Bedrock feature through standard API parameters.

MODELS AND CAPABILITIES

AI Models - Anthropic Claude, Moonshot Kimi, MiniMax, Amazon Nova, Meta Llama, Alibaba Qwen, Zhipu GLM, Stability AI, Mistral, Cohere, Nvidia, AI21 Labs.

AWS Services - Polly (text-to-speech), Transcribe (speech-to-text with diarization), Translate (multi-language).

Advanced Features - Reasoning modes, prompt caching, guardrails, inference profiles, cross-region routing, prompt routers.

POPULAR USE CASES

Private ChatGPT Alternative - Deploy Open WebUI or LibreChat with enterprise security. Multi-modal chat, RAG document analysis, web search. Full data control.

Workflow Automation - Connect AI to 400+ services via n8n, Make, or Zapier. Automate support, content creation, and data processing without technical setup.

AI Coding Assistants - Use Continue.dev, Cline, Cursor, Claude Code, or Windsurf in VS Code, JetBrains IDEs, and other editors. Access Claude, Moonshot Kimi, and Qwen Coder through an OpenAI- or Anthropic-compatible interface.

Knowledge Management - Add AI to Obsidian, Notion, Logseq. Semantic search, auto-summarization, smart organization.

Team Bots - Deploy to Slack, Discord, Teams, Telegram. Q&A bots, documentation search, task automation.

Autonomous Agents & MCP - Every API endpoint is exposed as a Model Context Protocol tool (Streamable HTTP and SSE transports). OpenClaw, Claude Code, Cursor, LangGraph, CrewAI, and any MCP-compatible agent connect directly with no HTTP client code. Agents auto-discover capabilities via RFC 8288 Link headers and the machine-readable API catalog.
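Discovery through RFC 8288 Link headers amounts to reading a comma-separated list of `<target>; param=value` entries from an HTTP response. The sketch below is a simplified parser that covers common cases but not every edge case of the RFC, such as quoted values containing `<` or `;`. The example header, including its paths and relation types, is hypothetical and not taken from stdapi.ai responses.

```python
import re

# Hypothetical Link header value an agent might receive.
EXAMPLE_LINK_HEADER = (
    '</.well-known/api-catalog>; rel="api-catalog", '
    '</openapi.json>; rel="service-desc"'
)


def parse_link_header(value: str) -> list[dict[str, str]]:
    """Parse an RFC 8288 Link header into dicts with 'href' plus lowercase params.

    Simplified: assumes quoted parameter values contain no '<' or ';'.
    """
    links = []
    # Each link-value starts with <URI>, followed by parameters up to the next '<'.
    for match in re.finditer(r"<([^>]*)>([^<]*)", value):
        link = {"href": match.group(1)}
        for param in match.group(2).split(";"):
            # Drop surrounding whitespace and the comma that separates link-values.
            param = param.strip().rstrip(",").strip()
            if "=" not in param:
                continue
            name, _, raw = param.partition("=")
            link[name.strip().lower()] = raw.strip().strip('"')
        links.append(link)
    return links


def find_by_rel(links: list[dict[str, str]], rel: str) -> list[str]:
    """Return the targets whose rel parameter includes the given relation type."""
    return [link["href"] for link in links if rel in link.get("rel", "").split()]


links = parse_link_header(EXAMPLE_LINK_HEADER)
print(find_by_rel(links, "api-catalog"))
```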

KEY BENEFITS

Multi-Region Quota Multiplier - Each AWS region has its own independent quota, so three regions provide up to 3x the tokens per minute and 3x the daily limits. A single endpoint handles routing and automatic failover. Configure EU-only, US-only, or global routing for compliance.
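The quota arithmetic can be made concrete with a small sketch. The per-region tokens-per-minute figures below are hypothetical, because real Bedrock quotas vary by model, region, and account. The second function shows how much capacity remains when failover routes around an unhealthy region.

```python
# Hypothetical per-region tokens-per-minute quotas for an EU-only configuration.
REGION_QUOTAS_TPM = {
    "eu-west-1": 200_000,
    "eu-central-1": 200_000,
    "eu-west-3": 200_000,
}


def combined_tpm(quotas: dict[str, int]) -> int:
    """Total tokens per minute when requests can be spread across every region."""
    return sum(quotas.values())


def available_tpm(quotas: dict[str, int], healthy: set[str]) -> int:
    """Capacity left after failover, counting only regions that are still healthy."""
    return sum(tpm for region, tpm in quotas.items() if region in healthy)


print(combined_tpm(REGION_QUOTAS_TPM))
print(available_tpm(REGION_QUOTAS_TPM, {"eu-west-1", "eu-central-1"}))
```

With equal quotas, the combined figure is simply the number of regions times the single-region quota. This explains why the multiplier is described as "up to" 3x: unequal quotas or an unavailable region reduce it.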

Production Infrastructure - Terraform/OpenTofu deployment with ECS Fargate auto-scaling, ALB with HTTPS, WAF, VPC, and KMS encryption, following the AWS Well-Architected Framework.

Built-in Security - CloudWatch monitoring, OpenTelemetry tracing, API key auth via Systems Manager, CORS, SSRF protection.

Cost Efficiency - Pay-per-use for models via Bedrock. No monthly minimums. Prompt caching and Fargate Spot reduce costs further.

No Vendor Lock-in - Standard OpenAI & Anthropic APIs. Switch providers anytime by changing one config value.

Automatic Model Fallback - When AWS retires a model, requests are transparently redirected to its replacement. Your applications survive model deprecations without code changes.
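One way to picture transparent model fallback is to follow a chain of "retired model to replacement" mappings until the chain reaches a model that is still active. This is a hypothetical sketch of the concept, not stdapi.ai's implementation. The model IDs and the `FALLBACKS` table are made up for illustration.

```python
# Hypothetical table: each retired model ID maps to its replacement.
FALLBACKS = {
    "example-model-v1": "example-model-v2",
    "example-model-v2": "example-model-v3",
}


def resolve_model(model_id: str, fallbacks: dict[str, str]) -> str:
    """Follow replacement mappings until reaching a model with no fallback entry."""
    seen: set[str] = set()
    current = model_id
    while current in fallbacks:
        # Guard against a misconfigured table that maps models in a loop.
        if current in seen:
            raise ValueError(f"fallback cycle detected starting at {model_id!r}")
        seen.add(current)
        current = fallbacks[current]
    return current


print(resolve_model("example-model-v1", FALLBACKS))
```

Chaining matters because a replacement can itself be retired later. Clients that still request the oldest ID should then land on the newest active model.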

GET STARTED

Start with a 14-day free trial on AWS Marketplace. Deploy in minutes with Terraform templates. Comprehensive docs and step-by-step guides for Open WebUI, n8n, Continue.dev, and LangChain at https://stdapi.ai . Production-ready from day one.

Highlights

- Works instantly with 1000+ OpenAI- and Anthropic-compatible applications - Open WebUI, n8n, Continue.dev, Cursor, LangChain, Obsidian, Slack bots, and agent frameworks like OpenClaw, LangGraph, and CrewAI. Full API coverage: chat, embeddings, images, speech, transcription, and files. All endpoints are also exposed as MCP tools (Streamable HTTP and SSE) for direct agent access. Switch instantly between 80+ models, including Claude, Kimi, MiniMax, Nova, Llama, and Qwen.

- All data stays in your AWS account and never reaches model providers. Configure allowed regions for GDPR, HIPAA, and FedRAMP compliance. Production-ready infrastructure via Terraform: ECS Fargate with auto-scaling, ALB with HTTPS, WAF, VPC, KMS encryption, CloudWatch monitoring, and OpenTelemetry tracing. Follows the AWS Well-Architected Framework.

- Pay only for what you use through AWS Marketplace - no monthly minimums, no subscriptions, and no markup on AWS Bedrock model pricing. Reduce costs further with prompt caching and Fargate Spot. Standard OpenAI and Anthropic APIs mean zero vendor lock-in: switch AI providers anytime with no impact on your applications.

Details



Pricing

Free trial

| Dimension | Description | Cost/unit/hour |
|---|---|---|
| Hours | Container Hours | $0.10 |
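The Container Hours dimension can be turned into a rough monthly estimate with simple arithmetic. The sketch below assumes one charge per running container per hour and about 730 hours in a month (8,760 hours divided by 12). It covers only this software charge, not Bedrock model usage or the underlying AWS infrastructure such as Fargate and the ALB.

```python
HOURLY_RATE_USD = 0.10  # per container hour, from the pricing table
HOURS_PER_MONTH = 730   # common approximation: 8,760 hours / 12 months


def monthly_software_cost(container_count: int, hours: float = HOURS_PER_MONTH) -> float:
    """Estimated software charge in USD, assuming billing per running container hour."""
    return round(container_count * hours * HOURLY_RATE_USD, 2)


# For example, two always-on containers, such as a minimal highly available setup.
print(monthly_software_cost(2))
```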

Vendor refund policy

stdapi.ai offers refunds on a case-by-case basis. We encourage you to use the free trial first to evaluate the product before purchasing.

To request a refund, contact support@stdapi.ai with your AWS Marketplace order ID and reason. Our team will review your request promptly.

For more information, visit https://stdapi.ai/operations_getting_started/



Delivery details

Amazon ECS Deployment with Terraform/OpenTofu

- Amazon ECS

- Amazon EKS

Container image

Containers are lightweight, portable execution environments that wrap server application software in a filesystem that includes everything it needs to run. Container applications run on supported container runtimes and orchestration services, such as Amazon Elastic Container Service (Amazon ECS) or Amazon Elastic Kubernetes Service (Amazon EKS). Both eliminate the need for you to install and operate your own container orchestration software by managing and scheduling containers on a scalable cluster of virtual machines.

Version release notes

See https://stdapi.ai/roadmap/ for more information

Additional details

Usage instructions

AFTER SUBSCRIBING - WHAT TO DO NEXT

Go to the deployment guide at https://stdapi.ai/operations_getting_started/ - it walks through prerequisites, Terraform deployment, first API call, and troubleshooting.

Ready-to-deploy Terraform examples: https://github.com/stdapi-ai/samples (single-region production, multi-region EU/GDPR, multi-region US, and Open WebUI).

OVERVIEW

Hardened container image for production use. Typical deployment is ECS Fargate provisioned via the official Terraform module, which creates VPC, ALB with HTTPS, auto-scaling, WAF, S3, CloudWatch, IAM, and KMS. Manual deployment on ECS or EKS is also supported.

The Terraform module is published at https://registry.terraform.io/modules/stdapi-ai/stdapi-ai/aws/latest and produces a complete, AWS Well-Architected deployment from a few input variables. Customize via variables for domain/HTTPS, auto-scaling, allowed Bedrock regions, API authentication, WAF, monitoring, and existing-VPC integration.

For deployment patterns beyond the samples (existing VPC, existing ALB, manual ECS, cost-optimized Fargate Spot, multi-region routing), see https://stdapi.ai/operations_deploy_advanced/ .

CONFIGURATION REFERENCE

Every environment variable and Terraform input is documented at https://stdapi.ai/operations_configuration/ .

API REFERENCE

OpenAI-compatible endpoints (/v1/), Anthropic-compatible endpoints (/anthropic/v1/), and a native /search_models endpoint for agents: https://stdapi.ai/api_overview/
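Anthropic-style clients target the /anthropic/v1/ prefix instead of /v1/. The sketch below builds, but does not send, a Messages API request. It follows Anthropic's public request shape: `max_tokens` is required, and the `x-api-key` and `anthropic-version` headers are sent. Whether stdapi.ai accepts the key in `x-api-key` is an assumption here, and the host, key, and model ID are hypothetical placeholders.

```python
import json
import urllib.request

# Hypothetical values: replace with your deployment URL, API key, and a real model ID.
BASE_URL = "https://stdapi.example.com/anthropic/v1"
API_KEY = "sk-example"
MODEL_ID = "example-model-id"


def build_messages_request(prompt: str, max_tokens: int = 512) -> urllib.request.Request:
    """Build (but do not send) an Anthropic-style Messages API request."""
    body = {
        "model": MODEL_ID,
        "max_tokens": max_tokens,  # required by the Anthropic Messages format
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{BASE_URL}/messages",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            # Assumes the gateway accepts Anthropic-style key and version headers.
            "x-api-key": API_KEY,
            "anthropic-version": "2023-06-01",
        },
        method="POST",
    )


req = build_messages_request("Explain prompt caching in two sentences.")
print(req.get_method(), req.full_url)
```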

MONITORING

CloudWatch logs and metrics, ECS health checks, OpenTelemetry and AWS X-Ray integration (optional). Details at https://stdapi.ai/operations_logging_monitoring/ .

TROUBLESHOOTING

Common first-deployment issues (503 during ECS warmup, TLS warning on default ALB domain, 403 auth, 404 model not found, Bedrock throttling, S3 errors): https://stdapi.ai/operations_troubleshooting/ .
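Two of these issues, 503 during ECS warmup and Bedrock throttling (typically surfaced as 429), are transient and usually resolve if the client retries with a delay. Errors such as 403 or 404 point to configuration problems and should not be retried. The sketch below uses capped exponential backoff with full jitter. The `send` callable is a hypothetical stand-in for whatever performs the HTTP request and returns its status code.

```python
import random
import time
from typing import Callable

# Transient statuses worth retrying: throttling and service unavailable.
RETRYABLE_STATUSES = {429, 503}


def call_with_backoff(
    send: Callable[[], int],
    max_attempts: int = 5,
    base_delay: float = 0.5,
    max_delay: float = 8.0,
    sleep: Callable[[float], None] = time.sleep,
) -> int:
    """Call send() until it returns a non-retryable status or attempts run out."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    status = send()
    for attempt in range(1, max_attempts):
        if status not in RETRYABLE_STATUSES:
            break
        # Full jitter: wait a random time up to the capped exponential delay.
        delay = min(max_delay, base_delay * 2 ** (attempt - 1))
        sleep(random.uniform(0, delay))
        status = send()
    return status


# Simulated responses: two warmup 503s, then success.
responses = iter([503, 503, 200])
print(call_with_backoff(lambda: next(responses), sleep=lambda s: None))
```

Jitter spreads retries from many clients over time, so they do not all hit a region that is warming up or throttled at the same moment.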

SUPPORT

Documentation: https://stdapi.ai
Email: support@stdapi.ai
GitHub: https://github.com/stdapi-ai/stdapi.ai/issues

SECURITY

The container runs as non-root with a minimal attack surface. Images are scanned for vulnerabilities and patched promptly. Region-specific deployment supports HIPAA, GDPR, and FedRAMP compliance through AWS Bedrock certifications. Audit logs are kept in CloudWatch. Security details at https://stdapi.ai/operations_authentication_security/ .


Support

Vendor support

Email support available at support@stdapi.ai for deployment assistance, configuration questions, and troubleshooting. Response within 1-2 business days.

Community support via GitHub Issues at https://github.com/stdapi-ai/stdapi.ai/issues for bug reports, feature requests, and discussions.

Comprehensive documentation at https://stdapi.ai includes Getting Started guides, API reference, integration examples, and troubleshooting guides.

AWS infrastructure support

AWS Support is a one-on-one, fast-response support channel that is staffed 24x7x365 with experienced technical support engineers. The service helps customers of all sizes and technical abilities to successfully utilize the products and features provided by Amazon Web Services.
