Minimizing correlated failures in distributed systems

Architecture | LEVEL 300

Achieving fault tolerance

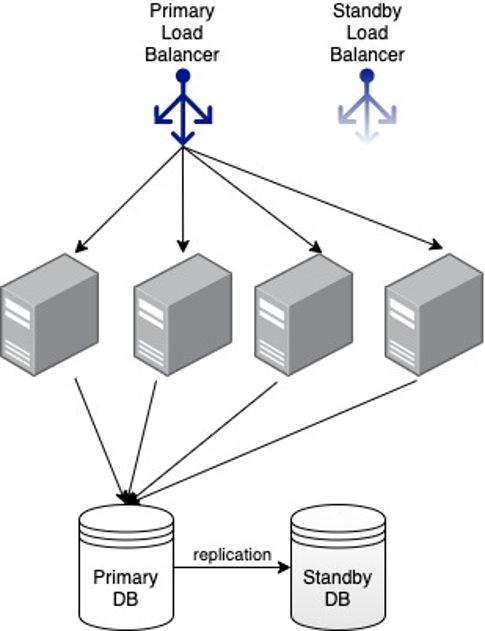

To achieve fault tolerance, a typical Amazon service at that time ran on multiple physical servers behind a load balancer that distributed incoming requests among those servers. To deal with server failures, the load balancer sent a periodic health check (typically a small HTTP request to a well-known URL) to each server. If any of the servers failed to respond several times in a row, the load balancer took that server out of consideration for future requests. To learn more, check out David Yanacek’s article on health checks in this Amazon Builders’ Library article: Implementing health checks. To avoid the load balancer itself becoming a single point of failure, two load balancers were arranged into a primary and secondary pair, and the domain name system (DNS) was used to fail over to the secondary if the primary failed. If the system needed persistent storage, a similar technique was used to set up a pair of replicated relational databases, with an active primary and a standby secondary. The following diagram shows an example of this architecture:
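The health-check rule described above can be sketched in a few lines of Python. This is only an illustration, not the load balancer's actual logic: the `HealthTracker` class, the threshold of three failures, and the choice to put a server back in service after one successful check are all assumptions. The article says only that a server was removed after failing "several times in a row".

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class HealthTracker:
    """Hypothetical helper that counts consecutive failed health checks."""

    # Assumed value; the article only says "several times in a row".
    failure_threshold: int = 3
    consecutive_failures: Dict[str, int] = field(default_factory=dict)

    def record(self, server: str, healthy: bool) -> None:
        """Record the result of one health check for one server."""
        if healthy:
            # Assumption: a single success resets the failure streak.
            self.consecutive_failures[server] = 0
        else:
            previous = self.consecutive_failures.get(server, 0)
            self.consecutive_failures[server] = previous + 1

    def in_service(self, servers: List[str]) -> List[str]:
        """Return the servers that should still receive requests."""
        return [
            s for s in servers
            if self.consecutive_failures.get(s, 0) < self.failure_threshold
        ]
```

Counting consecutive failures, rather than reacting to a single missed check, keeps one slow response from removing an otherwise healthy server.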

Today, we use load balancers like Application Load Balancer or Network Load Balancer, and storage services like Amazon DynamoDB, to provide scalable and fault-tolerant building blocks for our services, but the core principle remains unchanged. If we can architect our system to run on multiple redundant servers, and continue to operate even if some of those servers fail, then the availability of the overall system will be much greater than the availability of any single server. This follows from the way probabilities compound. For example, if each server has a 0.01% chance of failing on any given day, and the failures are independent of each other, then by running on two servers, the probability of both of them failing on the same day is 0.000001%. We go from a somewhat unlikely event to an incredibly unlikely one!
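The arithmetic behind that example can be checked with a short Python sketch. The first function reproduces the article's numbers under the assumption that server failures are independent. The second function is an assumption added for illustration, not something the article defines: a deliberately simplified model in which a shared cause takes down every server at once with probability `p_shared`, to show how quickly a common cause erodes the benefit.

```python
def all_fail_independent(p_single: float, n_servers: int) -> float:
    """Probability that all n servers fail, assuming independent failures."""
    return p_single ** n_servers


def all_fail_with_shared_cause(
    p_single: float, n_servers: int, p_shared: float
) -> float:
    """Simplified, hypothetical model: a shared cause fails every server with
    probability p_shared; otherwise servers fail independently."""
    return p_shared + (1 - p_shared) * p_single ** n_servers


p = 0.01 / 100  # 0.01% expressed as a probability
both = all_fail_independent(p, 2)
print(f"{both * 100:.6f}%")  # prints "0.000001%"

# With even a 0.001% chance of a shared cause (an assumed figure), the
# combined probability is dominated by the shared cause.
correlated = all_fail_with_shared_cause(p, 2, p_shared=0.001 / 100)
print(correlated > both)  # prints "True"
```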

Using Regions and Availability Zones

At Amazon, we approach reducing such risks by organizing the underlying infrastructure into multiple Regions and Availability Zones. Each Region is designed to be isolated from all the other Regions, and it consists of multiple Availability Zones. Each Availability Zone consists of one or more discrete data centers that have redundant power, network, and connectivity, and are physically separated by a meaningful distance from other Availability Zones. Infrastructure services like Amazon Elastic Compute Cloud (EC2), Amazon Elastic Block Store (EBS), Elastic Load Balancing (ELB), and Amazon Virtual Private Cloud (VPC) run independent stacks in each Availability Zone that share little to nothing with other Availability Zones. Because any change brings with it a risk of failure, infrastructure teams configure their continuous deployment systems to avoid deploying changes to multiple Availability Zones at the same time. To learn more about our approach to safe, continuous deployments, read Clare Liguori’s article on Automating safe, hands-off deployments in the Amazon Builders’ Library.
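The idea of never changing multiple Availability Zones at the same time can be sketched as a sequential rollout. This is a minimal illustration under stated assumptions, not Amazon's deployment system: the function name, the `deploy` and `is_healthy` callbacks, and the decision to halt at the first unhealthy zone are all hypothetical.

```python
from typing import Callable, List


def deploy_one_zone_at_a_time(
    zones: List[str],
    deploy: Callable[[str], None],
    is_healthy: Callable[[str], bool],
) -> List[str]:
    """Deploy to each zone in turn and return the zones that completed.

    Assumption: the rollout stops at the first zone that looks unhealthy
    after the change, so the remaining zones keep the old version.
    """
    completed: List[str] = []
    for zone in zones:
        deploy(zone)  # only one zone is being changed at any moment
        if not is_healthy(zone):
            break  # halt so a bad change is confined to a single zone
        completed.append(zone)
    return completed
```

For example, with hypothetical zones `["zone-a", "zone-b", "zone-c"]` and a change that makes `zone-b` unhealthy, the rollout touches `zone-a` and `zone-b` and never reaches `zone-c`.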

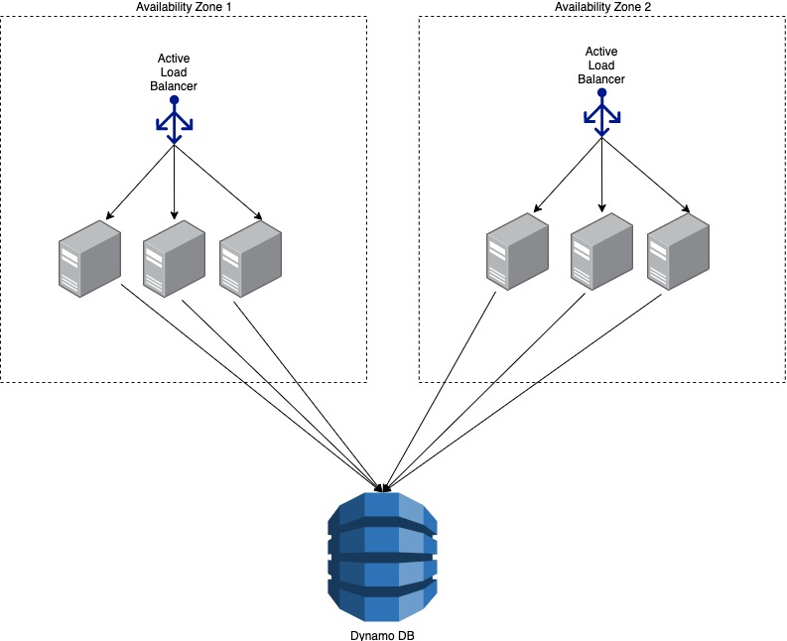

Separation of infrastructure into independent Availability Zones allows service teams to architect and deploy their services in a way that reduces the risk that a single infrastructure failure will have an impact on the majority of their fleet. By the mid-to-late 2000s, a typical Amazon service used an architecture that looked like this:

We found Availability Zones to be so powerful at reducing the risk of correlated infrastructure failures that when Amazon EC2 went public in 2008, Availability Zones were a core feature. These days, AWS customers and internal service teams alike use Availability Zones to build highly available applications on top of EC2. My colleagues Becky Weiss and Mike Furr wrote about getting the most out of Availability Zones in this Amazon Builders’ Library article: Static stability using Availability Zones.

Conclusion

Redundancy has been instrumental in helping us build highly available systems, even while using relatively inexpensive hardware components. By architecting our systems to run on multiple servers, we can make sure the system remains available even if some of those servers fail. However, both known and hidden factors can introduce risks of correlated failures, which eat away at the benefits of redundancy in distributed systems. Some examples of factors that could cause correlated failures are:

- Infrastructure components like power, cooling, and network.

- Common dependencies like DNS.

- Operator actions that touch every server.

- Every server in the fleet having identical behavior and resource limits.

At Amazon, we look at ways to reduce such risks. Availability Zones are one such powerful mechanism. By organizing infrastructure into independent locations that share as little as possible with each other, we give our own teams and customers a powerful tool to improve the availability of their services. Beyond that, our service teams look for techniques like shuffle sharding and jitter to reduce the risk of a single software problem impacting all the servers in the fleet.
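The article names shuffle sharding and jitter without detailing them, so the following Python sketch shows one common reading of each rather than Amazon's implementation. The function names, the hash-based seeding, and the uniform "full jitter" delay are assumptions made for illustration.

```python
import hashlib
import random
from typing import List


def shuffle_shard(customer_id: str, workers: List[str], shard_size: int) -> List[str]:
    """Deterministically assign a customer a small subset of the workers.

    Different customers get different (mostly non-identical) subsets, so a
    problem triggered by one customer affects only that customer's subset.
    """
    digest = hashlib.sha256(customer_id.encode("utf-8")).digest()
    seed = int.from_bytes(digest[:8], "big")
    rng = random.Random(seed)  # seeded, so the same customer maps to the same shard
    return sorted(rng.sample(workers, shard_size))


def jittered_delay(base_seconds: float) -> float:
    """Return a random delay in [0, base_seconds].

    Randomizing timers keeps servers from all doing the same work (retries,
    cache refreshes, scheduled jobs) at the same instant.
    """
    return random.uniform(0.0, base_seconds)
```

Both techniques aim at the last factor in the list above: they make servers behave less identically, so one bad request pattern or one synchronized timer is less likely to affect the whole fleet at once.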
