Overview

Open WebUI

Open WebUI is an open-source, self-hosted AI platform.

Open WebUI

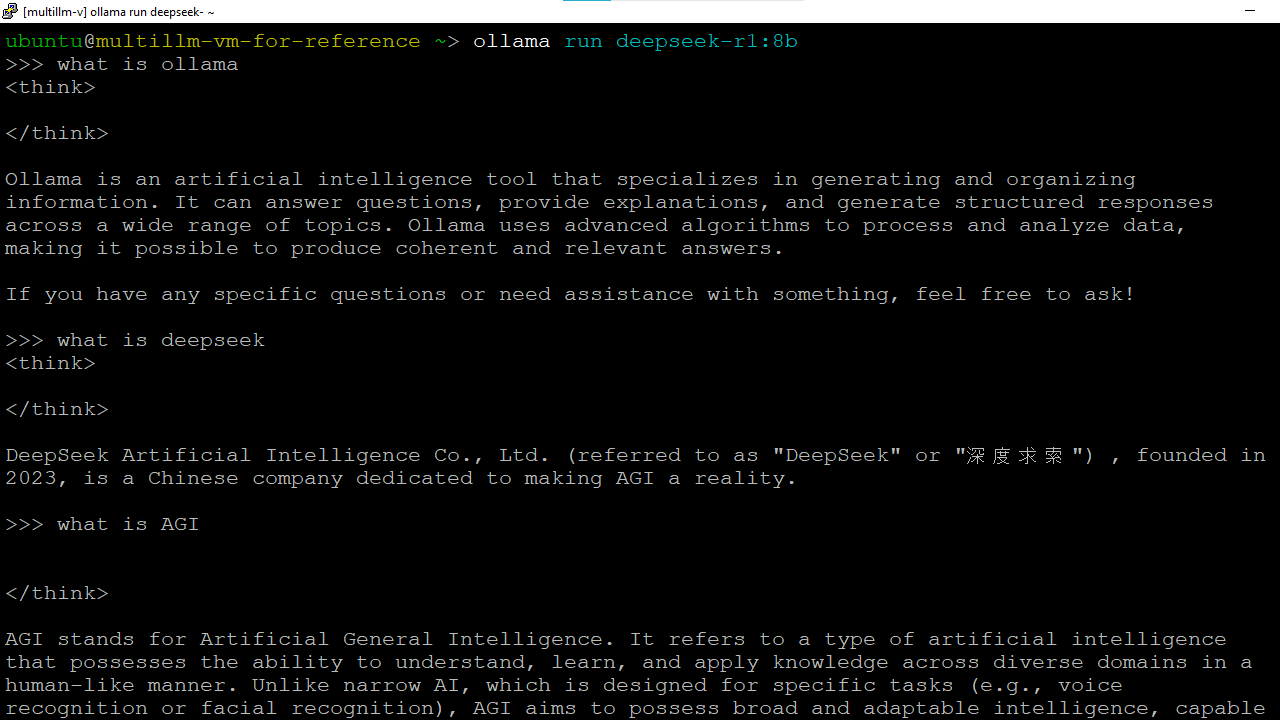

Running prompts from Ollama Command Line

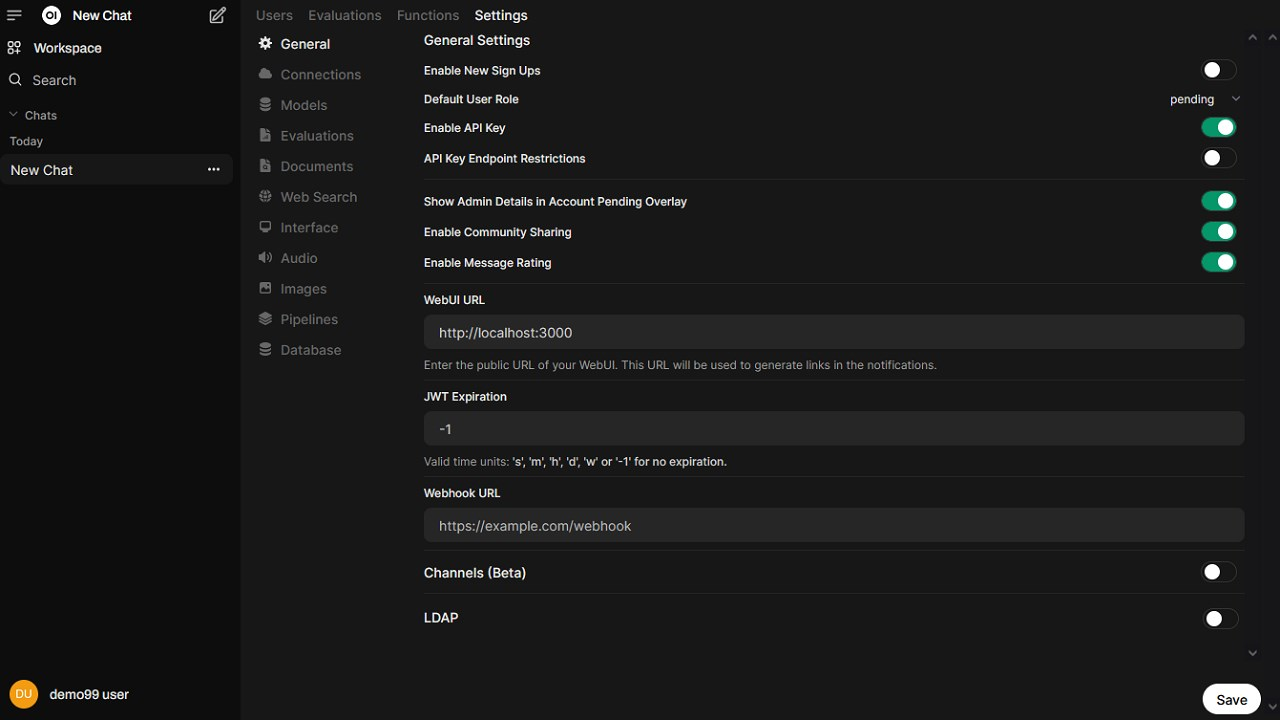

Open WebUI settings

Open WebUI Get Started page

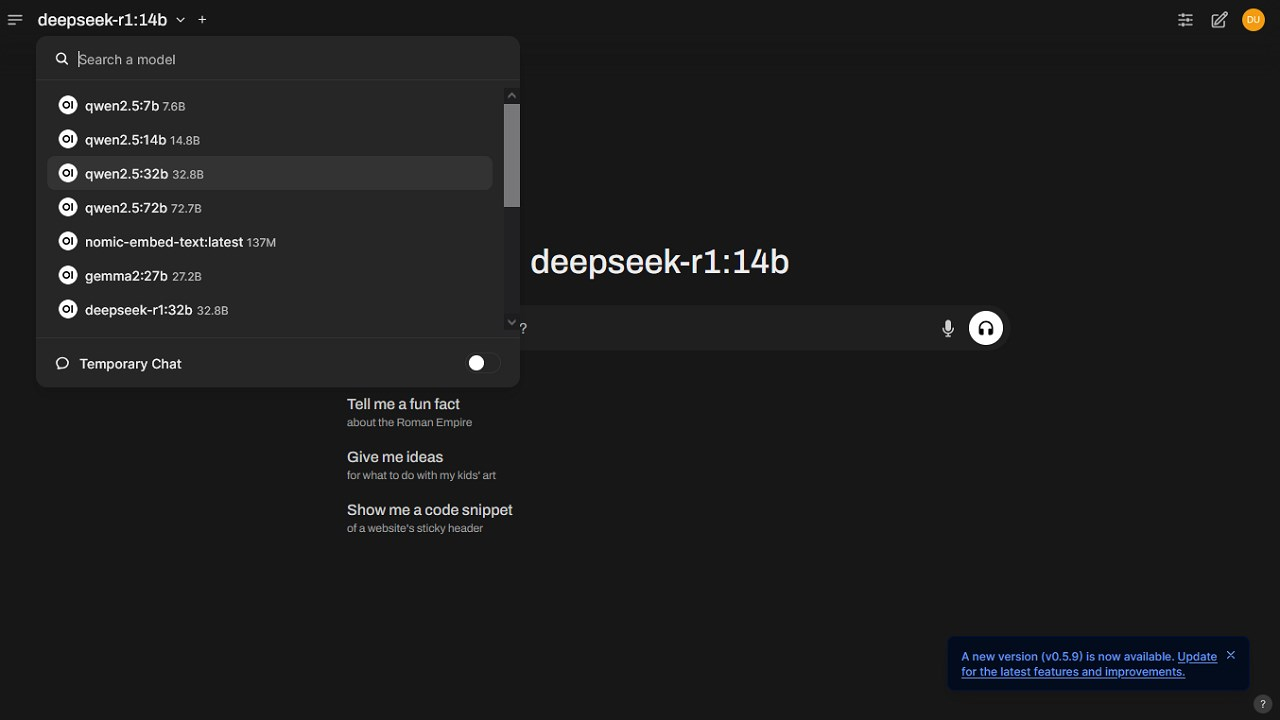

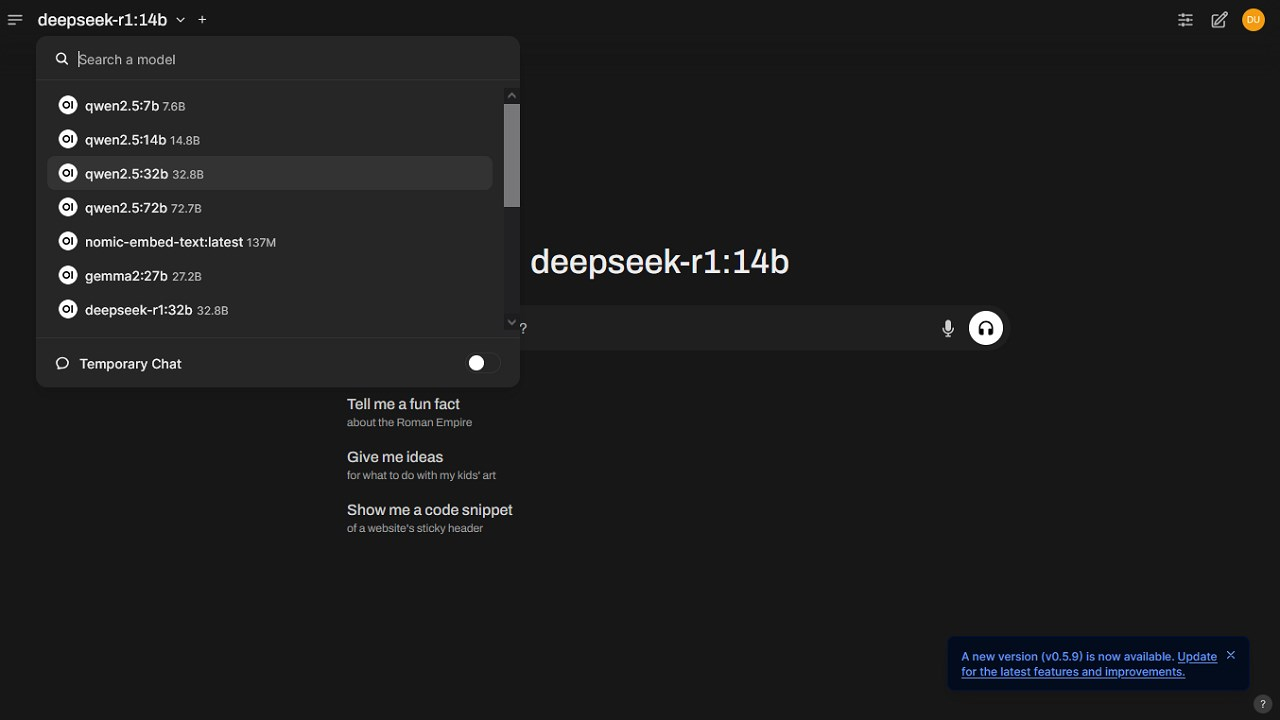

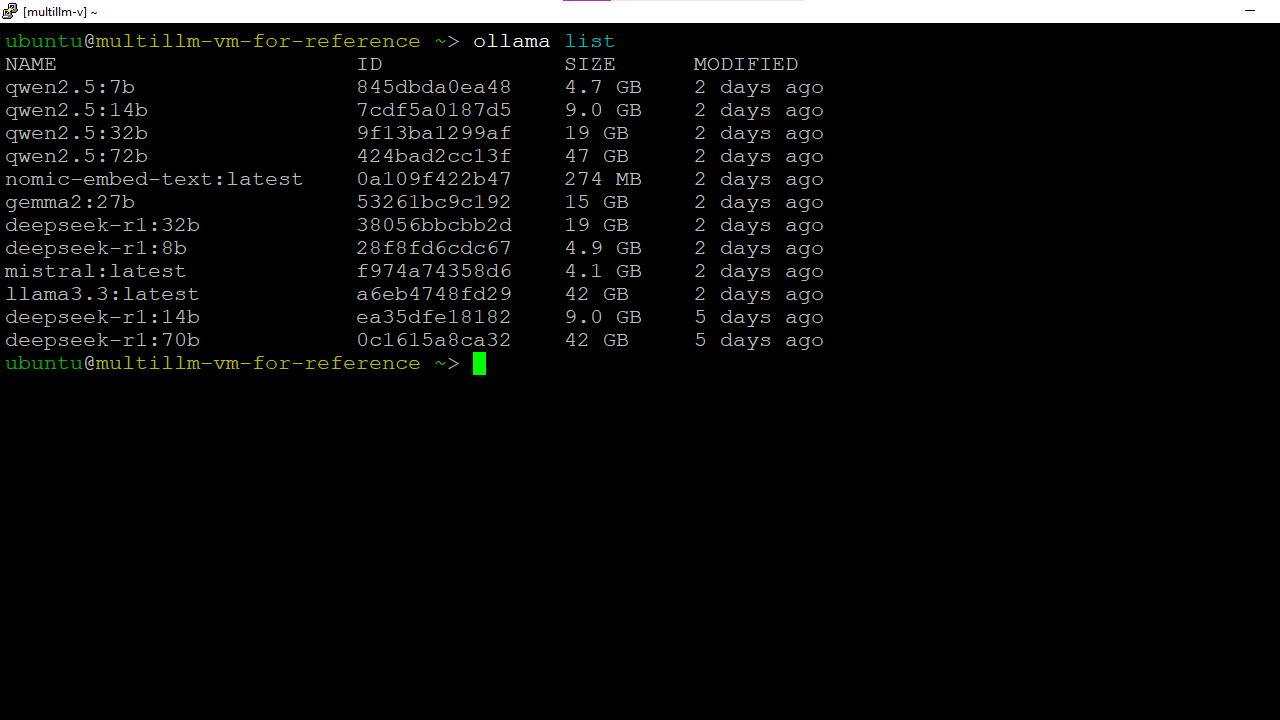

Ollama models.

This is a repackaged open-source software product; additional charges apply for support provided by TechLatest.net.

Note: We provide free demo access for the "GPU Supported DeepSeek & Llama-powered All-in-One LLM Suite." To request a free demo, please reach out to us at marketing@techlatest.net with the subject "Free Demo Access Request - [Your Company Name]".

Important: For a step-by-step guide on how to set up this VM, please refer to our Getting Started guide.

Ollama is a robust platform designed to simplify the management of large language models (LLMs). It provides a streamlined interface for downloading, running, and customizing models from various vendors, making it easier for developers to build, deploy, and scale AI applications.

In addition, the VM comes preconfigured with multiple cutting-edge models and lets users pull and install additional LLMs as needed.
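From a terminal on the VM, `ollama pull <model>` downloads an additional model. The same step can be scripted against Ollama's REST API. The sketch below is a minimal example, assuming it runs on the VM itself with Ollama listening on its default port 11434, and that your Ollama release accepts the `model` field for `/api/pull` (older releases named this field `name`). The tag `phi3` is only an illustration; substitute any tag from the Ollama library.

```python
import json
import urllib.request

# Assumption: run on the VM itself, where Ollama listens on its default port.
OLLAMA_URL = "http://localhost:11434"


def build_pull_request(model: str, base_url: str = OLLAMA_URL) -> urllib.request.Request:
    """Build a non-streaming POST /api/pull request for a model tag such as "phi3"."""
    body = json.dumps({"model": model, "stream": False}).encode("utf-8")
    return urllib.request.Request(
        f"{base_url}/api/pull",
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def pull_model(model: str, timeout: float = 3600) -> dict:
    """Download a model and return Ollama's final status object."""
    # Large models can take a long time to download, hence the generous timeout.
    with urllib.request.urlopen(build_pull_request(model), timeout=timeout) as resp:
        return json.load(resp)


# Usage (performs a real download, so it is left commented out):
# print(pull_model("phi3"))
```

With `"stream": False`, Ollama replies once the download finishes instead of sending incremental progress lines, which keeps the client simple at the cost of no progress feedback.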

The LLMs can be used through API integration, or through the intuitive Open-WebUI chat interface to interact directly with multiple LLMs.
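As a minimal sketch of API integration, the snippet below sends one prompt to Ollama's `POST /api/generate` endpoint. It assumes the code runs on the VM with Ollama on its default port 11434, and that the requested model (here `mistral`, one of the preconfigured models) is installed. Setting `"stream": False` makes Ollama return a single JSON object whose `response` field holds the full answer, instead of its default stream of newline-delimited chunks.

```python
import json
import urllib.request

# Assumption: Ollama's default local endpoint on the VM.
OLLAMA_URL = "http://localhost:11434"


def build_generate_request(model: str, prompt: str, base_url: str = OLLAMA_URL) -> urllib.request.Request:
    """Build a non-streaming POST /api/generate request."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        f"{base_url}/api/generate",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def parse_generate_response(raw: bytes) -> str:
    """Extract the generated text from a non-streaming /api/generate reply."""
    return json.loads(raw)["response"]


def generate(model: str, prompt: str, timeout: float = 600) -> str:
    """Send one prompt to a local model and return its full answer."""
    with urllib.request.urlopen(build_generate_request(model, prompt), timeout=timeout) as resp:
        return parse_generate_response(resp.read())


# Usage (requires a running Ollama server with the model installed):
# print(generate("mistral", "Summarize role-based access control in one sentence."))
```

Separating request construction and response parsing from the network call keeps those parts easy to check without a running server.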

This virtual machine comes with GPU support, enabling faster model execution but at a higher cost. If you are looking for a more cost-effective solution and do not require GPU acceleration, please see the DeepSeek & Llama-powered All-in-One LLM Suite.

What is included in the VM:

1. GPU Support: GPU-boosted LLMs for real-time inference, training, and seamless deployment

2. Preconfigured Models:

- DeepSeek-R1: 8B, 14B, 32B, and 70B parameter variants

- Llama 3.3

- Mistral

- Gemma 2 (27B)

- Qwen 2.5: 7B, 14B, 32B, and 72B parameter variants

- Nomic Embed Text

3. Open-WebUI:

- User-Friendly Interface: Open-WebUI offers an intuitive platform for managing Large Language Models (LLMs), enhancing user interaction through a chat-like interface.

- Advanced Features: Supports Markdown, LaTeX, and code highlighting, making it versatile for various applications.

- Centralized Access Control: Supports RBAC (Role-Based Access Control) to manage user access.

- Accessibility: Designed to work seamlessly on both desktop and mobile devices, ensuring users can engage with LLMs anywhere.

4. Ollama:

- Simplified Model Management: Ollama streamlines the process of deploying and interacting with LLMs, making it easier for developers and AI enthusiasts.

- Integration with Open-WebUI: Offers a cohesive experience by allowing users to manage models directly through the Open-WebUI interface.

- Real-Time Capabilities: Enables dynamic content retrieval during interactions, enhancing the context and relevance of responses.
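The preconfigured Nomic Embed Text model turns text into vectors, which is the basis for retrieving relevant content during a conversation. The sketch below is a minimal illustration, not how Open WebUI itself performs retrieval: it assumes Ollama on its default local port, the `nomic-embed-text` tag, and the `POST /api/embed` endpoint (which returns an `embeddings` list with one vector per input). Documents are then ranked by cosine similarity to a query.

```python
import json
import math
import urllib.request
from typing import List, Sequence

# Assumptions: Ollama's default local endpoint and the preinstalled embedding model tag.
OLLAMA_URL = "http://localhost:11434"
EMBED_MODEL = "nomic-embed-text"


def embed(texts: List[str], model: str = EMBED_MODEL) -> List[List[float]]:
    """Return one embedding vector per input text via POST /api/embed."""
    body = json.dumps({"model": model, "input": texts}).encode("utf-8")
    req = urllib.request.Request(
        f"{OLLAMA_URL}/api/embed",
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(req, timeout=120) as resp:
        return json.load(resp)["embeddings"]


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine of the angle between two vectors; 1.0 means the same direction."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        raise ValueError("cosine similarity is undefined for a zero vector")
    return dot / (norm_a * norm_b)


def rank_by_similarity(query_vec: Sequence[float], doc_vecs: List[Sequence[float]]) -> List[int]:
    """Return document indices ordered from most to least similar to the query."""
    scores = [cosine_similarity(query_vec, d) for d in doc_vecs]
    return sorted(range(len(doc_vecs)), key=lambda i: scores[i], reverse=True)


# Usage (requires a running Ollama server):
# vecs = embed(["How do I reset a password?", "Run: sudo passwd ubuntu", "GPU pricing"])
# print(rank_by_similarity(vecs[0], vecs[1:]))
```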

Key Benefits:

- Privacy First: Your data stays on your own instance and is not sent to third-party AI services.

- No Vendor Lock-in: No need for expensive vendor subscriptions.

- Multipurpose: Whether you are a single user or a team within an enterprise, you can use this VM for purposes such as AI app development using APIs, a self-hosted alternative to commercial AI chat offerings, and LLM inference.

- GPU Support: Run the LLMs on GPU-based instances for faster model execution and training.

Why Choose the TechLatest VM Offer?

- Cost and Time Efficient: Consolidate your models into a single environment, eliminating setup overhead and the bandwidth cost of downloading models.

- Seamless API Integration: Integrate models directly into your applications for custom workflows and automation.

- Effortless Model Management: Simplify model installation and management with Ollama's intuitive tooling, enabling easy customization of your AI environment.

- Side-by-Side Model Comparison: Evaluate different models in parallel in Open-WebUI to quickly determine which one best fits your needs.
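Open-WebUI handles side-by-side comparison in the browser; the same idea can be scripted through the API. The sketch below is a minimal example, assuming Ollama on its default local port and that the listed model tags are installed. It sends one prompt to each model in turn and records the answer and wall-clock time. The `generate_fn` parameter is a hypothetical hook added so the comparison logic can be exercised without a server.

```python
import json
import time
import urllib.request
from typing import Callable, Dict, List

# Assumption: Ollama's default local endpoint on the VM.
OLLAMA_URL = "http://localhost:11434"


def ollama_generate(model: str, prompt: str) -> str:
    """Non-streaming call to POST /api/generate; returns the answer text."""
    body = json.dumps({"model": model, "prompt": prompt, "stream": False}).encode("utf-8")
    req = urllib.request.Request(
        f"{OLLAMA_URL}/api/generate",
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(req, timeout=600) as resp:
        return json.load(resp)["response"]


def compare_models(
    prompt: str,
    models: List[str],
    generate_fn: Callable[[str, str], str] = ollama_generate,
) -> Dict[str, dict]:
    """Send the same prompt to each model in turn and record answer and latency."""
    results: Dict[str, dict] = {}
    for model in models:
        start = time.perf_counter()
        answer = generate_fn(model, prompt)
        results[model] = {"answer": answer, "seconds": time.perf_counter() - start}
    return results


# Usage (requires the listed models to be installed):
# for name, r in compare_models("Explain RBAC briefly.", ["mistral", "qwen2.5:7b"]).items():
#     print(f"{name} ({r['seconds']:.1f}s): {r['answer'][:80]}")
```

The models are queried sequentially rather than in parallel so that several large models are not competing for GPU memory at the same moment. The first request to each model also includes its load time, so the timings are only a rough guide.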

Disclaimer: Other trademarks and trade names may be used in this document to refer to either the entities claiming the marks and/or names or their products and are the property of their respective owners. We disclaim proprietary interest in the marks and names of others.

To deploy and use this VM offer, users must comply with the licenses and terms of agreement of Ollama, Open WebUI, and the preconfigured models.

Refer to the links below for the licensing terms:

https://github.com/ollama/ollama/blob/main/LICENSE

https://github.com/open-webui/open-webui/blob/main/LICENSE

https://www.ollama.com/library/llama3.3/blobs/53a87df39647

https://www.ollama.com/library/llama3.3/blobs/bc371a43ce90

https://www.ollama.com/library/deepseek-r1/blobs/6e4c38e1172f

https://www.ollama.com/library/qwen2.5/blobs/832dd9e00a68

https://www.ollama.com/library/mistral/blobs/43070e2d4e53

https://www.ollama.com/library/gemma2:27b/blobs/097a36493f71

https://www.ollama.com/library/nomic-embed-text/blobs/c71d239df917

Highlights

- Includes models from DeepSeek, Llama, Gemma, Mistral, Qwen, and more, with GPU support


Pricing

| Instance type | Cost/hour |
|---|---|
| g4dn.xlarge (Recommended) | $0.15 |
| g3s.xlarge | $0.15 |
| g3.16xlarge | $0.15 |
| g5.16xlarge | $0.15 |
| g5.12xlarge | $0.15 |
| g4dn.8xlarge | $0.15 |
| g5.xlarge | $0.15 |
| g4dn.4xlarge | $0.15 |
| g4dn.2xlarge | $0.15 |
| g4dn.16xlarge | $0.15 |

Vendor refund policy

You are charged for usage. The subscription can be canceled at any time, and usage fees are non-refundable.


Legal

Vendor terms and conditions

Content disclaimer

Delivery details

64-bit (x86) Amazon Machine Image (AMI)

Amazon Machine Image (AMI)

An AMI is a virtual image that provides the information required to launch an instance. Amazon EC2 (Elastic Compute Cloud) instances are virtual servers on which you can run your applications and workloads, offering varying combinations of CPU, memory, storage, and networking resources. You can launch as many instances from as many different AMIs as you need.

Version release notes

Ollama upgraded to version 0.18.0 and Open WebUI upgraded to version 0.8.10.

Additional details

Usage instructions

- On the EC2 Console page, confirm that the instance is up and running. To connect to this instance through PuTTY, copy its IPv4 Public IP address (refer to the PuTTY guide at https://docs.aws.amazon.com/AWSEC2/latest/UserGuide/connect-linux-inst-from-windows.html for details on how to connect using PuTTY/SSH).

- Open PuTTY, paste the IP address, and browse to the private key you downloaded while deploying the VM by going to SSH -> Auth -> Credentials. Click Open.

- Log in as the ubuntu user.

- Update the password of the ubuntu user using the following command: sudo passwd ubuntu

- Once the ubuntu user password is set, access the GUI environment using RDP on a Windows machine or Remmina on a Linux machine.

- Copy the public IP of the VM and paste it into the RDP client. Log in with the ubuntu user and its password.

- To access Open-WebUI, open your browser and enter the public IP of the VM as https://public_ip_of_vm

- Click the Get Started link at the bottom and create your first admin account on the registration page.
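Once an account exists, Open WebUI can also be called from code. Open WebUI documents an OpenAI-compatible `POST /api/chat/completions` endpoint authenticated with a Bearer API key, which can be generated under Settings > Account. The sketch below assumes that endpoint, that API key access is enabled in your instance's settings, and that `https://public_ip_of_vm` is reachable from where the code runs. Because the VM is reached by bare IP over HTTPS, its certificate may be self-signed (an assumption); the optional `context` parameter lets you supply a suitable `ssl.SSLContext` instead of turning verification off. The IP address, key, and certificate file name in the usage comment are placeholders.

```python
import json
import ssl
import urllib.request
from typing import Optional


def build_chat_request(base_url: str, api_key: str, model: str, message: str) -> urllib.request.Request:
    """Build an OpenAI-style chat completion request for Open WebUI."""
    payload = {"model": model, "messages": [{"role": "user", "content": message}]}
    return urllib.request.Request(
        f"{base_url.rstrip('/')}/api/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def parse_chat_reply(reply: dict) -> str:
    """Return the assistant text from an OpenAI-style response body."""
    return reply["choices"][0]["message"]["content"]


def chat(base_url: str, api_key: str, model: str, message: str,
         context: Optional[ssl.SSLContext] = None) -> str:
    """Send one user message through Open WebUI and return the model's reply."""
    req = build_chat_request(base_url, api_key, model, message)
    with urllib.request.urlopen(req, context=context, timeout=600) as resp:
        return parse_chat_reply(json.load(resp))


# Usage (placeholders: replace the IP, key, and certificate path with your own):
# ctx = ssl.create_default_context(cafile="vm-cert.pem")
# print(chat("https://203.0.113.10", "YOUR_API_KEY", "mistral", "Hello!", context=ctx))
```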

For a step-by-step guide to provisioning and using this VM, please see the AWS Getting Started Guide.

Support

Vendor support

Email: info@techlatest.net

AWS infrastructure support

AWS Support is a one-on-one, fast-response support channel that is staffed 24x7x365 with experienced and technical support engineers. The service helps customers of all sizes and technical abilities to successfully utilize the products and features provided by Amazon Web Services.
