Data Lakes on AWS

Break down data silos and enable analytics at scale in an Amazon S3 data lake

Overview

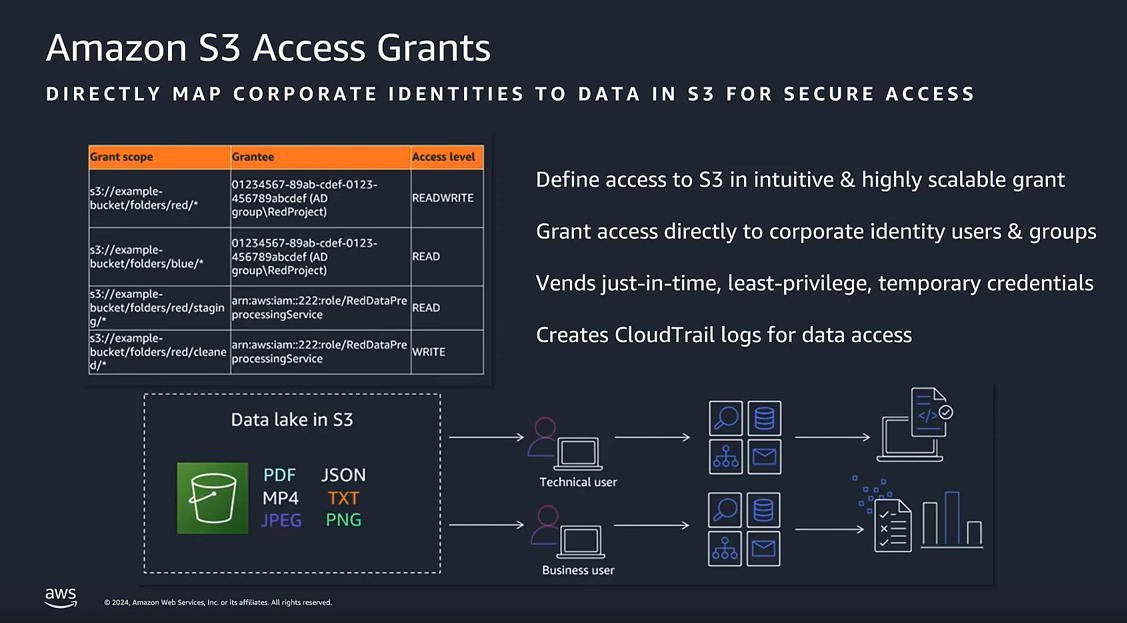

Data lakes on AWS help you break down data silos to maximize end-to-end data insights. With Amazon Simple Storage Service (Amazon S3) as your data lake foundation, you can tap into AWS analytics services to support your data needs from data ingestion, movement, and storage to big data analytics, streaming analytics, business intelligence, machine learning (ML), and more – all with the best price performance. More than 1,000,000 data lakes run on AWS.

Amazon S3 is the best place to build data lakes because of its unmatched durability, availability, scalability, security, compliance, and audit capabilities. With AWS Lake Formation, you can build secure data lakes in days instead of months. AWS Glue then allows seamless data movement between data lakes and your purpose-built data and analytics services.

Benefits of data lakes with AWS

-

Because Amazon S3 scales cost-effectively, practically without limit, you can store all of your data, from any source, and unlock its value.

-

With all of your data available for analysis, organizations can accelerate innovation, like discovering new opportunities for savings or personalization. A broader data continuum is accessible for ML and predictive analytics.

-

With purpose-built AWS analytics services, you can quickly extract data insights using the most appropriate tool for the job, optimized to give you the best performance, scale, and cost for your needs.

-

With the most serverless options for data analytics in the cloud, AWS analytics services are easy to use, administer, and manage.

Data governance on data lakes with Amazon S3 and Amazon DataZone

Essential pillars for data lakes on AWS

With data lakes built on Amazon S3, you can use native AWS services to run big data analytics, artificial intelligence (AI), ML, high-performance computing (HPC) and media data processing applications to gain insights from your unstructured datasets. When coupled with AWS Lake Formation and AWS Glue, it's easy to simplify data lake creation and management with end-to end data integration and centralized, database-like permissions and governance. AWS analytic solutions, like Glue, Amazon EMR, and Amazon Athena make it easy to query your data lake directly.

You can import any amount of data, in real-time or batch, with AWS Glue. Data can be collected from multiple sources and moved into the data lake in its original format – and AWS analytics services can also be used to query your data lake directly. Having data integration, discovery, preparation, and transformation tools like AWS Glue allows you to scale while saving time defining data structures, schema, and transformations.

With an array of data sources and formats in your data lake, being able to crawl, catalog, index, and secure data is critical to ensure access to users. AWS Glue provides a streamlined and centralized data catalog so you can better understand the data in your data lake. AWS Lake Formation lets you centralize data governance and security so you can deploy data with confidence.

It’s easy for diverse users across your organization, like data scientists, data developers, and business analysts, to access data with their choice of purpose-built AWS analytics tools and frameworks. You can easily and quickly run analytics without the need to move your data to a separate analytics system.

Data lakes on AWS allow you to innovate faster with the most comprehensive set of AI and ML services. With ML-enabled on your data lakes, you can make accurate predictions, gain deeper insights from your data, reduce operational overhead, and improve customer experience.