What is a Service Mesh?

A service mesh is a software layer that handles all communication between services in an application. Such applications are typically composed of containerized microservices. As an application scales and the number of microservices grows, it becomes harder to monitor how the services perform. To manage connections between services, a service mesh provides features such as monitoring, logging, tracing, and traffic control. Because it’s independent of each service’s code, it can work across network boundaries and with multiple service management systems.

Why do you need a service mesh?

In modern application architecture, you can build applications as a collection of small, independently deployable microservices. Different teams may build individual microservices and choose their own programming languages and tools. However, the microservices must communicate with each other for the application to work correctly.

Application performance depends on the speed and resiliency of communication between services. Developers must monitor and optimize the application across services, but it’s hard to gain visibility due to the system's distributed nature. As applications scale, it becomes even more complex to manage communications.

There are two main drivers of service mesh adoption, described next.

Service-level observability

As more workloads and services are deployed, developers find it challenging to understand how everything works together. For example, service teams want to know what their downstream and upstream dependencies are. They want greater visibility into how services and workloads communicate at the application layer.

Service-level control

Administrators want to control which services talk to one another and what actions they perform. They want fine-grained control and governance over the behavior, policies, and interactions of services within a microservices architecture. Enforcing security policies is essential for regulatory compliance.

What are the benefits of a service mesh?

A service mesh provides a centralized, dedicated infrastructure layer that handles the intricacies of service-to-service communication within a distributed application. Its main benefits are described next.

Service discovery

Service meshes provide automated service discovery, which reduces the operational load of managing service endpoints. They use a service registry to dynamically discover and keep track of all services within the mesh. Services can find and communicate with each other seamlessly, regardless of their location or underlying infrastructure. You can quickly scale by deploying new services as required.
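
To make the registry idea concrete, here is a minimal in-memory sketch. It assumes each instance registers itself by service name with a host:port endpoint and re-registers periodically as a heartbeat, and that an entry older than a TTL counts as inactive. The `ServiceRegistry` class and its methods are hypothetical and do not mirror any particular mesh's API.

```python
import time
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class ServiceRegistry:
    """Hypothetical in-memory registry: service name -> live endpoints."""

    ttl_seconds: float = 30.0
    clock: Callable[[], float] = time.monotonic  # injectable for testing
    # service name -> {endpoint: time of last heartbeat}
    _entries: Dict[str, Dict[str, float]] = field(default_factory=dict)

    def register(self, service: str, endpoint: str) -> None:
        # Registering again acts as a heartbeat and refreshes the timestamp.
        self._entries.setdefault(service, {})[endpoint] = self.clock()

    def deregister(self, service: str, endpoint: str) -> None:
        self._entries.get(service, {}).pop(endpoint, None)

    def lookup(self, service: str) -> List[str]:
        # Evict endpoints whose last heartbeat is older than the TTL, return the rest.
        now = self.clock()
        endpoints = self._entries.get(service, {})
        for endpoint, last_seen in list(endpoints.items()):
            if now - last_seen > self.ttl_seconds:
                del endpoints[endpoint]
        return sorted(endpoints)


# Usage with a fake clock so the expiry is deterministic.
fake_now = [0.0]
registry = ServiceRegistry(ttl_seconds=30, clock=lambda: fake_now[0])
registry.register("orders", "10.0.0.5:8080")
registry.register("orders", "10.0.0.6:8080")
fake_now[0] = 20.0
registry.register("orders", "10.0.0.6:8080")  # heartbeat from one instance only
fake_now[0] = 40.0
print(registry.lookup("orders"))  # ['10.0.0.6:8080']
```

Callers ask for a service by name and never hard-code addresses, which is what lets new instances join without configuration changes elsewhere.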

Load balancing

Service meshes use various algorithms—such as round-robin, least connections, or weighted load balancing—to distribute requests across multiple service instances intelligently. Load balancing improves resource utilization and ensures high availability and scalability. You can optimize performance and prevent network communication bottlenecks.
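
The three algorithms named above can each be sketched in a few lines. These hypothetical classes only choose an instance name; a real proxy would also track instance health and manage the connections themselves.

```python
import itertools
import random
from typing import Dict, List, Optional, Sequence


class RoundRobin:
    """Hand out instances in a fixed rotating order."""

    def __init__(self, instances: Sequence[str]) -> None:
        self._cycle = itertools.cycle(instances)

    def pick(self) -> str:
        return next(self._cycle)


class LeastConnections:
    """Pick the instance with the fewest in-flight requests."""

    def __init__(self, instances: Sequence[str]) -> None:
        self.active: Dict[str, int] = {name: 0 for name in instances}

    def pick(self) -> str:
        # min() returns the first minimum, so ties go to the earliest instance.
        name = min(self.active, key=self.active.__getitem__)
        self.active[name] += 1
        return name

    def release(self, name: str) -> None:
        # Call when a request to `name` finishes.
        self.active[name] -= 1


class Weighted:
    """Pick instances at random in proportion to their weights."""

    def __init__(self, weights: Dict[str, float], rng: Optional[random.Random] = None) -> None:
        self.names: List[str] = list(weights)
        self.weights: List[float] = list(weights.values())
        self.rng = rng or random.Random()

    def pick(self) -> str:
        return self.rng.choices(self.names, weights=self.weights, k=1)[0]


rr = RoundRobin(["a", "b", "c"])
print([rr.pick() for _ in range(4)])  # ['a', 'b', 'c', 'a']
```

Round-robin is simplest but assumes instances and requests are roughly uniform; least connections adapts when some requests take much longer than others; weights suit instances of different sizes.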

Traffic management

Service meshes offer advanced traffic management features, which provide fine-grained control over request routing and traffic behavior. Here are a few examples.

Traffic splitting

You can divide incoming traffic between different service versions or configurations. The mesh directs some traffic to the updated version, which allows for a controlled and gradual rollout of changes. This provides a smooth transition and minimizes the impact of changes.
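
As a rough illustration, a traffic split can be modeled as a percentage per version plus a way to move percentage points from the old version to the new one in steps. The `TrafficSplit` class and the version names are hypothetical.

```python
import random
from typing import Dict, Optional


class TrafficSplit:
    """Hypothetical route rule: percentage of requests sent to each service version."""

    def __init__(self, weights: Dict[str, int], rng: Optional[random.Random] = None) -> None:
        if sum(weights.values()) != 100 or any(w < 0 for w in weights.values()):
            raise ValueError("weights must be non-negative and sum to 100")
        self.weights = dict(weights)
        self.rng = rng or random.Random()

    def route(self) -> str:
        # Draw a number in [0, 100) and walk the cumulative weights.
        roll = self.rng.randrange(100)
        cumulative = 0
        for version, weight in self.weights.items():
            cumulative += weight
            if roll < cumulative:
                return version
        raise AssertionError("unreachable when weights sum to 100")

    def shift(self, src: str, dst: str, step: int) -> None:
        # Move up to `step` percentage points from `src` to `dst`; the total stays 100.
        moved = min(step, self.weights[src])
        self.weights[src] -= moved
        self.weights[dst] = self.weights.get(dst, 0) + moved


split = TrafficSplit({"v1": 100, "v2": 0})
split.shift("v1", "v2", 10)  # send 10% of requests to v2
print(split.weights)  # {'v1': 90, 'v2': 10}
```

Repeating `shift` while watching error rates gives the gradual rollout described above; shifting back is an equally quick rollback.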

Request mirroring

You can duplicate traffic to a test or monitoring service for analysis without impacting the primary request flow. When you mirror requests, you gain insights into how the service handles particular requests without affecting the production traffic.
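
A minimal sketch of mirroring with `asyncio`, assuming requests are strings and upstreams are plain async functions (hypothetical stand-ins for real network calls). The proxy returns only the primary's response and sends a copy to the shadow in the background, discarding the shadow's result and any error it raises.

```python
import asyncio
from typing import Awaitable, Callable, Set

Handler = Callable[[str], Awaitable[str]]


class MirroringProxy:
    """Hypothetical proxy: forward to a primary, copy each request to a shadow."""

    def __init__(self, primary: Handler, shadow: Handler) -> None:
        self.primary = primary
        self.shadow = shadow
        self._pending: Set[asyncio.Task] = set()

    async def _mirror(self, request: str) -> None:
        try:
            await self.shadow(request)  # the shadow's response is discarded
        except Exception:
            pass  # a failing shadow must never affect the caller

    async def handle(self, request: str) -> str:
        # Fire-and-forget copy; keep a reference so the task isn't garbage-collected.
        task = asyncio.create_task(self._mirror(request))
        self._pending.add(task)
        task.add_done_callback(self._pending.discard)
        # Only the primary's response goes back to the client.
        return await self.primary(request)

    async def drain(self) -> None:
        # Wait for outstanding mirrored requests (useful in tests and on shutdown).
        await asyncio.gather(*self._pending)
```

Because the copy runs as a separate task, a slow or broken shadow adds no latency to the primary path.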

Canary deployments

You can direct a small subset of users or traffic to a new service version, while most users continue to use the existing stable version. With limited exposure, you can experiment with the new version's behavior and performance in a real-world environment.
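
One common way to pick a stable canary cohort (an assumption here, not the only approach) is to hash a user identifier into a fixed number of buckets, so each user consistently lands on the same version across requests. The function names, salt, and version labels are hypothetical.

```python
import hashlib


def in_canary(user_id: str, percent: float, salt: str = "orders-v2") -> bool:
    """Return True if this user falls into the canary cohort.

    Hashing the user ID with a per-rollout salt gives a stable bucket in [0, 10000),
    so the same user always gets the same answer for a given percentage.
    """
    digest = hashlib.sha256(f"{salt}:{user_id}".encode()).digest()
    bucket = int.from_bytes(digest[:8], "big") % 10_000
    return bucket < percent * 100


def choose_version(user_id: str, canary_percent: float) -> str:
    return "v2-canary" if in_canary(user_id, canary_percent) else "v1-stable"
```

Because a user's bucket never changes, raising `percent` from 5 to 10 keeps every existing canary user in the cohort and only adds new ones.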

Security

Service meshes provide secure communication features such as mutual TLS (mTLS) encryption, authentication, and authorization. Mutual TLS enables identity verification in service-to-service communication. It helps ensure data confidentiality and integrity by encrypting traffic. You can also enforce authorization policies to control which services access specific endpoints or perform specific actions.
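
Once mutual TLS has established who the caller is, the proxy can evaluate an authorization policy. The sketch below shows only that policy check, deny by default, assuming identities are plain service names; the TLS handshake is not shown, and the `Rule` structure is illustrative rather than any product's policy format.

```python
from dataclasses import dataclass
from typing import FrozenSet, Iterable


@dataclass(frozen=True)
class Rule:
    source: str               # caller identity, e.g. taken from the peer's mTLS certificate
    destination: str          # service being called
    methods: FrozenSet[str]   # allowed HTTP methods
    path_prefix: str = "/"    # plain string prefix match


def is_allowed(rules: Iterable[Rule], source: str, destination: str,
               method: str, path: str) -> bool:
    """Deny by default; allow only if some rule matches every attribute."""
    return any(
        r.source == source
        and r.destination == destination
        and method in r.methods
        and path.startswith(r.path_prefix)
        for r in rules
    )


rules = [Rule("frontend", "orders", frozenset({"GET", "POST"}), "/api/orders")]
print(is_allowed(rules, "frontend", "orders", "GET", "/api/orders/42"))     # True
print(is_allowed(rules, "frontend", "orders", "DELETE", "/api/orders/42"))  # False
```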

Monitoring

Service meshes offer comprehensive monitoring and observability features to gain insights into your services' health, performance, and behavior. Monitoring also supports troubleshooting and performance optimization. Here are examples of monitoring features you can use:

- Collect metrics like latency, error rates, and resource utilization to analyze overall system performance

- Perform distributed tracing to see requests' complete path and timing across multiple services

- Capture service events in logs for auditing, debugging, and compliance purposes
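
As a sketch of the metrics side, a proxy could record the latency and status of each request and derive an error rate and latency percentiles from them. The class is hypothetical, treats HTTP 5xx responses as errors (an assumption), and keeps every sample in memory, which a production system would replace with histograms.

```python
import math
from dataclasses import dataclass, field
from typing import List


@dataclass
class RequestMetrics:
    """Hypothetical per-service metrics recorded for each proxied request."""

    latencies_ms: List[float] = field(default_factory=list)
    errors: int = 0

    def record(self, latency_ms: float, status: int) -> None:
        self.latencies_ms.append(latency_ms)
        if status >= 500:  # count server-side failures as errors
            self.errors += 1

    @property
    def error_rate(self) -> float:
        return self.errors / len(self.latencies_ms) if self.latencies_ms else 0.0

    def percentile(self, p: float) -> float:
        # Nearest-rank percentile: smallest sample with at least p% of samples at or below it.
        if not self.latencies_ms:
            raise ValueError("no samples recorded")
        ordered = sorted(self.latencies_ms)
        rank = max(1, math.ceil(p * len(ordered) / 100))
        return ordered[rank - 1]
```

Tail percentiles such as p99 often reveal problems that an average latency hides.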

How does a service mesh work?

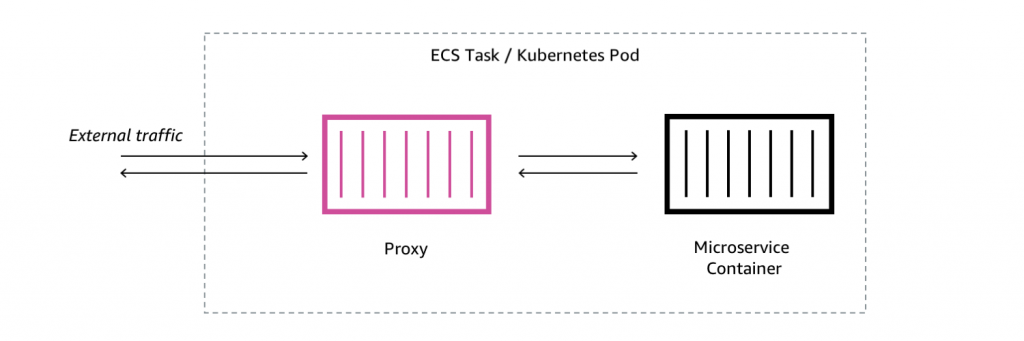

A service mesh removes the logic governing service-to-service communication from individual services and abstracts communication to its own infrastructure layer. It uses several network proxies to route and track communication between services.

A proxy acts as an intermediary gateway between your organization’s network and the microservice. All traffic to and from the service is routed through the proxy server. Individual proxies are sometimes called sidecars, because they run separately but are logically next to each service. Taken together, the proxies form the service mesh layer.

There are two main components in service mesh architecture—the control plane and the data plane.

Data plane

The data plane is the data handling component of a service mesh. It includes all the sidecar proxies and their functions. When a service wants to communicate with another service, the sidecar proxy takes these actions:

- The sidecar intercepts the request

- It encapsulates the request in a separate network connection

- It establishes a secure and encrypted channel between the source and destination proxies

The sidecar proxies handle low-level messaging between services. They also implement features, like circuit breaking and request retries, to enhance resiliency and prevent service degradation. Service mesh functionality—like load balancing, service discovery, and traffic routing—is implemented in the data plane.
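
Retries and circuit breaking can be sketched as two small wrappers around an upstream call. This is a simplified, single-threaded illustration with hypothetical names and default thresholds; in a mesh these behaviors are set through proxy configuration rather than written into application code.

```python
import time
from typing import Callable, Optional, TypeVar

T = TypeVar("T")


class CircuitOpenError(Exception):
    """Raised instead of calling the upstream while the circuit is open."""


class CircuitBreaker:
    """Opens after `failure_threshold` consecutive failures.

    While open, calls fail fast. Once `reset_after` seconds have passed, the next call
    is let through as a probe: success closes the circuit, failure reopens it.
    """

    def __init__(self, failure_threshold: int = 5, reset_after: float = 30.0,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.failure_threshold = failure_threshold
        self.reset_after = reset_after
        self.clock = clock
        self.failures = 0
        self.opened_at: Optional[float] = None

    def call(self, fn: Callable[[], T]) -> T:
        if self.opened_at is not None and self.clock() - self.opened_at < self.reset_after:
            raise CircuitOpenError("upstream is marked unhealthy")
        try:
            result = fn()
        except Exception:
            self.failures += 1
            # A failed probe, or too many consecutive failures, (re)opens the circuit.
            if self.opened_at is not None or self.failures >= self.failure_threshold:
                self.opened_at = self.clock()
            raise
        self.failures = 0
        self.opened_at = None
        return result


def with_retries(fn: Callable[[], T], attempts: int = 3, base_delay: float = 0.1,
                 sleep: Callable[[float], None] = time.sleep) -> T:
    """Retry `fn` with exponential backoff (0.1 s, 0.2 s, ...); re-raise the last error."""
    if attempts < 1:
        raise ValueError("attempts must be at least 1")
    for attempt in range(attempts):
        try:
            return fn()
        except CircuitOpenError:
            raise  # don't keep calling an upstream the breaker has given up on
        except Exception:
            if attempt == attempts - 1:
                raise
            sleep(base_delay * 2 ** attempt)
    raise AssertionError("unreachable")
```

Wrapping the breaker inside the retry loop, as in `with_retries(lambda: breaker.call(fetch))` for some upstream call `fetch`, means retries stop as soon as the circuit opens, so a struggling service is not flooded with repeated requests.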

Control plane

The control plane acts as the central management and configuration layer of the service mesh.

With the control plane, administrators can define and configure the services within the mesh. For example, they can specify parameters like service endpoints, routing rules, load balancing policies, and security settings. Once the configuration is defined, the control plane distributes the necessary information to the service mesh's data plane.

The proxies use the configuration information to decide how to handle incoming requests. They can also receive configuration changes and adapt their behavior dynamically. You can make real-time changes to the service mesh configuration without service restarts or disruptions.
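
The push-and-apply flow can be modeled roughly as follows. Each configuration carries a version number, and a proxy replaces its whole configuration with a newer one, without restarting, while ignoring stale pushes. The class names and the shape of `MeshConfig` are hypothetical; real control planes use their own protocols (Envoy-based meshes, for example, use Envoy's xDS APIs).

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class MeshConfig:
    version: int
    routes: Dict[str, str]  # hypothetical: service name -> upstream target


class SidecarProxy:
    def __init__(self, name: str) -> None:
        self.name = name
        self.config = MeshConfig(version=0, routes={})

    def on_config(self, config: MeshConfig) -> None:
        # Ignore stale or duplicate pushes; otherwise swap in the new config as a whole
        # rather than editing the old one in place. No restart is involved.
        if config.version > self.config.version:
            self.config = config

    def route(self, service: str) -> str:
        return self.config.routes[service]


class ControlPlane:
    def __init__(self) -> None:
        self.proxies: List[SidecarProxy] = []
        self.version = 0
        self.current = MeshConfig(version=0, routes={})

    def connect(self, proxy: SidecarProxy) -> None:
        self.proxies.append(proxy)
        proxy.on_config(self.current)  # a newly connected proxy gets the latest config

    def update_routes(self, routes: Dict[str, str]) -> None:
        self.version += 1
        self.current = MeshConfig(self.version, dict(routes))
        for proxy in self.proxies:
            proxy.on_config(self.current)
```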

Service mesh implementations typically include the following capabilities in the control plane:

- Service registry that keeps track of all services within the mesh

- Automatic discovery of new services and removal of inactive services

- Collection and aggregation of telemetry data like metrics, logs, and distributed tracing information

What is Istio?

Istio is an open-source service mesh project designed to work primarily with Kubernetes. Kubernetes is an open-source container orchestration platform used to deploy and manage containerized applications at scale.

Istio’s control plane components run as Kubernetes workloads themselves. It uses a Kubernetes Pod—a tightly coupled set of containers that share one IP address—as the basis for the sidecar proxy design.

Istio’s layer 7 proxy runs as another container in the same network context as the main service. From that position, it can intercept, inspect, and manipulate all network traffic passing through the Pod, yet the primary container needs no modification and doesn’t even need to know this is happening.

What are the challenges of open-source service mesh implementations?

Here are some common service mesh challenges associated with open-source platforms like Istio, Linkerd, and Consul.

Complexity

Service meshes introduce additional infrastructure components, configuration requirements, and deployment considerations. They have a steep learning curve, which requires developers and operators to gain expertise in using the specific service mesh implementation. It takes time and resources to train teams. An organization must ensure teams have the necessary knowledge to understand the intricacies of service mesh architecture and configure it effectively.

Operational overheads

Service meshes introduce additional overheads to deploy, manage, and monitor the data plane proxies and control plane components. For instance, you have to do the following:

- Ensure high availability and scalability of the service mesh infrastructure

- Monitor the health and performance of the proxies

- Handle upgrades and compatibility issues

Because every request passes through additional proxy hops, it’s essential to design and configure the service mesh carefully to minimize any performance impact on the overall system.

Integration challenges

A service mesh must integrate seamlessly with existing infrastructure to perform its required functions. This includes container orchestration platforms, networking solutions, and other tools in the technology stack.

It can be challenging to ensure compatibility and smooth integration with other components in complex and diverse environments. Changes to your APIs, configuration formats, and dependencies require ongoing planning and testing, as do upgrades to new versions anywhere in the stack.

How can AWS support your service mesh requirements?

AWS App Mesh is a fully managed and highly available service mesh from Amazon Web Services (AWS). App Mesh makes it easy to monitor, control, and debug the communications between services.

App Mesh uses Envoy, an open-source service mesh proxy deployed alongside your microservice containers. You can use it with microservice containers managed by Amazon Elastic Container Service (Amazon ECS), Amazon Elastic Kubernetes Service (Amazon EKS), AWS Fargate, and Kubernetes on AWS. You can also use it with services on Amazon Elastic Compute Cloud (Amazon EC2).

