Introduction to AI agents

A clear, practical overview of AI agents: how they relate to foundation models, access tools and data, automate workflows, and deliver measurable business value

Overview

Technical workshop: Introduction to AI agents

Join AWS experts for a hands-on workshop featuring tools from Anthropic, Hugging Face, and Cohere. Real demos, real code, ship faster.

Introduction to AI agents

Set the foundation for agent adoption and identify use cases where agents reduce latency, automate workflows, or improve decision accuracy.

Featured AI tools in AWS Marketplace

Build with foundation models, deployment platforms, and AI infrastructure—all available through your AWS account.

A brief history of artificial intelligence

Artificial intelligence has evolved over several decades, moving through cycles of optimism and so-called “AI winters.” These cycles have been shaped by advances in computing power, data availability, software engineering practices, and overall market readiness. Early AI systems relied on explicitly defined rules and logic. While they performed reasonably well in narrowly scoped environments, they were difficult to extend and expensive to maintain. Any change in behavior required manual updates, and handling ambiguity was often painful.

The introduction of machine learning and deep neural networks shifted this approach. Instead of writing logic directly, developers trained models to recognize patterns from data, enabling advances in areas such as classification, recommendation systems, and language processing. Even so, most of these systems remained task-specific. They performed inference when invoked, but they did not plan, adapt, or operate beyond a predefined execution path and a narrowly scoped request. Complex or ambiguous requests usually produced output of little practical use.

Large language models (LLMs) marked a significant transition. Trained on huge volumes of text and code, these models demonstrated the ability to generalize across domains, interpret intent, and perform multi-step reasoning. One of their most important contributions, however, was not only technical. By introducing natural language as a primary interface, LLMs dramatically lowered the barrier to experimentation and integration, allowing a much broader set of practitioners to work with advanced AI capabilities.

As these models matured, they expanded beyond text. Multimodal models can now process combinations of text, images, audio, and structured data, enabling systems to reason over documents, diagrams, screenshots, and conversations. This expansion significantly broadened the range of problems AI systems could address and brought them closer to real-world operating conditions.

At the same time, the surrounding landscape evolved. Standardized APIs, managed services, and common integration patterns made it easier to consume models as reusable components rather than as research artifacts. This shift mirrors earlier changes in software development, where teams moved from tightly coupled, compile-time systems to higher-level abstractions, shared libraries, and managed runtimes. As complexity moved into well-defined platforms, development velocity increased.

AI agents emerge from this context. They represent a move away from single-step inference toward systems that reason, act, and iterate over time. Rather than treating models as endpoints, agentic systems treat them as decision-making components within a broader application architecture.

Large language models

Large language models (LLMs) provide the reasoning capability at the center of most agent-based solutions. They interpret goals, evaluate options, and generate structured outputs that drive actions, decisions, and behavior.

On AWS, managed services provide a consistent abstraction for accessing foundation models. These services handle authentication, scaling, monitoring, and governance, allowing development teams to integrate models into existing technology stacks without managing infrastructure directly. This approach accelerates development and simplifies adoption within enterprise environments.

When designing agentic solutions, it is also important to understand model constraints. LLMs operate within a context window that limits how much information they can consider at once. Instructions, user input, retrieved data, tool outputs, and conversation history must all be carefully managed to fit within this window. This has given rise to a new discipline commonly referred to as context engineering.
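To make the idea of context engineering concrete, here is a minimal sketch of one possible budgeting strategy: pinned items (such as instructions) always stay, and the most recent history is kept until the window is full. The names `ContextItem`, `estimate_tokens`, and `fit_to_window` are hypothetical, and the four-characters-per-token estimate is a rough assumption rather than a real tokenizer.

```python
from dataclasses import dataclass


@dataclass
class ContextItem:
    role: str             # e.g. "system", "user", "tool"
    text: str
    pinned: bool = False  # pinned items (such as instructions) are never dropped


def estimate_tokens(text: str) -> int:
    """Rough heuristic (assumption): about four characters per token."""
    return max(1, len(text) // 4)


def fit_to_window(items: list[ContextItem], max_tokens: int) -> list[ContextItem]:
    """Keep every pinned item, then the newest unpinned items that still fit."""
    keep = {i for i, item in enumerate(items) if item.pinned}
    budget = max_tokens - sum(estimate_tokens(items[i].text) for i in keep)
    # Walk the history newest-first so recent turns win when space runs out.
    for idx in range(len(items) - 1, -1, -1):
        if idx in keep:
            continue
        cost = estimate_tokens(items[idx].text)
        if cost > budget:
            break  # stop here so the retained history stays contiguous
        keep.add(idx)
        budget -= cost
    # Return the survivors in their original order.
    return [item for idx, item in enumerate(items) if idx in keep]
```

A production system would likely use the model's own tokenizer and might summarize dropped turns instead of discarding them; the point here is only that the orchestration code, not the model, decides what enters the window.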

One mechanism used to address these limitations is memory. Short-term memory preserves state within a single task or session, while long-term memory allows agents to work with relevant information across interactions. In practice, memory is implemented through external data stores and retrieval mechanisms rather than within the model itself. The orchestration layer determines what information is retrieved and when it is included in the model’s context.
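The division of labor described above can be sketched in a few lines. In this illustrative example, `LongTermMemory` is a hypothetical in-process stand-in for an external data store, and retrieval uses naive word overlap where a real system would more likely use a database or vector index. `build_context` plays the role of the orchestration layer.

```python
class LongTermMemory:
    """Stand-in for an external store that persists across sessions."""

    def __init__(self) -> None:
        self._records: list[str] = []

    def save(self, text: str) -> None:
        self._records.append(text)

    def retrieve(self, query: str, top_k: int = 2) -> list[str]:
        """Rank records by naive word overlap with the query (illustrative only)."""
        query_words = set(query.lower().split())
        scored = [
            (len(query_words & set(record.lower().split())), record)
            for record in self._records
        ]
        ranked = sorted(scored, key=lambda pair: pair[0], reverse=True)
        return [record for score, record in ranked if score > 0][:top_k]


def build_context(query: str, session_turns: list[str], store: LongTermMemory) -> str:
    """Orchestration step: choose what the model sees for this request."""
    recalled = store.retrieve(query)   # long-term memory, fetched on demand
    recent = session_turns[-3:]        # short-term memory, last few turns only
    parts = [
        "# Recalled from long-term memory", *recalled,
        "# Current session", *recent,
        "# New request", query,
    ]
    return "\n".join(parts)
```

Note that nothing is stored inside the model: both kinds of memory are ordinary data that the orchestration code selects and places into the prompt.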

These considerations directly affect agent reliability and cost. Larger context windows increase flexibility, but they also introduce higher latency and expense, and depending on the design of the system they are not necessarily desirable. Well-designed agentic systems balance model capability with intentional context management to deliver consistent results at scale and within budget.

Models and tools provided by partners such as Anthropic, Cohere, and Hugging Face—available through AWS Marketplace—can be incorporated into these architectures when appropriate. Regardless of the model source, the emphasis remains on how models are used within a system rather than on the models themselves.

From AI that responds to agents that act

Traditional LLM-based applications typically follow a request–response pattern: a user submits input, the model generates output, and the interaction ends. While this pattern is effective—and already transformative—for many use cases, it limits automation to single-step interactions. It also requires humans to continuously guide the model’s reasoning and confines the model to providing information rather than acting on the user’s behalf.

AI agents operate differently. An agent receives a goal and executes a loop: it evaluates the current context, plans next steps, invokes tools or data sources, observes the result, and adjusts its behavior accordingly. This loop, often referred to as the thought–action–observation cycle, continues until the goal is achieved, deferred, or escalated.
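The loop described here can be sketched without any model at all. In the example below, `decide` is a hypothetical stand-in for the LLM call: it receives the goal and the observations so far and returns either a tool request or a final answer. The dictionary shapes and the `ESCALATE` message are assumptions made for illustration, not a framework API.

```python
from typing import Callable

# A decision is either {"tool": name, "args": {...}} or {"final": answer}.
Decision = dict


def run_agent(
    goal: str,
    decide: Callable[[str, list[dict]], Decision],
    tools: dict[str, Callable[..., object]],
    max_steps: int = 5,
) -> str:
    observations: list[dict] = []
    for _ in range(max_steps):
        decision = decide(goal, observations)       # thought: plan the next step
        if "final" in decision:
            return decision["final"]                # goal achieved
        tool_fn = tools[decision["tool"]]
        result = tool_fn(**decision["args"])        # action: invoke the tool
        observations.append(                        # observation: feed result back
            {"tool": decision["tool"], "result": result}
        )
    return "ESCALATE: step limit reached"           # hand off instead of looping forever


def scripted_decide(goal: str, observations: list[dict]) -> Decision:
    """Hypothetical stand-in for a model: look up stock once, then answer."""
    if not observations:
        return {"tool": "lookup_stock", "args": {"sku": "A-100"}}
    return {"final": f"Stock for A-100 is {observations[-1]['result']}"}
```

The step limit is the simplest form of the "deferred or escalated" exit: without it, a model that never produces a final answer would loop indefinitely.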

Tools and data are indispensable to this process. Tools allow agents to interact with external systems such as databases, APIs, workflows, or messaging services. Data provides grounding, enabling agents to base decisions on current and authoritative information rather than on model inference alone. It also allows agents to maintain awareness of prior user interactions and the outputs of previous agent actions.

Planning is a central role of the model within this loop. The model decomposes goals into steps, determines which tools to use, and sequences the required actions. Over time, this enables specialization. Different agents can be configured with distinct tools, instructions, and models, allowing them to focus on functions such as research, execution, validation, or coordination.

As systems scale, agents often operate together. Supervisory architectures allow a coordinating agent to delegate tasks to specialized agents, while swarm-style approaches enable multiple agents to work in parallel and converge on outcomes through iteration. These patterns support more complex workflows while preserving modularity and will be explored in later modules of this series.
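A supervisory architecture can be illustrated with plain functions standing in for specialized agents. Everything in this sketch is hypothetical: the worker names, the keyword-based `route` method (a real supervisor would typically ask a model to choose), and the string outputs.

```python
from typing import Callable

Worker = Callable[[str], str]


def research_agent(task: str) -> str:
    """Stand-in for an agent configured with research tools."""
    return f"[research] findings for: {task}"


def validation_agent(task: str) -> str:
    """Stand-in for an agent configured with validation tools."""
    return f"[validation] checked: {task}"


class Supervisor:
    """Coordinating agent that delegates each task to one specialized worker."""

    def __init__(self, workers: dict[str, Worker]) -> None:
        self.workers = workers

    def route(self, task: str) -> str:
        # Naive keyword routing, used here only to keep the sketch deterministic.
        return "validation" if "verify" in task.lower() else "research"

    def run(self, tasks: list[str]) -> list[str]:
        # Delegate each task, then aggregate the results in order.
        return [self.workers[self.route(task)](task) for task in tasks]
```

Because workers share one call signature, a new specialist can be added by registering another entry in the `workers` mapping, which is the modularity the pattern is meant to preserve.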

Agentic systems

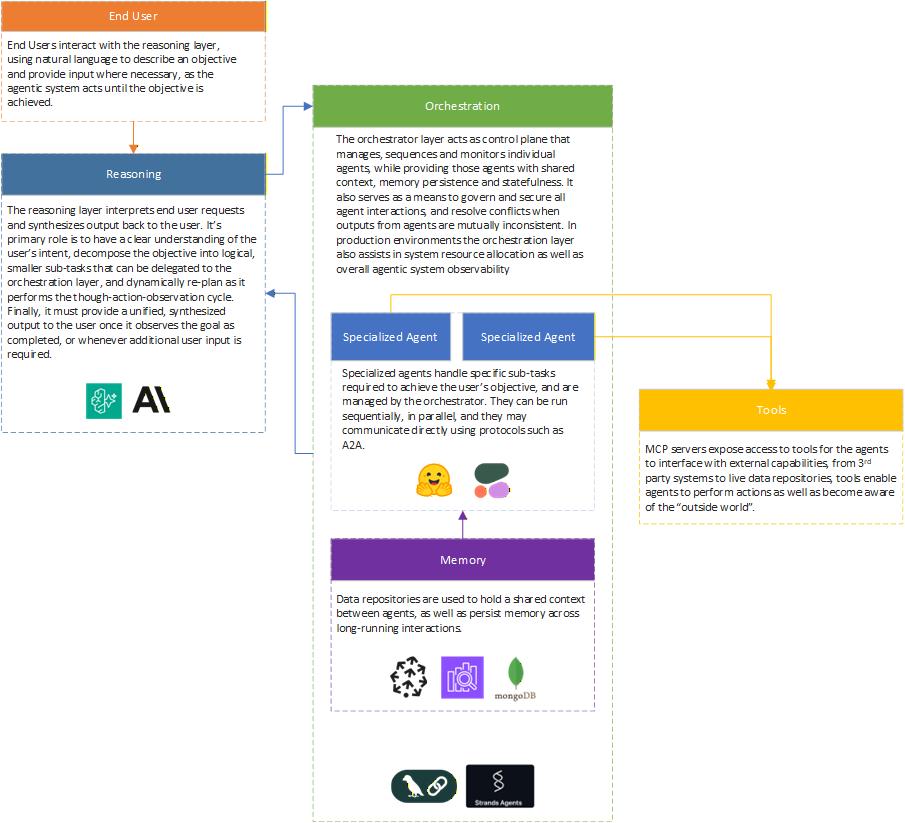

Before examining the components of an agentic system, it is important to clarify a key distinction. An AI agent is an individual, goal-directed component. An agentic system is the broader environment that supports agents through orchestration, tooling, identity, policies, and evaluation.

This distinction matters in practice. Individual agents are often straightforward to prototype, but production deployments require agentic systems to manage scale, governance, and reliability. Treating agents as part of a system—rather than as isolated scripts—enables long-term adoption and reuse.

Components

An AI agent is a single unit of behavior. An agentic system provides the structure that allows agents to operate reliably and at scale.

At a high level, agentic systems consist of three elements:

- Reasoning: One or more models responsible for interpreting goals and making decisions.

- Tools: Interfaces to external capabilities, including data stores, messaging systems, and execution environments.

- Orchestration: The control layer that manages context, state, tool invocation, and execution flow.

In AWS-centric architectures, tools often map directly to managed services. Data stores support state and memory, messaging services enable asynchronous coordination, and managed runtimes host agent logic securely and scalably.

Design patterns

Several architectural patterns are commonly used in agentic systems. Single-agent loops are suitable for well-scoped tasks, while supervisor–worker patterns support delegation and aggregation. Parallel or swarm-style patterns enable exploration and refinement, and human-in-the-loop patterns introduce checkpoints for review and approval.

These patterns allow teams to align agent behavior with business requirements and risk tolerance. They will be explored in greater depth in Module 4 and revisited in Module 6, where multi-agent orchestration and governance are addressed.
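As one example of matching a pattern to risk tolerance, a human-in-the-loop checkpoint can be a small gate in front of tool execution. The function name, the `approve` callback, and the result dictionaries below are assumptions for illustration; in practice the approval step might be a ticket, a chat prompt, or a review queue.

```python
from typing import Callable


def execute_with_checkpoint(
    action: str,
    args: dict,
    requires_approval: set[str],
    approve: Callable[[str, dict], bool],
    tools: dict[str, Callable[..., object]],
) -> dict:
    """Run a tool, pausing for human approval when the action is sensitive."""
    if action in requires_approval and not approve(action, args):
        # The reviewer declined, so the tool is never invoked.
        return {"status": "rejected", "action": action}
    result = tools[action](**args)
    return {"status": "done", "action": action, "result": result}
```

Low-risk actions bypass the reviewer entirely, so the checkpoint adds latency only where the business has decided it is worth it.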

Interoperability

As agent deployments expand, interoperability becomes increasingly important. Agents must interact with users, systems, and other agents through well-defined interfaces. Standardized communication and clear contracts—such as Model Context Protocol (MCP) and Agent-to-Agent (A2A), which will be discussed in Module 4—help ensure that agentic systems remain composable and maintainable over time.
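To give a feel for what a "clear contract" looks like on the wire, the sketch below builds a tool-call request and reads a text result in the JSON-RPC 2.0 shape used by MCP. The helper names are hypothetical, and the field layout follows the published MCP specification as understood at the time of writing, so it should be checked against the current version. In practice an MCP SDK would normally handle this framing.

```python
import json


def make_tool_call(request_id: int, tool_name: str, arguments: dict) -> str:
    """Serialize a tool invocation as a JSON-RPC 2.0 request."""
    message = {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }
    return json.dumps(message)


def read_tool_result(raw: str) -> str:
    """Extract the text parts of a tool result, raising on a protocol error."""
    message = json.loads(raw)
    if "error" in message:
        raise RuntimeError(message["error"].get("message", "unknown error"))
    parts = message["result"]["content"]
    return "".join(part["text"] for part in parts if part.get("type") == "text")
```

Because both sides agree on the message shape rather than on each other's code, a tool server can be swapped or reused across agents without changing the agent itself.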

Challenges and concerns

As with most advances in technology, agents and agentic systems are not silver bullets. They introduce new operational considerations and challenges. Because agents act autonomously, errors can propagate quickly if safeguards are not in place. Observability, security and identity controls, and evaluation mechanisms must be designed into the system from the start.

Fortunately, many of the lessons learned from building and operating distributed software systems over the past decades apply directly here. Established frameworks, practices, and operational disciplines provide a strong foundation for addressing these challenges in agentic systems.

How agents fit in the real world of business

Enterprise adoption of agents typically begins with specific and limited workflows. Early use cases often focus on assisting users, retrieving information, or automating repetitive steps. These scenarios allow teams to build familiarity and confidence with agent behavior and development practices while maintaining control.

Over time, organizations may expand the scope of agent autonomy. Agents can be permitted to execute actions directly, coordinate across systems, or operate continuously in the background. This progression must be intentional: trust is built through measurement, iteration, and governance rather than assumed upfront. Module 5 looks at domain-specific examples where agents have proven successful in the enterprise.

Not all workflows are well suited to agentic automation. Successful organizations apply agents selectively, focusing on areas where variability, scale, and cognitive load justify their use. Governance mechanisms ensure that any expansion remains aligned with business objectives.

Getting started guide

Design

Start with a clearly defined use case. Specify the agent’s objective, success criteria, and boundaries. Decide which actions the agent can perform autonomously and where human approval is required.
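One way to keep these design decisions explicit is to record them as data before any agent code is written. The `AgentSpec` class and its field names below are hypothetical, as are the example actions; the sketch simply shows an objective, success criteria, and a default-deny boundary between autonomous and approval-gated actions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentSpec:
    objective: str
    success_criteria: tuple[str, ...]
    autonomous_actions: frozenset[str]   # the agent may do these on its own
    approval_required: frozenset[str]    # a human must sign off first

    def policy_for(self, action: str) -> str:
        """Return how the orchestration layer should treat an action."""
        if action in self.autonomous_actions:
            return "allow"
        if action in self.approval_required:
            return "needs_approval"
        return "deny"  # anything not listed is outside the agent's boundaries


support_spec = AgentSpec(
    objective="Resolve order-status questions for customers",
    success_criteria=("answer cites the order record", "resolved in one session"),
    autonomous_actions=frozenset({"lookup_order", "send_status_email"}),
    approval_required=frozenset({"issue_refund"}),
)
```

Keeping the specification immutable and versioned alongside the code makes any later expansion of autonomy a visible, reviewable change.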

Identify the minimal set of tools and data sources needed to achieve the objective. Limiting scope at this stage improves reliability and simplifies evaluation. It is also critical to identify the tools, frameworks, and development environment that will be used; these are covered in depth in Modules 2 and 3.

Develop

Agent development benefits from cross-functional collaboration. Application developers, platform engineers, security teams, and domain experts each contribute to overall system quality.

Development typically includes:

- An orchestration layer to manage agent loops (Module 6)

- Secure access to models and tools (Module 8)

- Centralized logging and tracing (Module 12)

- Clear ownership of prompts, tools, and policies (Module 14)

Agents should be built and managed using the same practices applied to other software products, including versioning, documentation, and lifecycle management from the outset.
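For the logging and tracing item in the list above, a small decorator around each tool is often enough to get started. This is a sketch using only the Python standard library; the logger name `agent.trace`, the `traced` decorator, and the log line format are assumptions, and a production setup would more likely forward these records to a centralized tracing backend.

```python
import functools
import logging
import time
import uuid

logger = logging.getLogger("agent.trace")


def traced(tool_fn):
    """Log the name, outcome, and duration of every call to a tool."""

    @functools.wraps(tool_fn)
    def wrapper(*args, **kwargs):
        call_id = uuid.uuid4().hex[:8]  # short id to correlate log lines
        start = time.perf_counter()
        status = "error"                # assume failure until the call returns
        try:
            result = tool_fn(*args, **kwargs)
            status = "ok"
            return result
        finally:
            elapsed_ms = (time.perf_counter() - start) * 1000
            logger.info(
                "tool=%s call_id=%s status=%s elapsed_ms=%.1f",
                tool_fn.__name__, call_id, status, elapsed_ms,
            )

    return wrapper
```

Because the decorator preserves the wrapped function's name and docstring through `functools.wraps`, it can usually be stacked with a framework's own tool decorator, though the correct ordering depends on the framework.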

See the Strands code sample below.

Evaluate

Evaluation is critical for agent reliability. Because agent outputs are probabilistic, traditional pass/fail testing is no longer sufficient.

Effective evaluation practices include:

- Defining quality criteria tied to business outcomes

- Maintaining representative evaluation datasets

- Using automated scoring to compare changes over time

- Monitoring latency, cost, and task success rates in production

Evaluation should be continuous. Each update to prompts, tools, or models should be measured against established baselines to ensure that improvements are intentional and measurable. Deploying and testing multiple agent versions in parallel is also a key capability for enterprise-grade agentic systems, a topic explored further in Module 11.
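A minimal sketch of baseline comparison follows. The function names, the dataset shape (`input` and `expected` keys), and the two-point tolerance are assumptions chosen for illustration; real evaluations usually combine several metrics and often use model-based or rubric-based scoring rather than exact match.

```python
from typing import Callable


def success_rate(
    agent: Callable[[str], str],
    dataset: list[dict],
    passes: Callable[[str, str], bool],
) -> float:
    """Fraction of evaluation cases where the agent's output passes the check."""
    hits = sum(
        1 for case in dataset if passes(agent(case["input"]), case["expected"])
    )
    return hits / len(dataset)


def regression_check(candidate: float, baseline: float, tolerance: float = 0.02) -> bool:
    """Accept a change only if it does not fall meaningfully below the baseline."""
    return candidate >= baseline - tolerance


def exact_match(output: str, expected: str) -> bool:
    """Simplest possible scorer: case-insensitive equality."""
    return output.strip().lower() == expected.strip().lower()
```

Running the same dataset against the current and the proposed agent version, and gating the release on `regression_check`, turns "improvements are intentional and measurable" into an automated step.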

Strands code sample

```python
from strands import Agent, tool
from strands.models import BedrockModel
from datetime import datetime
from typing import Optional

# =============================================================================
# TOOLS LAYER - Actions the agent can perform
# =============================================================================


@tool
def get_weather(city: str) -> dict:
    """Return current weather conditions for a city."""
    # Simulated weather data (in production, call a real API)
    weather_data = {
        "San Francisco": {"temp_f": 62, "conditions": "Partly Cloudy", "humidity": 75},
        "New York": {"temp_f": 78, "conditions": "Sunny", "humidity": 55},
        "Seattle": {"temp_f": 58, "conditions": "Rainy", "humidity": 85},
        "Austin": {"temp_f": 95, "conditions": "Hot and Sunny", "humidity": 40},
    }
    result = weather_data.get(city, {"temp_f": 70, "conditions": "Unknown", "humidity": 50})
    result["city"] = city
    result["timestamp"] = datetime.now().isoformat()
    return result


@tool
def calculate_travel_time(origin: str, destination: str, mode: str = "driving") -> dict:
    """Estimate travel time between two places by driving, transit, or walking."""
    # Simulated travel calculations (in production, use Google Maps API, etc.)
    base_times = {
        ("San Francisco", "San Jose"): {"driving": 45, "transit": 75, "walking": 600},
        ("San Francisco", "Oakland"): {"driving": 25, "transit": 40, "walking": 180},
        ("New York", "Brooklyn"): {"driving": 30, "transit": 25, "walking": 120},
    }
    key = (origin, destination)
    reverse_key = (destination, origin)
    if key in base_times:
        times = base_times[key]
    elif reverse_key in base_times:
        times = base_times[reverse_key]
    else:
        # Default estimate
        times = {"driving": 30, "transit": 45, "walking": 90}
    duration_minutes = times.get(mode, times["driving"])
    return {
        "origin": origin,
        "destination": destination,
        "mode": mode,
        "duration_minutes": duration_minutes,
        "duration_text": (
            f"{duration_minutes} minutes"
            if duration_minutes < 60
            else f"{duration_minutes // 60}h {duration_minutes % 60}m"
        ),
    }


@tool
def get_local_events(city: str, category: Optional[str] = None) -> list:
    """List local events in a city, optionally filtered by category."""
    # Simulated events data
    all_events = {
        "San Francisco": [
            {"name": "Jazz Night at SFJAZZ", "category": "music", "time": "8:00 PM", "venue": "SFJAZZ Center"},
            {"name": "Giants vs Dodgers", "category": "sports", "time": "7:15 PM", "venue": "Oracle Park"},
            {"name": "Street Food Festival", "category": "food", "time": "11:00 AM", "venue": "Ferry Building"},
            {"name": "AI Meetup", "category": "tech", "time": "6:30 PM", "venue": "Moscone Center"},
        ],
        "New York": [
            {"name": "Broadway Show: Hamilton", "category": "music", "time": "7:30 PM", "venue": "Richard Rodgers Theatre"},
            {"name": "Yankees Game", "category": "sports", "time": "7:05 PM", "venue": "Yankee Stadium"},
            {"name": "Smorgasburg Food Market", "category": "food", "time": "11:00 AM", "venue": "Williamsburg"},
        ],
    }
    events = all_events.get(city, [])
    if category:
        events = [e for e in events if e["category"] == category.lower()]
    return events


# =============================================================================
# ORCHESTRATION LAYER - Strands Agent Configuration
# =============================================================================


def create_agent() -> Agent:
    # Configure the reasoning layer (Amazon Bedrock with Claude)
    model = BedrockModel(
        model_id="us.anthropic.claude-sonnet-4-20250514-v1:0",
        region_name="us-west-2"
    )

    # System prompt guides the reasoning layer
    system_prompt = """You are a helpful local guide assistant. You help users plan
their day by providing weather information, travel times, and local events.

When a user asks about planning activities:
1. First check the weather to ensure conditions are suitable
2. Look up relevant local events based on their interests
3. Calculate travel times if they need to get somewhere
4. Synthesize all information into a helpful recommendation

Be concise and practical in your responses."""

    # Create the agent - this is the orchestration layer
    agent = Agent(
        model=model,
        system_prompt=system_prompt,
        tools=[get_weather, calculate_travel_time, get_local_events]
    )
    return agent


def main():
    # Create the agent
    agent = create_agent()

    # Example query that will trigger multiple tool calls
    user_query = """
I'm in San Francisco and want to do something fun this evening.
What's the weather like, and are there any music events happening?
If there's something good, how long would it take me to get there from the Ferry Building?
"""

    # Run the agent - this triggers the full three-layer flow
    response = agent(user_query)
    print(response)


if __name__ == "__main__":
    main()
```

Featured AI tools in AWS Marketplace

Build with foundation models, deployment platforms, and AI infrastructure — all available through your AWS account.

Why AWS Marketplace for on-demand cloud tools

Free to try. Deploy in minutes. Pay only for what you use.

Featured tools are designed to plug in to your AWS workflows and integrate with your favorite AWS services.

Subscribe through your AWS account with no upfront commitments, contracts, or approvals.

Try before you commit. Most tools include free trials or developer-tier pricing to support fast prototyping.

Only pay for what you use. Costs are consolidated with AWS billing for simplified payments, cost monitoring, and governance.

A broad selection of tools across observability, security, AI, data, and more can enhance how you build with AWS.

Continue your journey

Module 1 sets the foundation. Continue with frameworks and evaluation.